VQPP: Video Query Performance Prediction Benchmark

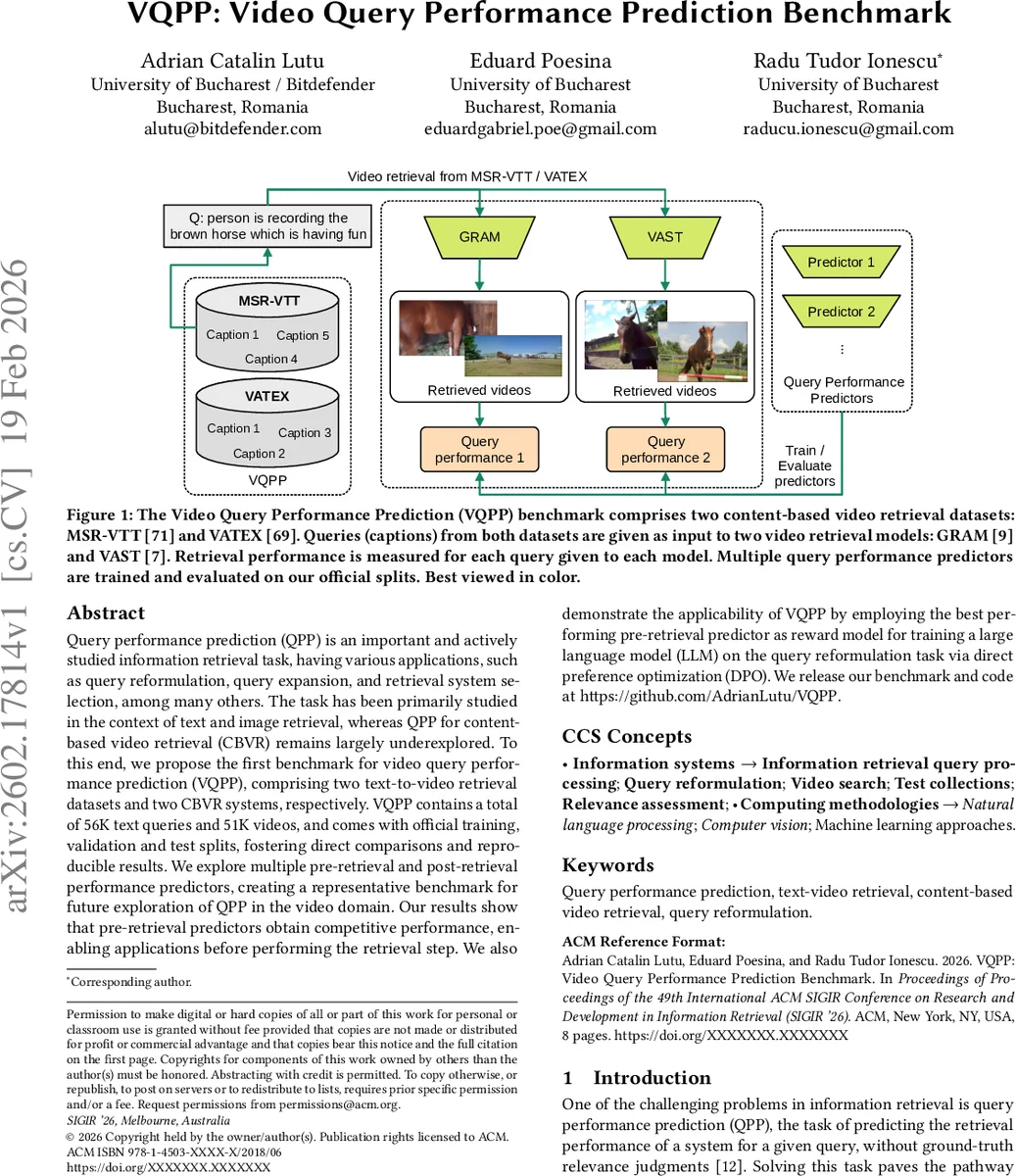

Query performance prediction (QPP) is an important and actively studied information retrieval task, having various applications, such as query reformulation, query expansion, and retrieval system selection, among many others. The task has been primarily studied in the context of text and image retrieval, whereas QPP for content-based video retrieval (CBVR) remains largely underexplored. To this end, we propose the first benchmark for video query performance prediction (VQPP), comprising two text-to-video retrieval datasets and two CBVR systems, respectively. VQPP contains a total of 56K text queries and 51K videos, and comes with official training, validation and test splits, fostering direct comparisons and reproducible results. We explore multiple pre-retrieval and post-retrieval performance predictors, creating a representative benchmark for future exploration of QPP in the video domain. Our results show that pre-retrieval predictors obtain competitive performance, enabling applications before performing the retrieval step. We also demonstrate the applicability of VQPP by employing the best performing pre-retrieval predictor as reward model for training a large language model (LLM) on the query reformulation task via direct preference optimization (DPO). We release our benchmark and code at https://github.com/AdrianLutu/VQPP.

💡 Research Summary

The paper introduces VQPP, the first benchmark dedicated to query performance prediction (QPP) for content‑based video retrieval (CBVR). While QPP has been extensively studied for text and image search, the video domain—characterized by temporal dynamics and multimodal representations—has received little attention. To fill this gap, the authors assemble a large‑scale dataset comprising 56 000 natural‑language queries and 51 000 videos drawn from two widely used text‑to‑video collections: MSR‑VTT and VATEX. For each query, they obtain retrieval results from two state‑of‑the‑art CBVR systems, GRAM and VAST, thereby defining four evaluation scenarios (two datasets × two retrieval models). Ground‑truth performance is measured using Reciprocal Rank (RR) and Recall@10, and the top‑100 retrieved videos for every query‑model pair are released to enable post‑retrieval predictor development.

The benchmark provides official train/validation/test splits, dense performance annotations, and pre‑computed retrieval outputs, allowing researchers to focus on building QPP estimators without the heavy computational burden of running video retrieval pipelines. The authors implement a broad spectrum of predictors, categorised as pre‑retrieval (query‑only) and post‑retrieval (result‑based). Pre‑retrieval baselines include simple linguistic heuristics such as query length, synset count, and part‑of‑speech ratios. More sophisticated pre‑retrieval models fine‑tune transformer‑based language models (BERT, RoBERTa) on the VQPP training data. Post‑retrieval predictors exploit score distributions, clustering tendencies, query‑clarity scores, and other signals derived from the retrieved list.

Experimental results show that deep pre‑retrieval models, especially a fine‑tuned BERT predictor, consistently outperform both traditional linguistic baselines and the evaluated post‑retrieval methods across all four scenarios. The BERT predictor achieves the highest Pearson (ρ) and Kendall (τ) correlations with the true RR/Recall@10 values, demonstrating that accurate difficulty estimation is possible without inspecting any retrieved videos. While some post‑retrieval approaches attain competitive scores, they require the full retrieval output and are computationally more demanding.

Beyond benchmarking, the authors demonstrate a practical application of VQPP: they use the best pre‑retrieval predictor as a reward model in a Direct Preference Optimization (DPO) framework to fine‑tune a large language model (Phi‑4‑mini‑instruct) for query reformulation. By generating pairs of reformulated queries and ranking them with the BERT predictor, the DPO‑trained LLM learns to produce queries that lead to higher retrieval performance. Empirical evaluation confirms that the reformulated queries improve RR and Recall@10 compared with the original queries, validating the utility of QPP as a supervisory signal for downstream tasks.

In summary, the paper makes four key contributions: (1) the creation and public release of VQPP, the first large‑scale benchmark for video QPP, (2) a comprehensive empirical study of diverse pre‑ and post‑retrieval predictors, establishing strong baselines for future work, (3) the demonstration that a high‑performing QPP model can serve as an effective reward function for LLM‑based query reformulation, and (4) open‑source code and data to foster reproducibility. The benchmark opens numerous research avenues, including the integration of multimodal large models, exploitation of video metadata, real‑time difficulty‑aware retrieval routing, and personalized query rewriting strategies.

Comments & Academic Discussion

Loading comments...

Leave a Comment