Enabling Training-Free Text-Based Remote Sensing Segmentation

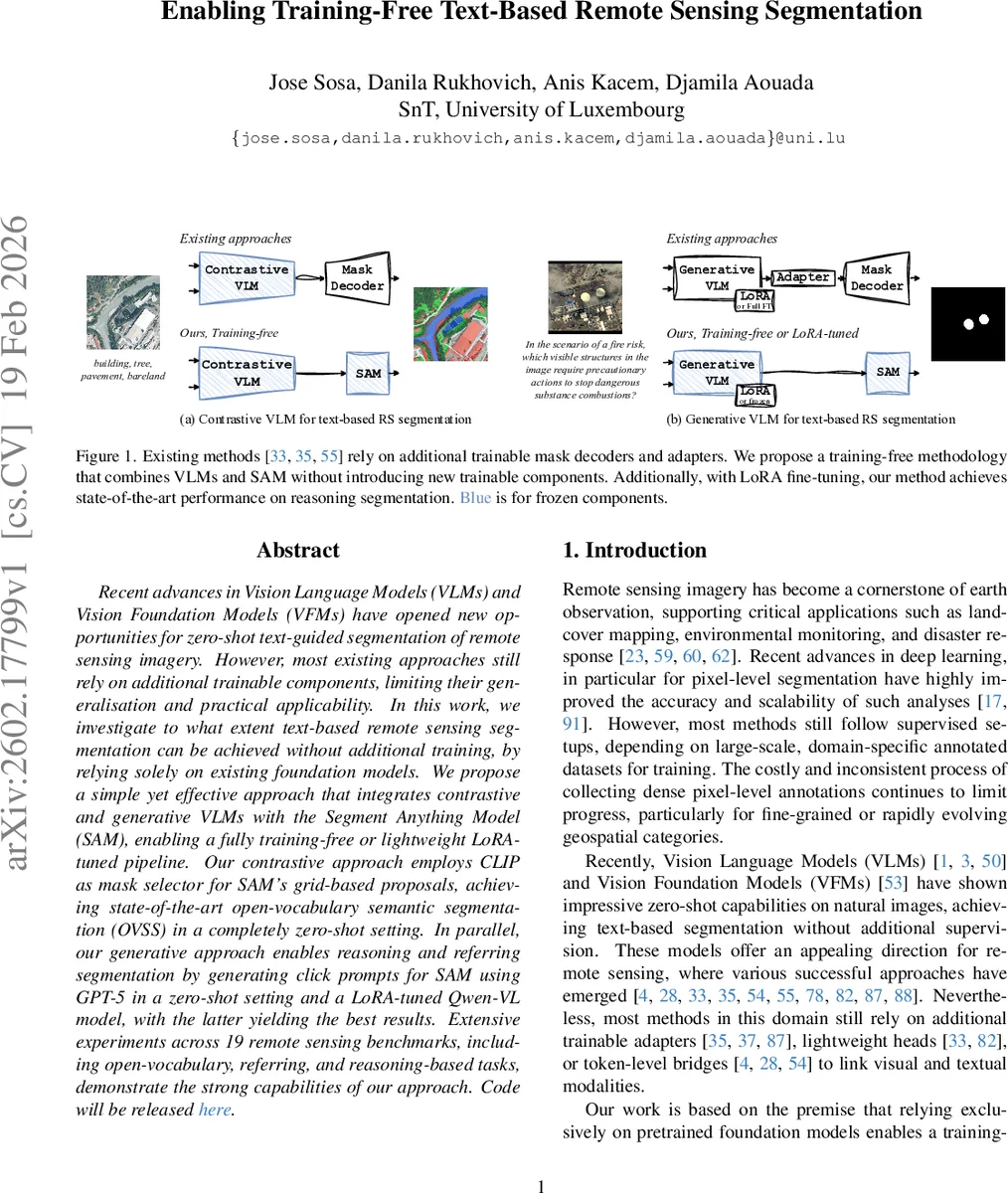

Recent advances in Vision Language Models (VLMs) and Vision Foundation Models (VFMs) have opened new opportunities for zero-shot text-guided segmentation of remote sensing imagery. However, most existing approaches still rely on additional trainable components, limiting their generalisation and practical applicability. In this work, we investigate to what extent text-based remote sensing segmentation can be achieved without additional training, by relying solely on existing foundation models. We propose a simple yet effective approach that integrates contrastive and generative VLMs with the Segment Anything Model (SAM), enabling a fully training-free or lightweight LoRA-tuned pipeline. Our contrastive approach employs CLIP as mask selector for SAM’s grid-based proposals, achieving state-of-the-art open-vocabulary semantic segmentation (OVSS) in a completely zero-shot setting. In parallel, our generative approach enables reasoning and referring segmentation by generating click prompts for SAM using GPT-5 in a zero-shot setting and a LoRA-tuned Qwen-VL model, with the latter yielding the best results. Extensive experiments across 19 remote sensing benchmarks, including open-vocabulary, referring, and reasoning-based tasks, demonstrate the strong capabilities of our approach. Code will be released at https://github.com/josesosajs/trainfree-rs-segmentation.

💡 Research Summary

The paper tackles the problem of text‑guided segmentation of remote sensing imagery without introducing any trainable components beyond existing foundation models. The authors observe that most recent remote‑sensing segmentation methods still rely on additional mask decoders, adapters, or token‑level bridges that must be trained on domain‑specific data, limiting their generalisation and practical deployment. To address this, they propose two complementary pipelines that combine pre‑trained Vision‑Language Models (VLMs) with the Segment Anything Model (SAM), a powerful vision foundation model capable of generating category‑agnostic masks from simple prompts such as clicks or bounding boxes.

The first pipeline is contrastive and fully zero‑shot. It uses CLIP, a contrastive VLM that aligns image and text embeddings, to compute a per‑pixel foreground probability map for a given textual query. Simultaneously, SAM is run on the input image with a regular grid of click prompts, producing a large set of candidate masks. For each mask, the proportion of pixels whose CLIP‑derived probability exceeds a threshold (e.g., 0.5) is measured; masks that satisfy this criterion are retained. In multi‑class scenarios, each retained mask is assigned the class that dominates its interior based on the CLIP probabilities, after applying a debiasing step that subtracts a scaled

The second pipeline is generative and can operate either zero‑shot or with lightweight LoRA fine‑tuning. Here, a generative VLM such as GPT‑5 or the open‑source Qwen‑VL receives the image and a textual instruction (which may be a short phrase, a full sentence, or a multi‑step reasoning prompt) and outputs a set of click coordinates labeled as positive or negative. These clicks are directly fed to SAM, which produces the final mask. To improve click generation, the authors automatically synthesize click sequences from existing pixel‑wise masks: starting from an initial positive click, SAM generates a mask; discrepancies with the ground‑truth mask are identified, and additional positive or negative clicks are placed in under‑segmented or over‑segmented regions, respectively. This iterative process continues until a target IoU or click budget is reached, yielding synthetic click‑mask pairs that serve as supervision for fine‑tuning the generative VLM. LoRA is applied only to the VLM, keeping SAM completely frozen; the fine‑tuning updates a tiny fraction of parameters (well under 0.1% of the total), preserving the efficiency of the system.

The authors evaluate both pipelines across 19 remote‑sensing benchmarks covering three task families: (i) open‑vocabulary semantic segmentation (short class names), (ii) referring segmentation (full sentences describing a specific object), and (iii) reasoning‑based segmentation (multi‑step logical queries). The contrastive CLIP‑SAM pipeline excels on the first family, delivering the highest mean Intersection‑over‑Union (mIoU) among zero‑shot methods. The generative pipeline, especially when the Qwen‑VL model is LoRA‑tuned, outperforms all prior work on referring and reasoning tasks, demonstrating strong language understanding and spatial grounding capabilities. Notably, the system achieves these results without training any new mask decoder or adapter, and with minimal computational overhead compared to full‑fine‑tuned baselines.

Key insights from the study include: (1) pre‑trained VLMs already contain sufficient cross‑modal knowledge to guide a generic mask generator like SAM; (2) contrastive VLMs are best suited for short, unambiguous prompts, while generative VLMs handle complex, context‑rich instructions; (3) lightweight LoRA fine‑tuning of the generative VLM can bridge the performance gap between pure zero‑shot and fully supervised methods; (4) automatic conversion of pixel masks to click sequences provides an effective supervision signal for training click‑generation models without manual annotation.

In conclusion, the paper establishes a new paradigm for remote‑sensing segmentation: by leveraging only existing foundation models—CLIP, Qwen‑VL (or GPT‑5), and SAM—researchers can achieve state‑of‑the‑art performance across diverse text‑guided tasks while eliminating the need for task‑specific training data or additional model components. The authors plan to release their code and pretrained adapters, facilitating immediate adoption in applications such as disaster response, land‑cover monitoring, and rapid geospatial analysis.

Comments & Academic Discussion

Loading comments...

Leave a Comment