Pushing the Frontier of Black-Box LVLM Attacks via Fine-Grained Detail Targeting

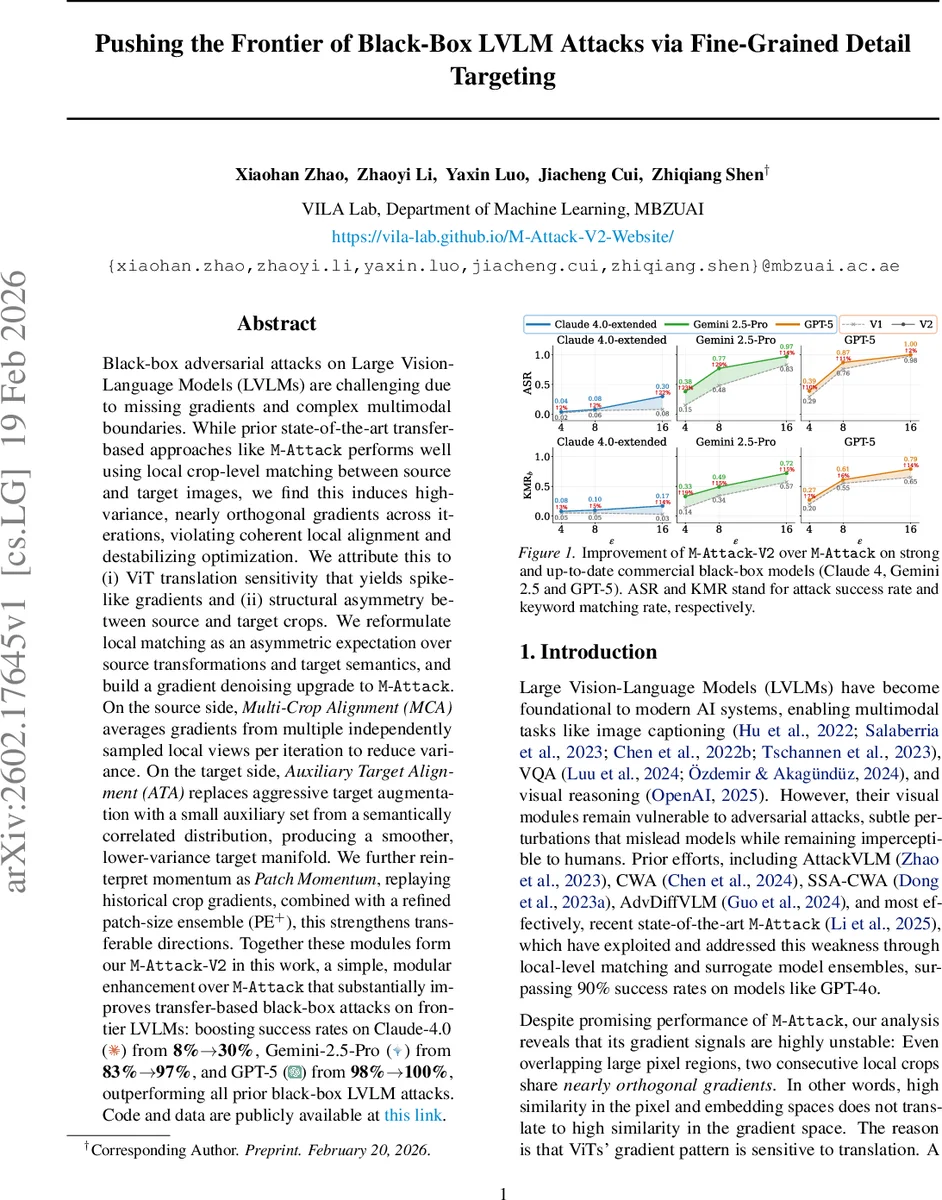

Black-box adversarial attacks on Large Vision-Language Models (LVLMs) are challenging due to missing gradients and complex multimodal boundaries. While prior state-of-the-art transfer-based approaches like M-Attack perform well using local crop-level matching between source and target images, we find this induces high-variance, nearly orthogonal gradients across iterations, violating coherent local alignment and destabilizing optimization. We attribute this to (i) ViT translation sensitivity that yields spike-like gradients and (ii) structural asymmetry between source and target crops. We reformulate local matching as an asymmetric expectation over source transformations and target semantics, and build a gradient-denoising upgrade to M-Attack. On the source side, Multi-Crop Alignment (MCA) averages gradients from multiple independently sampled local views per iteration to reduce variance. On the target side, Auxiliary Target Alignment (ATA) replaces aggressive target augmentation with a small auxiliary set from a semantically correlated distribution, producing a smoother, lower-variance target manifold. We further reinterpret momentum as Patch Momentum, replaying historical crop gradients; combined with a refined patch-size ensemble (PE+), this strengthens transferable directions. Together these modules form M-Attack-V2, a simple, modular enhancement over M-Attack that substantially improves transfer-based black-box attacks on frontier LVLMs: boosting success rates on Claude-4.0 from 8% to 30%, Gemini-2.5-Pro from 83% to 97%, and GPT-5 from 98% to 100%, outperforming prior black-box LVLM attacks. Code and data are publicly available at: https://github.com/vila-lab/M-Attack-V2.

💡 Research Summary

The paper investigates why the state‑of‑the‑art transfer‑based black‑box attack on large vision‑language models (LVLMs), M‑Attack, suffers from highly unstable gradients despite its local‑crop matching strategy. The authors discover that ViT‑based LVLMs exhibit extreme translation sensitivity: even sub‑pixel shifts alter token assignments, causing spike‑like, almost orthogonal gradients across overlapping crops. Moreover, the source and target crops play asymmetric roles—source crops directly affect pixel‑space representations while target crops merely shift the reference embedding—leading to a mismatch in the optimization objective. These factors produce high‑variance, near‑orthogonal gradients that destabilize the attack optimization.

To address this, the authors reformulate local matching as an asymmetric expectation over source transformations and target semantics, and introduce three modular enhancements that together constitute M‑Attack‑V2. First, Multi‑Crop Alignment (MCA) draws K independent random crops per iteration and averages their gradients, providing an unbiased Monte‑Carlo estimator whose variance scales as 1/K. Empirically, using K = 10 already raises the cosine similarity of successive gradients from near zero to ~0.2, and larger K further accelerates convergence. Second, Auxiliary Target Alignment (ATA) augments the single target embedding with a small set of semantically similar auxiliary images. Mild random transformations are applied to these anchors, yielding a low‑variance, richer semantic manifold that balances exploration and exploitation. Theoretical analysis under Lipschitz continuity and bounded auxiliary drift shows that ATA reduces embedding drift compared with aggressive target augmentations. Third, the authors introduce Patch Momentum (PM), which replays historical crop gradients across iterations, and a refined Patch Ensemble (PE+) that combines models with diverse patch sizes to capture multi‑scale features. Together, these components amplify transferable directions and focus attention on salient object regions.

Extensive experiments on frontier commercial LVLMs—Claude‑4.0, Gemini‑2.5‑Pro, and GPT‑5—demonstrate substantial gains: attack success rates improve from 8 % to 30 % on Claude‑4.0, from 83 % to 97 % on Gemini‑2.5‑Pro, and from 98 % to 100 % on GPT‑5, surpassing all prior black‑box LVLM attacks. The paper provides a clean, modular upgrade to M‑Attack, offers theoretical justification for variance reduction, and releases code and data publicly, thereby advancing the frontier of black‑box adversarial attacks on multimodal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment