FAMOSE: A ReAct Approach to Automated Feature Discovery

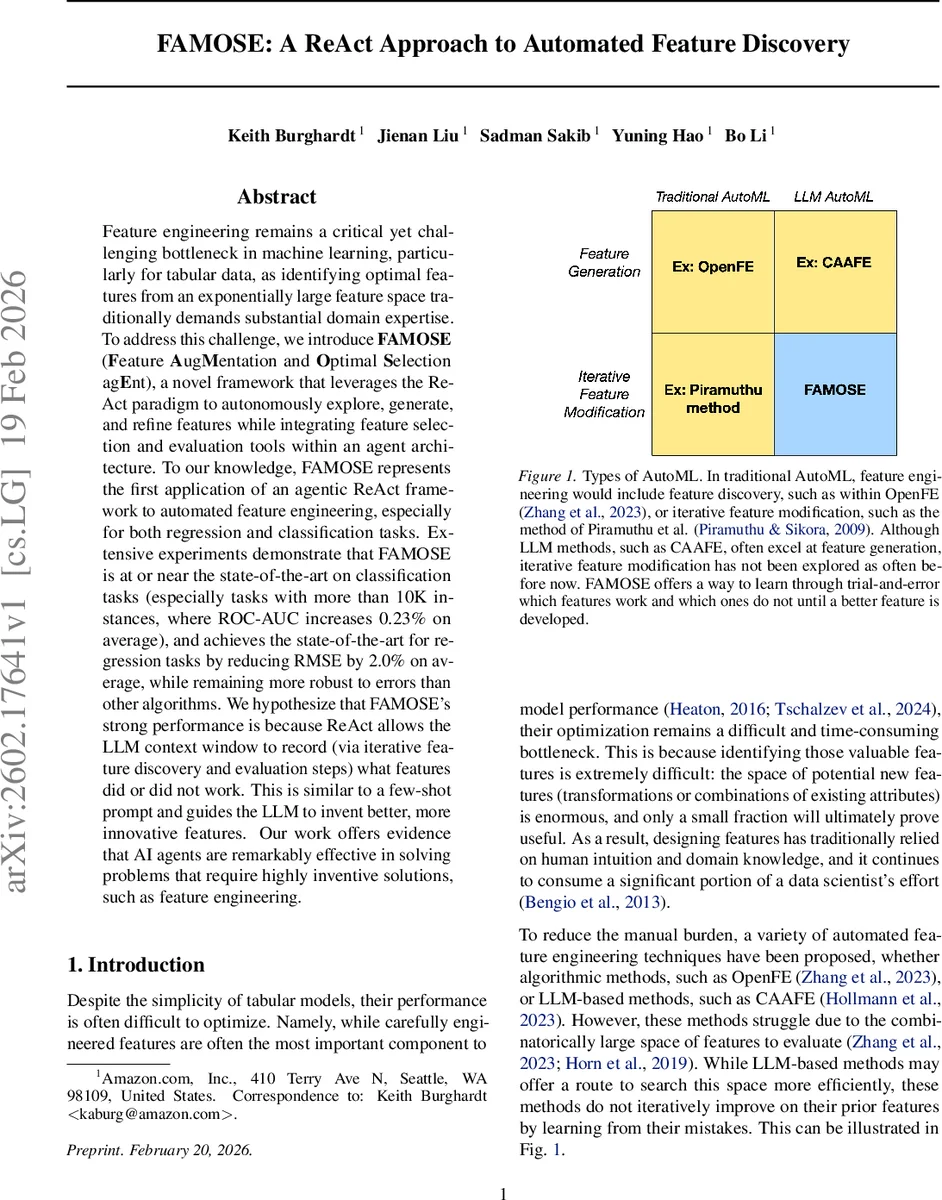

Feature engineering remains a critical yet challenging bottleneck in machine learning, particularly for tabular data, as identifying optimal features from an exponentially large feature space traditionally demands substantial domain expertise. To address this challenge, we introduce FAMOSE (Feature AugMentation and Optimal Selection agEnt), a novel framework that leverages the ReAct paradigm to autonomously explore, generate, and refine features while integrating feature selection and evaluation tools within an agent architecture. To our knowledge, FAMOSE represents the first application of an agentic ReAct framework to automated feature engineering, especially for both regression and classification tasks. Extensive experiments demonstrate that FAMOSE is at or near the state-of-the-art on classification tasks (especially tasks with more than 10K instances, where ROC-AUC increases 0.23% on average), and achieves the state-of-the-art for regression tasks by reducing RMSE by 2.0% on average, while remaining more robust to errors than other algorithms. We hypothesize that FAMOSE’s strong performance is because ReAct allows the LLM context window to record (via iterative feature discovery and evaluation steps) what features did or did not work. This is similar to a few-shot prompt and guides the LLM to invent better, more innovative features. Our work offers evidence that AI agents are remarkably effective in solving problems that require highly inventive solutions, such as feature engineering.

💡 Research Summary

The paper introduces FAMOSE (Feature Augmentation and Optimal Selection Agent), a novel automated feature‑engineering framework that leverages the ReAct (Reasoning‑and‑Acting) paradigm to create an autonomous AI agent capable of iteratively generating, evaluating, and refining features for tabular data. Traditional AutoML pipelines either rely on exhaustive combinatorial searches with predefined transformation rules (e.g., OpenFE, AutoFeat) or use large language models (LLMs) to produce a static list of candidate features (e.g., CAAFE). Both approaches suffer from the curse of dimensionality and from LLM hallucinations because they lack a feedback loop that learns from empirical performance.

FAMOSE addresses these limitations by embedding a ReAct‑style agent that acts as a “data scientist.” The agent first extracts metadata (column names, data types) using a lightweight tool, then proposes new features expressed as Python code. The code is executed in a sandbox; the resulting feature column is added to the dataset, and a base model (any tabular learner) is trained on the augmented data. Model performance on a held‑out validation split (ROC‑AUC for classification, RMSE for regression) is compared against the baseline without the new feature. If the improvement exceeds a pre‑set threshold (typically 1 %), the feature is kept as a candidate; otherwise the agent revises its code and tries again. This reasoning‑acting loop runs for up to ten internal steps per round and up to twenty rounds, stopping early when no further gain is observed.

After the iterative discovery phase, FAMOSE applies a traditional feature‑selection algorithm—minimum redundancy‑maximum relevance (mRMR)—to prune the candidate set, removing redundant columns while preserving those most correlated with the target. This choice contrasts with recent LLM‑based selectors, which the authors argue are less reliable and more computationally expensive.

The authors evaluate FAMOSE on a diverse benchmark suite: 20 classification tasks (binary and multi‑class) and 7 regression tasks, covering three orders of magnitude in dataset size, including several Kaggle datasets with >10 K instances. Baselines include OpenFE, AutoFeat, CAAFE, FeatLLM, and standard pipelines without automated feature engineering. Experiments employ 5‑fold cross‑validation, and performance is reported as the average gain over baselines.

Key findings:

- On large classification datasets (>10 K samples), FAMOSE improves ROC‑AUC by an average of 0.23 % (with some datasets seeing >1 % gain), outperforming all baselines.

- On regression problems, it reduces RMSE by an average of 2 %, achieving state‑of‑the‑art results.

- The agent’s iterative loop reduces hallucination: when generated code fails or produces spurious features, regex‑based error correction forces the agent to revise its proposal, leading to higher robustness.

- The framework remains stable even when other AutoML tools crash or produce no useful features, demonstrating superior fault tolerance.

- Each generated feature is accompanied by a natural‑language justification, enhancing interpretability for end‑users and aligning with regulatory demands for explainable AI.

The authors hypothesize that the ReAct paradigm’s ability to retain a “memory” of past successes and failures within the LLM’s context window acts like a few‑shot prompt, guiding the model toward more inventive transformations. Empirical results support this claim: the agent learns to focus on transformations that have historically yielded signal, avoiding redundant or noisy operations.

Implementation details: FAMOSE builds on the open‑source SmolAgents framework, stripping it down to a Python code executor and a metadata generator. The prompt given to the LLM defines a data‑scientist role, supplies dataset descriptions, and instructs the agent to (1) generate a large set of features using any mathematical operation, (2) explain each feature’s utility, (3) evaluate it with the provided tool, (4) aim for at least a 1 % performance boost, and (5) return the best feature. The loop continues until the improvement goal is met or the step limit is reached.

In conclusion, FAMOSE demonstrates that an agentic ReAct architecture can overcome the primary drawbacks of existing automated feature‑engineering methods—search scalability, hallucination, and lack of iterative learning—while delivering state‑of‑the‑art predictive performance and interpretable feature explanations. The work opens avenues for extending agentic reasoning to other AutoML components (hyperparameter tuning, model selection) and to multimodal data scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment