CORAL: Correspondence Alignment for Improved Virtual Try-On

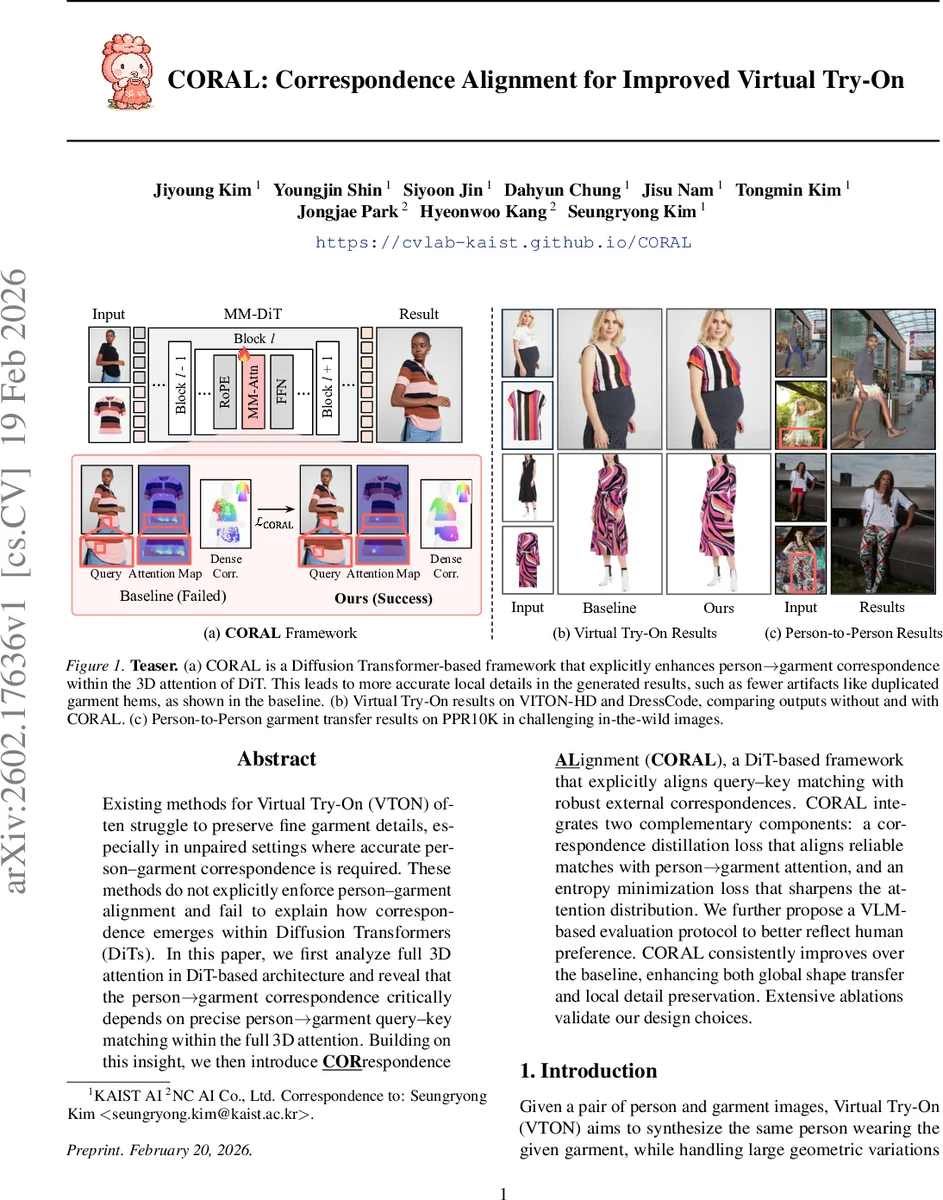

Existing methods for Virtual Try-On (VTON) often struggle to preserve fine garment details, especially in unpaired settings where accurate person-garment correspondence is required. These methods do not explicitly enforce person-garment alignment and fail to explain how correspondence emerges within Diffusion Transformers (DiTs). In this paper, we first analyze full 3D attention in DiT-based architecture and reveal that the person-garment correspondence critically depends on precise person-garment query-key matching within the full 3D attention. Building on this insight, we then introduce CORrespondence ALignment (CORAL), a DiT-based framework that explicitly aligns query-key matching with robust external correspondences. CORAL integrates two complementary components: a correspondence distillation loss that aligns reliable matches with person-garment attention, and an entropy minimization loss that sharpens the attention distribution. We further propose a VLM-based evaluation protocol to better reflect human preference. CORAL consistently improves over the baseline, enhancing both global shape transfer and local detail preservation. Extensive ablations validate our design choices.

💡 Research Summary

The paper tackles a fundamental limitation of current virtual try‑on (VTON) systems: the inability to reliably preserve fine‑grained garment details when the person and garment images are unpaired. Existing approaches either rely on U‑Net diffusion backbones or augment the pipeline with additional encoders, parsing maps, or pose conditions, but they do not explicitly enforce a strong correspondence between the person and the garment tokens. The authors observe that in Diffusion Transformers (DiTs) the full 3‑dimensional attention mechanism implicitly encodes the person‑to‑garment matching through query‑key interactions, and that the quality of the generated try‑on image correlates linearly with the precision of this attention‑derived matching.

To exploit this insight, they propose CORAL (Correspondence Alignment), a DiT‑based framework that directly aligns the attention‑based matching cost with external, high‑quality correspondences. The method introduces two complementary losses. First, a correspondence distillation loss uses dense matches extracted from the vision foundation model DINOv3 as pseudo‑ground‑truth and forces the person‑to‑garment attention matrix (A_{P→G}) to mimic these matches via an L2‑type alignment term. Second, an entropy minimization loss penalizes the Shannon entropy of A_{P→G}, encouraging the attention to become sharp and localized rather than diffuse. Together, these losses sharpen the query‑key matching without disrupting the value‑driven appearance synthesis that diffusion models excel at.

Architecturally, the authors adopt a “diptych” input layout: the latent representations of the garment and the person are concatenated side‑by‑side, while the pose encoding is added as a separate token sequence. Rotary position embeddings (RoPE) are shared between person and pose tokens so that spatial correspondence is preserved across the full attention layers. This design enables every DiT block to jointly attend to person, garment, and pose tokens, while the CORAL losses are applied only to the person‑to‑garment attention slice, leaving other conditioning pathways untouched.

The experimental evaluation spans several standard VTON benchmarks (VITON‑HD, DressCode) and a newly curated person‑to‑person unpaired dataset that reflects real‑world occlusions and pose variations. Quantitatively, CORAL improves SSIM from 0.91 to 0.94, reduces LPIPS from 0.12 to 0.08, and lowers FID by roughly 20 % compared with the baseline DiT model. The PCK@16 correspondence metric rises by 12 percentage points, confirming that tighter attention alignment translates into better visual fidelity. Human preference studies and a novel VLM‑based automatic evaluation both rank CORAL highest, especially noting superior preservation of logos, patterns, and garment hems that often collapse in prior methods.

Ablation experiments dissect the contribution of each component. Using only the distillation loss yields better correspondence but leaves the attention distribution somewhat scattered; using only entropy minimization creates overly sharp attention that can overfit to specific regions. The combination of both losses achieves the best trade‑off. Removing RoPE sharing degrades pose consistency, while omitting the diptych layout reduces the effectiveness of cross‑modal token interaction.

In summary, CORAL demonstrates that explicit alignment of query‑key attention with reliable external correspondences is a powerful and generalizable strategy for improving diffusion‑based image synthesis tasks. By integrating correspondence distillation and entropy regularization within a DiT framework, the method bridges the gap between semantic matching and high‑quality visual generation, setting a new state‑of‑the‑art for virtual try‑on and opening avenues for similar alignment techniques in other diffusion‑driven editing applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment