AutoNumerics: An Autonomous, PDE-Agnostic Multi-Agent Pipeline for Scientific Computing

PDEs are central to scientific and engineering modeling, yet designing accurate numerical solvers typically requires substantial mathematical expertise and manual tuning. Recent neural network-based approaches improve flexibility but often demand hig…

Authors: Jianda Du, Youran Sun, Haizhao Yang

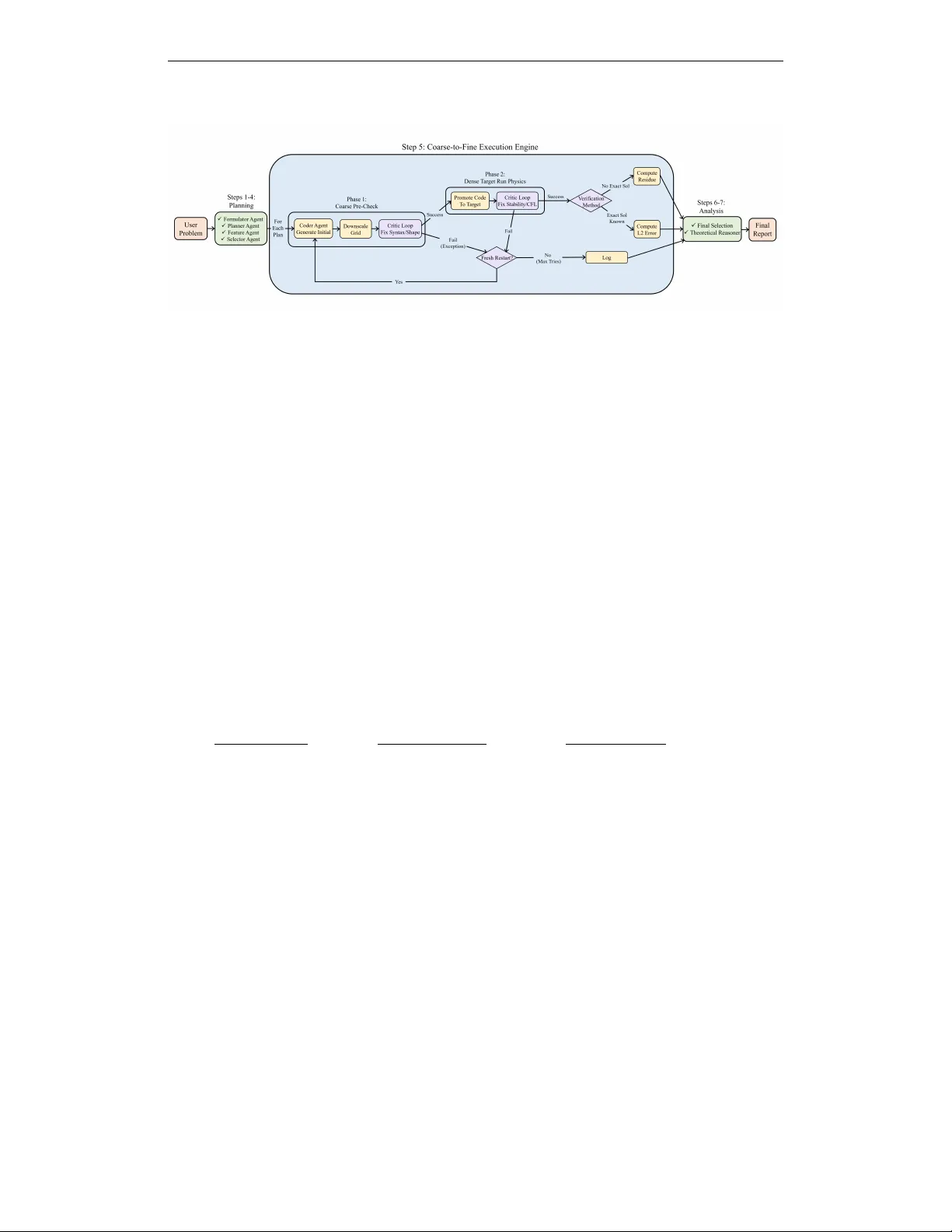

Jianda Du¹, Youran Sun¹, Haizhao Yang¹,²,*
¹ Department of Mathematics, University of Maryland, College Park, MD, USA
² Department of Computer Science, University of Maryland, College Park, MD, USA
jdu37576@umd.edu, sun1245@umd.edu, hzyang@umd.edu
* Corresponding author.

ABSTRACT

PDEs are central to scientific and engineering modeling, yet designing accurate numerical solvers typically requires substantial mathematical expertise and manual tuning. Recent neural network-based approaches improve flexibility but often demand high computational cost and suffer from limited interpretability. We introduce AutoNumerics, a multi-agent framework that autonomously designs, implements, debugs, and verifies numerical solvers for general PDEs directly from natural language descriptions. Unlike black-box neural solvers, our framework generates transparent solvers grounded in classical numerical analysis. We introduce a coarse-to-fine execution strategy and a residual-based self-verification mechanism. Experiments on 24 canonical and real-world PDE problems demonstrate that AutoNumerics achieves competitive or superior accuracy compared to existing neural and LLM-based baselines, and correctly selects numerical schemes based on PDE structural properties, suggesting its viability as an accessible paradigm for automated PDE solving.

1 INTRODUCTION

Partial differential equations (PDEs) form the mathematical foundation of modern physics, engineering, and many areas of scientific computing. Accurately solving PDEs is therefore a central task in computational research.
Traditionally, constructing a reliable numerical solver for a new PDE requires substantial expertise in numerical analysis, including the selection of appropriate discretization schemes (e.g., finite difference, finite element, or spectral methods) and verification of stability and convergence conditions such as the Courant–Friedrichs–Lewy (CFL) constraint (LeVeque, 2007). These classical approaches provide strong mathematical guarantees and interpretability, but their expert-driven design can limit accessibility and slow solver development for newly arising PDE models.

Neural network-based approaches such as physics-informed neural networks (PINNs) (Raissi et al., 2019) and operator-learning frameworks (Lu et al., 2019; Li et al., 2020) reduce reliance on hand-crafted discretizations but introduce new concerns around computational cost and interpretability. Large language models (LLMs) have recently demonstrated strong capabilities in scientific code generation (Zhang et al., 2024), and existing LLM-assisted PDE efforts include neural solver design (He et al., 2025; Jiang & Karniadakis, 2025), tool-oriented systems that invoke libraries such as FEniCS (Liu et al., 2025; Wu et al., 2025), and code-generation paradigms (Li et al., 2025). However, these approaches either produce black-box networks, are constrained by fixed library APIs, or lack mechanisms for autonomous debugging and correctness verification. We propose that LLMs can serve as numerical architects that directly generate transparent solver code from first principles, preserving interpretability while automating solver construction.

Translating this vision into a reliable system poses several technical challenges. First, LLM-generated code often contains syntax errors or logical flaws, and debugging these errors on high-resolution grids is both time-consuming and computationally wasteful.
Second, verifying solver correctness becomes difficult for PDEs lacking analytical solutions. Third, large-scale temporal simulations may lead to memory exhaustion.

We address these challenges with three corresponding solutions. A coarse-to-fine execution strategy first debugs logic errors on low-resolution grids before running on high-resolution grids. A residual-based self-verification mechanism evaluates solver quality for problems without analytical solutions by computing PDE residual norms. A history decimation mechanism enables large-scale temporal simulations through sparse storage of intermediate states.

Building on these design principles, we propose AutoNumerics, a multi-agent autonomous framework. The system receives natural language problem descriptions, proposes multiple candidate numerical strategies through a planning agent, implements executable solvers, and systematically evaluates their correctness and performance. We evaluate the framework on 24 representative PDE problems spanning canonical benchmarks and real-world applications. Results demonstrate consistent numerical scheme selection, stable solver synthesis, and reliable accuracy across diverse PDE classes.

Position relative to prior work. Existing LLM-assisted PDE efforts include neural solver design (He et al., 2025; Jiang & Karniadakis, 2025), tool-oriented systems that invoke libraries such as FEniCS (Liu et al., 2025; Wu et al., 2025), and code-generation paradigms (Li et al., 2025). AutoNumerics differs from all three. It generates interpretable classical numerical schemes (not black-box networks), automatically detects and filters ill-designed or non-expert numerical plan configurations, derives discretizations from first principles (not fixed library APIs), and includes a coarse-to-fine execution strategy with residual-based self-verification for autonomous correctness assessment. A detailed review of related work is provided in Appendix A.
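To make the history decimation idea concrete, the following is a minimal sketch of sparse snapshot storage during time stepping. It is not the code AutoNumerics generates: the function name `integrate_with_decimation`, the `store_every` parameter, and the toy periodic heat-equation step are all assumptions introduced for illustration.

```python
import numpy as np

def integrate_with_decimation(u0, step, n_steps, store_every):
    """Apply `step` n_steps times, keeping only every `store_every`-th state
    (plus the initial and final states) instead of the full history."""
    u = np.array(u0, dtype=float)
    snapshots = [(0, u.copy())]
    for n in range(1, n_steps + 1):
        u = step(u)
        if n % store_every == 0 or n == n_steps:
            snapshots.append((n, u.copy()))
    return u, snapshots

def heat_step(u, r=0.25):
    """Hypothetical forward-Euler step for u_t = nu * u_xx on a periodic grid,
    with r = nu * dt / dx**2 (r <= 0.5 keeps the explicit scheme stable)."""
    return u + r * (np.roll(u, -1) - 2.0 * u + np.roll(u, 1))

x = np.linspace(0.0, 2.0 * np.pi, 64, endpoint=False)
u_final, history = integrate_with_decimation(
    np.sin(x), heat_step, n_steps=1000, store_every=100
)
```

With these settings only 11 of the 1001 states are retained, so memory scales with `n_steps / store_every` rather than with `n_steps`.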
Contributions. The primary contributions of this work are:

• A multi-agent framework (AutoNumerics) that autonomously constructs transparent numerical PDE solvers from natural language descriptions.
• A reasoning module that detects ill-designed or non-expert PDE specifications and proactively filters or revises numerical plans that may lead to instability or invalid solutions.
• A coarse-to-fine execution strategy that decouples logic debugging from stability validation.
• A residual-based self-verification mechanism for solver evaluation without analytical solutions.
• A benchmark suite of 200 PDEs and systematic evaluation on 24 representative problems, with comparisons to neural network baselines and CodePDE.

2 METHOD

2.1 PROBLEM FORMULATION AND PLAN GENERATION

AutoNumerics consists of multiple specialized LLM agents coordinated by a central dispatcher. The system takes a natural language PDE problem description as input and produces executable numerical solver code with accuracy metrics as output. The overall architecture is illustrated in Figure 1.

Figure 1: The AutoNumerics pipeline. Steps 1–4 handle problem formulation and plan selection. Step 5 implements the coarse-to-fine execution strategy with Fresh Restart logic. Steps 6–7 perform verification and theoretical analysis.

The pipeline begins with the Formulator Agent, which converts the natural language description into a structured specification containing governing equations, boundary and initial conditions, and physical parameters. The Planner Agent then proposes multiple candidate schemes covering different discretization methods (e.g., finite difference, spectral, finite volume) and time-stepping strategies (explicit, implicit), while avoiding configurations that violate basic numerical stability and consistency principles. The Feature Agent extracts numerical features from both the problem and the proposed schemes, and the Selector Agent scores and ranks these candidates, further filtering out ill-designed or nonphysical plans before selecting the top-$k$ for execution.

2.2 COARSE-TO-FINE EXECUTION

Debugging LLM-generated code directly on high-resolution grids is computationally wasteful. We decouple logic debugging from stability validation through a coarse-to-fine strategy. In the coarse-grid phase, the solver runs at reduced resolution, and the Critic Agent fixes logic issues (syntax errors, shape mismatches). Once logic validation passes, the code is promoted to the high-resolution grid, where failures are treated as numerical stability issues and addressed by adjusting the time step. If repair attempts exceed the retry limit $M$ at either stage, the system triggers a Fresh Restart. The current code is discarded and the Coder Agent generates a new implementation from scratch, enabling the system to escape failed code paths. For large-scale temporal simulations, the Coder Agent is instructed to store solution snapshots only at sparse intervals to avoid memory exhaustion.

2.3 VERIFICATION AND ANALYSIS

Verifying solver correctness is a core challenge in automated PDE solving. Let $u$ denote the numerical solution, $u^*$ the analytic solution (when available), and $\mathcal{L}$ the PDE operator. When an explicit analytic solution exists, we compute the relative $L^2$ error; when no analytic solution is available, we evaluate the relative PDE residual; and for implicit analytic relations (e.g., conservation laws $F(u) = 0$), we measure the relative implicit residual.
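The three checks described above can be sketched with discrete $L^2$ norms on a uniform grid. This is an illustrative sketch, not the verification code used by the system: the function names, the `cell_volume` argument, and the 1D Poisson test problem are assumptions, and `EPS` is a small constant added to each denominator to guard against division by zero.

```python
import numpy as np

EPS = 1e-12  # small guard added to denominators (value taken as an assumption here)

def l2_norm(v, cell_volume=1.0):
    """Discrete L2 norm on a uniform grid: sqrt(sum(|v|^2) * cell_volume)."""
    v = np.asarray(v)
    return float(np.sqrt(np.sum(np.abs(v) ** 2) * cell_volume))

def relative_l2_error(u, u_exact, cell_volume=1.0):
    """Relative L2 error against an explicit analytic solution."""
    diff = np.asarray(u) - np.asarray(u_exact)
    return l2_norm(diff, cell_volume) / (l2_norm(u_exact, cell_volume) + EPS)

def relative_residual(Lu, f, cell_volume=1.0):
    """Relative PDE residual ||L(u) - f|| / (||f|| + eps)."""
    diff = np.asarray(Lu) - np.asarray(f)
    return l2_norm(diff, cell_volume) / (l2_norm(f, cell_volume) + EPS)

def relative_implicit_residual(F_u, F_ref, cell_volume=1.0):
    """Relative residual of an implicit relation F(u) = 0."""
    return l2_norm(F_u, cell_volume) / (l2_norm(F_ref, cell_volume) + EPS)

# Hypothetical check: -u'' = f on [0, 1] with u = sin(pi x), f = pi^2 sin(pi x).
n = 101
x = np.linspace(0.0, 1.0, n)
h = x[1] - x[0]
u = np.sin(np.pi * x)
f = np.pi ** 2 * np.sin(np.pi * x)
# Second-order central difference applied at interior points only.
Lu = -(u[2:] - 2.0 * u[1:-1] + u[:-2]) / h ** 2
e_res = relative_residual(Lu, f[1:-1], cell_volume=h)
e_l2 = relative_l2_error(u, np.sin(np.pi * x), cell_volume=h)
```

In this toy case the residual reflects only the truncation error of the second-order stencil, so it shrinks as the grid is refined.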
These three errors are defined respectively as

$$
e_{L^2} = \frac{\|u - u^*\|_{L^2(\Omega)}}{\|u^*\|_{L^2(\Omega)} + \epsilon}, \qquad
e_{\mathrm{res}} = \frac{\|\mathcal{L}(u) - f\|_{L^2(\Omega)}}{\|f\|_{L^2(\Omega)} + \epsilon}, \qquad
e_{\mathrm{impl}} = \frac{\|F(u)\|_{L^2(\Omega)}}{\|F_{\mathrm{ref}}\|_{L^2(\Omega)} + \epsilon}, \qquad
\text{where } \epsilon = 10^{-12}. \tag{1}
$$

Here $f$ denotes the right-hand side of the PDE written as $\mathcal{L}(u) = f$. Generated solvers are required to compute and return residuals, and the system enforces validity checks on these values. Finally, a Reasoning Agent generates theoretical analysis for the best-performing scheme.

3 EXPERIMENTS & RESULTS

3.1 EXPERIMENTAL SETUP

Benchmark: We evaluate our framework on two benchmarks. (1) CodePDE Benchmark. To enable fair comparison with existing neural network solvers and LLM-based methods, we adopt the benchmark proposed by CodePDE, which comprises 5 representative PDEs: 1D Advection, 1D Burgers, 2D Reaction-Diffusion, 2D Compressible Navier-Stokes (CNS), and 2D Darcy Flow. These problems span linear and nonlinear equations, elliptic and time-dependent types, as well as diverse boundary conditions and levels of numerical stiffness. (2) Our Benchmark. To more comprehensively assess the generality of our framework, we construct a large-scale benchmark suite containing 200 different PDEs, covering a wide range of common PDE families (Advection, Burgers, Fokker-Planck, Heat, Maxwell, Poisson, etc.). The PDEs in our benchmark range from 1D to 5D in spatial dimension and span elliptic, parabolic, and hyperbolic types as well as PDE systems. They include linear and nonlinear, stiff and non-stiff, steady-state and time-dependent problems, with Dirichlet, Neumann, and periodic boundary conditions.

Numerical Settings: The Planner Agent generates 10 candidate solver schemes and scores each one for every PDE problem. The top-5 schemes are passed to the Coder Agent for implementation. We set the maximum number of retries for code generation, coarse-grid execution, and high-resolution execution to 2, 4, and 6, respectively.
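The interaction between the two execution stages and the Fresh Restart can be sketched as a small driver loop. This is only a sketch under stated assumptions: the callables `generate`, `run`, `fix_logic`, and `fix_stability` are hypothetical stand-ins for the Coder and Critic Agents, the grid sizes are invented, and mapping the retry limits 2, 4, and 6 onto `max_restarts`, `max_coarse`, and `max_fine` is one possible reading of the settings above.

```python
def run_stage(solver, n, fix, max_retries, run):
    """Run `solver` at resolution n, applying `fix` after each failure.
    Returns the working solver, or None once the retry budget is spent."""
    for attempt in range(max_retries + 1):
        ok, info = run(solver, n)
        if ok:
            return solver
        if attempt < max_retries:
            solver = fix(solver, info)
    return None

def coarse_to_fine(generate, run, fix_logic, fix_stability,
                   coarse_n=32, fine_n=256,
                   max_coarse=4, max_fine=6, max_restarts=2):
    """Debug logic on a coarse grid, then treat fine-grid failures as stability issues."""
    for restart in range(max_restarts + 1):
        solver = generate()  # Fresh Restart: any previous code is discarded
        solver = run_stage(solver, coarse_n, fix_logic, max_coarse, run)
        if solver is None:
            continue
        solver = run_stage(solver, fine_n, fix_stability, max_fine, run)
        if solver is not None:
            return solver, restart
    return None, max_restarts

# Toy stand-ins (all hypothetical): a "solver" is a dict with a bug flag and a time step.
def toy_generate():
    return {"dt": 0.02, "bug": True}

def toy_run(solver, n):
    if solver["bug"]:
        return False, "shape mismatch"   # logic error, visible at any resolution
    if solver["dt"] > 1.0 / n:           # toy CFL-like bound dt <= dx
        return False, "blow-up"
    return True, "ok"

def toy_fix_logic(solver, info):
    return {**solver, "bug": False}

def toy_fix_stability(solver, info):
    return {**solver, "dt": solver["dt"] / 2.0}

solver, restarts = coarse_to_fine(toy_generate, toy_run, toy_fix_logic, toy_fix_stability)
```

In the toy run the logic bug is repaired on the 32-point grid, where each execution is cheap, and only the time step is adjusted on the 256-point grid.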
The maximum wall-clock time for each coarse-grid or high-resolution run is 120 seconds.

Evaluation Metrics: We evaluate solver accuracy using the three metrics defined in Section 2.3 ($e_{L^2}$, $e_{\mathrm{impl}}$, $e_{\mathrm{res}}$; Equation 1), depending on the available reference information. We also report execution time, defined as the wall-clock time from solver generation to the first successful evaluation.

3.2 RESULTS AND ANALYSIS

Table 1: nRMSE (normalized root mean square error) comparison with neural network baselines and CodePDE. All LLM-based methods (CodePDE and Ours) use GPT-4.1. CodePDE results are obtained under the Reasoning + Debugging + Refinement setting (best of 12).

| nRMSE (↓) | Advection | Burgers | React-Diff | CNS | Darcy | Geom. Mean |
|---|---|---|---|---|---|---|
| U-Net | $5.00 \times 10^{-2}$ | $2.20 \times 10^{-1}$ | $6.00 \times 10^{-3}$ | $3.60 \times 10^{-1}$ | − | − |
| FNO | $7.70 \times 10^{-3}$ | $7.80 \times 10^{-3}$ | $1.40 \times 10^{-3}$ | $9.50 \times 10^{-2}$ | $9.80 \times 10^{-3}$ | $9.52 \times 10^{-3}$ |
| PINN | $7.80 \times 10^{-3}$ | $8.50 \times 10^{-1}$ | $8.00 \times 10^{-2}$ | − | − | − |
| ORCA | $9.80 \times 10^{-3}$ | $1.20 \times 10^{-2}$ | $3.00 \times 10^{-3}$ | $6.20 \times 10^{-2}$ | − | − |
| PDEformer | $4.30 \times 10^{-3}$ | $1.46 \times 10^{-2}$ | − | − | − | − |
| UPS | $2.20 \times 10^{-3}$ | $3.73 \times 10^{-2}$ | $5.57 \times 10^{-2}$ | $4.50 \times 10^{-3}$ | − | − |
| CodePDE | $1.01 \times 10^{-3}$ | $3.15 \times 10^{-4}$ | $1.44 \times 10^{-1}$ | $1.53 \times 10^{-2}$ | $4.88 \times 10^{-3}$ | $5.08 \times 10^{-3}$ |
| Central Difference (Ill-designed) | $7.05 \times 10^{12}$ | $1.64 \times 10^{-2}$ | $1.23 \times 10^{-1}$ | $3.85$ | $2.34 \times 10^{-1}$ | − |
| Ours | $4.18 \times 10^{-14}$ | $1.79 \times 10^{-5}$ | $8.98 \times 10^{-7}$ | $1.82 \times 10^{-4}$ | $4.84 \times 10^{-13}$ | $9.00 \times 10^{-9}$ |

We select 24 representative problems from our 200-PDE benchmark suite, spanning 1D to 5D and covering elliptic, parabolic, and hyperbolic types (full results in Appendix Table 2). Among the 19 problems with explicit analytic solutions, 11 achieve relative $L^2$ errors below $10^{-5}$, with Poisson ($5.41 \times 10^{-16}$) and Helmholtz 2D ($3.50 \times 10^{-16}$) reaching near machine precision. Biharmonic ($6.14 \times 10^{-1}$) and 5D Helmholtz ($9.8 \times 10^{-1}$) are notable failure cases, indicating limited capability on fourth-order and high-dimensional PDEs. End-to-end runtimes fall between 20 and 130 seconds for most problems. A step-by-step walkthrough of the full pipeline on one example problem is provided in Appendix C.

Table 1 compares our method with six neural network baselines, CodePDE, and an ill-designed solver on the five CodePDE benchmark problems; all baseline results are reproduced from Li et al. (2025). Our method achieves the lowest nRMSE on all five problems, with a geometric mean of $9.00 \times 10^{-9}$, approximately six orders of magnitude below CodePDE ($5.08 \times 10^{-3}$) and the Fourier Neural Operator (FNO, $9.52 \times 10^{-3}$). As a reference point, the ill-designed central finite-difference baseline, obtained from an existing online implementation and applied naively without stability safeguards, yields a much larger nRMSE than our method on all five PDEs, reaching $7.05 \times 10^{12}$ on the advection case. This counterexample highlights the importance of stability-aware plan generation and selection in our pipeline for preventing such ill-designed solvers from being executed.

Analysis of the selected schemes across all 24 problems (see Appendix Table 5) reveals a consistent pattern: the Planner Agent selects Fourier spectral methods for periodic-boundary problems, finite difference or finite element methods for Dirichlet-boundary parabolic problems, and Chebyshev spectral methods for Dirichlet-boundary elliptic problems.

4 CONCLUSION

The Planner and Selector agents embed stability- and consistency-aware numerical reasoning into the generation process, enabling the pipeline to detect and exclude ill-designed or nonphysical solver configurations prior to execution. Through a subsequent coarse-to-fine execution strategy and residual-based self-verification, the system then performs end-to-end solver construction and quality assessment without requiring analytical solutions.
Experiments on 24 benchmark PDEs indicate that the framework selects numerical schemes consistent with PDE structural properties (e.g., spectral methods for periodic domains, finite differences for Dirichlet boundaries), and achieves lower error than both neural network baselines and CodePDE on all five CodePDE benchmark problems. The framework still exhibits limited accuracy on high-dimensional (≥ 5D) and high-order PDEs, and our evaluation covers only regular domains. The system is also coupled to a single LLM (GPT-4.1), and the generated code lacks formal convergence or stability guarantees.

ACKNOWLEDGMENTS

The authors were partially supported by the US National Science Foundation under awards IIS-2520978, GEO/RISE-5239902, the Office of Naval Research Award N00014-23-1-2007, DOE (ASCR) Award DE-SC0026052, and the DARPA D24AP00325-00. Approved for public release; distribution is unlimited.

REFERENCES

Sören Arlt, Haonan Duan, Felix Li, Sang Michael Xie, Yuhuai Wu, and Mario Krenn. Meta-designing quantum experiments with language models, 2024.

Andres M Bran, Sam Cox, Oliver Schilter, Carlo Baldassari, Andrew D White, and Philippe Schwaller. Chemcrow: Augmenting large-language models with chemistry tools. arXiv preprint arXiv:2304.05376, 2023.

Johannes Brandstetter, Daniel Worrall, and Max Welling. Message passing neural pde solvers. arXiv preprint arXiv:2202.03376, 2022.

Ricardo Buitrago, Tanya Marwah, Albert Gu, and Andrej Risteski. On the benefits of memory for modeling time-dependent PDEs. In The Thirteenth International Conference on Learning Representations, 2025.

Claudio Canuto, M Yousuff Hussaini, Alfio Quarteroni, and Thomas A Zang. Spectral methods: evolution to complex geometries and applications to fluid dynamics. Springer Science & Business Media, 2007.

Shuhao Cao. Choose a transformer: Fourier or galerkin. Advances in neural information processing systems, 34:24924–24940, 2021.

Xin He, Liangliang You, Hongduan Tian, Bo Han, Ivor Tsang, and Yew-Soon Ong. Lang-pinn: From language to physics-informed neural networks via a multi-agent framework, 2025. URL https://arxiv.org/abs/2510.05158.

Qile Jiang and George Karniadakis. Agenticsciml: Collaborative multi-agent systems for emergent discovery in scientific machine learning, 2025. URL 07262.

Zhengyao Jiang, Dominik Schmidt, Dhruv Srikanth, Dixing Xu, Ian Kaplan, Deniss Jacenko, and Yuxiang Wu. Aide: Ai-driven exploration in the space of code. arXiv preprint arXiv:2502.13138, 2025.

Randall J LeVeque. Finite difference methods for ordinary and partial differential equations: steady-state and time-dependent problems. SIAM, 2007.

Shanda Li, Tanya Marwah, Junhong Shen, Weiwei Sun, Andrej Risteski, Yiming Yang, and Ameet Talwalkar. Codepde: An inference framework for llm-driven pde solver generation, 2025. URL https://arxiv.org/abs/2505.08783.

Zongyi Li, Nikola Kovachki, Kamyar Azizzadenesheli, Burigede Liu, Kaushik Bhattacharya, Andrew Stuart, and Anima Anandkumar. Fourier neural operator for parametric partial differential equations, 2020.

Jianming Liu, Ren Zhu, Jian Xu, Kun Ding, Xu-Yao Zhang, Gaofeng Meng, and Cheng-Lin Liu. Pde-agent: A toolchain-augmented multi-agent framework for pde solving, 2025. URL https://arxiv.org/abs/2512.16214.

Lu Lu, Pengzhan Jin, and George Em Karniadakis. Deeponet: Learning nonlinear operators for identifying differential equations based on the universal approximation theorem of operators, 2019.

Lu Lu, Pengzhan Jin, Guofei Pang, Zhongqiang Zhang, and George Em Karniadakis. Learning nonlinear operators via deeponet based on the universal approximation theorem of operators. Nature machine intelligence, 3(3):218–229, 2021.

Pingchuan Ma, Tsun-Hsuan Wang, Minghao Guo, Zhiqing Sun, Joshua B. Tenenbaum, Daniela Rus, Chuang Gan, and Wojciech Matusik. Llm and simulation as bilevel optimizers: A new paradigm to advance physical scientific discovery. ArXiv, abs/2405.09783, 2024.

Michael McCabe, Bruno Régaldo-Saint Blancard, Liam Holden Parker, Ruben Ohana, Miles Cranmer, Alberto Bietti, Michael Eickenberg, Siavash Golkar, Geraud Krawezik, Francois Lanusse, Mariel Pettee, Tiberiu Tesileanu, Kyunghyun Cho, and Shirley Ho. Multiple physics pretraining for spatiotemporal surrogate models. In The Thirty-eighth Annual Conference on Neural Information Processing Systems, 2024.

Maziar Raissi, Paris Perdikaris, and George E Karniadakis. Physics-informed neural networks: A deep learning framework for solving forward and inverse problems involving nonlinear partial differential equations. Journal of Computational Physics, 378:686–707, 2019.

Bernardino Romera-Paredes, Mohammadamin Barekatain, Alexander Novikov, Matej Balog, M Pawan Kumar, Emilien Dupont, Francisco JR Ruiz, Jordan S Ellenberg, Pengming Wang, Omar Fawzi, et al. Mathematical discoveries from program search with large language models. Nature, 625(7995):468–475, 2024.

Junhong Shen, Tanya Marwah, and Ameet Talwalkar. UPS: Efficiently building foundation models for PDE solving via cross-modal adaptation. Transactions on Machine Learning Research, 2024.

Mauricio Soroco, Jialin Song, Mengzhou Xia, Kye Emond, Weiran Sun, and Wuyang Chen. Pde-controller: Llms for autoformalization and reasoning of pdes. arXiv preprint, 2025.

Shashank Subramanian, Peter Harrington, Kurt Keutzer, Wahid Bhimji, Dmitriy Morozov, Michael Mahoney, and Amir Gholami. Towards foundation models for scientific machine learning: Characterizing scaling and transfer behavior. arXiv preprint, 2023.

Xiangru Tang, Bill Qian, Rick Gao, Jiakang Chen, Xinyun Chen, and Mark Gerstein. Biocoder: A benchmark for bioinformatics code generation with large language models, 2024.

Ke Wang, Houxing Ren, Aojun Zhou, Zimu Lu, Sichun Luo, Weikang Shi, Renrui Zhang, Linqi Song, Mingjie Zhan, and Hongsheng Li. Mathcoder: Seamless code integration in llms for enhanced mathematical reasoning, 2023.

Haoyang Wu, Xinxin Zhang, and Lailai Zhu. Automated code development for pde solvers using large language models, 2025.

Yu Zhang, Xiusi Chen, Bowen Jin, Sheng Wang, Shuiwang Ji, Wei Wang, and Jiawei Han. A comprehensive survey of scientific large language models and their applications in scientific discovery, 2024.

Jianwei Zheng, LiweiNo, Ni Xu, Junwei Zhu, XiaoxuLin, and Xiaoqin Zhang. Alias-free mamba neural operator. In The Thirty-eighth Annual Conference on Neural Information Processing Systems, 2024.

Lianhao Zhou, Hongyi Ling, Cong Fu, Yepeng Huang, Michael Sun, Wendi Yu, Xiaoxuan Wang, Xiner Li, Xingyu Su, Junkai Zhang, Xiusi Chen, Chenxing Liang, Xiaofeng Qian, Heng Ji, Wei Wang, Marinka Zitnik, and Shuiwang Ji. Autonomous agents for scientific discovery: Orchestrating scientists, language, code, and physics, 2025. URL 09901.

O.C. Zienkiewicz and R.L. Taylor. The Finite Element Method: Its Basis and Fundamentals. Butterworth-Heinemann, 2013.

A RELATED WORK

Classical Numerical Methods. Classical numerical analysis remains the foundation for solving PDEs. The finite difference method approximates derivatives using grid-based differences (LeVeque, 2007). The finite element method represents solutions over mesh elements (Zienkiewicz & Taylor, 2013). Spectral methods expand solutions in global basis functions (Canuto et al., 2007). Despite their mathematical rigor, constructing effective solvers typically requires substantial expertise in discretization design and stability verification, motivating interest in automated solver construction.

Neural and Data-Driven PDE Solvers.
Scientific machine learning has introduced neural-network-based approaches for approximating PDE solutions, including PINNs (Raissi et al., 2019) and neural operators (Lu et al., 2021; Li et al., 2020). Subsequent work explores Transformers (Cao, 2021), message-passing neural networks (Brandstetter et al., 2022), state-space models (Zheng et al., 2024; Buitrago et al., 2025), and pretrained multiphysics foundation models (Shen et al., 2024; Subramanian et al., 2023; McCabe et al., 2024).

LLMs for Scientific Computing and PDE Automation. Large language models have demonstrated strong capability in generating executable scientific code across chemistry (Bran et al., 2023), physics (Arlt et al., 2024), mathematics (Wang et al., 2023), and computational biology (Tang et al., 2024). Agentic reasoning frameworks extend these capabilities through planning and structured tool interaction (Romera-Paredes et al., 2024; Ma et al., 2024; Jiang et al., 2025; Zhou et al., 2025). FunSearch (Romera-Paredes et al., 2024) demonstrates program search for mathematical structure discovery, while PDE-Controller (Soroco et al., 2025) explores LLM-driven autoformalization for PDE control. Closer to automated PDE solving, neural solver design frameworks construct PINNs via multi-agent reasoning (He et al., 2025; Jiang & Karniadakis, 2025), tool-oriented systems orchestrate libraries such as FEniCS (Liu et al., 2025; Wu et al., 2025), and code-generation paradigms synthesize candidate solvers (Li et al., 2025).

B FULL BENCHMARK RESULTS

Table 2 reports per-problem accuracy and runtime for all 24 benchmark PDEs.

Table 2: Evaluation of proposed framework across 24 benchmark PDEs. The upper block reports relative $L^2$ error for problems with known analytic solutions; the lower block reports relative residual error.

| PDE | Dim | Error | Runtime (s) |
|---|---|---|---|
| *Explicit analytic solution available (relative $L^2$ error)* | | | |
| Advection | 2 | $1.13 \times 10^{-13}$ | 29.8 |
| Allen-Cahn | 1 | $2.23 \times 10^{-4}$ | 19.8 |
| Biharmonic | 2 | $6.14 \times 10^{-1}$ | 89.3 |
| Convection Diffusion | 2 | $8.57 \times 10^{-3}$ | 34.6 |
| Euler | 1 | $5.21 \times 10^{-14}$ | 26.0 |
| Heat | 1 | $3.21 \times 10^{-7}$ | 97.4 |
| Heat | 2 | $1.50 \times 10^{-4}$ | 228.1 |
| Helmholtz | 2 | $3.50 \times 10^{-16}$ | 66.3 |
| Helmholtz | 5 | $9.8 \times 10^{-1}$ | 65.8 |
| KdV | 1 | $2.36 \times 10^{-7}$ | 52.2 |
| Laplace | 2 | $1.24 \times 10^{-5}$ | 85.9 |
| Maxwell | 3 | $1.00 \times 10^{-3}$ | 126.1 |
| Navier–Stokes | 2 | $8.08 \times 10^{-6}$ | 64.5 |
| Poisson | 2 | $5.41 \times 10^{-16}$ | 68.9 |
| Reaction Diffusion | 2 | $9.88 \times 10^{-6}$ | 199.5 |
| Schrödinger | 1 | $5.40 \times 10^{-14}$ | 32.2 |
| Shallow Water | 1 | $1.67 \times 10^{-10}$ | 18.5 |
| Vorticity | 2 | $3.32 \times 10^{-4}$ | 54.1 |
| Wave | 1 | $8.34 \times 10^{-10}$ | 73.1 |
| *Implicit analytic solution available (relative implicit residual error)* | | | |
| Burgers (inviscid) | 1 | $5.65 \times 10^{-4}$ | 23.4 |
| *No analytic solution (relative residual error)* | | | |
| Burgers (viscous) | 1 | $8.95 \times 10^{-14}$ | 63.1 |
| Cahn–Hilliard | 1 | $9.88 \times 10^{-4}$ | 114.9 |
| Fokker–Planck | 2 | $2.24 \times 10^{-3}$ | 44.3 |
| Gray–Scott | 2 | $1.10 \times 10^{-3}$ | 23.7 |

C PIPELINE WALKTHROUGH: 2D ADVECTION

We walk through the full pipeline output for 2D Advection ($u_t + c_x u_x + c_y u_y = 0$, periodic BCs, $c_x = 0.3$, $c_y = 0.2$).

Step 1: Planner Agent. The Planner generates 10 candidate schemes spanning spectral, finite difference (FD), finite volume (FV), and finite element (FEM) methods with various time integrators (RK4: classical fourth-order Runge-Kutta; IMEX: implicit-explicit; ETDRK4: exponential time differencing RK4). The Selector Agent scores each based on expected accuracy, stability, and cost. Table 3 lists all candidates.

Table 3: Candidate schemes generated by the Planner Agent and scored by the Selector Agent for the 2D Advection problem.

| Plan | Score | Method | Rationale (summary) |
|---|---|---|---|
| Spectral (RK4, high-res) | 90 | Spectral Fourier | Optimal for smooth periodic advection |
| FD (WENO3+RK3, high-res) | 85 | Finite Difference | High-order upwind, conservative |
| Spectral (ETDRK4, med-res) | 80 | Spectral Fourier | Good accuracy-cost balance |
| FV (MUSCL+RK2, med-res) | 75 | Finite Volume | Limiter controls oscillations |
| FD (semi-Lagrangian, med-res) | 70 | Finite Difference | Large time steps, second-order |
| FEM (IMEX, med-res) | 60 | Finite Element | Stable but diffusion treatment irrelevant |
| FD (upwind, low-res) | 55 | Finite Difference | Stable but first-order accuracy |
| FD (Crank-Nicolson, med-res) | 50 | Finite Difference | No upwinding, oscillation risk |
| FV (upwind, low-res) | 50 | Finite Volume | Stable but low accuracy |
| FEM (backward Euler, med-res) | 45 | Finite Element | Implicit cost unjustified |

Step 2: Coder + Critic Agents. The top-5 plans are implemented and executed through the coarse-to-fine pipeline. Table 4 reports the execution results.

Table 4: Execution results for the top-5 candidate schemes on the 2D Advection problem.

| Plan | Residual $L^2$ | Runtime (s) | Attempts | Restarts |
|---|---|---|---|---|
| Spectral (RK4, high-res) | $1.75 \times 10^{-3}$ | 23.8 | 2 | 0 |
| FD (WENO3+RK3, high-res) | $3.18 \times 10^{4}$ | 57.5 | 4 | 0 |
| Spectral (ETDRK4, med-res) | $8.02 \times 10^{-15}$ | 35.3 | 4 | 0 |
| FV (MUSCL+RK2, med-res) | $1.94 \times 10^{-1}$ | 33.2 | 2 | 0 |
| FD (semi-Lagrangian, med-res) | $2.27 \times 10^{-2}$ | 15.8 | 2 | 0 |

Step 3: Final Selection. The Selector Agent chooses Spectral (ETDRK4, med-res) based on its residual of $8.02 \times 10^{-15}$ (near machine precision) at a moderate runtime of 35.3 s. The high-resolution spectral plan, despite scoring highest in planning, produces a larger residual ($1.75 \times 10^{-3}$), likely due to time-stepping error at coarser $\Delta t$. The FD (WENO3+RK3) plan diverges entirely (residual $3.18 \times 10^{4}$). This example illustrates how the pipeline's evaluate-then-select strategy can override initial scoring when execution results differ from expectations.
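One plausible reason an exponential integrator reaches roundoff-level accuracy here is that, for a linear constant-coefficient advection equation on a periodic domain, each Fourier mode evolves by a pure phase factor, so the time integration can be exact. The sketch below illustrates this property only; it is not the solver generated by the pipeline, and the domain $[0, 2\pi)^2$, the grid size, the final time, and the initial condition are all assumptions.

```python
import numpy as np

def advect_spectral(u0, cx, cy, t, length=2.0 * np.pi):
    """Propagate u_t + cx*u_x + cy*u_y = 0 on a periodic square of side `length`
    by multiplying each Fourier mode with exp(-i (cx*kx + cy*ky) t)."""
    n_x, n_y = u0.shape
    kx = 2.0 * np.pi * np.fft.fftfreq(n_x, d=length / n_x)  # angular wavenumbers
    ky = 2.0 * np.pi * np.fft.fftfreq(n_y, d=length / n_y)
    KX, KY = np.meshgrid(kx, ky, indexing="ij")
    phase = np.exp(-1j * (cx * KX + cy * KY) * t)
    return np.real(np.fft.ifft2(np.fft.fft2(u0) * phase))

def initial_condition(x, y):
    """Hypothetical smooth periodic initial state."""
    return np.sin(x) * np.cos(2.0 * y)

n = 32
x = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
X, Y = np.meshgrid(x, x, indexing="ij")
cx, cy, t = 0.3, 0.2, 1.0

u_num = advect_spectral(initial_condition(X, Y), cx, cy, t)
# The exact solution is the initial state shifted by (cx*t, cy*t).
u_ref = initial_condition(X - cx * t, Y - cy * t)
rel_err = np.linalg.norm(u_num - u_ref) / (np.linalg.norm(u_ref) + 1e-12)
```

Because no time-step truncation error enters, the remaining error comes from floating-point roundoff in the transforms. An explicit Runge-Kutta integrator, by contrast, accumulates an error that depends on $\Delta t$.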

D SCHEME SELECTION RESULTS

Table 5 lists the numerical scheme automatically selected by the Planner Agent for each benchmark PDE. The schemes are grouped by boundary condition type. For periodic-boundary problems, the pipeline mostly selects Fourier spectral methods (three of the five listed problems; Navier–Stokes and Shallow Water use FEM and FD, respectively). For Dirichlet-boundary parabolic problems, finite difference (FD) or finite element methods (FEM) with implicit or implicit-explicit time stepping are preferred. For Dirichlet-boundary elliptic problems, Chebyshev spectral methods are selected.

Table 5: Numerical schemes selected by the Planner Agent for each benchmark PDE.

| PDE | Dim. | BC | PDE Type | Selected Scheme |
|---|---|---|---|---|
| *Periodic boundary conditions* | | | | |
| Advection | 2 | Periodic | Hyperbolic | Spectral Fourier (RK4) |
| Convection Diffusion | 2 | Periodic | Parabolic | Spectral Fourier (IMEX) |
| Schrödinger | 1 | Periodic | Dispersive | Spectral Fourier (Split-Step) |
| Navier–Stokes | 2 | Periodic | Parabolic | FEM (IMEX) |
| Shallow Water | 1 | Periodic | Hyperbolic | FD (explicit) |
| *Dirichlet boundary conditions, parabolic* | | | | |
| Allen-Cahn | 1 | Dirichlet | Parabolic | FD (Crank-Nicolson) |
| Burgers (viscous) | 1 | Dirichlet | Parabolic | FD (implicit) |
| Heat | 1 | Dirichlet | Parabolic | FEM (Crank-Nicolson) |
| Heat | 2 | Dirichlet | Parabolic | FD (Crank-Nicolson) |
| Reaction Diffusion | 2 | Dirichlet | Parabolic | FD (IMEX) |
| *Dirichlet boundary conditions, elliptic* | | | | |
| Helmholtz | 2 | Dirichlet | Elliptic | Spectral Chebyshev |
| Laplace | 2 | Dirichlet | Elliptic | Spectral Chebyshev |
| Poisson | 2 | Dirichlet | Elliptic | Spectral Chebyshev |
| *Dirichlet boundary conditions, hyperbolic* | | | | |
| Wave | 1 | Dirichlet | Hyperbolic | Spectral (explicit) |

AI USAGE

This work used large language models for language polishing, formatting assistance, and limited code suggestions.
