Neural Implicit Representations for 3D Synthetic Aperture Radar Imaging

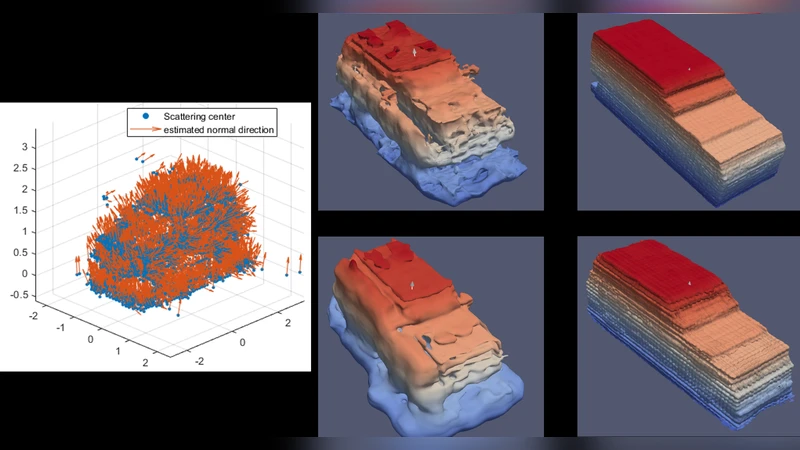

Synthetic aperture radar (SAR) is a tomographic sensor that measures 2D slices of the 3D spatial Fourier transform of the scene. In many operational scenarios, the measured set of 2D slices does not fill the 3D Fourier space, resulting in significant artifacts in the reconstructed imagery. Traditionally, simple priors, such as sparsity in the image domain, are used to regularize the inverse problem. In this paper, we review our recent work that achieves state-of-the-art results in 3D SAR imaging by employing neural structures to model the surface scattering that dominates SAR returns. These neural structures encode the surface of the objects in the form of a signed distance function learned from the sparse scattering data. Since estimating a smooth surface from a sparse and noisy point cloud is an ill-posed problem, we regularize the surface estimation by sampling points from the implicit surface representation during the training step. We demonstrate the model’s ability to represent target scattering using measured and simulated data from single vehicles and from a larger scene containing many vehicles. We conclude with future research directions calling for methods that learn complex-valued neural representations, which would enable synthesizing new collections from the volumetric neural implicit representation.

💡 Research Summary

Synthetic aperture radar (SAR) is a coherent imaging modality that collects two‑dimensional slices of the three‑dimensional spatial Fourier transform of a scene. In practice, the set of measured slices is often incomplete because of limited viewing angles and platform‑motion constraints, so large regions of the 3‑D Fourier domain are never sampled. This incompleteness leads to severe artifacts, such as streaking, ringing, and noise amplification, when the 3‑D volume is reconstructed with conventional back‑projection or sparsity‑based regularization.
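To make the coverage argument concrete, here is a toy NumPy sketch; it is an illustration under simplifying assumptions, not the paper's processing chain. Each circular pass at a fixed elevation is idealized as a cone of full azimuth and unlimited bandwidth in 3‑D k-space, only the Fourier samples near a few such cones are kept, and the volume is recovered with a zero-filled inverse FFT. The helper names `cone_mask`, `reconstruct`, and `peak_energy_fraction`, the grid size, and the pass elevations are all hypothetical.

```python
import numpy as np

def cone_mask(n, elevations_deg, tol_deg=1.5):
    """Boolean mask of k-space samples lying near the cones traced by circular
    passes at the given elevation angles (toy geometry: full 360-degree azimuth,
    unlimited bandwidth)."""
    f = np.arange(n) - n // 2                            # centered frequency indices
    kx, ky, kz = np.meshgrid(f, f, f, indexing="ij")
    elev = np.degrees(np.arctan2(kz, np.hypot(kx, ky)))  # signed elevation of each sample
    mask = np.zeros((n, n, n), dtype=bool)
    for e in elevations_deg:
        mask |= np.abs(elev - e) <= tol_deg
    return mask

def reconstruct(scene, mask):
    """Zero-fill the unmeasured Fourier samples and invert with an inverse FFT."""
    spectrum = np.fft.fftshift(np.fft.fftn(scene))
    return np.abs(np.fft.ifftn(np.fft.ifftshift(spectrum * mask)))

def peak_energy_fraction(img):
    """Fraction of total image energy contained in the brightest voxel."""
    return img.max() ** 2 / np.sum(img ** 2)

n = 32
scene = np.zeros((n, n, n))
scene[n // 2, n // 2, n // 2 + 4] = 1.0                  # a single point scatterer

full = reconstruct(scene, np.ones((n, n, n), dtype=bool))
sparse_mask = cone_mask(n, elevations_deg=[30.0, 35.0, 40.0])
sparse = reconstruct(scene, sparse_mask)

print(f"k-space coverage with 3 passes: {sparse_mask.mean():.1%}")
print(f"peak-voxel energy, full coverage: {peak_energy_fraction(full):.3f}")
print(f"peak-voxel energy, 3 passes:      {peak_energy_fraction(sparse):.3f}")
```

For a point scatterer, the energy fraction left in the brightest voxel works out to exactly the sampled fraction of k-space, so with only a few percent coverage most of the energy leaks into sidelobes spread through the volume. That leakage is the streaking and ringing described above.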

The authors of this paper propose a fundamentally different approach: rather than treating the reconstruction as a volumetric inverse problem, they model the dominant scattering mechanism of SAR as a smooth surface. This surface is represented implicitly by a signed distance function (SDF) learned with a multilayer perceptron (MLP). The SDF maps any 3‑D coordinate to a scalar value indicating its signed distance to the surface; the zero‑level set of the function corresponds to the physical object boundary. By learning this function directly from sparse and noisy SAR point clouds, the method sidesteps explicit voxel grids: the network can be queried at any continuous coordinate and can capture fine geometric detail with far fewer parameters than a dense grid of comparable resolution.
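As a minimal illustration of the SDF interface, the NumPy sketch below pairs an exact sphere SDF, which shows the sign convention, with a tiny untrained MLP that maps 3‑D coordinates to a scalar. The architecture (three hidden layers of width 64, softplus activations, He-style initialization) and the class name `MLPSDF` are assumptions taken from common neural-SDF practice, not details reported for this paper.

```python
import numpy as np

def sphere_sdf(points, center, radius):
    """Exact signed distance to a sphere: negative inside, zero on the surface, positive outside."""
    return np.linalg.norm(points - center, axis=-1) - radius

class MLPSDF:
    """Hypothetical tiny MLP f: R^3 -> R whose zero-level set would represent the surface once trained."""

    def __init__(self, hidden=64, layers=3, seed=0):
        rng = np.random.default_rng(seed)
        dims = [3] + [hidden] * layers + [1]
        # He-style random initialization; neural-SDF code often uses a geometric initialization instead.
        self.weights = [rng.normal(0.0, np.sqrt(2.0 / d_in), size=(d_in, d_out))
                        for d_in, d_out in zip(dims[:-1], dims[1:])]
        self.biases = [np.zeros(d_out) for d_out in dims[1:]]

    def num_params(self):
        return sum(w.size + b.size for w, b in zip(self.weights, self.biases))

    def __call__(self, points):
        h = points
        for w, b in zip(self.weights[:-1], self.biases[:-1]):
            h = np.logaddexp(0.0, 100.0 * (h @ w + b)) / 100.0  # smooth softplus with beta = 100
        return (h @ self.weights[-1] + self.biases[-1])[..., 0]

pts = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0]])
print(sphere_sdf(pts, center=np.zeros(3), radius=1.0))  # [-1.  0.  1.]

net = MLPSDF()
print(net(pts).shape)  # (3,): one signed-distance estimate per query point
print(net.num_params(), "network weights vs", 128 ** 3, "voxels in a 128^3 grid")
```

The network's parameter count is fixed regardless of how finely it is queried. Once trained, a mesh can be extracted from the zero‑level set, for example by evaluating f on a grid and running marching cubes.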

Training proceeds with a composite loss. A data‑fidelity term fits the SDF to the sparse, noisy point cloud of scattering data, encouraging the zero‑level set to pass through the measured scattering locations. Because fitting a smooth surface to such a point cloud is ill‑posed, a surface‑regularization term samples points from the current implicit surface at each training iteration and uses them to constrain the shape of the estimated surface. Both terms are differentiable with respect to the network weights, so they are minimized jointly by gradient descent. This dual‑objective formulation keeps the surface consistent with the measured scattering while encouraging a smooth, well‑behaved geometry.
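The sketch below illustrates one common way to build such a composite objective for a neural SDF. The specific terms are assumptions, not the authors' published losses. It uses a point-cloud data term (the mean of |f| at scatterer locations, which should lie on the zero‑level set). It samples points from the current implicit surface by projecting random points with one Newton-style step along the gradient. It then applies an eikonal penalty (‖∇f‖ = 1) to those samples, a regularizer popularized by implicit geometric regularization (IGR). To stay dependency-free, it evaluates fixed analytic candidate fields with finite-difference gradients; an actual training loop would update network weights using automatic differentiation (e.g., PyTorch or JAX). The names `numerical_grad`, `project_to_surface`, `composite_loss`, and `lam` are hypothetical.

```python
import numpy as np

def numerical_grad(sdf, x, eps=1e-4):
    """Central finite-difference gradient of a scalar field sdf: (N, 3) -> (N,)."""
    grads = np.zeros_like(x)
    for i in range(3):
        step = np.zeros(3)
        step[i] = eps
        grads[:, i] = (sdf(x + step) - sdf(x - step)) / (2.0 * eps)
    return grads

def project_to_surface(sdf, x):
    """Move points toward the zero-level set with one Newton-style step:
    x - f(x) * grad f(x) / ||grad f(x)||^2 (exact for a sphere SDF)."""
    g = numerical_grad(sdf, x)
    return x - (sdf(x) / np.sum(g * g, axis=1))[:, None] * g

def composite_loss(sdf, scatterers, rng, n_samples=512, lam=0.1):
    """Hypothetical composite loss: point-cloud data term + eikonal term on surface samples."""
    # Data term: measured scatterers should lie on the zero-level set, f(x) = 0.
    data_term = np.mean(np.abs(sdf(scatterers)))
    # Sample points from the current implicit surface: draw uniform points in the
    # scatterers' bounding box and project them onto f = 0.
    lo, hi = scatterers.min(axis=0), scatterers.max(axis=0)
    samples = project_to_surface(sdf, rng.uniform(lo, hi, size=(n_samples, 3)))
    # Eikonal regularizer: a valid SDF has a unit-norm gradient, ||grad f|| = 1.
    grad_norm = np.linalg.norm(numerical_grad(sdf, samples), axis=1)
    eikonal = np.mean((grad_norm - 1.0) ** 2)
    return data_term + lam * eikonal

rng = np.random.default_rng(0)
scatterers = rng.normal(size=(256, 3))
scatterers /= np.linalg.norm(scatterers, axis=1, keepdims=True)  # toy point cloud on a unit sphere

candidates = {
    "exact SDF": lambda x: np.linalg.norm(x, axis=1) - 1.0,
    "wrong radius": lambda x: np.linalg.norm(x, axis=1) - 0.8,
    "scaled (not a distance)": lambda x: 2.0 * (np.linalg.norm(x, axis=1) - 1.0),
}
losses = {name: composite_loss(f, scatterers, rng) for name, f in candidates.items()}
for name, value in losses.items():
    print(f"{name:>24}: {value:.4f}")
```

The exact SDF scores near zero. The wrong-radius field is penalized by the data term (about 0.2, the radius error). The scaled field fits the points perfectly but is penalized by the eikonal term (lam × (2 − 1)² = 0.1), which is the kind of non-distance field the regularizer is meant to rule out.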

The authors evaluate the method on three data sets: (1) real SAR measurements of a single vehicle, (2) simulated SAR data generated from high‑fidelity CAD models of vehicles, and (3) a large‑scale scene containing dozens of vehicles arranged in a realistic traffic configuration. Baselines include filtered back‑projection, L1‑sparsity regularization, total variation (TV), and a recent 3‑D convolutional neural network that directly predicts voxel intensities. Three quantitative metrics, the structural similarity index (SSIM), peak signal‑to‑noise ratio (PSNR), and Chamfer distance to ground‑truth surfaces, show consistent improvements for the proposed implicit‑SDF approach. For example, SSIM rises from 0.78 (TV) to 0.92, PSNR rises from 24 dB to 28.5 dB, and Chamfer distance drops by roughly 30% relative to the best baseline. Qualitatively, the reconstructions preserve sharp edges and thin structures (e.g., vehicle wheels and antennae) and keep adjacent objects clearly separated, whereas the baseline methods suffer from blending and ghosting artifacts.
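Of the three metrics, PSNR and Chamfer distance are simple enough to sketch directly. The NumPy functions below are illustrative. Chamfer distance has several conventions (squared versus unsquared distances, sum versus mean), and the convention behind the reported numbers is not stated here, so this version, which sums the mean unsquared nearest-neighbour distance in each direction, is an assumption. For SSIM, scikit-image provides `skimage.metrics.structural_similarity`.

```python
import numpy as np

def chamfer_distance(a, b):
    """Symmetric Chamfer distance between point sets a (N, 3) and b (M, 3):
    mean nearest-neighbour distance from a to b plus from b to a.
    Brute force with an (N, M) distance matrix; fine for a few thousand points."""
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)
    return d.min(axis=1).mean() + d.min(axis=0).mean()

def psnr(reference, estimate, data_range=None):
    """Peak signal-to-noise ratio in dB; data_range defaults to the reference's span."""
    if data_range is None:
        data_range = reference.max() - reference.min()
    mse = np.mean((reference - estimate) ** 2)
    return 10.0 * np.log10(data_range ** 2 / mse)

a = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
b = np.array([[0.0, 0.0, 0.0], [1.0, 0.5, 0.0]])
print(chamfer_distance(a, b))   # 0.25 + 0.25 = 0.5

ref = np.linspace(0.0, 1.0, 100)
print(psnr(ref, ref + 0.1))     # MSE of 0.01 with a data range of 1, roughly 20 dB
```

For the dense point clouds of a full scene, a k-d tree (for example `scipy.spatial.cKDTree`) avoids the quadratic memory cost of the brute-force distance matrix.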

Despite its successes, the current implementation works with real‑valued SDFs and does not directly model the complex‑valued SAR signal (amplitude and phase). The authors outline a future research direction toward complex‑valued neural implicit representations, which would enable end‑to‑end learning of both magnitude and phase and potentially allow synthetic generation of new SAR collections from the learned volumetric model. They also acknowledge computational challenges: training on large scenes requires substantial memory and compute, suggesting the need for multi‑scale architectures, hierarchical sampling, or hybrid schemes that combine traditional regularization with neural priors. Real‑time deployment and integration with other modalities (LiDAR, optical imagery) are identified as promising extensions.
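The idea of synthesizing new collections can be made concrete with the idealized far-field, point-scatterer model, in which each measurement is a sample of the 3‑D Fourier transform of the complex reflectivity at spatial frequency k = (2f/c)·û. The sketch below is illustrative only and is not from the paper. It ignores occlusion, antenna patterns, and polarization, and sign conventions for the phase vary between texts. A complex-valued neural representation would supply the reflectivity ρ at each location; here ρ and the scatterer positions are fixed hypothetical values, as are the names `kspace_samples` and `synthesize_phase_history` and the frequency and aperture parameters.

```python
import numpy as np

C = 299_792_458.0  # speed of light (m/s)

def kspace_samples(freqs_hz, azimuths_rad, elevation_rad):
    """Spatial-frequency sample positions (cycles/m) for one idealized pass:
    k = (2 f / c) * look direction, for every (azimuth, frequency) pair."""
    az, f = np.meshgrid(azimuths_rad, freqs_hz, indexing="ij")
    look = np.stack([np.cos(elevation_rad) * np.cos(az),
                     np.cos(elevation_rad) * np.sin(az),
                     np.full_like(az, np.sin(elevation_rad))], axis=-1)
    return (2.0 * f / C)[..., None] * look              # shape (n_az, n_freq, 3)

def synthesize_phase_history(points, reflectivity, k):
    """Far-field point-scatterer model: S(k) = sum_i rho_i * exp(-j 2 pi k . x_i)."""
    phase = -2j * np.pi * (k @ points.T)                 # shape (n_az, n_freq, n_points)
    return np.exp(phase) @ reflectivity                  # shape (n_az, n_freq)

freqs = np.linspace(9.5e9, 10.5e9, 64)                   # illustrative X-band sweep
azimuths = np.radians(np.linspace(-5.0, 5.0, 128))       # a new 10-degree aperture
k = kspace_samples(freqs, azimuths, np.radians(30.0))
points = np.array([[0.0, 0.0, 0.0], [1.2, -0.4, 0.3]])  # hypothetical scatterer positions (m)
rho = np.array([1.0 + 0.0j, 0.5 * np.exp(1j * np.pi / 4)])  # hypothetical complex reflectivities
S = synthesize_phase_history(points, rho, k)
print(S.shape)  # (128, 64)
```

The (azimuth, frequency) samples cover a patch of the 30-degree elevation cone in k-space, one of the 2‑D slices described at the start of the summary. A magnitude-only representation cannot fill in ρ's phase, which is why complex-valued models are needed for this kind of synthesis.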

In summary, this paper introduces a physics‑aware, neural‑implicit surface representation for 3‑D SAR imaging. By framing the reconstruction as learning a signed distance function from sparse scattering data and regularizing the surface during training, the method achieves state‑of‑the‑art image quality, reduces classic SAR artifacts, and opens a pathway toward more expressive complex‑valued neural models and multimodal SAR data synthesis.
