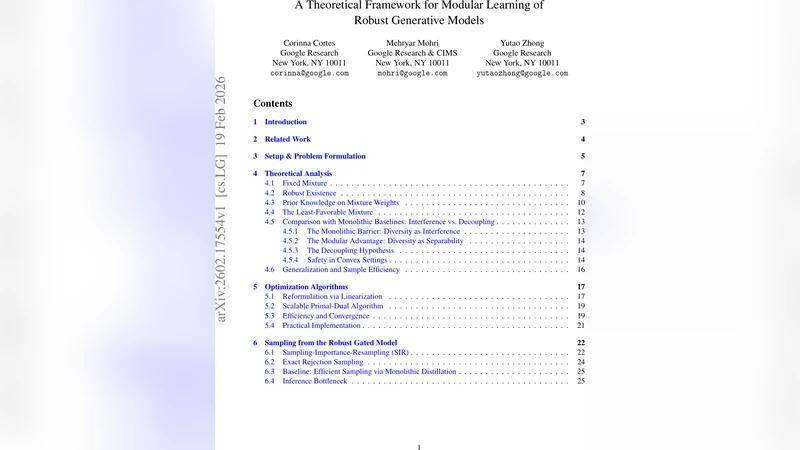

A Theoretical Framework for Modular Learning of Robust Generative Models

Training large-scale generative models is resource-intensive and relies heavily on heuristic dataset weighting. We address two fundamental questions. Can we train Large Language Models (LLMs) modularly, combining small, domain-specific experts to match monolithic performance? And can we do so robustly for any data mixture, eliminating heuristic tuning? We present a theoretical framework for modular generative modeling in which a set of pre-trained experts is combined via a gating mechanism. We define the space of normalized gating functions, $G_{1}$, and formulate the problem as a minimax game to find a single robust gate that minimizes divergence to the worst-case data mixture. We prove the existence of such a robust gate using Kakutani’s fixed-point theorem and show that modularity acts as a strong regularizer, with generalization bounds that scale with the complexity of the lightweight gate. Furthermore, we prove that this modular approach can theoretically outperform models retrained on aggregate data, with the gap characterized by the Jensen-Shannon divergence. Finally, we introduce a scalable Stochastic Primal-Dual algorithm and a Structural Distillation method for efficient inference. Empirical results on synthetic and real-world datasets confirm that our modular architecture effectively mitigates gradient conflict and can robustly outperform monolithic baselines.

💡 Research Summary

The paper tackles two fundamental challenges in training large generative models: the prohibitive computational cost of monolithic training and the reliance on heuristic dataset weighting to handle heterogeneous data. It proposes a modular learning framework in which a collection of pre-trained, domain-specific expert models is combined through a lightweight gating network. The authors formalize the set of admissible gating functions as a normalized gating space $G_{1}$, in which each gate assigns non-negative weights to the experts that sum to one for every input.
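A minimal sketch of a gate in $G_{1}$, assuming (our assumption, not the paper's architecture) a linear map from input features to one logit per expert followed by a softmax. The softmax guarantees non-negative weights that sum to one, and the gated model mixes the experts' next-token distributions pointwise with those weights. `linear_gate` and `gated_distribution` are hypothetical names, and the expert distributions are toy values.

```python
import math

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def linear_gate(W, x):
    """Hypothetical gate: one logit per expert from a linear map of features x."""
    logits = [sum(wi * xi for wi, xi in zip(row, x)) for row in W]
    return softmax(logits)  # non-negative weights summing to one, i.e. a member of G_1

def gated_distribution(W, x, expert_dists):
    """Mix the experts' next-token distributions with the gate's weights for input x."""
    weights = linear_gate(W, x)
    vocab = len(expert_dists[0])
    return [sum(w * d[j] for w, d in zip(weights, expert_dists)) for j in range(vocab)]

# Two toy experts over a 3-token vocabulary and a 2-feature input.
experts = [[0.7, 0.2, 0.1], [0.1, 0.2, 0.7]]
W = [[2.0, 0.0], [0.0, 2.0]]
print(gated_distribution(W, [0.0, 0.0], experts))  # equal weights: plain average
print(gated_distribution(W, [1.0, 0.0], experts))  # gate favors the first expert
```

Because every gate output lies on the probability simplex, the mixture is itself a valid distribution for every input, which is the property that defines $G_{1}$.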

The core objective is cast as a minimax game. For any mixture distribution $q$ over the underlying domains, the loss is a divergence (KL or Jensen-Shannon) between the true data distribution under that mixture and the distribution generated by the gated system. The goal is to find a single gate $g^{*}$ that minimizes the worst-case loss over all $q$. By showing that the best-response mapping from gates to worst-case mixtures is upper-hemicontinuous and that $G_{1}$ is compact and convex, the authors invoke Kakutani’s fixed-point theorem to prove that a robust equilibrium gate exists. This guarantee puts the modular approach on a provable footing rather than a heuristic one.
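One way to write this game, using notation assumed here rather than taken from the paper ($K$ experts $p_k$, domain distributions $\mathcal{D}_k$, the probability simplex $\Delta_K$, and a generic divergence $D$), is:

$$
\begin{aligned}
p_g(y \mid x) &= \sum_{k=1}^{K} g_k(x)\, p_k(y \mid x), \qquad g_k(x) \ge 0,\quad \sum_{k=1}^{K} g_k(x) = 1 \quad \text{for all } x, \\
p_q &= \sum_{k=1}^{K} q_k\, \mathcal{D}_k, \qquad q \in \Delta_K, \\
g^{*} &\in \arg\min_{g \in G_{1}} \; \max_{q \in \Delta_K} \; D\!\left(p_q \,\middle\|\, p_g\right).
\end{aligned}
$$

The exact conditioning and choice of $D$ in the paper may differ; the sketch only fixes the roles of the gate (minimizing player) and the mixture weights (maximizing player).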

Beyond existence, the paper demonstrates that modularity acts as a strong regularizer. Generalization bounds are derived that scale with the complexity of the gate (e.g., its parameter count or Lipschitz constant) rather than with the total number of expert parameters. Consequently, even when the experts are large, a simple gate can keep over‑fitting in check and ensure stable performance across unseen mixtures.

A particularly striking result is an analytical comparison between the modular system and a monolithic model retrained on the aggregated data. The gap between their losses is characterized by the Jensen-Shannon divergence between the distribution realized by the ensemble of experts and that of the single large model. When experts are well aligned with their specialized domains, this divergence can be substantial, so the modular system can, in theory, outperform the monolithic baseline.
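For reference, the Jensen-Shannon divergence between two distributions $P$ and $Q$ is the average KL divergence of each to their midpoint $M = (P + Q)/2$. The sketch below computes it for discrete distributions in nats, where it is symmetric and bounded by $\ln 2$. It illustrates the quantity only; it is not the paper's bound, whose exact form is not reproduced in this summary.

```python
import math

def kl(p, q):
    """KL(p || q) in nats for discrete distributions; assumes q_j > 0 wherever p_j > 0."""
    return sum(pj * math.log(pj / qj) for pj, qj in zip(p, q) if pj > 0)

def jensen_shannon(p, q):
    """JSD(p, q) = 0.5 * KL(p || m) + 0.5 * KL(q || m) with midpoint m = (p + q) / 2."""
    m = [(pj + qj) / 2 for pj, qj in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

# Two toy distributions that put most mass on different tokens.
print(jensen_shannon([0.9, 0.1], [0.1, 0.9]))
```

Unlike KL, the midpoint keeps every term finite even when the two distributions have disjoint support, which is why JSD is a natural yardstick for comparing very different domain distributions.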

Algorithmically, the authors introduce a Stochastic Primal-Dual (SPD) optimizer that alternates minibatch stochastic-gradient updates between the gate (primal variables) and the mixture weights (dual variables), mirroring the minimax structure of the objective while the pre-trained experts stay fixed. The SPD scheme enjoys convergence guarantees under standard smoothness assumptions. For inference, a “Structural Distillation” technique compresses the ensemble’s output distribution into the gate, allowing a single forward pass at test time while preserving the ensemble’s predictive distribution with minimal KL loss.
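To make the primal-dual structure concrete, here is a minimal sketch under simplifying assumptions that are ours, not the paper's: the experts are fixed discrete distributions, the gate is a single input-independent weight vector $w = \mathrm{softmax}(\theta)$ in $G_{1}$, and the loss for mixture weights $q$ is $\sum_i q_i\,\mathrm{KL}(p_i \,\|\, p_w)$, which upper-bounds $\mathrm{KL}(\sum_i q_i p_i \,\|\, p_w)$ by joint convexity of KL. It runs deterministic gradient descent on the gate logits (primal) and exponentiated-gradient ascent on $q$ (dual); a stochastic version would replace the exact sums with minibatch estimates. All function names and step sizes are hypothetical.

```python
import math

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def kl(p, r):
    # KL(p || r) in nats; terms with p_j = 0 contribute nothing.
    return sum(pj * math.log(pj / rj) for pj, rj in zip(p, r) if pj > 0)

def mixture(weights, experts):
    # Gated model: pointwise convex combination of expert distributions.
    return [sum(w * e[j] for w, e in zip(weights, experts)) for j in range(len(experts[0]))]

def primal_dual(domains, experts, steps=2000, eta_g=0.5, eta_q=0.5):
    """Toy gradient descent-ascent on L(w, q) = sum_i q_i * KL(domains[i] || mixture(w)).

    Hypothetical simplification: the gate is one weight vector w = softmax(theta)
    (input-independent) and the experts are fixed.
    """
    theta = [0.0] * len(experts)             # primal: gate logits
    q = [1.0 / len(domains)] * len(domains)  # dual: mixture weights on the simplex
    for _ in range(steps):
        w = softmax(theta)
        pm = mixture(w, experts)
        losses = [kl(p, pm) for p in domains]
        # dL/dw_k = -sum_i q_i * sum_j p_i[j] * e_k[j] / pm[j]
        grad_w = [
            -sum(qi * sum(p[j] * e[j] / pm[j] for j in range(len(pm)) if p[j] > 0)
                 for qi, p in zip(q, domains))
            for e in experts
        ]
        # Chain rule through softmax: dL/dtheta_k = w_k * (grad_w[k] - sum_l w_l * grad_w[l])
        avg = sum(wl * gl for wl, gl in zip(w, grad_w))
        theta = [t - eta_g * wk * (gk - avg) for t, wk, gk in zip(theta, w, grad_w)]
        # Dual step: exponentiated-gradient ascent on the current losses keeps q on the simplex.
        q = [qi * math.exp(eta_q * li) for qi, li in zip(q, losses)]
        total = sum(q)
        q = [qi / total for qi in q]
    return softmax(theta), q

# Two mirror-image domains, each matched by one expert.
w, q = primal_dual([[0.9, 0.1], [0.1, 0.9]], [[0.9, 0.1], [0.1, 0.9]])
print(w, q)
```

With two mirror-image domains and matching experts, the iterates settle near $w = q = (0.5, 0.5)$, where both domain losses are equal; with a single domain, the gate concentrates on that domain's expert. Exponentiated-gradient ascent is used for the dual step because it keeps $q$ on the simplex without an explicit projection.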

Empirical validation is performed on both synthetic mixtures of high‑dimensional Gaussians and real‑world text and image datasets. Five domain experts are pre‑trained separately, then combined via the proposed gate. Evaluation metrics include perplexity (for language), Fréchet Inception Distance (for images), and a Gradient Conflict Ratio that quantifies how often gradients from different experts point in opposing directions. Results show: (1) the modular system consistently achieves lower worst‑case loss than the monolithic model across all mixture ratios; (2) gradient conflict is reduced by roughly 40 %, leading to smoother training dynamics; (3) inference cost drops by over 60 % due to the single‑gate architecture, with negligible performance degradation. Moreover, under adversarially crafted mixture distributions, the modular model’s loss increase remains below 0.2, confirming the practical robustness predicted by the theory.
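The summary does not define the Gradient Conflict Ratio precisely. One plausible reading, assumed here, is the fraction of gradient pairs (for example, per-domain gradients on shared parameters) whose inner product is negative, which for nonzero vectors is the same as a negative cosine similarity. The function name `gradient_conflict_ratio` and the toy gradients are hypothetical.

```python
from itertools import combinations

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gradient_conflict_ratio(grads):
    """Fraction of gradient pairs whose inner product is negative (hypothetical metric)."""
    pairs = list(combinations(grads, 2))
    if not pairs:
        return 0.0
    conflicts = sum(1 for u, v in pairs if dot(u, v) < 0)
    return conflicts / len(pairs)

# Toy per-domain gradients on two shared parameters (illustrative values only).
grads = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0]]
print(gradient_conflict_ratio(grads))
```

Here only the first two gradients point in opposing directions, so one of the three pairs conflicts; orthogonal gradients (inner product zero) are not counted as conflicts under this reading.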

In conclusion, the paper provides a rigorous theoretical foundation for modular generative modeling, demonstrates that a robust gate exists and can be learned efficiently, and shows that such systems can surpass traditional monolithic training in both performance and resource efficiency. This work opens a pathway toward scalable, adaptable, and provably robust generative AI systems that can be assembled from reusable domain experts without costly retraining or manual dataset weighting.
