Bridging the Domain Divide: Supervised vs. Zero-Shot Clinical Section Segmentation from MIMIC-III to Obstetrics

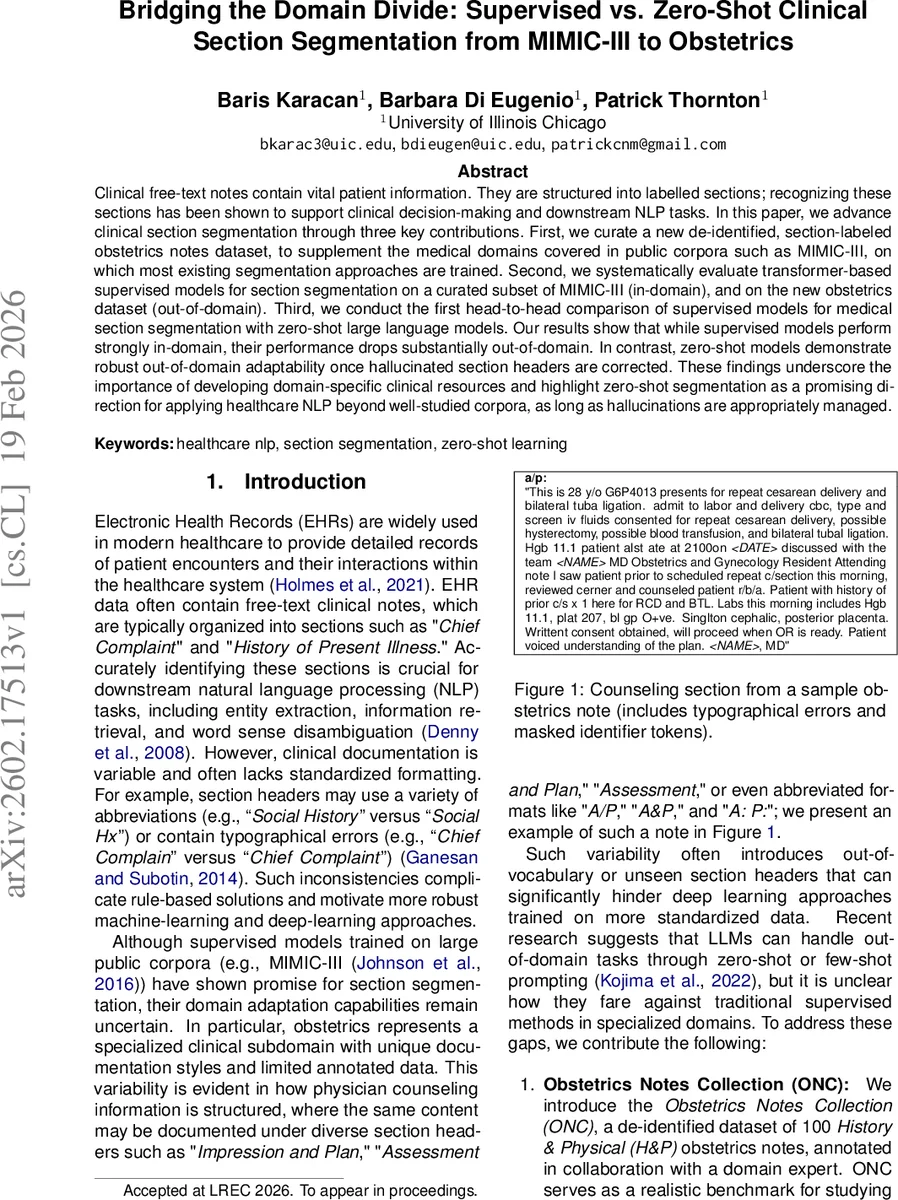

Clinical free-text notes contain vital patient information. They are structured into labelled sections; recognizing these sections has been shown to support clinical decision-making and downstream NLP tasks. In this paper, we advance clinical section segmentation through three key contributions. First, we curate a new de-identified, section-labeled obstetrics notes dataset, to supplement the medical domains covered in public corpora such as MIMIC-III, on which most existing segmentation approaches are trained. Second, we systematically evaluate transformer-based supervised models for section segmentation on a curated subset of MIMIC-III (in-domain), and on the new obstetrics dataset (out-of-domain). Third, we conduct the first head-to-head comparison of supervised models for medical section segmentation with zero-shot large language models. Our results show that while supervised models perform strongly in-domain, their performance drops substantially out-of-domain. In contrast, zero-shot models demonstrate robust out-of-domain adaptability once hallucinated section headers are corrected. These findings underscore the importance of developing domain-specific clinical resources and highlight zero-shot segmentation as a promising direction for applying healthcare NLP beyond well-studied corpora, as long as hallucinations are appropriately managed.

💡 Research Summary

This paper investigates clinical section segmentation—a task of automatically identifying and labeling the structural sections within free‑text electronic health record (EHR) notes—by comparing traditional supervised transformer models with zero‑shot large language models (LLMs) across two distinct domains. The authors first introduce a new, de‑identified obstetrics dataset (Obstetrics Notes Collection, ONC) comprising 100 full‑length History & Physical (H&P) notes from vaginal birth after cesarean and repeat cesarean patients. Each note is manually annotated with section boundaries and headers by a domain expert, providing a realistic benchmark for an under‑studied clinical sub‑domain.

For the supervised side, the study uses the publicly available MedSecId corpus derived from MIMIC‑III, which contains 2,002 annotated notes spanning five note types and 50 section categories. The authors convert notes into line‑level instances (each line = one sentence separated by newline) yielding 175,703 lines. They fine‑tune four pre‑trained transformer backbones—BERT‑base, BioBERT, BioMedBERT, and GatorTron‑base—under two architectures: (1) a plain line‑level classifier that predicts one of 51 labels (50 sections plus “

Results on the in‑domain MedSecId test set show high performance (average F1 ≈ 0.92), confirming that transformer‑based supervised models excel when the training and test domains match. However, when the same models are evaluated on the out‑of‑domain ONC data without any adaptation, performance collapses to F1 scores between 0.55 and 0.60. The drop is attributed to (a) the presence of obstetrics‑specific headers (e.g., “Pregnancy History”, “Gynecological History”, “Gestational Age”) that are absent from the MedSecId label set, (b) extensive use of abbreviations and typographical errors, and (c) the line‑level formulation that ignores inter‑line contextual cues important for section transitions.

The zero‑shot side experiments employ three open‑source LLMs—Llama, Mistral, and Qwen—prompted to label each line of a note with the appropriate section header. Initial outputs suffer from hallucinated or spurious headers (e.g., “Patient Summary”) because the models generate plausible but incorrect labels for unseen section names. To mitigate this, the authors devise a post‑processing pipeline: (i) a rule‑based normalization that maps common abbreviations and variant forms (e.g., “A/P”, “Assessment and Plan”) to canonical labels, and (ii) manual verification by a domain expert to correct residual errors. After correction, the LLMs achieve an average F1 of 0.81, outperforming the supervised models on ONC and demonstrating robust out‑of‑domain adaptability. Notably, even rare sections such as “Consent” and “Prenatal Screens” are identified with reasonable accuracy (F1 > 0.70).

Key insights from the study include:

- Public clinical section corpora are heavily skewed toward general internal medicine and lack representation of specialties like obstetrics, limiting the transferability of supervised models.

- Line‑level independent modeling simplifies training but discards valuable document‑level sequential information, contributing to performance degradation on new domains.

- Zero‑shot LLMs leverage broad pre‑training on diverse biomedical and clinical texts, enabling them to recognize unseen section headers, provided hallucinations are systematically corrected.

- A hybrid strategy—using LLMs for initial labeling combined with lightweight domain‑specific post‑processing and, if feasible, a small amount of annotated specialty data—offers a practical path for rapid deployment in low‑resource clinical domains.

The authors conclude that while supervised transformer models remain the gold standard when ample in‑domain annotated data exist, zero‑shot LLMs present a promising alternative for specialty domains where annotated resources are scarce. Future work should focus on expanding high‑quality, domain‑specific section annotation datasets and on developing automated hallucination‑detection and correction mechanisms to further close the gap between zero‑shot and fully supervised performance.

Comments & Academic Discussion

Loading comments...

Leave a Comment