DRetHTR: Linear-Time Decoder-Only Retentive Network for Handwritten Text Recognition

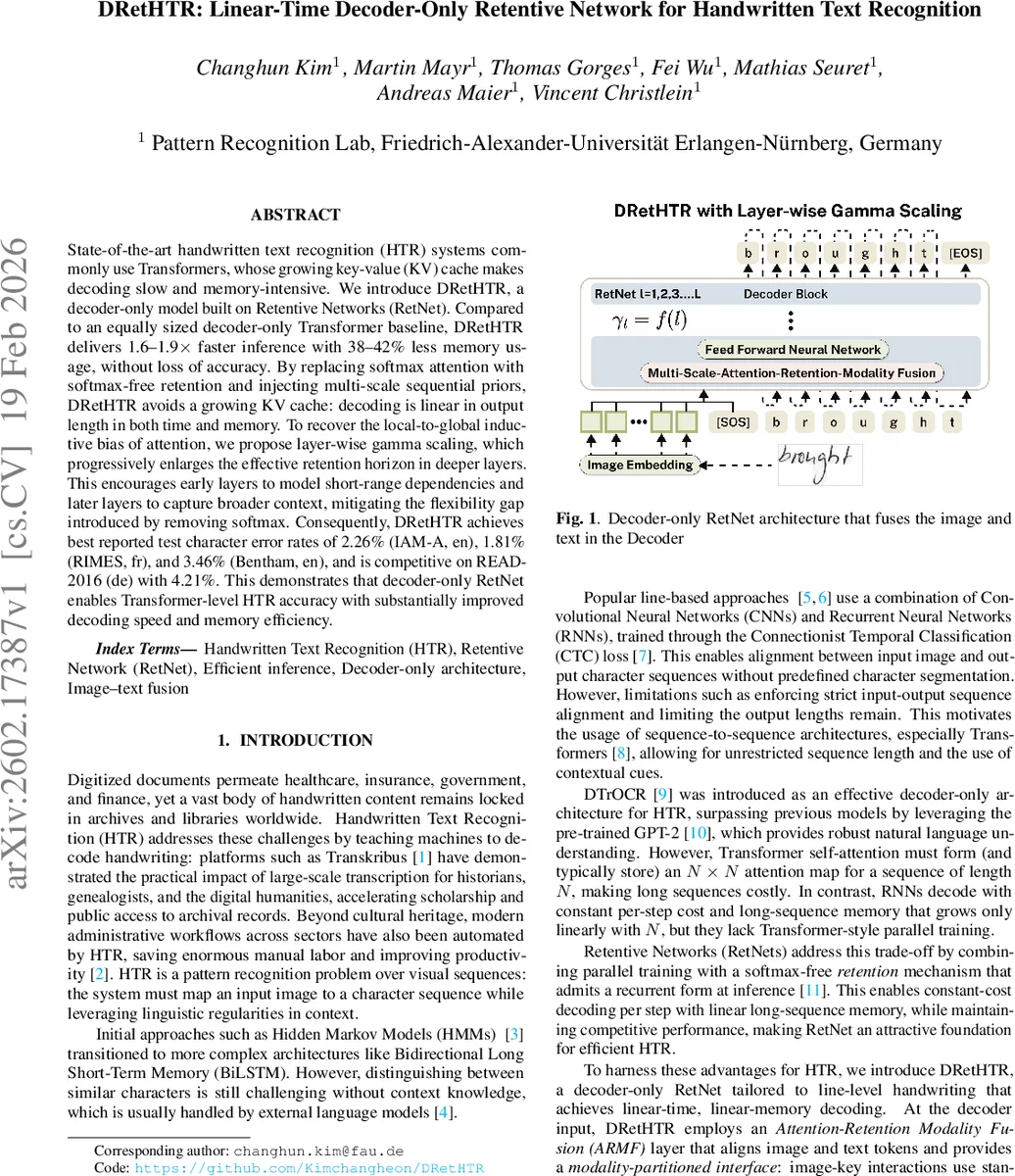

State-of-the-art handwritten text recognition (HTR) systems commonly use Transformers, whose growing key-value (KV) cache makes decoding slow and memory-intensive. We introduce DRetHTR, a decoder-only model built on Retentive Networks (RetNet). Compared to an equally sized decoder-only Transformer baseline, DRetHTR delivers 1.6-1.9x faster inference with 38-42% less memory usage, without loss of accuracy. By replacing softmax attention with softmax-free retention and injecting multi-scale sequential priors, DRetHTR avoids a growing KV cache: decoding is linear in output length in both time and memory. To recover the local-to-global inductive bias of attention, we propose layer-wise gamma scaling, which progressively enlarges the effective retention horizon in deeper layers. This encourages early layers to model short-range dependencies and later layers to capture broader context, mitigating the flexibility gap introduced by removing softmax. Consequently, DRetHTR achieves best reported test character error rates of 2.26% (IAM-A, en), 1.81% (RIMES, fr), and 3.46% (Bentham, en), and is competitive on READ-2016 (de) with 4.21%. This demonstrates that decoder-only RetNet enables Transformer-level HTR accuracy with substantially improved decoding speed and memory efficiency.

💡 Research Summary

**

Handwritten Text Recognition (HTR) has recently been dominated by Transformer‑based architectures, which excel at modeling long‑range dependencies and leveraging powerful language priors. However, the self‑attention mechanism that underlies these models incurs a quadratic memory cost during autoregressive decoding because a key‑value (KV) cache must grow linearly with the generated sequence length. This makes inference slow and memory‑hungry, especially for long handwritten lines that can contain hundreds of characters.

The paper “DRetHTR: Linear‑Time Decoder‑Only Retentive Network for Handwritten Text Recognition” proposes a fundamentally different approach: it replaces the softmax‑based self‑attention of a decoder‑only Transformer with the softmax‑free retention mechanism of Retentive Networks (RetNet). RetNet augments the usual query, key, and value matrices with complex‑phase factors and a lower‑triangular decay matrix D, enabling parallel training while collapsing to a constant‑time recurrent update at inference:

Sₙ = γ·Sₙ₋₁ + kₙᵀ·vₙ, output = qₙ·Sₙ

Here γ∈(0,1) controls how quickly past information decays. The recurrence eliminates the need for a growing KV cache, yielding O(1) per‑token computation and O(N) total memory for a sequence of length N.

To adapt this mechanism to HTR, the authors design DRetHTR, a decoder‑only architecture that fuses visual and textual modalities inside the RetNet decoder. The key components are:

-

Image Embedding – An EfficientNet‑V2 backbone extracts a feature map from the line image. After reshaping and a linear projection, the visual feature becomes a sequence of image tokens (one per horizontal slice) with learned positional encodings.

-

Text Embedding – Characters are tokenized, padded to a fixed length, and embedded with sinusoidal positional encodings.

-

Attention‑Retention Modality Fusion (ARMF) – Instead of applying the same operation to all tokens, ARMF mixes two mechanisms:

- Softmax‑based multi‑head attention for image‑image and image‑text interactions, preserving the rich alignment capability of standard attention.

- Retention for text‑text interactions, providing a recurrent form that does not require a KV cache.

A mask D blocks image queries from attending to text keys, while text queries attend causally to previous text keys with an exponential decay governed by γ. Because image tokens are processed in parallel, the softmax step does not introduce a growing cache; only the text stream uses the recurrent state.

-

Layer‑wise γ Scaling – To compensate for the loss of the softmax’s adaptive weighting, the authors gradually increase γ across decoder layers. Shallow layers use a small γ (emphasizing short‑range dependencies), whereas deeper layers use larger γ values (allowing longer‑range context). This “local‑to‑global” schedule mimics the inductive bias of self‑attention, encouraging early layers to focus on fine‑grained visual cues and later layers to aggregate broader linguistic context.

-

Feed‑Forward and Normalization – Each decoder layer follows the standard Residual → LayerNorm → Position‑wise Feed‑Forward (Linear‑GELU‑Dropout) pattern, keeping the model size comparable to a vanilla decoder‑only Transformer.

Training and Evaluation

The model is trained on four widely used line‑level HTR datasets: IAM‑A (English), RIMES (French), Bentham (English), and READ‑2016 (German). A hybrid loss combining CTC (to align visual features with character sequences) and cross‑entropy (to strengthen language modeling) is employed. The authors construct a baseline decoder‑only Transformer with the same number of parameters and depth for a fair comparison.

Results

- Speed and Memory – DRetHTR achieves 1.6–1.9× faster inference than the Transformer baseline while reducing GPU memory consumption by 38–42 %. The linear‑time, linear‑memory claim is validated by profiling both training and inference on sequences up to 400 tokens.

- Accuracy – On the test splits, DRetHTR reaches state‑of‑the‑art character error rates (CER): 2.26 % on IAM‑A, 1.81 % on RIMES, 3.46 % on Bentham, and 4.21 % on READ‑2016. These numbers are either the best reported or on par with the strongest existing models (e.g., TrOCR, DTrOCR).

- Ablation Studies – Removing the layer‑wise γ schedule (using a fixed γ) degrades CER by 0.5–1.0 % points, confirming the importance of progressive horizon expansion. Replacing ARMF’s softmax component with pure retention harms accuracy dramatically, showing that visual‑text alignment still benefits from attention.

Significance

DRetHTR demonstrates that RetNet’s recurrence can be harnessed for a real‑world sequence‑to‑sequence task without sacrificing the expressive power of Transformers. By keeping the visual‑text attention softmax‑based and limiting retention to the autoregressive text stream, the model enjoys the best of both worlds: high‑quality alignment and linear‑time decoding. This makes it attractive for deployment on resource‑constrained platforms (e.g., mobile devices, edge servers) where memory bandwidth and latency are critical.

Limitations and Future Work

The current design focuses on single‑line transcription; extending to full‑page layout analysis, multi‑line handling, or integrating explicit language models remains open. Moreover, the decay factor γ is manually scheduled; learning a data‑dependent γ (as in gated RetNet) could further improve adaptability. The authors also suggest exploring hybrid encoder‑decoder variants, dynamic γ prediction, and quantization techniques for ultra‑low‑power inference.

Conclusion

The paper introduces DRetHTR, a decoder‑only RetNet tailored for handwritten text recognition. By innovatively fusing attention and retention (ARMF) and employing layer‑wise γ scaling, DRetHTR matches or exceeds Transformer‑level accuracy while delivering linear‑time, linear‑memory decoding. This work paves the way for efficient, high‑accuracy HTR systems suitable for real‑time and low‑resource applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment