From Subtle to Significant: Prompt-Driven Self-Improving Optimization in Test-Time Graph OOD Detection

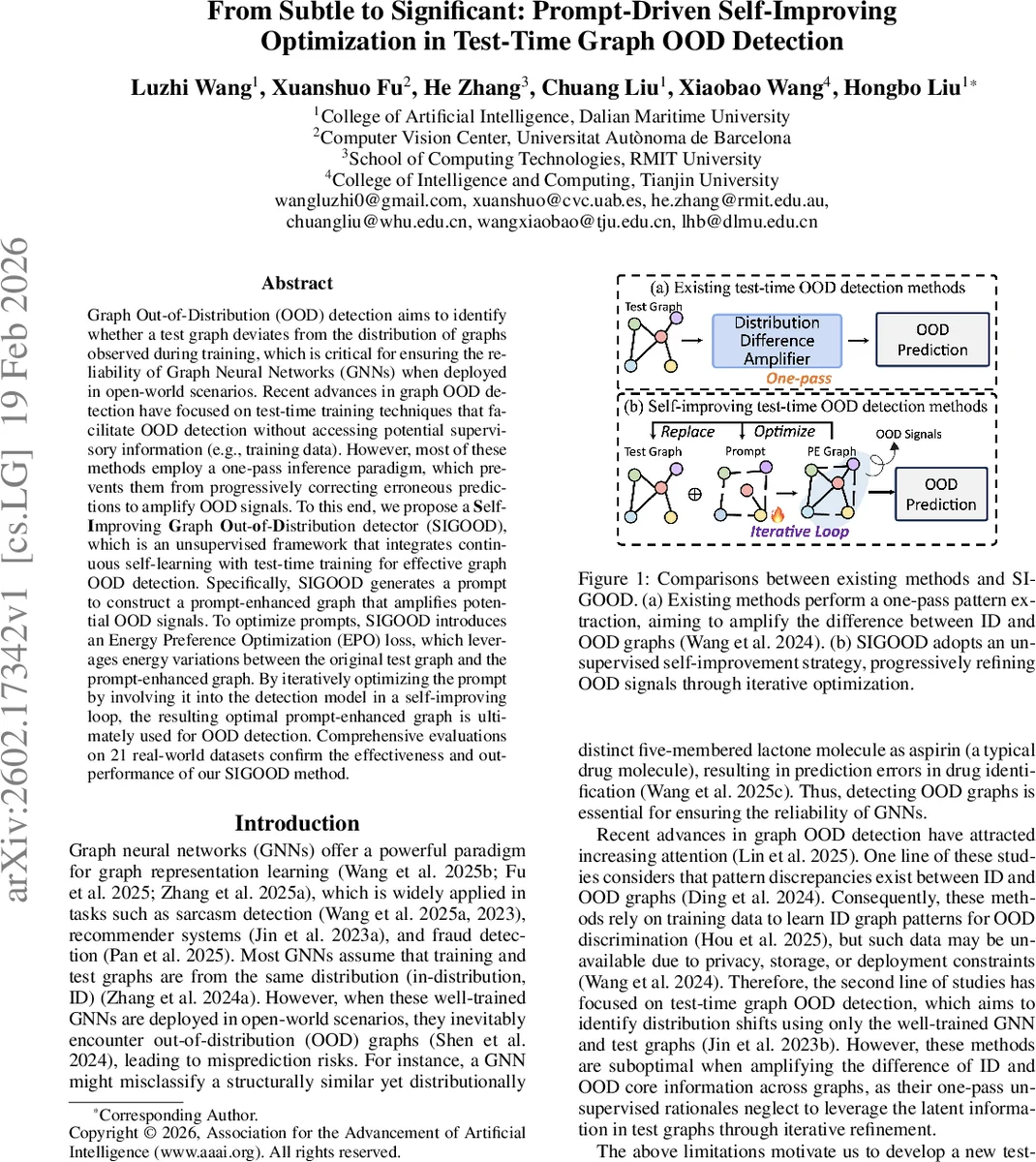

Graph Out-of-Distribution (OOD) detection aims to identify whether a test graph deviates from the distribution of graphs observed during training, which is critical for ensuring the reliability of Graph Neural Networks (GNNs) deployed in open-world scenarios. Recent advances in graph OOD detection have focused on test-time training techniques that enable OOD detection without access to potential supervisory information (e.g., training data). However, most of these methods employ a one-pass inference paradigm, which prevents them from progressively correcting erroneous predictions to amplify OOD signals. To address this, we propose a Self-Improving Graph Out-of-Distribution detector (SIGOOD), an unsupervised framework that integrates continuous self-learning with test-time training for effective graph OOD detection. Specifically, SIGOOD generates a prompt to construct a prompt-enhanced graph that amplifies potential OOD signals. To optimize prompts, SIGOOD introduces an Energy Preference Optimization (EPO) loss, which leverages energy variations between the original test graph and the prompt-enhanced graph. The prompt is optimized iteratively by feeding it back into the detection model in a self-improving loop, and the resulting optimal prompt-enhanced graph is ultimately used for OOD detection. Comprehensive evaluations on 21 real-world datasets confirm the effectiveness and superior performance of SIGOOD. The code is at https://github.com/Ee1s/SIGOOD.

💡 Research Summary

The paper addresses the critical problem of detecting out‑of‑distribution (OOD) graphs at test time, a scenario where a pretrained Graph Neural Network (GNN) encounters data that deviates from its training distribution. Existing test‑time OOD detectors either rely on static post‑hoc scores (distance or energy) or employ a single‑pass test‑time training (TTT) step, which limits their ability to progressively amplify subtle OOD cues. SIGOOD (Self‑Improving Graph OOD detector) proposes a novel, fully unsupervised framework that iteratively refines OOD signals by injecting a learnable “prompt” into the graph and optimizing it with an energy‑based loss.

The method works as follows. A pretrained GNN first encodes the input test graph Gₜ into node embeddings h. A lightweight three‑layer MLP, called the Prompt Generator (PG), transforms each node embedding into a prompt vector Pₘ. The prompt is added element‑wise to the original node embeddings, producing a prompt‑enhanced graph Gₚ. Because the GNN is biased toward in‑distribution (ID) patterns, the addition of a prompt tends to cause larger changes in the energy of OOD components than in ID components.
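The prompt step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the function names (`make_linear`, `prompt_generator`, `prompt_enhance`), the ReLU activations, the hidden width, and the random untrained weights are all assumptions made for the example. The summary only states that the generator is a three-layer MLP and that its output is added element-wise to the node embeddings.

```python
import random

def make_linear(rng, n_in, n_out):
    """Create a dense layer (weights, bias) with small random weights (assumed init)."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    bias = [0.0] * n_out
    return weights, bias

def linear(layer, x):
    """Apply a dense layer to one vector."""
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]

def relu(x):
    return [max(0.0, xi) for xi in x]

def prompt_generator(layers, h):
    """Three-layer MLP mapping one node embedding to a prompt vector of the same size."""
    z = relu(linear(layers[0], h))
    z = relu(linear(layers[1], z))
    return linear(layers[2], z)

def prompt_enhance(layers, embeddings):
    """Add each node's prompt element-wise to its embedding."""
    enhanced = []
    for h in embeddings:
        p = prompt_generator(layers, h)
        enhanced.append([hi + pi for hi, pi in zip(h, p)])
    return enhanced

rng = random.Random(0)
dim, hidden = 4, 8  # hypothetical sizes
layers = [
    make_linear(rng, dim, hidden),
    make_linear(rng, hidden, hidden),
    make_linear(rng, hidden, dim),
]
# Stand-in for the embeddings a pretrained GNN would produce for three nodes.
embeddings = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(3)]
enhanced = prompt_enhance(layers, embeddings)
```

Here `enhanced` has the same shape as `embeddings`, so it can be passed to whatever downstream scoring the detector uses. In the method described, the generator's weights would be updated at test time rather than left at their random initial values.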

Energy is defined per node as $\hat{E}(v) = -\log \sum_{i=1}^{2} \exp(f_i(v))$, where $f(v) \in \mathbb{R}^2$ are logits for ID/OOD preference. For each node, the log-energy difference $\Delta E_v = \log \hat{E}(v; G_p) - \log \hat{E}(u; G_t)$ is computed, where $u$ is the node in $G_t$ corresponding to $v$. Nodes with $\Delta E_v > 0$ are treated as OOD candidates, while nodes with $\Delta E_v < 0$ are treated as ID candidates.
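As a worked illustration of the energy and candidate split just described, the sketch below computes the per-node energy from two logits and partitions nodes by the sign of the log-energy difference. The function names and the example logits are hypothetical. The logarithm of the energy is only defined when the energy is positive, so the example uses logits that keep it positive and raises an error otherwise; how the paper handles non-positive energies is not stated in this summary.

```python
import math

def energy(logits):
    """E(v) = -log(sum_i exp(f_i(v))), using a max shift for numerical stability."""
    m = max(logits)
    return -(m + math.log(sum(math.exp(x - m) for x in logits)))

def log_energy_diff(logits_prompted, logits_original):
    """log E(v; prompt-enhanced graph) - log E(u; original test graph)."""
    e_p = energy(logits_prompted)
    e_t = energy(logits_original)
    if e_p <= 0 or e_t <= 0:
        # Assumption of this sketch: only positive energies are supported.
        raise ValueError("log-energy is undefined for non-positive energy")
    return math.log(e_p) - math.log(e_t)

def split_candidates(prompted, original):
    """Return (OOD candidate indices, ID candidate indices) by the sign of the difference."""
    ood, ind = [], []
    for v, (f_p, f_t) in enumerate(zip(prompted, original)):
        delta = log_energy_diff(f_p, f_t)
        if delta > 0:
            ood.append(v)
        elif delta < 0:
            ind.append(v)
    return ood, ind

# Hypothetical two-class logits for two nodes, before and after adding the prompt.
original = [[-2.0, -3.0], [-1.5, -2.5]]
prompted = [[-3.0, -4.0], [-1.0, -2.0]]
ood_nodes, id_nodes = split_candidates(prompted, original)
print(ood_nodes, id_nodes)  # prints [0] [1]
```

For node 0 the energy rises from about 1.687 to about 2.687 after prompting, so its difference is positive and it becomes an OOD candidate; for node 1 the energy falls from about 1.187 to about 0.687, so it becomes an ID candidate.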

The core optimization objective, Energy Preference Optimization (EPO) loss, combines a Bradley‑Terry pairwise preference model with a KL‑divergence regularizer: L_EPO = −log σ
