Do GPUs Really Need New Tabular File Formats?

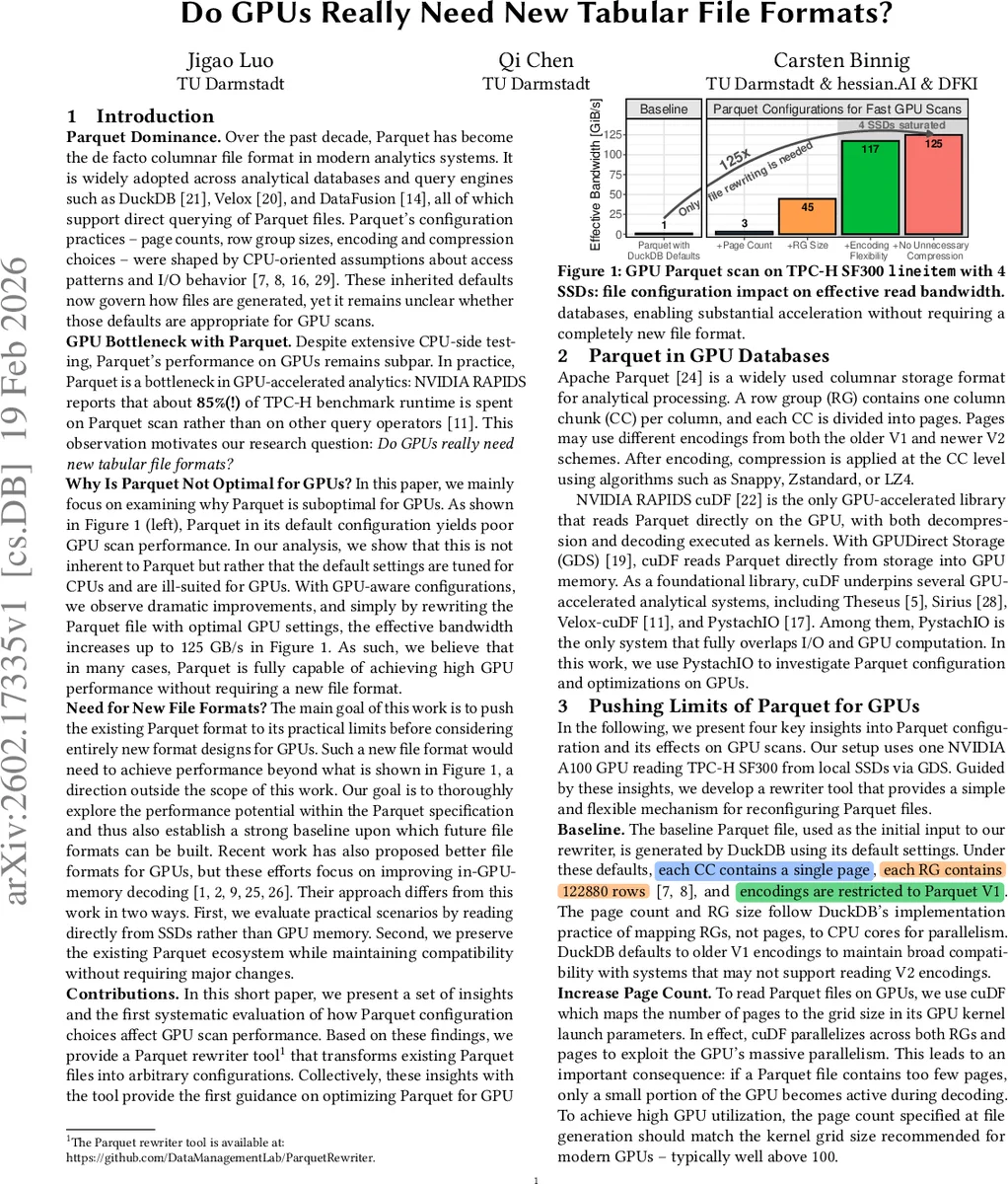

Parquet is the de facto columnar file format in modern analytical systems, yet its configuration guidelines have largely been shaped by CPU-centric execution models. As GPU-accelerated data processing becomes increasingly prevalent, Parquet files generated with CPU-oriented defaults can severely underutilize GPU parallelism, turning GPU scans into a performance bottleneck. In this work, we systematically study how Parquet configurations affect GPU scan performance. We show that Parquet’s poor GPU performance is not inherent to the format itself but rather a consequence of suboptimal configuration choices. By applying GPU-aware configurations, we increase effective read bandwidth up to 125 GB/s without modifying the Parquet specification.

💡 Research Summary

The paper investigates why the widely‑adopted Apache Parquet columnar file format, originally tuned for CPU‑centric workloads, becomes a performance bottleneck when used with modern GPU‑accelerated analytics. The authors ask whether GPUs truly need a brand‑new tabular file format or whether the existing Parquet format can be made GPU‑friendly through configuration changes alone.

Motivation and Problem Statement

Parquet’s default settings—page count, row‑group (RG) size, encoding choice, and compression algorithm—were derived from CPU‑oriented assumptions about parallelism and I/O behavior. In GPU environments, especially when using NVIDIA’s GPUDirect Storage (GDS) to read directly from SSDs into GPU memory, these defaults lead to under‑utilization of the GPU’s massive parallelism. RAPIDS cuDF measurements show that up to 85 % of TPC‑H benchmark runtime is spent scanning Parquet files, indicating a severe mismatch between file layout and GPU execution model.

Research Goal

The authors aim to answer the question “Do GPUs really need new tabular file formats?” by systematically exploring the performance ceiling of Parquet under GPU‑aware configurations. They deliberately avoid proposing a new file format; instead, they push the existing specification to its limits and provide a baseline for future format designs.

Experimental Setup

- Hardware: NVIDIA A100 GPU, four NVMe SSDs, GDS for direct GPU‑to‑SSD transfers.

- Data: TPC‑H SF300 lineitem table (hundreds of gigabytes).

- Software: cuDF‑based PystachIO for end‑to‑end query execution, DuckDB to generate baseline Parquet files, and a custom “Parquet Rewriter” built on Apache Arrow Rust.

Key Insights and Optimizations

-

Increase Page Count – cuDF maps the number of Parquet pages to the GPU kernel grid size. The default DuckDB output creates a single page per column chunk, yielding far fewer grid blocks than the GPU can schedule. Raising the page count to at least 100 aligns the grid size with modern GPUs, dramatically improving decoding throughput. Further increases give diminishing returns once the I/O path saturates.

-

Enlarge Row‑Group Size – GDS behaves differently from CPU I/O; it prefers megabyte‑scale transfers. DuckDB’s default RG size (~122 k rows, ~100 KB after compression) is too small, causing the storage bus to be under‑utilized. Experiments show that RG sizes on the order of millions of rows (4 M–10 M) enable each column chunk to reach several megabytes, allowing the SSD‑PCIe bus to be fully saturated and raising effective bandwidth to ~125 GB/s.

-

Encoding Flexibility – Most Parquet writers lock a single V1 encoding per column, whereas GPU‑friendly encodings (V2, dictionary, delta, etc.) can yield better compression ratios and lower decoding cost. The authors let each column chunk try a set of candidate encodings and select the one that minimizes encoded size. This per‑chunk flexibility reduces overall file size by ~30 % and improves effective bandwidth, especially when multiple SSDs are used and the GPU becomes compute‑bound.

-

Skip Unnecessary Compression – Compression is a trade‑off: it reduces I/O volume but adds decompression work on the GPU. The authors apply compression only when the size reduction exceeds a 10 % threshold. In multi‑SSD scenarios, avoiding low‑gain compression frees GPU compute cycles, leading to a ~10 % bandwidth increase. With a single SSD, the workload remains I/O‑bound, so the effect is negligible.

Parquet Rewriter Tool

To apply the four insights without altering the Parquet spec, the authors built a “Parquet Rewriter” that reads an existing file and rewrites it with user‑specified page count, RG size, encoding set, and compression policy. Implemented in Rust using Apache Arrow’s multithreaded parquet writer, the tool can process a 100 GB dataset in a few minutes on a modern CPU, making the one‑time offline cost acceptable. The rewritten files are often smaller, so storage overhead is not a concern.

Performance Results

- Baseline DuckDB Parquet (default settings) consumes ~85 % of TPC‑H runtime in the scan phase.

- Applying the four GPU‑aware settings yields up to 125 GB/s effective read bandwidth on a 4‑SSD configuration, translating into a >5× reduction in total query time.

- Scaling the number of SSDs shows near‑linear bandwidth growth when unnecessary compression is omitted, confirming that the GPU transitions from I/O‑bound to compute‑bound as the storage subsystem is saturated.

Discussion

The authors argue that the observed gains demonstrate that Parquet’s inherent design is not a barrier to high‑performance GPU analytics; rather, the default configuration is the obstacle. They compare their approach to recent “GPU‑friendly” formats (e.g., FastLanes, G‑ALP) that focus on in‑memory decoding speed. While those formats achieve terabytes‑per‑second decoding rates, the authors contend that in realistic workloads the dominant bottleneck is SSD‑to‑GPU transfer, not in‑memory computation. Hence, optimizing the existing Parquet layout is more pragmatic for current hardware.

They also note that the rewriter is hardware‑agnostic: the same tool can be used to generate CPU‑optimized Parquet files by selecting different parameters, suggesting a unified strategy for cross‑platform performance tuning.

Conclusion

Parquet can deliver high GPU scan performance without a radical redesign of the file format. By simply adjusting page count, row‑group size, encoding flexibility, and compression policy, the authors achieve up to 125 GB/s read bandwidth and dramatically reduce query runtimes. This work establishes a strong baseline for future GPU‑oriented file format research and demonstrates that, at least for many workloads, new formats are unnecessary; careful configuration of the existing format suffices.

Comments & Academic Discussion

Loading comments...

Leave a Comment