GASS: Geometry-Aware Spherical Sampling for Disentangled Diversity Enhancement in Text-to-Image Generation

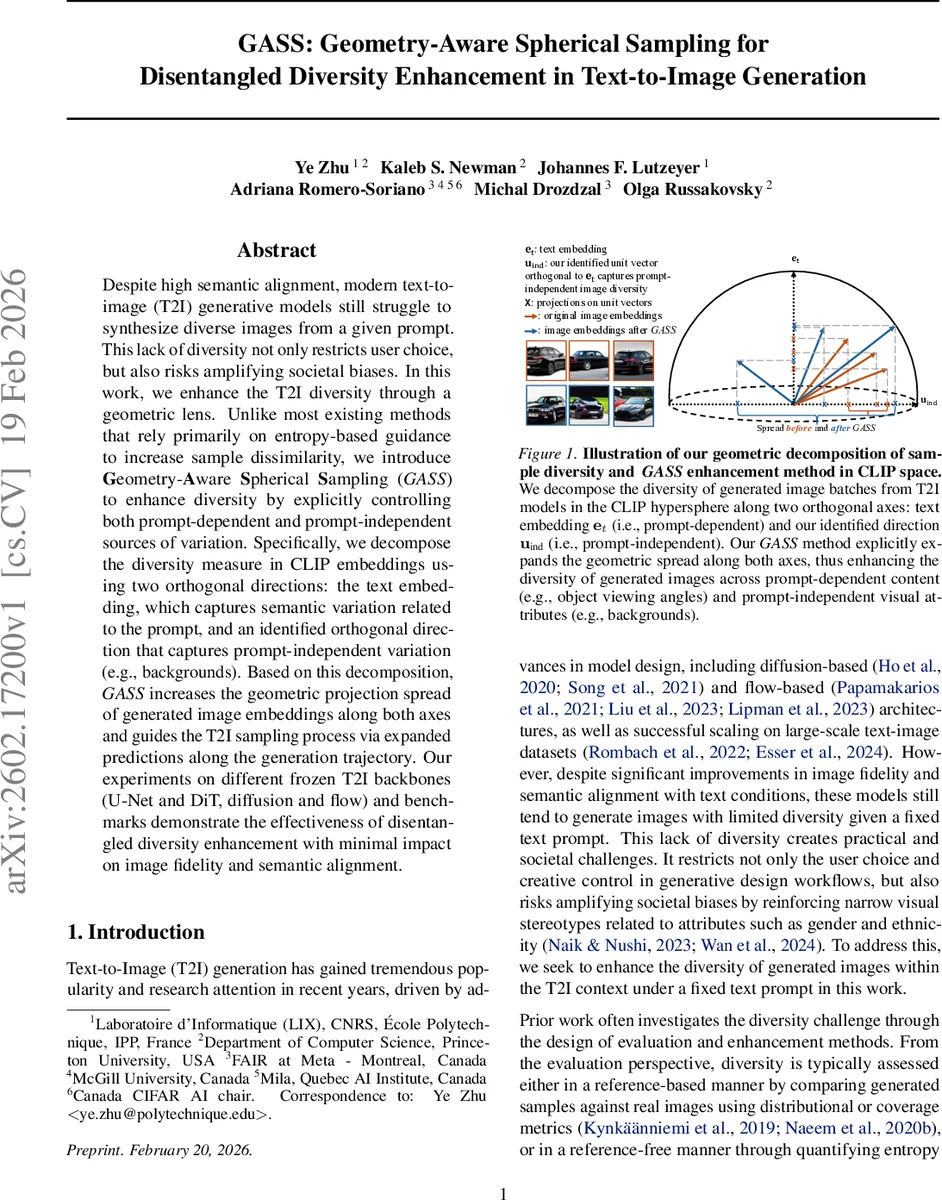

Despite high semantic alignment, modern text-to-image (T2I) generative models still struggle to synthesize diverse images from a given prompt. This lack of diversity not only restricts user choice, but also risks amplifying societal biases. In this work, we enhance the T2I diversity through a geometric lens. Unlike most existing methods that rely primarily on entropy-based guidance to increase sample dissimilarity, we introduce Geometry-Aware Spherical Sampling (GASS) to enhance diversity by explicitly controlling both prompt-dependent and prompt-independent sources of variation. Specifically, we decompose the diversity measure in CLIP embeddings using two orthogonal directions: the text embedding, which captures semantic variation related to the prompt, and an identified orthogonal direction that captures prompt-independent variation (e.g., backgrounds). Based on this decomposition, GASS increases the geometric projection spread of generated image embeddings along both axes and guides the T2I sampling process via expanded predictions along the generation trajectory. Our experiments on different frozen T2I backbones (U-Net and DiT, diffusion and flow) and benchmarks demonstrate the effectiveness of disentangled diversity enhancement with minimal impact on image fidelity and semantic alignment.

💡 Research Summary

The paper tackles the persistent lack of diversity in modern text‑to‑image (T2I) generation, a problem that limits user creativity and can reinforce societal biases. Existing diversity‑enhancement methods largely rely on entropy‑maximization or batch‑wise dissimilarity, which treat diversity as a single undifferentiated quantity and ignore the fact that variations can be either prompt‑dependent (semantic changes that still satisfy the text) or prompt‑independent (background, lighting, style).

GASS (Geometry‑Aware Spherical Sampling) introduces a geometric framework that explicitly separates these two sources of variation within the shared CLIP embedding space. By normalizing all CLIP image and text embeddings onto a unit hypersphere, the text embedding (e_t) is fixed as the first orthonormal basis vector. Each image embedding (e_i) is then decomposed as

(e_i = (e_i^\top e_t) e_t + \sum_{k=2}^d (e_i^\top u_k) u_k),

where the first term captures prompt‑dependent variation and the remaining orthogonal components capture the residual, prompt‑independent variation.

To identify a single dominant residual direction (u_{ind}), the authors generate a small set (N≈10) of random vectors orthogonal to (e_t) using Gram‑Schmidt, evaluate the mean absolute projection of the batch onto each candidate, and select the one with maximal energy. This yields a concise, data‑driven axis that explains most of the non‑semantic variance.

Diversity is then measured as the sum of spreads (e.g., standard deviation or mean absolute deviation) of the batch’s projections onto (e_t) and (u_{ind}), denoted (D_{dep}) and (D_{ind}) respectively. The total diversity score is (D_{total}=D_{dep}+D_{ind}). This disentangled metric allows precise assessment of how much variation is semantic versus stylistic.

During sampling, GASS leverages the differentiable CLIP image encoder to compute gradients that push the current sample toward larger spreads along both axes. At each diffusion or flow timestep (t), the update includes a term

(\nabla_{x_t}\big(\lambda_{dep},\text{Spread}{e_t}(P) + \lambda{ind},\text{Spread}{u{ind}}(P)\big)),

where (\lambda_{dep}) and (\lambda_{ind}) are user‑controllable weights. This guidance expands the geometric coverage of the generated embeddings while preserving alignment with the prompt because the projection onto (e_t) is still encouraged to stay high (CLIPScore).

The method is evaluated on multiple backbones—U‑Net diffusion, DiT transformer diffusion, and a flow‑based generator—demonstrating that GASS is model‑agnostic. Benchmarks include ImageNet (where real image embeddings provide a ground‑truth spread) and DrawBench (a diverse set of prompts). Compared to state‑of‑the‑art entropy‑based and Scendi methods, GASS achieves substantially higher (D_{ind}) (background and style diversity) while maintaining or slightly improving (D_{dep}). Crucially, image quality metrics such as FID and CLIPScore remain essentially unchanged, indicating that the approach does not sacrifice fidelity for diversity.

Ablation studies show that a modest candidate set (N=10) suffices to locate a stable (u_{ind}), and that the additional computational cost is only 5–10 % of the total sampling time. Visual examples illustrate that for a prompt like “A black colored car,” GASS produces variations in viewpoint and car model (prompt‑dependent) as well as a wide range of backgrounds, lighting conditions, and artistic styles (prompt‑independent), which prior methods rarely achieve without modifying the prompt.

The authors discuss limitations: only a single residual direction is used, whereas multiple orthogonal axes could capture richer style variations; the framework currently depends on CLIP embeddings, and extending it to other multimodal encoders (ALIGN, Florence) remains an open question; and user‑friendly interfaces for setting (\lambda) values are needed for practical deployment.

In summary, GASS provides a principled, geometry‑driven way to disentangle and amplify both semantic and non‑semantic diversity in T2I generation. Its sampling‑time guidance is lightweight, backbone‑agnostic, and demonstrably improves diversity without degrading image fidelity, offering a promising foundation for future work on controllable, bias‑aware generative models.

Comments & Academic Discussion

Loading comments...

Leave a Comment