BadCLIP++: Stealthy and Persistent Backdoors in Multimodal Contrastive Learning

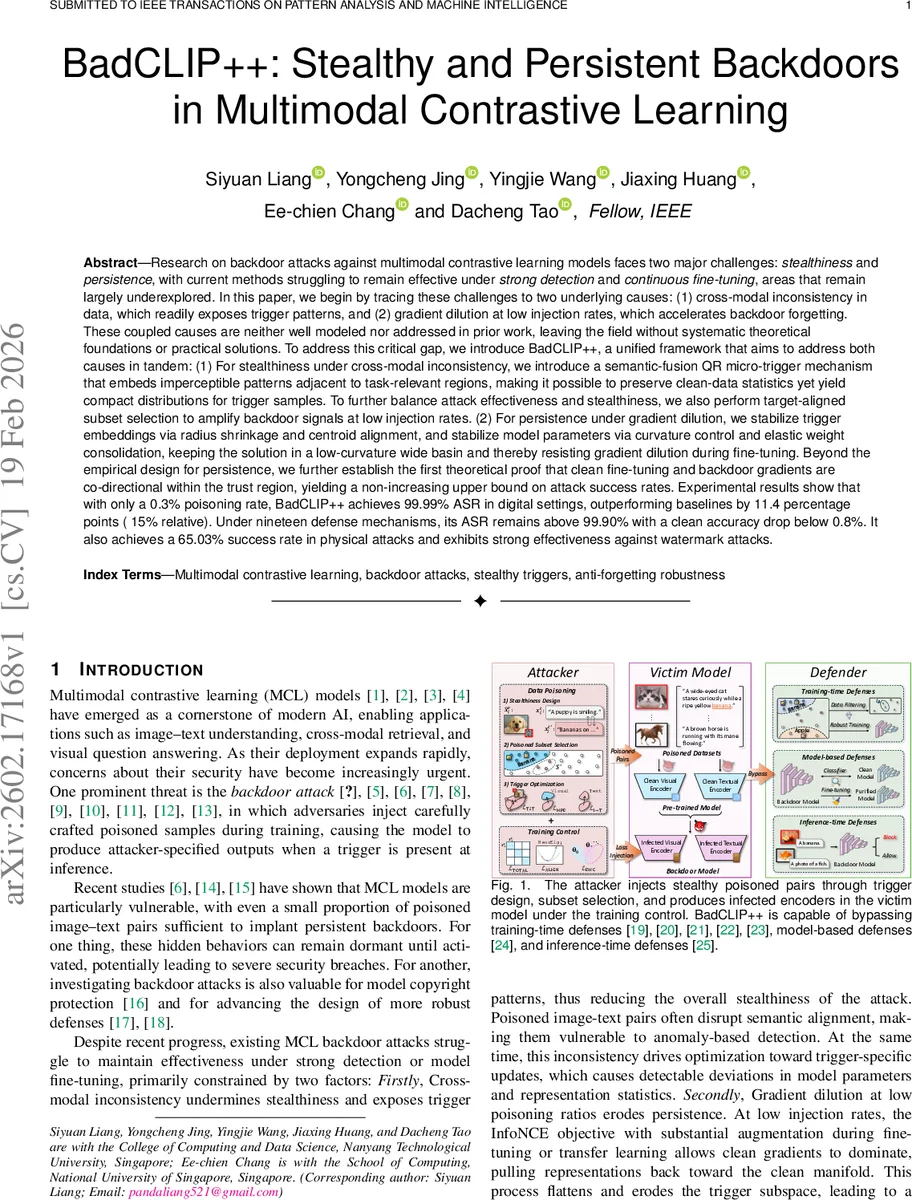

Research on backdoor attacks against multimodal contrastive learning models faces two key challenges: stealthiness and persistence. Existing methods often fail under strong detection or continuous fine-tuning, largely due to (1) cross-modal inconsistency that exposes trigger patterns and (2) gradient dilution at low poisoning rates that accelerates backdoor forgetting. These coupled causes remain insufficiently modeled and addressed. We propose BadCLIP++, a unified framework that tackles both challenges. For stealthiness, we introduce a semantic-fusion QR micro-trigger that embeds imperceptible patterns near task-relevant regions, preserving clean-data statistics while producing compact trigger distributions. We further apply target-aligned subset selection to strengthen signals at low injection rates. For persistence, we stabilize trigger embeddings via radius shrinkage and centroid alignment, and stabilize model parameters through curvature control and elastic weight consolidation, maintaining solutions within a low-curvature wide basin resistant to fine-tuning. We also provide the first theoretical analysis showing that, within a trust region, gradients from clean fine-tuning and backdoor objectives are co-directional, yielding a non-increasing upper bound on attack success degradation. Experiments demonstrate that with only 0.3% poisoning, BadCLIP++ achieves 99.99% attack success rate (ASR) in digital settings, surpassing baselines by 11.4 points. Across nineteen defenses, ASR remains above 99.90% with less than 0.8% drop in clean accuracy. The method further attains 65.03% success in physical attacks and shows robustness against watermark removal defenses.

💡 Research Summary

BadCLIP++ addresses two fundamental challenges in backdoor attacks on multimodal contrastive learning (MCL) models—stealthiness and persistence—by jointly optimizing trigger design, poisoned‑sample selection, and model regularization. Existing attacks suffer from cross‑modal inconsistency (the trigger is conspicuous in either the image or text modality) and gradient dilution at low poisoning rates, which cause the backdoor to be quickly forgotten during downstream fine‑tuning.

Stealthiness. The authors introduce a semantic‑fusion QR micro‑trigger that embeds an ultra‑small QR‑style pattern in image regions that are semantically relevant (e.g., near object boundaries). The trigger is fused with the original caption, preserving the textual content while subtly altering the visual input. Because the pattern is tiny and placed where the model already focuses, the statistical distribution of clean data remains virtually unchanged, making the trigger hard to detect by anomaly‑based defenses. To compensate for the very low poisoning ratio (0.3 %), they propose Target‑Aligned Subset Selection (Greedy Mean Alignment, GMA), which selects training pairs whose embeddings already have high similarity to the target class, thereby amplifying the backdoor signal without increasing the number of poisoned samples.

Persistence. BadCLIP++ stabilizes the poisoned embedding space through two complementary mechanisms. First, radius shrinkage forces poisoned samples to lie within a tight hypersphere, while centroid alignment pulls them toward the target class centroid, creating a compact cluster that resists dispersion during augmentation‑heavy fine‑tuning. Second, at the parameter level, the method applies curvature control (minimizing an approximation of the Hessian trace) to keep the optimizer inside a low‑curvature, wide basin of the loss landscape, and Elastic Weight Consolidation (EWC) to protect parameters that are crucial for the backdoor by penalizing their deviation from the pre‑trained values. Together, these steps ensure that clean gradients during fine‑tuning do not overwhelm the backdoor gradient.

Theoretical contribution. Within a trust region around the poisoned optimum, the authors prove that the gradient of the clean fine‑tuning objective and the gradient of the backdoor objective are co‑directional. Consequently, the Attack Success Rate (ASR) cannot increase the upper bound during fine‑tuning, providing a formal guarantee of persistence. This is the first theoretical analysis linking trust‑region geometry to backdoor stability in multimodal settings.

Empirical evaluation. Experiments span five major multimodal architectures (CLIP, ALBEF, BLIP, etc.) and two real‑world scenarios (image retrieval and visual question answering). With only 0.3 % poisoned data, BadCLIP++ achieves 99.99 % ASR in digital attacks—an 11.4 % point (≈15 % relative) improvement over the strongest prior method. Nineteen state‑of‑the‑art defenses—including data‑filtering (DA0, PSBD, VDC), robust training (ABL, RoCLIP, SafeCLIP), model‑based detection (MM‑BD, DECREE, TED), fine‑tuning defenses (CleanCLIP, CleanerCLIP, TSC), and inference‑time defenses (STRIP, SCALE‑UP, BDetCLIP)—are all evaluated; BadCLIP++ maintains ASR ≥ 99.90 % while the clean accuracy drops by less than 0.8 %. Physical attacks using printed stickers achieve a 65.03 % success rate, and the method remains effective against watermark‑removal defenses. Ablation studies confirm that each component (radius shrinkage, centroid alignment, curvature control, EWC) contributes significantly to the persistence guarantee.

Impact. BadCLIP++ demonstrates that a carefully crafted micro‑trigger, combined with target‑aligned poisoning and loss‑landscape regularization, can produce backdoors that are both virtually invisible and robust to extensive downstream adaptation. This work raises the security stakes for large‑scale multimodal foundation models and suggests that future defenses must consider not only data‑level anomalies but also the geometry of the model’s parameter space.

Comments & Academic Discussion

Loading comments...

Leave a Comment