TimeOmni-VL: Unified Models for Time Series Understanding and Generation

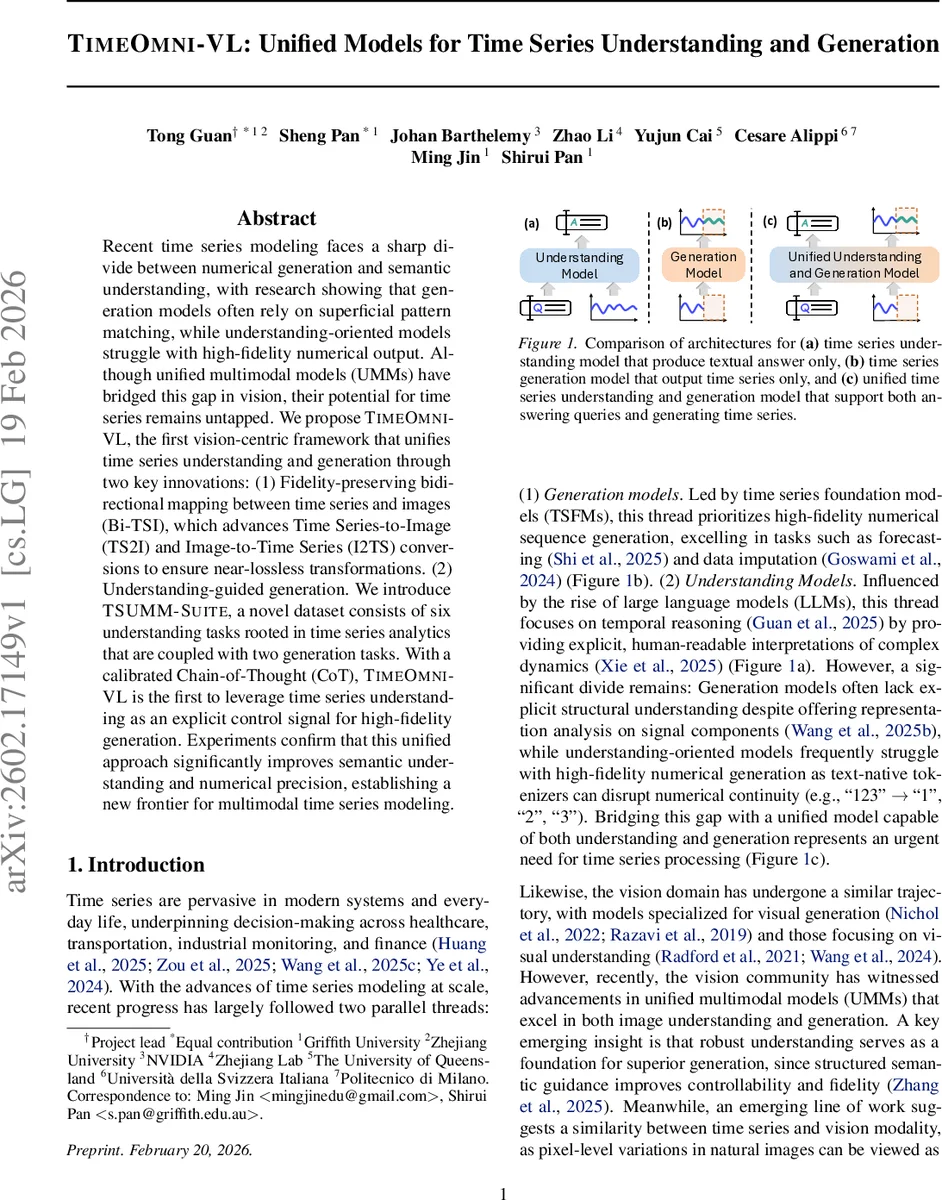

Time series modeling faces a sharp divide between numerical generation and semantic understanding: research shows that generation models often rely on superficial pattern matching, while understanding-oriented models struggle to produce high-fidelity numerical output. Although unified multimodal models (UMMs) have bridged this gap in vision, their potential for time series remains untapped. We propose TimeOmni-VL, the first vision-centric framework that unifies time series understanding and generation through two key innovations: (1) Fidelity-preserving bidirectional mapping between time series and images (Bi-TSI), which advances Time Series-to-Image (TS2I) and Image-to-Time Series (I2TS) conversions to ensure near-lossless transformations. (2) Understanding-guided generation. We introduce TSUMM-Suite, a novel dataset consisting of six understanding tasks rooted in time series analytics, coupled with two generation tasks. With a calibrated Chain-of-Thought, TimeOmni-VL is the first to leverage time series understanding as an explicit control signal for high-fidelity generation. Experiments confirm that this unified approach significantly improves both semantic understanding and numerical precision, establishing a new frontier for multimodal time series modeling.

💡 Research Summary

TimeOmni‑VL introduces a vision‑centric unified framework that simultaneously tackles time‑series understanding and generation, addressing a long‑standing split between high‑fidelity numerical forecasting/imputation and semantic interpretation. The core innovation lies in a fidelity‑preserving bidirectional mapping between raw time‑series data and visual representations, called Bi‑TSI (Bidirectional Time Series ⇔ Image). Bi‑TSI improves upon prior VisionTS converters by adding Robust Fidelity Normalization (RFN) to keep high‑dynamic‑range signals within the 0‑255 pixel range without overflow, and Encoding Capacity Control to avoid implicit down‑sampling when projecting high‑dimensional series onto a fixed‑size canvas. These mechanisms ensure that the conversion to a TS‑image and back to a series incurs negligible loss, as demonstrated by a substantial reduction in mean absolute error during reconstruction.
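The round trip between a series and 8-bit pixel intensities can be illustrated with a minimal sketch. It is not the paper's RFN or Bi-TSI implementation, whose details are not given here. The function names `ts_to_pixels` and `pixels_to_ts`, and the choice of median centering with max-deviation scaling, are assumptions made only to show how a series can be kept inside the 0-255 range without overflow and how the quantization error is bounded.

```python
from statistics import median

def ts_to_pixels(series):
    """Map a series to integers in [0, 255] (hypothetical scheme, not the paper's RFN)."""
    center = median(series)
    # Scale by the largest deviation so no value can overflow the pixel range.
    scale = max(abs(x - center) for x in series) or 1.0
    pixels = [round(((x - center) / scale + 1.0) / 2.0 * 255) for x in series]
    return pixels, center, scale

def pixels_to_ts(pixels, center, scale):
    """Invert the mapping using the stored center and scale."""
    return [((p / 255) * 2.0 - 1.0) * scale + center for p in pixels]

series = [0.0, 1.5, -2.0, 1000.0, 3.0, 2.5]  # one large outlier
pixels, center, scale = ts_to_pixels(series)
restored = pixels_to_ts(pixels, center, scale)

# Rounding to the nearest of 256 levels bounds the error by half a step, scale / 255.
max_err = max(abs(a - b) for a, b in zip(series, restored))
```

In this toy scheme a single outlier stretches `scale`, so every other point is quantized more coarsely. That trade-off is the kind of problem a robust normalization for high-dynamic-range signals has to manage.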

The second pillar is “understanding‑guided generation.” Instead of treating Chain‑of‑Thought (CoT) merely as an explanatory text, TimeOmni‑VL embeds CoT inside explicit

To evaluate the approach, the authors construct TSUMM‑Suite, a benchmark comprising eight tasks: six understanding tasks (split into layout‑level and signal‑level analyses) and two generation tasks (forecasting and imputation). All tasks are derived from the same underlying series instances, ensuring that the understanding QA naturally becomes the control CoT for generation. The unified model is built on Bagel, a lightweight multimodal transformer that shares encoder‑decoder weights between image and text streams, making it backbone‑agnostic.

Training jointly optimizes both the understanding model and the generation module with a combined loss that supervises the textual answer, the CoT, and the generated series. Empirical results show near‑perfect accuracy (≈0.99) on four of the six understanding tasks, a mean absolute error reduction of 10‑15 % in the TS‑image ↔ series round‑trip, and an average 8.2 % improvement in forecasting RMSE when CoT conditioning is applied. Imputation performance also surpasses state‑of‑the‑art baselines, achieving a 2.3 dB gain in PSNR.
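The metrics quoted above follow standard definitions, sketched below in plain Python for reference. The helper names and the `peak` argument to `psnr` are assumptions for illustration; the summary does not state which peak value or evaluation protocol the authors use.

```python
import math

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def psnr(y_true, y_pred, peak):
    """Peak signal-to-noise ratio in dB; `peak` is the assumed maximum signal value."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    if mse == 0:
        return math.inf  # identical signals
    return 10 * math.log10(peak ** 2 / mse)

def relative_improvement(baseline, new):
    """Fractional reduction of an error metric relative to a baseline."""
    return (baseline - new) / baseline
```

Under these definitions, an 8.2 % RMSE improvement means the new RMSE is 0.918 times the baseline, and a 2.3 dB PSNR gain corresponds to the mean squared error shrinking by a factor of about 10^0.23 ≈ 1.7.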

The paper acknowledges limitations: the image‑based pipeline incurs higher memory and compute costs, especially for high‑resolution TS‑images; reliance on a vision backbone may miss domain‑specific time‑series inductive biases; and the current CoT formulation is single‑step, leaving room for multi‑step reasoning. Future work is suggested in developing hybrid encoders that process raw series tokens alongside images, optimizing multi‑step CoT generation, and extending the framework to diverse domains such as healthcare, finance, and IoT.

In summary, TimeOmni‑VL demonstrates that by converting time series into a visual modality with near‑lossless fidelity and by using semantic CoT as an explicit control signal, a unified multimodal model can achieve both high‑quality understanding and precise numerical generation. This work bridges the gap between generation‑centric and understanding‑centric time‑series research, opening a new frontier for multimodal temporal AI.
