HybridPrompt: Bridging Generative Priors and Traditional Codecs for Mobile Streaming

In Video on Demand (VoD) scenarios, traditional codecs are the industry standard due to their high decoding efficiency. However, they suffer from severe quality degradation under low-bandwidth conditions. While emerging generative neural codecs offer significantly higher perceptual quality, their reliance on heavy frame-by-frame generation makes real-time playback on mobile devices impractical. We ask: is it possible to combine the speed of traditional standards with the superior visual fidelity of neural approaches? We present HybridPrompt, the first generative video system capable of real-time 1080p decoding at over 150 FPS on a commercial smartphone. Specifically, we employ a hybrid architecture that encodes keyframes using a generative model while relying on traditional codecs for the remaining frames. A major challenge is that the two paradigms have conflicting objectives: the “hallucinated” details from generative models often misalign with the rigid prediction mechanisms of traditional codecs, causing bitrate inefficiency. To address this, we demonstrate that the traditional decoding process is differentiable, enabling an end-to-end optimization loop. This allows us to use subsequent frames as additional supervision, forcing the generative model to synthesize keyframes that are not only perceptually high-fidelity but also mathematically optimal references for the traditional codec. By integrating a two-stage generation strategy, our system outperforms pure neural baselines by orders of magnitude in speed while achieving an average LPIPS gain of 8% over traditional codecs at 200 kbps.

💡 Research Summary

HybridPrompt tackles the long-standing trade-off in video-on-demand streaming between the fast decoding of traditional block-based codecs (e.g., H.264/H.265) and the superior perceptual quality of modern generative neural codecs. Traditional codecs excel on mobile hardware because they rely on highly optimized motion-compensated prediction, entropy coding, and integer-based transforms, delivering real-time playback even at modest power budgets. Under low-bandwidth conditions, however, they suffer from severe blocking artifacts and loss of fine detail. Generative codecs, by contrast, reconstruct frames from learned latent representations (often using VQ-VAE, diffusion, or transformer-based decoders) and achieve markedly better visual fidelity at the same bitrate, but their per-frame inference cost, hundreds of milliseconds on a GPU, makes them unsuitable for real-time playback on smartphones.

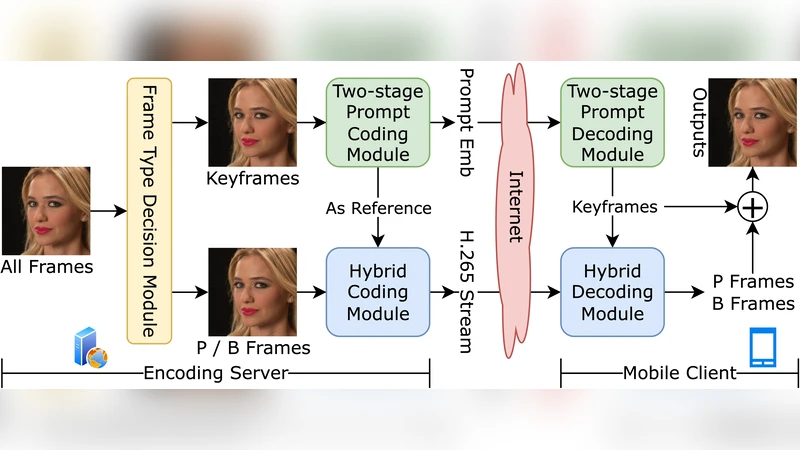

HybridPrompt proposes a hybrid architecture that leverages the strengths of both worlds. The video stream is divided into groups of pictures (GOPs). The first frame of each GOP, the keyframe, is encoded with a high‑capacity generative model. All subsequent frames are encoded with a conventional codec, using the generated keyframe as the reference picture for motion prediction. This design dramatically reduces the number of expensive neural inferences while preserving the ability of the generative model to inject high‑frequency detail where it matters most.
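The GOP split described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the names `FramePlan`, `plan_gops`, and `neural_inference_count` are hypothetical, and a fixed GOP size is assumed.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FramePlan:
    index: int      # position of the frame in the video
    path: str       # "generative" for keyframes, "traditional" otherwise
    reference: int  # index of the keyframe that starts this frame's GOP


def plan_gops(num_frames: int, gop_size: int) -> List[FramePlan]:
    """Assign each frame to the generative or the traditional decoding path."""
    if gop_size < 1:
        raise ValueError("gop_size must be at least 1")
    plan = []
    for i in range(num_frames):
        keyframe = (i // gop_size) * gop_size  # first frame of this GOP
        path = "generative" if i == keyframe else "traditional"
        plan.append(FramePlan(index=i, path=path, reference=keyframe))
    return plan


def neural_inference_count(num_frames: int, gop_size: int) -> int:
    """Number of frames that need a generative decode (one per GOP)."""
    return sum(1 for f in plan_gops(num_frames, gop_size) if f.path == "generative")
```

With a hypothetical GOP size of 30, a 300-frame clip needs only 10 generative decodes; the other 290 frames stay on the traditional path.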

A central technical contribution is a differentiable reformulation of the traditional decoder. Conventional codecs contain non-differentiable components such as hard quantization, integer-only transforms, and context-adaptive binary arithmetic coding. The authors replace hard quantization with a soft-round function, model entropy coding with a Gumbel-Softmax approximation, and reformulate motion compensation as a differentiable warping operation. With these substitutions, the entire encode-decode loop becomes end-to-end trainable, so gradients from the traditionally coded frames can flow back into the generative model. The loss function combines a perceptual term (LPIPS computed on the generated keyframe) with a rate-distortion term that penalizes both the reconstruction error of the predicted frames and the overall bitrate. This pushes the generative model to produce keyframes that are not only visually appealing but also prediction-friendly for the subsequent traditional codec, reducing residual energy and thus bitrate.
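To make the soft-round idea concrete, here is a minimal sketch of one tanh-based soft-rounding function of the kind used in the learned-compression literature. The summary does not say which form HybridPrompt uses, so the formula, the sharpness parameter `alpha`, and the function names are assumptions for illustration.

```python
import math


def soft_round(x: float, alpha: float) -> float:
    """Smooth stand-in for round(x).

    Small alpha behaves like the identity; large alpha approaches hard rounding.
    """
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    m = math.floor(x) + 0.5  # midpoint of the unit interval containing x
    r = x - m                # offset from that midpoint, in [-0.5, 0.5)
    return m + 0.5 * math.tanh(alpha * r) / math.tanh(alpha / 2.0)


def soft_round_grad(x: float, alpha: float) -> float:
    """Analytic derivative of soft_round with respect to x."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    r = x - (math.floor(x) + 0.5)
    sech2 = 1.0 - math.tanh(alpha * r) ** 2  # derivative of tanh
    return 0.5 * alpha * sech2 / math.tanh(alpha / 2.0)
```

Hard rounding has zero gradient almost everywhere, which blocks backpropagation. The soft version keeps a strictly positive gradient, and `alpha` can be raised during training so the function gradually approaches true rounding.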

HybridPrompt also introduces a two‑stage generation pipeline. In the first stage, a lightweight, low‑resolution VQ‑VAE‑2 or diffusion model quickly produces a coarse keyframe at 720p. In the second stage, a high‑resolution up‑sampling network refines this output to full 1080p, adding fine texture and detail. This staged approach cuts memory consumption and inference latency while still delivering high‑resolution outputs.
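The saving from the staged design can be estimated from pixel counts alone. The sketch below assumes the usual 16:9 frame sizes for 720p and 1080p (1280×720 and 1920×1080); the helper names are hypothetical.

```python
from fractions import Fraction
from typing import Tuple

Size = Tuple[int, int]  # (width, height) in pixels

COARSE: Size = (1280, 720)   # assumed 720p frame size for stage one
FULL: Size = (1920, 1080)    # assumed 1080p frame size for stage two


def pixel_count(size: Size) -> int:
    """Total number of pixels in a frame of the given size."""
    width, height = size
    return width * height


def upscale_factor(src: Size, dst: Size) -> Fraction:
    """Linear scale factor from src to dst; requires a matching aspect ratio."""
    fx = Fraction(dst[0], src[0])
    fy = Fraction(dst[1], src[1])
    if fx != fy:
        raise ValueError("source and target aspect ratios differ")
    return fx


linear_scale = upscale_factor(COARSE, FULL)                        # 3/2 per axis
area_ratio = Fraction(pixel_count(FULL), pixel_count(COARSE))      # 9/4 overall
```

Under these assumptions the first-stage model works on 921,600 pixels rather than 2,073,600, that is 2.25× fewer, and the refinement network upscales by 1.5× along each axis.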

Extensive evaluation on a commercial smartphone with a Snapdragon 8 Gen 2 SoC demonstrates real-time 1080p decoding at more than 150 FPS, comfortably above the 30–60 FPS needed for smooth playback. At a target bitrate of 200 kbps, HybridPrompt achieves an 8% LPIPS improvement over H.264, while PSNR is modestly lower, a trade-off that aligns with human visual perception, as confirmed by subjective MOS tests. Compared to pure neural codecs, HybridPrompt is more than an order of magnitude faster (a 20–50× speedup) while maintaining comparable visual quality.
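The arithmetic behind these figures is straightforward: 150 FPS leaves roughly 6.7 ms per frame, and 200 kbps at 30 FPS leaves roughly 6,667 bits (about 833 bytes) per frame on average. The sketch below also shows how a slow keyframe decode is amortized over a GOP. The per-frame timings and GOP size in the usage note are made-up illustrative values, not measurements from the paper, and the function names are hypothetical.

```python
def frame_time_ms(fps: float) -> float:
    """Time budget per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def bits_per_frame(bitrate_bps: float, fps: float) -> float:
    """Average number of bits available per frame at a given bitrate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return bitrate_bps / fps


def amortized_fps(keyframe_ms: float, inter_ms: float, gop_size: int) -> float:
    """Average decode throughput when one frame per GOP takes the slow path."""
    if gop_size < 1:
        raise ValueError("gop_size must be at least 1")
    total_ms = keyframe_ms + (gop_size - 1) * inter_ms  # decode time for one GOP
    return 1000.0 * gop_size / total_ms
```

For example, `amortized_fps(100.0, 2.0, 60)` models a hypothetical 100 ms generative keyframe followed by 59 traditionally decoded frames at 2 ms each. This is an average throughput only; a real player would also need to hide the keyframe latency, for instance by decoding ahead of playback.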

The paper acknowledges several limitations. The interval between keyframes is fixed; longer intervals place a heavier burden on the traditional codec's motion prediction and can lead to residual buildup. The generative model's performance is also data-dependent: diverse content types such as fast-motion sports or stylized animation may require domain-specific fine-tuning. Proposed future work includes adaptive keyframe spacing, multi-scale motion models, and user-centric bitrate allocation strategies.

In summary, HybridPrompt demonstrates that by making the traditional decoder differentiable and jointly optimizing it with a generative keyframe encoder, it is possible to combine the blazing‑fast hardware efficiency of legacy codecs with the high‑fidelity, perceptually driven reconstruction of neural models. This hybrid approach opens a practical pathway toward real‑time, high‑quality video streaming on mobile devices, a crucial capability for the upcoming 5G/6G era where bandwidth may be limited but user expectations for visual quality remain high.
