Grasp Synthesis Matching From Rigid To Soft Robot Grippers Using Conditional Flow Matching

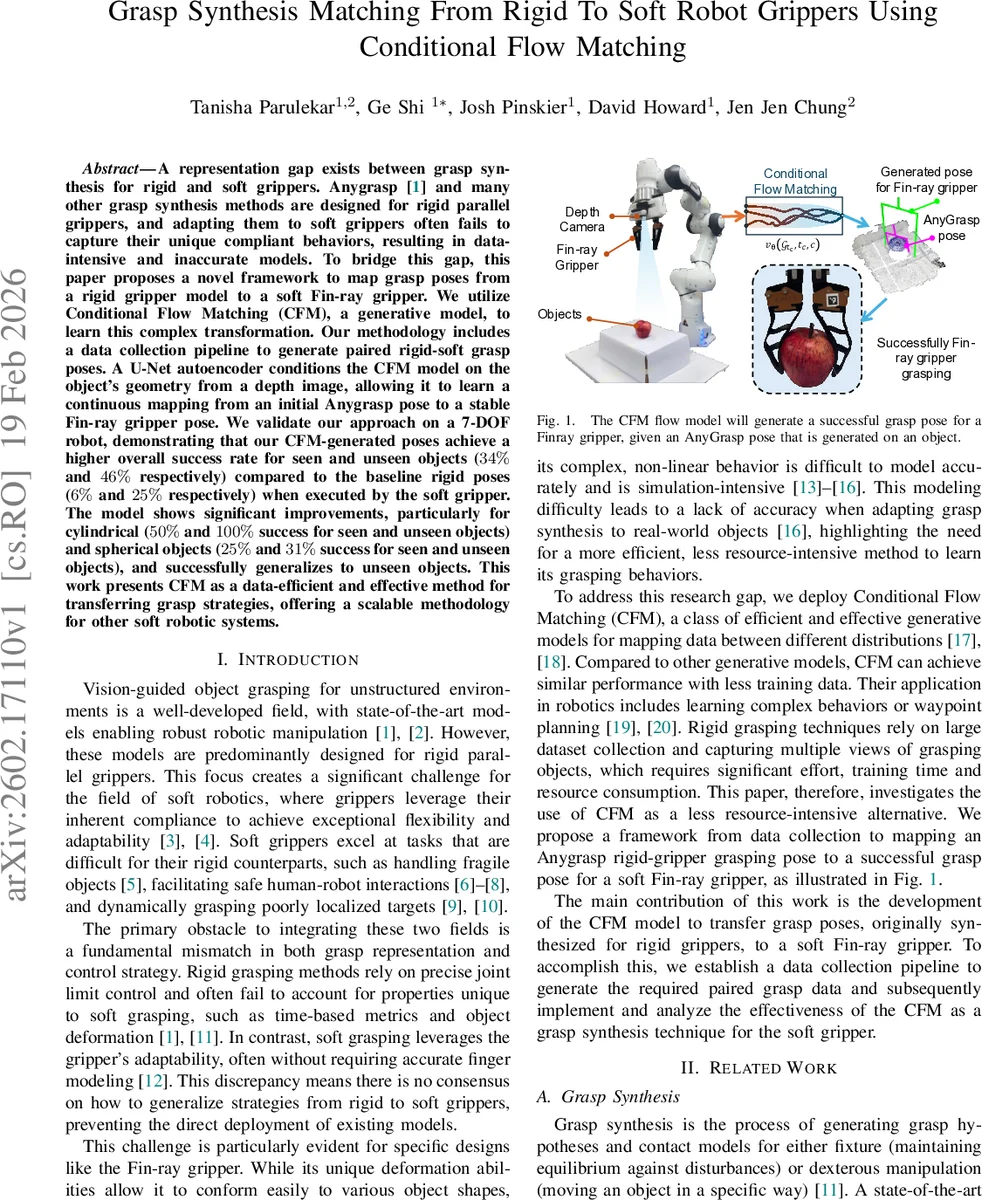

A representation gap exists between grasp synthesis for rigid and soft grippers. Anygrasp [1] and many other grasp synthesis methods are designed for rigid parallel grippers, and adapting them to soft grippers often fails to capture their unique compliant behaviors, resulting in data-intensive and inaccurate models. To bridge this gap, this paper proposes a novel framework to map grasp poses from a rigid gripper model to a soft Fin-ray gripper. We utilize Conditional Flow Matching (CFM), a generative model, to learn this complex transformation. Our methodology includes a data collection pipeline to generate paired rigid-soft grasp poses. A U-Net autoencoder conditions the CFM model on the object’s geometry from a depth image, allowing it to learn a continuous mapping from an initial Anygrasp pose to a stable Fin-ray gripper pose. We validate our approach on a 7-DOF robot, demonstrating that our CFM-generated poses achieve a higher overall success rate for seen and unseen objects (34% and 46% respectively) compared to the baseline rigid poses (6% and 25% respectively) when executed by the soft gripper. The model shows significant improvements, particularly for cylindrical (50% and 100% success for seen and unseen objects) and spherical objects (25% and 31% success for seen and unseen objects), and successfully generalizes to unseen objects. This work presents CFM as a data-efficient and effective method for transferring grasp strategies, offering a scalable methodology for other soft robotic systems.

💡 Research Summary

The paper addresses a fundamental “representation gap” between grasp synthesis methods designed for rigid parallel grippers—exemplified by AnyGrasp—and the needs of soft compliant grippers such as the Fin‑ray. While AnyGrasp can generate high‑quality 7‑DOF grasp poses (position, orientation, and opening width) for rigid hands, directly applying these poses to a soft gripper fails because soft devices rely on deformation, contact‑area adaptation, and time‑dependent closure dynamics that are not captured by rigid‑gripper models.

To bridge this gap, the authors propose a Conditional Flow Matching (CFM) framework that learns a continuous transformation from an AnyGrasp pose (G_any) to a successful Fin‑ray pose (G_soft). CFM treats the mapping as a conditional ordinary differential equation (ODE) flow:

dG(t)/dt = v_θ(G(t), t, c), G(0)=G_any, G(1)=G_soft

where v_θ is a time‑dependent vector field parameterized by a multilayer perceptron (MLP) and c is a condition vector encoding the scene geometry. The condition vector is obtained from a U‑Net auto‑encoder that compresses a raw depth image into a 128‑dimensional latent code. This code captures essential shape information of the target object, allowing the flow to be object‑specific without explicit hand‑crafted features.

Training data consist of paired grasps collected on a 7‑DOF Franka Emika Panda robot equipped with a RealSense D415 camera and a Fin‑ray gripper. For each object, AnyGrasp generates 20 candidate rigid grasps; a subset of these (including high‑ and low‑ranked poses) is manually adjusted in a pre‑grasp configuration to produce a successful soft‑grasp pose. The resulting dataset contains 254 (G_any, G_soft) pairs across eight objects, with an additional 865 depth images used to pre‑train the U‑Net.

The loss function minimizes the mean‑squared error between the predicted vector field v_θ and the ground‑truth displacement u = G_soft – G_any evaluated at random interpolation times t_c ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment