AgentConductor: Topology Evolution for Multi-Agent Competition-Level Code Generation

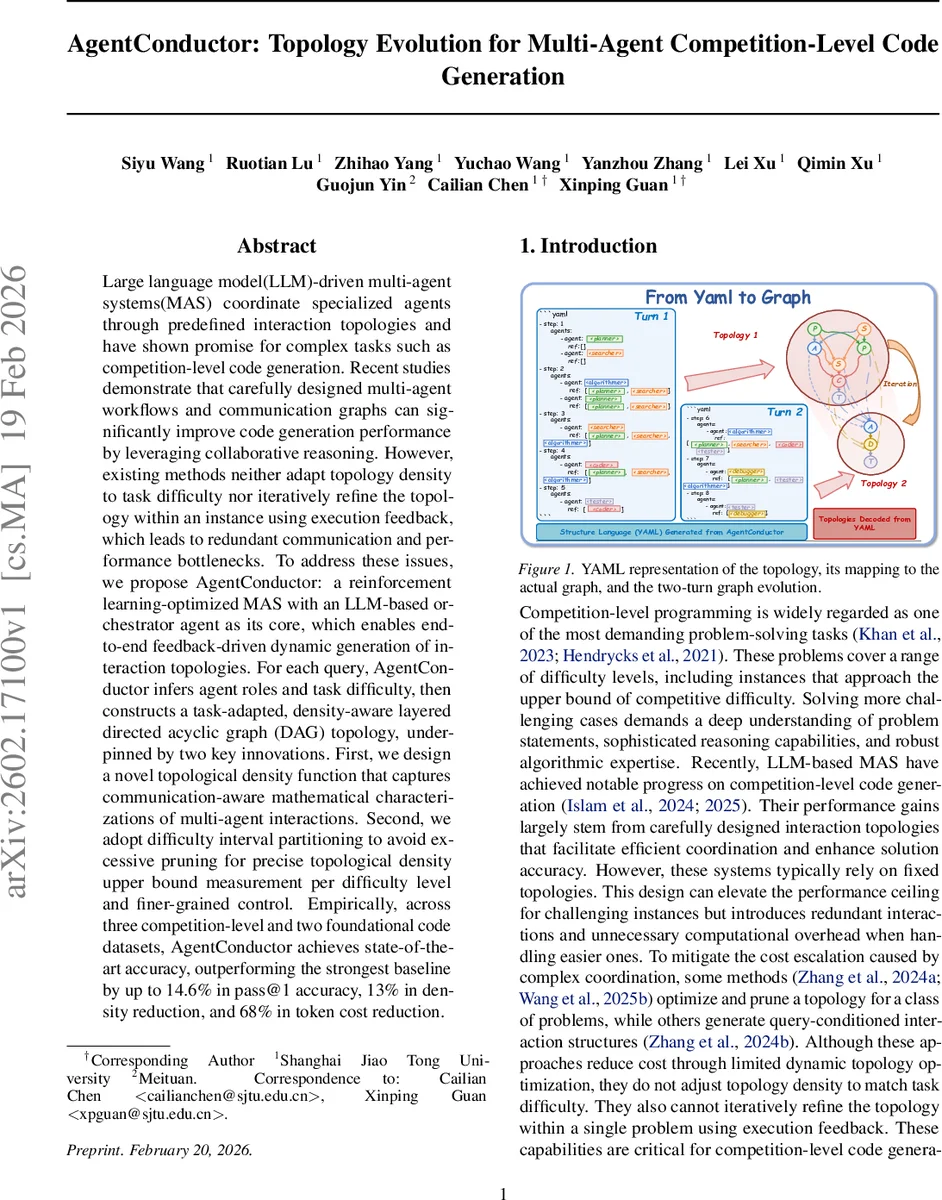

Large language model (LLM)-driven multi-agent systems (MAS) coordinate specialized agents through predefined interaction topologies and have shown promise for complex tasks such as competition-level code generation. Recent studies demonstrate that carefully designed multi-agent workflows and communication graphs can significantly improve code generation performance by leveraging collaborative reasoning. However, existing methods neither adapt topology density to task difficulty nor iteratively refine the topology within an instance using execution feedback, which leads to redundant communication and performance bottlenecks. To address these issues, we propose AgentConductor: a reinforcement learning-optimized MAS with an LLM-based orchestrator agent as its core, which enables end-to-end feedback-driven dynamic generation of interaction topologies. For each query, AgentConductor infers agent roles and task difficulty, then constructs a task-adapted, density-aware layered directed acyclic graph (DAG) topology, underpinned by two key innovations. First, we design a novel topological density function that captures communication-aware mathematical characterizations of multi-agent interactions. Second, we adopt difficulty interval partitioning, which avoids excessive pruning by measuring a precise topological density upper bound for each difficulty level and allows finer-grained control. Empirically, across three competition-level and two foundational code datasets, AgentConductor achieves state-of-the-art accuracy, outperforming the strongest baseline by up to 14.6% in pass@1 accuracy, 13% in density reduction, and 68% in token cost reduction.

💡 Research Summary

AgentConductor introduces a novel reinforcement‑learning‑optimized multi‑agent system (MAS) for competition‑level code generation, centered on an LLM‑based orchestrator that dynamically creates and evolves interaction topologies. Existing LLM‑driven MAS either rely on static, pre‑defined graphs or apply one‑time pruning across an entire dataset, which fails to adapt graph density to the difficulty of individual problems and cannot incorporate execution feedback during inference. AgentConductor addresses these gaps through a three‑stage pipeline.

First, a supervised fine‑tuning (SFT) phase equips the orchestrator (based on Qwen‑2.5‑Instruct‑3B) with priors over a diverse set of topologies expressed in a human‑readable YAML language. Second, a reinforcement‑learning (RL) phase optimizes a policy πθ using a multi‑objective reward that balances structural correctness, code accuracy (pass@k), and a newly defined graph‑density cost. The density function D(G) mathematically captures communication overhead as a function of edge count, node count, and depth, and is bounded differently for predefined difficulty intervals, allowing “dense” graphs for hard problems and “sparse” graphs for easy ones.

Third, during inference the trained orchestrator is frozen and used to generate a layered directed acyclic graph (DAG) for each problem. The orchestrator first infers the problem’s difficulty, selects appropriate agent roles (planner, searcher, algorithmist, coder, tester, debugger, etc.), and emits a variable‑length YAML token sequence that encodes the DAG. The environment executes agents according to this graph, runs the generated code in a sandbox, and returns execution feedback (e.g., test failures, time‑outs). If the attempt fails, the feedback and interaction history are fed back into the orchestrator, which produces a revised topology in the next turn. This multi‑turn, feedback‑driven evolution continues until a correct solution is found or a turn limit is reached.

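To make the idea of a density‑bounded layered DAG concrete, here is a minimal Python sketch. It is not the paper's formulation: the summary only says that D(G) depends on edge count, node count, and depth, so the weighted sum below is a stand‑in, and the names `LayeredDAG`, `density`, `within_budget`, and the cap values in `DENSITY_CAP` are all hypothetical choices made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LayeredDAG:
    # layers[i] lists the agent roles that run at step i.
    layers: tuple[tuple[str, ...], ...]
    # Each edge is (source_role, target_role); the source must sit in an earlier layer.
    edges: frozenset[tuple[str, str]]

    def validate(self) -> None:
        """Raise ValueError if an edge is unknown or does not point forward."""
        layer_of = {role: i for i, layer in enumerate(self.layers) for role in layer}
        for src, dst in self.edges:
            if src not in layer_of or dst not in layer_of:
                raise ValueError(f"edge {src}->{dst} names an unknown role")
            if layer_of[src] >= layer_of[dst]:
                raise ValueError(f"edge {src}->{dst} does not point to a later layer")


def density(g: LayeredDAG, w_edge: float = 1.0, w_node: float = 1.0,
            w_depth: float = 1.0) -> float:
    """Stand-in density: a weighted sum of edge count, node count, and depth.

    Assumption: the real D(G) is defined in the paper; this form is illustrative only.
    """
    nodes = sum(len(layer) for layer in g.layers)
    return w_edge * len(g.edges) + w_node * nodes + w_depth * len(g.layers)


# Hypothetical per-difficulty upper bounds on density (not taken from the paper).
DENSITY_CAP = {"easy": 6.0, "medium": 12.0, "hard": 24.0}


def within_budget(g: LayeredDAG, difficulty: str) -> bool:
    """Check structural validity, then compare density to the difficulty's cap."""
    g.validate()
    return density(g) <= DENSITY_CAP[difficulty]


# A three-agent chain: planner -> coder -> tester.
chain = LayeredDAG(
    layers=(("planner",), ("coder",), ("tester",)),
    edges=frozenset({("planner", "coder"), ("coder", "tester")}),
)
print(density(chain), within_budget(chain, "easy"), within_budget(chain, "medium"))
```

Under these assumed caps, the three‑agent chain is too dense for an "easy" problem but fits a "medium" one, which mirrors the sparse‑for‑easy, dense‑for‑hard behavior the summary describes.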
Experiments span three competition‑level benchmarks (e.g., Codeforces, AtCoder, LeetCode) and two foundational code suites (HumanEval, MBPP). AgentConductor improves pass@1 over the strongest baseline by up to 14.6%, reduces average graph density by 13%, and cuts token consumption by 68%. Detailed analysis shows that difficulty‑aware density control yields the largest gains on hard instances, while sparsity on easy instances curtails unnecessary communication. Zero‑shot transfer experiments show that the orchestrator generalizes to new datasets and can incorporate novel agent roles with minimal additional training. In sum, the results indicate that jointly optimizing topology generation, difficulty‑aware density, and execution‑feedback loops improves both the efficiency and the effectiveness of LLM‑based code generation systems.
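The multi‑turn, feedback‑driven evolution described in the summary can be sketched as a small control loop. This is an illustrative outline under stated assumptions, not the authors' implementation: `evolve_topology`, `propose`, and `run` are hypothetical names, the topology is treated as an opaque string (the paper emits YAML), and the two stub functions stand in for the orchestrator LLM and the sandboxed execution environment.

```python
from __future__ import annotations

from typing import Callable, Optional

Topology = str   # Opaque here; in the paper this is a YAML-encoded layered DAG.
Feedback = str   # Execution feedback such as test failures or time-outs.
History = list[tuple[Topology, Feedback]]


def evolve_topology(
    problem: str,
    propose: Callable[[str, History], Topology],
    run: Callable[[str, Topology], tuple[bool, Feedback]],
    max_turns: int = 3,
) -> tuple[Optional[Topology], History]:
    """Propose a topology, execute it, and retry with feedback until success or the turn limit."""
    history: History = []
    for _ in range(max_turns):
        topology = propose(problem, history)        # orchestrator sees all prior attempts
        passed, feedback = run(problem, topology)   # agents run, code is tested in a sandbox
        history.append((topology, feedback))
        if passed:
            return topology, history
    return None, history


# Stub orchestrator (assumption): start sparse, switch to dense after any failure.
def stub_propose(problem: str, history: History) -> Topology:
    return "sparse" if not history else "dense"


# Stub environment (assumption): only the dense topology solves this problem.
def stub_run(problem: str, topology: Topology) -> tuple[bool, Feedback]:
    if topology == "dense":
        return True, "all tests passed"
    return False, "test 2 failed"


final, log = evolve_topology("hypothetical problem", stub_propose, stub_run)
print(final, len(log))
```

Passing the orchestrator and environment in as callables keeps the loop independent of any particular model or sandbox, so the same skeleton works with a frozen policy at inference time.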
