General sample size analysis for probabilities of causation: a delta method approach

Probabilities of causation (PoCs), such as the probability of necessity and sufficiency (PNS), are important tools for decision making but are generally not point identifiable. Existing work has derived bounds for these quantities using combinations of experimental and observational data. However, there is very limited research on sample size analysis, namely, how many experimental and observational samples are required to achieve a desired margin of error. In this paper, we propose a general sample size framework based on the delta method. Our approach applies to settings in which the target bounds of PoCs can be expressed as finite minima or maxima of linear combinations of experimental and observational probabilities. Through simulation studies, we demonstrate that the proposed sample size calculations lead to stable estimation of these bounds.

💡 Research Summary


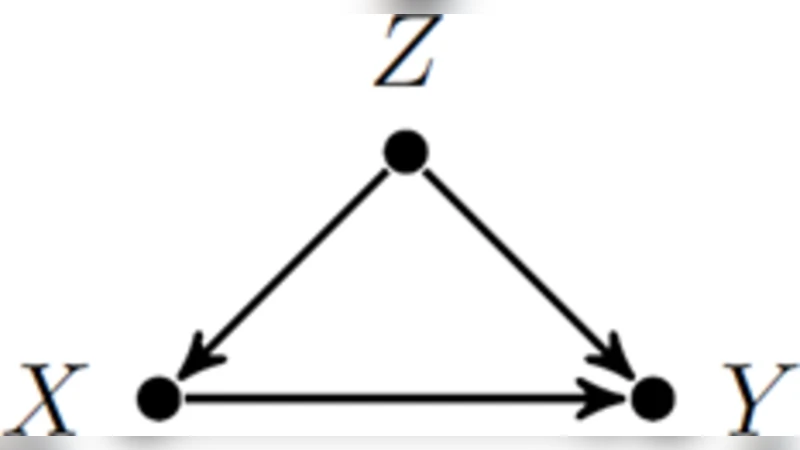

The paper addresses a gap in the literature on probabilities of causation (PoCs), such as the probability of necessity and sufficiency (PNS), by providing a systematic sample-size framework. Previous work has focused on deriving sharp bounds for PoCs from a combination of experimental (interventional) and observational data, but it has largely ignored how many experimental and observational samples are needed to achieve a pre-specified margin of error. The authors observe that many PoC bounds can be written as the minimum or maximum of a finite set of linear combinations of basic experimental and observational probabilities. This structure makes the problem amenable to the delta method, a classic technique that approximates the variance of a smooth function of random variables via a first-order Taylor expansion.
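To make the delta-method idea concrete, the following is a minimal sketch for a single linear combination of estimated probabilities, not the authors' implementation. It assumes independent sample proportions with binomial variance p(1-p)/n. The function names, the probability values, the sample sizes, and the choice of the term P(y | do(x)) - P(y | do(x')) are all illustrative. For a linear term the gradient is simply the coefficient vector. A minimum or maximum over several terms is not differentiable where terms tie, and this sketch does not handle that case.

```python
import numpy as np


def binomial_proportion_covariance(probs, sample_sizes):
    """Diagonal covariance of independent sample proportions: p(1 - p) / n."""
    p = np.asarray(probs, dtype=float)
    n = np.asarray(sample_sizes, dtype=float)
    return np.diag(p * (1.0 - p) / n)


def delta_method_variance(gradient, covariance):
    """First-order variance approximation: gradient^T * Sigma * gradient."""
    g = np.asarray(gradient, dtype=float)
    sigma = np.asarray(covariance, dtype=float)
    return float(g @ sigma @ g)


# Illustrative linear term: P(y | do(x)) - P(y | do(x')).
coeffs = np.array([1.0, -1.0])   # gradient of a linear combination
probs = np.array([0.7, 0.4])     # assumed probabilities, not from the paper
sizes = np.array([500, 500])     # assumed experimental sample sizes

cov = binomial_proportion_covariance(probs, sizes)
var = delta_method_variance(coeffs, cov)

# Approximate 95% margin of error under a normal approximation.
margin = 1.96 * np.sqrt(var)
print(f"variance = {var:.6f}, margin of error = {margin:.4f}")
```

Since the variance of each proportion scales as 1/n, rerunning the sketch with larger values in `sizes` shows how the margin of error shrinks as more samples are collected.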

Under the standard assumption that the count behind each estimated probability is binomially distributed and that experimental and observational samples are independent, the authors derive the gradient of the bound function with respect to the vector of underlying probabilities. By combining this gradient with the covariance matrix of the estimated probabilities, they obtain an approximate variance for the bound estimator:

\