WS-GRPO: Weakly-Supervised Group-Relative Policy Optimization for Rollout-Efficient Reasoning

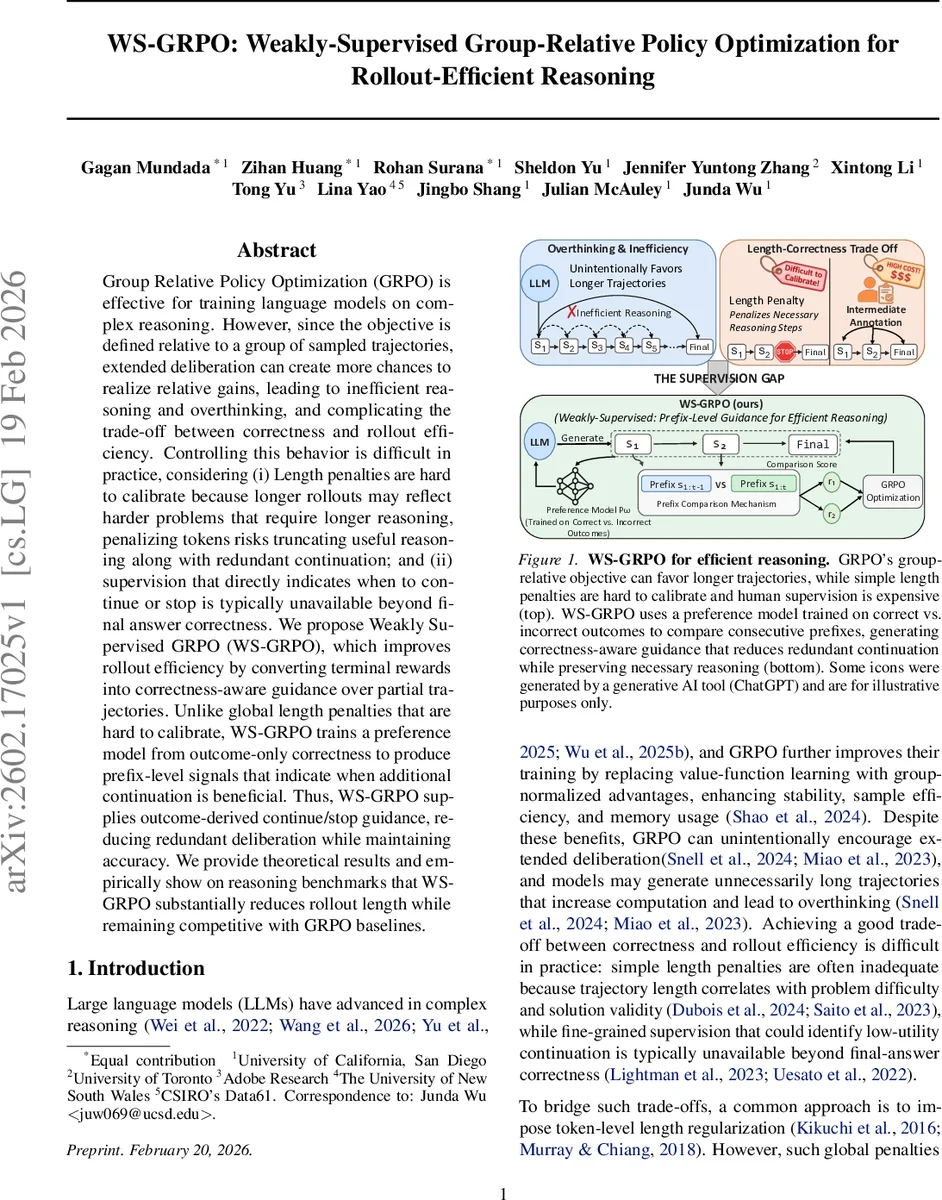

Group Relative Policy Optimization (GRPO) is effective for training language models on complex reasoning. However, since the objective is defined relative to a group of sampled trajectories, extended deliberation can create more chances to realize relative gains, leading to inefficient reasoning and overthinking, and complicating the trade-off between correctness and rollout efficiency. Controlling this behavior is difficult in practice, considering (i) Length penalties are hard to calibrate because longer rollouts may reflect harder problems that require longer reasoning, penalizing tokens risks truncating useful reasoning along with redundant continuation; and (ii) supervision that directly indicates when to continue or stop is typically unavailable beyond final answer correctness. We propose Weakly Supervised GRPO (WS-GRPO), which improves rollout efficiency by converting terminal rewards into correctness-aware guidance over partial trajectories. Unlike global length penalties that are hard to calibrate, WS-GRPO trains a preference model from outcome-only correctness to produce prefix-level signals that indicate when additional continuation is beneficial. Thus, WS-GRPO supplies outcome-derived continue/stop guidance, reducing redundant deliberation while maintaining accuracy. We provide theoretical results and empirically show on reasoning benchmarks that WS-GRPO substantially reduces rollout length while remaining competitive with GRPO baselines.

💡 Research Summary

Weakly‑Supervised Group‑Relative Policy Optimization (WS‑GRPO) addresses a critical inefficiency in modern large language models (LLMs) when they are used for multi‑step reasoning tasks. Existing Group‑Relative Policy Optimization (GRPO) improves sample efficiency and stability by normalizing advantages across a batch of sampled trajectories, but its relative‑gain objective unintentionally encourages longer rollouts: the more steps a model generates, the higher the chance that at least one trajectory in the group will achieve a relative advantage. This leads to “overthinking,” higher computational cost, and difficulty balancing correctness against rollout length. Simple token‑level length penalties are problematic because trajectory length often correlates with problem difficulty; penalizing length can truncate necessary reasoning on hard questions while failing to curb redundant steps on easy ones. Moreover, fine‑grained supervision indicating when to stop generation is rarely available beyond the binary final‑answer correctness label.

WS‑GRPO proposes a two‑stage solution that converts the sparse terminal reward (correct/incorrect answer) into dense, prefix‑level guidance without requiring any additional human annotation. In Phase I, the method builds a weakly‑supervised preference model Pω from outcome‑only labels. For each question, several full reasoning trajectories are sampled; correct trajectories are paired with incorrect ones, and a binary label y = 1 is assigned to the ordered pair (correct, incorrect) while y = 0 to the reverse ordering. The preference model receives as input the question together with the two full trajectories concatenated in a specially designed template, processes them with a FLAN‑T5 encoder, and outputs a probability that the first trajectory is preferred. Training uses a Bradley‑Terry‑style binary cross‑entropy loss, ensuring that the model learns to distinguish high‑quality reasoning from low‑quality reasoning solely from final‑answer outcomes.

In Phase II, the frozen preference model is repurposed to generate step‑wise pseudo‑rewards for each rollout produced by the current policy πθ. For a trajectory τ = (s₁, …, s_T) where each s_t is a sentence‑level reasoning step, the model compares consecutive prefixes (s₁:ₜ₋₁, s₁:ₜ). The probability that the longer prefix is preferred, r_stepₜ = Pω(q, s₁:ₜ₋₁, s₁:ₜ), serves as a dense reward indicating whether extending the reasoning at step t adds value. The average of these r_step values across a trajectory yields a prefix‑level reward R_pref. WS‑GRPO then combines R_pref with the original terminal reward (binary correctness) inside the GRPO objective: the group‑relative advantage ˆA_i is computed from the sum of terminal and prefix rewards, and the usual PPO‑style clipping loss with KL regularization is applied. Consequently, the policy receives a strong signal to stop generating when additional steps are unlikely to improve correctness, while still being encouraged to continue when the prefix reward suggests a meaningful gain.

The authors provide theoretical guarantees: (1) consistency of the preference model with respect to the underlying outcome distribution, (2) a preference‑error‑controlled robustness bound showing that bounded errors in Pω translate to bounded degradation in policy performance, and (3) a high‑probability generalization bound for the preference model trained on finite outcome data. These results justify that weak supervision from final answers can reliably induce useful intermediate signals.

Empirical evaluation is conducted on three reasoning benchmarks: GSM‑8K, MathQA, and MATH. Baselines include the original GRPO, a standard PPO policy, and GRPO augmented with a handcrafted token‑level length penalty. WS‑GRPO achieves a substantial reduction in average rollout length—between 28 % and 35 %—while maintaining or slightly improving answer accuracy (often within 0.5 % of the GRPO baseline). The method is especially effective on easier questions, where it cuts unnecessary steps by over 40 %, yet it preserves longer reasoning chains on harder problems, avoiding the accuracy drop seen with naive length penalties. Ablation studies confirm that the prefix‑level rewards derived from the preference model are the primary driver of efficiency gains; removing them reverts performance to that of vanilla GRPO. Additional experiments with different model sizes for the preference network demonstrate that even modestly sized preference models suffice to produce useful guidance.

The paper discusses limitations and future directions. The current implementation compares only adjacent prefixes (one‑step differences); exploring larger gaps could yield richer signals. The preference model, trained on full‑trajectory outcomes, may struggle when the quality difference between consecutive prefixes is subtle, suggesting a potential benefit from incorporating auxiliary signals (e.g., self‑consistency scores). Moreover, the approach assumes sentence‑level reasoning steps; adapting it to token‑level or more fine‑grained action spaces would broaden applicability. Finally, integrating WS‑GRPO with other rollout‑reduction techniques such as early‑exit transformers or dynamic‑depth networks is an interesting avenue.

In summary, WS‑GRPO introduces a principled weak‑supervision framework that transforms binary final‑answer correctness into correctness‑aware, prefix‑level continue/stop signals via a learned preference model. By embedding these dense signals into the GRPO objective, the method effectively curtails redundant deliberation, reduces computational cost, and preserves high reasoning accuracy, offering a practical solution for deploying large language models in resource‑constrained or real‑time reasoning scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment