CAFE: Channel-Autoregressive Factorized Encoding for Robust Biosignal Spatial Super-Resolution

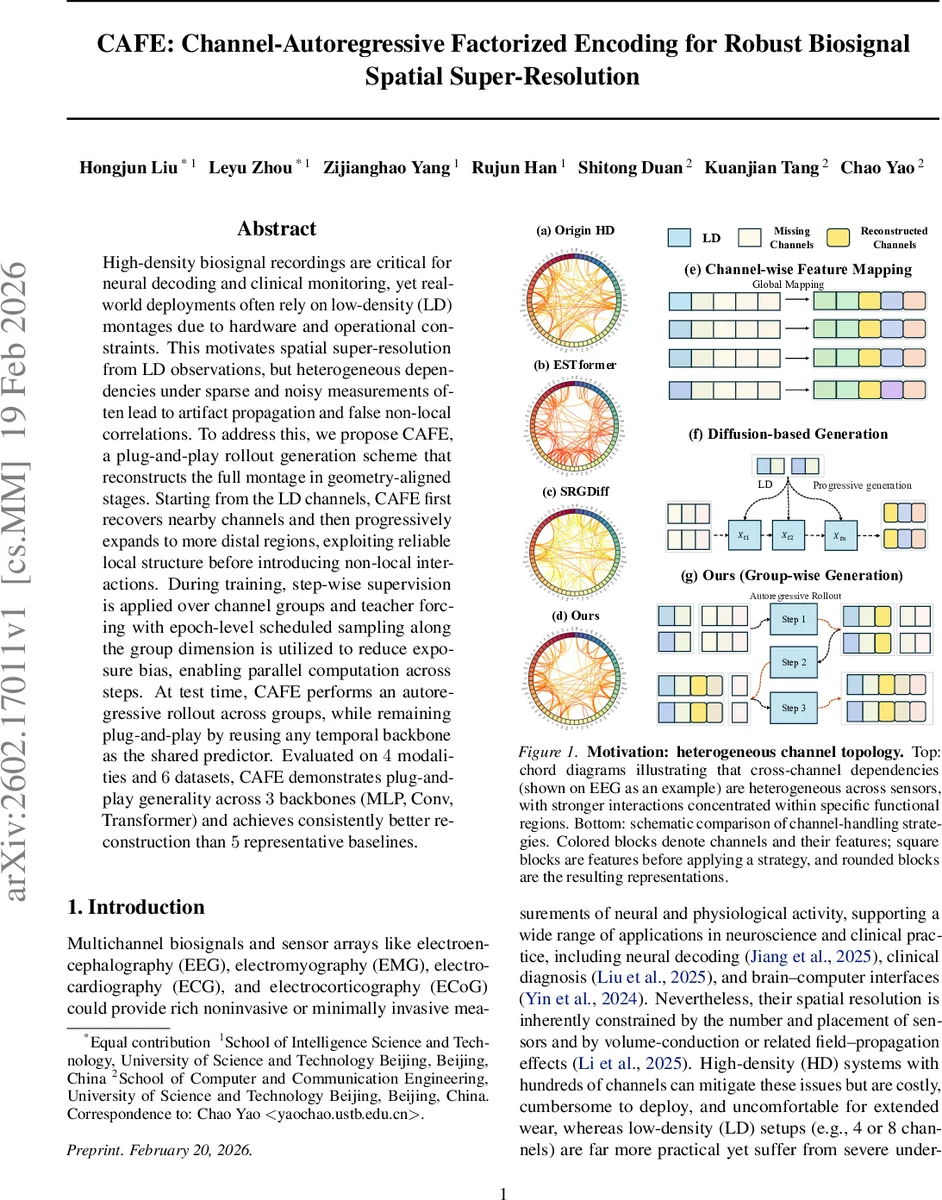

High-density biosignal recordings are critical for neural decoding and clinical monitoring, yet real-world deployments often rely on low-density (LD) montages due to hardware and operational constraints. This motivates spatial super-resolution from LD observations, but heterogeneous dependencies under sparse and noisy measurements often lead to artifact propagation and false non-local correlations. To address this, we propose CAFE, a plug-and-play rollout generation scheme that reconstructs the full montage in geometry-aligned stages. Starting from the LD channels, CAFE first recovers nearby channels and then progressively expands to more distal regions, exploiting reliable local structure before introducing non-local interactions. During training, step-wise supervision is applied over channel groups and teacher forcing with epoch-level scheduled sampling along the group dimension is utilized to reduce exposure bias, enabling parallel computation across steps. At test time, CAFE performs an autoregressive rollout across groups, while remaining plug-and-play by reusing any temporal backbone as the shared predictor. Evaluated on $4$ modalities and $6$ datasets, CAFE demonstrates plug-and-play generality across $3$ backbones (MLP, Conv, Transformer) and achieves consistently better reconstruction than $5$ representative baselines.

💡 Research Summary

The paper tackles the problem of spatial super‑resolution for multichannel biosignals, i.e., reconstructing high‑density (HD) recordings from low‑density (LD) observations that are common in real‑world deployments due to cost, comfort, and hardware constraints. Existing approaches—interpolation, direct feature‑mapping, and generative models (GANs, diffusion)—all rely on globally dense channel coupling. This global mixing is problematic because LD recordings are often sparse and noisy; non‑local channel interactions become unreliable, leading to artifact propagation and spurious long‑range correlations.

CAFE (Channel‑Autoregressive Factorized Encoding) introduces a fundamentally different decoding paradigm: it factorizes the reconstruction into a sequence of geometry‑aware steps, each recovering a small group of channels that are close to the already observed set. The method proceeds as follows. First, the canonical electrode coordinates are used to compute, for every missing channel, its average Euclidean distance to the LD anchors. Missing channels are sorted by this distance and partitioned into G = 3 contiguous groups using fixed split fractions (β₁ = 1/6, β₂ = 1/2). The first group contains the channels nearest to the LD set, the second group the next‑nearest, and the third the most distant.

A single shared predictor fθ—implemented as any temporal backbone (depthwise‑separable convolutional encoder, lightweight MLP with positional encodings, or a standard time‑series transformer)—is reused across all steps. At step g, a binary mask M_g marks the currently visible channels V_g = L ∪ ⋃_{k<g}U_k. The masked montage C_g = M_g ⊙ eX(g‑1) (where eX(g‑1) holds the LD channels and the predictions from previous steps) is fed to fθ, which outputs a full‑channel estimate bX(g). Only the entries belonging to the target group U_g are written back; all other channels remain unchanged. Repeating this for g = 1…G yields the final HD reconstruction.

Training uses teacher forcing: during each step the context is built from ground‑truth values of all earlier groups, ensuring that the predictor learns a clean conditional mapping. To mitigate exposure bias at inference—where the model must rely on its own predictions—a novel epoch‑level scheduled sampling scheme is introduced. After each epoch, a cache of the model’s own predictions for all groups is stored. In the next epoch, for each earlier group k < g a Bernoulli variable with probability π = 0.95 decides whether to use the ground‑truth or the cached prediction when constructing the context. Because the cache is fixed for the whole forward pass, all step contexts can be assembled in parallel, preserving computational efficiency. At test time π = 0, so the rollout is fully autoregressive.

The authors evaluate CAFE on six publicly available multichannel datasets covering four modalities: high‑density EEG (SEED, Localize‑MI), high‑density surface EMG (two gesture datasets), intracranial ECoG (AJILE12), and 12‑lead ECG (CPSC2018). For each dataset they test multiple up‑sampling factors (2× to 16×) and four predefined LD layouts that maximize spatial coverage. Metrics include signal fidelity (NMSE, Pearson correlation, reconstruction SNR), a perceptual EEG‑FID computed with a frozen EEGNet, spectral MAE on STFT power, and downstream utility (emotion classification accuracy on SEED and binary seizure detection on Localize‑MI).

Across all backbones, CAFE’s group‑wise autoregressive rollout consistently improves performance over the original one‑pass models. For example, with a convolutional backbone on SEED, NMSE drops from 0.18 to 0.12 (33 % reduction), PCC rises from 0.86 to 0.93 (+8 %), and SNR improves by 30 % (6.98 dB → 9.04 dB). Similar gains are observed for MLP and transformer backbones, and the relative improvements are even larger on high‑density modalities such as ECoG, where structured dependency expansion yields the most benefit. Compared with five strong baselines—including interpolation, feature‑mapping, and state‑of‑the‑art generative models (SRGDif f, ESTformer, SRGDiff, CGAN)—CAFE achieves the best scores on every metric, confirming its robustness and generality.

Key contributions are: (1) reformulating spatial super‑resolution as a next‑group conditional generation problem; (2) introducing a geometry‑aware, distance‑ordered group partition and an autoregressive decoding schedule that progressively expands the visible channel set; (3) demonstrating that the rollout is backbone‑agnostic, enabling plug‑and‑play use of any temporal model; and (4) providing a practical training scheme that combines teacher forcing with epoch‑level scheduled sampling to reduce exposure bias while retaining parallelizability.

The paper also discusses limitations. The current grouping uses fixed split fractions and a static number of steps, which may be suboptimal for irregular sensor layouts or highly imbalanced channel distributions. Future work could explore adaptive, data‑driven grouping strategies, investigate latency and memory footprints for real‑time streaming applications, and extend the approach to multimodal fusion scenarios where different sensor types share a common spatial layout.

In summary, CAFE offers a principled, efficient, and empirically validated solution for reconstructing high‑resolution multichannel biosignals from sparse measurements. By respecting the inherent locality of biosignal correlations and gradually introducing non‑local interactions, it avoids the pitfalls of global coupling, reduces artifact propagation, and delivers consistently higher fidelity reconstructions across diverse modalities and datasets. This makes it a compelling candidate for deployment in next‑generation brain‑computer interfaces, remote health monitoring, and any application that must trade off sensor density against signal quality.

Comments & Academic Discussion

Loading comments...

Leave a Comment