Sonar-TS: Search-Then-Verify Natural Language Querying for Time Series Databases

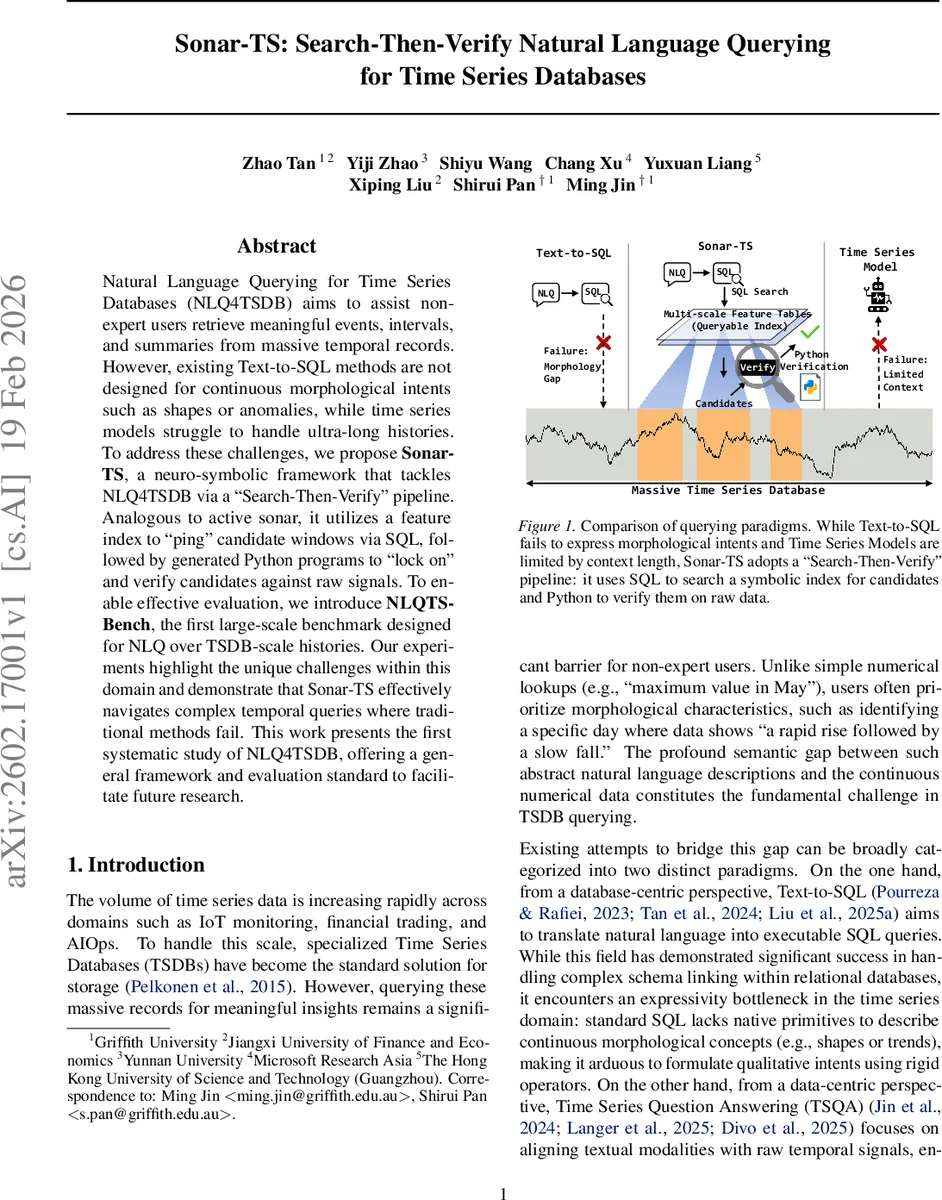

Natural Language Querying for Time Series Databases (NLQ4TSDB) aims to assist non-expert users retrieve meaningful events, intervals, and summaries from massive temporal records. However, existing Text-to-SQL methods are not designed for continuous morphological intents such as shapes or anomalies, while time series models struggle to handle ultra-long histories. To address these challenges, we propose Sonar-TS, a neuro-symbolic framework that tackles NLQ4TSDB via a Search-Then-Verify pipeline. Analogous to active sonar, it utilizes a feature index to ping candidate windows via SQL, followed by generated Python programs to lock on and verify candidates against raw signals. To enable effective evaluation, we introduce NLQTSBench, the first large-scale benchmark designed for NLQ over TSDB-scale histories. Our experiments highlight the unique challenges within this domain and demonstrate that Sonar-TS effectively navigates complex temporal queries where traditional methods fail. This work presents the first systematic study of NLQ4TSDB, offering a general framework and evaluation standard to facilitate future research.

💡 Research Summary

The paper introduces a new research problem called Natural Language Querying for Time Series Databases (NLQ4TSDB), which aims to let non‑expert users retrieve complex insights—such as specific shapes, anomalies, or causal relationships—from massive, long‑horizon time‑series collections using natural language. Existing approaches fall short: traditional Text‑to‑SQL pipelines excel at schema linking but cannot express continuous morphological intents (e.g., “rapid rise then slow fall”), while recent Time‑Series Question Answering (TSQA) models are limited by fixed‑size context windows and cannot ingest the millions of points typical of real‑world TSDBs.

To bridge this gap, the authors propose Sonar‑TS, a neuro‑symbolic framework that decomposes the task into a “Search‑Then‑Verify” pipeline, analogous to active sonar. The system consists of three stages.

-

Offline Data Processing – Raw series are segmented into multi‑scale windows (year, month, day, etc.). For each window the system computes lightweight statistical primitives (slope, variance, etc.) and symbolic shape signatures (e.g., SAX, shapelet descriptors). These descriptors are stored in compact feature tables that can be queried efficiently with standard SQL. The raw TSDB remains the source of truth and is accessed only later for precise verification.

-

Online Querying – Given a natural‑language query Q and a textual schema S, a large language model (LLM) acts as a planner. It generates a hybrid execution plan consisting of (a) an SQL fragment that searches the feature tables and returns candidate window identifiers, and (b) a Python snippet that fetches the raw slices for those candidates and performs task‑specific checks (peak detection, causal inference, periodicity analysis, etc.). This two‑step approach provides high recall in the first stage (thanks to the index) while guaranteeing exact results in the second stage because the raw data is examined directly. The planner also incorporates a cold‑start prompt and continuously refines its generation strategy using experience summaries from past queries.

-

Post‑Processing – The results are formatted into the appropriate output type (scalar, timestamp, interval, or structured report). Visualizations and concise textual summaries are added for user inspection.

To evaluate Sonar‑TS, the authors construct NLQTSBench, the first large‑scale benchmark specifically designed for NLQ over TSDB‑scale histories. NLQTSBench contains 831 validated queries, organized into a four‑level taxonomy:

- Level 1 – Basic Operations (numeric filtering, simple windowing).

- Level 2 – Pattern Recognition (shape identification, periodicity detection, subsequence matching).

- Level 3 – Semantic Reasoning (composite trends, contextual anomalies, causal anomalies across channels).

- Level 4 – Insight Synthesis (full‑report generation with trend segmentation and outlier auditing).

Each query operates on windows averaging ~11 000 points, while the underlying series contain >170 000 points, forcing solvers to perform true evidence localization rather than naïve down‑sampling. The benchmark provides deterministic evaluation scripts and metrics such as interval IoU and Set‑F1. A lightweight “NLQTSBench‑Lite” variant with fixed 512‑point windows is also released for evaluating context‑limited models.

Experimental results compare Sonar‑TS against strong baselines: (i) pure Text‑to‑SQL translators, (ii) end‑to‑end TSQA models (e.g., Time‑LLM, ChatTS), and (iii) hybrid pipelines that lack a dedicated feature index. Across Levels 2 and 3, Sonar‑TS achieves more than 30 % absolute improvement in accuracy, particularly on queries requiring shape grounding or cross‑channel causal reasoning. The feature index yields high‑recall candidate sets, dramatically reducing the number of raw‑data scans and enabling the Python verification step to run in milliseconds per candidate.

Key insights from the study are:

- Neuro‑symbolic synergy – Symbolic multi‑scale indices provide efficient coarse‑grained filtering, while LLM‑driven planning supplies the flexibility to interpret nuanced natural‑language intents and generate precise verification code.

- Scalability without sacrificing precision – By never performing a full scan of the raw TSDB, Sonar‑TS scales to ultra‑long histories, yet the final answer is always computed on the original data, preserving exactness.

- Benchmark necessity – NLQTSBench reveals that many existing models collapse when faced with long‑horizon evidence localization, underscoring the need for dedicated evaluation suites in this emerging domain.

The paper concludes with several avenues for future work: (a) enriching the feature index with more expressive shape descriptors while keeping storage overhead low, (b) improving the LLM planner through reinforcement learning from execution feedback, (c) extending the framework to real‑time streaming scenarios, and (d) exploring multimodal queries that combine textual descriptions with visual sketches. Sonar‑TS and NLQTSBench together lay a solid foundation for the next generation of natural‑language interfaces to large‑scale time‑series databases.

Comments & Academic Discussion

Loading comments...

Leave a Comment