DDiT: Dynamic Patch Scheduling for Efficient Diffusion Transformers

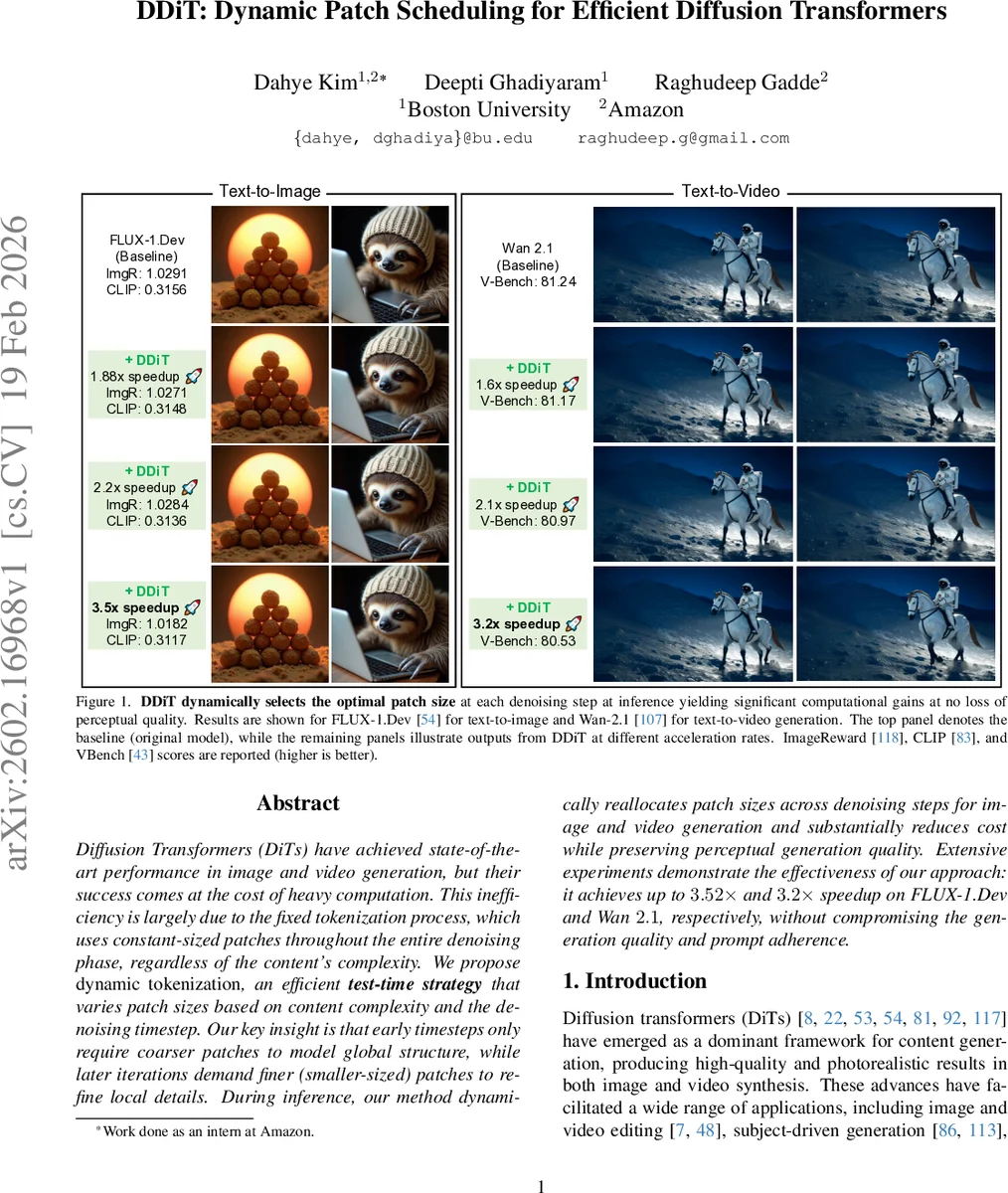

Diffusion Transformers (DiTs) have achieved state-of-the-art performance in image and video generation, but their success comes at the cost of heavy computation. This inefficiency is largely due to the fixed tokenization process, which uses constant-sized patches throughout the entire denoising phase, regardless of the content’s complexity. We propose dynamic tokenization, an efficient test-time strategy that varies patch sizes based on content complexity and the denoising timestep. Our key insight is that early timesteps only require coarser patches to model global structure, while later iterations demand finer (smaller-sized) patches to refine local details. During inference, our method dynamically reallocates patch sizes across denoising steps for image and video generation and substantially reduces cost while preserving perceptual generation quality. Extensive experiments demonstrate the effectiveness of our approach: it achieves up to $3.52\times$ and $3.2\times$ speedup on FLUX-1.Dev and Wan $2.1$, respectively, without compromising the generation quality and prompt adherence.

💡 Research Summary

Diffusion Transformers (DiTs) have become the state‑of‑the‑art backbone for high‑fidelity image and video synthesis, but their quadratic attention cost makes inference prohibitively expensive, especially for high‑resolution generation. The dominant inefficiency stems from the fixed tokenization scheme: the latent space of a pretrained VAE is uniformly divided into patches of a single size (p × p) and this granularity is kept constant across all denoising timesteps. In practice, however, the diffusion process is highly non‑uniform: early timesteps mainly shape the global layout, while later steps refine fine‑grained details. DDiT (Dynamic Patch Scheduling for Efficient Diffusion Transformers) exploits this observation by allowing the patch size to vary dynamically during inference, thereby allocating computational resources where they are most needed.

The method consists of two technical components. First, the authors extend the original patch‑embedding layer to support multiple patch sizes p_new = {k·p | k ∈ {1,2,4,…}}. For each new size a separate linear embedding weight and bias are introduced, and a Low‑Rank Adaptation (LoRA) branch is added to every transformer block. LoRA provides a lightweight pathway that adapts the pretrained DiT to the new token granularity without full retraining. Positional embeddings are reused by bilinearly interpolating them to the larger spatial resolution, and a learned “patch‑size token” is appended to the token sequence so the model can distinguish which granularity is active at a given step. A residual connection around the embedding/de‑embedding stages further stabilizes the transition between different granularities.

Second, a training‑free dynamic scheduler decides which patch size to use at each diffusion step. The scheduler estimates the latent evolution rate by computing a finite‑difference Δz_t = z_t − z_{t‑1} and measuring its within‑patch standard deviation σ_{p_i,t}. If the evolution is slow (low σ), the scheduler selects a coarser patch (larger p) because only global structure needs to be captured; if the evolution is rapid (high σ), it switches to a finer patch to preserve detail. This decision is made on‑the‑fly for every timestep, requiring only a few arithmetic operations.

Experiments are conducted on two large‑scale DiT models: FLUX‑1.Dev for text‑to‑image and Wan‑2.1 for text‑to‑video. Quantitative results show that DDiT achieves up to 3.52× speed‑up on FLUX‑1.Dev (ImageReward = 1.0182, CLIPScore = 0.3117) and up to 3.2× speed‑up on Wan‑2.1 (V‑Bench = 80.53 vs. 81.24 baseline) while preserving perceptual quality and prompt alignment. Various intermediate acceleration factors (1.88×, 2.2×, 3.5×) are also reported, all with negligible degradation in the respective metrics.

The paper’s contributions are threefold: (1) a minimal‑overhead architectural modification that enables multi‑scale patch processing; (2) a lightweight, training‑free scheduler that dynamically allocates computation based on latent dynamics; (3) extensive validation that the approach generalizes across image and video diffusion transformers.

Limitations include the reliance on a hand‑crafted threshold for the evolution rate, which may need tuning for highly complex prompts, and potential boundary artifacts when switching to very coarse patches. Future work could explore overlapping patches, multi‑scale feedback loops, or learned schedulers that further optimize the trade‑off between speed and fidelity.

Overall, DDiT offers a practical pathway to make diffusion‑based generative models substantially faster without sacrificing quality, opening the door to real‑time applications, deployment on resource‑constrained devices, and cost‑effective cloud inference.

Comments & Academic Discussion

Loading comments...

Leave a Comment