How Multimodal Large Language Models Support Access to Visual Information: A Diary Study With Blind and Low Vision People

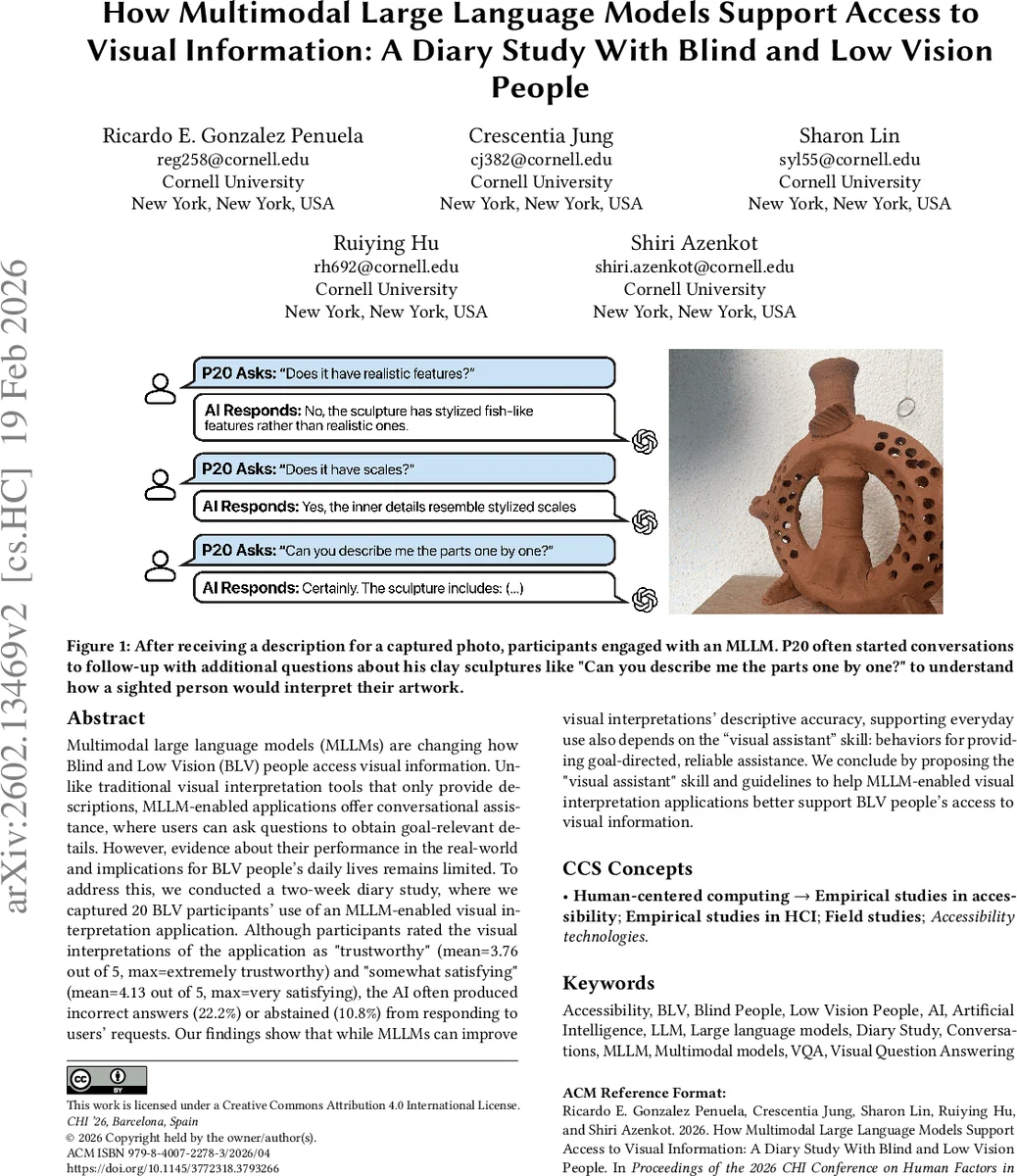

Multimodal large language models (MLLMs) are changing how Blind and Low Vision (BLV) people access visual information. Unlike traditional visual interpretation tools that only provide descriptions, MLLM-enabled applications offer conversational assistance, where users can ask questions to obtain goal-relevant details. However, evidence about their performance in the real-world and implications for BLV people’s daily lives remains limited. To address this, we conducted a two-week diary study, where we captured 20 BLV participants’ use of an MLLM-enabled visual interpretation application. Although participants rated the visual interpretations of the application as “trustworthy” (mean=3.76 out of 5, max=extremely trustworthy) and “somewhat satisfying” (mean=4.13 out of 5, max=very satisfying), the AI often produced incorrect answers (22.2%) or abstained (10.8%) from responding to users’ requests. Our findings show that while MLLMs can improve visual interpretations’ descriptive accuracy, supporting everyday use also depends on the “visual assistant” skill: behaviors for providing goal-directed, reliable assistance. We conclude by proposing the “visual assistant” skill and guidelines to help MLLM-enabled visual interpretation applications better support BLV people’s access to visual information.

💡 Research Summary

This paper investigates how multimodal large language models (MLLMs) can support blind and low‑vision (BLV) people in accessing visual information in everyday contexts. Building on prior work that highlighted the limitations of traditional AI‑powered visual interpretation tools (e.g., Seeing AI) – such as inaccurate captions, missed details, and inconsistent performance – the authors explore whether the conversational capabilities of modern MLLMs can bridge these gaps.

To answer this, a two‑week diary study was conducted with 20 BLV participants who were experienced users of visual‑interpretation applications. Participants used a custom‑built app that mirrors mainstream MLLM‑enabled tools (e.g., Seeing AI, Be My AI) and is powered by GPT‑4o. After each interaction they completed a short survey (trust and satisfaction), and the researchers collected the user‑taken photo, the AI‑generated description, and the full conversation log. In total, 551 diary entries were gathered.

Quantitative results show that participants rated the AI’s descriptions as fairly trustworthy (mean 3.76 / 5) and somewhat satisfying (mean 4.13 / 5). The baseline image captioning performed well, achieving an average accuracy of 2.9 / 3. However, when users asked follow‑up questions, 22.2 % of the responses contained factual errors (hallucinations) and 10.8 % were refusals or inconsistent handling of sensitive information. Errors were especially prevalent for text and graphic interpretation (34.6 % of follow‑up answers), leading to subtle but potentially dangerous misinformation such as incorrect medication dosages or wrong cooking times.

Behaviorally, participants initiated follow‑up dialogues in 68 % of entries, mainly in high‑stakes situations (medication verification, allergen checks, food safety) or when they needed non‑salient details. They avoided conversational use in public settings due to privacy concerns or when a quick overview sufficed. These patterns reveal that while MLLMs improve descriptive accuracy, real‑world utility hinges on “visual assistant” competencies—behaviors that make the system a reliable, goal‑directed partner rather than a mere caption generator.

From these observations the authors define a “visual assistant” skill and propose nine concrete behaviors that MLLMs should exhibit when assisting BLV users (e.g., prioritize factual correctness, explicitly signal uncertainty, protect sensitive data, limit verbosity, apologize and correct errors, allow user‑controlled personalization, etc.). They also identify three intervention points in the development pipeline: (1) training data augmentation with gold‑standard BLV‑assistant interactions, (2) system‑prompt engineering that embeds the visual‑assistant behaviors and aligns with user context and values, and (3) UI design that offers behavior controls and personalization settings.

The paper contributes (1) empirical insight into BLV users’ real‑world goals, contexts, and conversational strategies with an MLLM‑enabled visual interpreter, (2) evidence of both strengths (high description accuracy, user‑reported trust) and critical weaknesses (hallucinations in follow‑up, inconsistent handling of privacy‑sensitive queries), and (3) actionable design recommendations encapsulated in the visual‑assistant framework.

Limitations include the study’s timing (October‑December, a holiday period that may skew usage patterns), the participant pool being skewed toward expert users of existing tools (leaving novice perspectives unexplored), and potential observer effects due to data recording. Future work should broaden participant diversity, examine long‑term adoption, and develop methods for automatically detecting and mitigating hallucinations in conversational visual assistance.

Overall, the study demonstrates that MLLMs hold substantial promise for enhancing BLV individuals’ access to visual information, but realizing this promise requires careful attention to reliability, safety, and user‑controlled behavior—principles embodied in the proposed visual‑assistant skill.

Comments & Academic Discussion

Loading comments...

Leave a Comment