VFace: A Training-Free Approach for Diffusion-Based Video Face Swapping

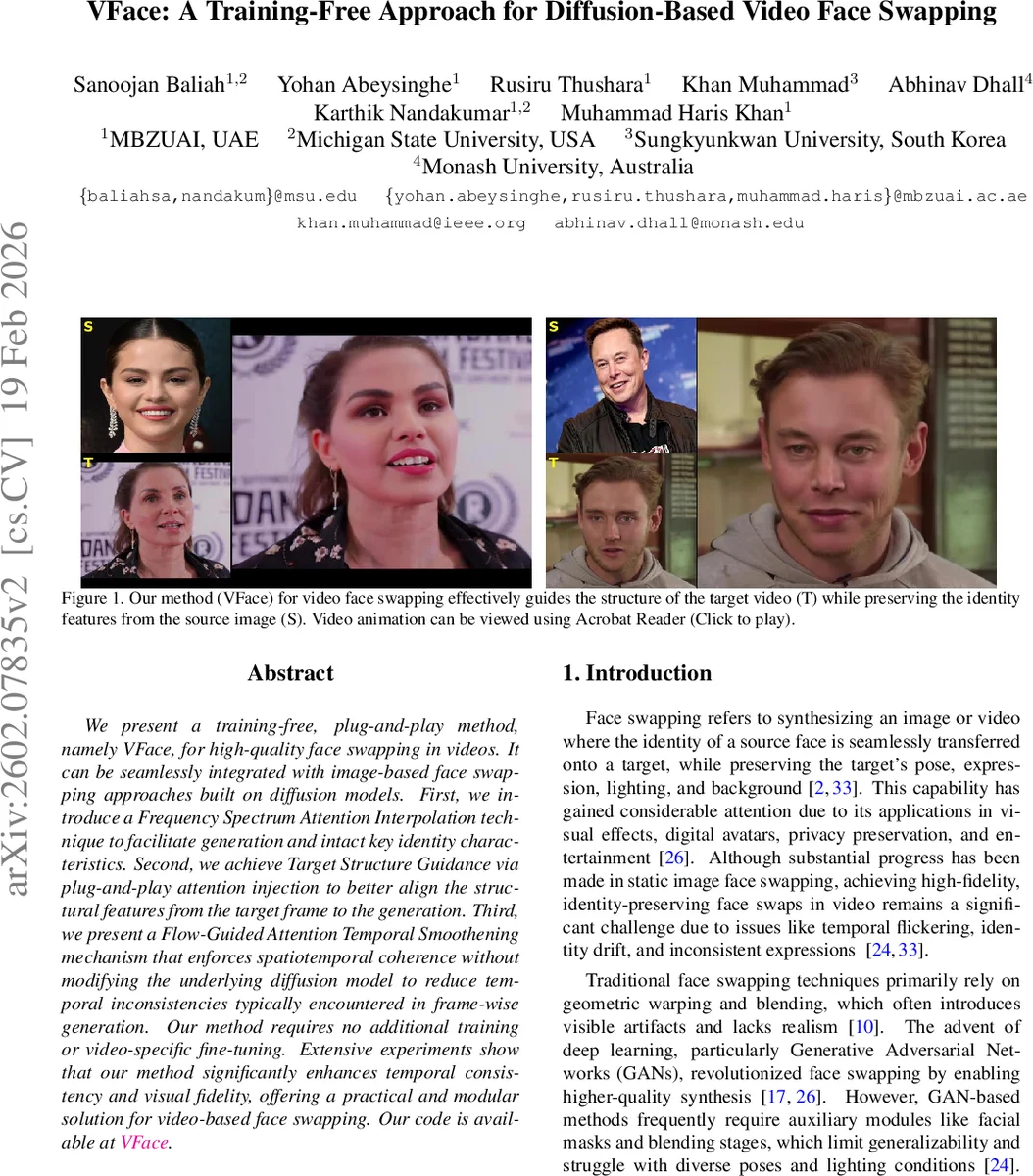

We present VFace, a training-free, plug-and-play method for high-quality face swapping in videos. It can be seamlessly integrated with image-based face swapping approaches built on diffusion models. First, we introduce a Frequency Spectrum Attention Interpolation technique to facilitate generation while keeping key identity characteristics intact. Second, we achieve Target Structure Guidance via plug-and-play attention injection to better align the structural features of the target frame with the generation. Third, we present a Flow-Guided Attention Temporal Smoothening mechanism that enforces spatiotemporal coherence without modifying the underlying diffusion model, reducing the temporal inconsistencies typically encountered in frame-wise generation. Our method requires no additional training or video-specific fine-tuning. Extensive experiments show that our method significantly enhances temporal consistency and visual fidelity, offering a practical and modular solution for video-based face swapping. Our code is available at https://github.com/Sanoojan/VFace.

💡 Research Summary

The paper introduces VFace, a training‑free, plug‑and‑play framework that extends existing diffusion‑based image face‑swapping models to the video domain without any additional model retraining or fine‑tuning. The authors identify three major challenges when applying image‑level diffusion models to video: loss of temporal consistency, degradation of source identity due to dominant target‑frame conditioning, and the need for a mechanism that can preserve fine‑grained structural details while still injecting the source identity. To address these, VFace comprises three novel modules.

- Target Structure Guidance (TSG) – For each target video frame, deterministic DDIM inversion is used to obtain a latent noise representation I_tar. A dual‑branch architecture runs a reconstruction pipeline (which denoises I_tar back to the original latent) and a generation pipeline (which denoises the same I_tar under swapping conditions). At every denoising timestep, the query and key tensors from the reconstruction branch are injected into the generation branch, effectively transferring pose, expression, and background structure from the target frame while allowing the source identity to be synthesized. This plug‑in attention injection requires no changes to the pretrained diffusion model.

- Frequency Spectrum Attention Interpolation (FSAI) – Directly replacing attention maps can suppress source identity cues. To balance identity and structure, the authors transform the attention tensors (queries or keys) into the frequency domain using a one‑dimensional FFT. They then split the spectrum with a ratio ρ (e.g., 0.8), keep low‑frequency components from the source‑guided generation pipeline (which encode coarse identity and overall appearance) and high‑frequency components from the reconstruction pipeline (which encode fine structural details). An inverse FFT yields an interpolated attention tensor that preserves the source’s identity while inheriting the target’s motion‑consistent details. This operation is completely training‑free and can be applied at any denoising step.

- Flow‑Guided Attention Temporal Smoothening (FATS) – To mitigate frame‑wise stochastic flickering, optical flow is computed between consecutive frames. The flow is used to warp attention maps from the previous frame onto the current one, and a temporal averaging (or weighted blending) is performed across the warped maps. By propagating attention information along the motion field, the method enforces spatio‑temporal coherence without altering the underlying diffusion network.
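The attention injection (TSG) and frequency blending (FSAI) described in the list above can be illustrated with a small NumPy sketch. It is not the authors' implementation. The function names, the choice of the token axis for the 1D FFT, and the reading of ρ as the fraction of frequency bins taken from the generation branch are all assumptions made for illustration.

```python
import numpy as np

def fsai_blend(gen, rec, rho=0.8, axis=0):
    """Mix two same-shaped real tensors in the frequency domain.

    Low-frequency bins are taken from `gen` (generation branch) and
    high-frequency bins from `rec` (reconstruction branch).
    Assumption: `rho` is the fraction of rFFT bins treated as "low".
    """
    if gen.shape != rec.shape:
        raise ValueError("gen and rec must have the same shape")
    n = gen.shape[axis]
    G = np.fft.rfft(gen, axis=axis)
    R = np.fft.rfft(rec, axis=axis)
    n_bins = G.shape[axis]
    cutoff = int(rho * n_bins)
    # Boolean mask over frequency bins, broadcastable along `axis`.
    mask_shape = [1] * gen.ndim
    mask_shape[axis] = n_bins
    low = (np.arange(n_bins) < cutoff).reshape(mask_shape)
    mixed = np.where(low, G, R)
    return np.fft.irfft(mixed, n=n, axis=axis)

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(q, k, v):
    """Plain scaled dot-product attention for (tokens, dim) arrays."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores, axis=-1) @ v

def generation_attention(q_gen, k_gen, v_gen, q_rec, k_rec, rho=0.8):
    """Attention in the generation branch with blended queries and keys.

    rho=0 reduces to full injection of the reconstruction Q/K (pure TSG);
    rho=1 leaves the generation branch untouched.
    """
    q = fsai_blend(q_gen, q_rec, rho)
    k = fsai_blend(k_gen, k_rec, rho)
    return attention(q, k, v_gen)
```

In this sketch the values always come from the generation branch, so the source-conditioned content is kept while queries and keys carry the blended structure. Whether the real method blends along tokens, channels, or heads is not stated in the summary.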

The overall pipeline therefore consists of: (i) DDIM inversion of the target video, (ii) dual‑branch processing with attention injection (TSG), (iii) frequency‑aware blending of source and target attention (FSAI), and (iv) flow‑driven temporal smoothing (FATS). Because all components are modular and operate on intermediate attention tensors, VFace can be attached to any pretrained diffusion face‑swap model such as REFace or DiffSwap.
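Step (iv) of this pipeline, the flow-guided smoothing, might look roughly like the following NumPy sketch. The summary does not specify the warping scheme or the blend weights, so several details here are assumptions: a backward flow field given in pixels as `(dx, dy)`, nearest-neighbour sampling, and a single blend weight `alpha`. The function names are hypothetical.

```python
import numpy as np

def warp_previous(prev, flow):
    """Warp a previous-frame map of shape (H, W, C) onto the current frame.

    Assumption: `flow` has shape (H, W, 2) and holds, for every pixel of the
    current frame, the displacement (dx, dy) to its position in the previous
    frame (backward flow). Sampling is nearest-neighbour with border clamping.
    """
    h, w = prev.shape[:2]
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    src_x = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    src_y = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    return prev[src_y, src_x]

def temporal_smooth(curr, prev, flow, alpha=0.5):
    """Blend the current map with the flow-warped previous map.

    alpha=1 keeps the current frame unchanged; smaller values pull it
    toward the motion-compensated previous frame.
    """
    return alpha * curr + (1.0 - alpha) * warp_previous(prev, flow)
```

A production version would more likely use bilinear sampling and an occlusion mask so that pixels with unreliable flow are not blended. Those refinements are omitted here to keep the sketch short.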

Experiments – The authors evaluate VFace on several public video datasets covering a wide range of poses, expressions, lighting conditions, and motion speeds. Quantitative metrics include identity preservation (ArcFace cosine similarity), perceptual similarity (LPIPS), video‑level fidelity (Fréchet Video Distance, FVD), and a temporal warping error derived from optical flow consistency. Compared with naïve frame‑wise application of the baseline image swapper, VFace achieves a 12% increase in identity similarity, a 0.07 reduction in LPIPS, and a 30% reduction in FVD. User studies also report higher perceived realism and smoother motion. Ablation studies confirm that each module (TSG, FSAI, FATS) contributes significantly: removing FSAI leads to noticeable identity drift, while omitting FATS reintroduces flickering.
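Two of the metrics named above are simple enough to sketch. The code below assumes that face embeddings (for example from an ArcFace model) have already been extracted, and it uses one common form of warping error: the mean squared difference between a frame and the flow-warped previous frame. The exact definitions used in the paper are not given in this summary, so both functions are illustrative only.

```python
import numpy as np

def identity_similarity(src_emb, frame_embs):
    """Mean cosine similarity between a source embedding of shape (D,)
    and per-frame embeddings of shape (T, D)."""
    src = src_emb / np.linalg.norm(src_emb)
    frames = frame_embs / np.linalg.norm(frame_embs, axis=1, keepdims=True)
    return float(np.mean(frames @ src))

def warping_error(prev_frame, curr_frame, flow):
    """Mean squared error between the current frame and the previous frame
    warped by a backward flow field of shape (H, W, 2), in pixels (dx, dy).

    Assumption: nearest-neighbour sampling and no occlusion masking.
    """
    h, w = curr_frame.shape[:2]
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    sx = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    sy = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    warped = prev_frame[sy, sx]
    return float(np.mean((curr_frame - warped) ** 2))
```

Higher identity similarity and lower warping error are better. Averaging the warping error over all consecutive frame pairs gives a single score per video.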

Strengths and Limitations – The primary strength is the complete elimination of any video‑specific training, making the method highly practical and adaptable to existing pipelines. The attention‑level operations are lightweight relative to full video‑diffusion models, and the use of frequency domain blending offers an elegant way to separate identity (low‑frequency) from fine structure (high‑frequency). Limitations include reliance on accurate optical flow; extreme fast motion or motion blur can degrade the flow estimation and thus the temporal smoothing. Additionally, FFT‑based interpolation on high‑resolution attention maps may become memory‑intensive, suggesting a need for more efficient implementations for 4K‑level videos.

Conclusion – VFace demonstrates that diffusion‑based face swapping can be extended to video with high temporal consistency and strong identity preservation, all without any additional training. By leveraging plug‑in attention injection, frequency‑domain attention interpolation, and flow‑guided temporal smoothing, the framework offers a modular, training‑free solution that can be readily integrated with any pretrained image‑based diffusion face‑swap model. Future work may explore more efficient frequency‑domain processing, alternative motion representations (e.g., transformer‑based temporal attention), and broader applications such as multi‑person video editing or real‑time deployment.
