WISE: Web Information Satire and Fakeness Evaluation

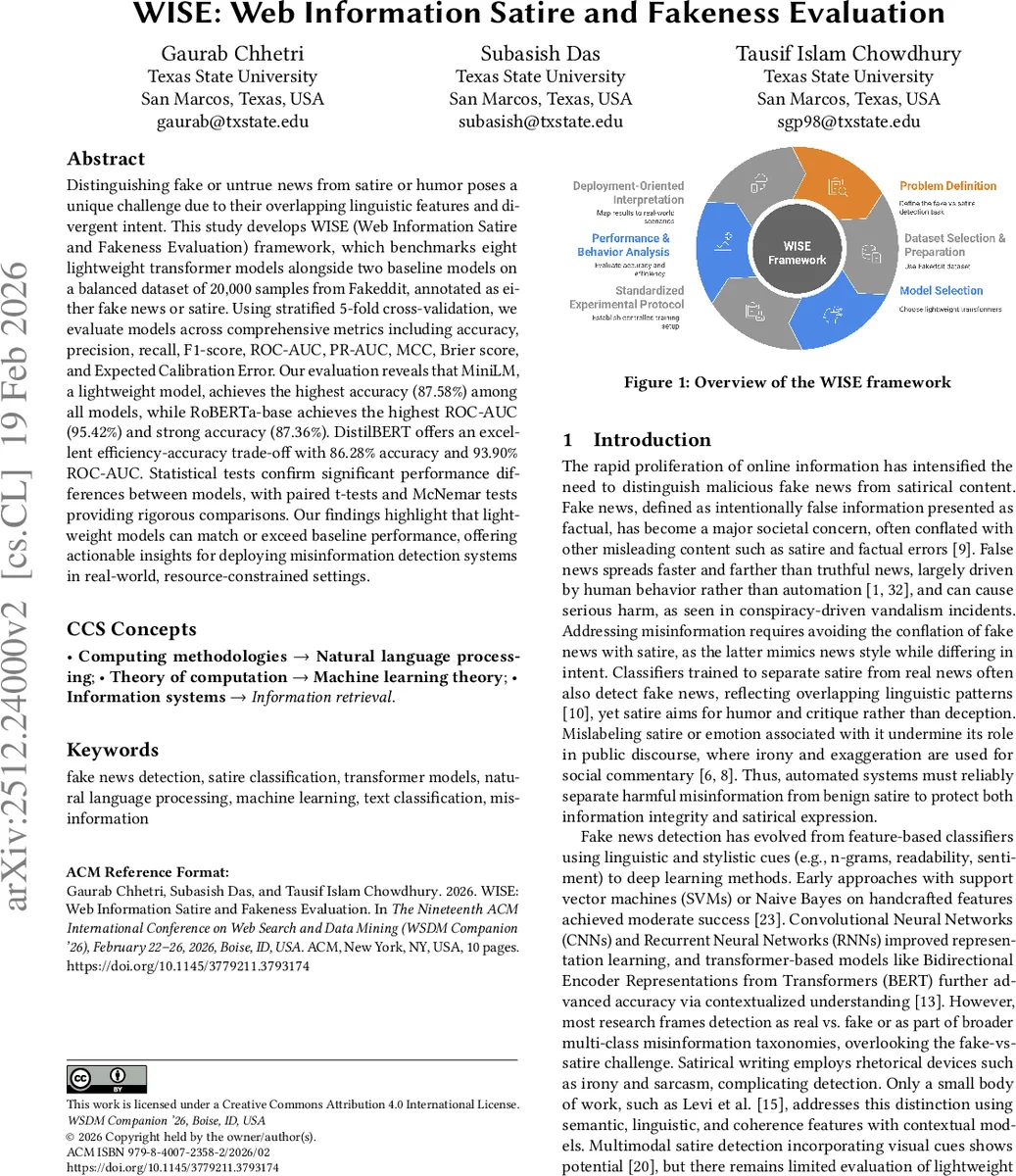

Distinguishing fake or untrue news from satire or humor poses a unique challenge due to their overlapping linguistic features and divergent intent. This study develops WISE (Web Information Satire and Fakeness Evaluation) framework which benchmarks eight lightweight transformer models alongside two baseline models on a balanced dataset of 20,000 samples from Fakeddit, annotated as either fake news or satire. Using stratified 5-fold cross-validation, we evaluate models across comprehensive metrics including accuracy, precision, recall, F1-score, ROC-AUC, PR-AUC, MCC, Brier score, and Expected Calibration Error. Our evaluation reveals that MiniLM, a lightweight model, achieves the highest accuracy (87.58%) among all models, while RoBERTa-base achieves the highest ROC-AUC (95.42%) and strong accuracy (87.36%). DistilBERT offers an excellent efficiency-accuracy trade-off with 86.28% accuracy and 93.90% ROC-AUC. Statistical tests confirm significant performance differences between models, with paired t-tests and McNemar tests providing rigorous comparisons. Our findings highlight that lightweight models can match or exceed baseline performance, offering actionable insights for deploying misinformation detection systems in real-world, resource-constrained settings.

💡 Research Summary

The paper introduces WISE (Web Information Satire and Fakeness Evaluation), a systematic benchmark for distinguishing fake news from satire using transformer‑based text classifiers. Recognizing that fake news and satire share many linguistic cues yet differ fundamentally in intent, the authors construct a balanced binary dataset of 20,000 Reddit post titles drawn from the Fakeddit corpus. The dataset comprises 10,000 “Satire/Parody” samples and 10,000 “Fake News” samples (the latter formed by merging “Misleading Content” and “Manipulated Content” categories). By selecting titles longer than 50 characters, the authors ensure sufficient textual context for model training while preserving a realistic distribution of content length (average ≈ 83 characters).

The experimental setup evaluates eight lightweight transformer models—TinyBERT (2‑layer), TinyBERT‑4L, ALBERT‑base‑v2, MiniBERT, MiniLM, Small‑BERT‑L2, DistilBERT, and ELECTRA‑small—against two strong baselines, BERT‑base and RoBERTa‑base. All models are taken from publicly available Hugging Face checkpoints, fine‑tuned with identical hyper‑parameters (learning rate 2e‑5, batch size 32, three epochs) and tokenized to a maximum length of 512 tokens. Evaluation follows a stratified 5‑fold cross‑validation scheme, guaranteeing that each fold maintains the 1:1 class ratio and that performance estimates are robust to data splits.

Beyond simple accuracy, the authors report a comprehensive suite of ten metrics: accuracy, precision, recall, macro‑averaged F1, ROC‑AUC, PR‑AUC, Matthews Correlation Coefficient (MCC), Brier score, Expected Calibration Error (ECE), and inference speed. This multi‑metric approach captures not only discriminative power but also calibration quality and robustness to class imbalance.

Key results show that MiniLM achieves the highest accuracy at 87.58 %, closely followed by RoBERTa‑base (87.36 %). RoBERTa‑base, however, leads on ROC‑AUC with 95.42 %, indicating superior ranking ability across thresholds. DistilBERT offers an attractive trade‑off, delivering 86.28 % accuracy and 93.90 % ROC‑AUC while being roughly 60 % faster and 40 % smaller than BERT‑base. Among the smallest models, Small‑BERT‑L2 records 81.3 % accuracy but requires only ~4 M parameters, demonstrating the feasibility of extreme compression.

Statistical significance is rigorously examined. Paired t‑tests on per‑fold accuracies reveal that most lightweight models differ significantly from the baselines (p < 0.01). McNemar tests on the confusion matrices highlight that MiniLM and RoBERTa‑base share similar error patterns, suggesting they could be interchangeable in production without substantial loss in error type distribution. DeLong tests confirm that RoBERTa‑base’s ROC‑AUC advantage over all lightweight models is statistically reliable.

The discussion emphasizes that lightweight transformers incur only modest accuracy penalties (1–2 %) while delivering dramatic reductions in memory footprint and latency, making them suitable for deployment on edge devices, real‑time monitoring pipelines, or low‑resource cloud services. The authors acknowledge limitations: the study uses only textual titles, omits multimodal signals (images, videos) present in many real‑world misinformation posts, and relies on Reddit‑specific language, which may not generalize to traditional news outlets. Future work is proposed to integrate multimodal features, explore domain adaptation techniques, and implement continual learning to keep models up‑to‑date with evolving satire styles and fake‑news tactics.

In conclusion, WISE fills a notable gap in misinformation research by providing the first large‑scale, rigorously evaluated benchmark for the fake‑vs‑satire distinction. The findings demonstrate that compact transformer models can achieve performance comparable to heavyweight baselines, offering practical pathways for building accurate, resource‑efficient misinformation detection systems in real‑world settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment