GGBall: Graph Generative Model on Poincaré Ball

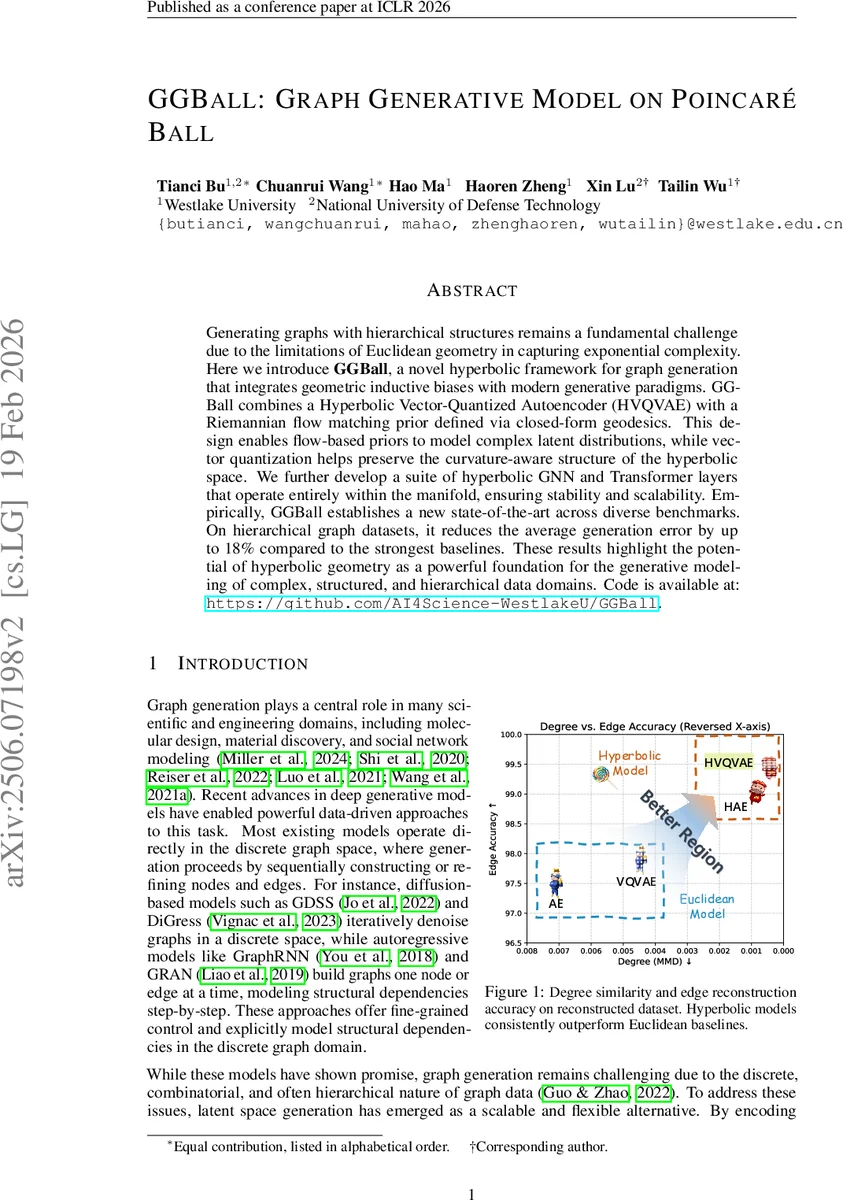

Generating graphs with hierarchical structures remains a fundamental challenge due to the limitations of Euclidean geometry in capturing exponential complexity. Here we introduce GGBall, a novel hyperbolic framework for graph generation that integrates geometric inductive biases with modern generative paradigms. GGBall combines a Hyperbolic Vector-Quantized Autoencoder (HVQVAE) with a Riemannian flow matching prior defined via closed-form geodesics. This design enables flow-based priors to model complex latent distributions, while vector quantization helps preserve the curvature-aware structure of the hyperbolic space. We further develop a suite of hyperbolic GNN and Transformer layers that operate entirely within the manifold, ensuring stability and scalability. Empirically, our model reduces degree MMD by over 75% on Community-Small and over 40% on Ego-Small compared to state-of-the-art baselines, demonstrating an improved ability to preserve topological hierarchies. These results highlight the potential of hyperbolic geometry as a powerful foundation for the generative modeling of complex, structured, and hierarchical data domains. Our code is available at https://github.com/AI4Science-WestlakeU/GGBall.

💡 Research Summary

GGBall introduces a fully hyperbolic framework for graph generation built on the Poincaré ball model, addressing the long‑standing difficulty of faithfully modeling hierarchical structures in Euclidean latent spaces. The authors combine three key components: (1) a Hyperbolic Vector‑Quantized Autoencoder (HVQVAE) that encodes each graph into a set of discrete tokens residing on the Poincaré ball; (2) a flow‑matching prior defined by closed‑form geodesics, allowing expressive latent distributions without relying on predefined Gaussian noise; and (3) a suite of hyperbolic neural modules—including a message‑passing GNN and a Transformer—where all operations (aggregation, attention, normalization) are performed via logarithmic and exponential maps to the tangent space and Möbius arithmetic on the manifold.
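
To make the manifold operations named above concrete, the following is a minimal sketch of Möbius addition, the exponential and logarithmic maps at the origin, and the geodesic distance on the unit Poincaré ball. It assumes curvature -1 and plain Python lists; the function names and the `tangent_mean` aggregation are illustrative assumptions, not taken from the GGBall code.

```python
import math

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def norm(x):
    return math.sqrt(dot(x, x))

def mobius_add(x, y):
    """Möbius addition x (+) y on the unit Poincaré ball (curvature -1)."""
    xy, x2, y2 = dot(x, y), dot(x, x), dot(y, y)
    denom = 1 + 2 * xy + x2 * y2
    a = (1 + 2 * xy + y2) / denom
    b = (1 - x2) / denom
    return [a * xi + b * yi for xi, yi in zip(x, y)]

def expmap0(v):
    """Exponential map at the origin: tangent vector -> point in the ball."""
    n = norm(v)
    if n < 1e-12:
        return list(v)
    return [math.tanh(n) / n * vi for vi in v]

def logmap0(x):
    """Logarithmic map at the origin: point in the ball -> tangent vector."""
    n = norm(x)
    if n < 1e-12:
        return list(x)
    return [math.atanh(n) / n * xi for xi in x]

def dist(x, y):
    """Geodesic distance d(x, y) = 2 artanh(||(-x) (+) y||)."""
    diff = mobius_add([-xi for xi in x], y)
    # Clamp to stay inside the domain of artanh under rounding error.
    return 2 * math.atanh(min(norm(diff), 1 - 1e-15))

def tangent_mean(points):
    """Hypothetical aggregation: average in the tangent space at the origin,
    then map the result back onto the ball."""
    logs = [logmap0(p) for p in points]
    mean = [sum(col) / len(points) for col in zip(*logs)]
    return expmap0(mean)
```

For example, `tangent_mean([[0.5, 0.0], [0.0, 0.5]])` returns a point that stays strictly inside the unit ball, which a naive Euclidean update would not guarantee in general.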

The HVQVAE uses geodesic clustering to initialize a codebook and Riemannian optimization to preserve curvature during quantization, ensuring that node embeddings retain hierarchical relationships. The flow‑matching prior leverages the manifold’s geometry to define a transport map that aligns the learned latent distribution with a simple base distribution, effectively learning complex hierarchical priors.
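
The two ideas in this paragraph can be sketched as follows: quantization picks the codebook entry nearest in geodesic distance, and the flow-matching path between two latents follows the closed-form geodesic x0 (+) (t (x) ((-x0) (+) x1)). This is a sketch under stated assumptions (unit Poincaré ball, curvature -1, hypothetical function names), not the authors' training code, and it omits codebook initialization and Riemannian optimization.

```python
import math

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def norm(x):
    return math.sqrt(dot(x, x))

def mobius_add(x, y):
    """Möbius addition on the unit Poincaré ball."""
    xy, x2, y2 = dot(x, y), dot(x, x), dot(y, y)
    denom = 1 + 2 * xy + x2 * y2
    a = (1 + 2 * xy + y2) / denom
    b = (1 - x2) / denom
    return [a * xi + b * yi for xi, yi in zip(x, y)]

def mobius_scale(t, x):
    """Möbius scalar multiplication t (x) x."""
    n = norm(x)
    if n < 1e-12:
        return list(x)
    s = math.tanh(t * math.atanh(min(n, 1 - 1e-15))) / n
    return [s * xi for xi in x]

def dist(x, y):
    """Geodesic distance d(x, y) = 2 artanh(||(-x) (+) y||)."""
    diff = mobius_add([-a for a in x], y)
    return 2 * math.atanh(min(norm(diff), 1 - 1e-15))

def quantize(z, codebook):
    """Index of the codebook entry closest to z in geodesic distance."""
    return min(range(len(codebook)), key=lambda k: dist(z, codebook[k]))

def geodesic(x0, x1, t):
    """Point at time t in [0, 1] on the geodesic from x0 to x1."""
    direction = mobius_add([-a for a in x0], x1)
    return mobius_add(x0, mobius_scale(t, direction))
```

In a flow-matching setup, `geodesic(x0, x1, t)` would supply the intermediate point at which a learned vector field is regressed toward the geodesic velocity; the distance from `x0` to that point grows linearly in `t`.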

Crucially, GGBall adopts a node‑only latent representation: edge existence probabilities are modeled directly as functions of hyperbolic distances and angular relationships between node embeddings. This eliminates the need for a separate edge latent space, reduces decoding complexity, and lets the geometry itself encode connectivity patterns.
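
As an illustration of decoding edges from node embeddings alone, the sketch below maps pairwise hyperbolic distances to edge probabilities with a Fermi-Dirac function, a common choice in hyperbolic graph models. The decoder form, the `radius` and `temperature` parameters, and the function names are assumptions made for this example; the summary does not specify GGBall's actual decoder, which is also said to use angular relationships.

```python
import math

def poincare_dist(x, y):
    """d(x, y) = arcosh(1 + 2||x - y||^2 / ((1 - ||x||^2)(1 - ||y||^2)))."""
    sq = sum((a - b) ** 2 for a, b in zip(x, y))
    x2 = sum(a * a for a in x)
    y2 = sum(b * b for b in y)
    return math.acosh(1 + 2 * sq / ((1 - x2) * (1 - y2)))

def edge_probs(nodes, radius=2.0, temperature=1.0):
    """Symmetric matrix of edge probabilities from node embeddings.

    Uses a Fermi-Dirac decoder (an assumption, not the paper's stated form):
    p_ij = 1 / (1 + exp((d_ij - radius) / temperature)).
    The double loop visits every pair once, hence O(N^2) distance evaluations.
    """
    n = len(nodes)
    probs = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d = poincare_dist(nodes[i], nodes[j])
            p = 1.0 / (1.0 + math.exp((d - radius) / temperature))
            probs[i][j] = probs[j][i] = p
    return probs
```

Nodes that are close in hyperbolic distance receive a high edge probability, while a node near the boundary of the ball is far from almost everything else and connects only to its nearest neighbors in the hierarchy.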

Experiments cover hierarchical graph benchmarks (Community‑Small, Ego‑Small) and real‑world molecular graphs (QM9), with comparisons against standard graph generation baselines (GDSS, DiGress, GraphRNN, VQ‑GAE, etc.). GGBall reduces degree MMD by over 75% on Community‑Small and by over 40% on Ego‑Small, and achieves an 18% overall improvement in generation error across all datasets. On QM9, it reaches a novelty of 93.77%, the highest reported, while maintaining >98% chemical validity and the best combined V.U.N. (valid, unique, novel) score.

Ablation studies confirm that each component contributes meaningfully: removing vector quantization degrades reconstruction stability; replacing hyperbolic layers with Euclidean counterparts significantly harms hierarchical fidelity; and omitting the flow‑matching prior reduces the expressiveness of the latent prior.

The paper also discusses limitations: the current implementation relies on O(N²) pairwise distance calculations, which may hinder scalability to very large graphs, and it focuses exclusively on the Poincaré model without comparison to alternative hyperbolic representations (e.g., Lorentz). Future work is suggested on efficient approximations, domain‑specific decoders for chemistry, and broader exploration of non‑Euclidean manifolds.

In summary, GGBall demonstrates that a principled, end‑to‑end hyperbolic approach can substantially improve graph generation for hierarchical and compositional data, opening new avenues for non‑Euclidean generative modeling in network science, biology, and material discovery.
