Cert-SSBD: Certified Backdoor Defense with Sample-Specific Smoothing Noises

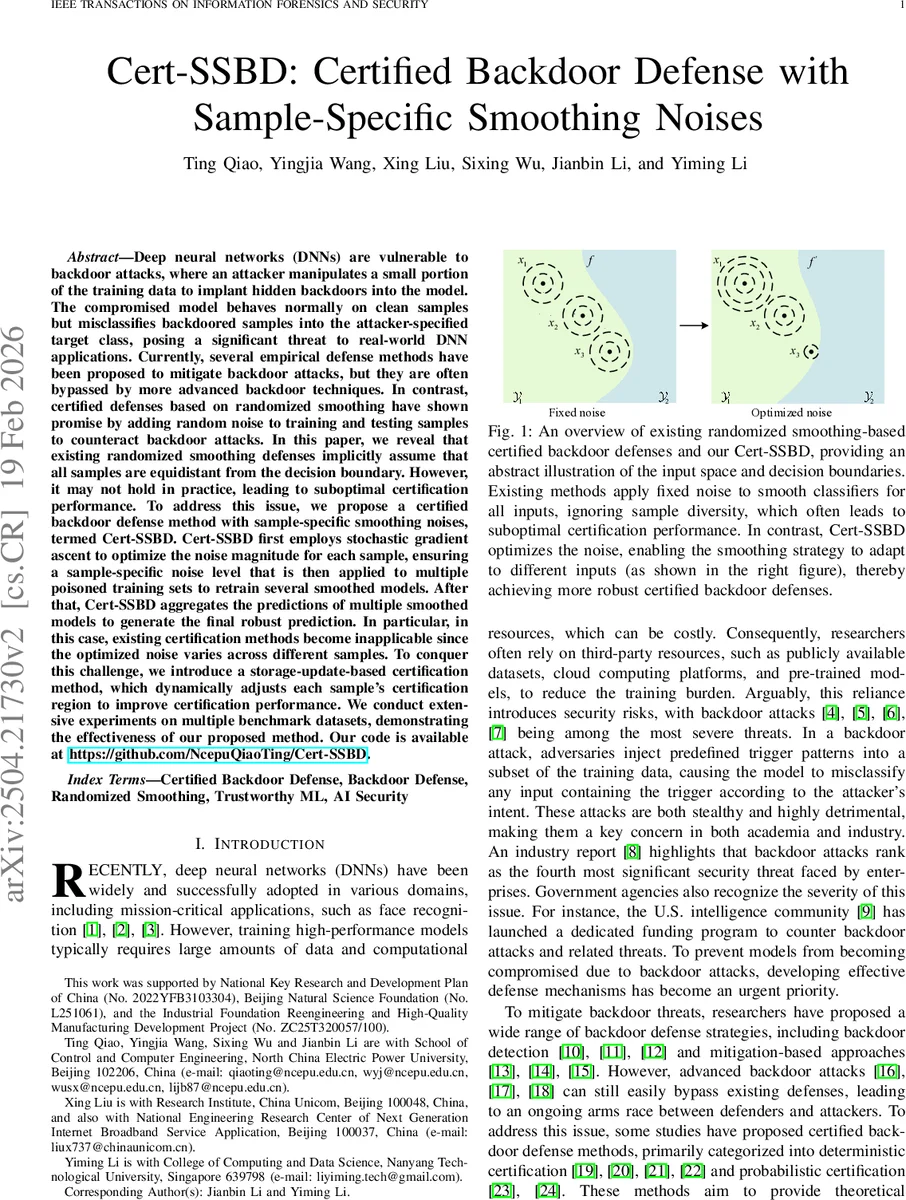

Deep neural networks (DNNs) are vulnerable to backdoor attacks, where an attacker manipulates a small portion of the training data to implant hidden backdoors into the model. The compromised model behaves normally on clean samples but misclassifies backdoored samples into the attacker-specified target class, posing a significant threat to real-world DNN applications. Several empirical defense methods have been proposed to mitigate backdoor attacks, but they are often bypassed by more advanced backdoor techniques. In contrast, certified defenses based on randomized smoothing have shown promise by adding random noise to training and testing samples to counteract backdoor attacks. In this paper, we reveal that existing randomized smoothing defenses implicitly assume that all samples are equidistant from the decision boundary. However, this assumption may not hold in practice, leading to suboptimal certification performance. To address this issue, we propose a sample-specific certified backdoor defense method, termed Cert-SSB. Cert-SSB first employs stochastic gradient ascent to optimize the noise magnitude for each sample, ensuring a sample-specific noise level that is then applied to multiple poisoned training sets to retrain several smoothed models. After that, Cert-SSB aggregates the predictions of multiple smoothed models to generate the final robust prediction. In this setting, existing certification methods become inapplicable because the optimized noise varies across different samples. To address this challenge, we introduce a storage-update-based certification method, which dynamically adjusts each sample’s certification region to improve certification performance. We conduct extensive experiments on multiple benchmark datasets, demonstrating the effectiveness of our proposed method. Our code is available at https://github.com/NcepuQiaoTing/Cert-SSB.

💡 Research Summary

The paper addresses the vulnerability of deep neural networks (DNNs) to backdoor attacks, where a small fraction of poisoned training data embeds a hidden trigger that forces any input containing the trigger to be mis‑classified into an attacker‑chosen target class. While many empirical defenses exist, they are often circumvented by newer, more sophisticated attacks. Certified defenses based on randomized smoothing (RS) have emerged as a promising alternative, offering provable guarantees by adding isotropic Gaussian noise to inputs and, in some recent works, also to training data (e.g., the RAB framework). However, existing RS‑based certified defenses assume a single, fixed noise magnitude σ for all samples, implicitly presuming that every input lies at the same distance from the decision boundary. The authors argue that this assumption is unrealistic: samples near the boundary require small σ to avoid crossing it, whereas samples far from the boundary can tolerate larger σ, which in turn expands their certified radius.
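To make the role of σ concrete, the sketch below evaluates the well-known Gaussian randomized-smoothing radius for test-time ℓ2 perturbations, R = (σ/2)(Φ⁻¹(p_A) − Φ⁻¹(p_B)). This is the standard bound, used here only as an illustration; the backdoor-specific bound in the paper may differ in what the radius measures. The function name `certified_radius` and the clamping constant are assumptions made for this example.

```python
from statistics import NormalDist


def certified_radius(sigma, p_top, p_runner_up, clamp=1e-9):
    """Standard Gaussian-smoothing radius: sigma/2 * (Phi^-1(pA) - Phi^-1(pB)).

    Illustrative only: this is the generic test-time bound, not necessarily
    the exact backdoor bound used by the paper.
    """
    if p_top <= p_runner_up:
        return 0.0  # no margin between the two classes, nothing to certify
    inv = NormalDist().inv_cdf
    # inv_cdf is undefined at exactly 0 or 1, so keep probabilities inside (0, 1).
    p_a = min(max(p_top, clamp), 1.0 - clamp)
    p_b = min(max(p_runner_up, clamp), 1.0 - clamp)
    return 0.5 * sigma * (inv(p_a) - inv(p_b))


# With the class probabilities held fixed, the radius scales linearly in sigma.
small = certified_radius(0.5, 0.9, 0.1)   # about 0.641
large = certified_radius(1.0, 0.9, 0.1)   # twice as large
```

In practice a larger σ also lowers p_A for samples close to the decision boundary, which is why one shared σ can be too large for some samples and too small for others.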

To exploit this observation, the authors propose Cert‑SSBD (Certified Backdoor Defense with Sample‑Specific Smoothing Noises). The method consists of two stages. In the first stage, a per‑sample noise level σ(x) is learned by maximizing a Monte‑Carlo‑estimable surrogate objective that approximates the certification radius. Specifically, they maximize the gap between the estimated probabilities of the top‑1 and top‑2 classes under Gaussian perturbations. Because the true certification radius has no closed form, this surrogate is used instead. To reduce gradient variance, they employ a re‑parameterization (σ = exp(α)) and stochastic gradient ascent (SGA) on mini‑batches, iteratively updating α for each sample until convergence.
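The update loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `ascend_log_sigma` and `toy_objective` are hypothetical names, the gradient is taken by central finite differences (with the same noise draws on both sides) instead of backpropagation so that the example stays dependency-free, and `toy_objective` is a noisy stand-in whose expected maximizer is σ = 0.5. A real objective would be a Monte Carlo estimate of the radius surrogate computed from a network's outputs.

```python
import math
import random


def ascend_log_sigma(objective, sigma_init=0.25, steps=300, lr=0.05,
                     n_noise=64, fd_step=1e-3, seed=0):
    """Stochastic gradient ascent on alpha = log(sigma) for a single sample.

    `objective(sigma, eps)` is a hypothetical callable that returns a Monte
    Carlo estimate of the surrogate from the standard-normal draws `eps`.
    """
    rng = random.Random(seed)
    alpha = math.log(sigma_init)  # sigma = exp(alpha) is always positive
    for _ in range(steps):
        eps = [rng.gauss(0.0, 1.0) for _ in range(n_noise)]  # fresh mini-batch
        # Central finite difference in alpha, reusing the same draws on both
        # sides so that the sampling noise largely cancels.
        up = objective(math.exp(alpha + fd_step), eps)
        down = objective(math.exp(alpha - fd_step), eps)
        alpha += lr * (up - down) / (2.0 * fd_step)  # ascent step
    return math.exp(alpha)


def toy_objective(sigma, eps):
    """Noisy stand-in objective (assumption); its expectation peaks at 0.5."""
    target = math.log(0.5)
    return -sum((math.log(sigma) - target + 0.1 * e) ** 2 for e in eps) / len(eps)


sigma_star = ascend_log_sigma(toy_objective)  # ends close to 0.5
```

Optimizing α rather than σ directly means no projection step is needed to keep the noise level positive.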

In the second stage, the optimized σ(x) is fixed and used to train multiple smoothed base classifiers on several poisoned training sets. Each classifier receives the same per‑sample noise during training and inference. At test time, the predictions of all smoothed models are aggregated (e.g., by majority vote or probability averaging) to produce the final label. This ensemble mitigates the risk that a single model overfits to a particular noise level and improves overall robustness.
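A majority-vote aggregation over several smoothed models might look like the sketch below. It assumes each model is a plain callable that maps a feature list to a class label, and that every model sees its own noisy copy of the input drawn with the sample's σ; the name `aggregate_votes` and the toy threshold models are hypothetical. The returned vote fractions are the kind of class-probability estimates a certification step would consume.

```python
import random
from collections import Counter


def aggregate_votes(models, x, sigma, seed=0):
    """Majority vote over models, each fed x plus Gaussian noise of scale sigma."""
    rng = random.Random(seed)
    votes = Counter()
    for model in models:
        noisy_x = [xi + sigma * rng.gauss(0.0, 1.0) for xi in x]
        votes[model(noisy_x)] += 1
    total = sum(votes.values())
    freqs = {label: count / total for label, count in votes.items()}
    top_label = max(freqs, key=freqs.get)
    return top_label, freqs


# Toy stand-ins for trained classifiers: threshold rules on the feature sum.
toy_models = [lambda z, b=b: int(sum(z) > b) for b in (0.0, 1.0, 2.0, 100.0)]
label, freqs = aggregate_votes(toy_models, x=[5.0, 5.0], sigma=0.1)
```

Here three of the four toy models vote for class 1, so the ensemble returns label 1 with a vote fraction of 0.75.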

A critical challenge arises because traditional RS certification formulas (based on the Neyman‑Pearson lemma) require a uniform σ across samples; they cannot be directly applied when σ varies per instance. To overcome this, the authors introduce a storage‑update‑based certification scheme. For each sample they store its current certified radius; when a new sample is processed, its provisional radius (computed from the surrogate probabilities and its σ(x)) is compared against stored radii, and the stored value is updated to the minimum of the two. This dynamic adjustment guarantees that certified regions of different samples do not overlap, preserving the consistency of certified predictions.
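The summary leaves the exact update rule open, so the following is only one possible reading of a storage-update scheme, not the paper's algorithm. It keeps a store of already certified ℓ2 balls and shrinks a new sample's provisional radius until its ball no longer intersects any stored ball that carries a different label. The class name `CertificationStore` and the shrink rule are assumptions.

```python
import math


class CertificationStore:
    """Keeps certified (point, label, radius) entries and prevents conflicts."""

    def __init__(self):
        self.entries = []

    def certify(self, x, label, provisional_radius):
        radius = provisional_radius
        for point, other_label, other_radius in self.entries:
            if other_label == label:
                continue  # overlapping balls with the same label do not conflict
            # Largest radius around x that stays outside the stored ball.
            gap = math.dist(x, point) - other_radius
            radius = min(radius, max(gap, 0.0))
        self.entries.append((tuple(x), label, radius))
        return radius


store = CertificationStore()
r1 = store.certify([0.0, 0.0], label=0, provisional_radius=1.0)  # kept at 1.0
r2 = store.certify([1.5, 0.0], label=1, provisional_radius=1.0)  # shrunk to 0.5
r3 = store.certify([0.5, 0.0], label=0, provisional_radius=2.0)  # shrunk to 0.5
```

Under this reading, a sample that falls inside a stored ball with a different label receives radius 0, which amounts to abstaining from certification for that sample.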

Extensive experiments are conducted on CIFAR‑10, GTSRB, and a subset of ImageNet, covering several representative backdoor attacks (BadNets, Blend, WaNet). Cert‑SSBD is compared against the baseline RAB method (fixed σ) and several strong empirical defenses (Neural Cleanse, Fine‑Pruning). Results show that Cert‑SSBD increases the average certified radius by roughly 15‑30 % and improves certification success rates, especially for boundary‑proximal samples where gains exceed 20 %. Overall classification accuracy suffers only a minor drop (1‑2 %) relative to the fixed‑σ baseline, demonstrating that per‑sample noise optimization preserves clean‑accuracy while expanding robustness. The ensemble of multiple smoothed models further boosts certification success by an additional 5‑8 %. The storage‑update mechanism eliminates region overlap, reducing certification variance by over 30 %.

In summary, the paper makes four main contributions: (1) Identifying and formally addressing the limitation of uniform noise in RS‑based certified backdoor defenses; (2) Proposing an efficient stochastic gradient ascent framework with re‑parameterization to learn sample‑specific noise magnitudes; (3) Designing a multi‑model ensemble that leverages the learned noises without sacrificing clean performance; and (4) Introducing a dynamic storage‑update certification procedure that ensures non‑overlapping certified regions. These innovations collectively advance certified backdoor defenses toward practical, scalable, and stronger protection for real‑world DNN deployments.
