📝 Original Info

- Title: Causal and Compositional Abstraction

- ArXiv ID: 2602.16612

- Date: 2026-02-18

- Authors: Robin Lorenz, Sean Tull

📝 Abstract

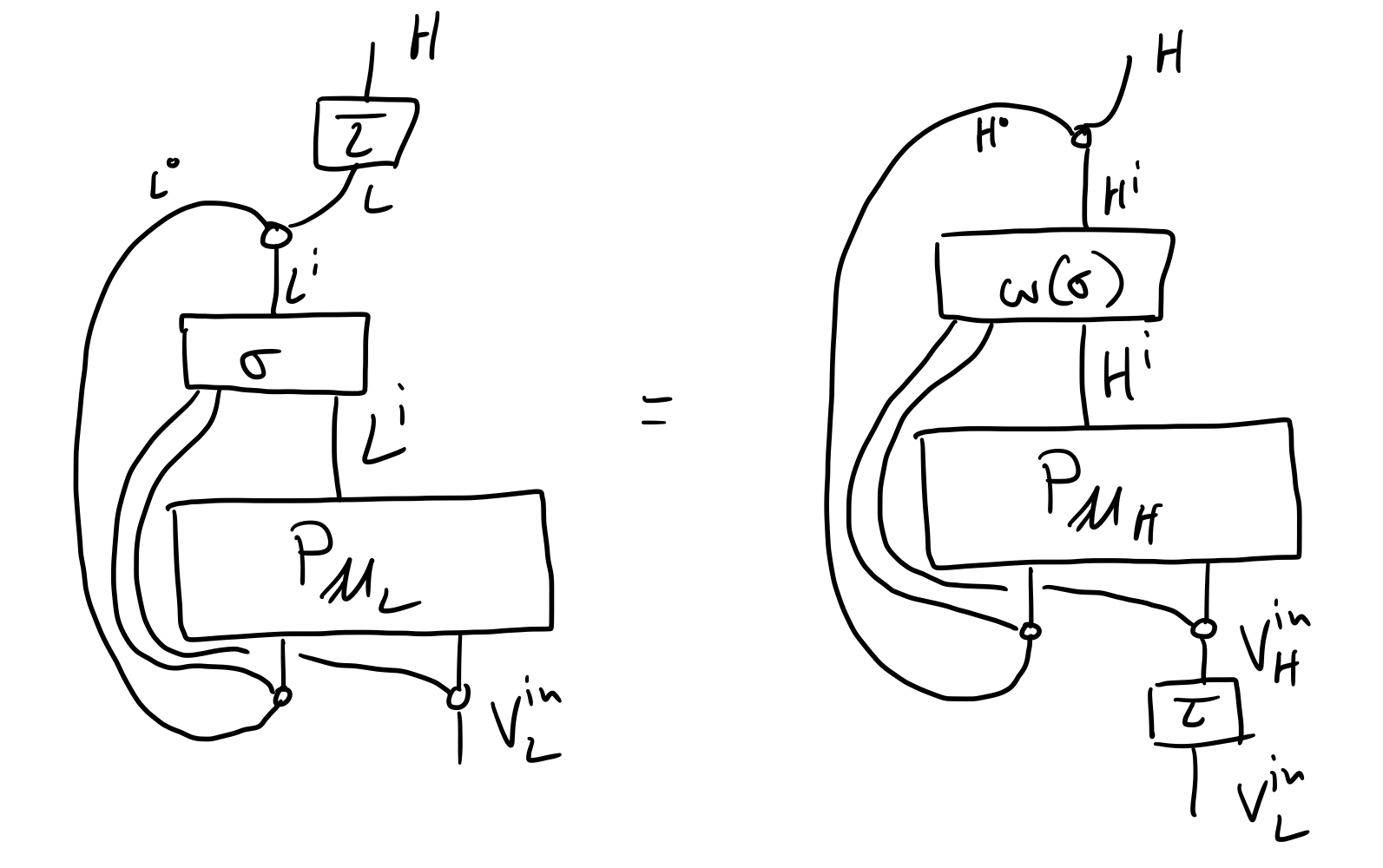

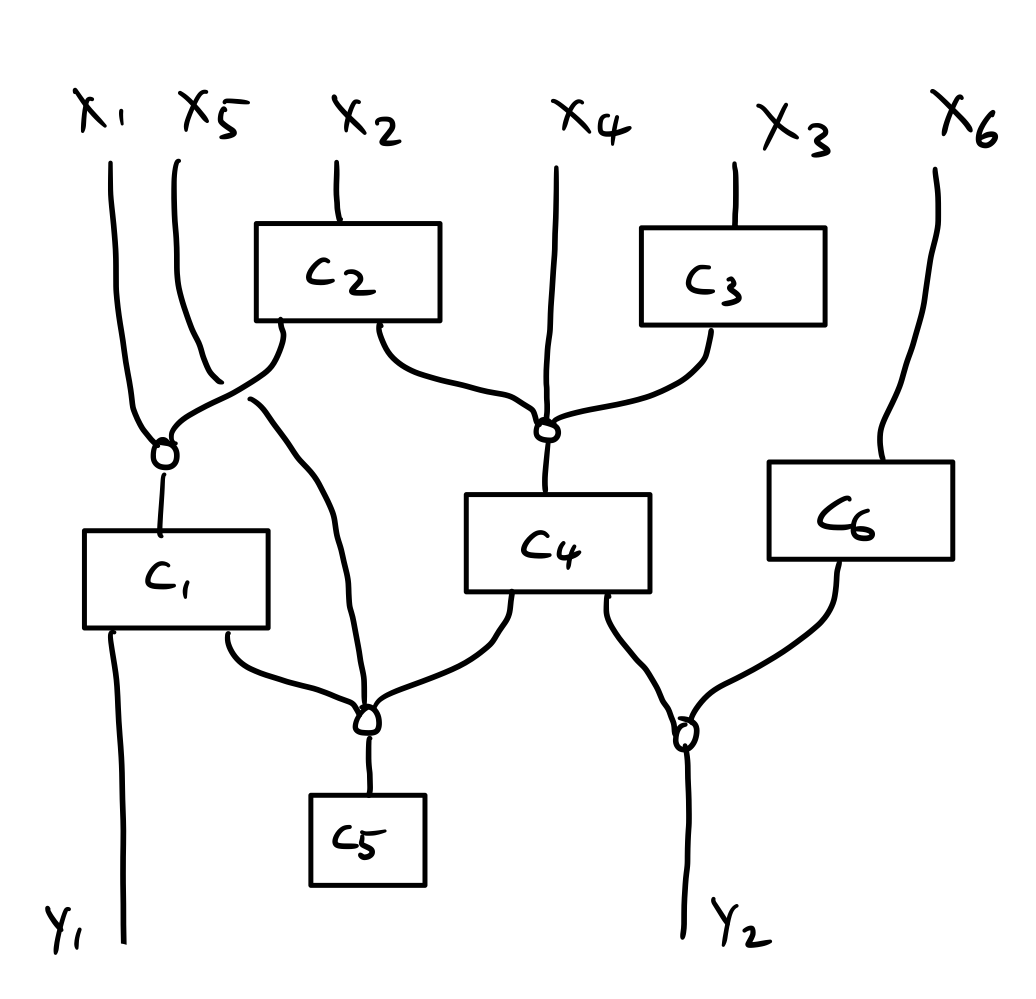

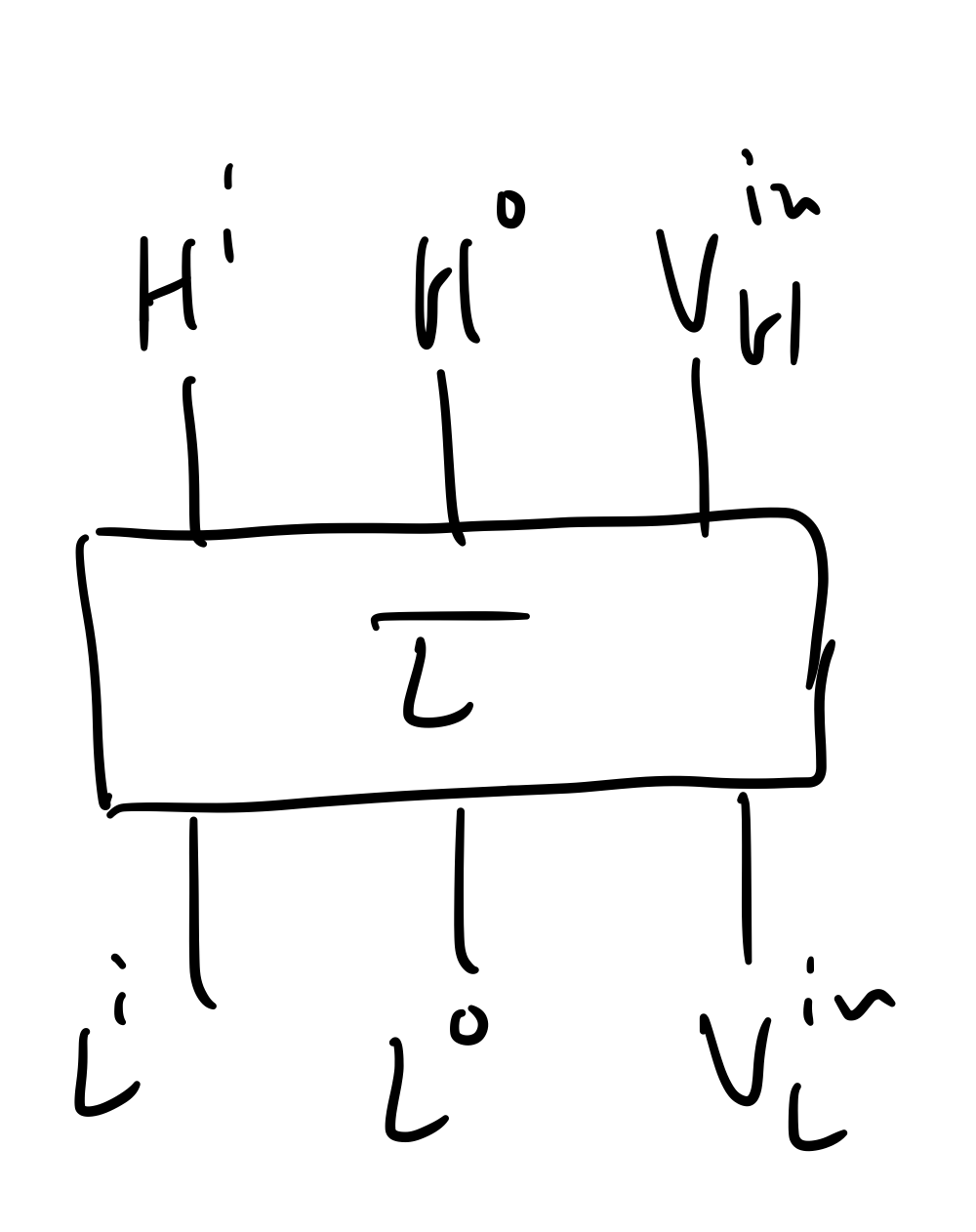

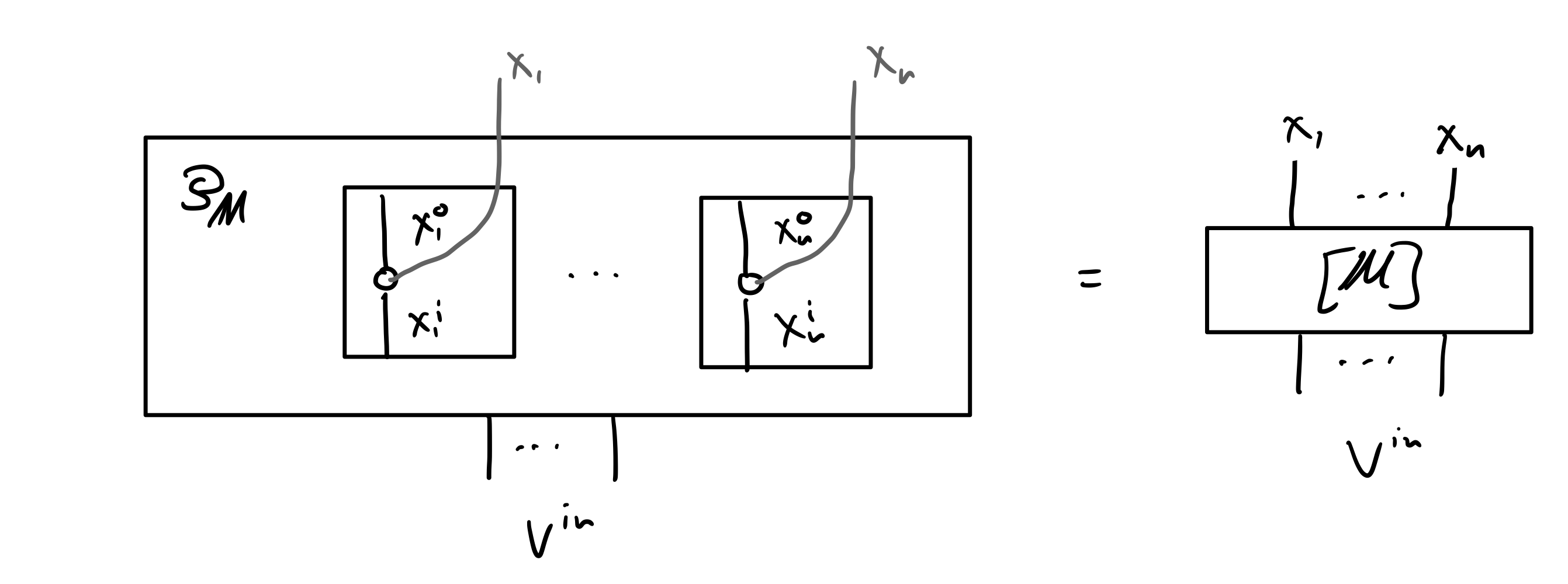

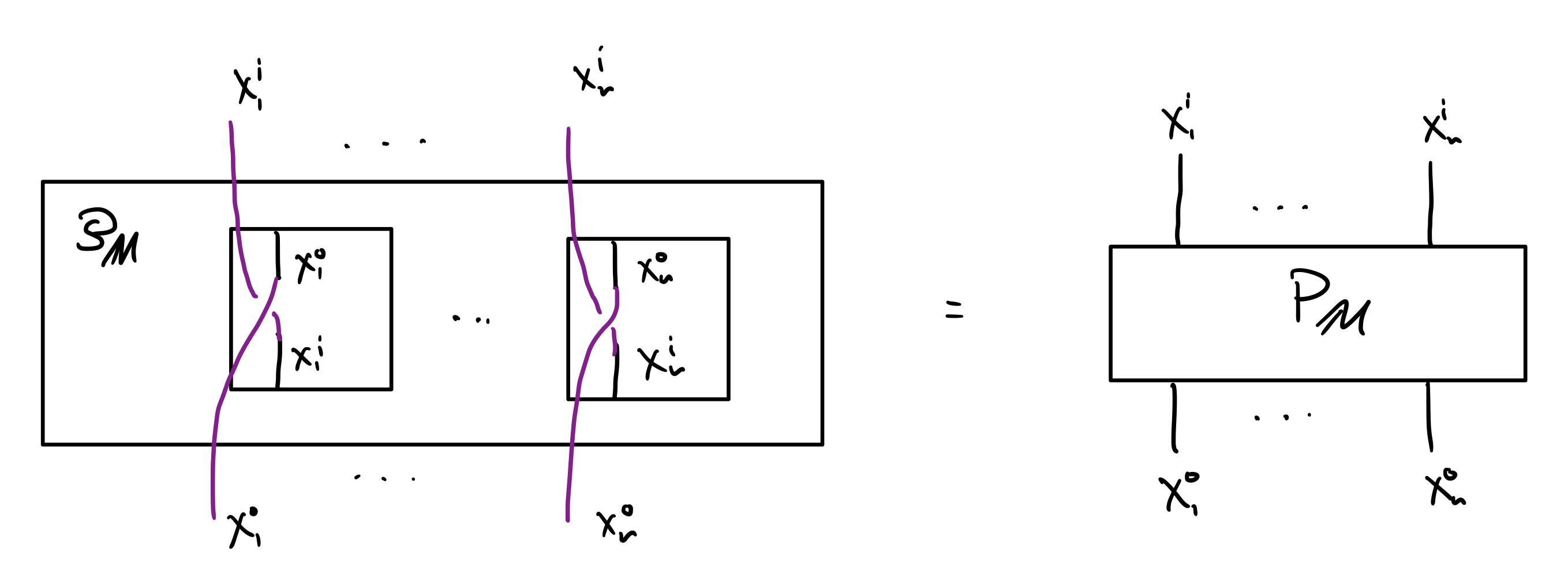

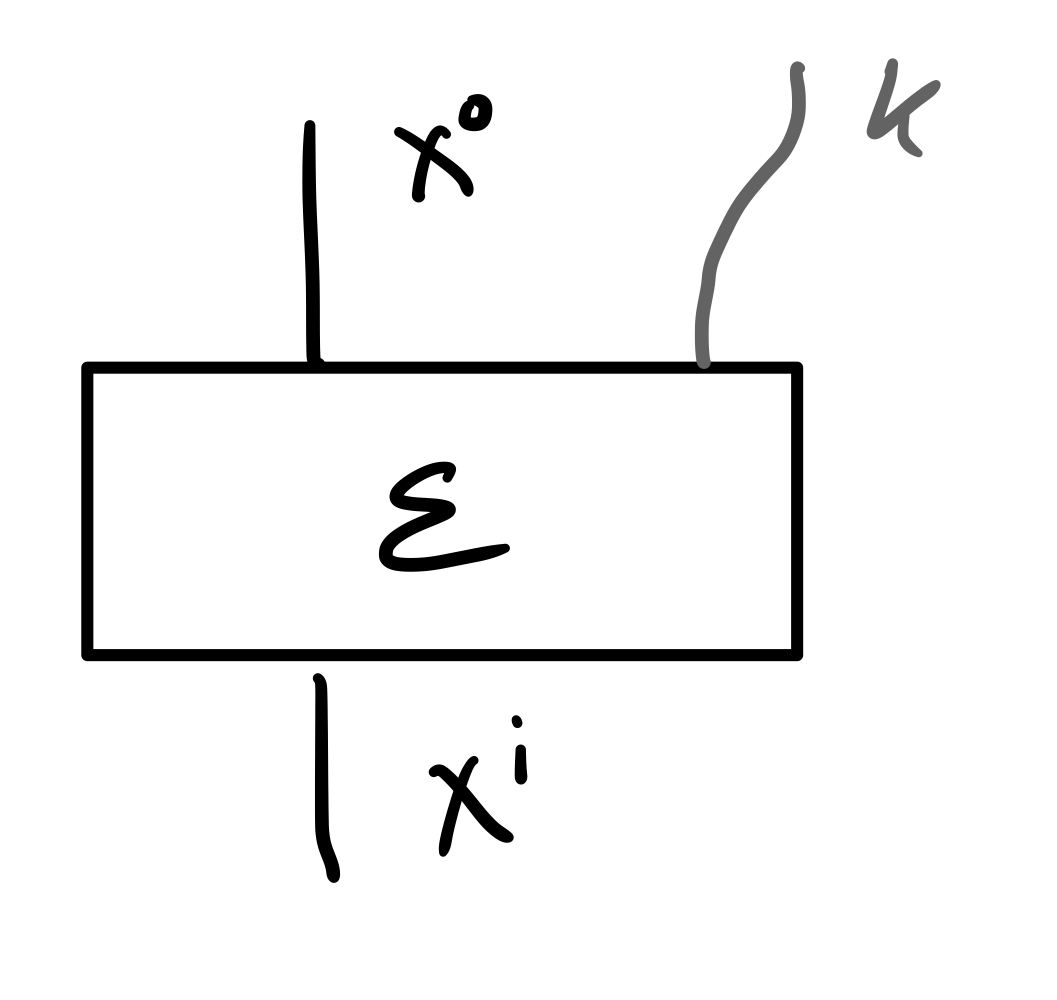

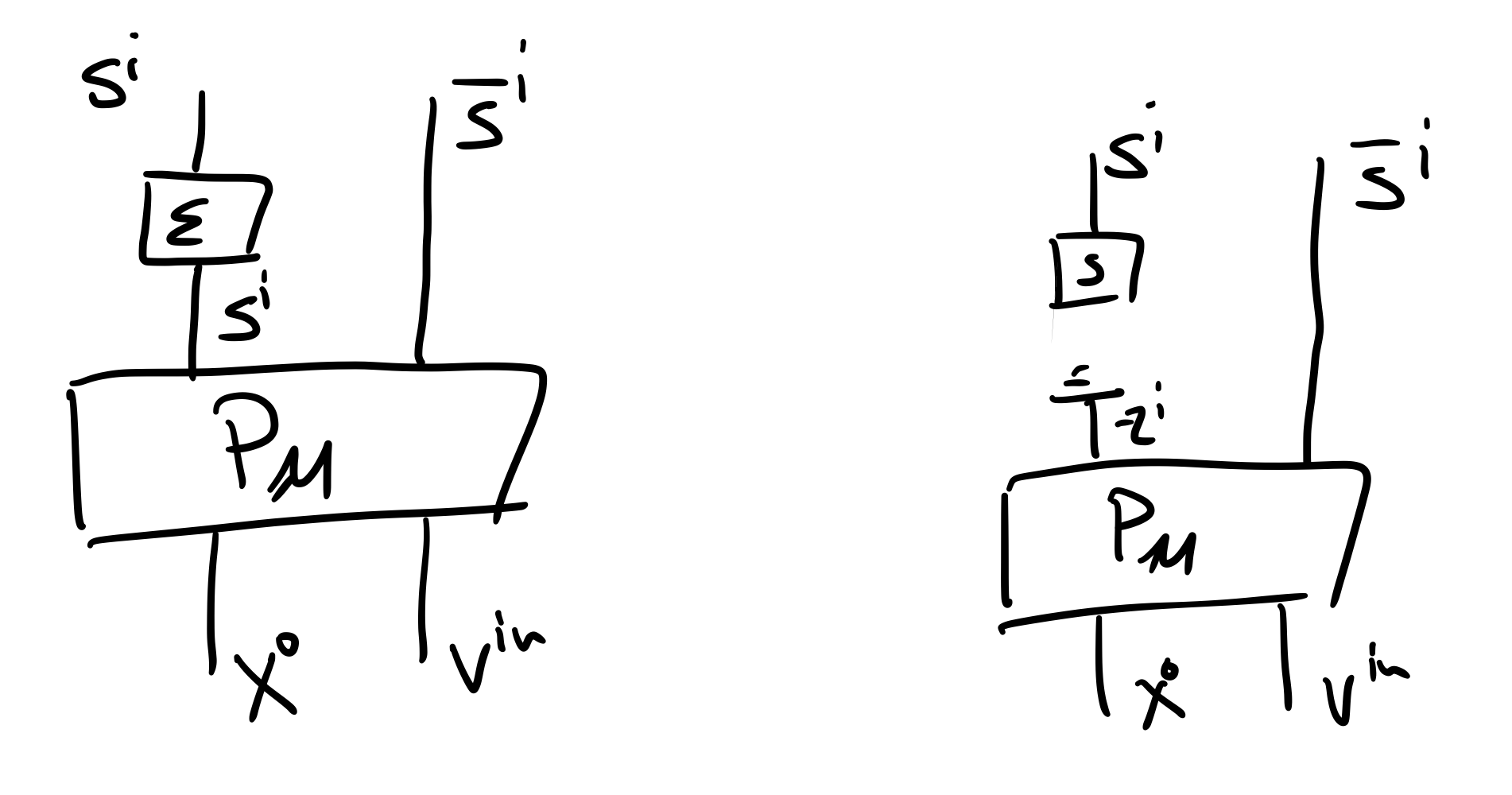

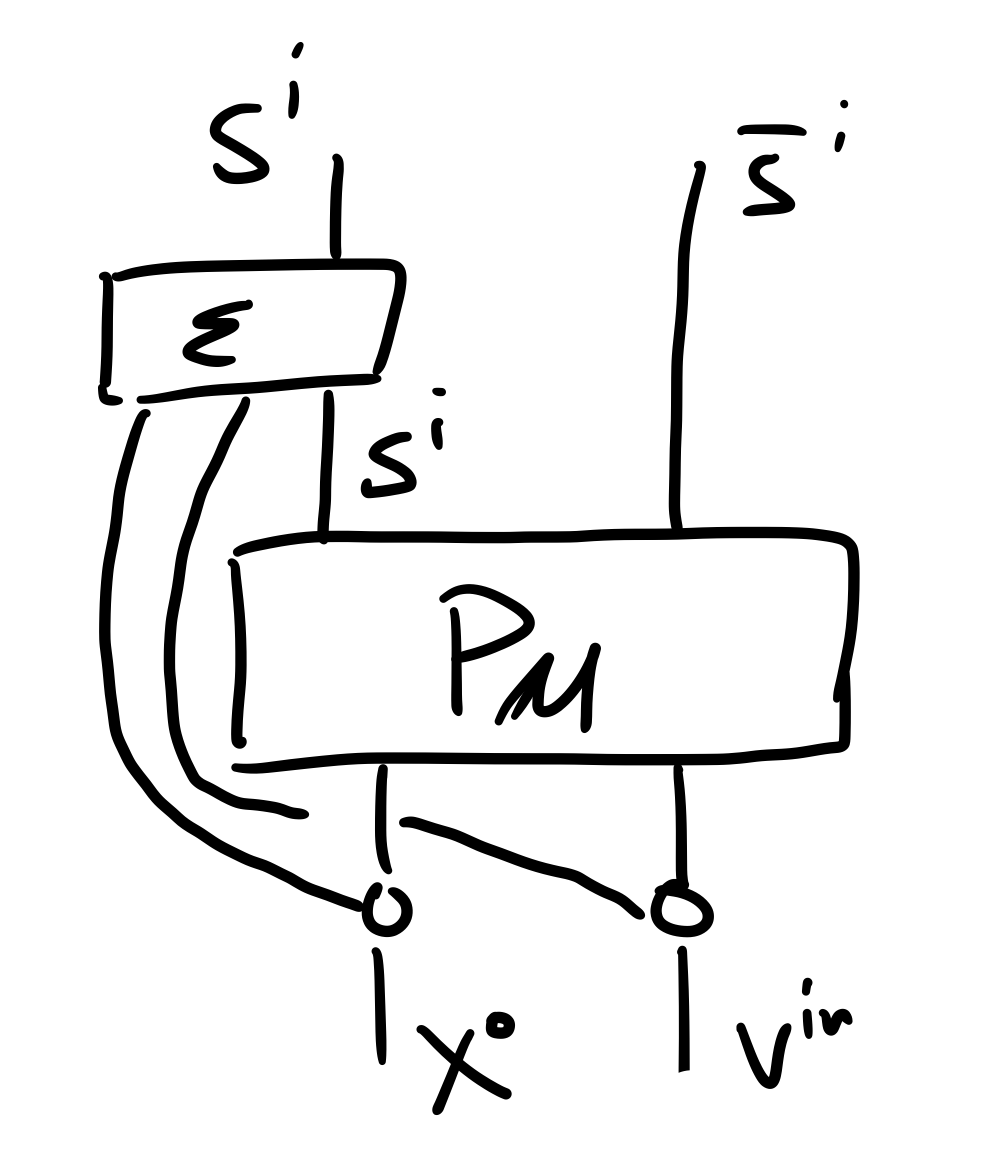

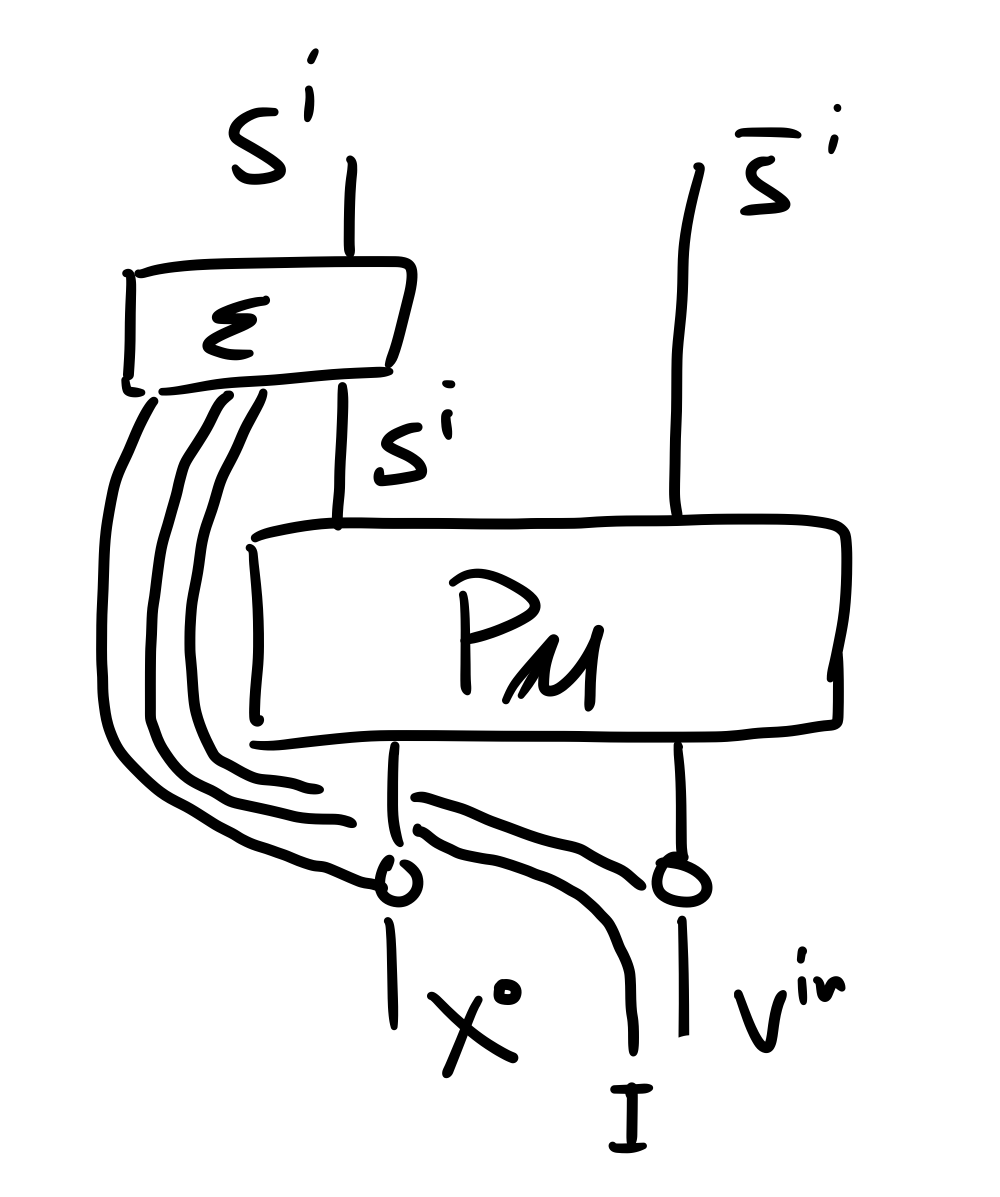

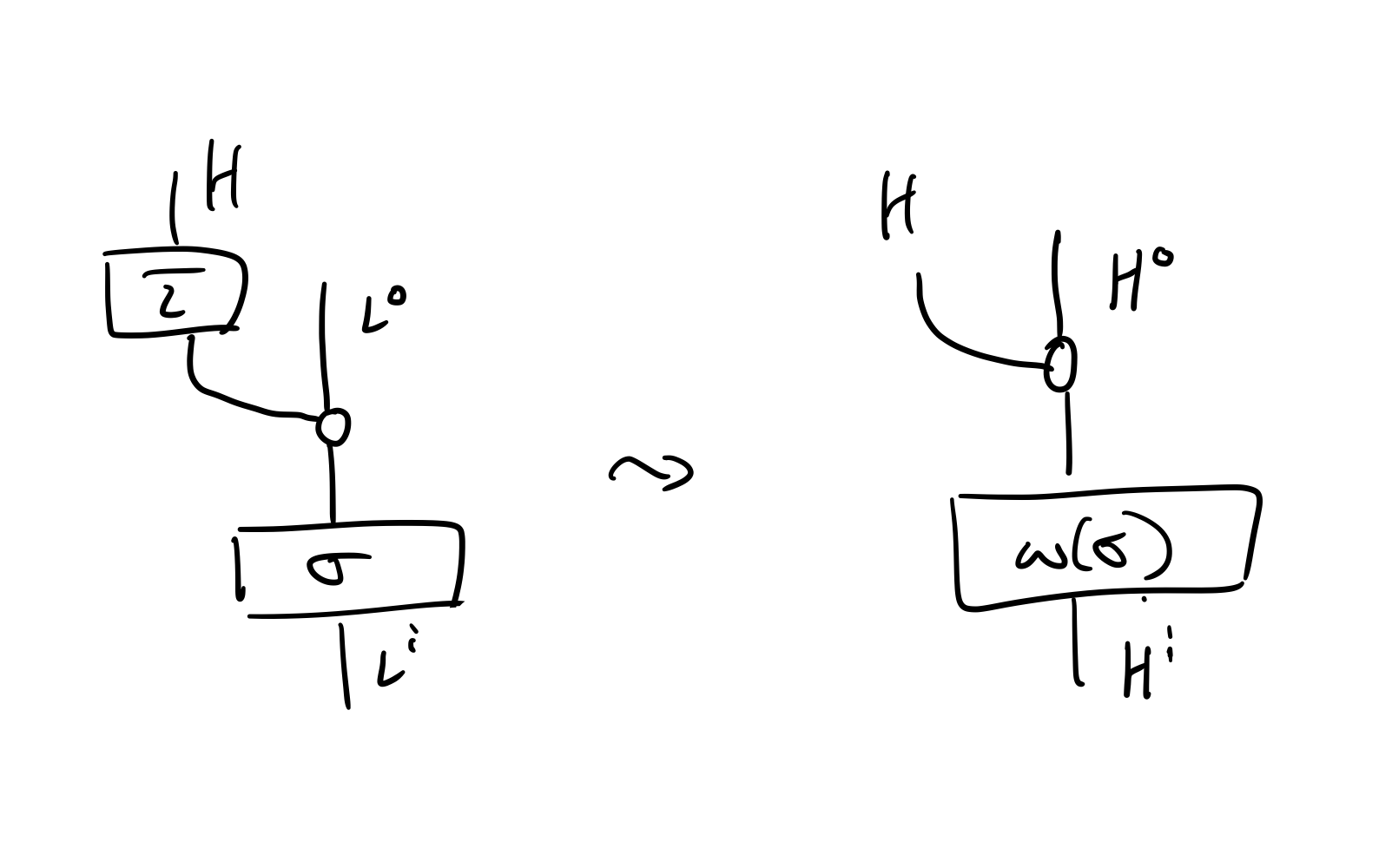

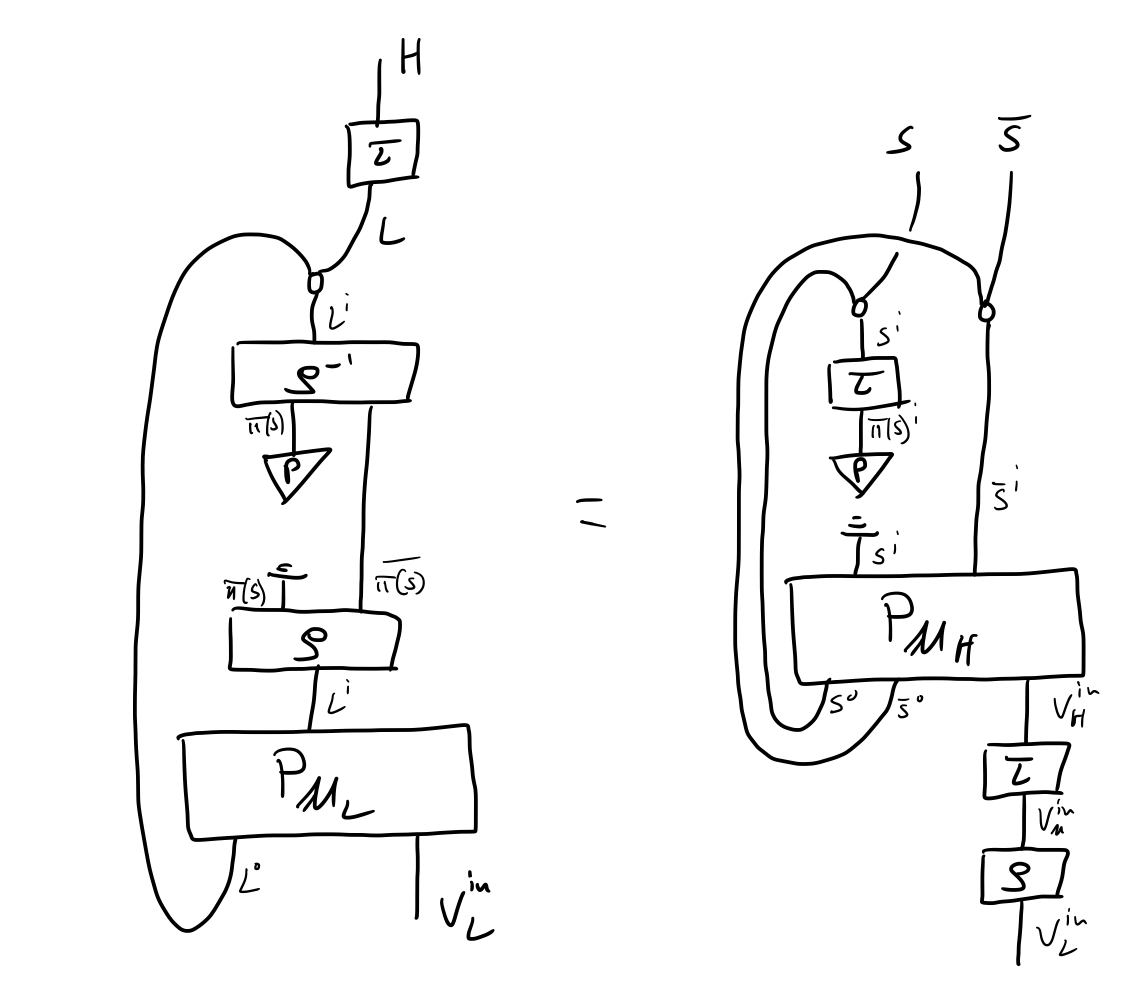

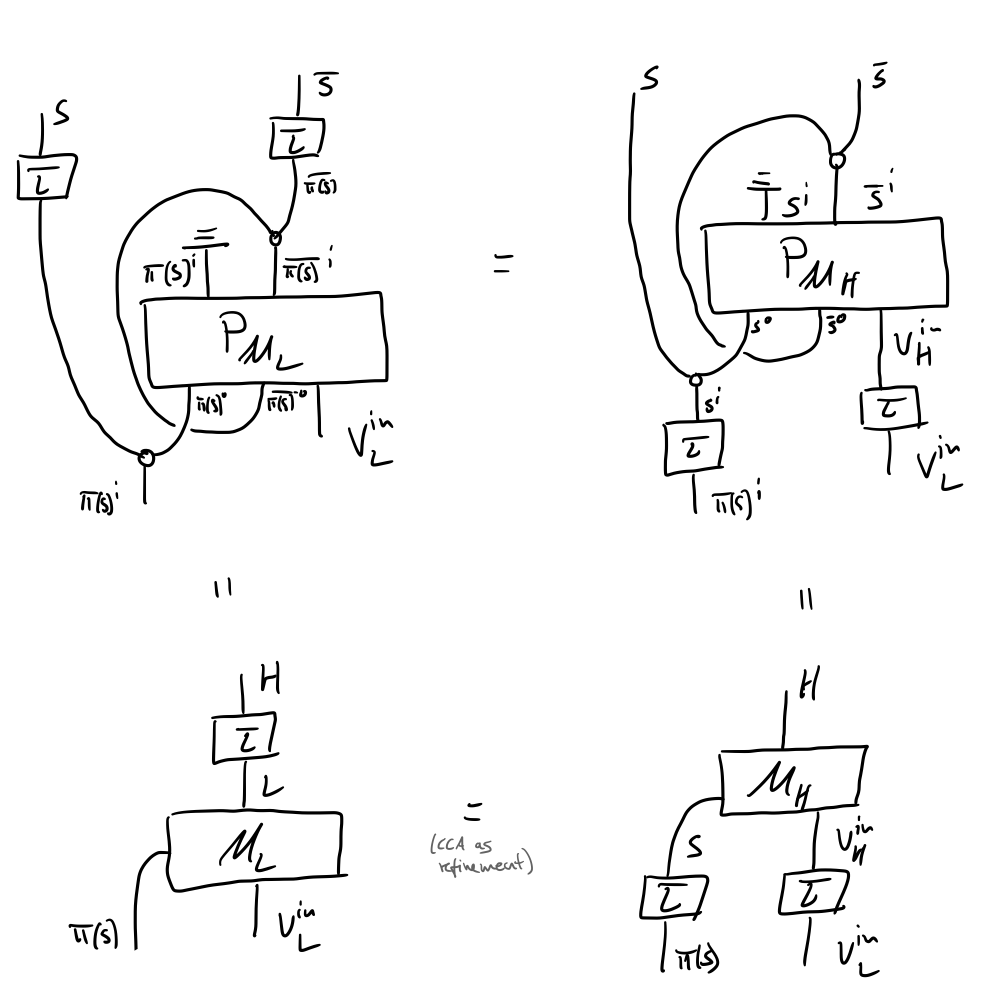

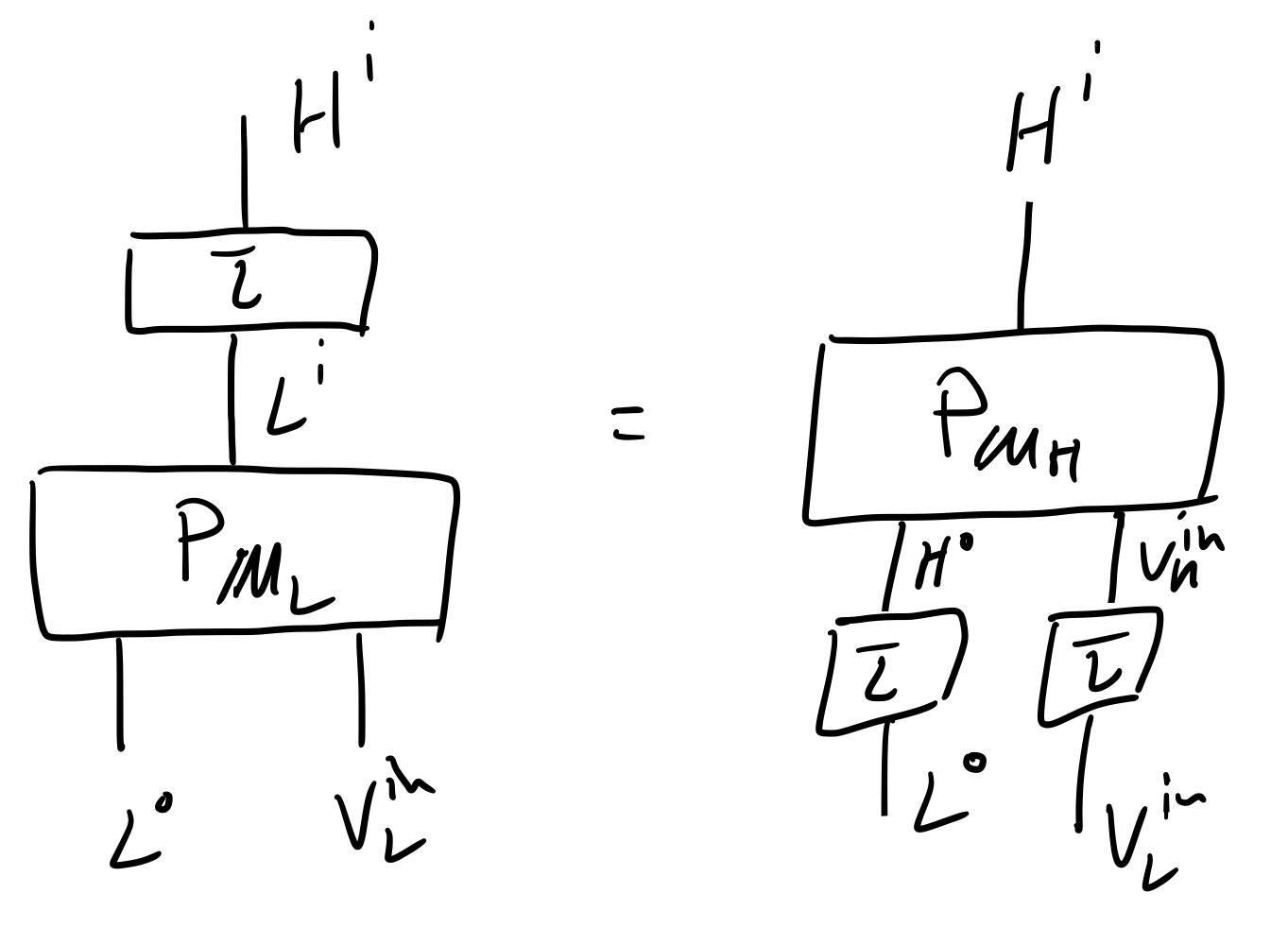

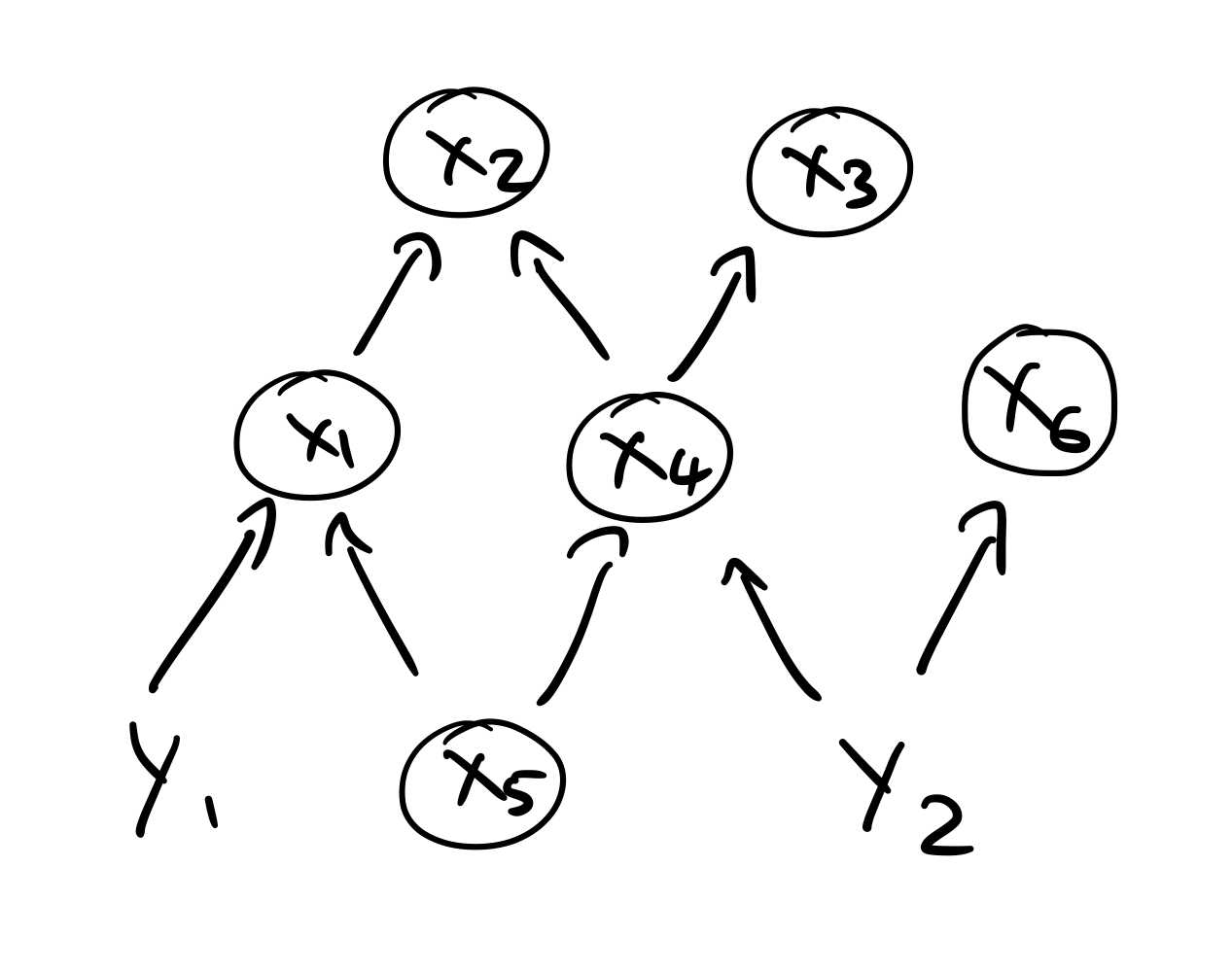

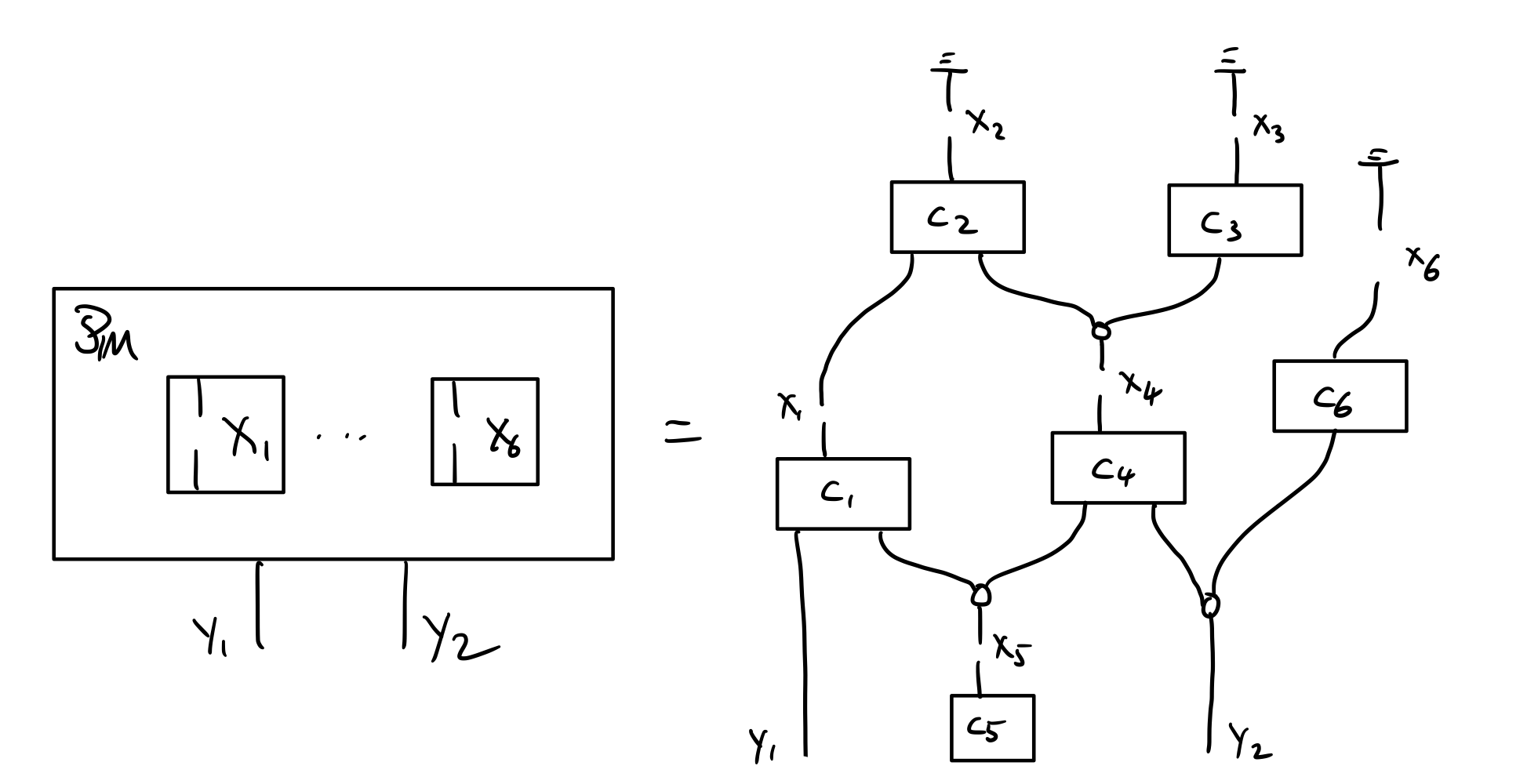

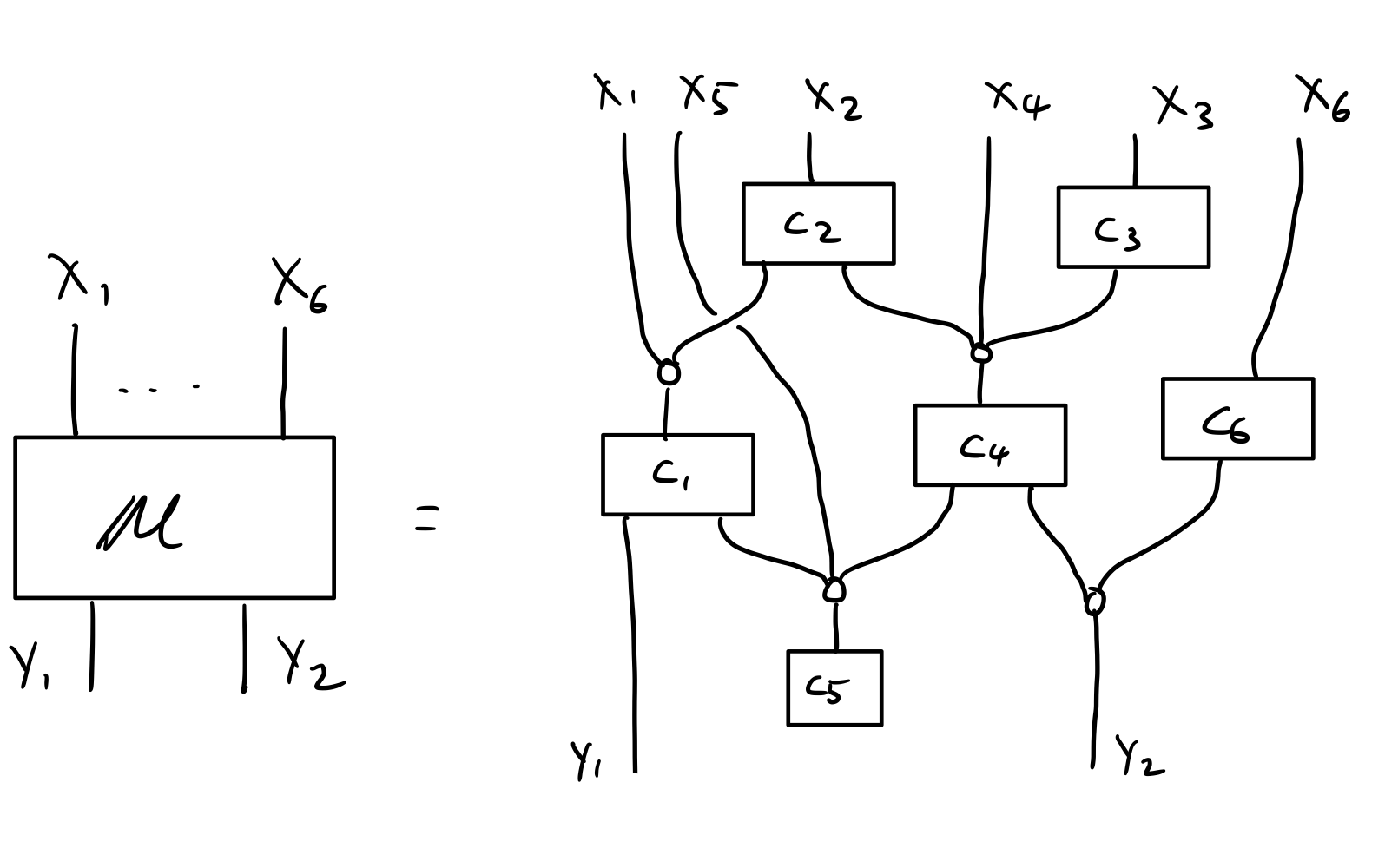

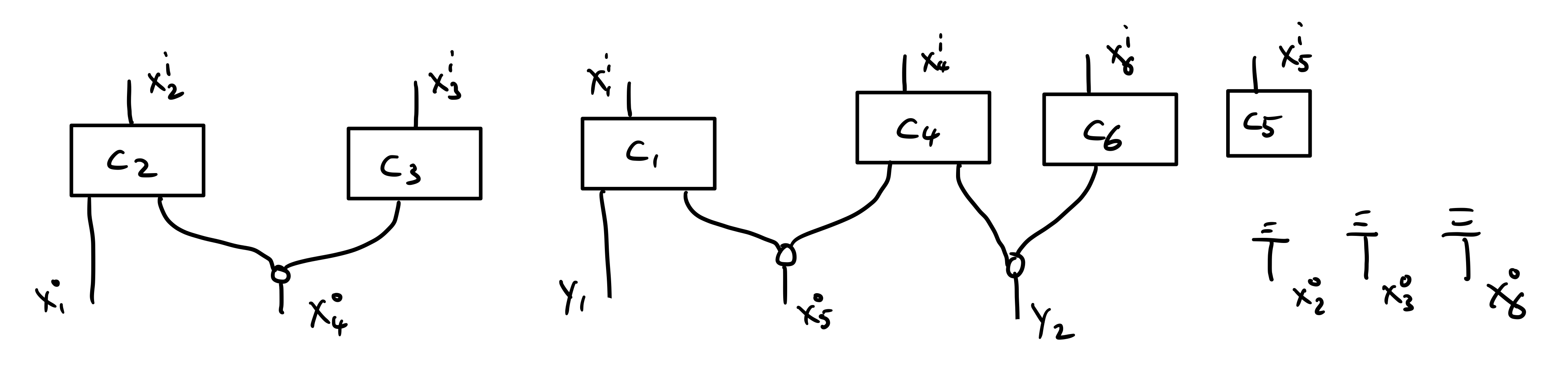

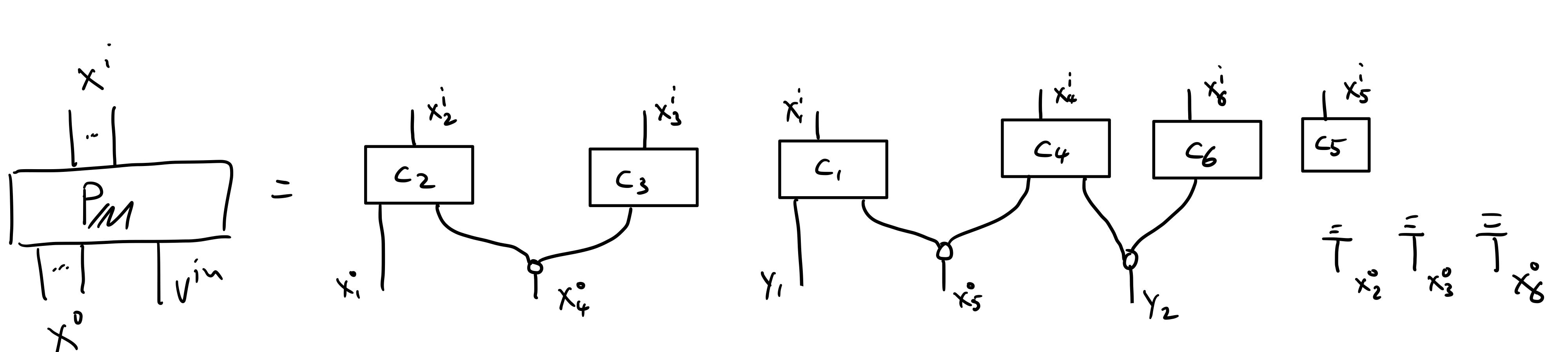

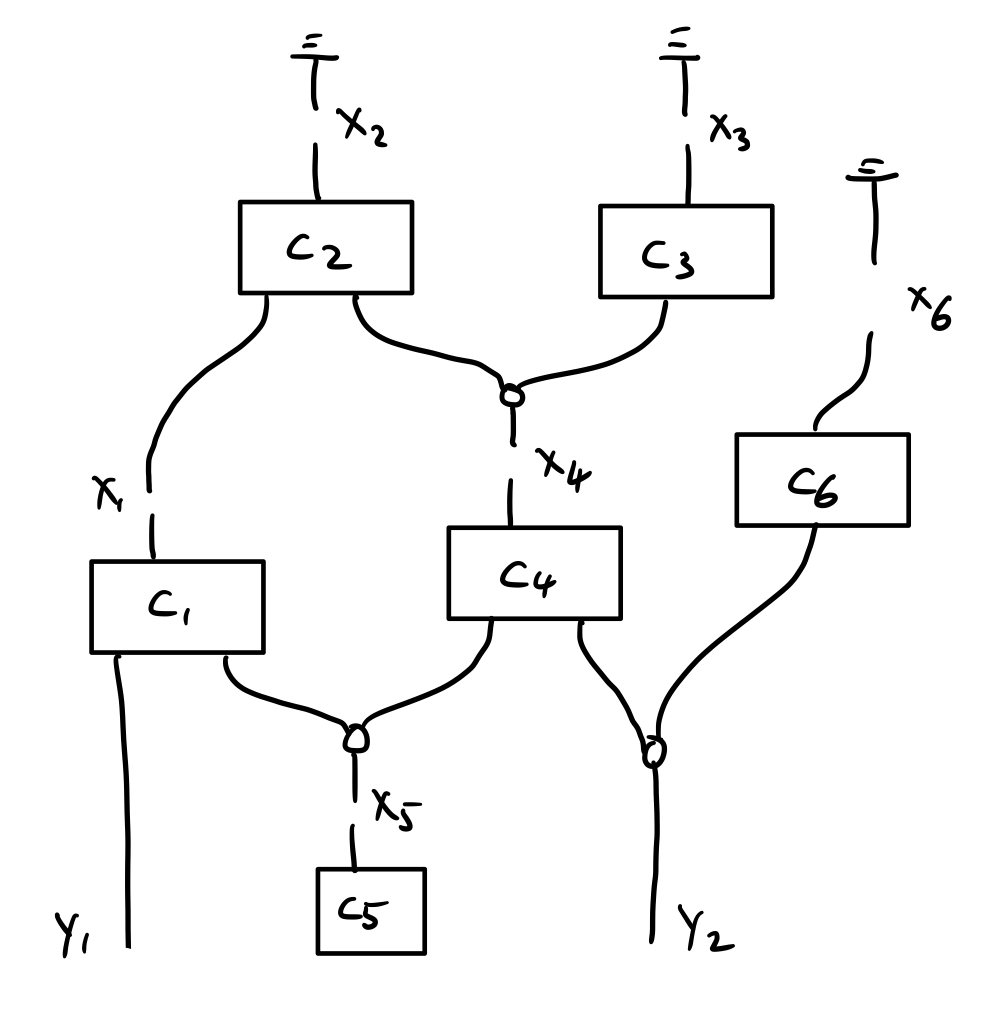

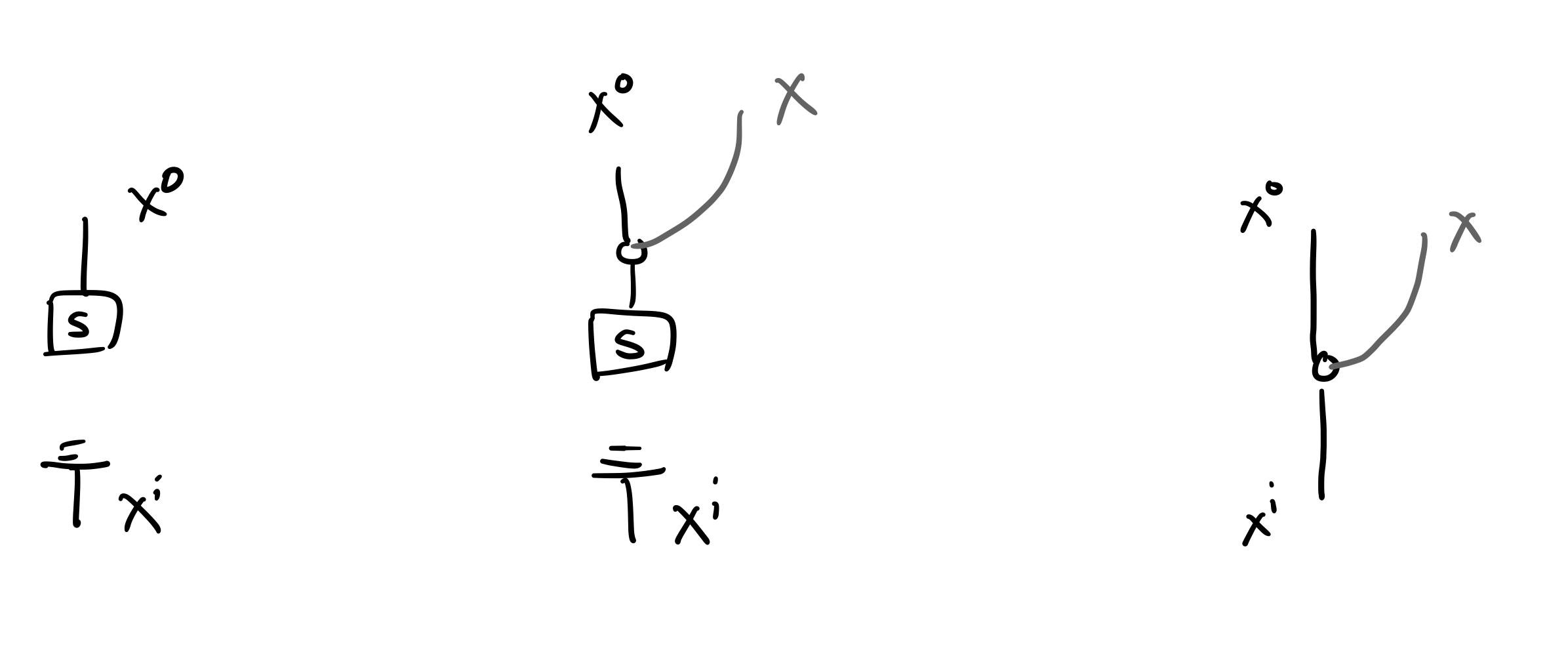

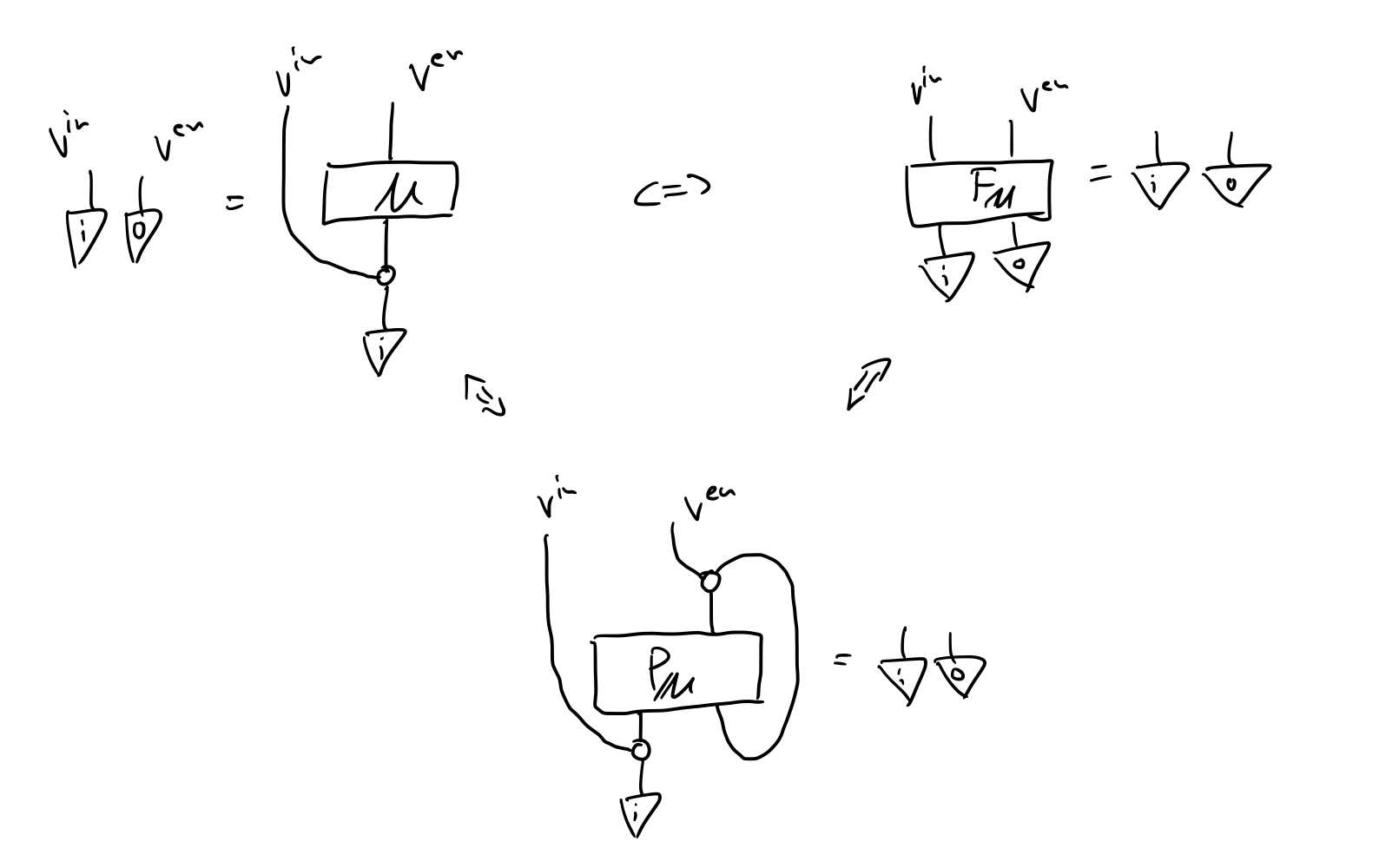

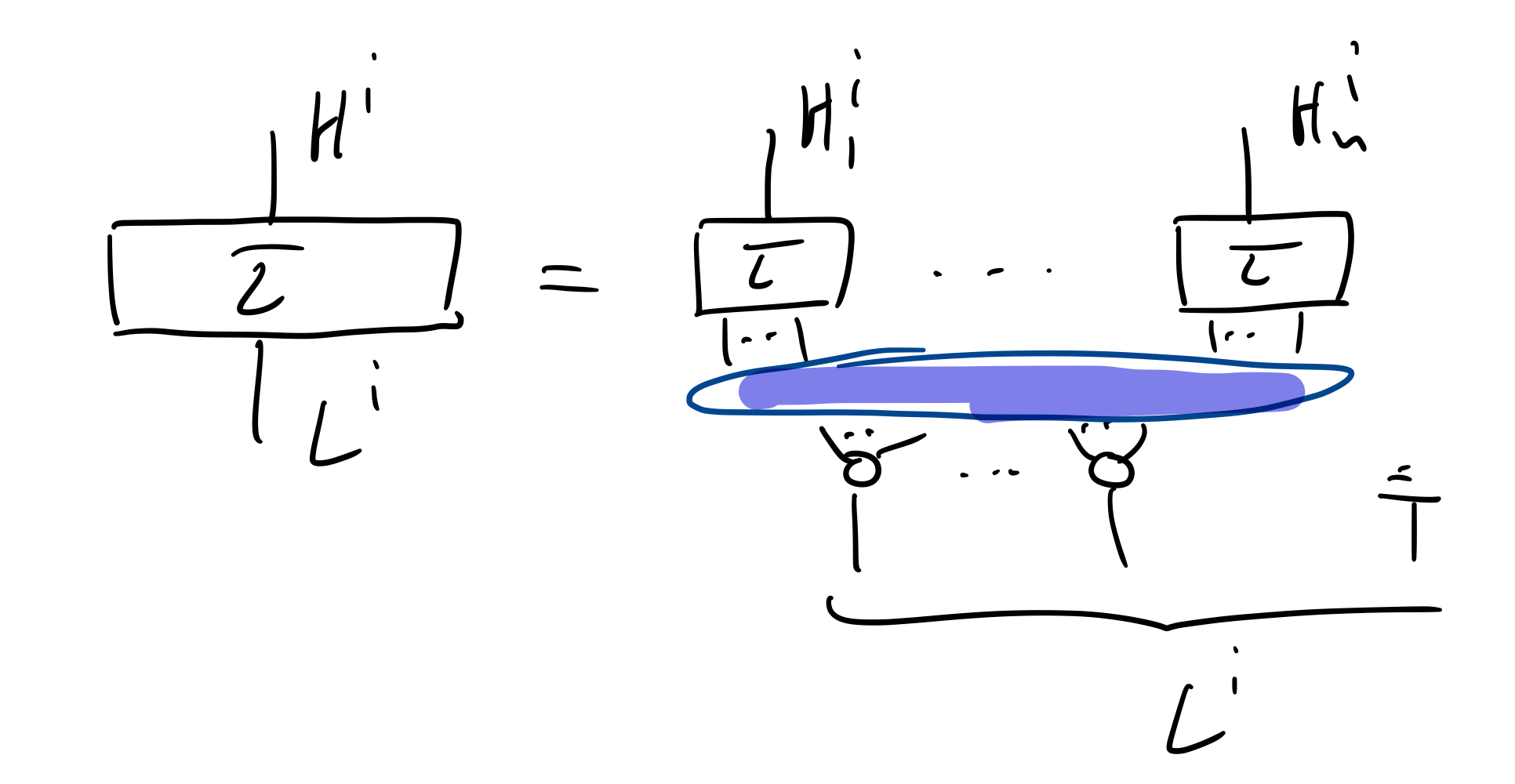

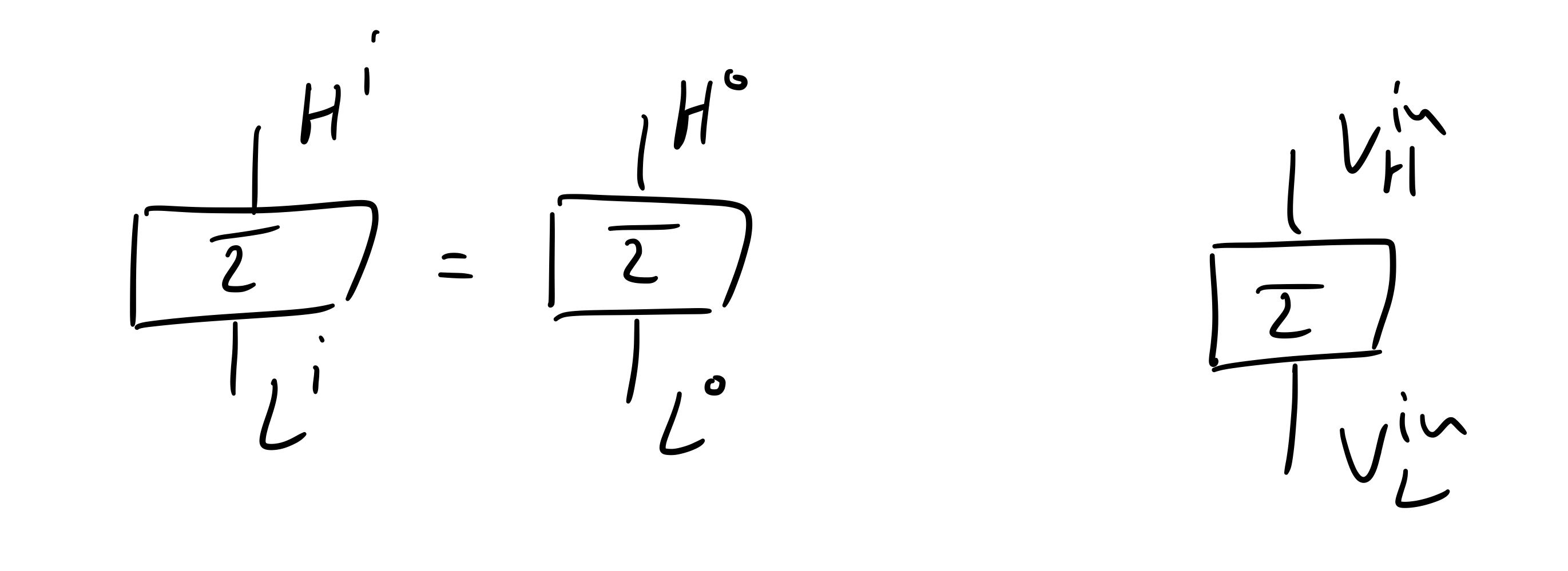

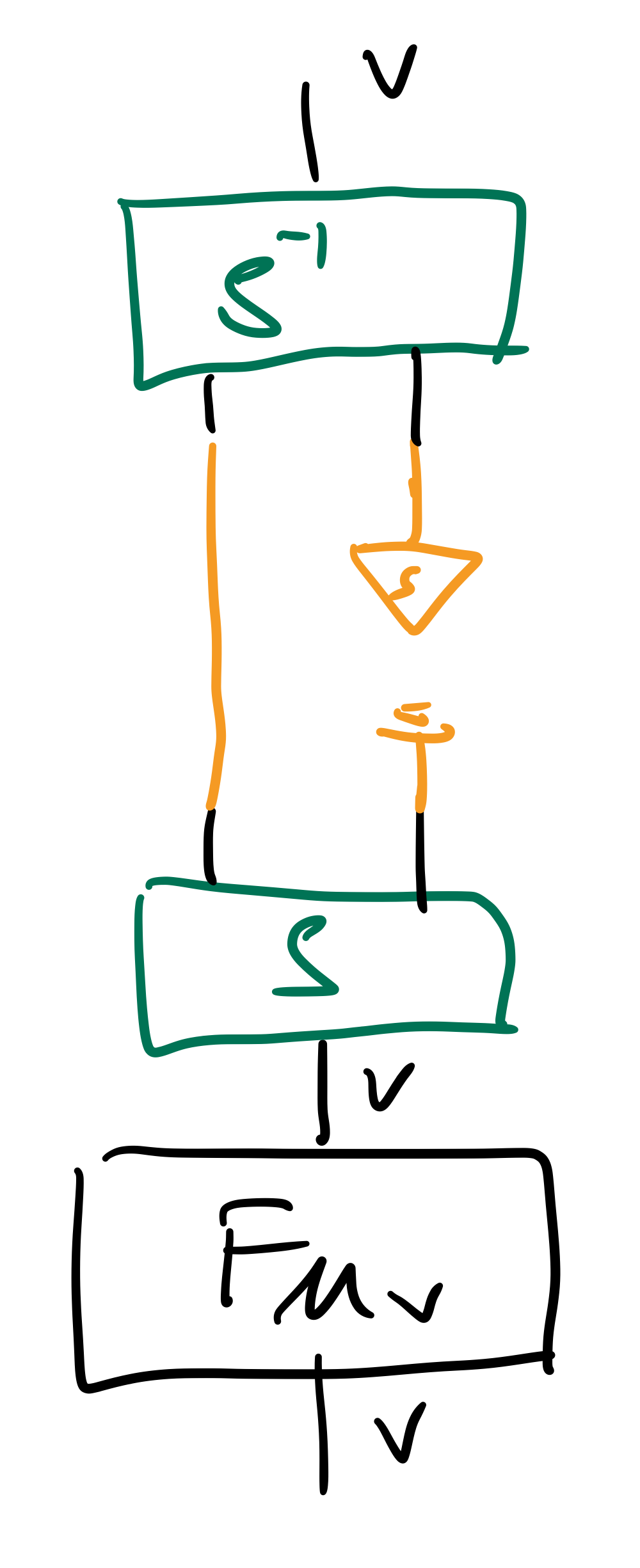

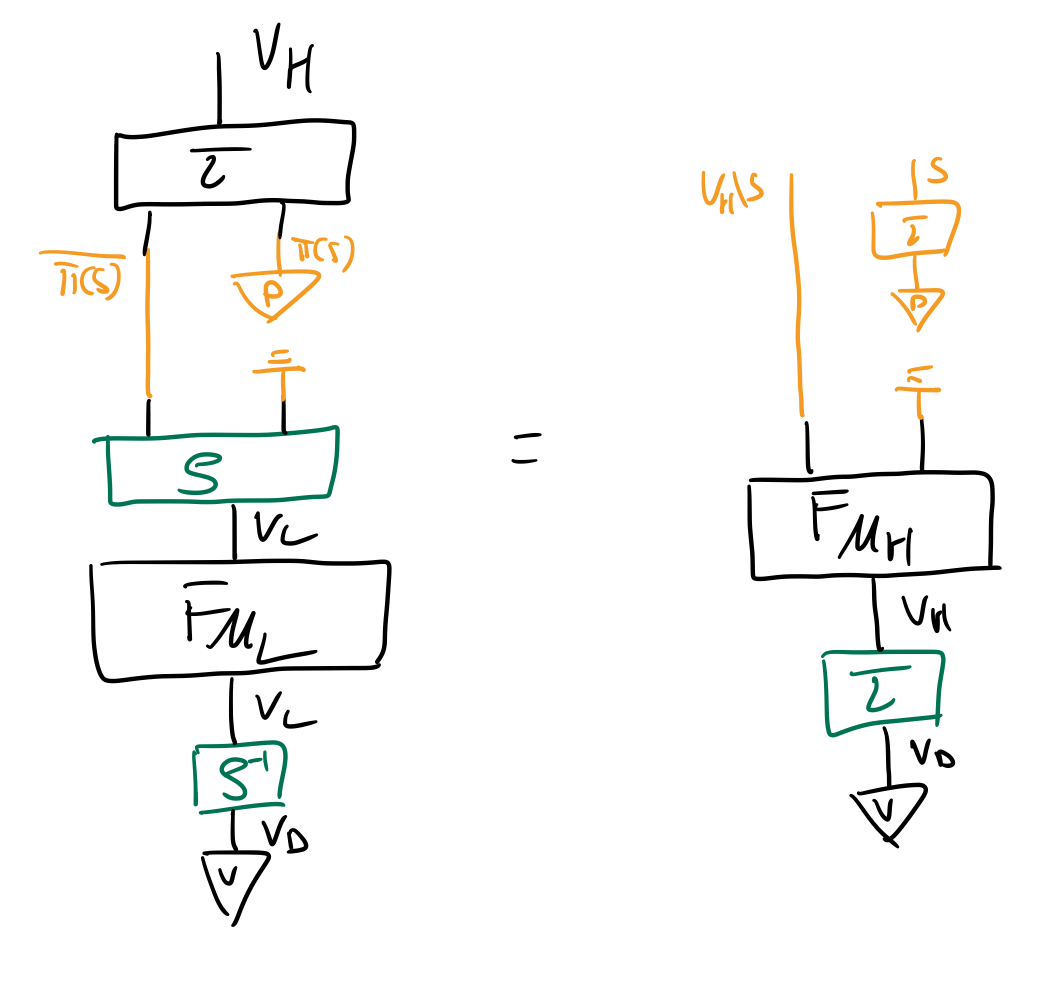

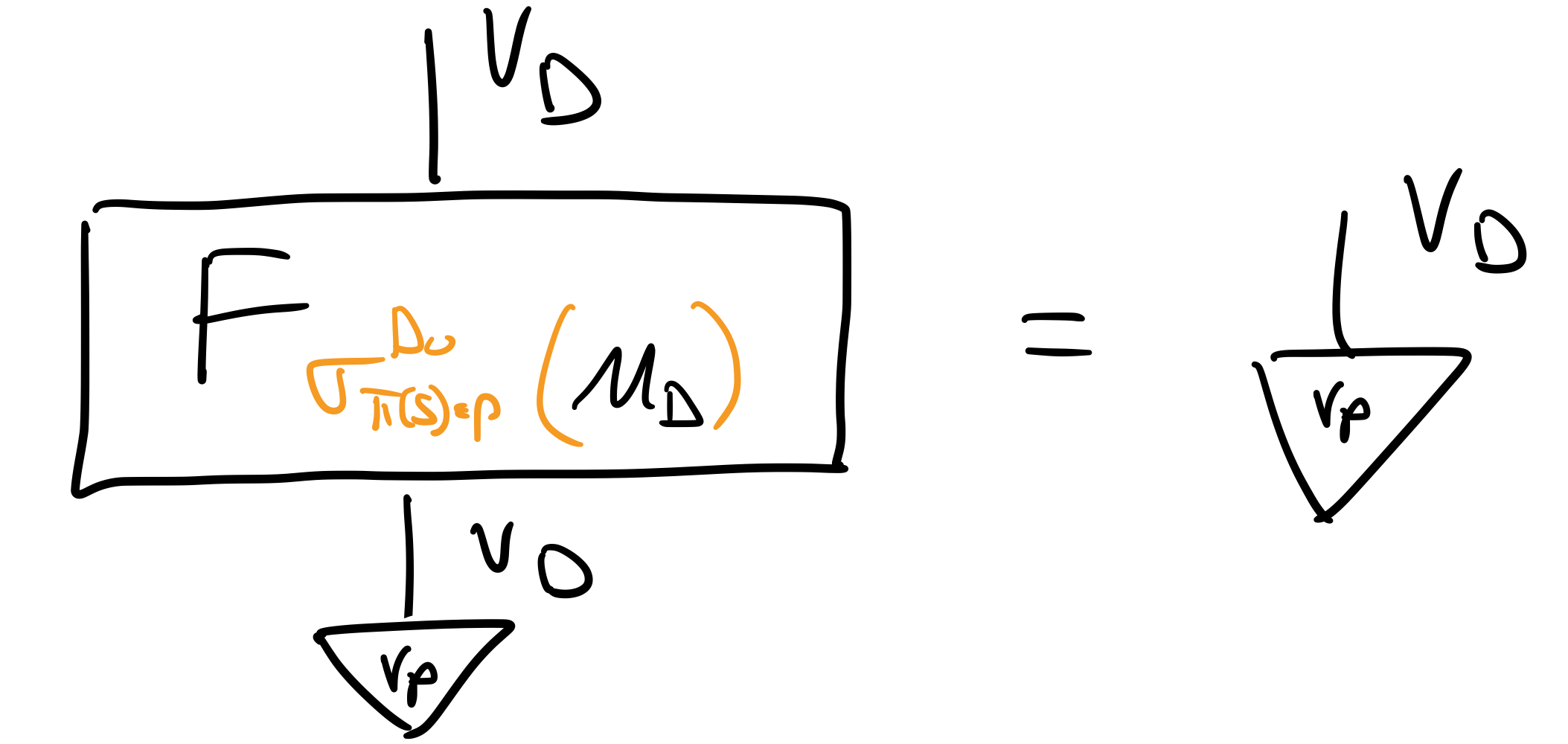

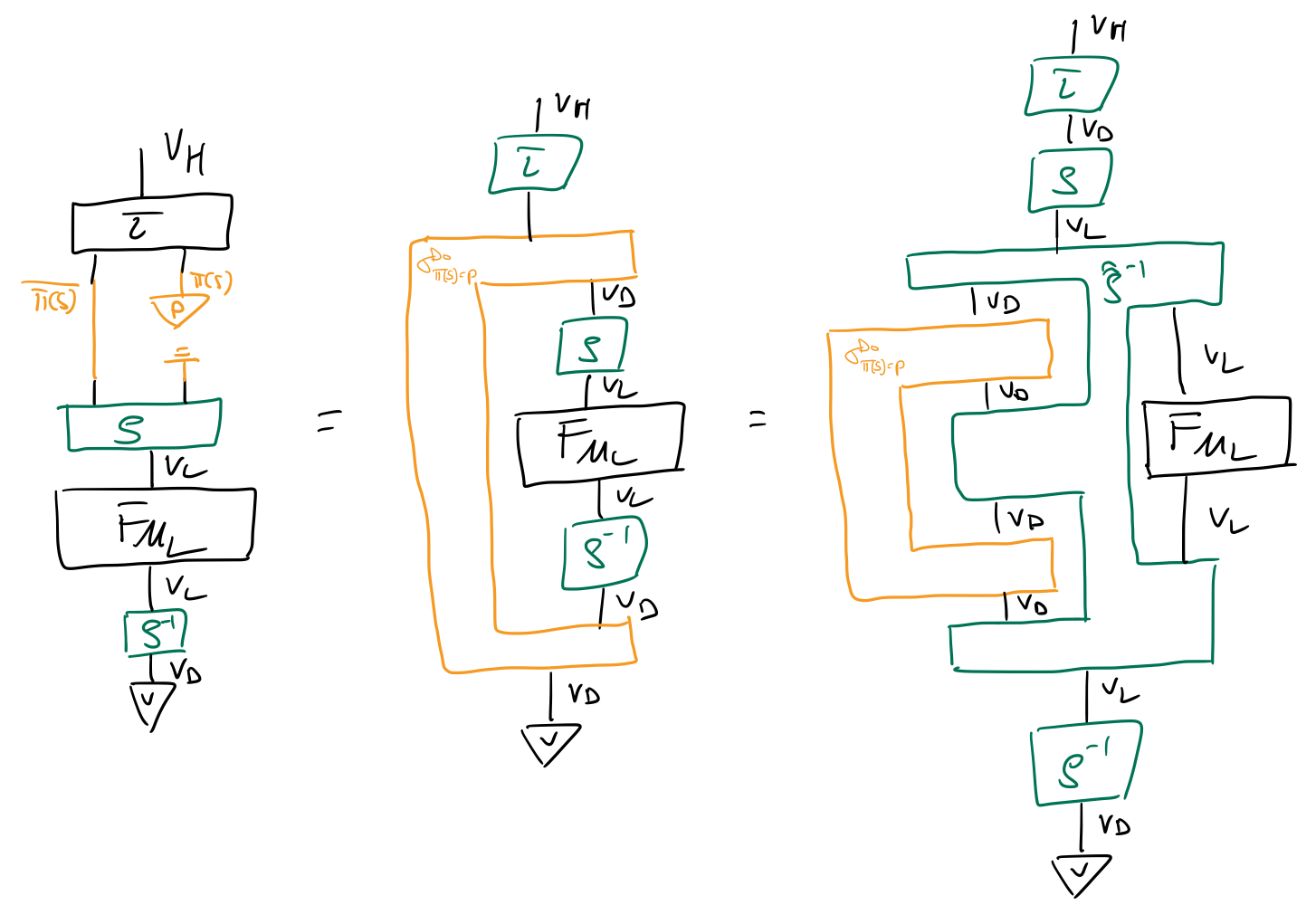

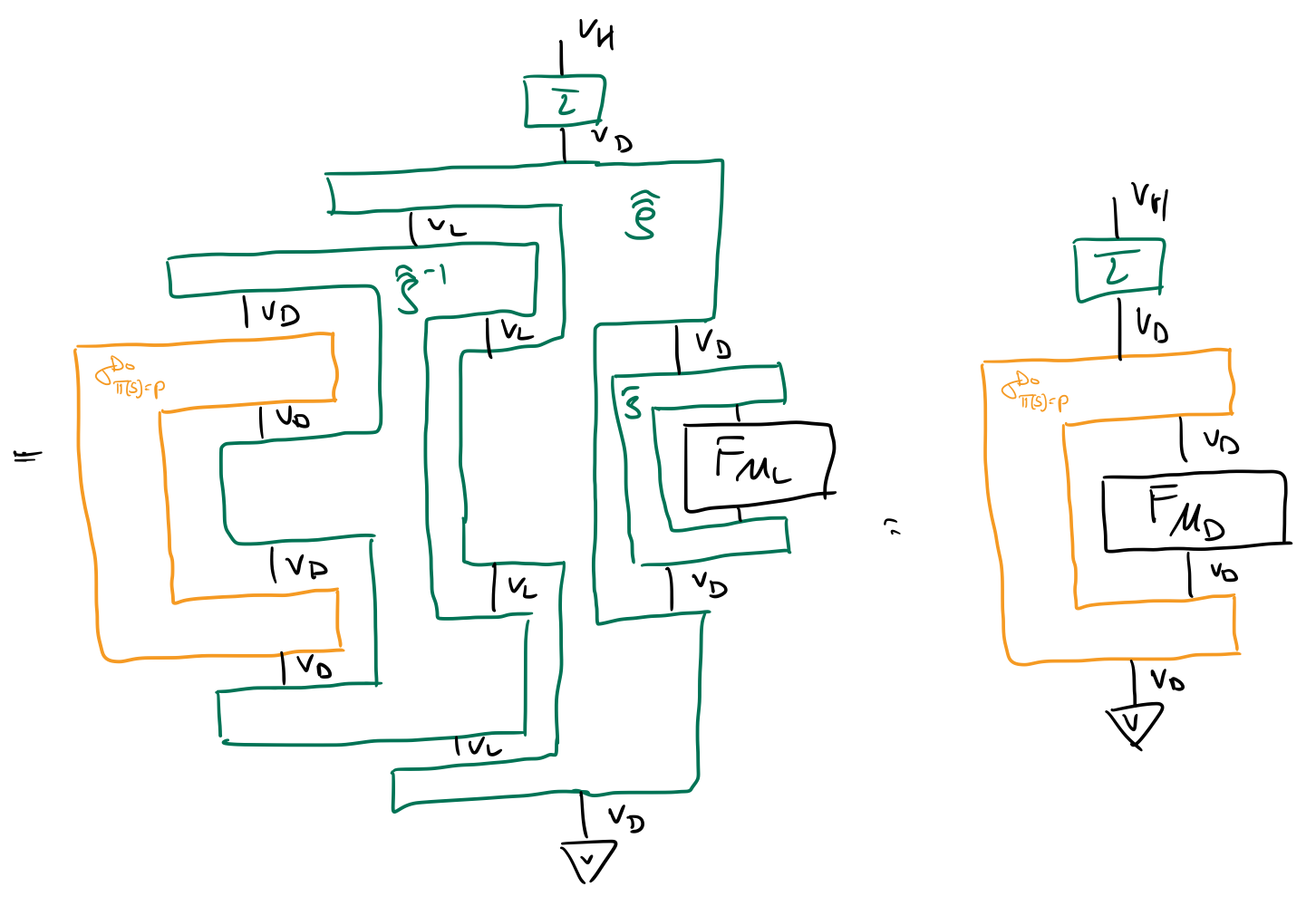

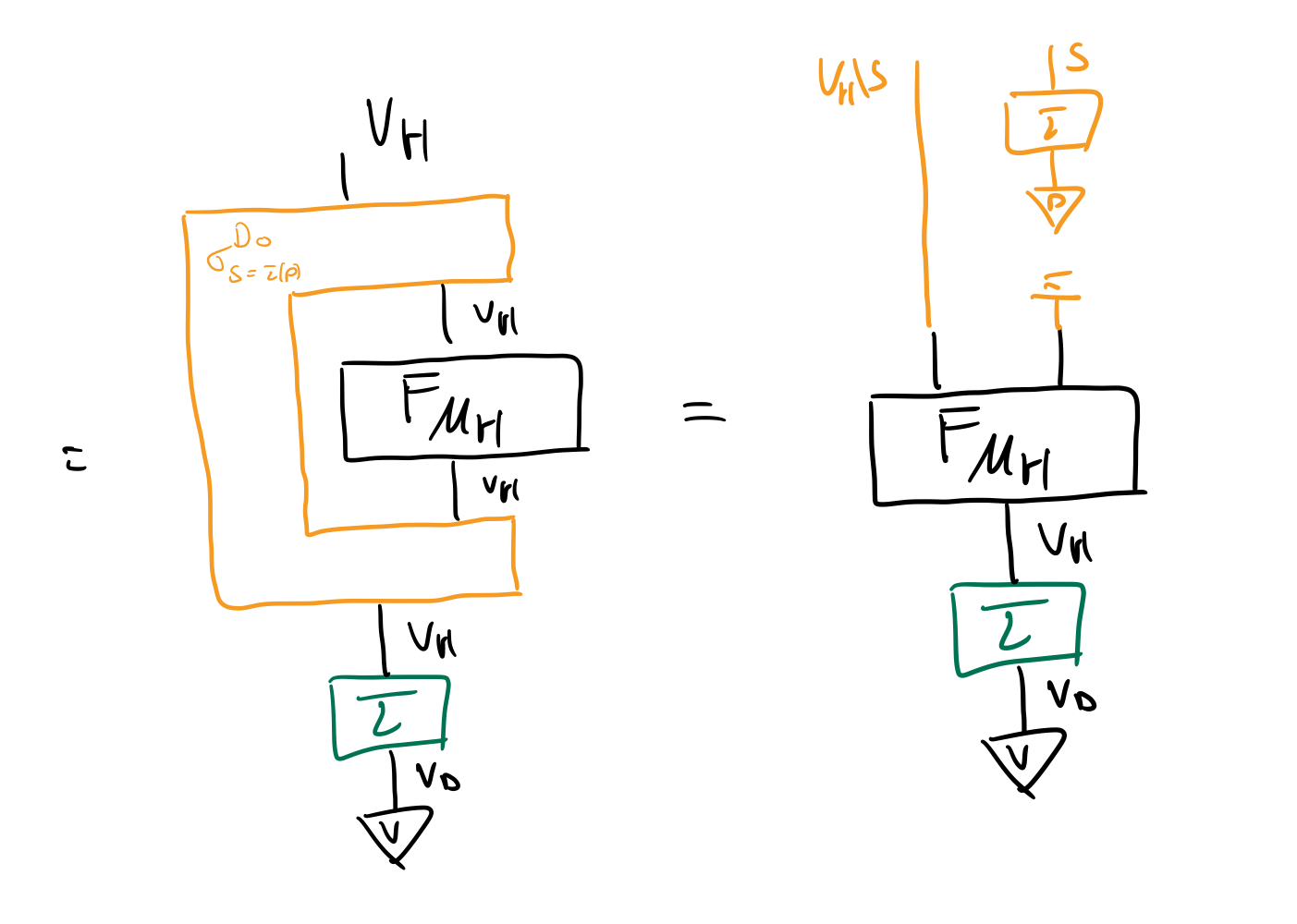

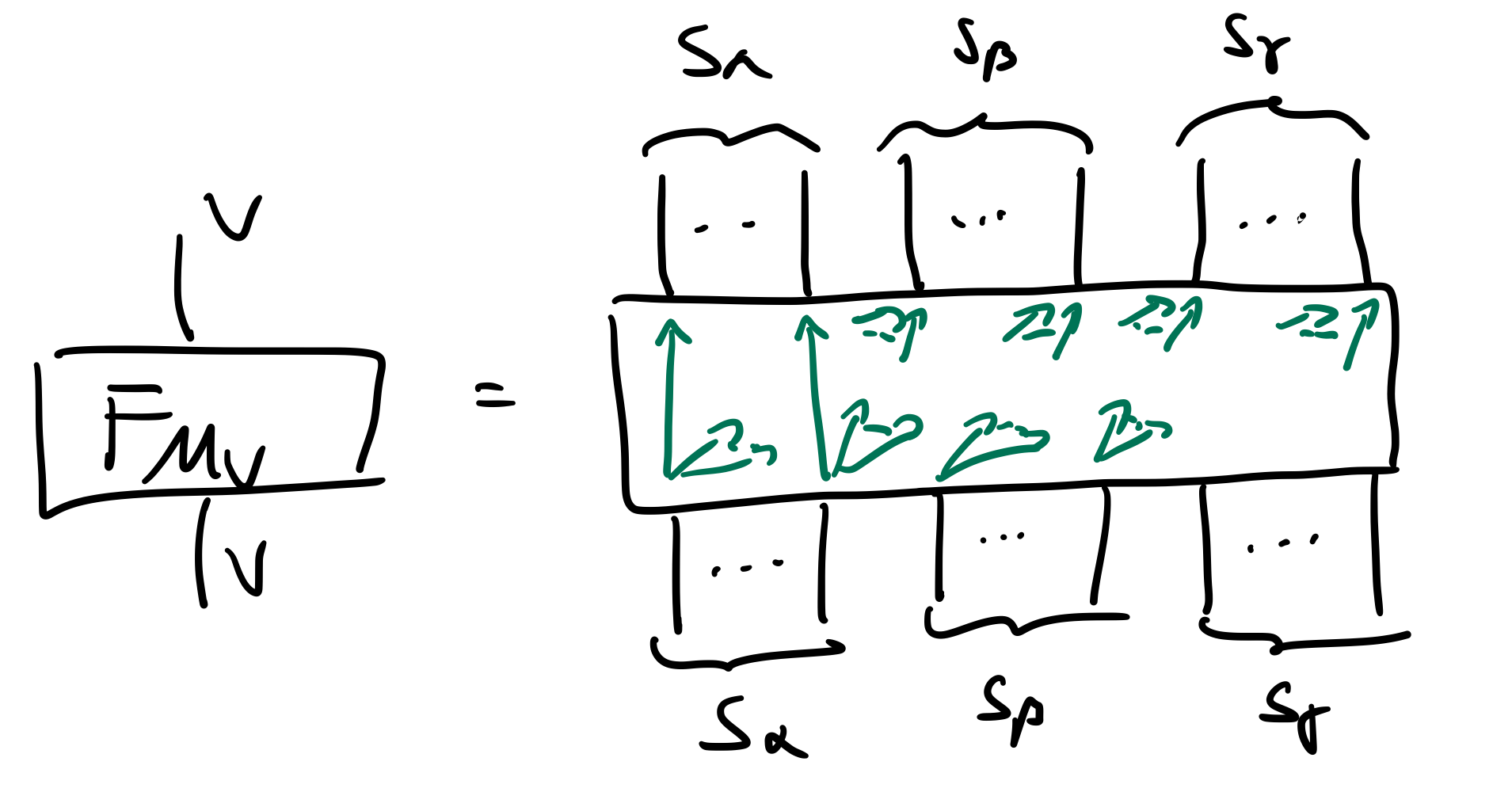

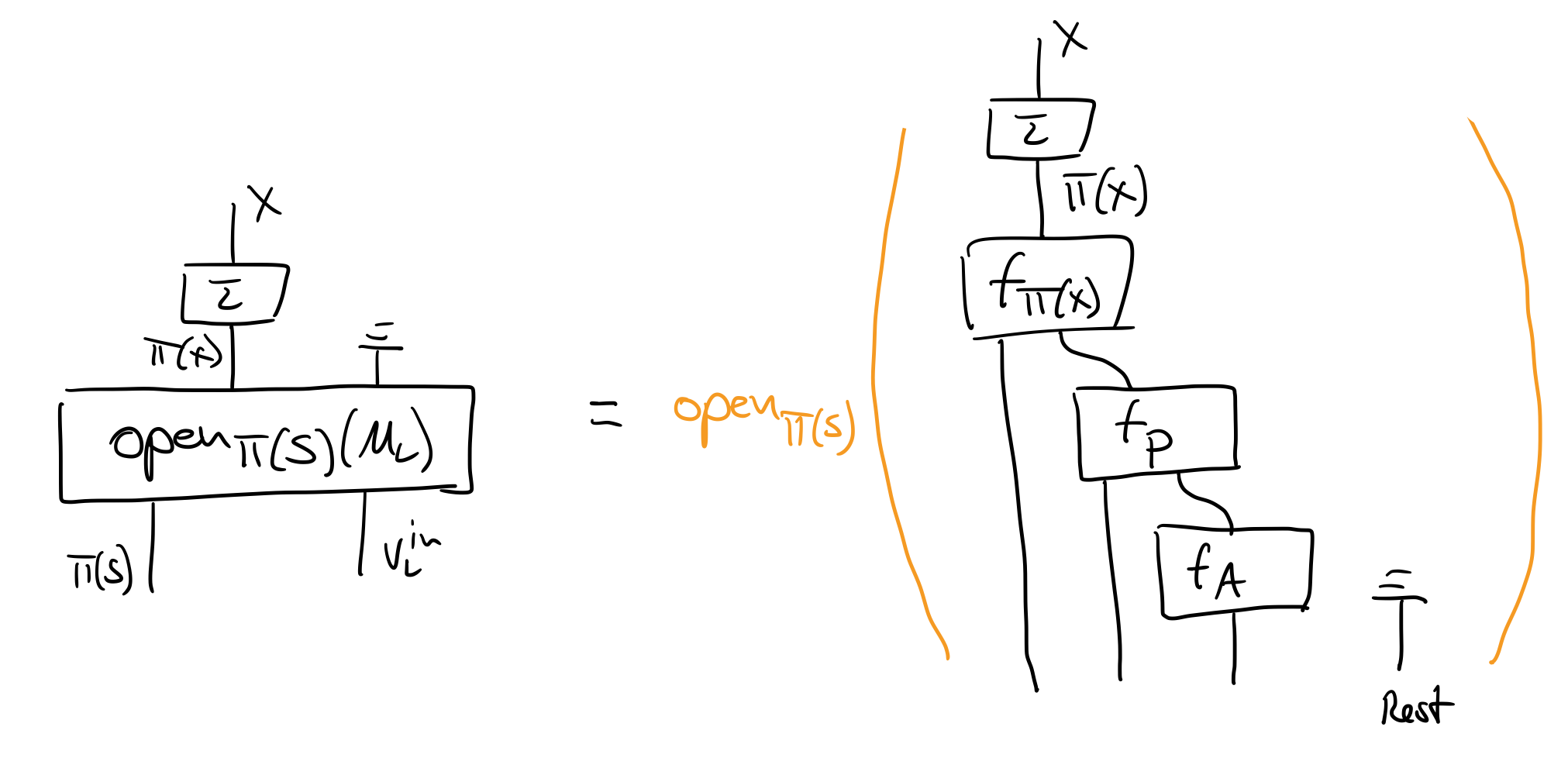

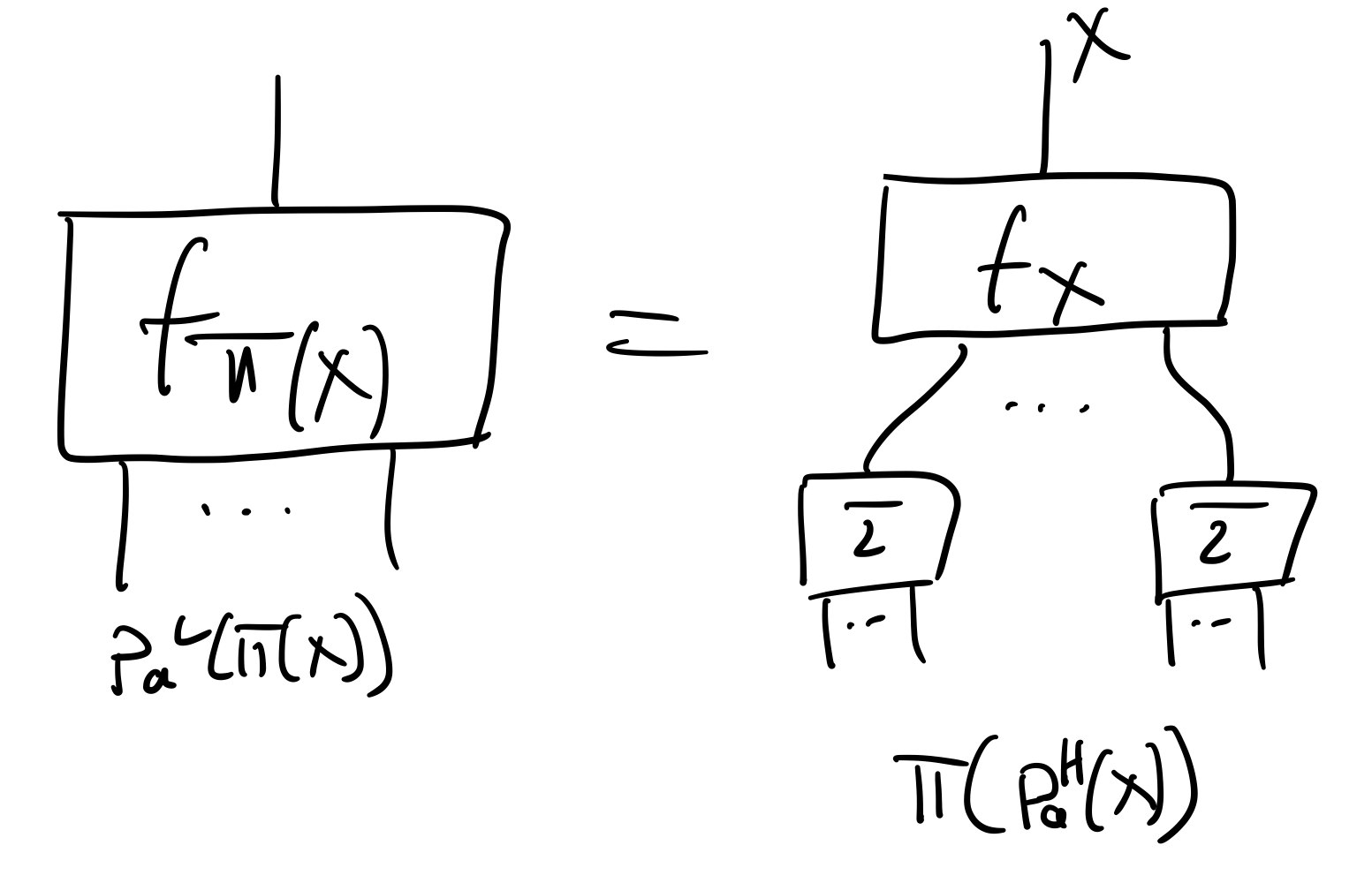

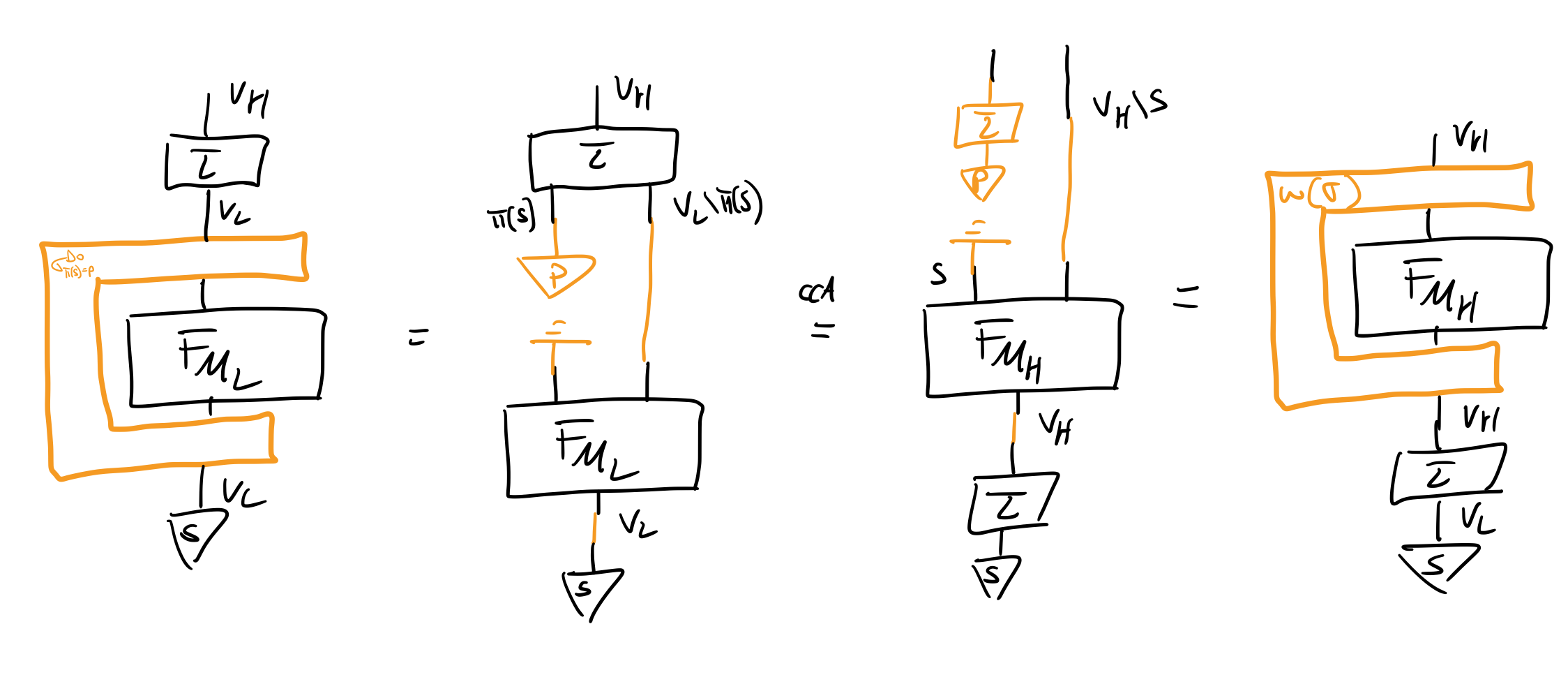

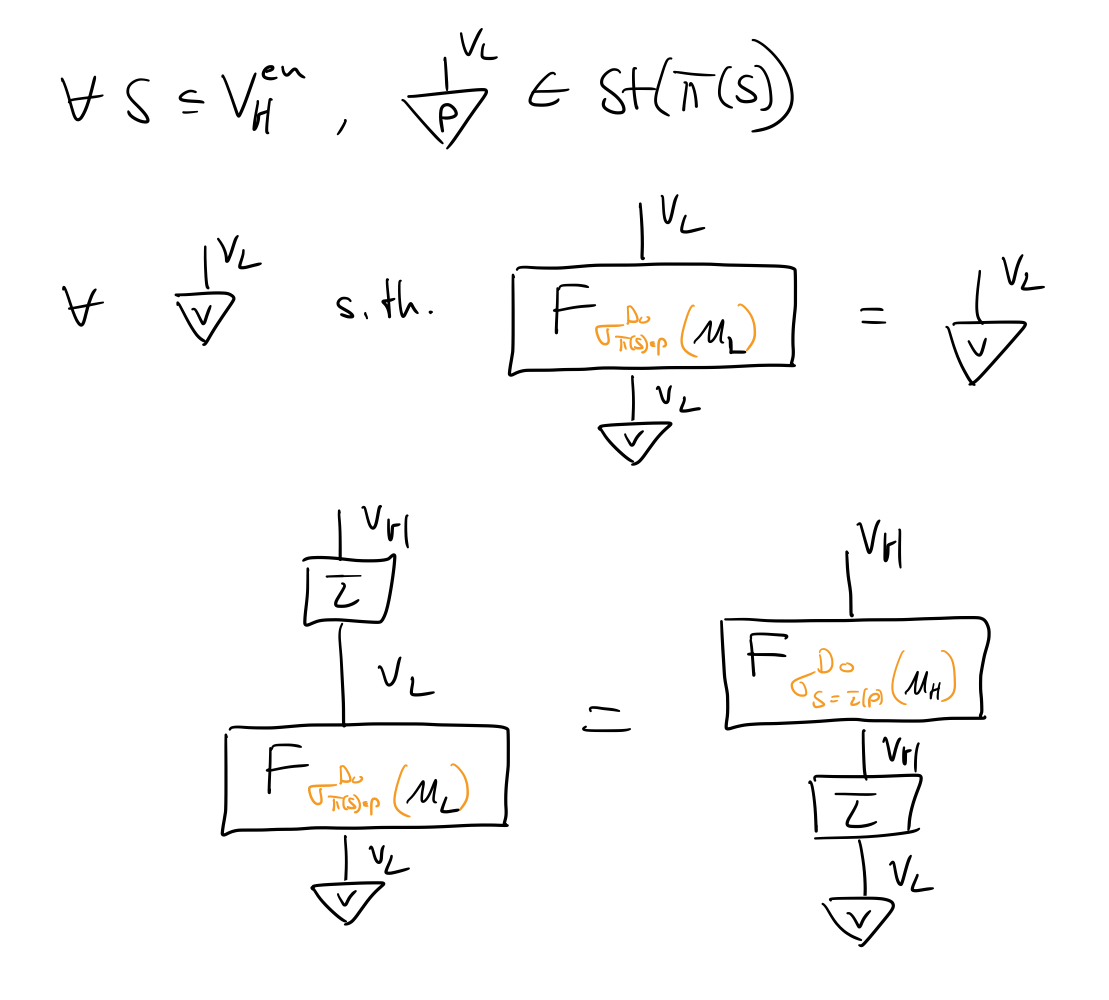

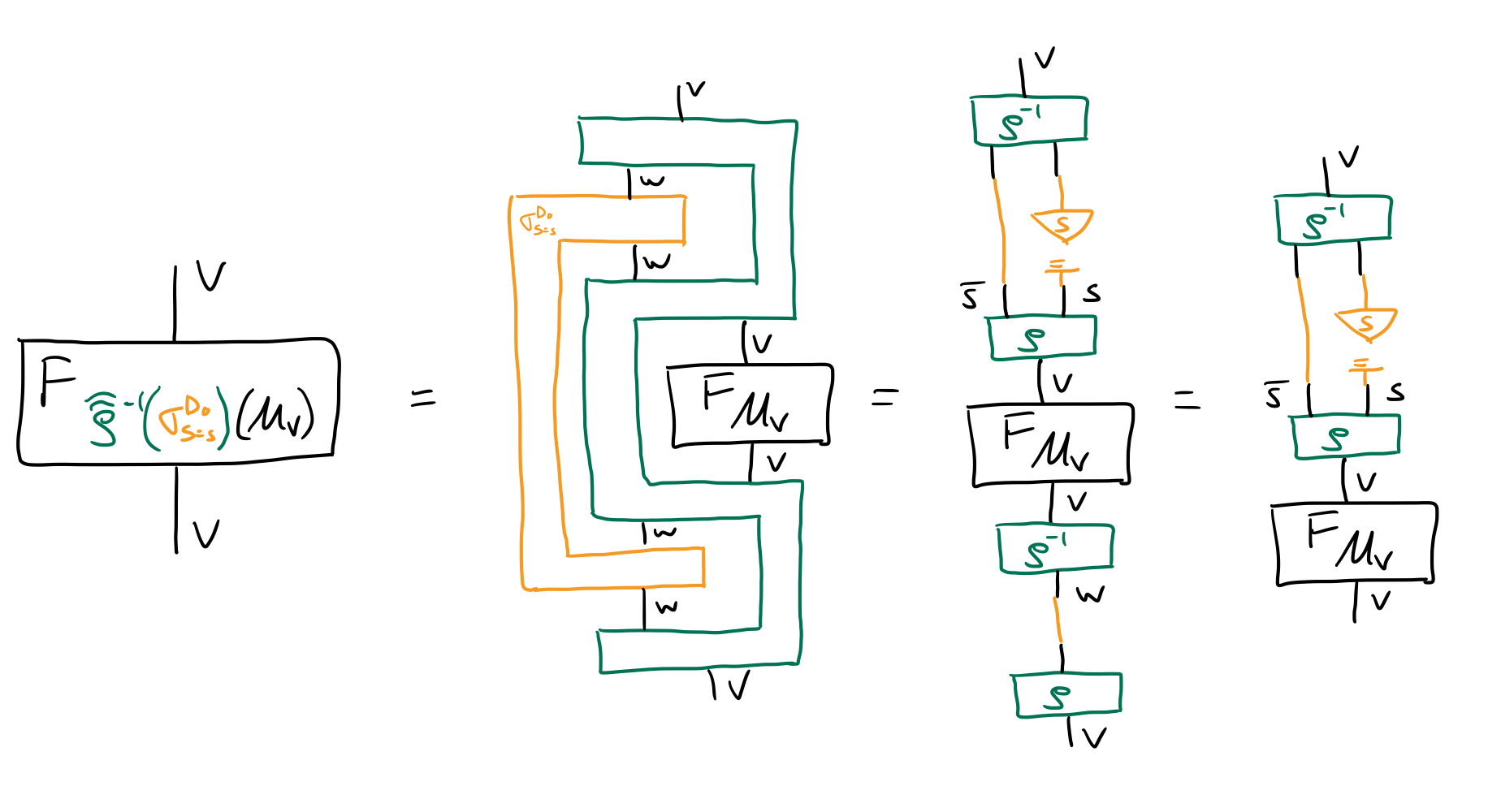

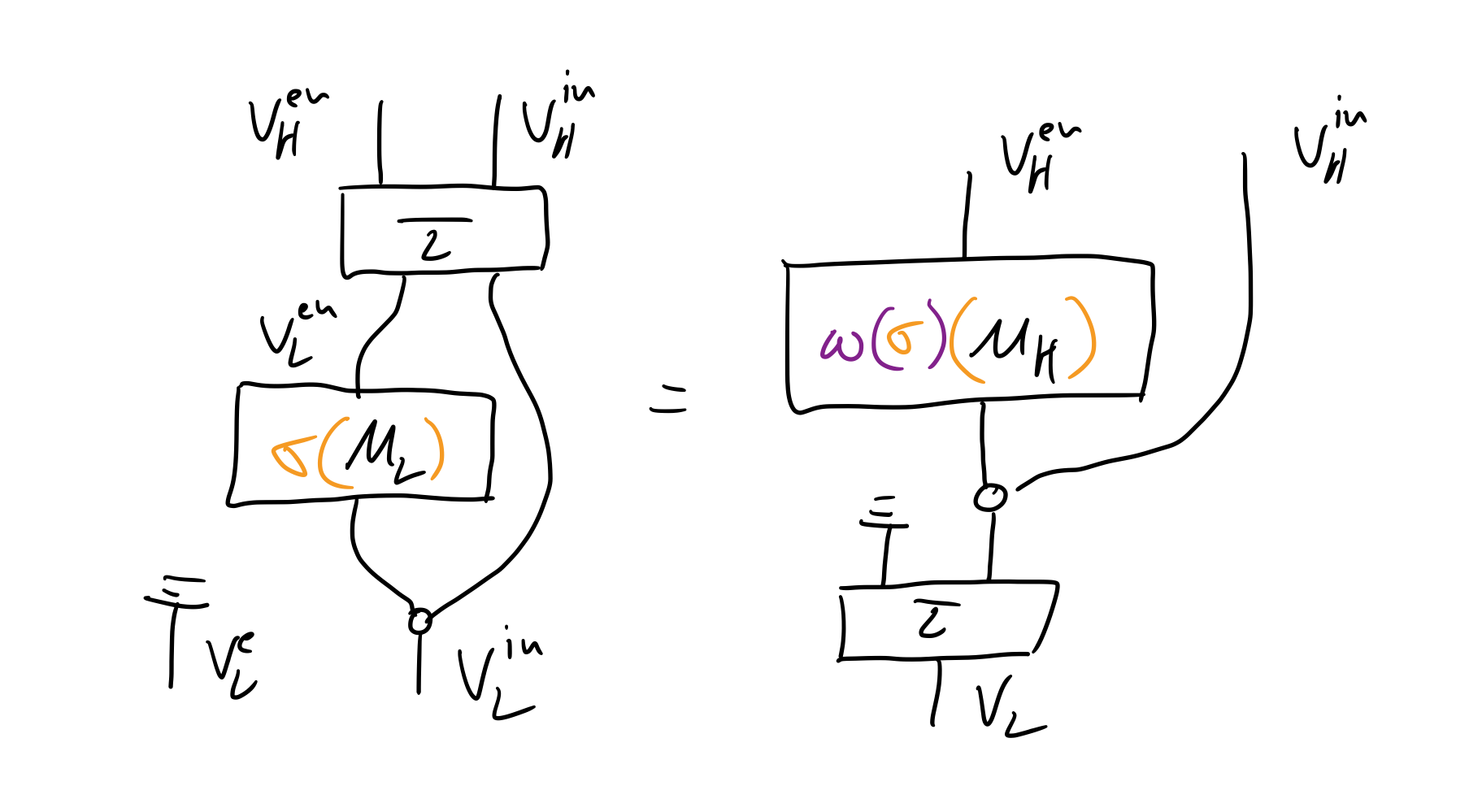

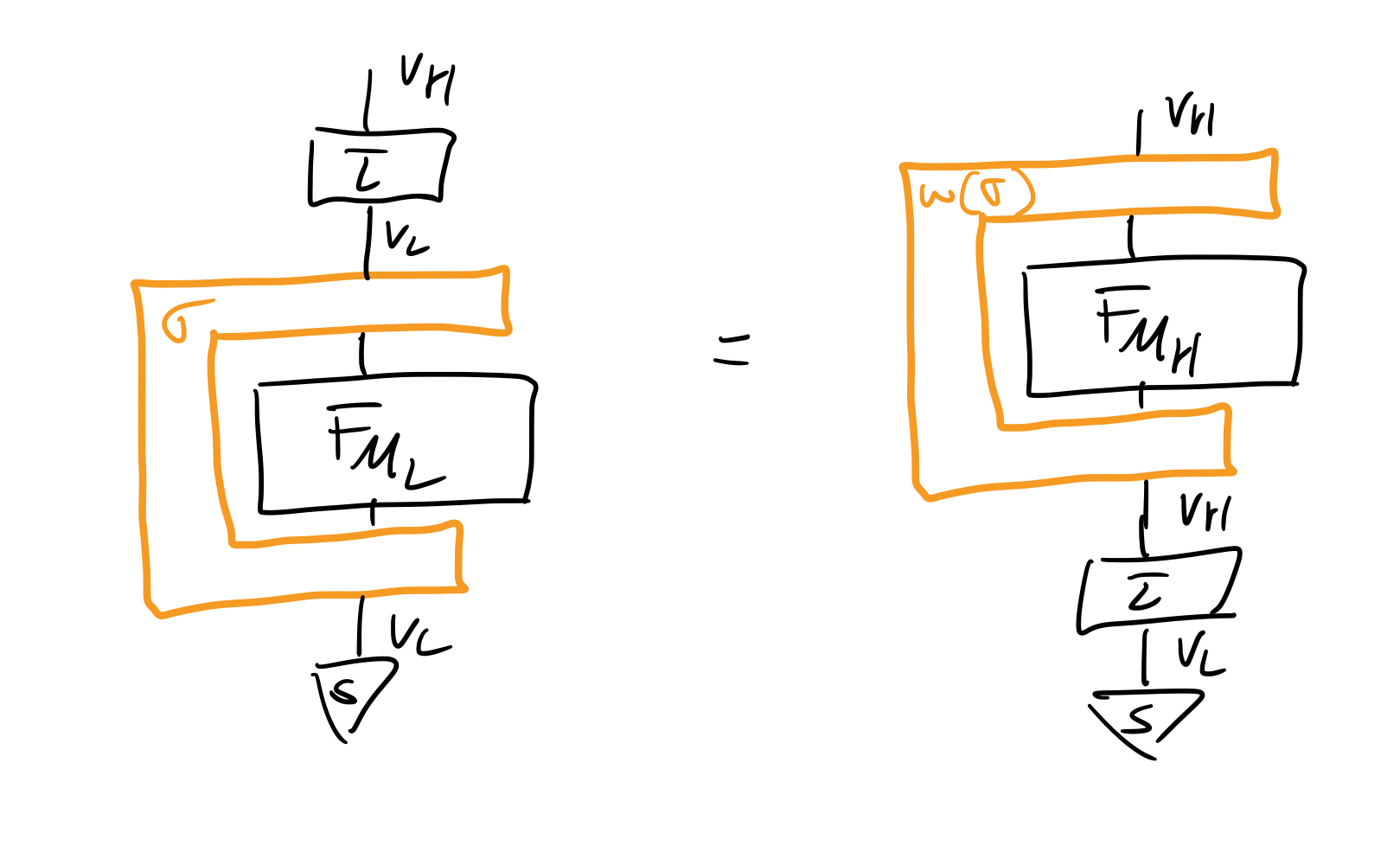

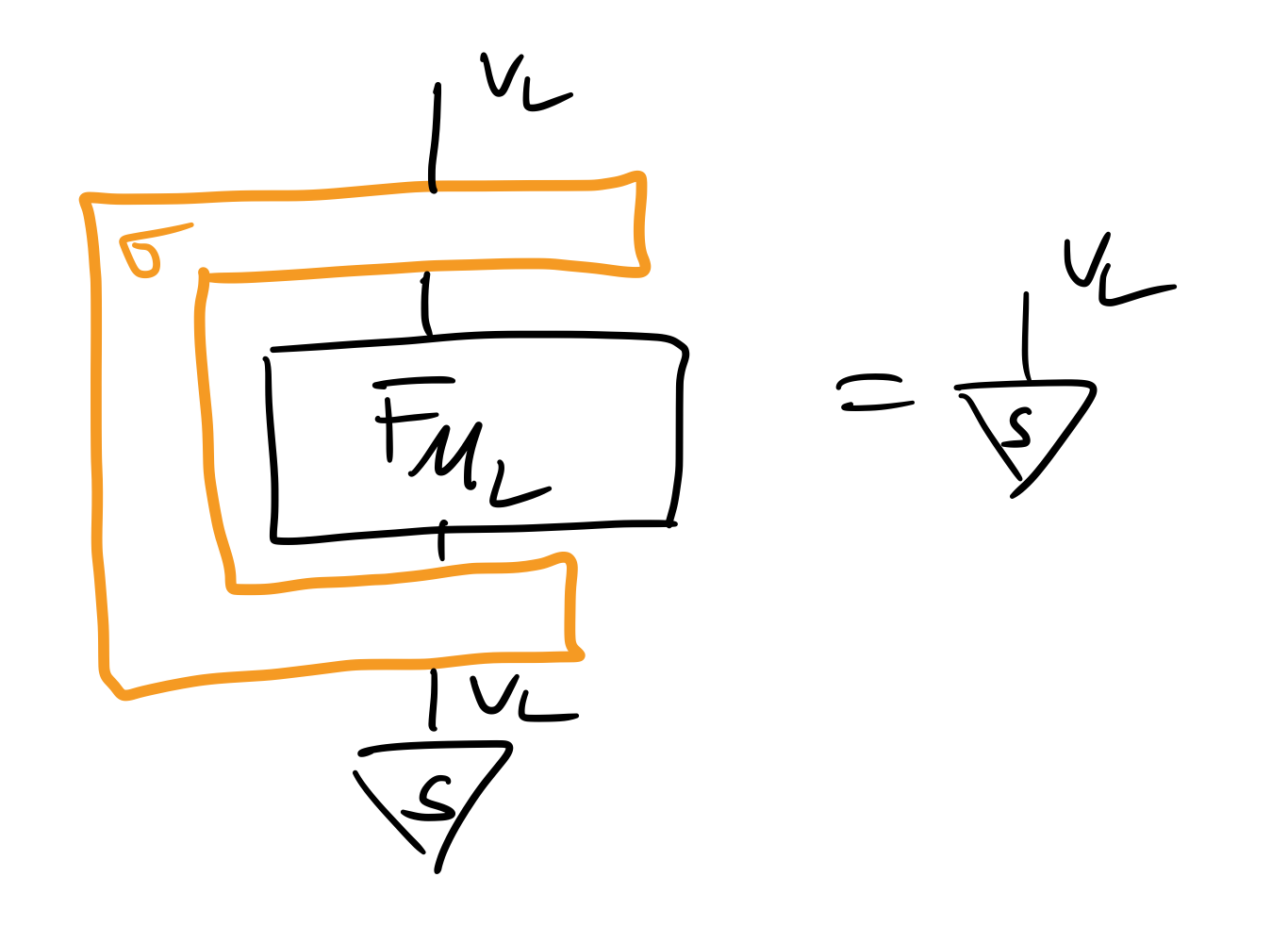

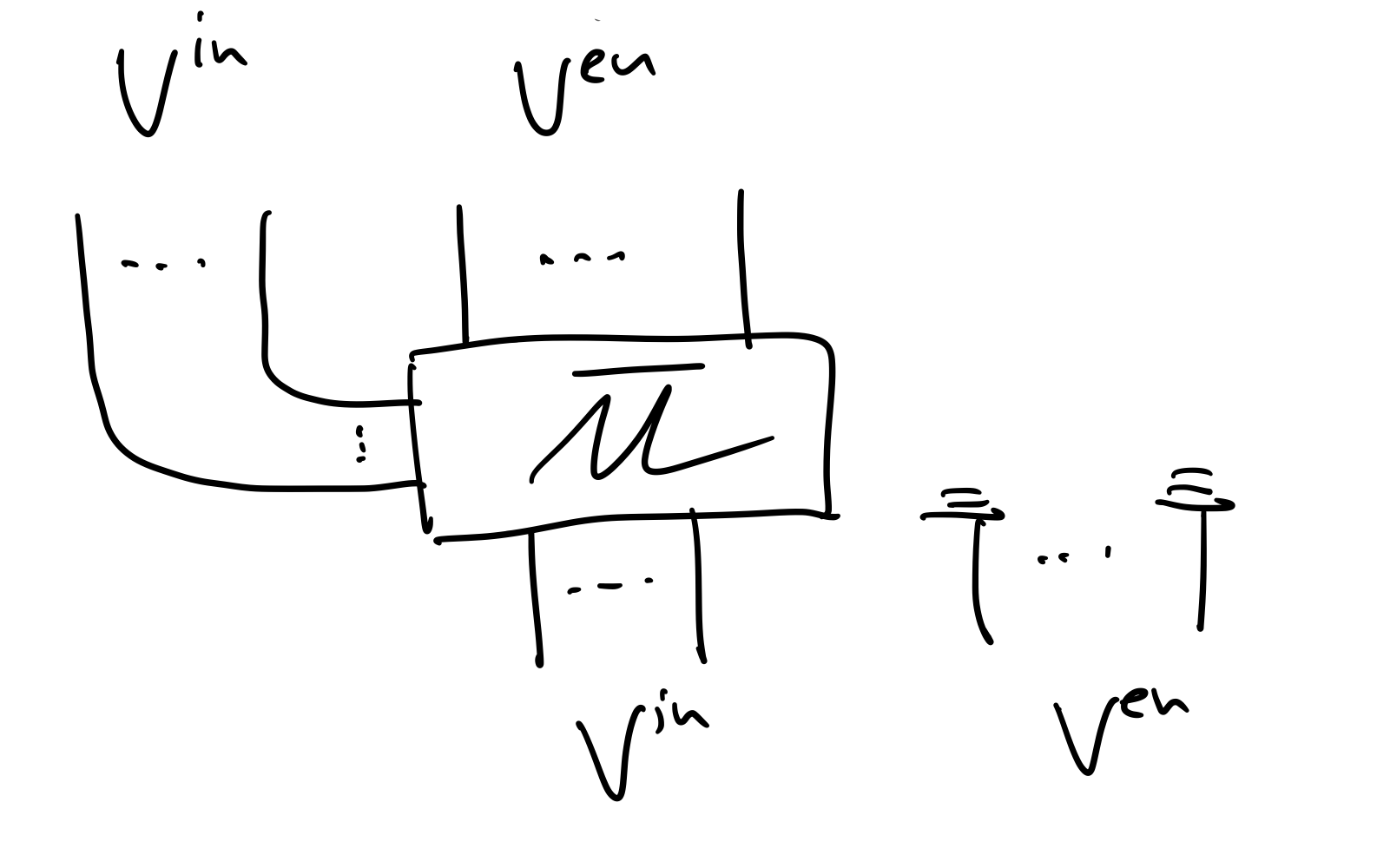

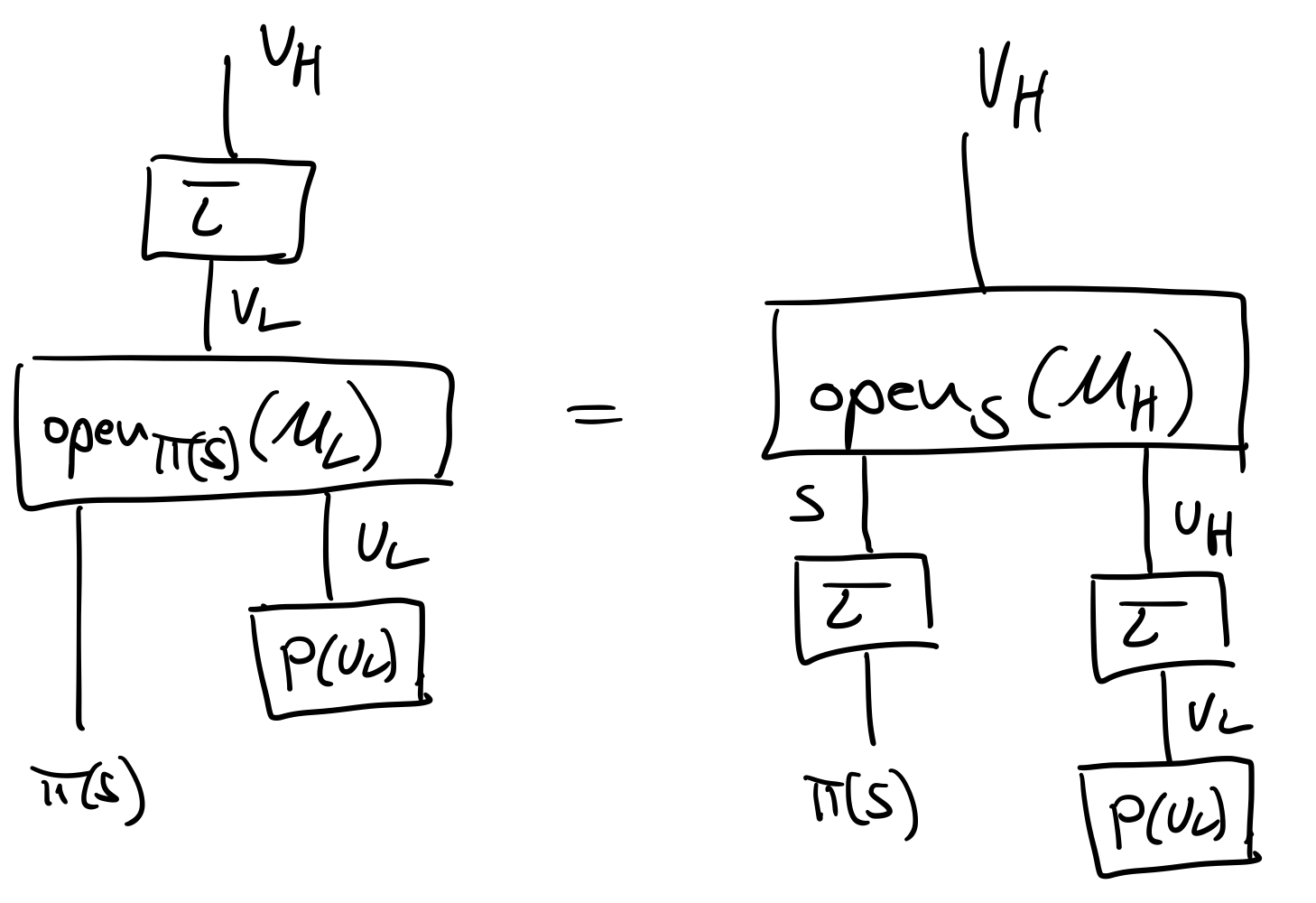

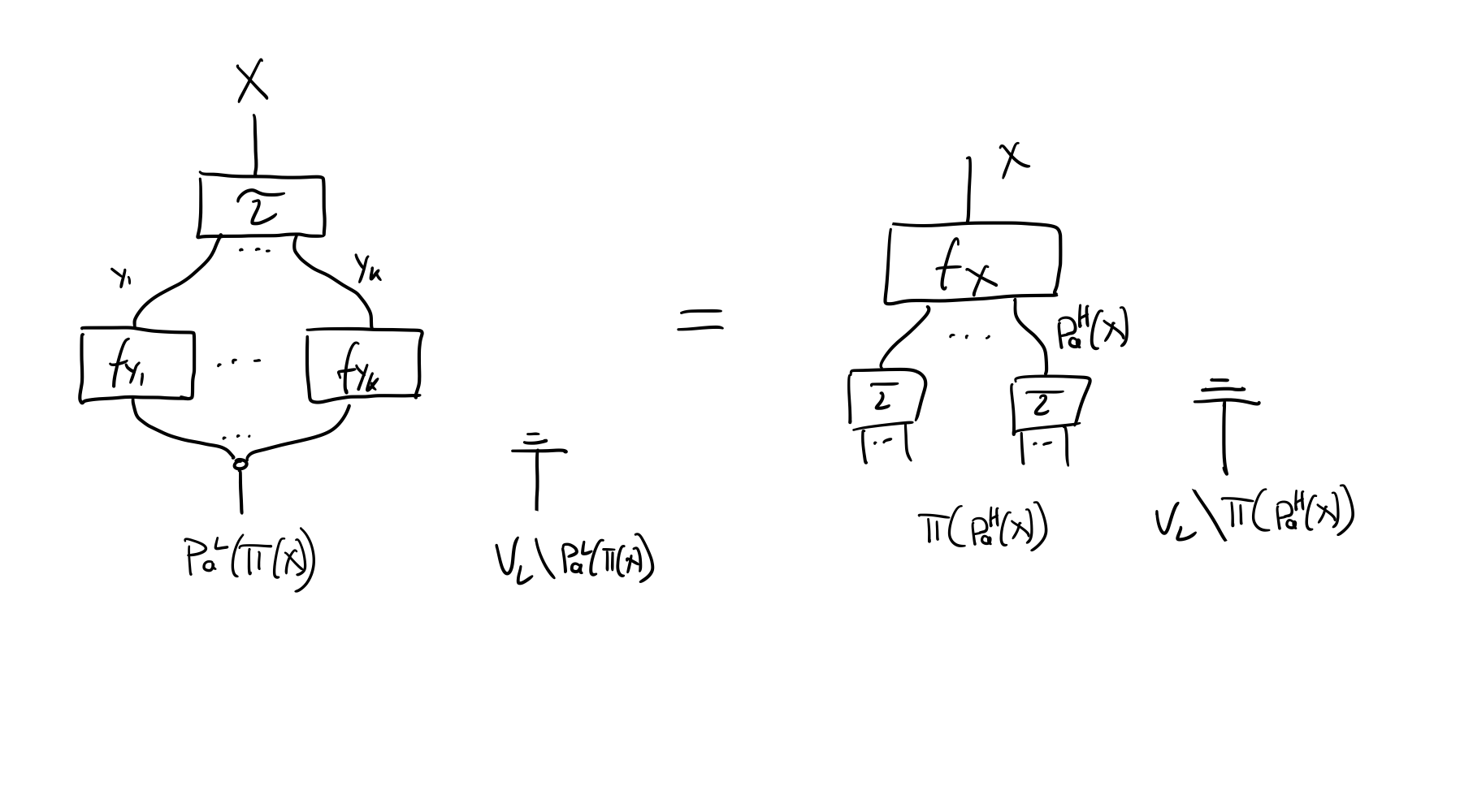

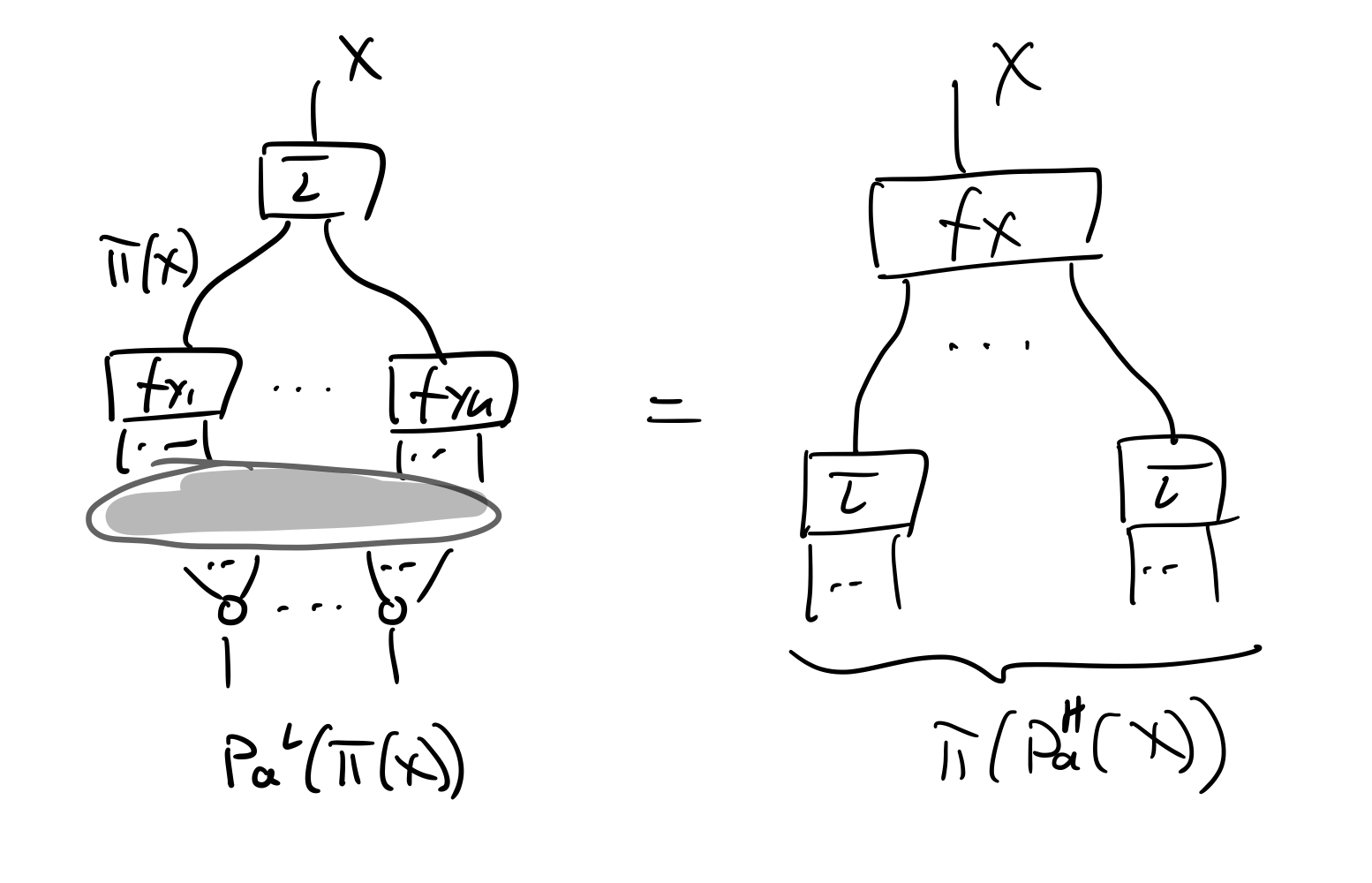

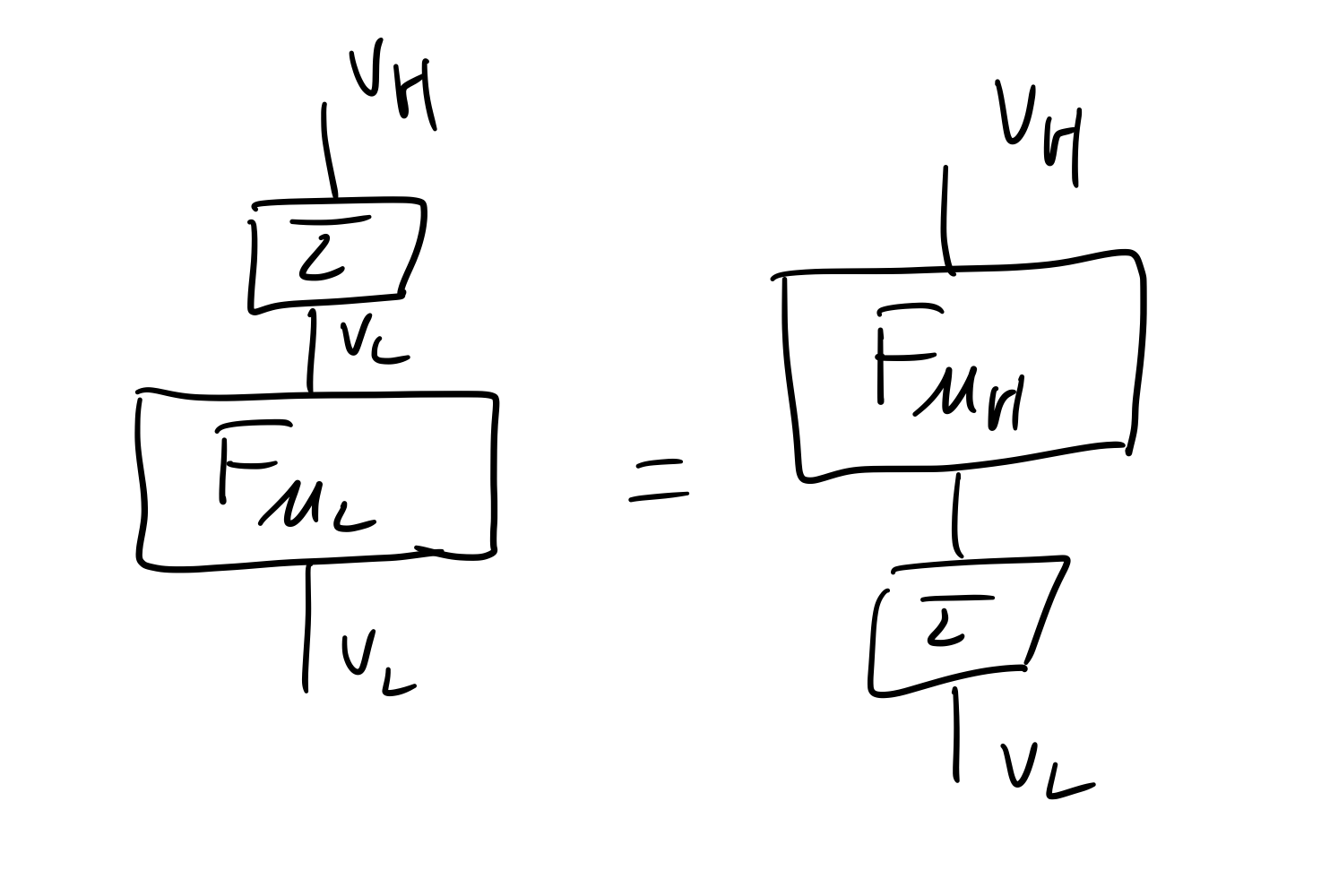

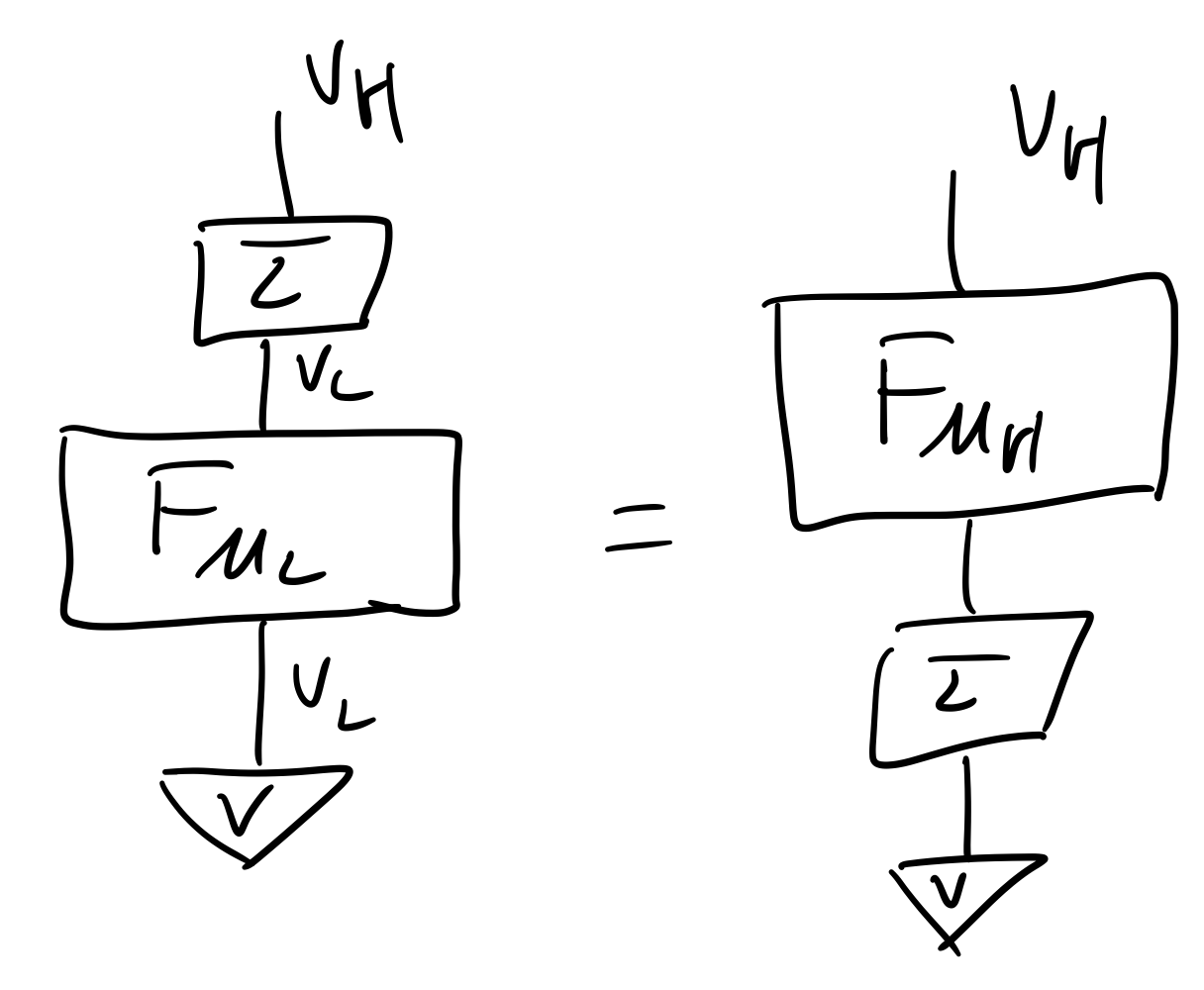

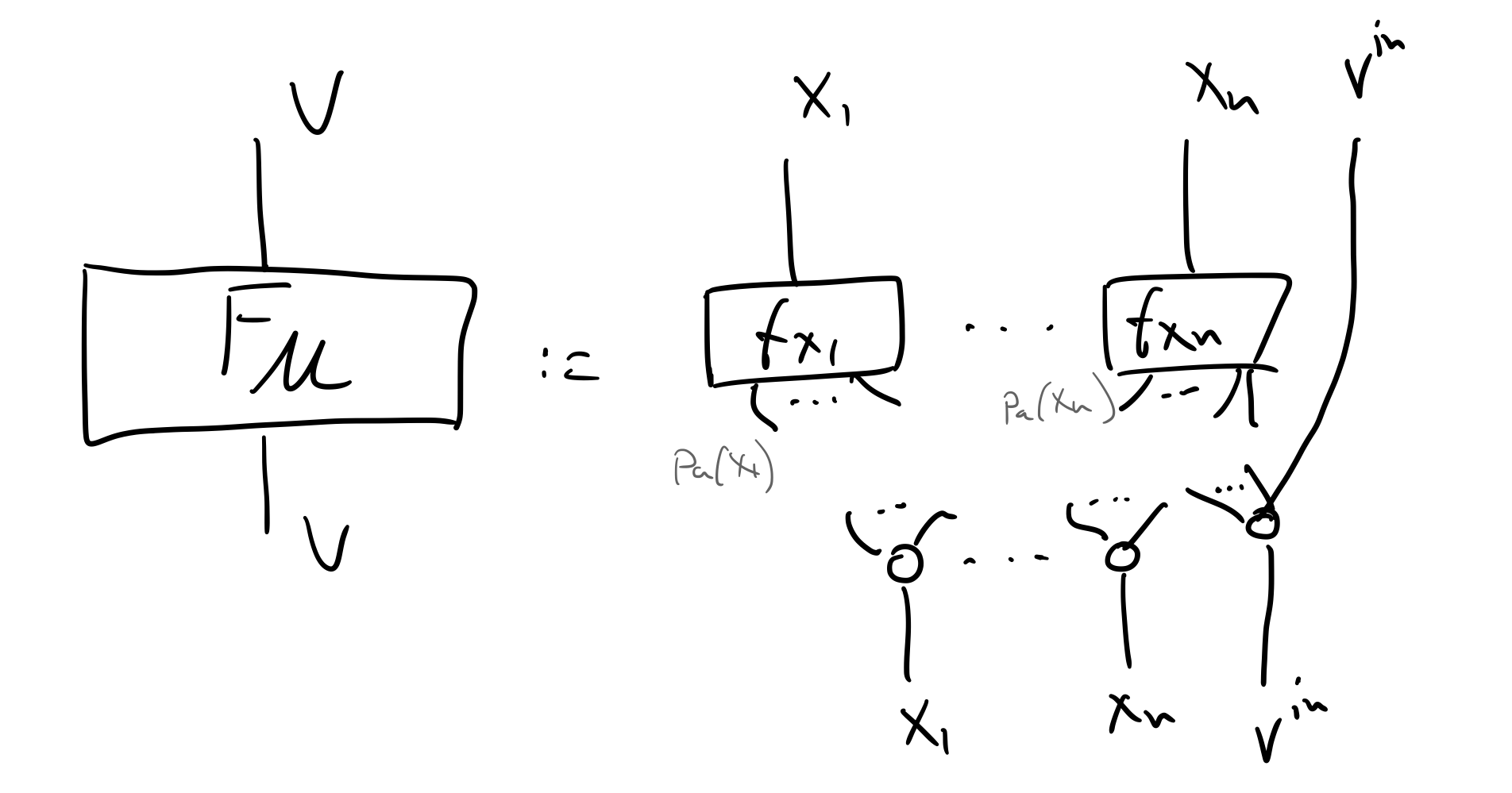

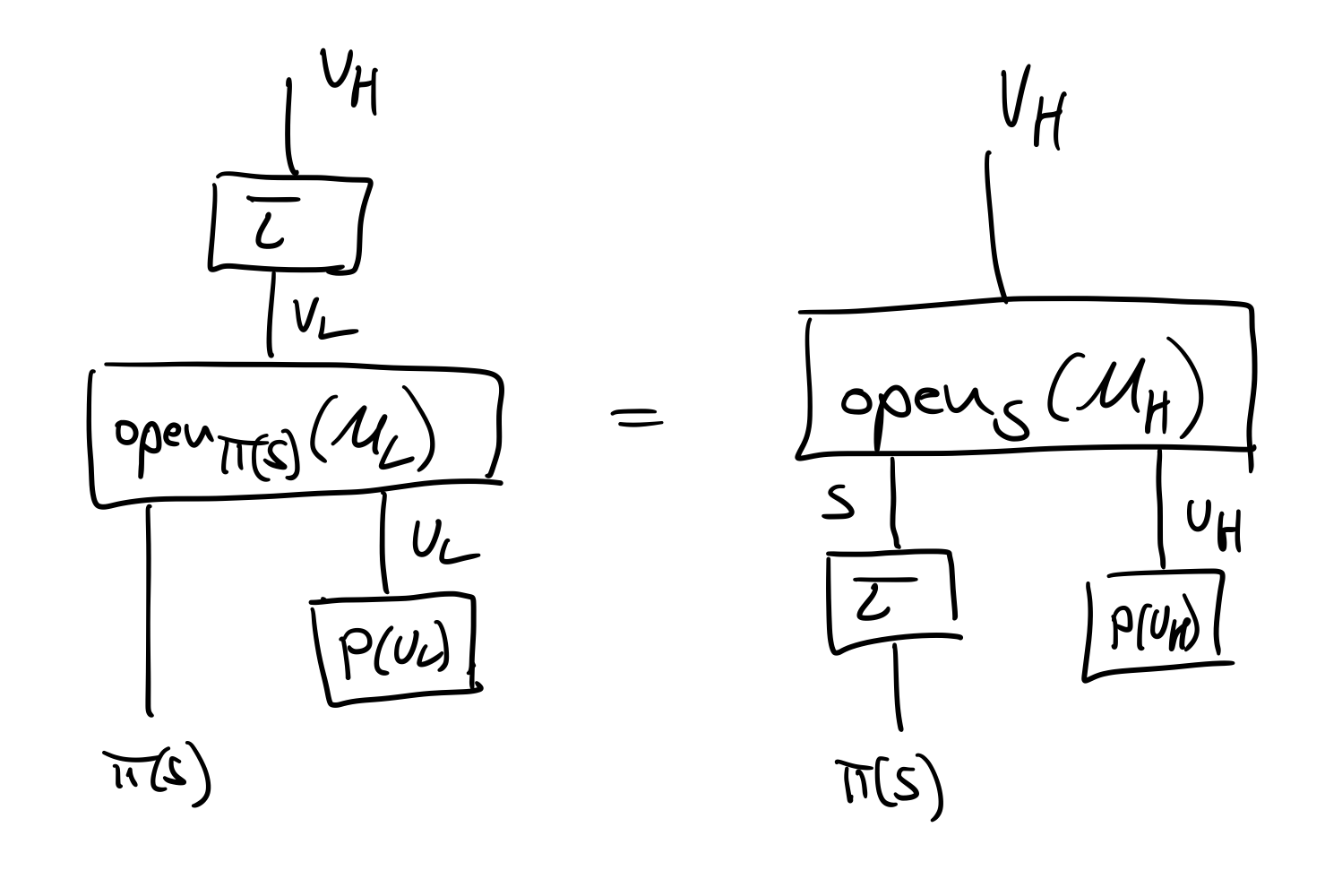

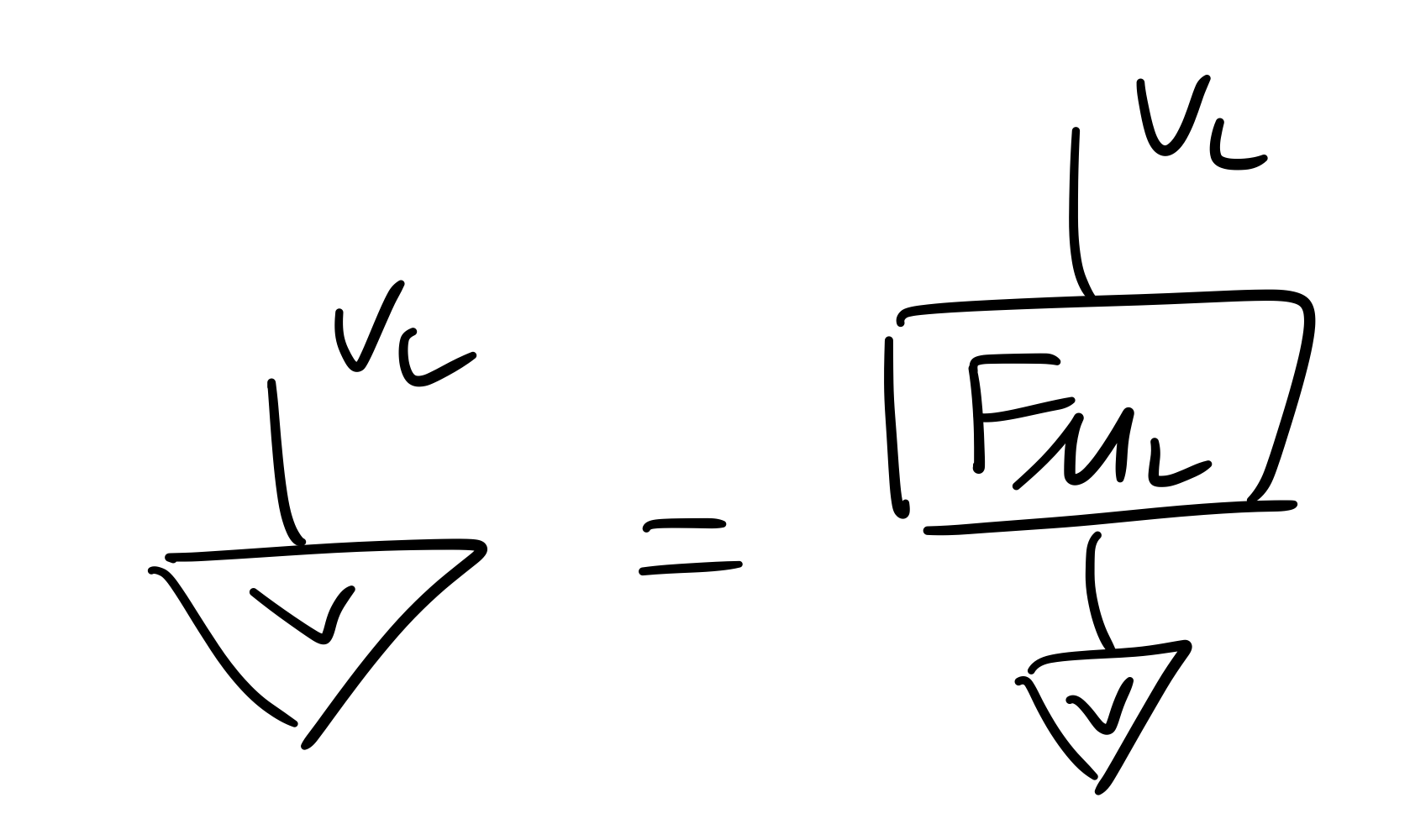

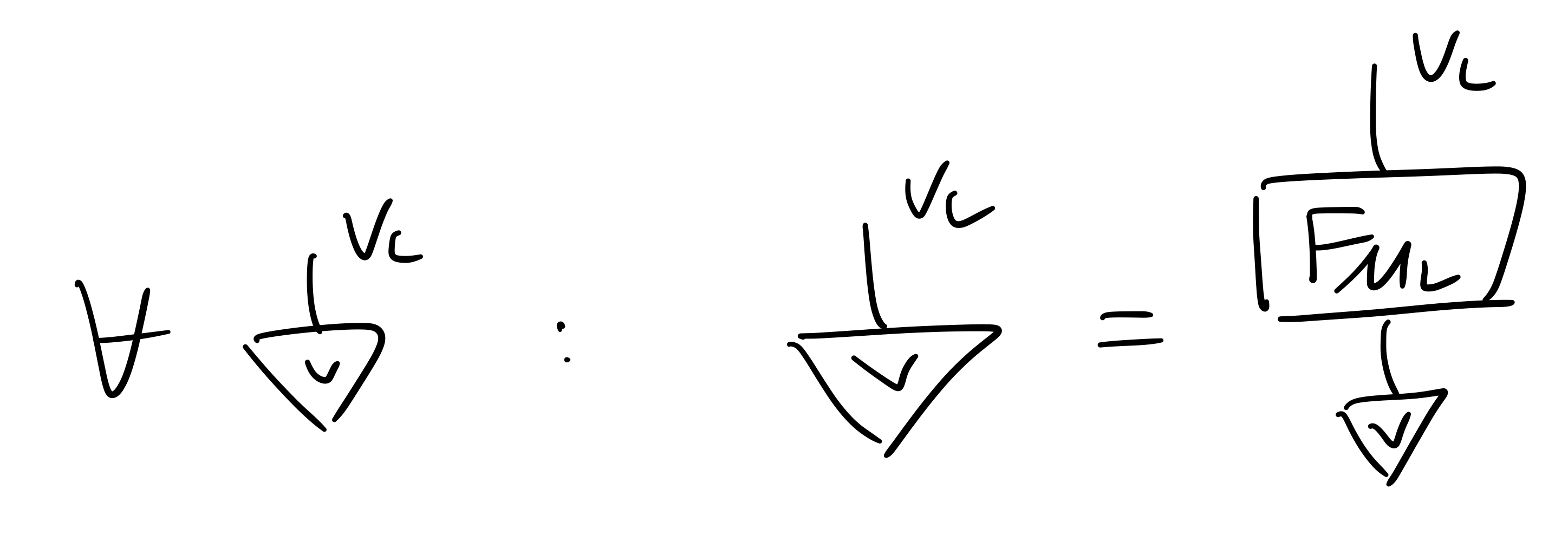

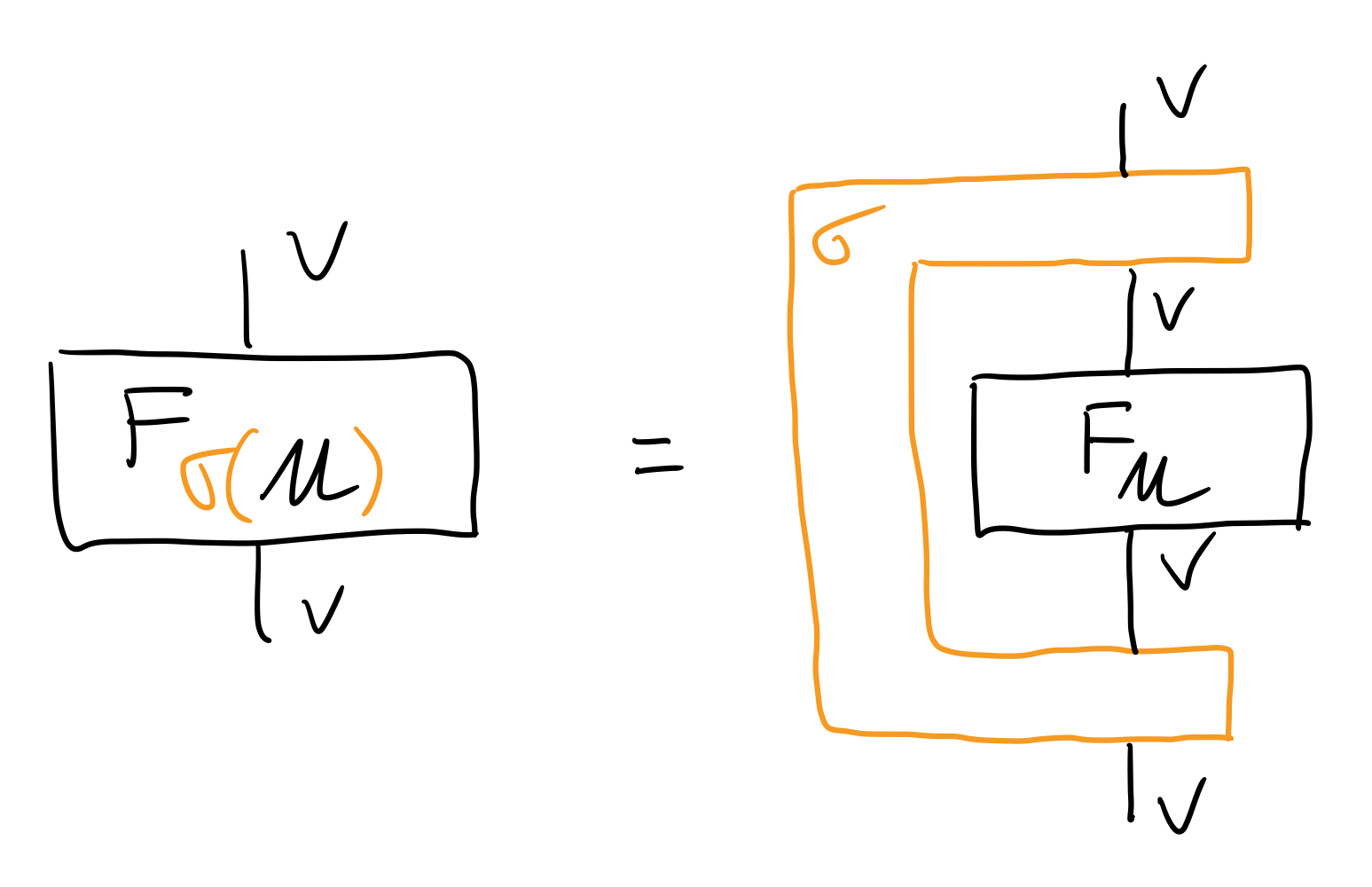

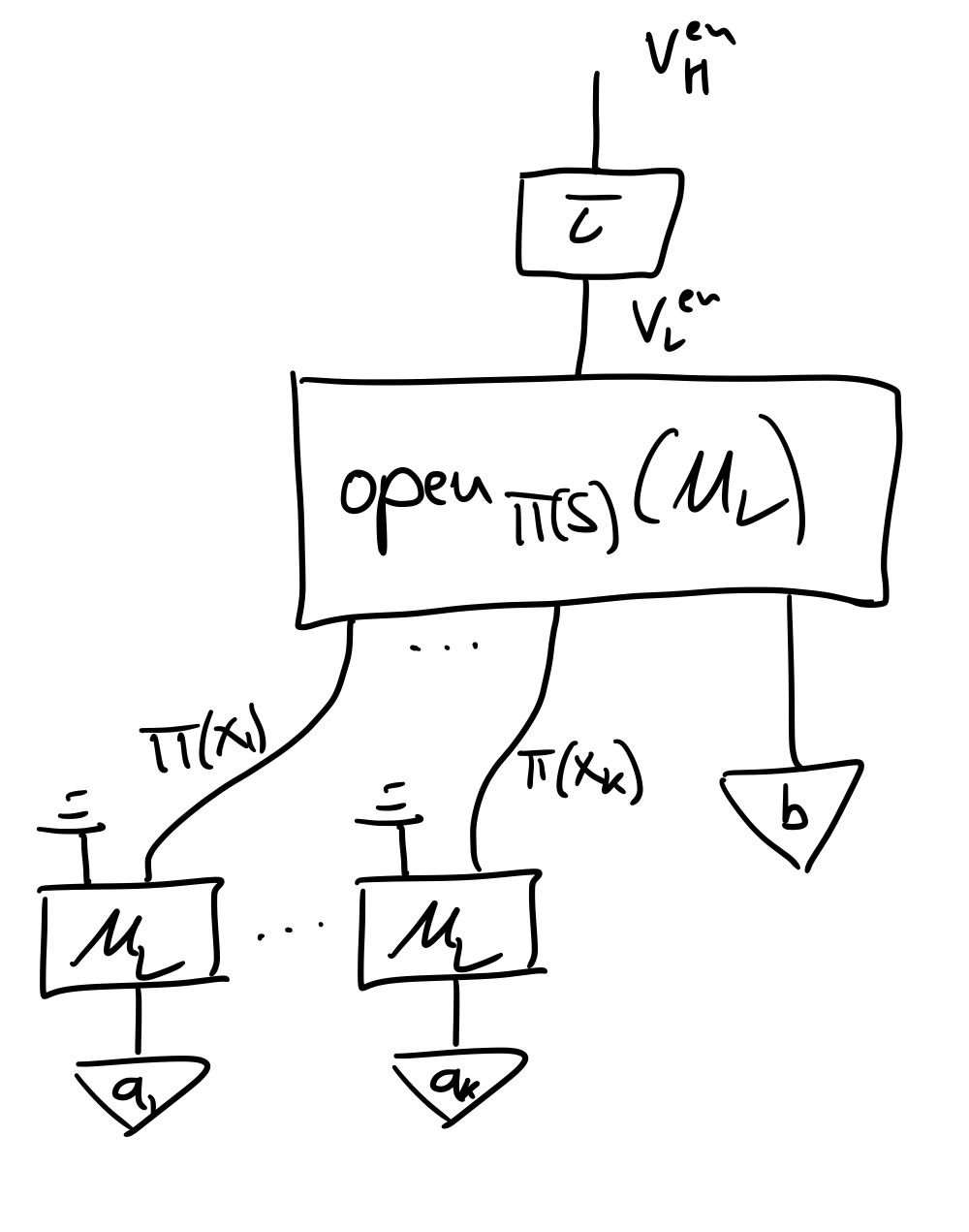

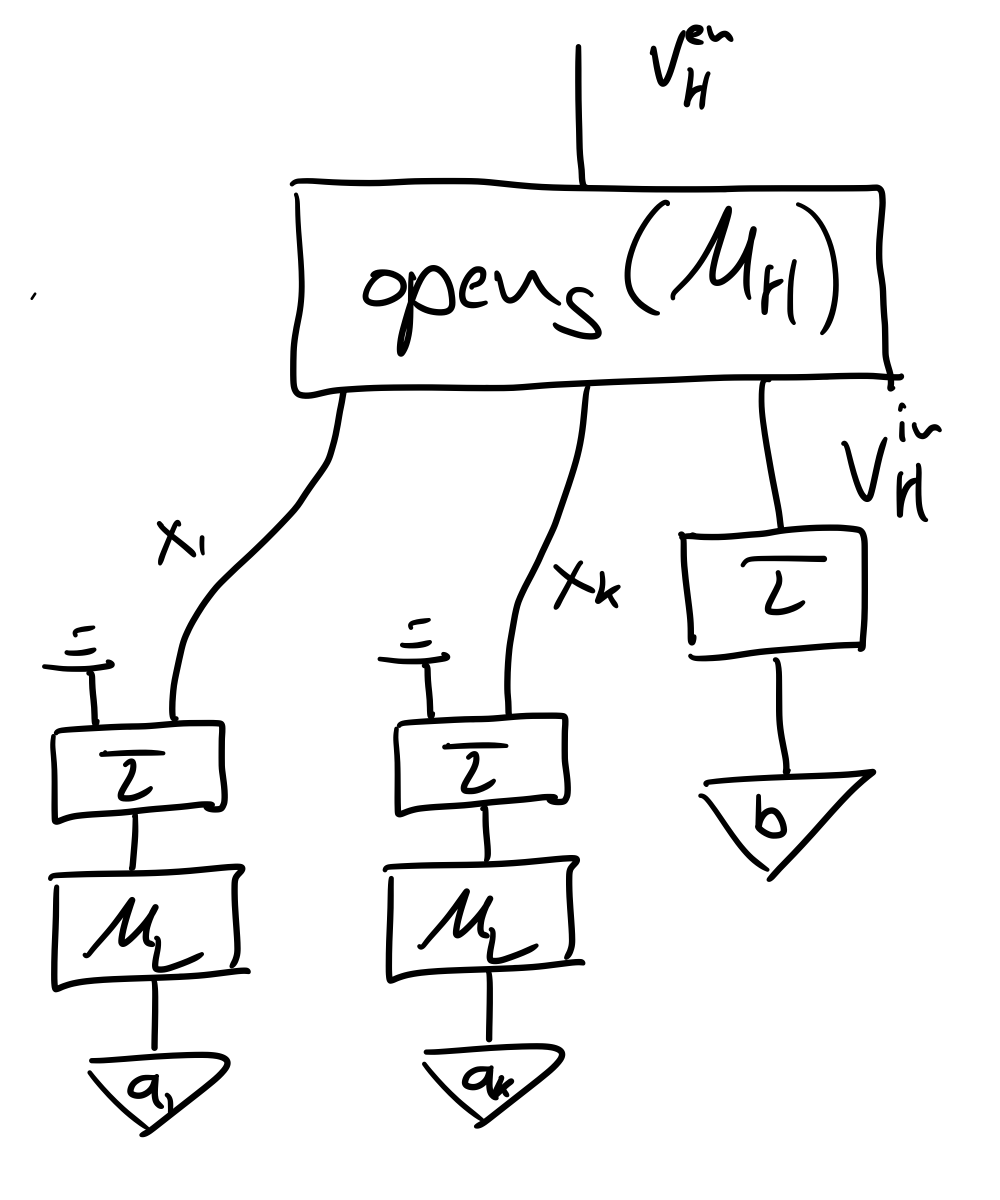

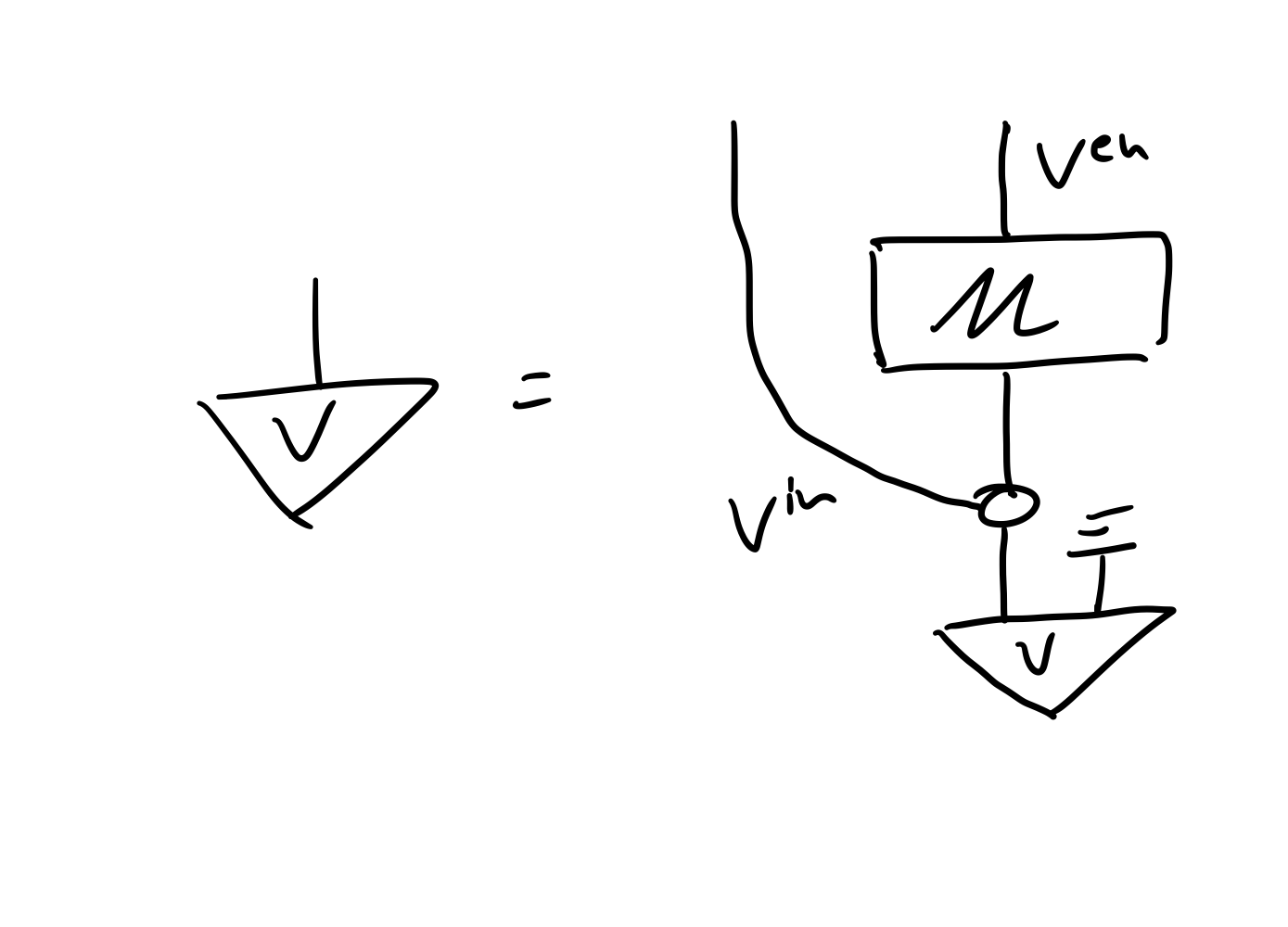

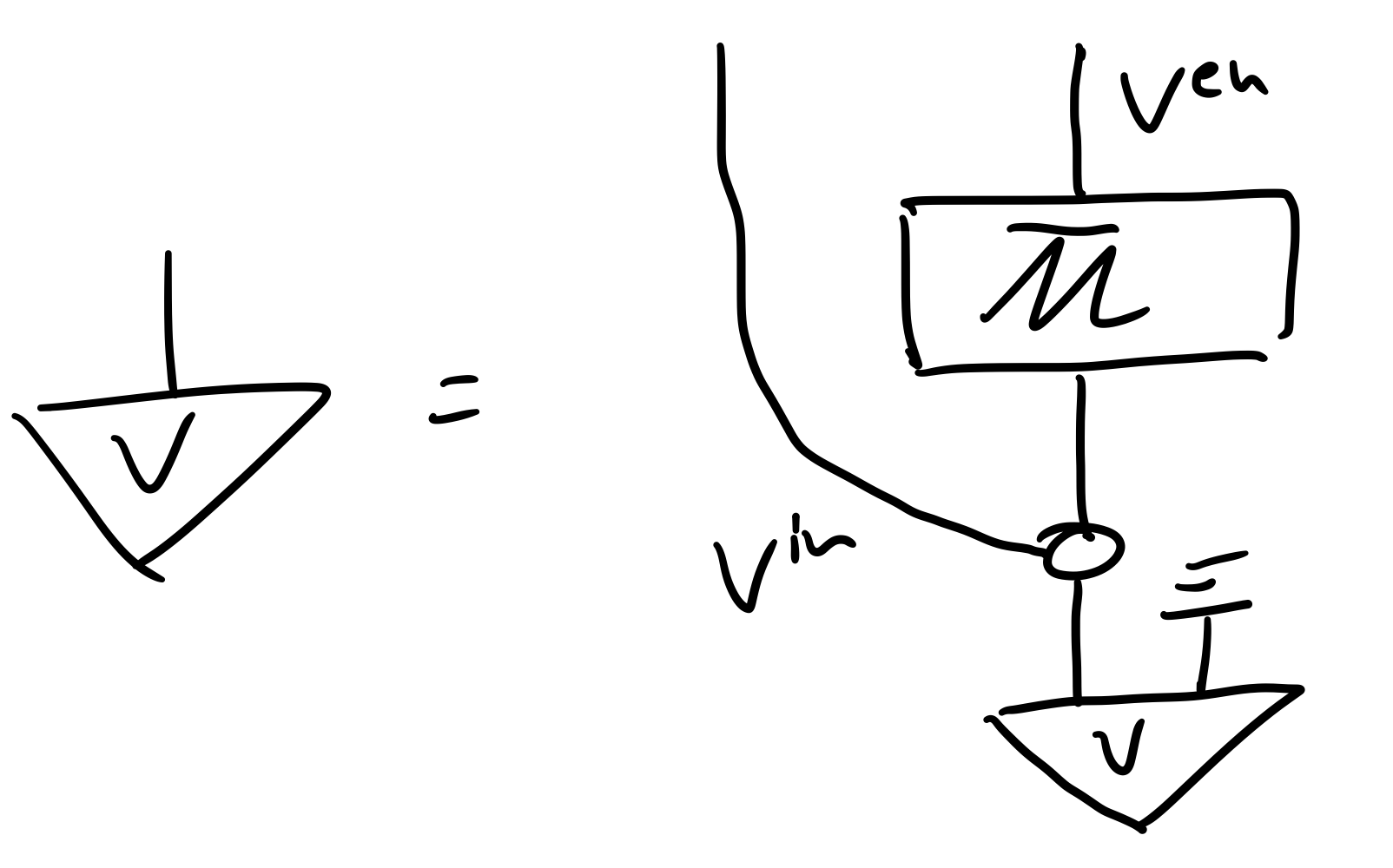

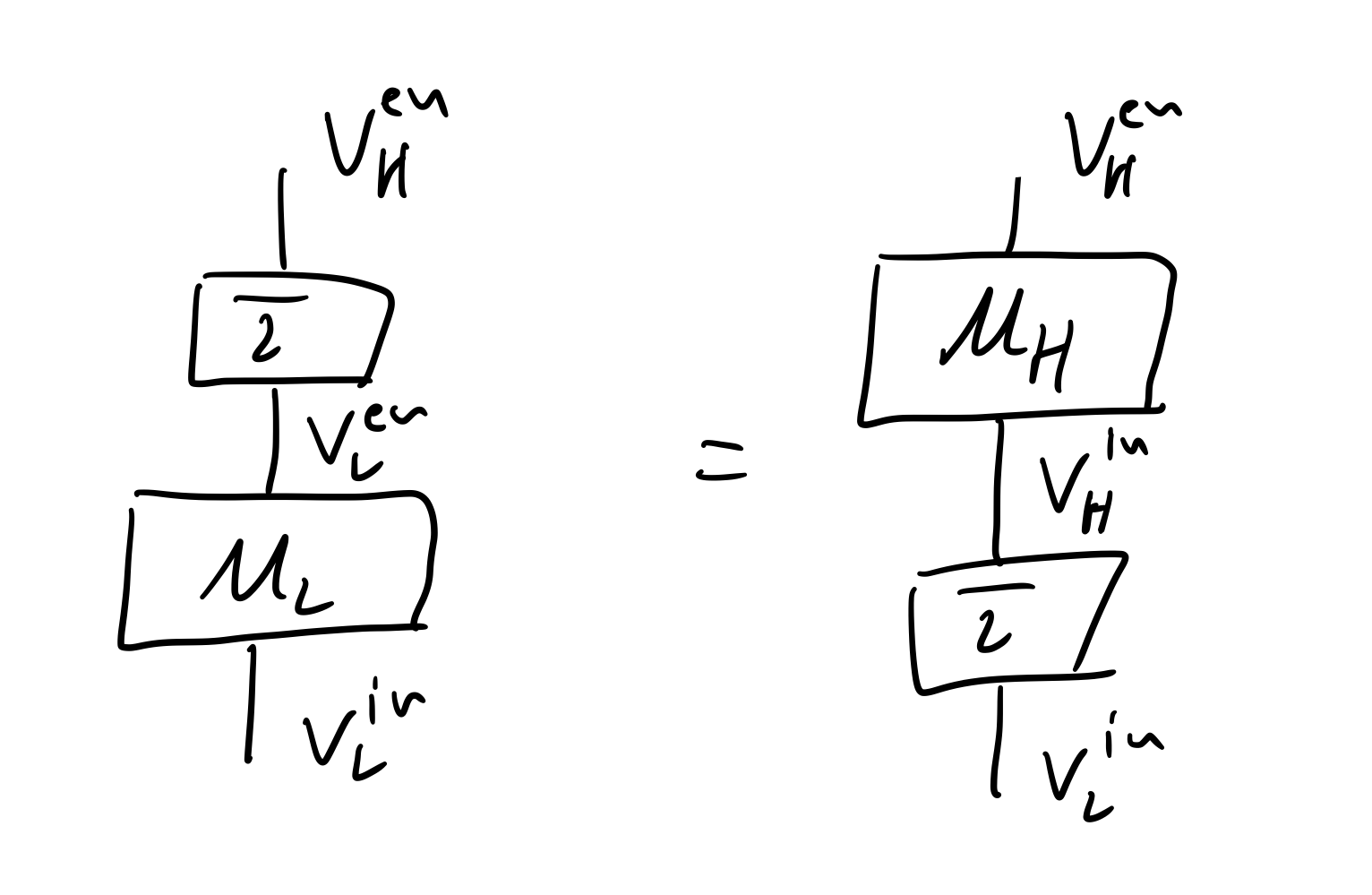

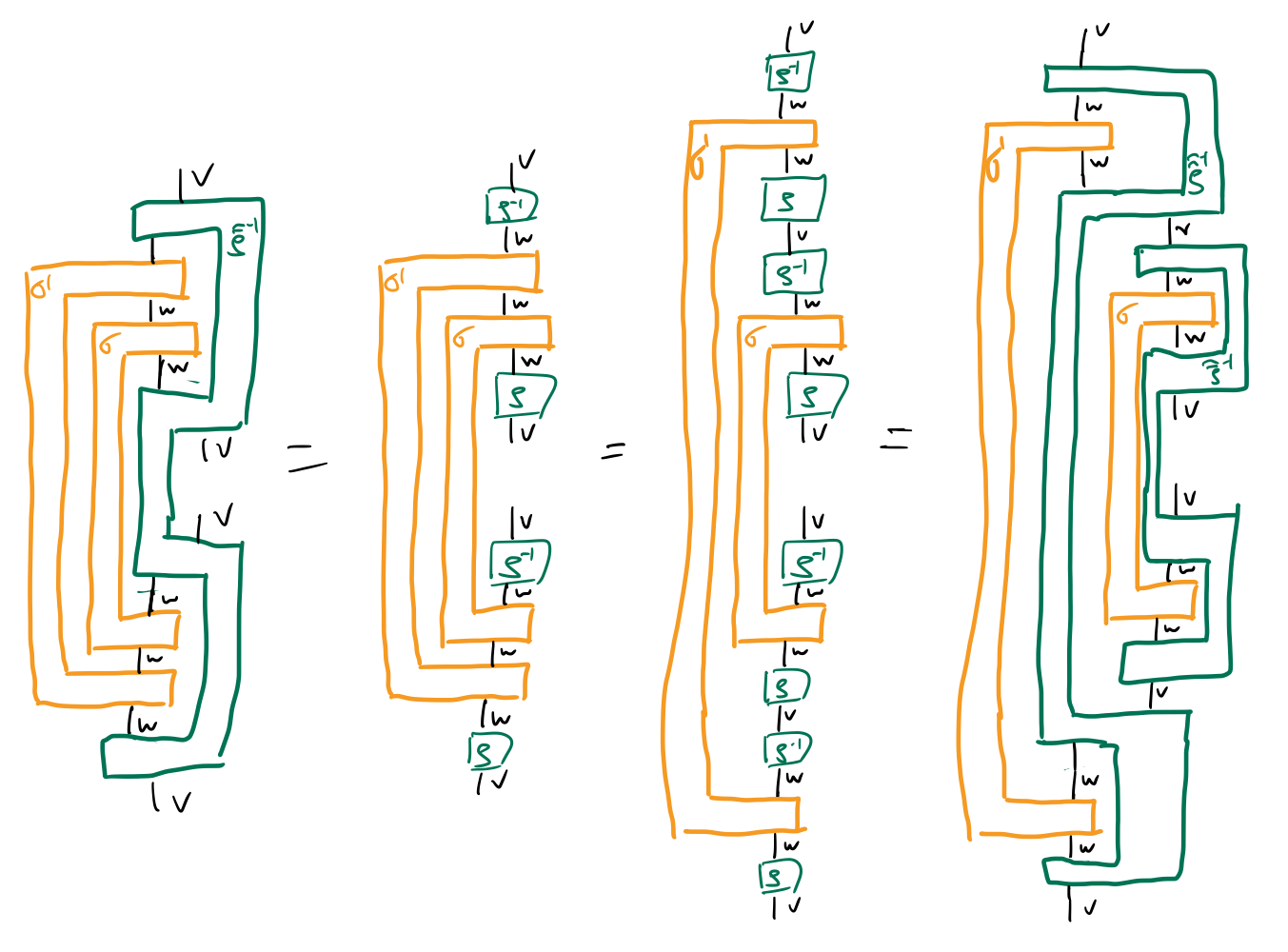

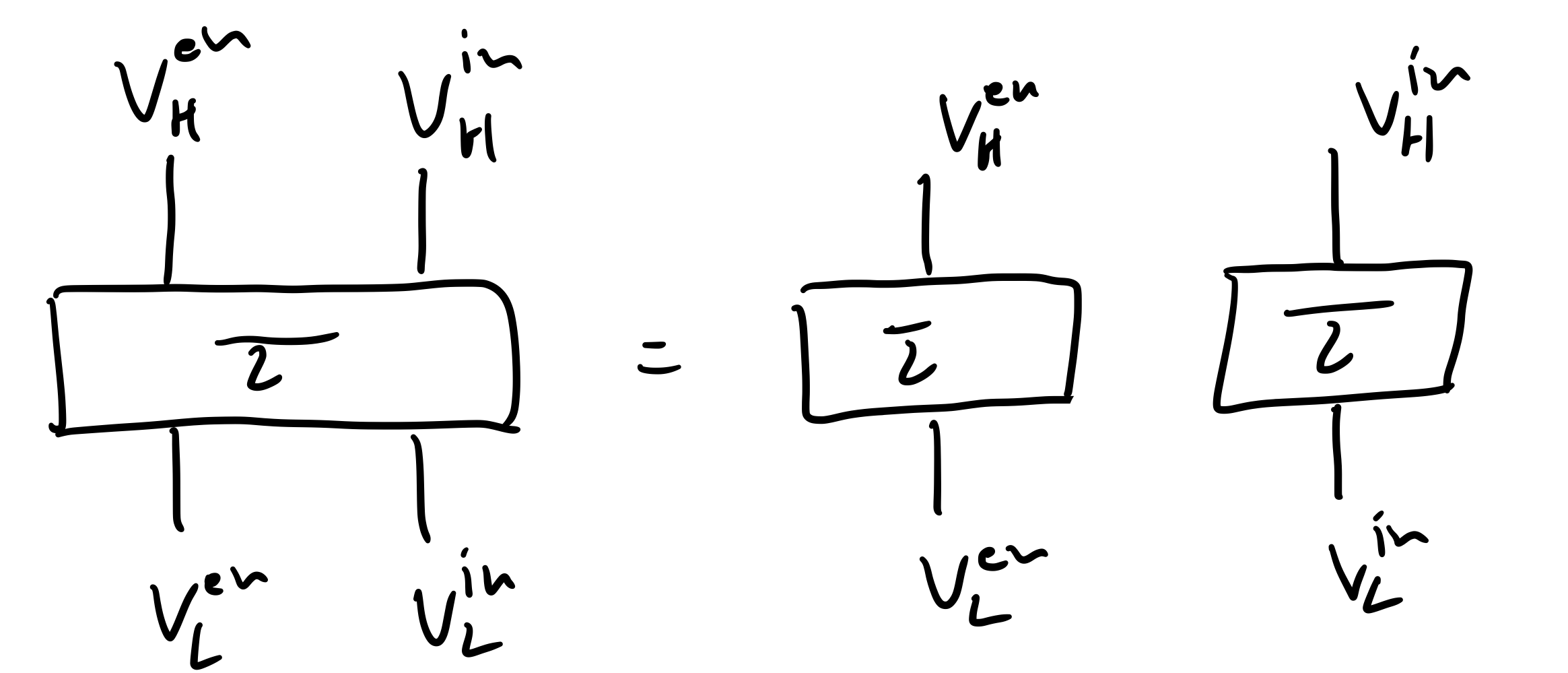

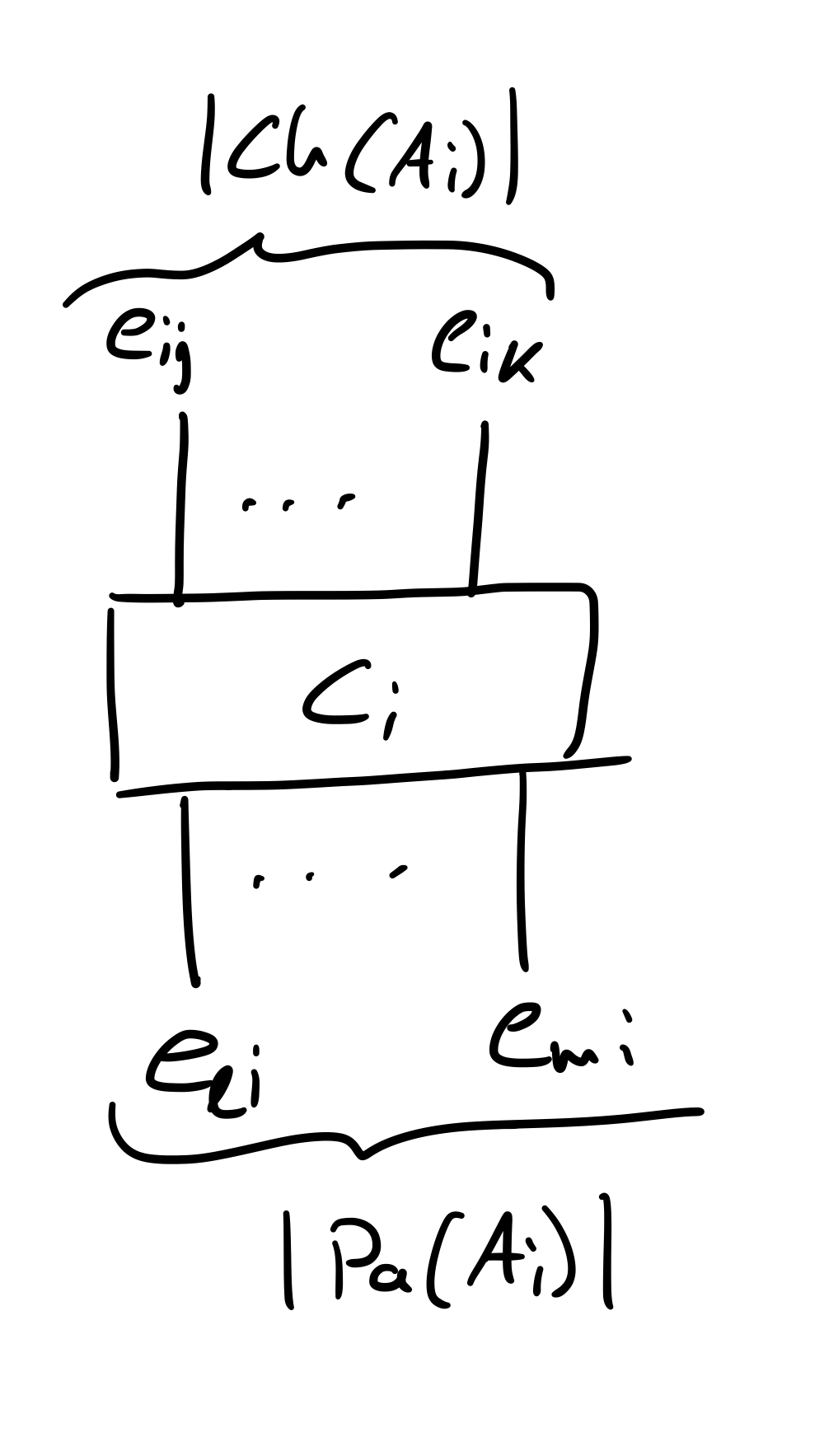

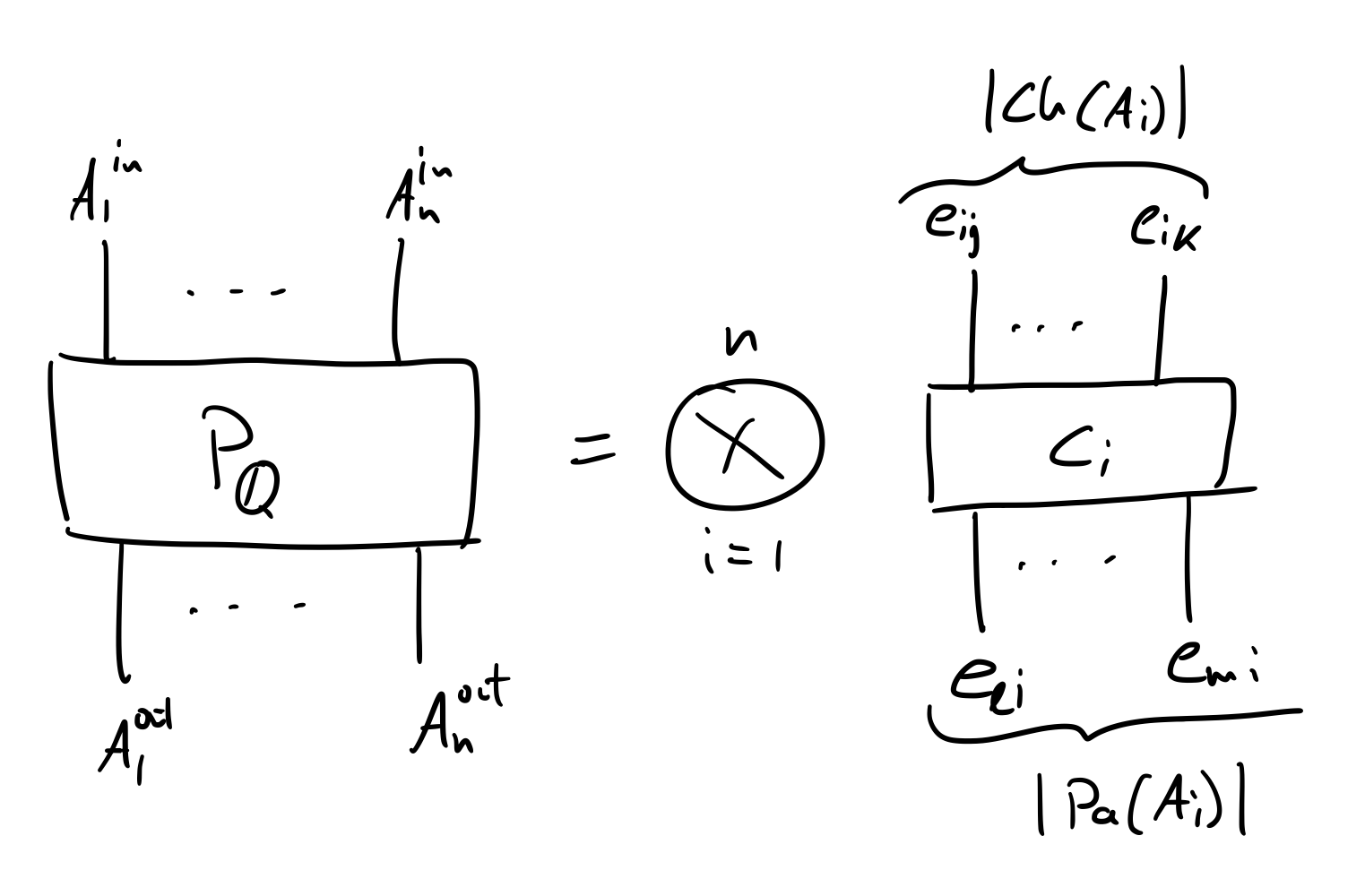

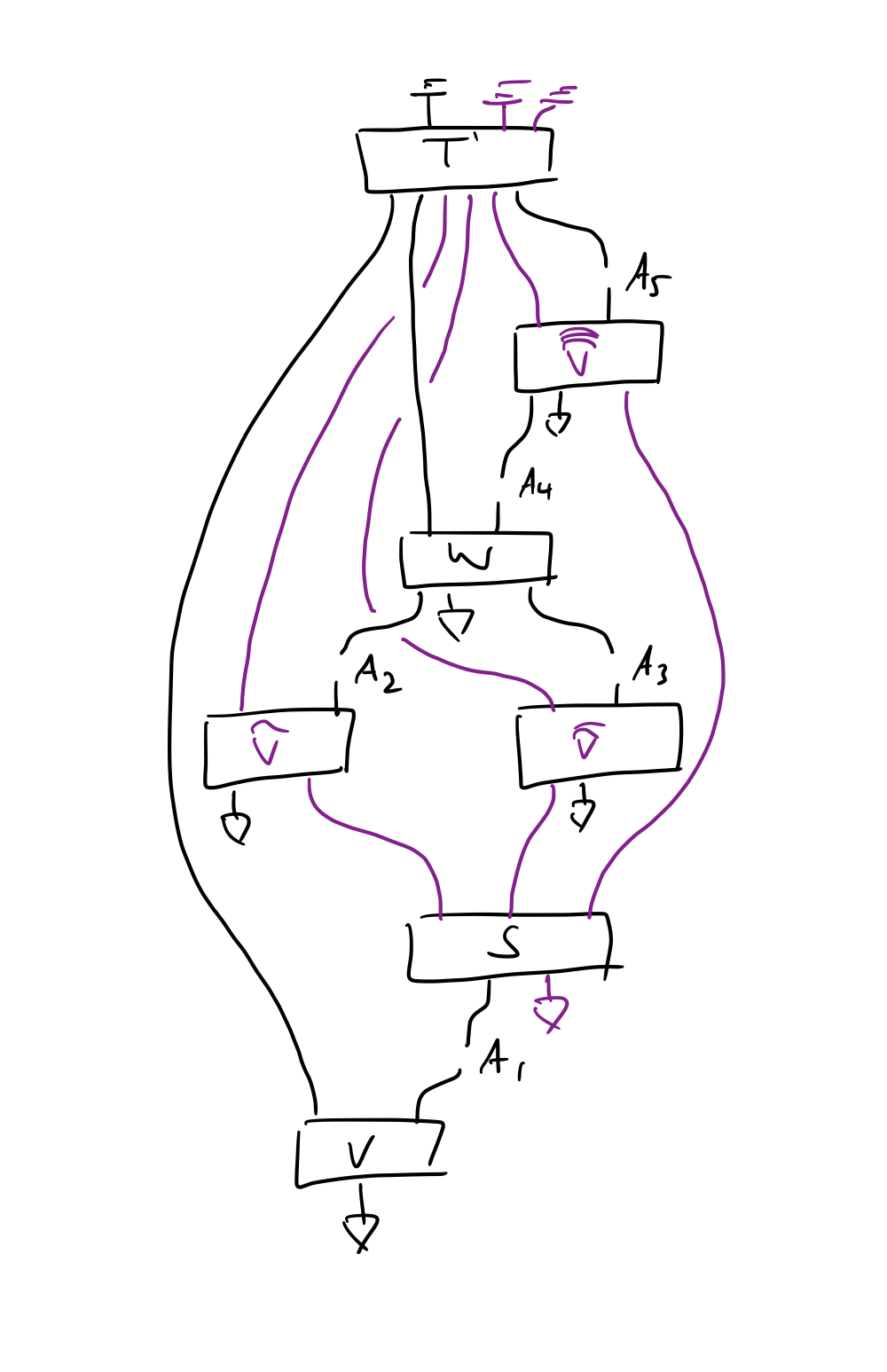

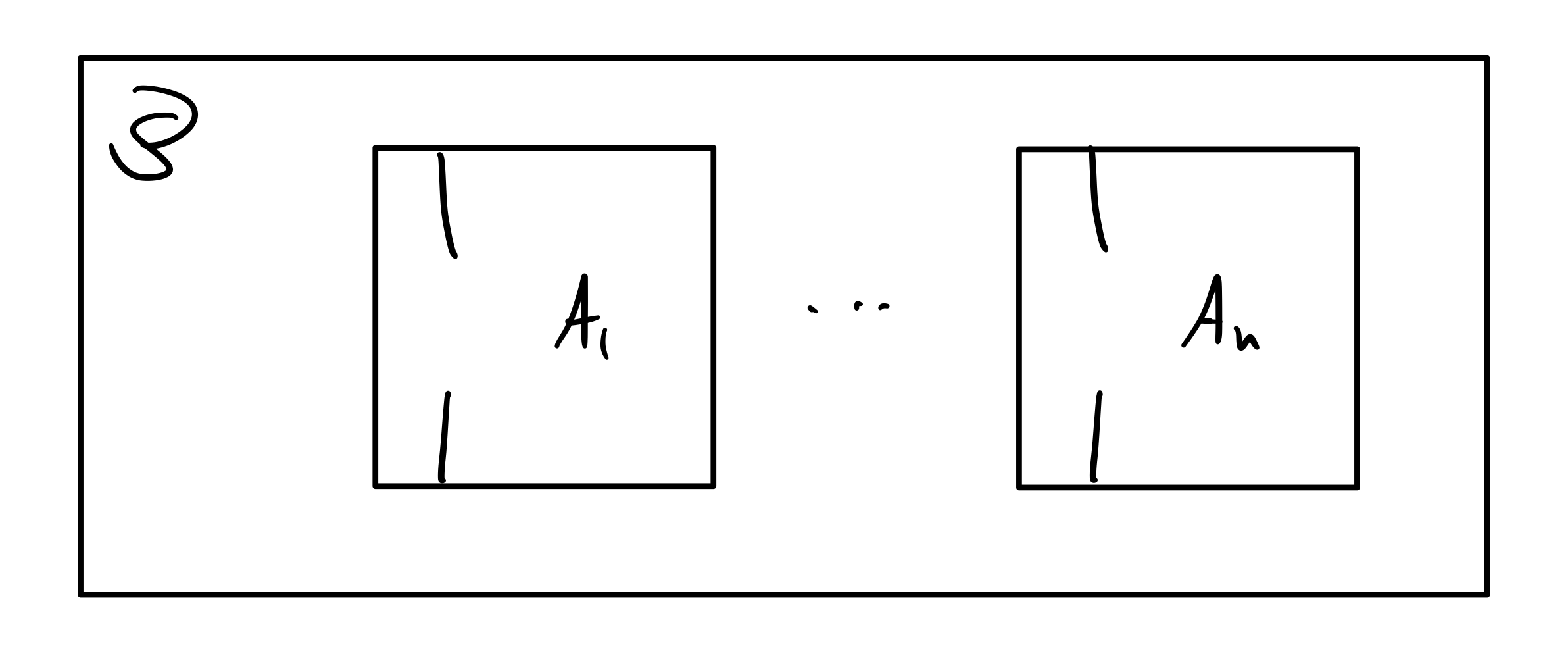

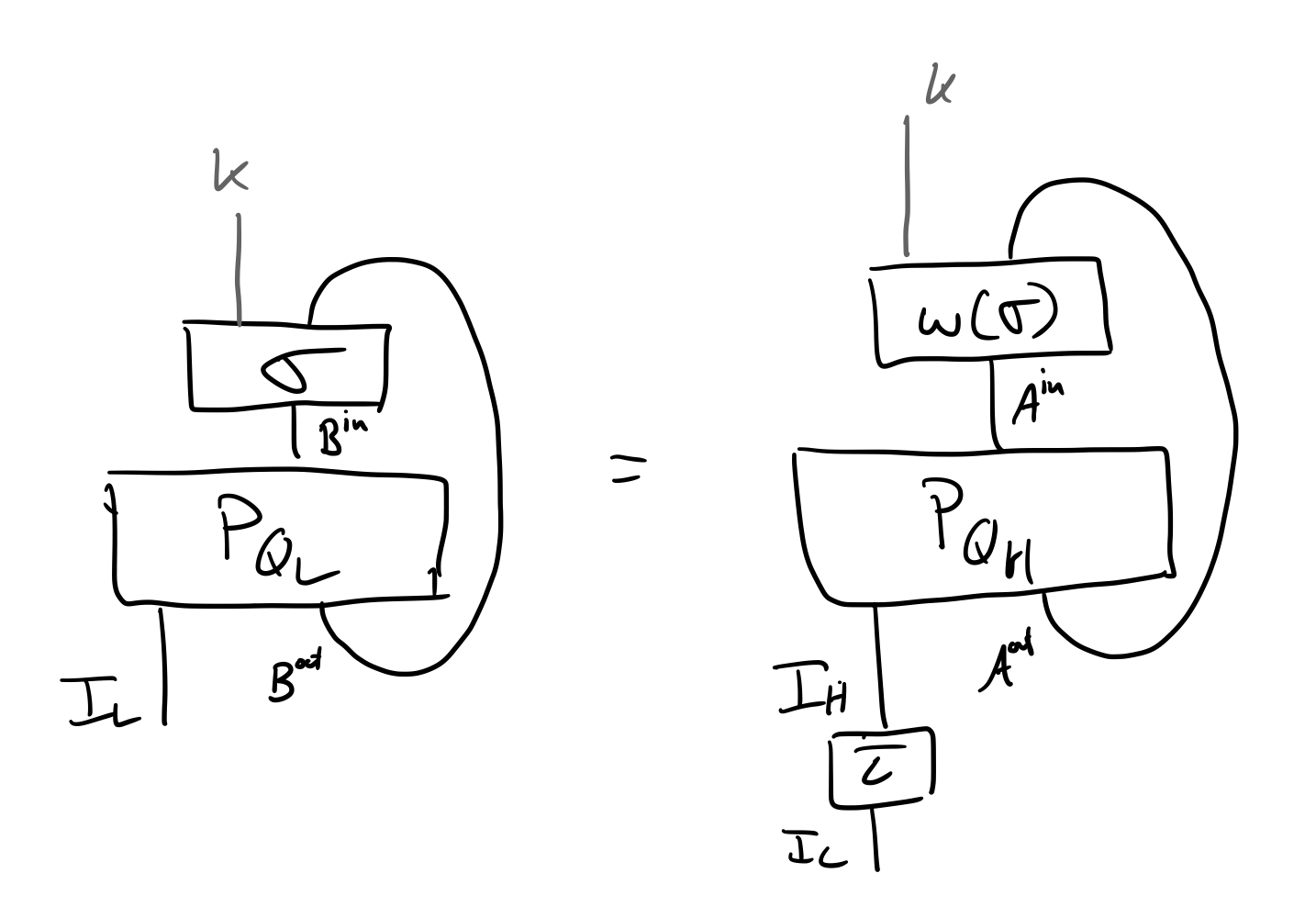

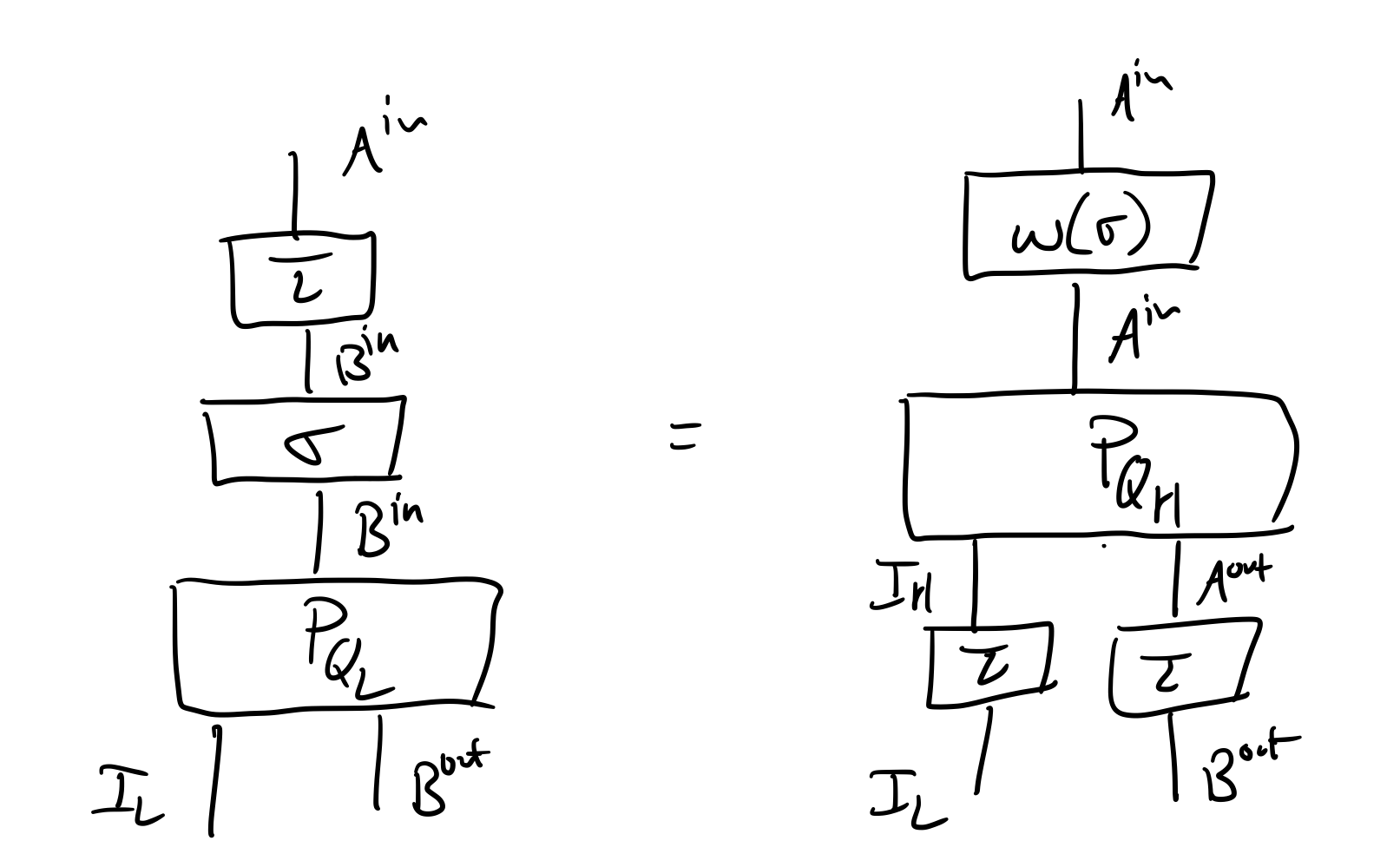

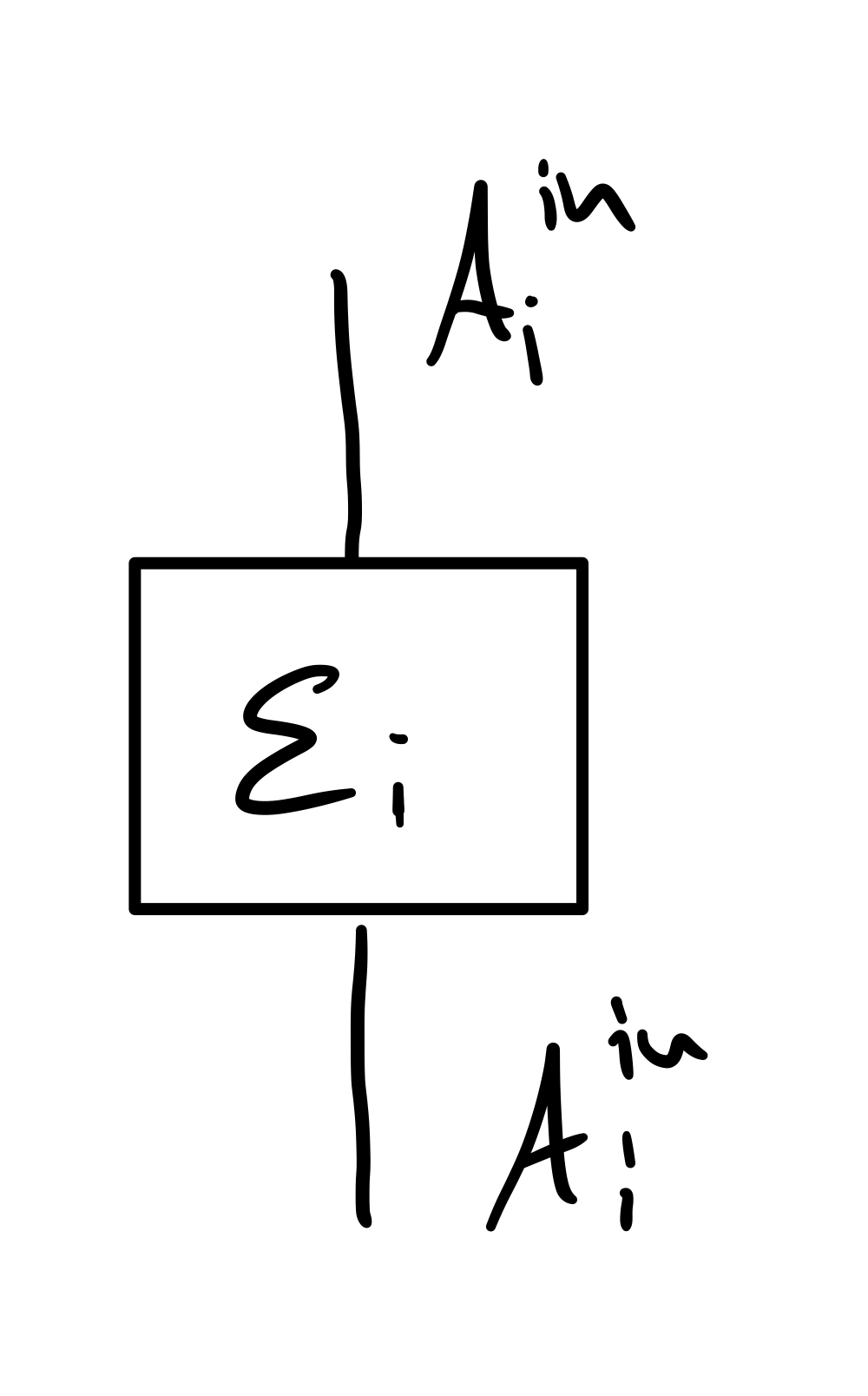

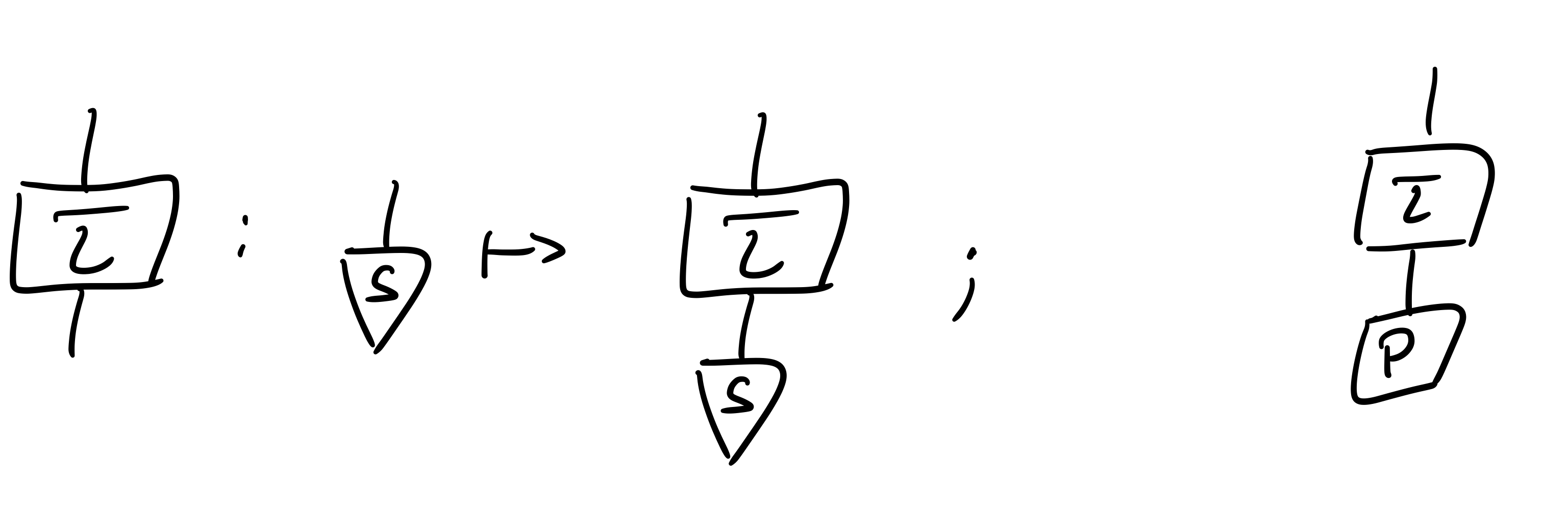

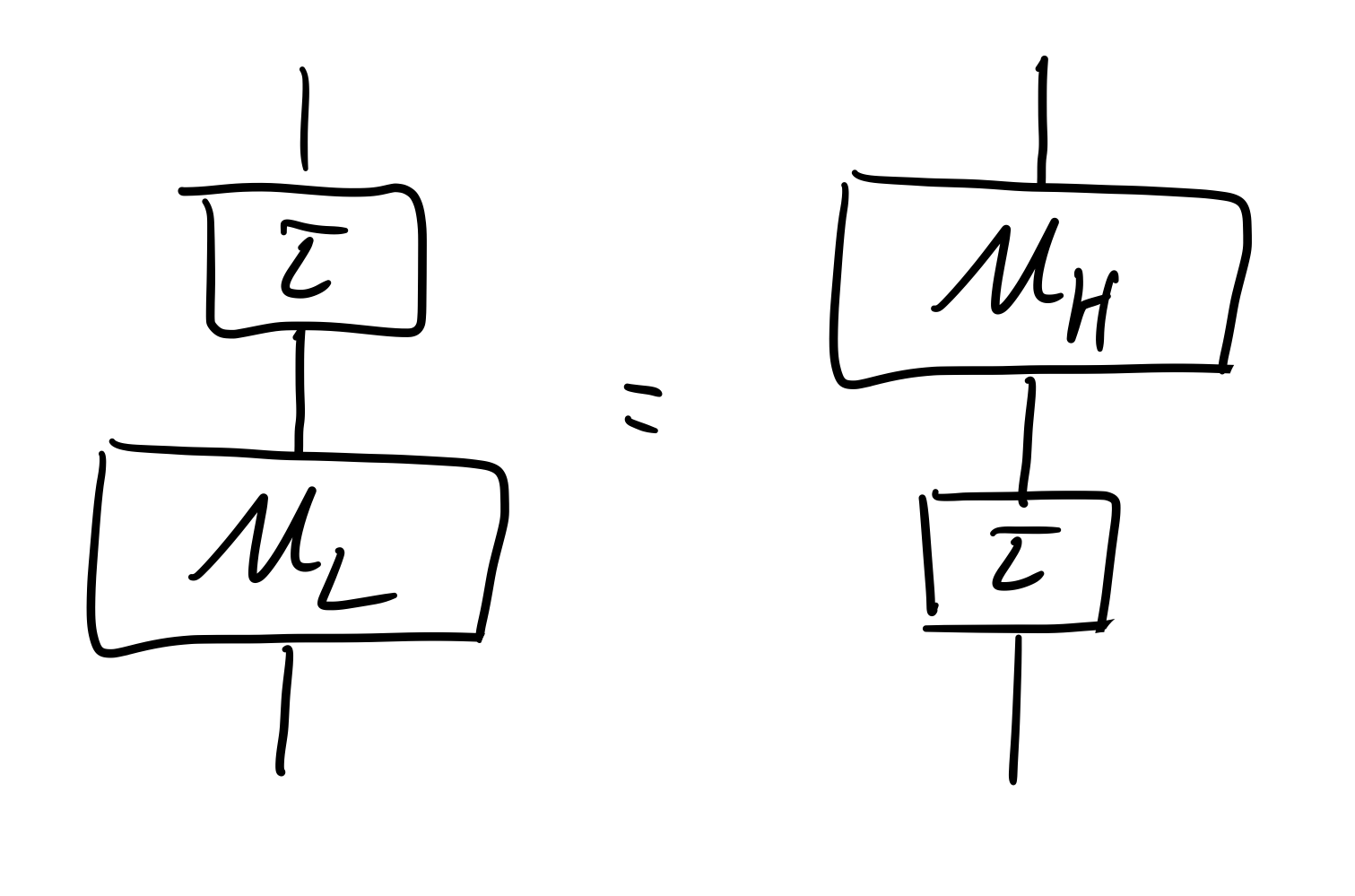

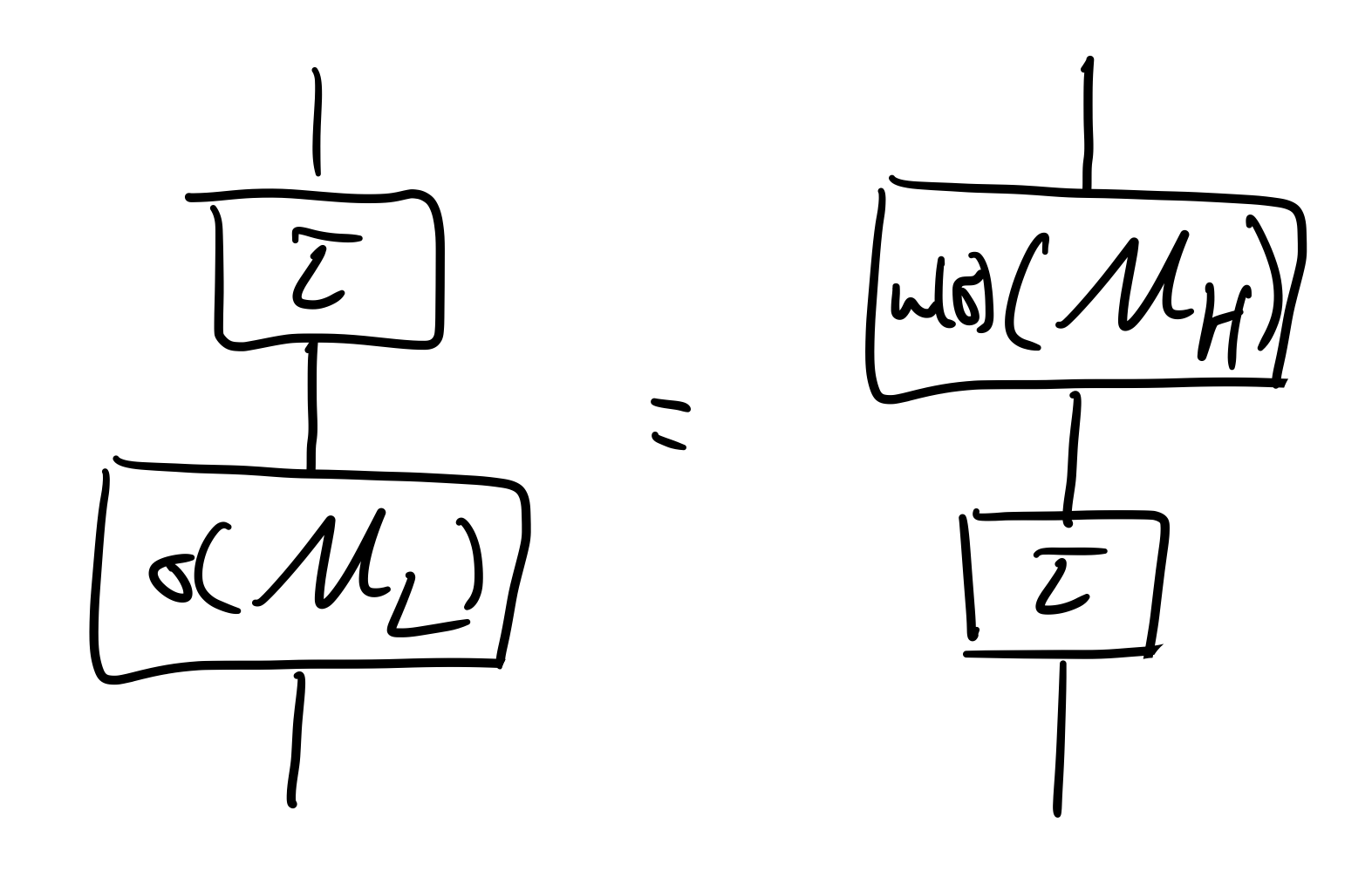

Abstracting from a low level to a more explanatory high level of description, and ideally while preserving causal structure, is fundamental to scientific practice, to causal inference problems, and to robust, efficient and interpretable AI. We present a general account of abstractions between low and high level models as natural transformations, focusing on the case of causal models. This provides a new formalisation of causal abstraction, unifying several notions in the literature, including constructive causal abstraction, Q-$\tau$ consistency, abstractions based on interchange interventions, and 'distributed' causal abstractions. Our approach is formalised in terms of category theory, and uses the general notion of a compositional model with a given set of queries and semantics in a monoidal, cd- or Markov category; causal models and their queries such as interventions being special cases. We identify two basic notions of abstraction: downward abstractions mapping queries from high to low level; and upward abstractions, mapping concrete queries such as Do-interventions from low to high. Although usually presented as the latter, we show how common causal abstractions may, more fundamentally, be understood in terms of the former. Our approach also leads us to consider a new stronger notion of 'component-level' abstraction, applying to the individual components of a model. In particular, this yields a novel, strengthened form of constructive causal abstraction at the mechanism-level, for which we prove characterisation results. Finally, we show that abstraction can be generalised to further compositional models, including those with a quantum semantics implemented by quantum circuits, and we take first steps in exploring abstractions between quantum compositional circuit models and high-level classical causal models as a means to explainable quantum AI.

💡 Deep Analysis

Deep Dive into Causal and Compositional Abstraction.

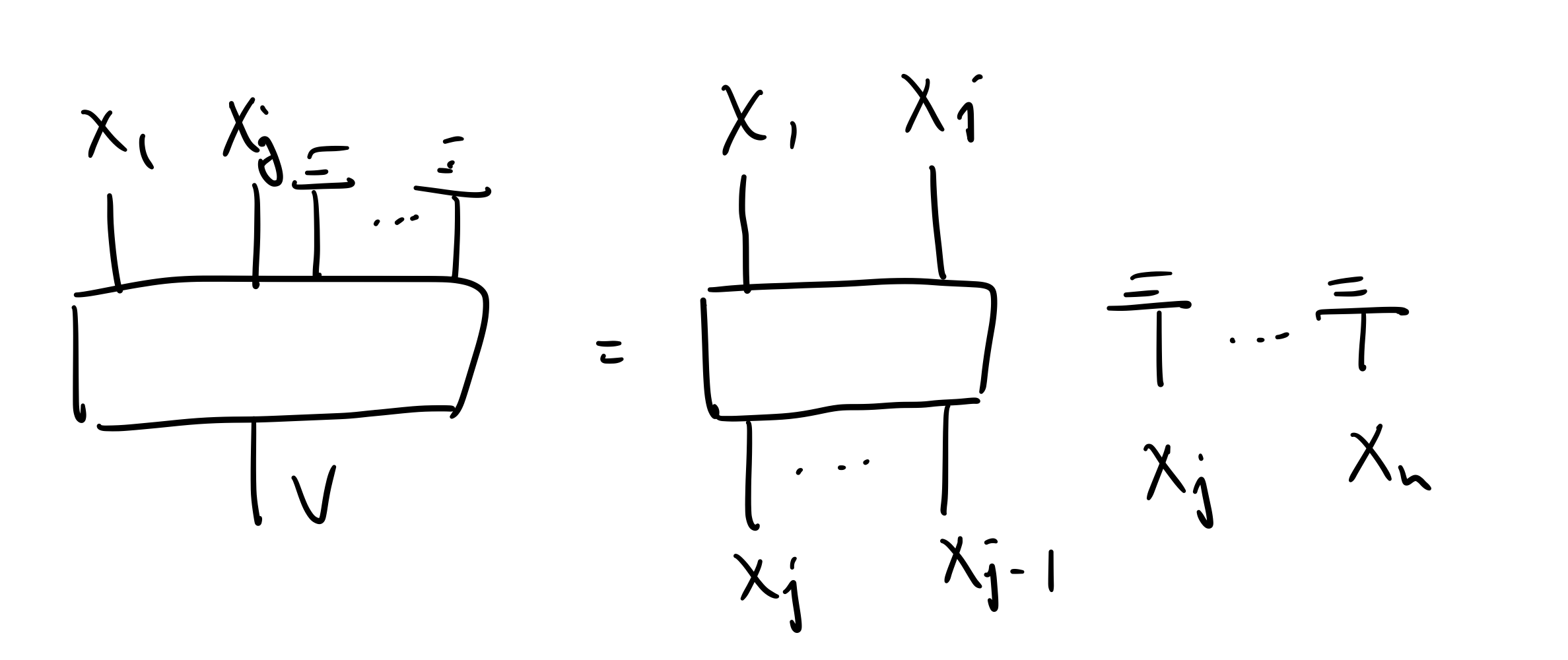

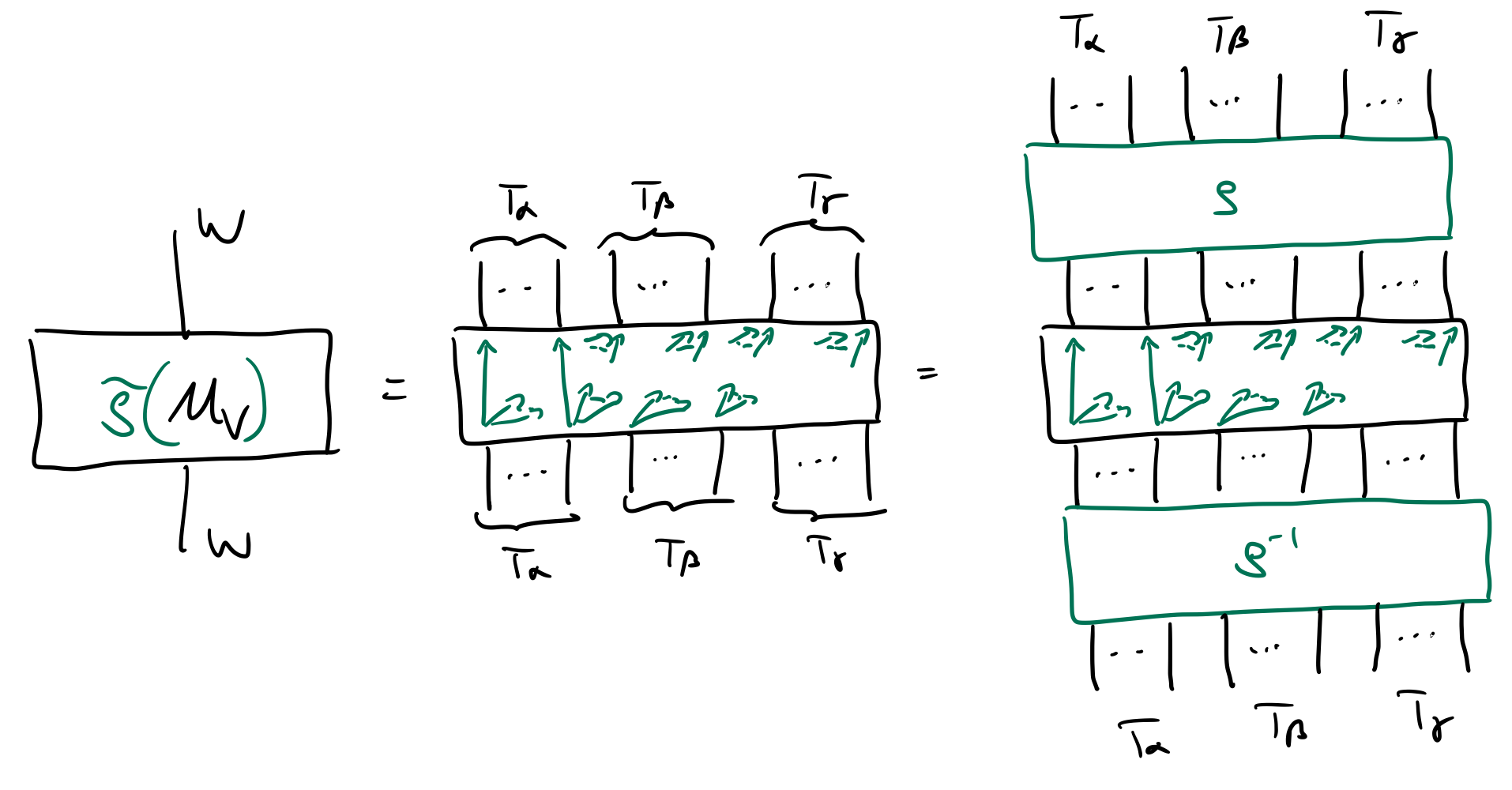

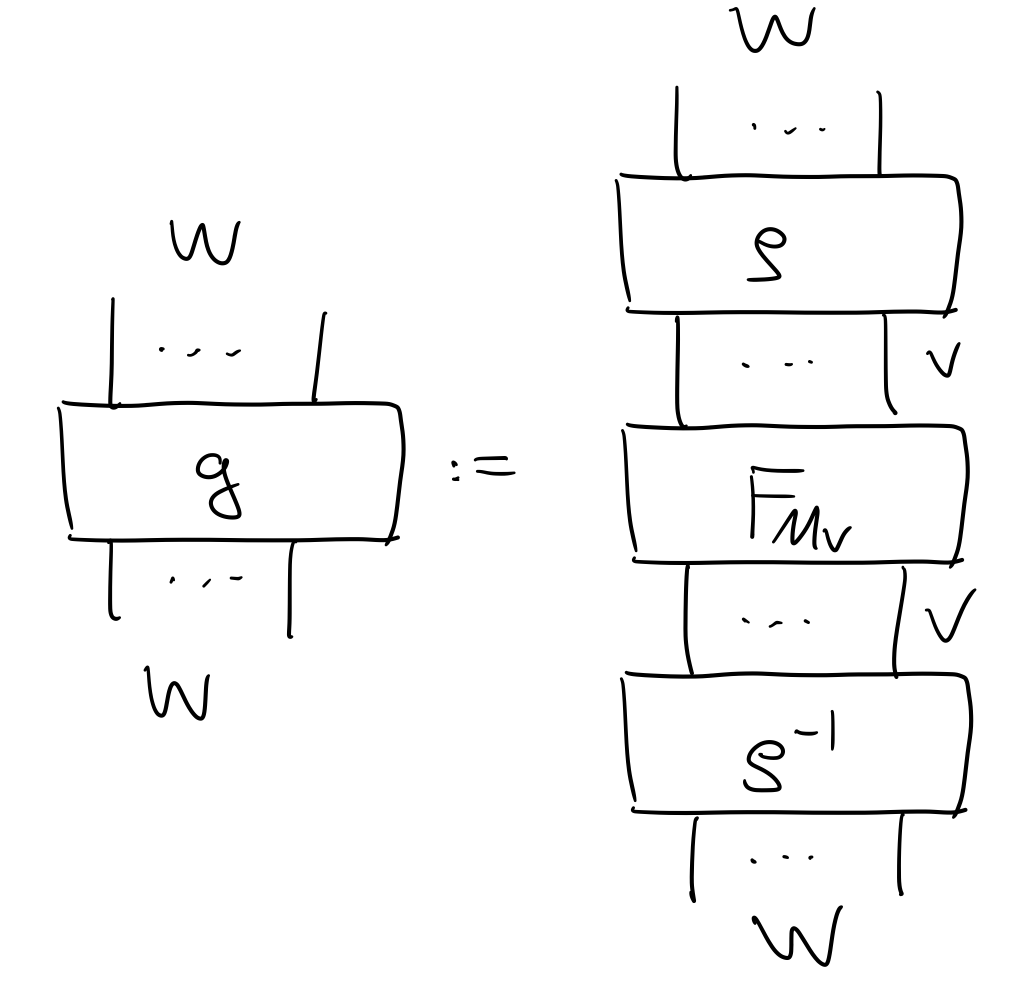

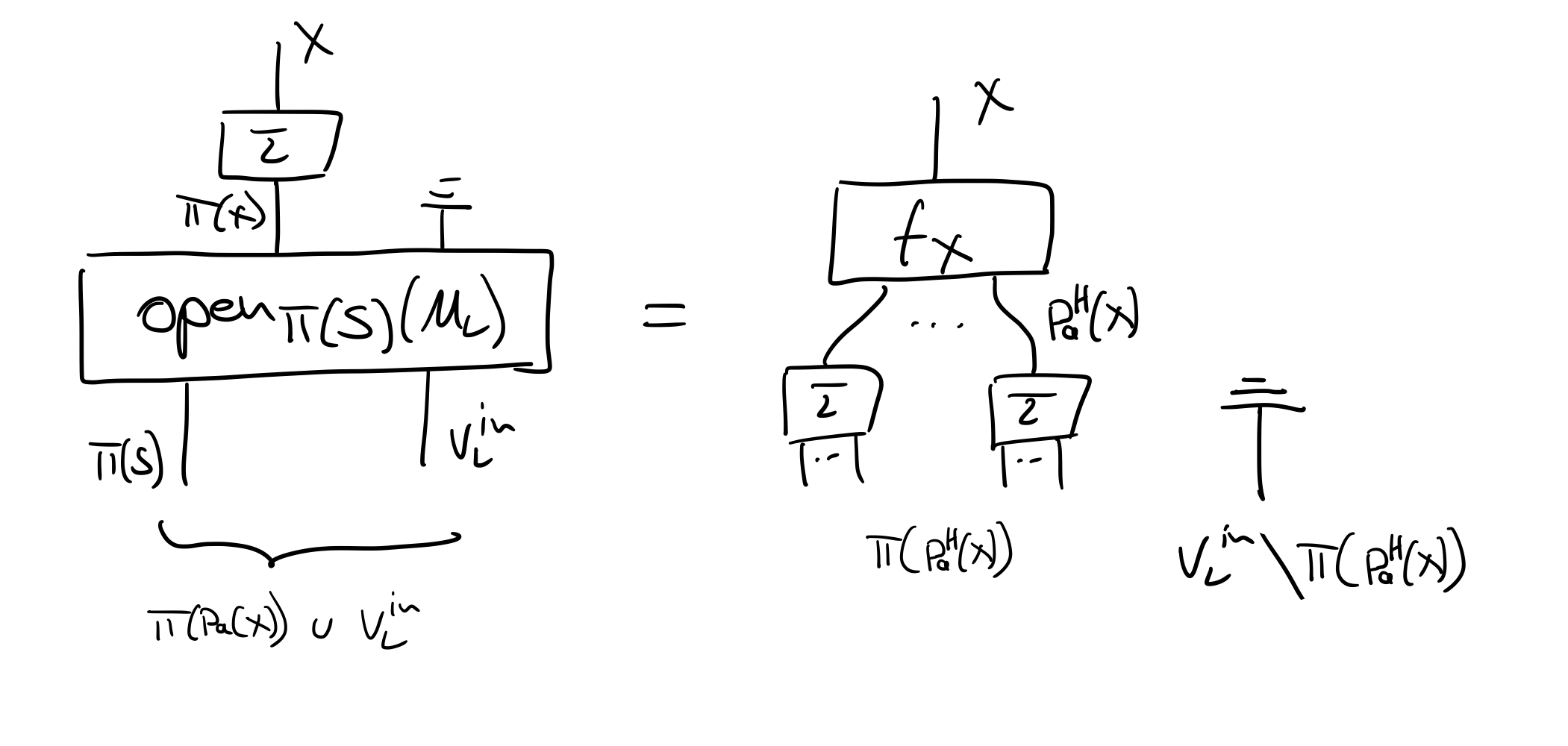

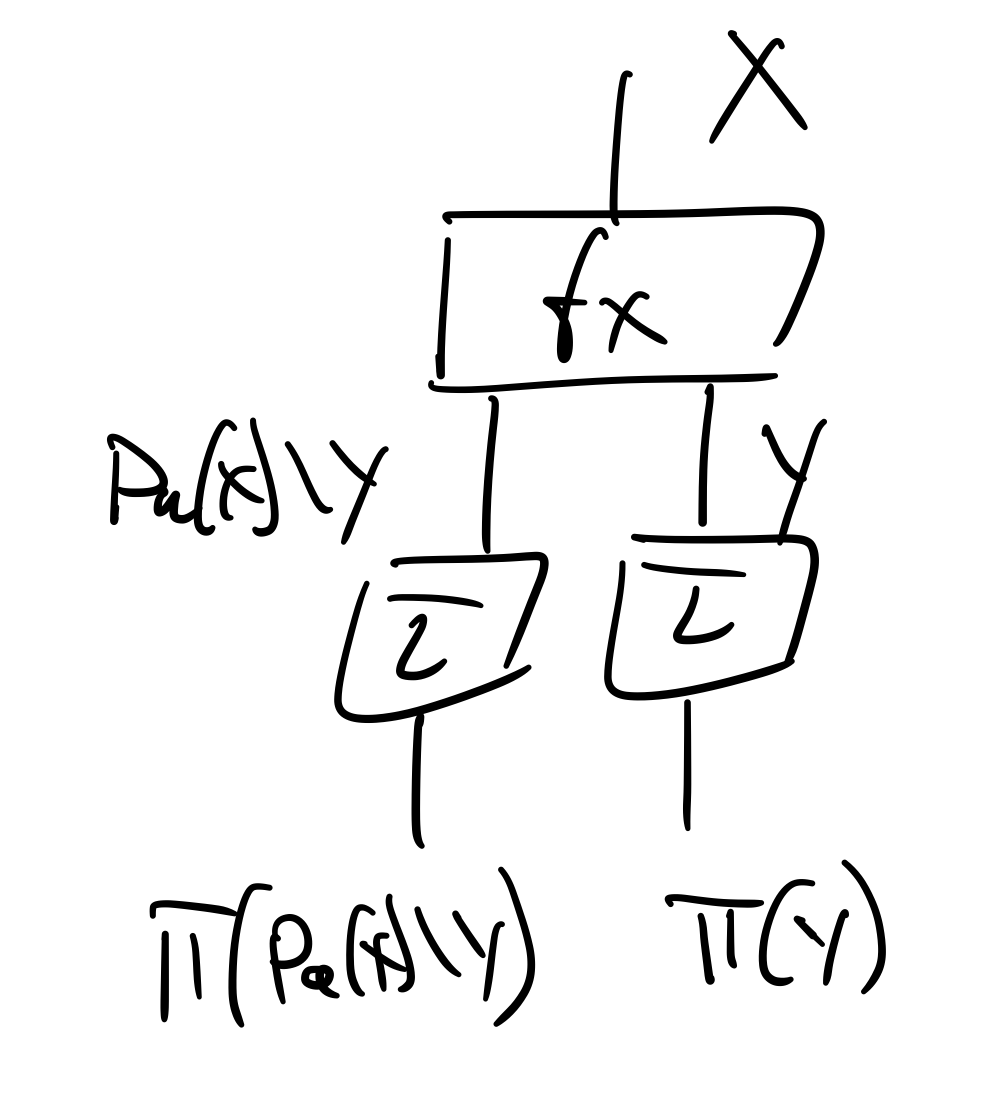

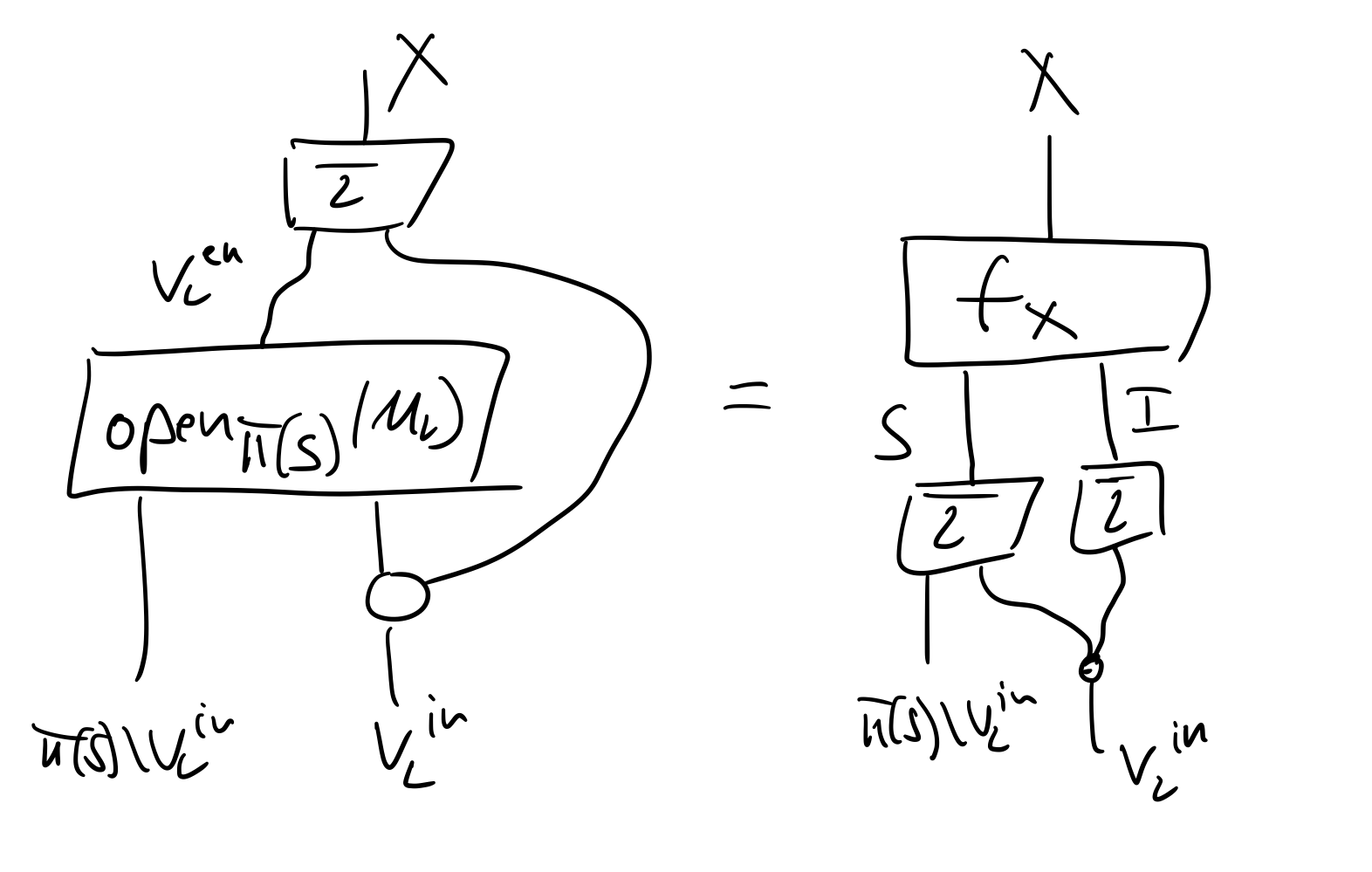

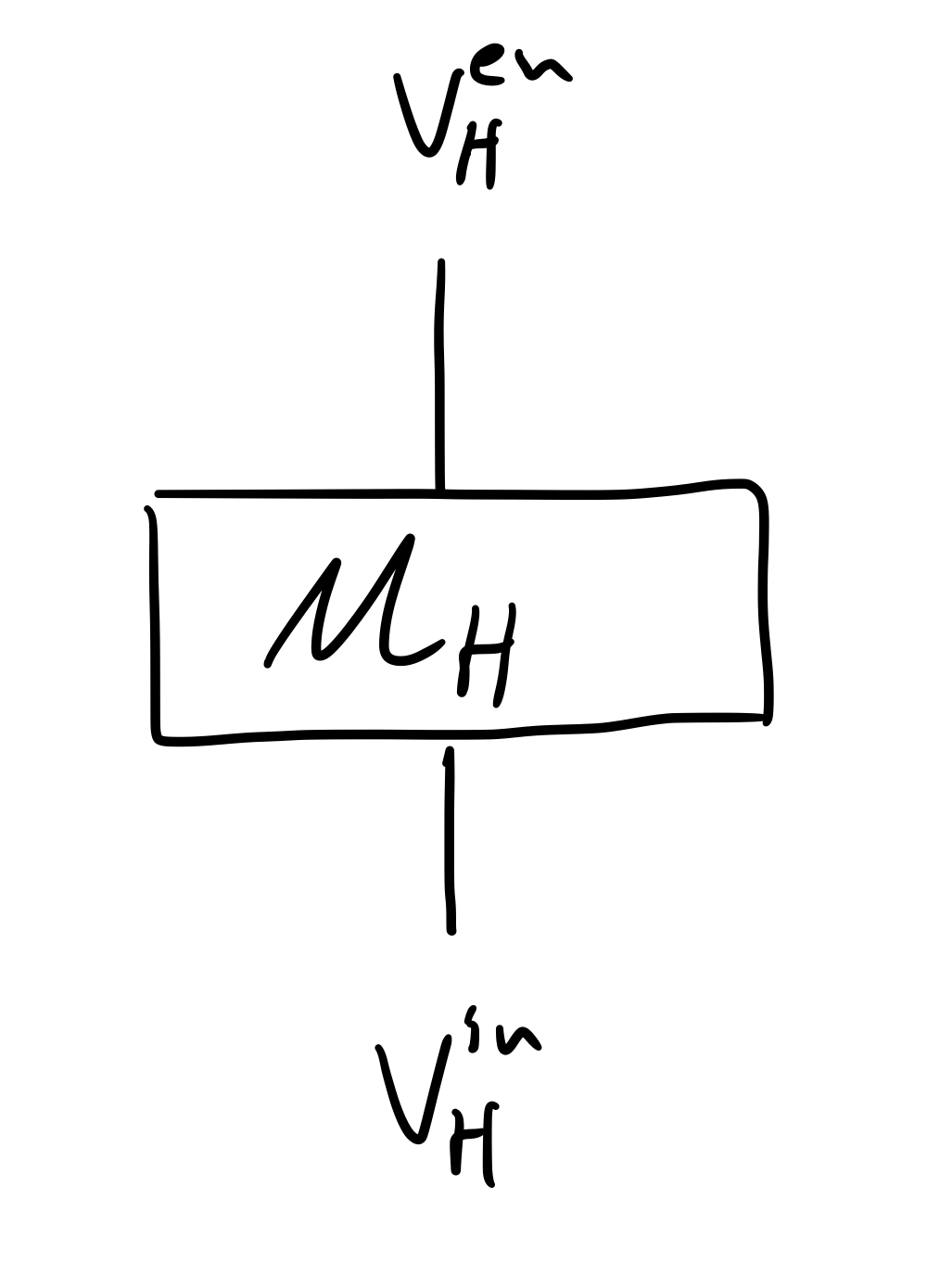

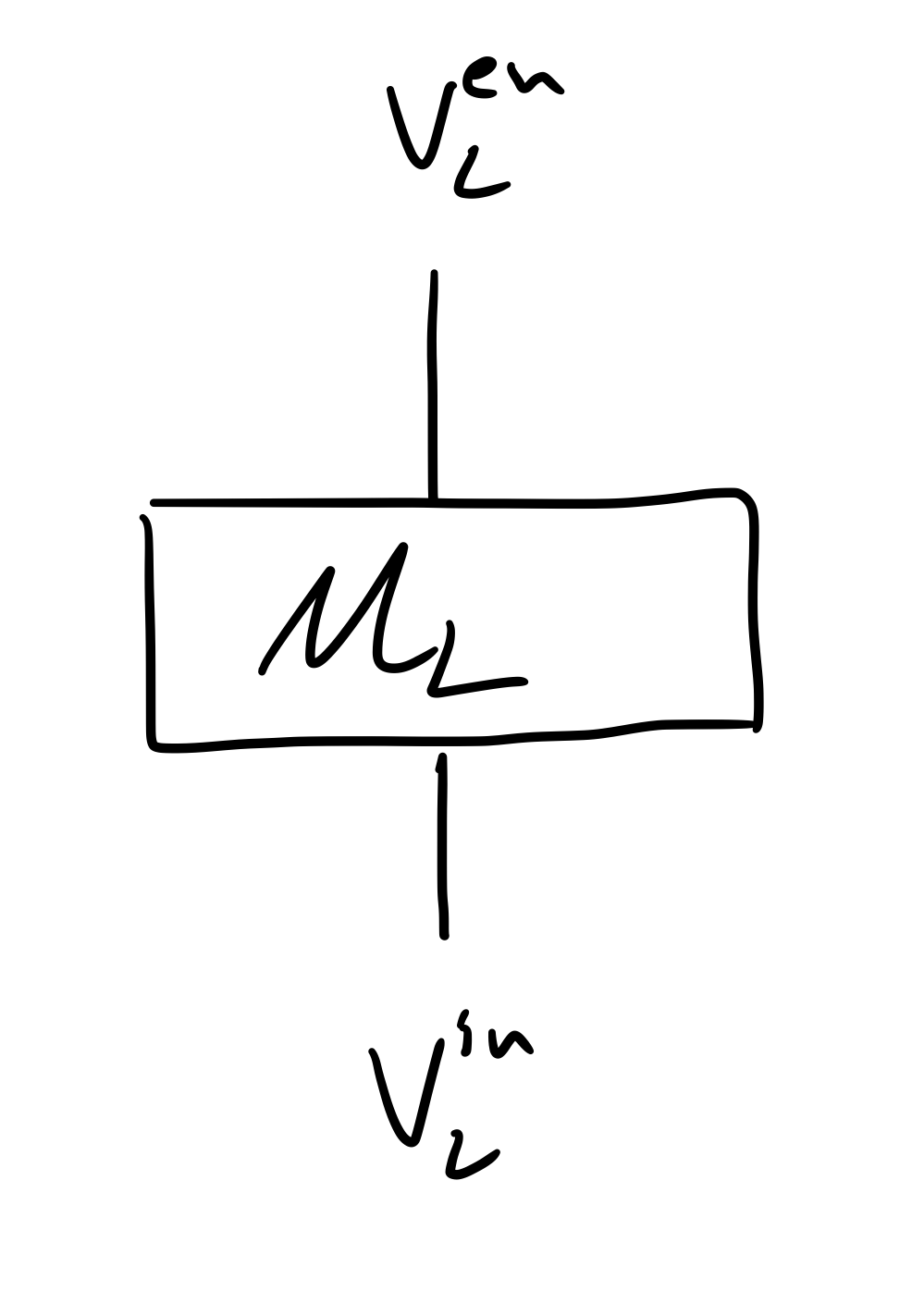

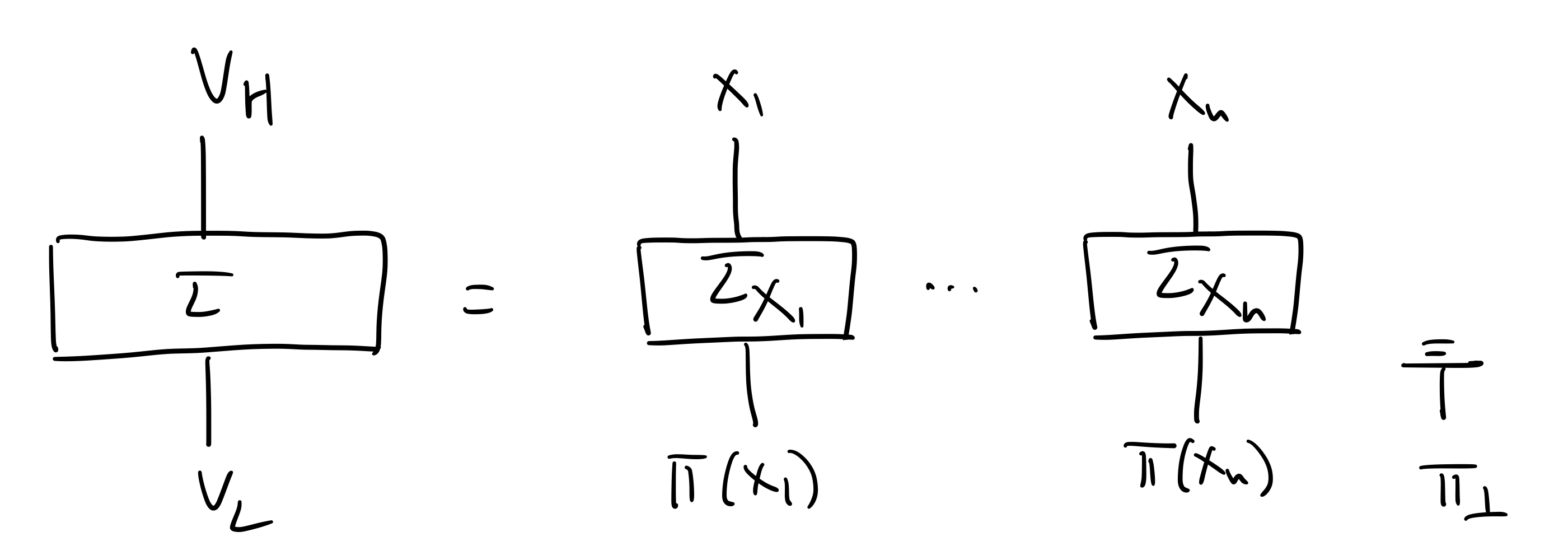

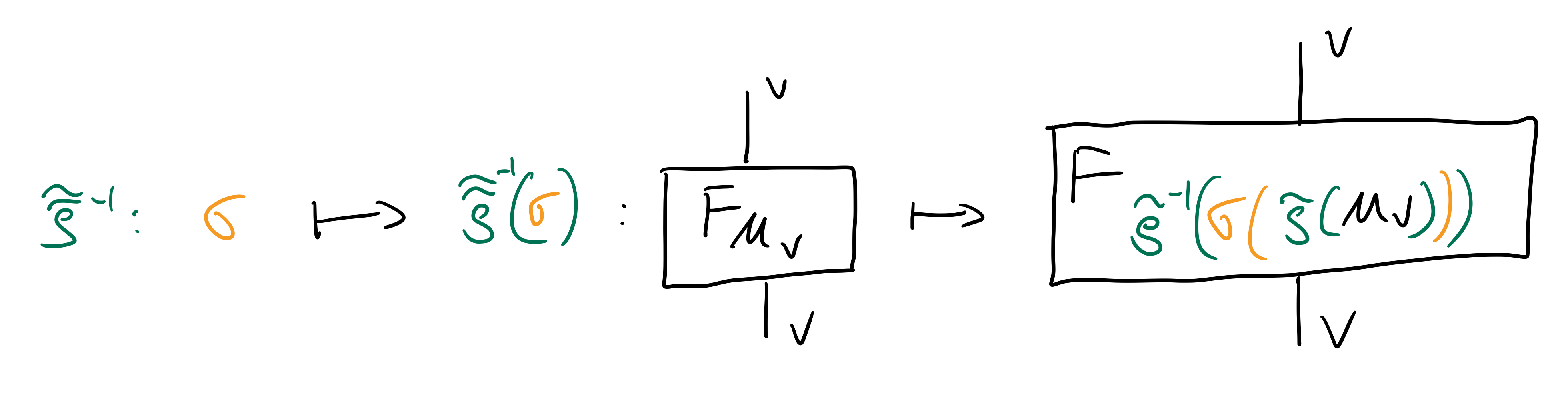

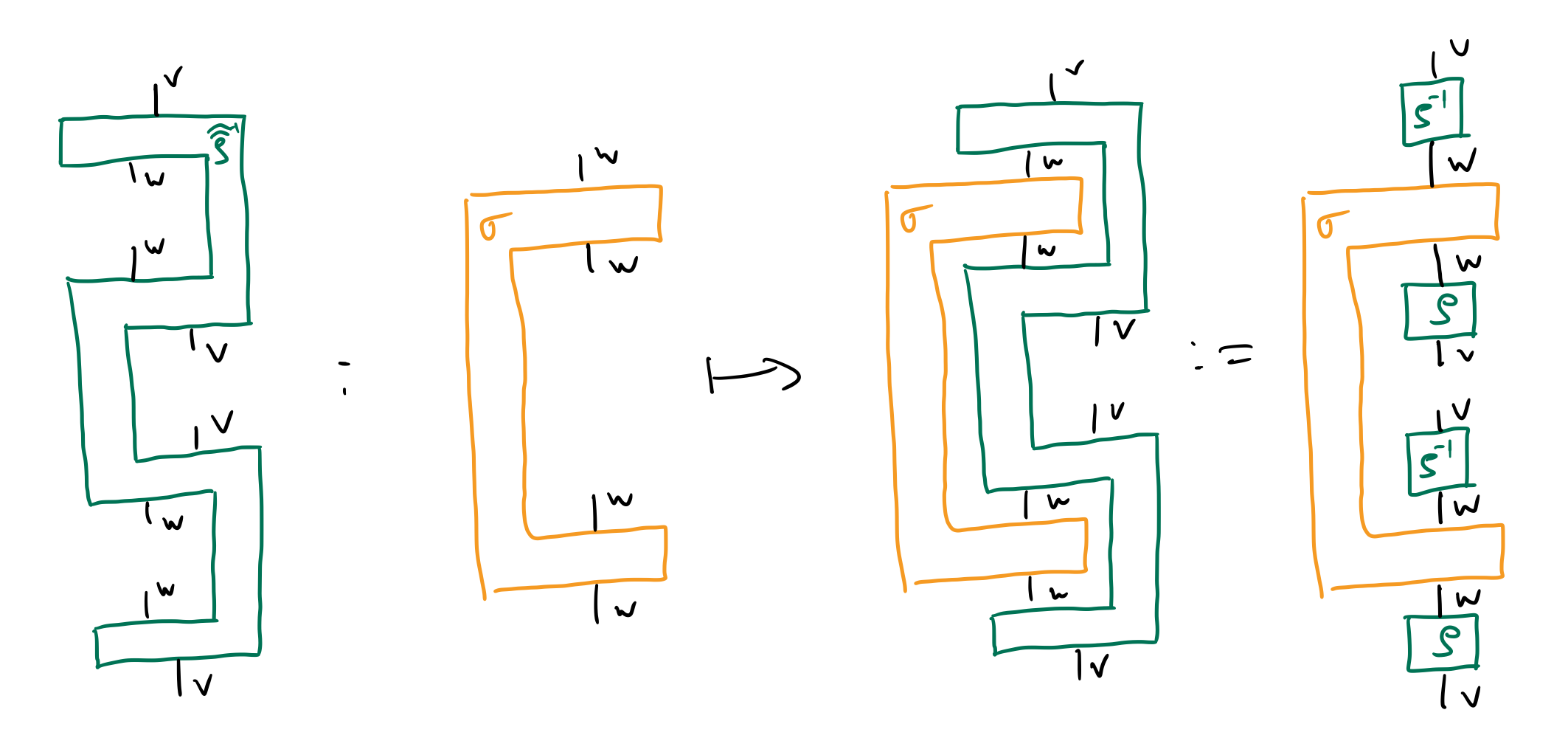

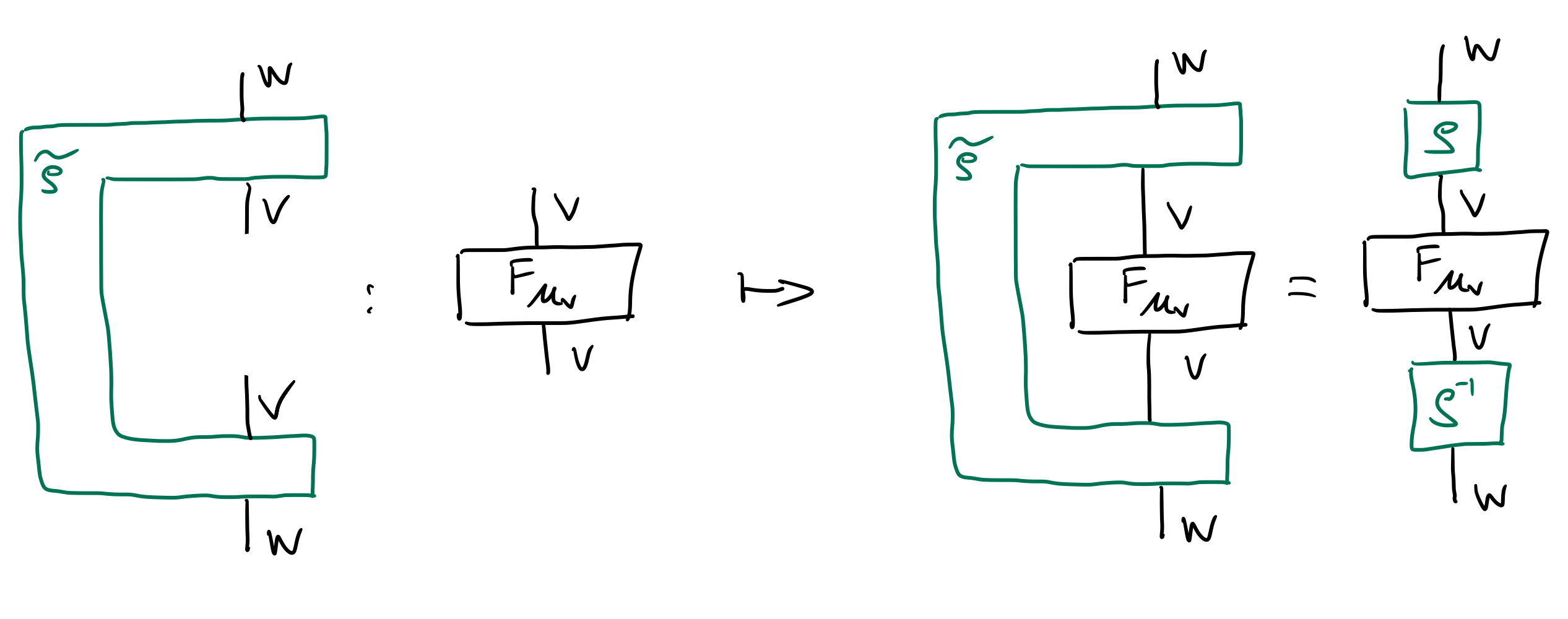

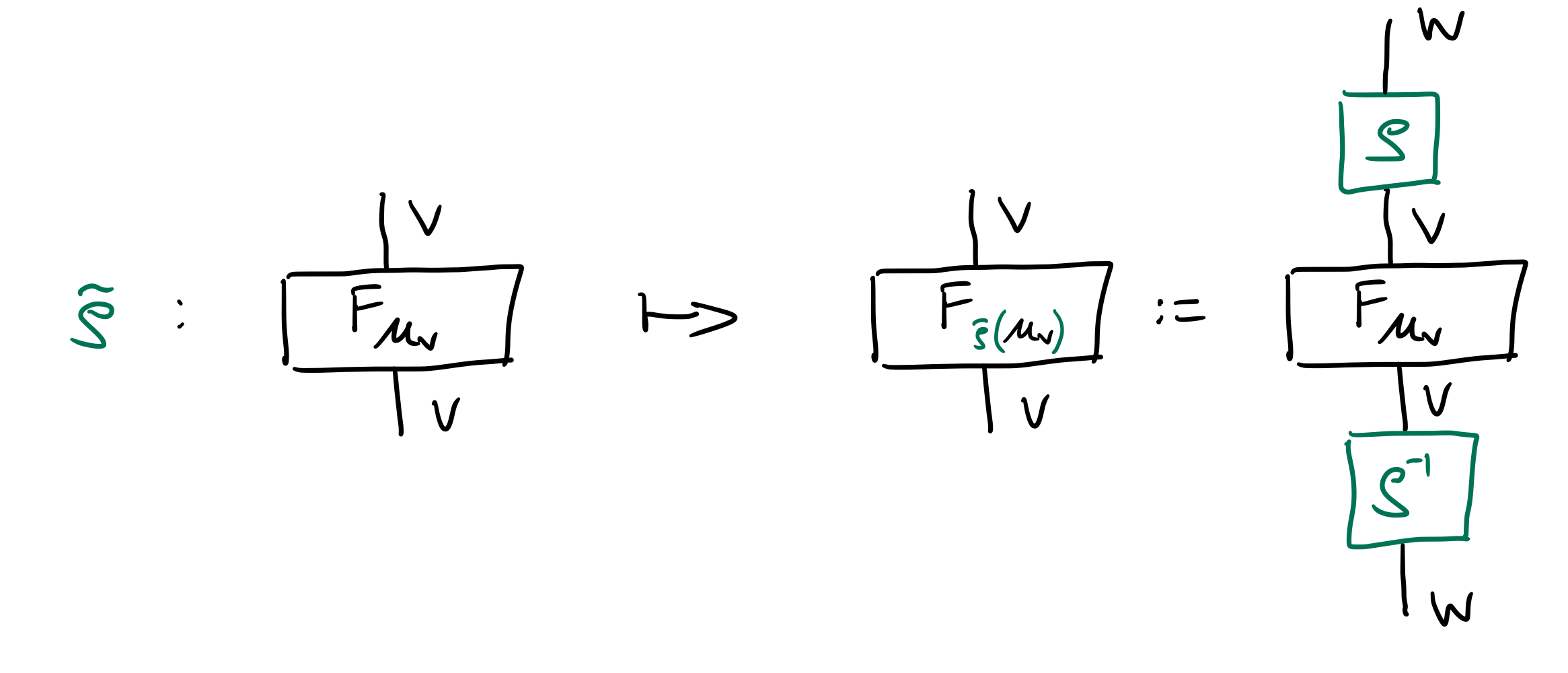

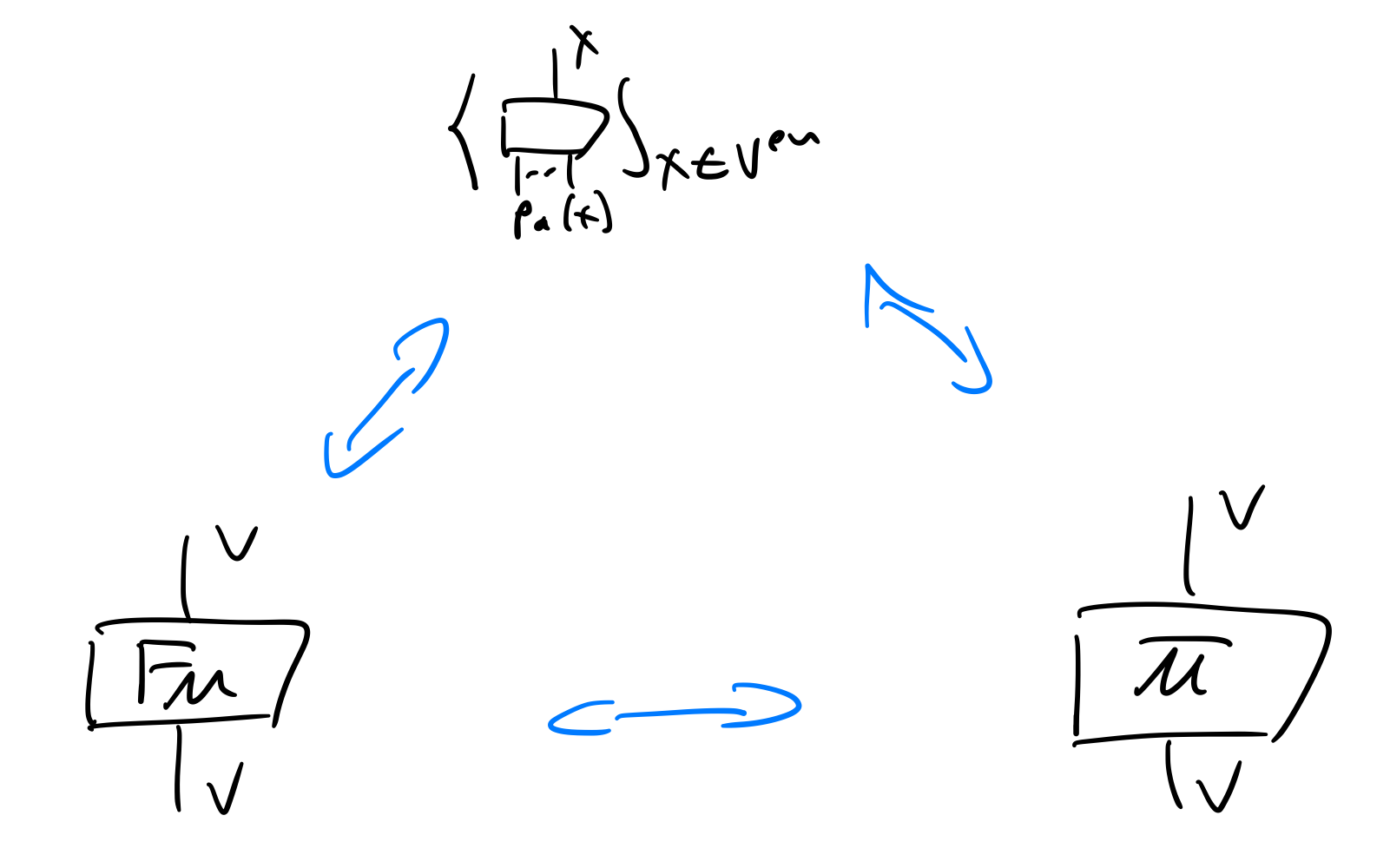

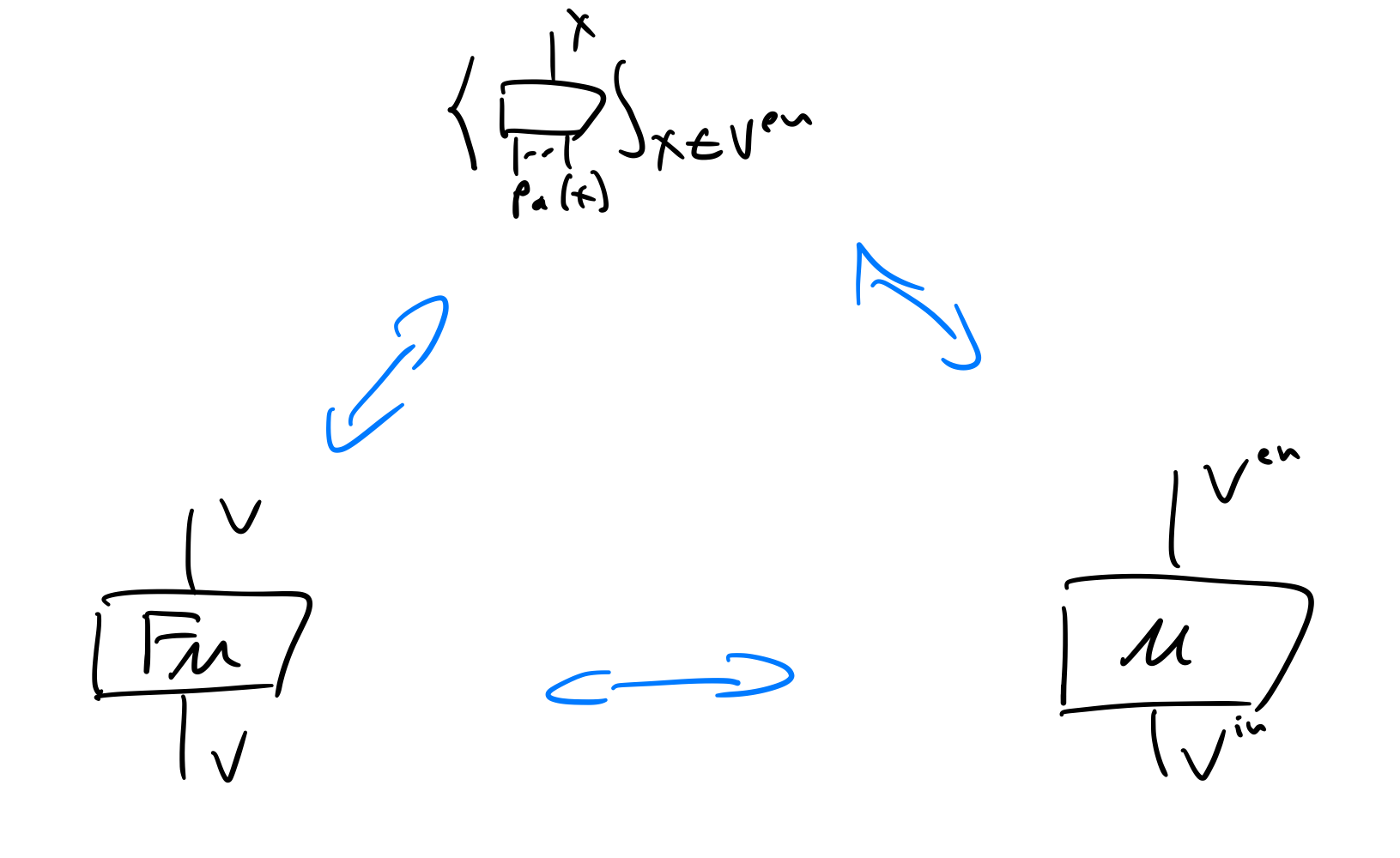

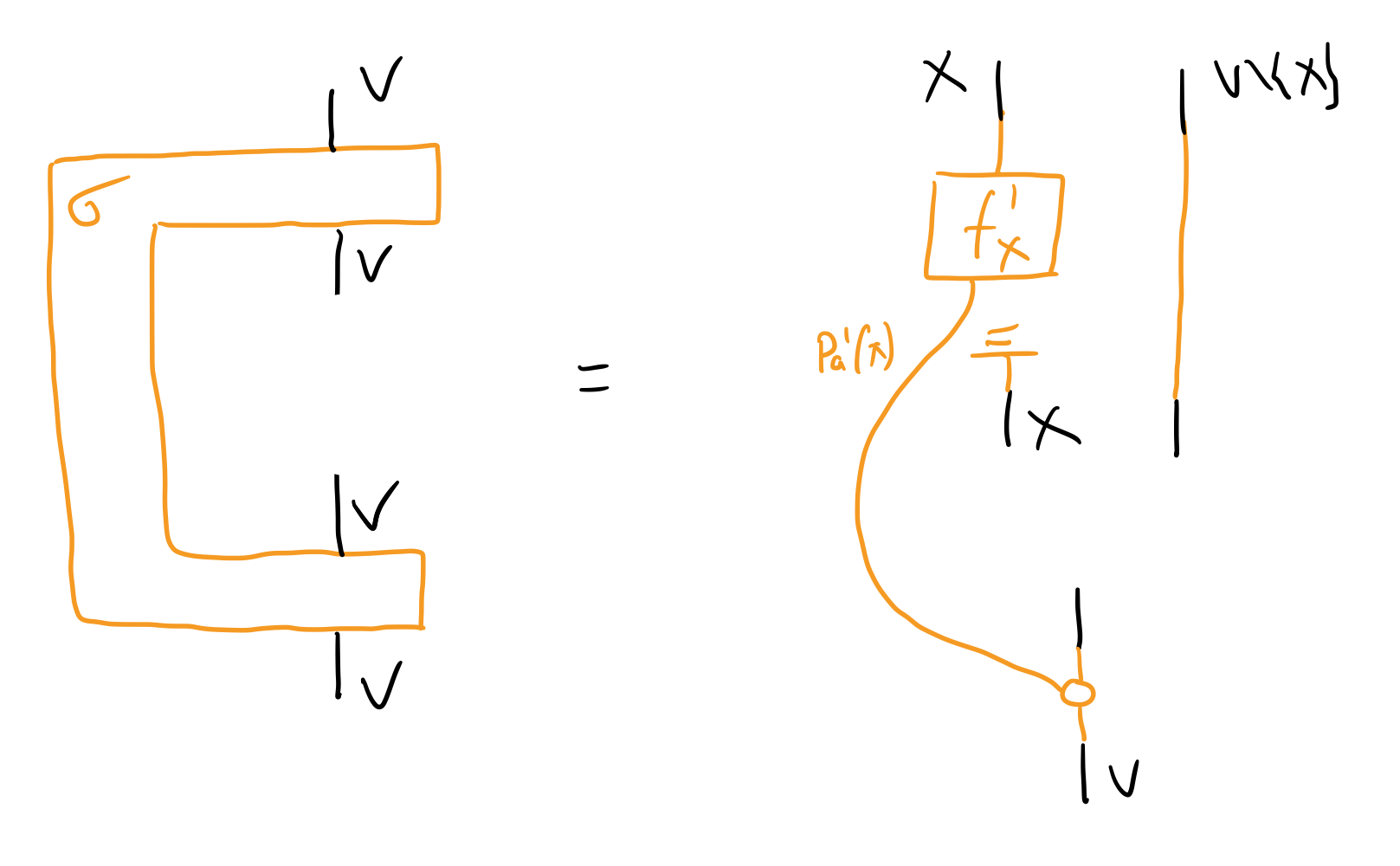

Abstracting from a low level to a more explanatory high level of description, and ideally while preserving causal structure, is fundamental to scientific practice, to causal inference problems, and to robust, efficient and interpretable AI. We present a general account of abstractions between low and high level models as natural transformations, focusing on the case of causal models. This provides a new formalisation of causal abstraction, unifying several notions in the literature, including constructive causal abstraction, Q-$τ$ consistency, abstractions based on interchange interventions, and `distributed’ causal abstractions. Our approach is formalised in terms of category theory, and uses the general notion of a compositional model with a given set of queries and semantics in a monoidal, cd- or Markov category; causal models and their queries such as interventions being special cases. We identify two basic notions of abstraction: downward abstractions mapping queries from high to

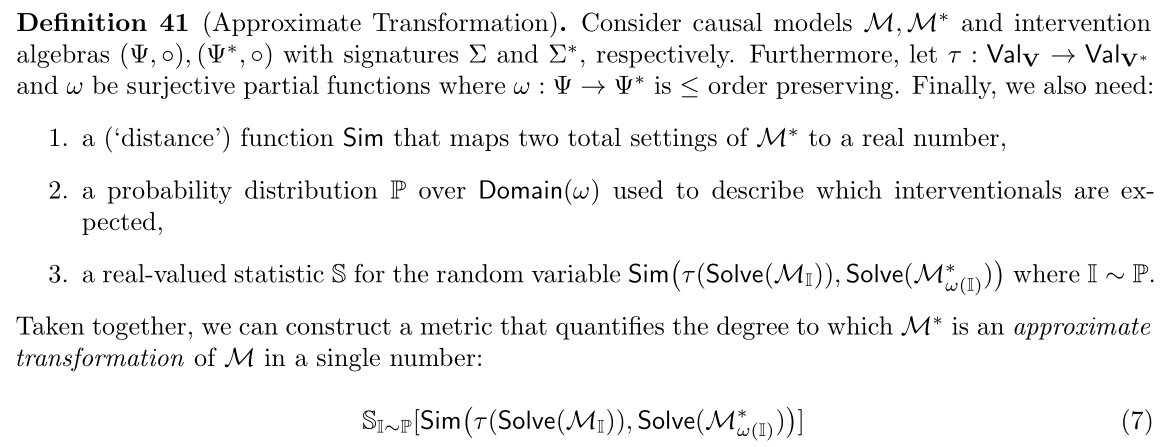

📄 Full Content

Abstracting away details in order to reason in terms of explanatory high-level concepts is a hallmark of both human cognition and scientific practice; from microscopic vs thermodynamic descriptions of a gas, to extracting macrovariables from climate data or medical scans, all the way to economics. In each of these cases, and most if not all situations of scientific practice, issues of abstraction intersect with those of causality and causal reasoning.

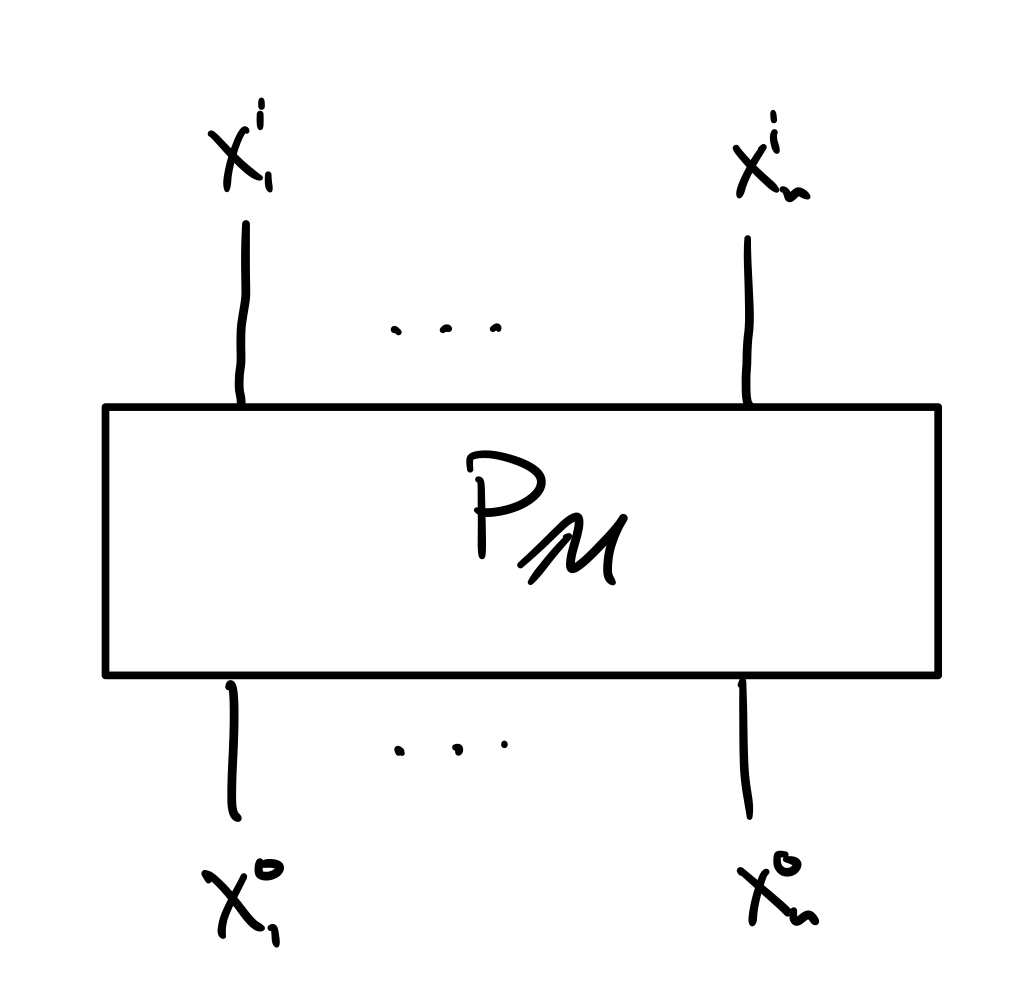

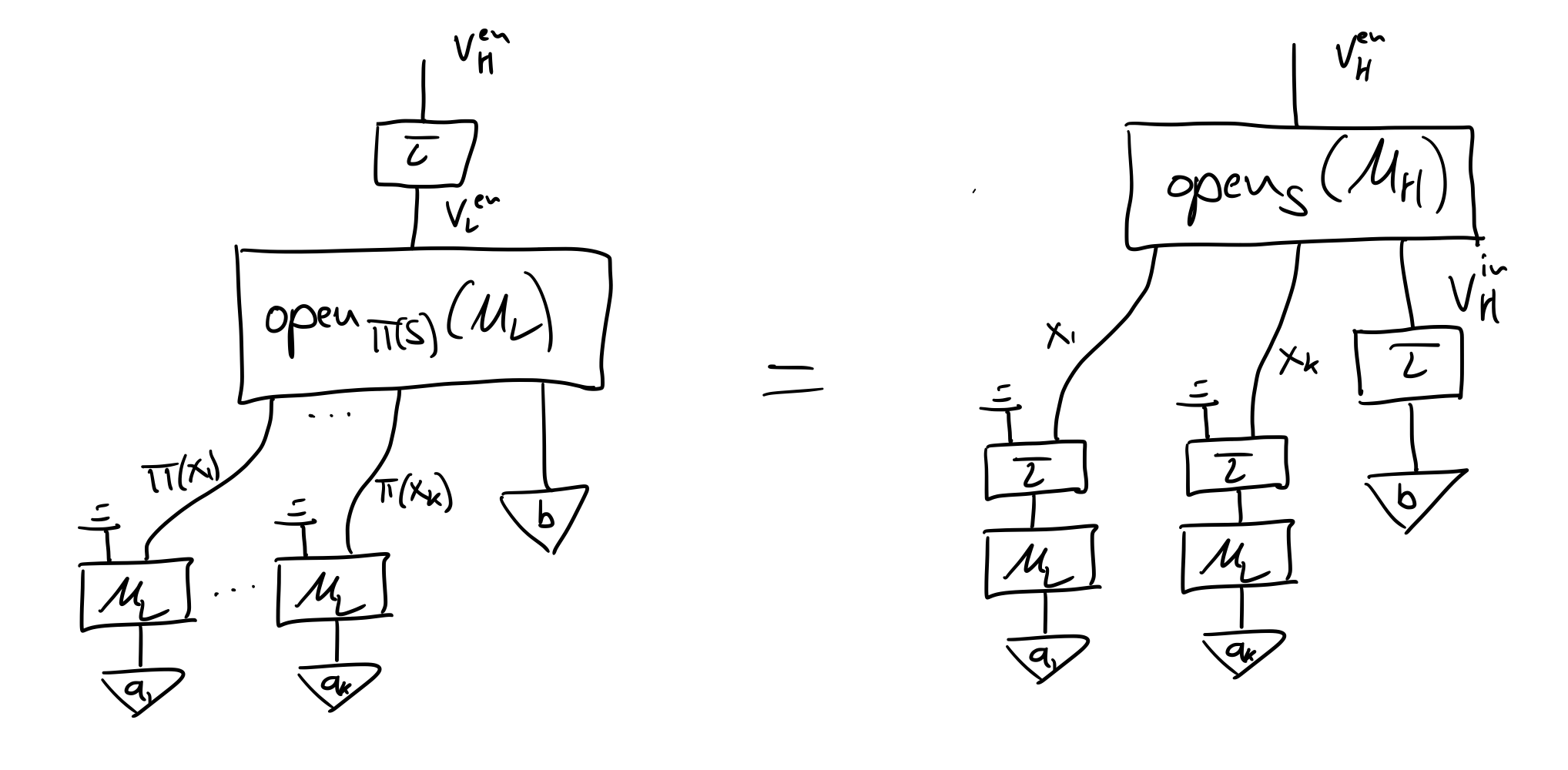

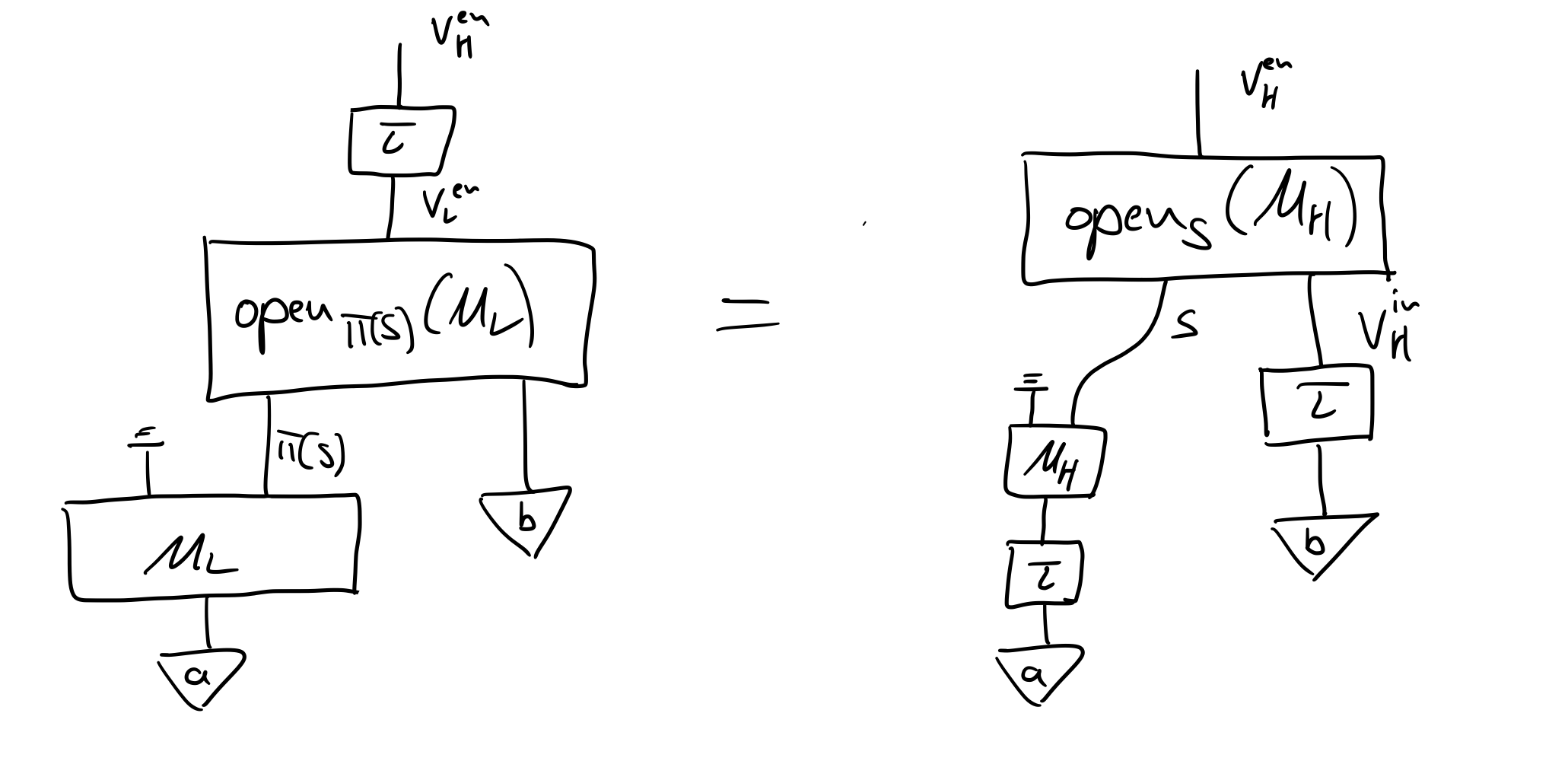

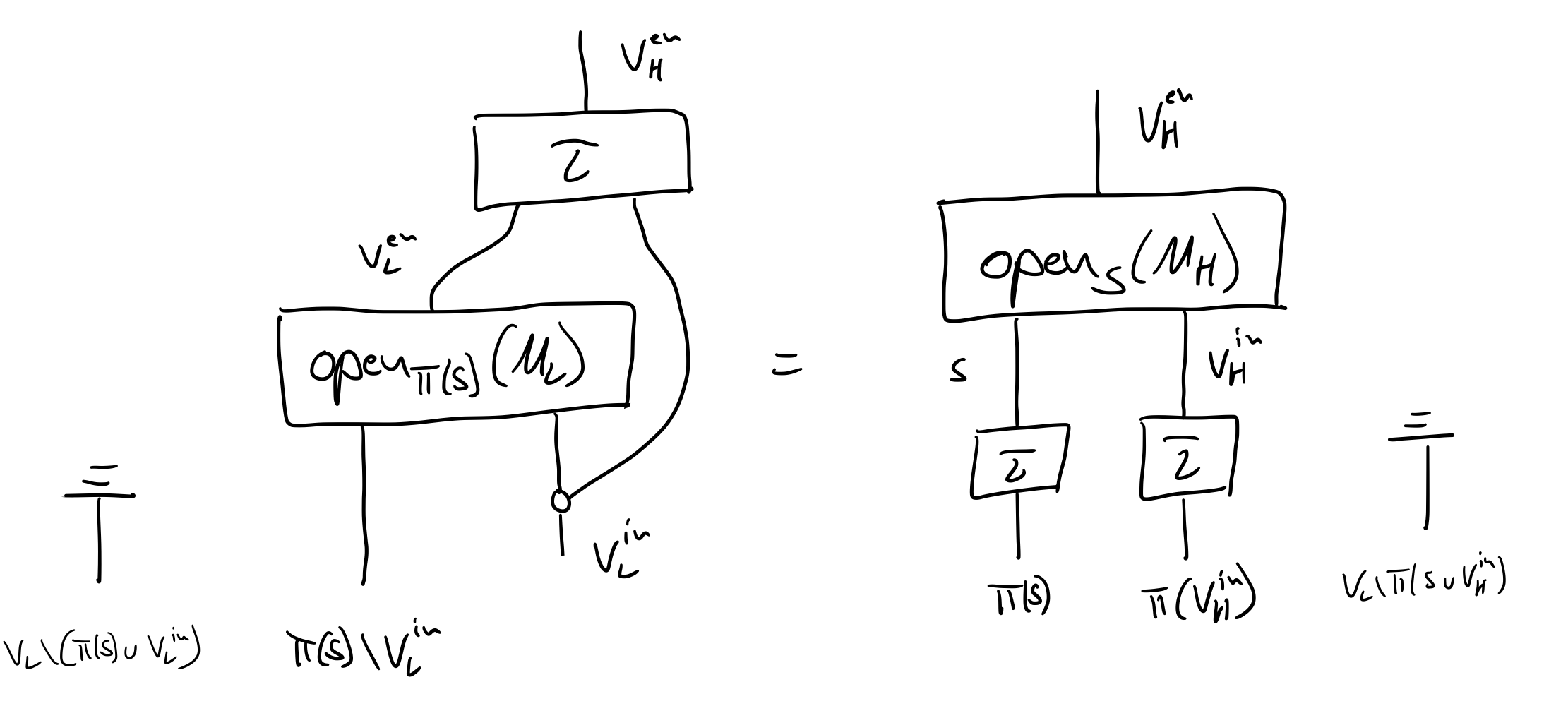

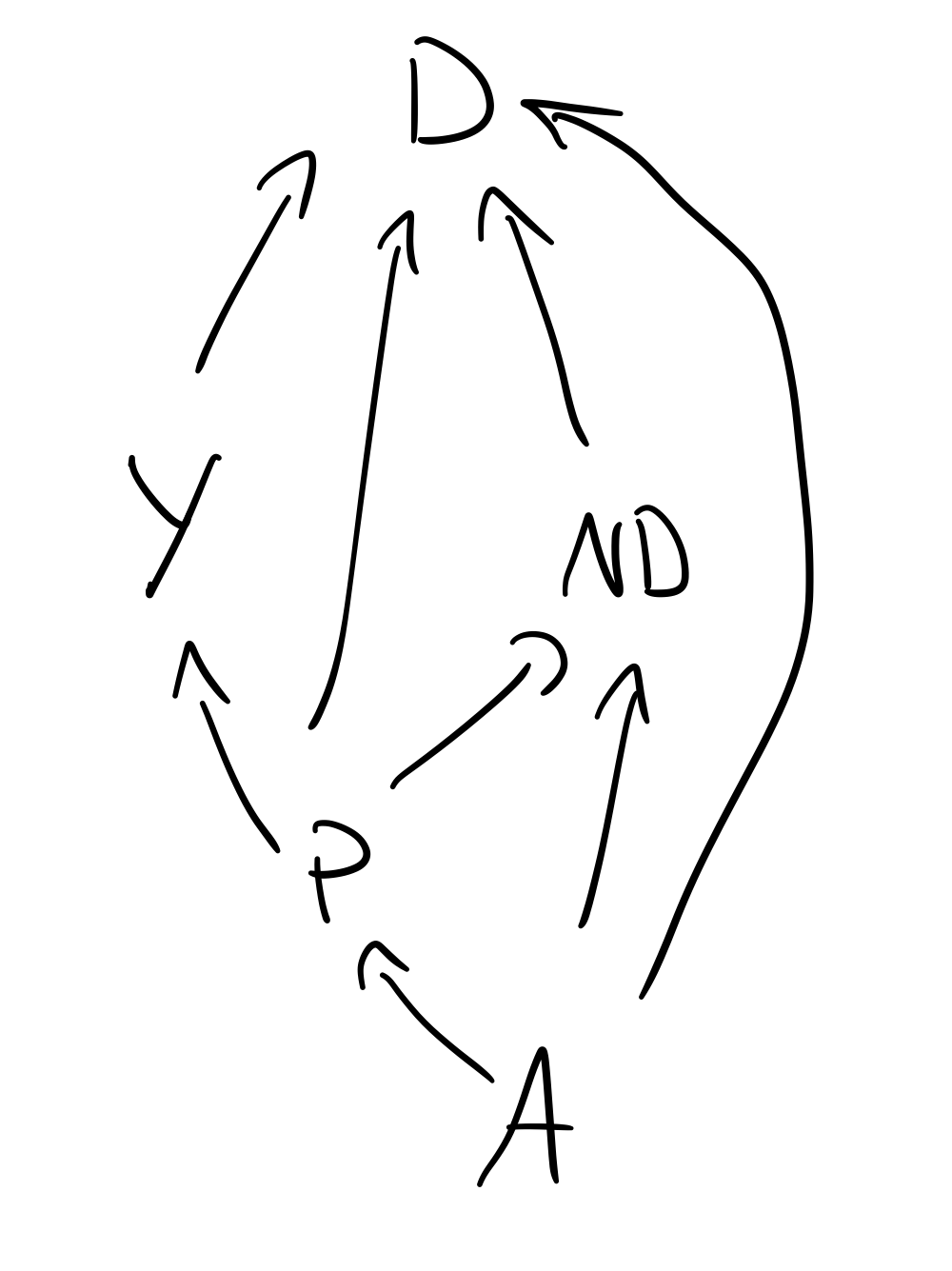

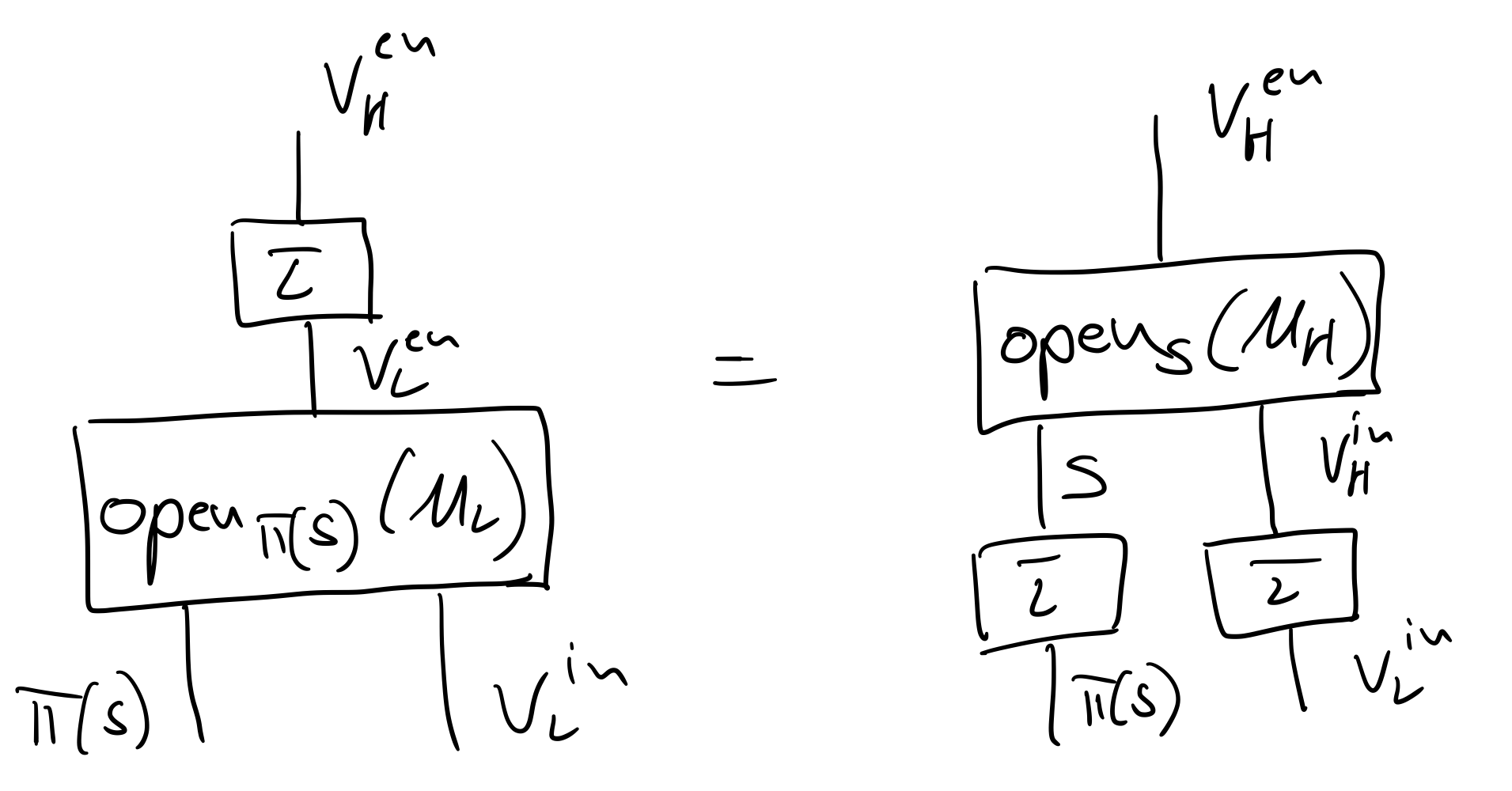

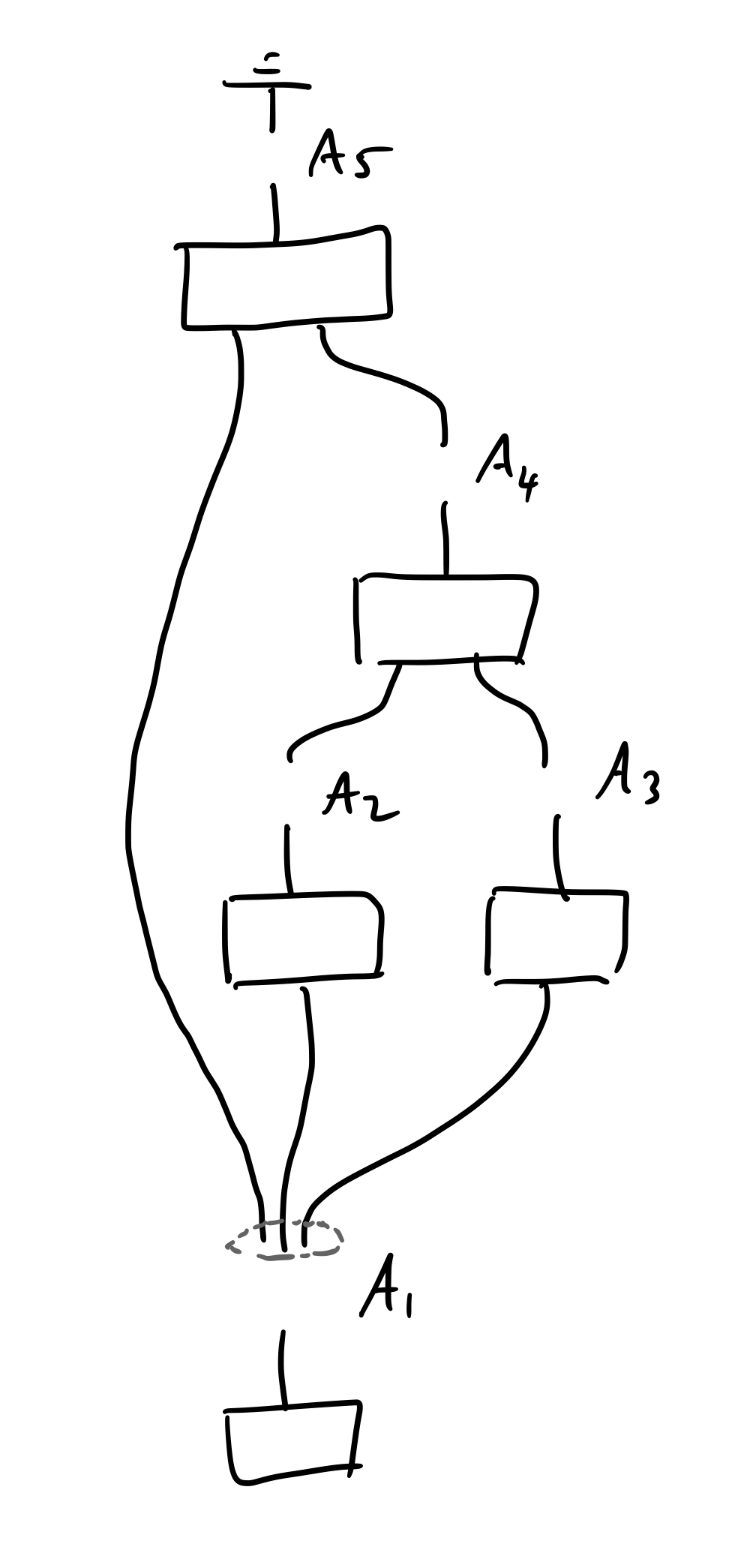

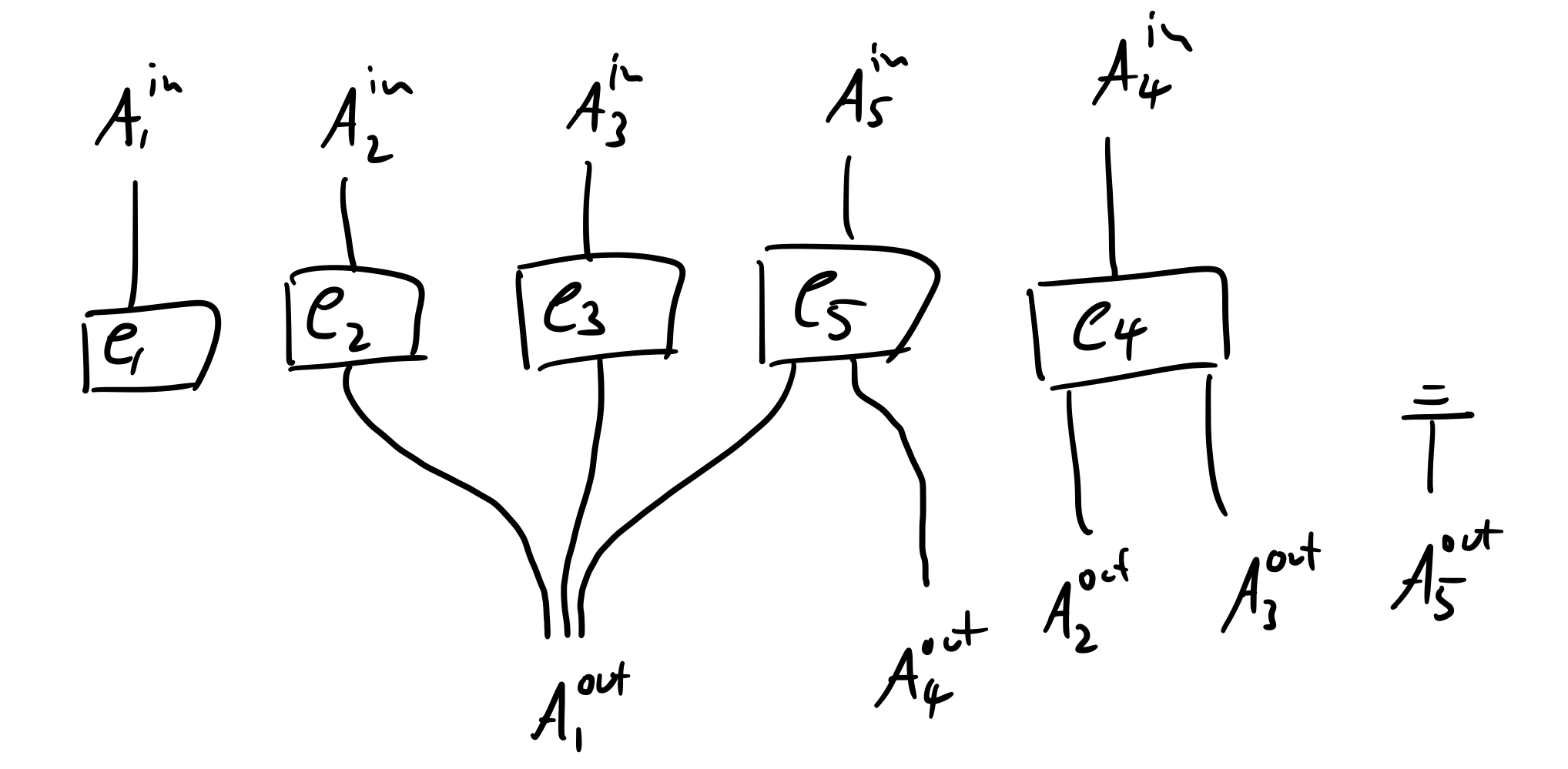

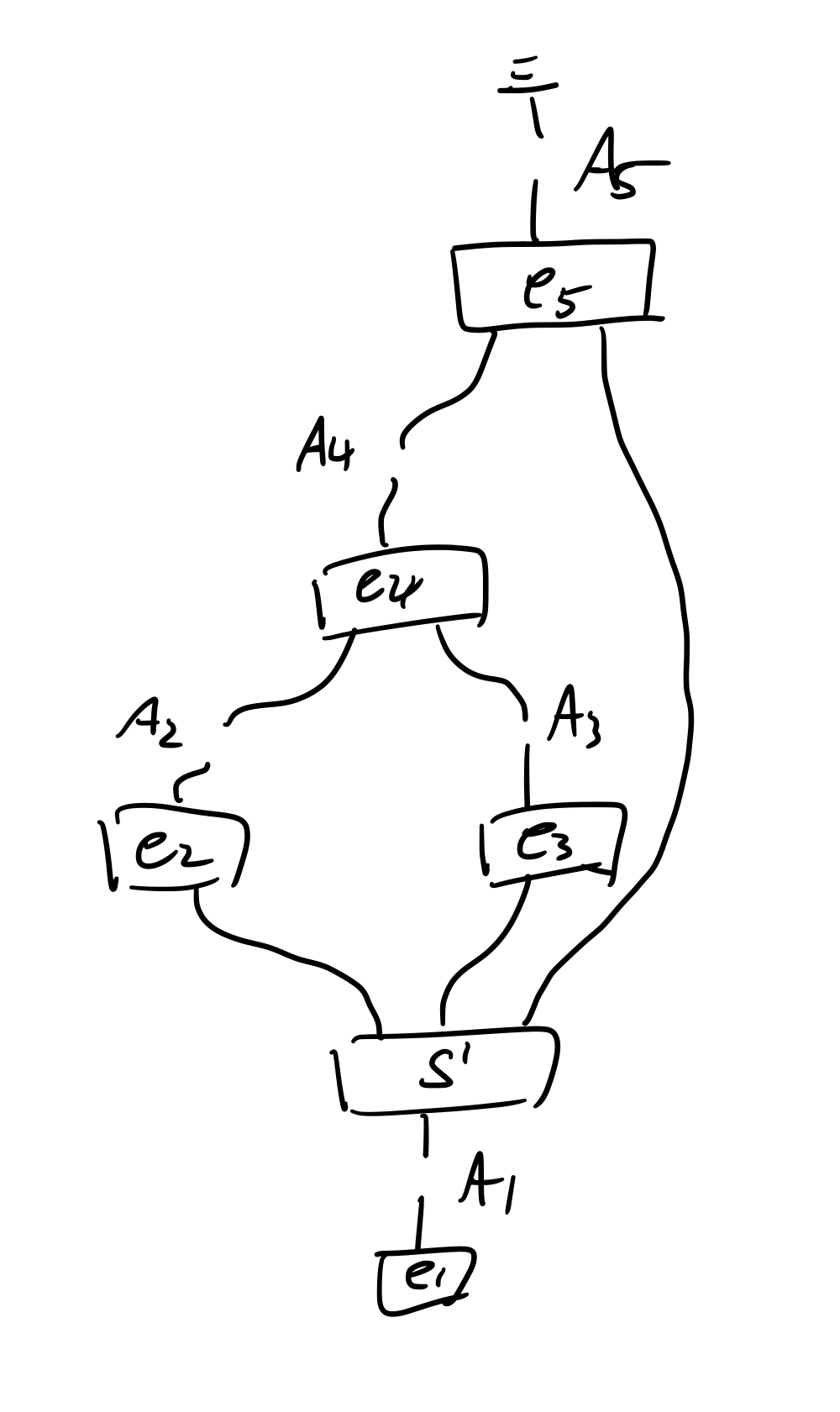

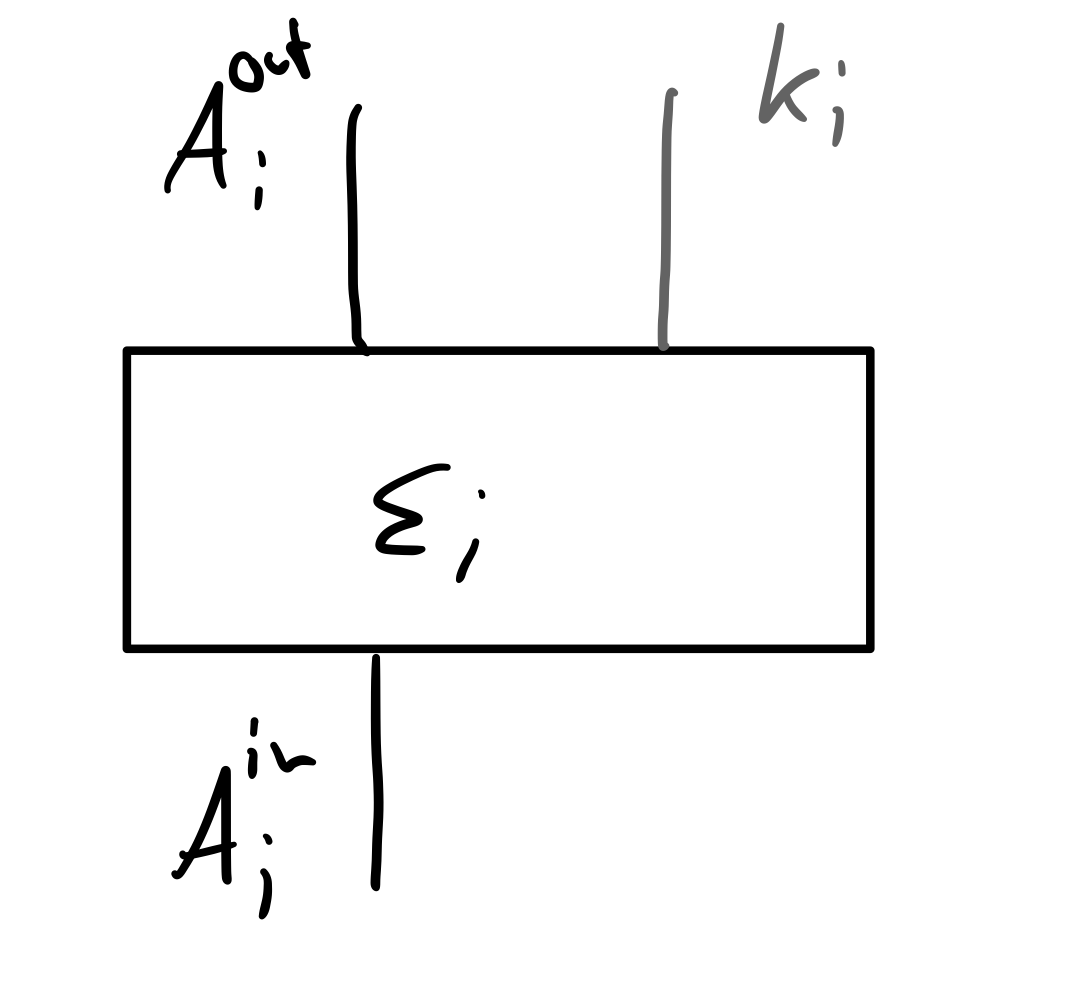

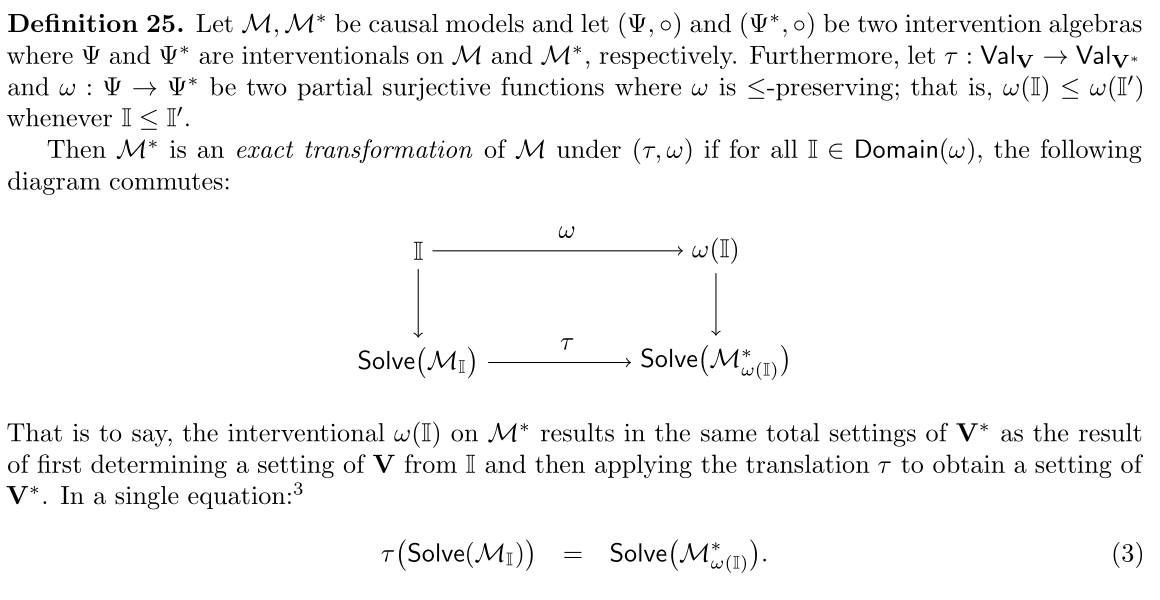

This intuition has been made precise through notions of causal abstraction, pioneered over the past decade in a number of works [CEP17, RWB+17, BH19, BEH20, MGIB23, GIZ+23]. These begin with the causal model framework [Pea09], which formalises causal models and reasoning at a given level of abstraction. A ‘high-level’ causal model H then stands in a causal abstraction relation to a ‘low-level’ one L when there is an abstraction map τ from low-level to high-level variables, together with a relation between interventions at each level, such that intervening at the high level is causally consistent with interventions at the low level.
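To make the consistency requirement concrete, here is a minimal sketch on a toy example that is not taken from the paper. It assumes a low-level model with two independent fair bits X1, X2 and Y := X1 + X2, a high-level model with A and B := A, an abstraction map `tau` sending (x1, x2, y) to (x1 + x2, y), and a hypothetical intervention map `omega` from low-level to high-level Do-interventions. The check is one common way of phrasing consistency: pushing the intervened low-level distribution forward along `tau` should equal the high-level distribution under the mapped intervention. All names and model choices are illustrative assumptions.

```python
from fractions import Fraction
from itertools import product


def low_model(do=None):
    """Joint distribution over (x1, x2, y) in the toy low-level model.

    X1 and X2 are independent fair bits and Y := X1 + X2. `do` maps a
    variable name to the value it is forced to take.
    """
    do = do or {}
    dist = {}
    for u1, u2 in product([0, 1], repeat=2):
        x1 = do.get("X1", u1)
        x2 = do.get("X2", u2)
        y = do.get("Y", x1 + x2)
        state = (x1, x2, y)
        dist[state] = dist.get(state, Fraction(0)) + Fraction(1, 4)
    return dist


def high_model(do=None):
    """Joint distribution over (a, b) in the toy high-level model, B := A."""
    do = do or {}
    prior_a = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}
    support = {do["A"]: Fraction(1)} if "A" in do else prior_a
    dist = {}
    for a, p in support.items():
        state = (a, do.get("B", a))
        dist[state] = dist.get(state, Fraction(0)) + p
    return dist


def tau(state):
    """Abstraction map from low-level states to high-level states."""
    x1, x2, y = state
    return (x1 + x2, y)


def pushforward(dist, f):
    """Distribution of f(state) when state is drawn from `dist`."""
    out = {}
    for state, p in dist.items():
        out[f(state)] = out.get(f(state), Fraction(0)) + p
    return out


def omega(low_do):
    """Hypothetical map from low-level to high-level interventions.

    Only defined when X1 and X2 are set together (or not at all), since
    setting just one of them has no high-level counterpart in this toy.
    """
    high_do = {}
    if ("X1" in low_do) != ("X2" in low_do):
        raise ValueError("X1 and X2 must be intervened on together")
    if "X1" in low_do:
        high_do["A"] = low_do["X1"] + low_do["X2"]
    if "Y" in low_do:
        high_do["B"] = low_do["Y"]
    return high_do


def consistent(low_do):
    """Check tau_*(P_L under do) == P_H under omega(do)."""
    return pushforward(low_model(low_do), tau) == high_model(omega(low_do))


if __name__ == "__main__":
    for low_do in [{}, {"X1": 1, "X2": 0}, {"Y": 2}, {"X1": 1, "X2": 1, "Y": 0}]:
        print(low_do, consistent(low_do))
```

Restricting `omega` to interventions that set X1 and X2 jointly reflects a design choice in this sketch: only some low-level interventions are expected to have a well-defined high-level counterpart.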

Causal abstraction is receiving increasing attention for both foundational and practical reasons in fields ranging from causal inference to AI and philosophy. Despite the varying assumptions, goals and forms of causal model, in each scenario the same basic relation is needed. There are at least three distinct contexts that motivate its study. The first is a generalisation of causal identifiability problems in which the level of our causal hypotheses and quantities of interest differs from the level at which we have empirical data. In this case the low-level causal model is (an approximation of) a ground-truth model which we neither have access to, nor usually care about. Examples range from weather phenomena to human brains [CEP16, CEP17, XB24, HLT25]. Relatedly, abstraction is also key for a causal study of racial discrimination to avoid the ‘loss of modularity’ from wrongly mixing relata of different levels of abstraction [MSEI25].

The second is the study of interpretability and explainability in artificial intelligence and deep learning. Here, similarly, the aim is to relate a complex and obscure low-level model, such as a neural network, to a human-interpretable high-level model. Now, however, the low-level model is perfectly known and not considered (an approximation of) a ground-truth model, but a computational model trained for some task which may or may not be explicitly causal; yet the abstraction relation to a manifestly interpretable, high-level causal model can provide a gold-standard kind of interpretability. This has been pursued for post-hoc XAI methods by a number of works [GRP20, GLIP21, HT22, Bec22, WGI+24, GWP+24, GIZ+23, WGH+24, PMG25], with [GIZ+23] in fact arguing that any method in mechanistic interpretability is best understood from a causal abstraction perspective. Abstraction has also been used for not-post-hoc XAI, as an inductive bias to obtain models which are interpretable by design [GWL+22]. Moreover, it has been argued to provide an account of computational explanation more generally [GHI25].

The third is the problem of causal representation learning, which aims to obtain AI models that have learned appropriate causal variables and relations directly from ‘raw’, low-level data, without (all of) the information as to the appropriate dimensions or number of such variables. This generalises both causal discovery and other forms of (disentangled) representation learning, and has been argued to be essential for robustness and for strong and efficient generalisability in AI [SLB+21]. An increasing number of works have explored questions of its theoretical and practical (im-)possibility, often relying on causal abstraction as a key ingredient [BDHLC22, YXL+23, KSB23, YRC+24, KGSL, LKR25, ZMSB24]. Relatedly, it has been shown that embodied AI relies on veridical world models that have learned causal structure at the right level of abstraction [GGM+24, RE24, REA25].

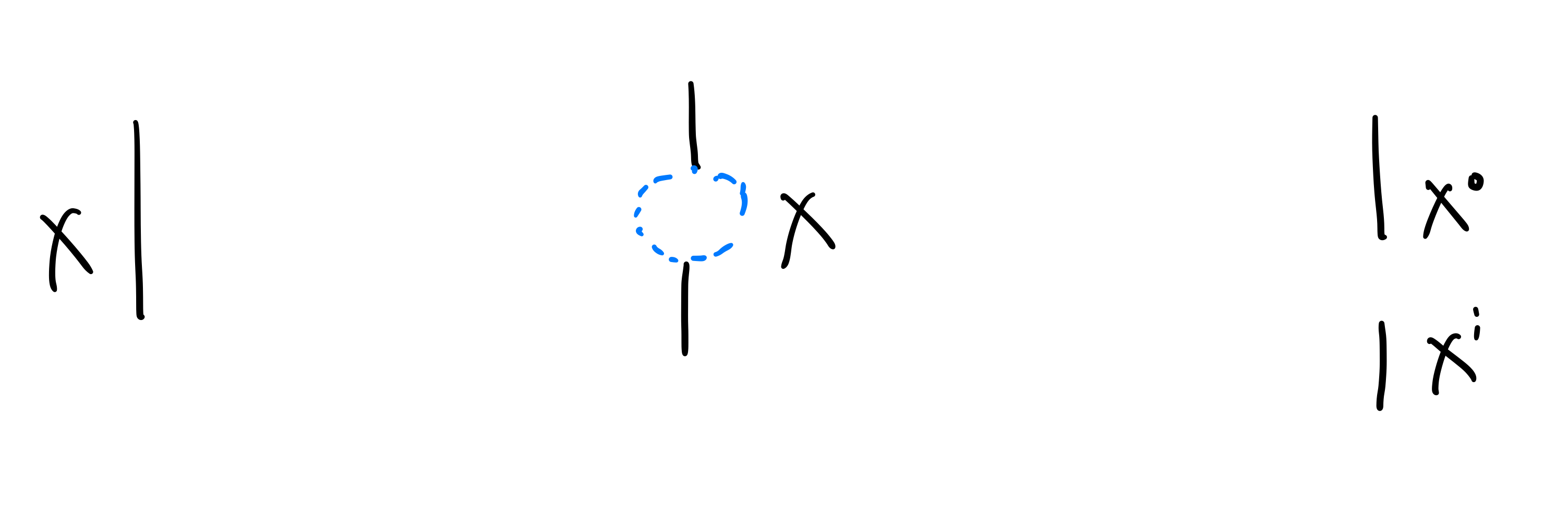

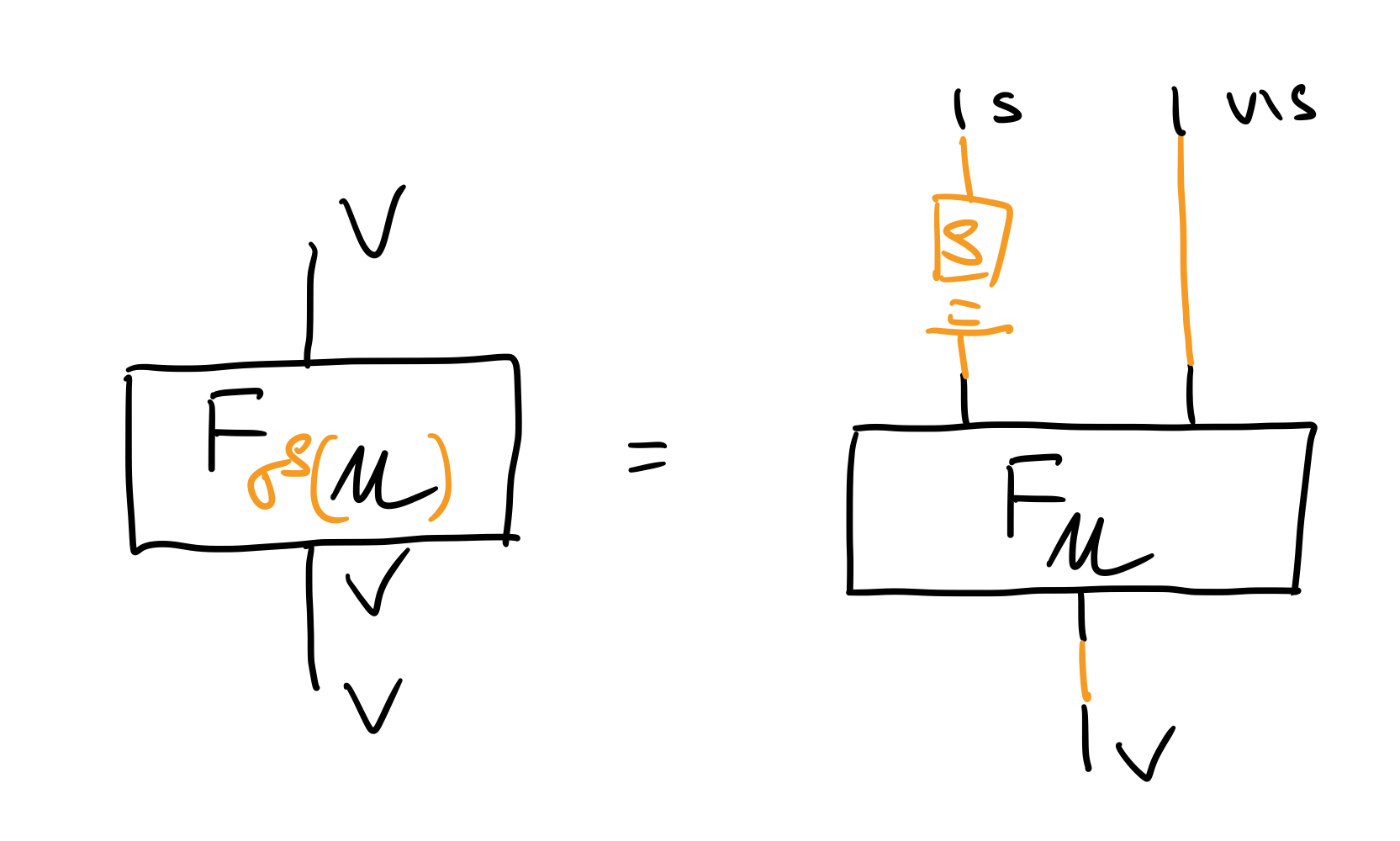

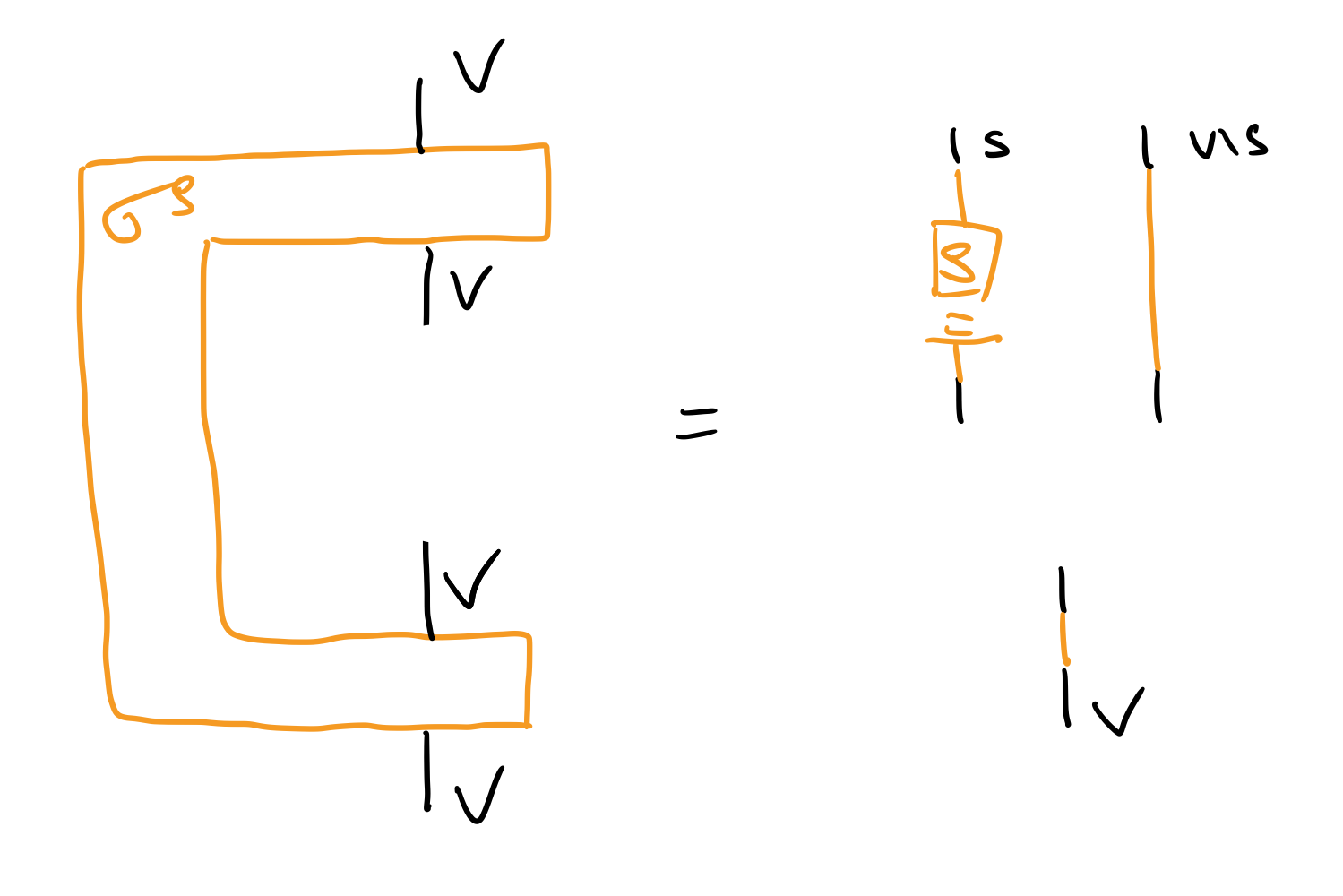

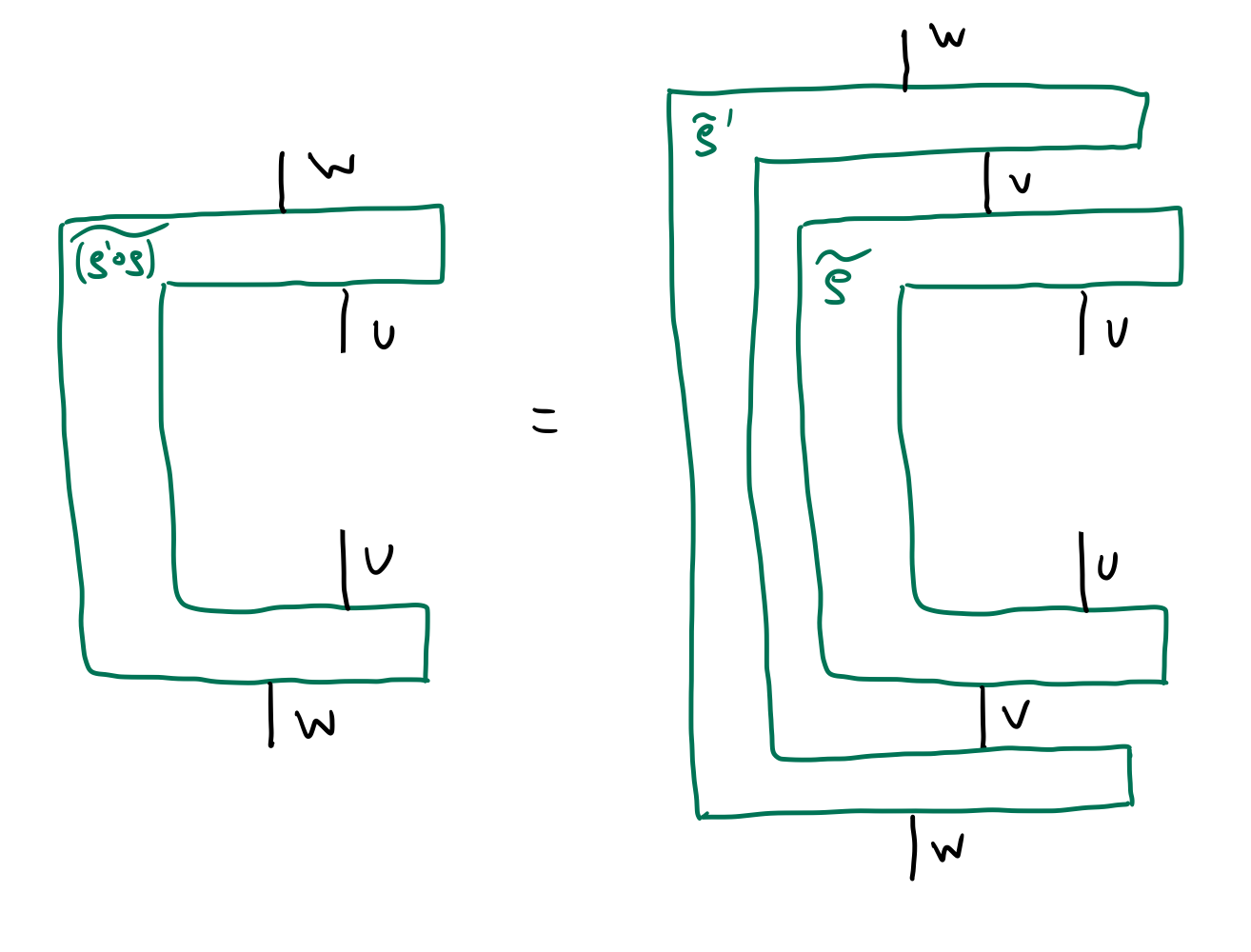

A language for abstraction. Although causal abstraction is a basic and essential notion for science, there is currently no unified formalism for it, nor any consensus as to which of a suite of closely related notions of abstraction is appropriate in which situation. For both future theory and experiments in abstraction, an appropriate conceptual language is needed. Now, causal reasoning is all about the interplay between syntactic causal structure and semantic probabilistic data, while abstraction is about how a model at one level of detail relates to another. Category theory is the mathematics of processes, structure and composition [Coe06, Lei14]; precisely the language that brings structural aspects and their relations to the forefront so they can be studied directly. Indeed, categories are natural for describing both the structure of a model, through monoidal categories and the intuitive graphical language of string diagrams [PZ25, LT23, TLC `

…(Full text truncated)…

This content is AI-processed based on ArXiv data.