SIRUP: A diffusion-based virtual upmixer of steering vectors for highly-directive spatialization with first-order ambisonics

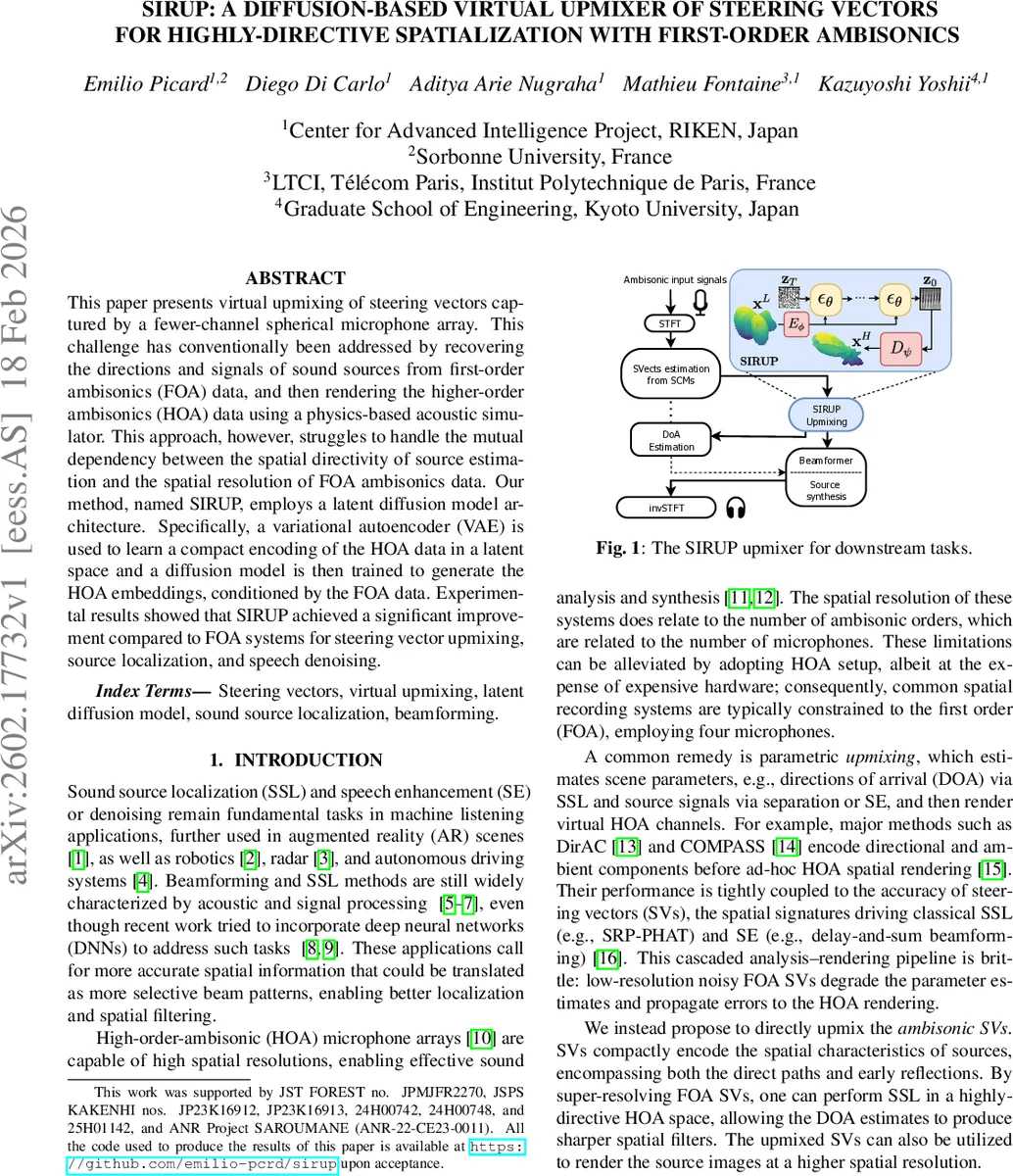

This paper presents virtual upmixing of steering vectors captured by a fewer-channel spherical microphone array. This challenge has conventionally been addressed by recovering the directions and signals of sound sources from first-order ambisonics (FOA) data, and then rendering the higher-order ambisonics (HOA) data using a physics-based acoustic simulator. This approach, however, struggles to handle the mutual dependency between the spatial directivity of source estimation and the spatial resolution of FOA ambisonics data. Our method, named SIRUP, employs a latent diffusion model architecture. Specifically, a variational autoencoder (VAE) is used to learn a compact encoding of the HOA data in a latent space and a diffusion model is then trained to generate the HOA embeddings, conditioned by the FOA data. Experimental results showed that SIRUP achieved a significant improvement compared to FOA systems for steering vector upmixing, source localization, and speech denoising.

💡 Research Summary

The paper introduces SIRUP, a novel diffusion‑based framework that virtually upmixes steering vectors (SVs) captured by a low‑order (first‑order, 4‑channel) ambisonic microphone array to the resolution of a higher‑order (third‑order, 16‑channel) ambisonic system. Traditional pipelines first estimate source directions and signals from FOA data and then render HOA channels with a physics‑based acoustic simulator. This cascaded approach suffers from a mutual dependency: the low spatial resolution of FOA limits the accuracy of direction estimation, and any errors propagate to the final HOA rendering.

SIRUP addresses this by directly super‑resolving the SVs themselves. The method consists of two stages. In the first stage a variational auto‑encoder (VAE) learns a compact latent representation of HOA SVs. The VAE is trained with a composite loss that combines L2 reconstruction, cosine similarity, a perceptual feature‑matching term, and a small KL‑divergence regularizer, ensuring that the latent space faithfully captures both magnitude and phase information of the SVs.

In the second stage a conditional latent diffusion model operates in the VAE’s latent space. The FOA SVs (four channels) are zero‑padded or supplemented with algebraic SVs to form a conditioning tensor c, which is encoded by the VAE encoder Eϕ. A UNet‑based denoiser εθ receives noisy latent samples (starting from z_T ∼ N(0, I)) together with the encoded condition and iteratively denoises them over T = 1000 steps (200 steps at inference). The UNet incorporates dilated convolutions along the frequency axis to enforce coherence across frequency bins and uses cross‑attention to inject the FOA condition at each block. After the reverse diffusion process, the decoder Dψ reconstructs the upmixed HOA SV matrix (\hat A_{up}) (F × 16 complex channels).

The upmixed SVs contain direct‑path and early‑reflection information, enabling more precise downstream tasks. For sound‑source localization (SSL), the authors apply a steered‑response‑power (SRP‑PHAT) grid search using algebraic SVs projected onto the upmixed space, yielding sharper angular spectra. For speech enhancement, beamforming weights are derived from either the measured or algebraic SVs in the HOA domain, allowing a Max‑SINR beamformer that benefits from the higher spatial selectivity. The model can also denoise SVs during inference, improving the quality of the reconstructed signals.

Experiments were conducted in simulated rooms (6 × 4 × 3 m) using the image‑source model in pyroomacoustics. Thirty acoustic scenes were generated for each condition, varying signal‑to‑noise ratio (SNR ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment