Saliency-Aware Multi-Route Thinking: Revisiting Vision-Language Reasoning

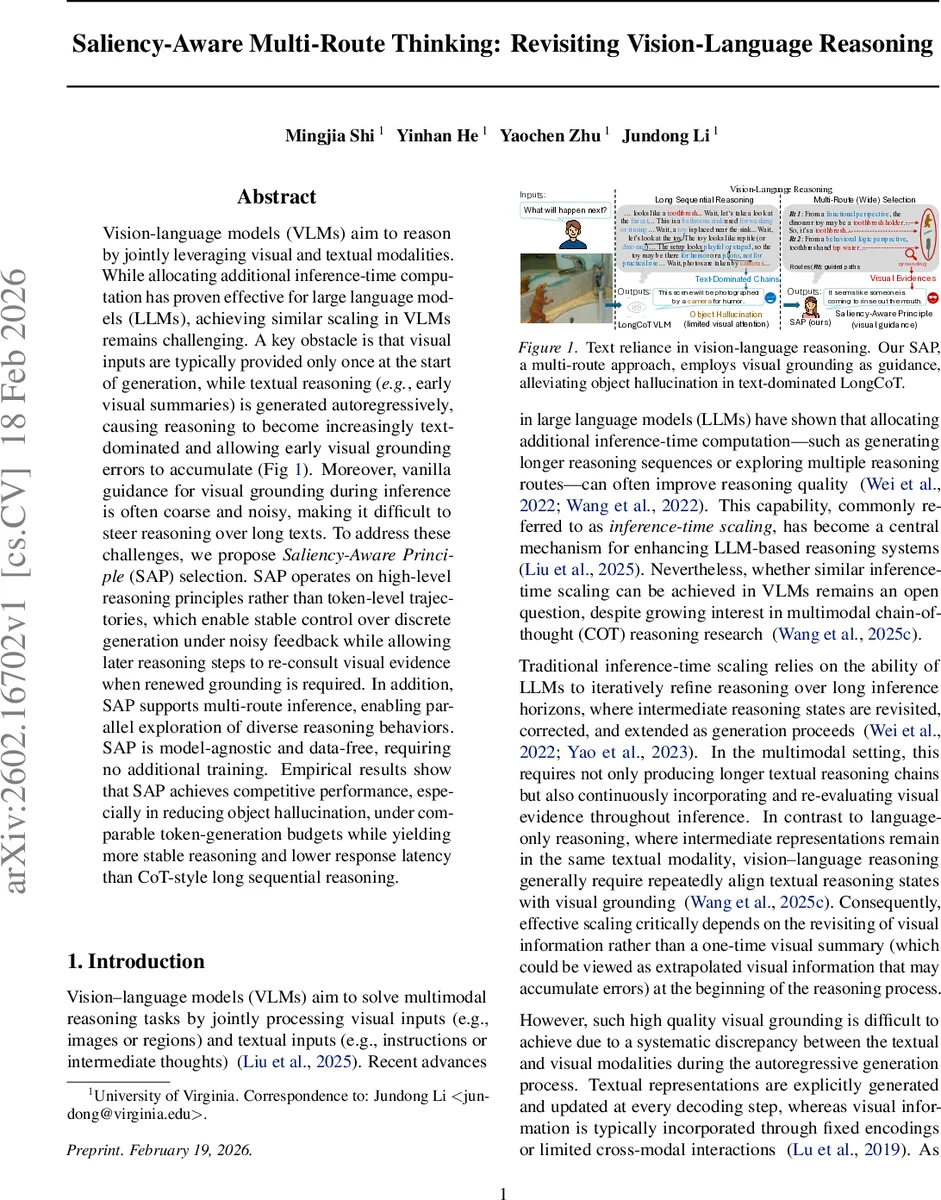

Vision-language models (VLMs) aim to reason by jointly leveraging visual and textual modalities. While allocating additional inference-time computation has proven effective for large language models (LLMs), achieving similar scaling in VLMs remains challenging. A key obstacle is that visual inputs are typically provided only once at the start of generation, while textual reasoning (e.g., early visual summaries) is generated autoregressively, causing reasoning to become increasingly text-dominated and allowing early visual grounding errors to accumulate. Moreover, vanilla guidance for visual grounding during inference is often coarse and noisy, making it difficult to steer reasoning over long texts. To address these challenges, we propose \emph{Saliency-Aware Principle} (SAP) selection. SAP operates on high-level reasoning principles rather than token-level trajectories, which enable stable control over discrete generation under noisy feedback while allowing later reasoning steps to re-consult visual evidence when renewed grounding is required. In addition, SAP supports multi-route inference, enabling parallel exploration of diverse reasoning behaviors. SAP is model-agnostic and data-free, requiring no additional training. Empirical results show that SAP achieves competitive performance, especially in reducing object hallucination, under comparable token-generation budgets while yielding more stable reasoning and lower response latency than CoT-style long sequential reasoning.

💡 Research Summary

The paper tackles a fundamental bottleneck in vision‑language models (VLMs): visual information is typically injected only once at the beginning of generation, after which the model proceeds autoregressively in the textual modality. As the reasoning chain grows, this leads to a “text‑dominated” regime where early visual grounding errors accumulate, causing object hallucination and biased reasoning. While large language models (LLMs) benefit from inference‑time scaling—either by generating longer chain‑of‑thought (CoT) sequences or by exploring multiple reasoning paths—such scaling does not directly translate to VLMs because visual evidence is not revisited during long inference horizons.

To overcome this, the authors introduce Saliency‑Aware Principle (SAP) selection, a model‑agnostic, data‑free inference‑time optimization framework that operates on high‑level reasoning principles rather than on token‑level trajectories. A principle is a compact textual description that specifies how the model should use visual evidence (e.g., “re‑examine salient objects after each hypothesis”, “verify spatial relations by checking relative positions”). The same principle can give rise to many concrete token sequences, allowing the search space to be compressed from the combinatorial set of routes to a more tractable set of principles.

SAP couples this principle space with a visual saliency signal extracted by a black‑box grounding module (denoted GROUND). Salient image regions (objects, text snippets, etc.) serve as a modality‑aware reference for evaluating whether a principle successfully leverages visual evidence. Importantly, feedback during inference is treated as ordinal (pairwise preferences) rather than absolute scalar scores, reflecting the noisy, evaluator‑dependent nature of multimodal supervision.

The optimization proceeds via an evolutionary population‑based algorithm:

- Initialization – Sample μ + λ principles (population size) using the language model’s prompt generation capability.

- Multi‑route inference – For each principle, generate τ reasoning routes, each producing a “thinking” output (r) and a summary (s).

- Discrete evaluation – Apply a black‑box comparator that yields relative rankings among the routes, then map these rankings to internal scores.

- Selection & variation – Keep the top μ elite principles, discard the rest, and generate λ new candidates by mutating or recombining elites (e.g., prompting the model to “refine” an elite principle).

- Iterate – Repeat steps 2‑4 for several generations, progressively steering the population toward principles that consistently align textual reasoning with salient visual cues.

Because the evaluation relies on saliency rather than raw token probabilities, SAP remains robust to noisy feedback and does not require task‑specific heuristics. Moreover, the multi‑route design allows parallel execution: each principle’s τ routes can be processed independently, yielding higher throughput and lower latency compared with sequential Long‑CoT, where each token depends on its predecessor.

Empirical validation is performed on the Qwen‑3‑VL‑8B‑Thinking model across several benchmarks: COCO‑VQA (object‑centric visual QA), OCR‑VQA (text‑in‑image QA), and POPE‑Recall (object presence evaluation). Under comparable token budgets (≈150 tokens per query), SAP achieves:

- Significant reduction in object hallucination (≈30 % relative drop) while maintaining or slightly improving answer accuracy (2‑4 % absolute gain).

- Lower response latency (15‑20 % faster) due to parallel multi‑route inference.

- Comparable or better overall performance relative to proprietary VLMs that rely on longer sequential CoT reasoning.

The authors also conduct ablation studies showing that (a) using saliency as the evaluation signal is crucial—random or uniform evaluation leads to performance collapse, (b) increasing the number of routes τ improves robustness up to a point, and (c) the evolutionary selection outperforms simple random sampling or beam search in the principle space.

Limitations are acknowledged: the saliency extractor depends on pretrained object detectors, which may miss subtle or abstract visual cues; the principle generation currently relies on prompting the same language model, which could inherit its biases; and the method has been tested primarily on image‑question answering tasks, leaving video or multimodal dialog scenarios unexplored.

In summary, SAP offers a practical, scalable, and modality‑aware approach to inference‑time scaling for VLMs. By shifting control from low‑level token trajectories to high‑level, saliency‑grounded principles, it mitigates early grounding errors, enables parallel multi‑route exploration, and delivers better accuracy‑latency trade‑offs without any additional training data. The work opens avenues for future research on richer saliency cues, domain‑specific principle libraries, and integration of human‑in‑the‑loop feedback to further enhance multimodal reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment