Error Propagation and Model Collapse in Diffusion Models: A Theoretical Study

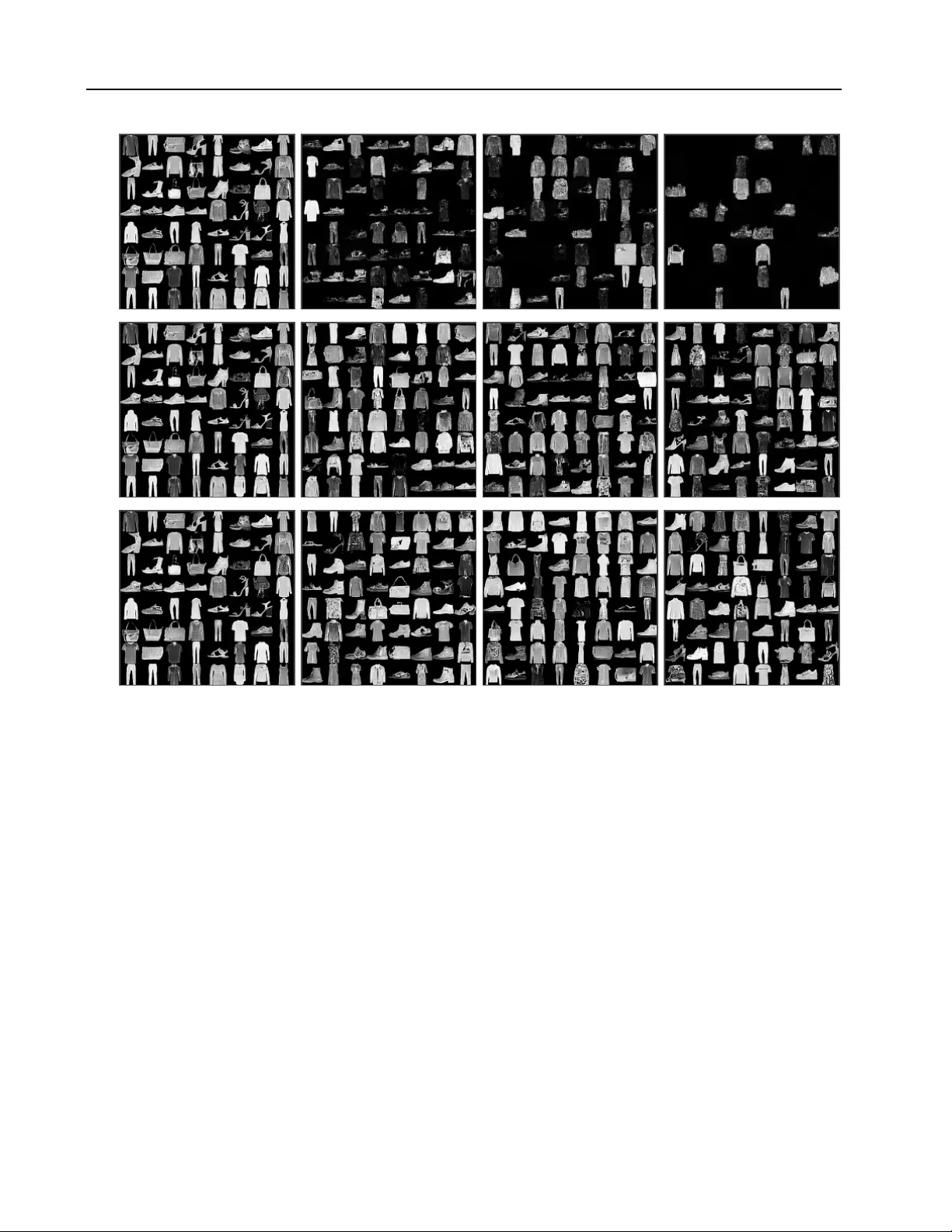

Machine learning models are increasingly trained or fine-tuned on synthetic data. Recursive training on such data has been observed to significantly degrade performance across a wide range of tasks, a degradation often characterized by a progressive drift away from …

Authors: Nail B. Khelifa, Richard E. Turner, Ramji Venkataramanan