GRIMM: Genetic stRatification for Inference in Molecular Modeling

The vast majority of biological sequences encode unknown functions and bear little resemblance to experimentally characterized proteins, limiting both our understanding of biology and our ability to harness functional potential for the bioeconomy. Predicting enzyme function from sequence remains a central challenge in computational biology, complicated by low sequence diversity and imbalanced label support in publicly available datasets. Models trained on these data can overestimate performance and fail to generalize. To address this, we introduce GRIMM (Genetic stRatification for Inference in Molecular Modeling), a benchmark for enzyme function prediction that employs genetic stratification: sequences are clustered by similarity and clusters are assigned exclusively to training, validation, or test sets. This ensures that sequences from the same cluster do not appear in multiple partitions. GRIMM produces multiple test sets: a closed-set test with the same label distribution as training (Test-1) and an open-set test containing novel labels (Test-2), serving as a realistic out-of-distribution proxy for discovering novel enzyme functions. While demonstrated on enzymes, this approach is generalizable to any sequence-based classification task where inputs can be clustered by similarity. By formalizing a splitting strategy often used implicitly, GRIMM provides a unified and reproducible framework for closed- and open-set evaluation. The method is lightweight, requiring only sequence clustering and label annotations, and can be adapted to different similarity thresholds, data scales, and biological tasks. GRIMM enables more realistic evaluation of functional prediction models on both familiar and unseen classes and establishes a benchmark that more faithfully assesses model performance and generalizability.

💡 Research Summary

The paper introduces GRIMM (Genetic stRatification for Inference in Molecular Modeling), a benchmark designed to provide a more realistic assessment of enzyme‑function prediction models. The authors begin by highlighting two pervasive issues in publicly available protein datasets: low sequence diversity and severe label imbalance. Because most sequences have unknown functions and the annotated portion is heavily skewed toward a few well‑studied families, models trained on these data often overfit to the specific evolutionary neighborhoods present in the training set. Conventional train‑validation‑test splits—typically random or proportion‑based—allow sequences from the same evolutionary cluster to appear in multiple partitions, inflating reported performance and masking poor generalization to truly novel proteins.

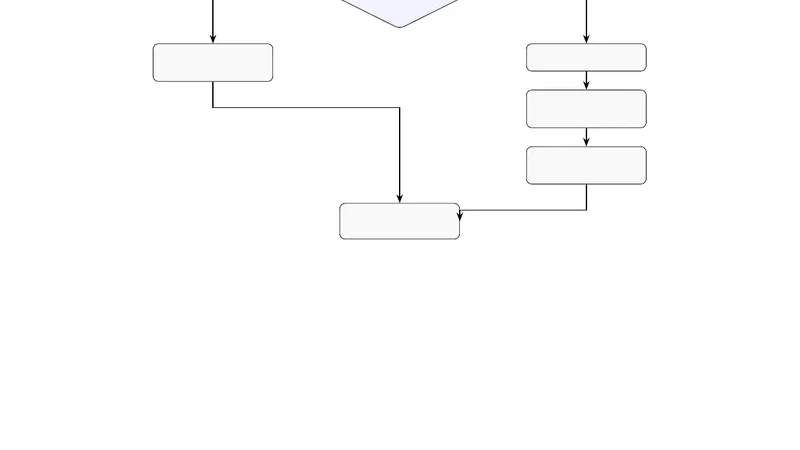

GRIMM addresses this problem through “genetic stratification.” First, all sequences are clustered by similarity using a fast tool such as CD‑HIT or MMseqs2, with a user‑defined identity threshold (e.g., 30%). Each resulting cluster is then assigned exclusively to one of three partitions: training, validation, or testing. This guarantees that no cluster is ever split across partitions, so the test set contains only sequences whose clusters were unseen during training.
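The cluster-exclusive assignment can be illustrated with a minimal sketch. It assumes the clustering has already been run and reduced to a dictionary mapping each sequence ID to a cluster ID. The function name `cluster_split`, the 80/10/10 fractions, and the greedy "largest remaining deficit" rule are illustrative assumptions, not the authors' implementation.

```python
import random
from collections import defaultdict

def cluster_split(seq_to_cluster, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole clusters to train/val/test (hypothetical helper).

    seq_to_cluster: dict mapping sequence ID -> cluster ID (from any clustering tool).
    Returns a dict mapping sequence ID -> partition name.
    """
    names = ("train", "val", "test")

    # Group sequence IDs by their cluster.
    clusters = defaultdict(list)
    for seq_id, cluster_id in seq_to_cluster.items():
        clusters[cluster_id].append(seq_id)

    # Shuffle clusters deterministically so the split is reproducible.
    cluster_ids = sorted(clusters)
    random.Random(seed).shuffle(cluster_ids)

    total = len(seq_to_cluster)
    targets = [f * total for f in fractions]
    counts = [0, 0, 0]
    assignment = {}
    for cid in cluster_ids:
        # Greedy choice (an assumption): fill the partition that is
        # furthest below its target number of sequences.
        i = max(range(3), key=lambda k: targets[k] - counts[k])
        for seq_id in clusters[cid]:
            assignment[seq_id] = names[i]
        counts[i] += len(clusters[cid])
    return assignment

# Toy input: 50 sequences spread over 7 clusters.
toy = {f"s{i}": f"c{i % 7}" for i in range(50)}
toy_assignment = cluster_split(toy)
```

Because every member of a cluster receives the same partition name, no cluster can span two partitions. The sketch does not try to balance labels across partitions, which a real benchmark would also need to consider.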

Two complementary test sets are defined. Test‑1 (closed‑set) preserves the label distribution of the training data while still enforcing cluster exclusivity. It measures how well a model can recognize known enzyme classes when presented with new members of the same evolutionary families. Test‑2 (open‑set) deliberately introduces novel labels that were absent from the training set, mimicking the out‑of‑distribution scenario encountered when researchers search for previously uncharacterized enzymatic activities. High performance on Test‑2 indicates that a model can extrapolate beyond the label space it was trained on, a crucial capability for real‑world discovery.
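One plausible way to derive the two test sets is sketched below, assuming a single label per sequence and an existing partition assignment. The function `split_closed_open` and the rule "a label is novel if it never occurs in training" are assumptions for illustration; the summary does not specify how the authors construct Test-1 and Test-2.

```python
def split_closed_open(assignment, labels):
    """Split held-out test sequences into closed-set and open-set lists.

    assignment: dict sequence ID -> "train" | "val" | "test"
    labels:     dict sequence ID -> label (one label per sequence, an assumption)
    """
    # Labels the model has seen during training.
    train_labels = {labels[s] for s, part in assignment.items() if part == "train"}

    test_ids = sorted(s for s, part in assignment.items() if part == "test")
    # Closed set: the label is known from training, the cluster is not.
    test1 = [s for s in test_ids if labels[s] in train_labels]
    # Open set: the label never appears in training.
    test2 = [s for s in test_ids if labels[s] not in train_labels]
    return test1, test2

# Toy example with made-up sequence IDs.
assignment = {"s1": "train", "s2": "train", "s3": "test", "s4": "test", "s5": "val"}
labels = {
    "s1": "EC 1.1.1.1",
    "s2": "EC 2.7.1.1",
    "s3": "EC 1.1.1.1",
    "s4": "EC 3.4.21.4",
    "s5": "EC 2.7.1.1",
}
test1, test2 = split_closed_open(assignment, labels)
print(test1)  # ['s3']
print(test2)  # ['s4']
```

This sketch only separates test sequences by label novelty. Matching the Test-1 label distribution to that of training, as the summary describes, would need an additional stratified sampling step.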

The authors emphasize several practical advantages. The workflow is lightweight: it requires only sequence data, a clustering step, and existing label annotations. By adjusting the similarity threshold, users can tune the difficulty of the benchmark, from relatively easy (high identity) to highly stringent (low identity) splits. Moreover, because the partitioning operates at the cluster level, it can help mitigate label imbalance, since rare classes can be distributed more evenly across partitions.

Limitations are also discussed. The choice of clustering algorithm and identity cutoff strongly influences the size and composition of each partition; overly stringent (low‑identity) thresholds may shrink the training set to the point where deep models cannot learn effectively. Conversely, overly permissive (high‑identity) thresholds may leave residual homology between partitions, re‑introducing leakage. In the open‑set scenario, the representativeness of novel labels depends on the availability of sufficiently many examples; if a new functional class is extremely rare, Test‑2 may not provide a robust estimate of out‑of‑distribution performance.
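A quick diagnostic can make these trade-offs visible for a given threshold. The sketch below reports partition sizes and flags labels with few examples in each partition. The function `partition_report` and the `min_support` cutoff are hypothetical and not part of GRIMM as described.

```python
from collections import Counter

def partition_report(assignment, labels, min_support=5):
    """Summarize a split (hypothetical helper).

    Returns (sizes, low_support):
      sizes:       dict partition -> number of sequences
      low_support: dict partition -> sorted labels with < min_support examples
    """
    sizes = Counter(assignment.values())

    # Count label occurrences separately within each partition.
    per_label = {}
    for seq_id, part in assignment.items():
        per_label.setdefault(part, Counter())[labels[seq_id]] += 1

    low_support = {
        part: sorted(label for label, n in counts.items() if n < min_support)
        for part, counts in per_label.items()
    }
    return dict(sizes), low_support

# Toy example: "EC2" has a single test example, so it is flagged.
sizes, low = partition_report(
    {"a": "train", "b": "train", "c": "test"},
    {"a": "EC1", "b": "EC1", "c": "EC2"},
    min_support=2,
)
```

Running such a report at several identity thresholds is one way to see when the training set becomes too small or when novel classes in the open-set test are too sparse to support a stable estimate.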

Beyond enzyme function prediction, the GRIMM framework is applicable to any sequence‑based classification task—such as protein‑ligand binding prediction, variant effect annotation, or transcription‑factor binding site identification—where inputs can be meaningfully clustered. Future work could explore multi‑level stratification (e.g., using several identity thresholds) to evaluate hierarchical generalization, or integrate GRIMM with meta‑learning and domain‑adaptation techniques to improve open‑set performance. Combining sequence clustering with structural embeddings may further refine the benchmark by capturing functional signals that pure sequence similarity misses.

In summary, GRIMM formalizes a splitting strategy that has often been applied implicitly in ad‑hoc studies, providing a reproducible, scalable, and biologically grounded benchmark. By delivering both closed‑set and open‑set evaluations, it enables researchers to gauge not only how well models memorize known enzyme classes but also how effectively they can discover genuinely new functions, thereby advancing both computational methodology and practical bio‑discovery pipelines.
