Multi-Channel Replay Speech Detection using Acoustic Maps

Replay attacks remain a critical vulnerability for automatic speaker verification systems, particularly in real-time voice assistant applications. In this work, we propose acoustic maps as a novel spatial feature representation for replay speech detection from multi-channel recordings. Derived from classical beamforming over discrete azimuth and elevation grids, acoustic maps encode directional energy distributions that reflect physical differences between human speech radiation and loudspeaker-based replay. A lightweight convolutional neural network is designed to operate on this representation, achieving competitive performance on the ReMASC dataset with approximately 6k trainable parameters. Experimental results show that acoustic maps provide a compact and physically interpretable feature space for replay attack detection across different devices and acoustic environments.

💡 Research Summary

Replay attacks—where an adversary records a genuine speaker’s voice and replays it through a loudspeaker—remain a pressing security threat for automatic speaker verification (ASV) systems, especially in real‑time voice‑assistant scenarios where computational resources are limited. While most prior work has focused on spectral representations such as MFCC, LFCC, or CQCC combined with deep classifiers, these approaches largely ignore the spatial characteristics that differentiate a naturally radiated human voice from a loudspeaker‑generated replay. In this paper the authors introduce “acoustic maps,” a novel spatial feature derived from classical beamforming applied over a discrete azimuth‑elevation grid, to capture directional energy distributions in multi‑channel recordings.

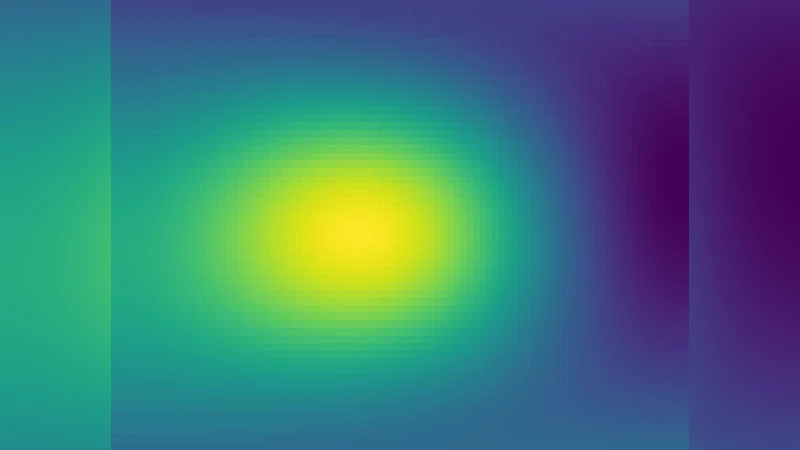

The method proceeds as follows. First, a multi‑microphone array records the incoming audio. For each short‑time frame, beamformer weights steer the array toward a predefined set of azimuth and elevation angles (e.g., a 5° grid covering the full sphere). Applying these weights yields a scalar energy estimate for each grid point, effectively measuring how much acoustic power arrives from that direction. Arranging these energies on the azimuth‑elevation grid produces a 2‑D “acoustic map” of the spatial energy pattern for the frame. Concatenating successive maps along the time axis forms a 3‑D tensor (time × azimuth × elevation), which serves as the input to a lightweight convolutional neural network (CNN).
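The map construction described above can be sketched in NumPy as a delay‑and‑sum steered response power computation. This is a minimal sketch under our own assumptions, not the authors' code: far‑field plane‑wave propagation, a speed of sound of 343 m/s, known microphone coordinates, and a plain delay‑and‑sum beamformer standing in for the "classical beamforming" step. The function names, the 4‑mic tetrahedral demo array, the 10° demo grid, and the frequency range are all hypothetical choices.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at about 20 °C


def steering_vectors(mic_pos, azimuths, elevations, freqs):
    """Far-field steering vectors with shape (n_az, n_el, n_freq, n_mics).

    mic_pos: (n_mics, 3) microphone coordinates in metres.
    azimuths, elevations: 1-D grid angles in radians.
    freqs: 1-D STFT bin centre frequencies in Hz.
    """
    az, el = np.meshgrid(azimuths, elevations, indexing="ij")
    # Unit vectors pointing from the array toward each look direction.
    u = np.stack([np.cos(el) * np.cos(az),
                  np.cos(el) * np.sin(az),
                  np.sin(el)], axis=-1)                  # (n_az, n_el, 3)
    # A plane wave from direction u reaches mic m (p_m . u) / c seconds
    # earlier than the array origin, i.e. a phase lead of 2*pi*f*(p_m . u)/c.
    lead = u @ mic_pos.T / SPEED_OF_SOUND               # (n_az, n_el, n_mics)
    return np.exp(2j * np.pi * freqs[:, None] * lead[:, :, None, :])


def acoustic_map(frame_stft, steer):
    """Delay-and-sum output energy for every grid direction in one frame.

    frame_stft: (n_freq, n_mics) complex STFT of a single frame.
    steer: array returned by steering_vectors().
    Returns an (n_az, n_el) map.
    """
    n_mics = frame_stft.shape[-1]
    # Delay-and-sum beamformer: y(f) = a(f)^H x(f) / M for each direction.
    y = np.einsum("aefm,fm->aef", steer.conj(), frame_stft) / n_mics
    return np.sum(np.abs(y) ** 2, axis=-1)              # sum over frequency


def acoustic_map_sequence(stft_frames, steer):
    """Stack per-frame maps into a (time, azimuth, elevation) tensor."""
    return np.stack([acoustic_map(frame, steer) for frame in stft_frames])


# Demo: a noise-free plane wave from azimuth 60°, elevation 30° on a
# hypothetical 4-mic tetrahedral array with 5 cm spacing.
mic_pos = np.array([[0.0, 0.0, 0.0], [0.05, 0.0, 0.0],
                    [0.0, 0.05, 0.0], [0.0, 0.0, 0.05]])
freqs = np.linspace(100.0, 4000.0, 40)
azimuths = np.deg2rad(np.arange(0, 360, 10))            # 36 azimuths
elevations = np.deg2rad(np.arange(-90, 91, 10))         # 19 elevations
steer = steering_vectors(mic_pos, azimuths, elevations, freqs)

true_dir = steering_vectors(mic_pos, np.deg2rad([60.0]),
                            np.deg2rad([30.0]), freqs)[0, 0]  # (n_freq, n_mics)
frames = np.stack([true_dir] * 5)                       # 5 identical frames
maps = acoustic_map_sequence(frames, steer)             # (5, 36, 19)
peak = np.unravel_index(np.argmax(maps[0]), maps[0].shape)
print(maps.shape, np.rad2deg(azimuths[peak[0]]), np.rad2deg(elevations[peak[1]]))
```

For a single noise‑free plane wave the map peaks at the grid point matching the source direction. A loudspeaker replay and a human talker would be expected to differ in how this energy spreads over the grid, which is the cue the CNN is meant to learn.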

The CNN architecture is deliberately minimal: two 3 × 3 convolutional layers with batch normalization and ReLU, followed by global average pooling and a single fully‑connected layer that outputs a softmax over the two classes (genuine vs. replay). The total number of trainable parameters is roughly 6k, and the model requires under 1M FLOPs per inference, making it suitable for on‑device deployment. Training uses cross‑entropy loss with the Adam optimizer; class imbalance is mitigated by applying higher loss weights to the under‑represented class.
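To make the parameter budget concrete, it can be tallied by hand for a network of this shape. The plain‑Python sketch below assumes hypothetical widths (1 input channel, 16 and 32 filters, biases on every conv) because the exact configuration is not given here; with these choices the total is 4,962 parameters, the same order as the reported ~6k. It also shows a class‑weighted cross‑entropy with inverse‑frequency weights, which is one common way to implement the loss weighting described above, not necessarily the paper's exact scheme.

```python
import math


def conv_params(c_in, c_out, k=3):
    """Weights plus biases of a k x k 2-D convolution."""
    return c_out * c_in * k * k + c_out


def bn_params(c):
    """Trainable scale (gamma) and shift (beta) of batch normalization."""
    return 2 * c


def linear_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


def tiny_cnn_params(c_in=1, c1=16, c2=32, n_classes=2):
    """(Conv-BN-ReLU) x 2 -> global average pooling -> fully connected.

    ReLU and pooling have no trainable parameters. Widths are hypothetical.
    """
    return (conv_params(c_in, c1) + bn_params(c1)
            + conv_params(c1, c2) + bn_params(c2)
            + linear_params(c2, n_classes))


def class_weights(counts):
    """Inverse-frequency weights: every class gets the same total weight."""
    total, n = sum(counts), len(counts)
    return [total / (n * c) for c in counts]


def weighted_cross_entropy(logits, labels, weights):
    """Batch mean of w[y] * (-log softmax(z)[y])."""
    losses = []
    for z, y in zip(logits, labels):
        m = max(z)  # subtract the max for a numerically stable log-sum-exp
        log_norm = m + math.log(sum(math.exp(v - m) for v in z))
        losses.append(weights[y] * (log_norm - z[y]))
    return sum(losses) / len(losses)


print(tiny_cnn_params())               # 160 + 32 + 4640 + 64 + 66
print(class_weights([900, 100]))       # hypothetical class counts
```

Global average pooling is what keeps the fully connected layer tiny: it sees only one value per filter, so its size is independent of the map resolution.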

Experiments are conducted on the publicly available ReMASC dataset, which contains multi‑channel recordings (4‑channel and 8‑channel configurations) captured in a variety of indoor and outdoor environments, using several loudspeaker models and playback devices. Evaluation metrics include Equal Error Rate (EER) and minimum tandem detection cost function (min‑tDCF). The acoustic‑map‑based system achieves an EER of 4.2 % on the 8‑channel setup, outperforming strong baselines that rely on LFCC (EER ≈ 5.0 %) and CQCC (EER ≈ 5.3 %). Notably, the proposed method maintains its advantage across different array geometries and acoustic conditions, demonstrating robustness to reverberation and background noise.
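Because EER is the headline metric, a minimal threshold‑sweep estimate may help clarify what the reported percentages measure. This sketch assumes higher scores mean "more likely genuine" and picks the observed threshold where the false‑rejection and false‑acceptance rates are closest, without ROC interpolation; it is an illustration, not the official scoring script for ReMASC or ASVspoof. min‑tDCF is omitted because it additionally requires the error rates of a companion ASV system and a set of cost parameters.

```python
import numpy as np


def compute_eer(genuine_scores, replay_scores):
    """Approximate equal error rate and the threshold where it occurs.

    FRR: fraction of genuine trials scored below the threshold (rejected).
    FAR: fraction of replay trials scored at or above it (accepted).
    Every observed score is tried as a threshold; the EER is the mean of
    FRR and FAR at the threshold where the two are closest.
    """
    genuine = np.asarray(genuine_scores, dtype=float)
    replay = np.asarray(replay_scores, dtype=float)
    thresholds = np.sort(np.concatenate([genuine, replay]))
    frr = np.array([np.mean(genuine < t) for t in thresholds])
    far = np.array([np.mean(replay >= t) for t in thresholds])
    i = np.argmin(np.abs(frr - far))
    return (frr[i] + far[i]) / 2, thresholds[i]


# Toy scores: one genuine trial scores below one replay trial.
eer, thr = compute_eer([0.4, 0.6, 0.8, 0.9], [0.1, 0.3, 0.5, 0.7])
print(eer, thr)
```

With the toy scores, one of four genuine trials is rejected and one of four replay trials is accepted at the chosen threshold, giving an EER of 25 %.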

The key contributions of the work are threefold. First, it proposes a physically interpretable feature, acoustic maps, that directly encodes the spatial energy distribution of the sound field, thereby exposing the directional bias inherent in replay attacks. Second, it shows that a very compact CNN can exploit this representation to achieve competitive detection performance with negligible computational overhead. Third, it validates the approach on a challenging multi‑channel benchmark, confirming its suitability for real‑world voice‑assistant deployments.

The authors acknowledge several limitations. The beamforming grid is fixed a priori; if the microphone array geometry changes dramatically, the grid may no longer align with the true source directions, potentially degrading performance. Moreover, highly reverberant environments can smear directional cues, reducing the discriminative power of acoustic maps. Future research directions include adaptive grid design, unsupervised learning of spatial embeddings, and fusion of acoustic maps with conventional spectral features to build a multimodal detector that further improves robustness and generalization.

In summary, by shifting the focus from purely spectral analysis to spatial energy modeling, this paper opens a new avenue for lightweight, interpretable, and effective replay‑speech detection in multi‑channel ASV systems.
