Discovering Unknown Inverter Governing Equations via Physics-Informed Sparse Machine Learning

Discovering the unknown governing equations of grid-connected inverters from external measurements is highly attractive for analyzing modern inverter-intensive power systems. However, existing methods struggle to balance the identification of unmodeled nonlinearities with the preservation of physical consistency. To address this, this paper proposes a Physics-Informed Sparse Machine Learning (PISML) framework. The architecture integrates a sparse symbolic backbone, which captures the dominant model skeleton, with a neural residual branch that compensates for complex nonlinear control logic. A Jacobian-regularized physics-informed training mechanism enforces multi-scale consistency, covering both large-signal and small-signal behavior. Furthermore, by performing symbolic regression on the neural residual branch, PISML achieves a tractable mapping from black-box data to explicit control equations. Experimental results on a high-fidelity Hardware-in-the-Loop platform demonstrate the framework’s superior performance: it reduces identification error by a factor of more than 340 compared to baselines and compresses heavy neural networks into compact explicit forms. This restores analytical tractability for rigorous stability analysis and reduces computational complexity by orders of magnitude. It also provides a unified pathway to convert structurally inaccessible devices into explicit mathematical models, enabling stability analysis of power systems with unknown inverter governing equations.

💡 Research Summary

The paper tackles a pressing problem in modern power systems: how to obtain explicit, physically consistent governing equations for grid‑connected inverters when only external measurement data are available. Traditional approaches either rely on detailed prior knowledge of the inverter’s control architecture—information that manufacturers often keep proprietary—or employ pure data‑driven deep learning models that, while accurate, remain opaque black boxes unsuitable for stability analysis. To bridge this gap, the authors introduce a Physics‑Informed Sparse Machine Learning (PISML) framework that synergistically combines symbolic sparsity, neural residual learning, and Jacobian‑based physics regularization.

The architecture consists of two parallel branches. The first is a sparse symbolic backbone built with L1‑regularized regression (or related sparsity‑promoting techniques). This backbone automatically selects a compact set of physically meaningful terms—such as voltage‑current relationships, power‑flow constraints, and PLL dynamics—thereby forming a skeletal model that captures the dominant linear and low‑order nonlinear behavior of the inverter. The second branch is a multilayer neural network that learns the residual dynamics not explained by the backbone. This residual captures complex, high‑order nonlinearities arising from digital control logic, protection schemes, sampling delays, and other proprietary features.
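
The backbone idea can be illustrated with a minimal sketch, assuming a toy two-input system rather than the paper's inverter. The candidate library, the synthetic signal, the regularization weight, and the helper names `soft_threshold` and `lasso_ista` are all assumptions made for illustration; the solver is plain ISTA (iterative soft-thresholding) for L1-regularized least squares, which is one of several ways to obtain a sparse fit. The leftover `residual` is the quantity a neural residual branch would then be trained on.

```python
import numpy as np

def soft_threshold(z, t):
    """Proximal operator of t * ||.||_1."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)

def lasso_ista(Phi, y, lam, n_iter=2000):
    """Minimize (1/2n) * ||Phi w - y||^2 + lam * ||w||_1 with ISTA."""
    n = Phi.shape[0]
    # Step size = 1 / Lipschitz constant of the smooth (least-squares) term.
    step = n / np.linalg.norm(Phi, 2) ** 2
    w = np.zeros(Phi.shape[1])
    for _ in range(n_iter):
        grad = Phi.T @ (Phi @ w - y) / n
        w = soft_threshold(w - step * grad, step * lam)
    return w

# Synthetic "measurements" (assumed toy system, not real inverter data).
rng = np.random.default_rng(0)
v = rng.uniform(-1.0, 1.0, 500)
i = rng.uniform(-1.0, 1.0, 500)
# Linear skeleton plus a saturation-like term that is NOT in the library.
y = 2.0 * v - 0.5 * i + 0.1 * np.tanh(5.0 * v)

# Hypothetical candidate library of simple terms.
names = ["v", "i", "v*i", "v^2", "i^2"]
Phi = np.column_stack([v, i, v * i, v ** 2, i ** 2])

w = lasso_ista(Phi, y, lam=0.01)
active = [name for name, c in zip(names, w) if c != 0.0]

# What the backbone cannot explain; a residual network would fit this.
residual = y - Phi @ w

print("active terms:", active)
print("coefficients:", np.round(w, 3))
print("residual variance / signal variance:", np.var(residual) / np.var(y))
```

In this toy setting the L1 penalty keeps only the two linear terms, and the saturation-like component ends up in the residual. Note that the backbone coefficients absorb part of the unmodeled term, so they are not expected to match the generating values exactly.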

Training is guided by a novel Jacobian‑regularized physics‑informed loss:

L = ‖ŷ – y‖² + λ₁‖θ‖₁ + λ₂‖J(ŷ) – J_phy‖²,

where J(ŷ) denotes the Jacobian of the model output with respect to its inputs, and J_phy encodes the analytically derived Jacobian dictated by power‑electronic physics (e.g., dP/dV, dQ/dI). This term enforces consistency across scales, ensuring that the model behaves correctly both for large disturbances (where saturation and protection dominate) and for small‑signal regimes (where linearized dynamics are critical). The combined loss simultaneously promotes sparsity, accuracy, and physical fidelity.
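
The three terms of this loss can be evaluated with a short sketch. It assumes a hypothetical bilinear toy model and uses central finite differences for the input Jacobian; an actual implementation would more likely rely on automatic differentiation. The function names, the toy model, the use of sample means instead of sums, and the values of λ₁ and λ₂ are assumptions for illustration only.

```python
import numpy as np

def model(theta, X):
    """Hypothetical toy model: y = a*v + b*i + c*v*i."""
    v, i = X[:, 0], X[:, 1]
    return theta[0] * v + theta[1] * i + theta[2] * v * i

def input_jacobian(theta, X, eps=1e-5):
    """Central-difference Jacobian dy/dx at every sample, shape (n, d)."""
    J = np.zeros_like(X)
    for k in range(X.shape[1]):
        dX = np.zeros_like(X)
        dX[:, k] = eps
        J[:, k] = (model(theta, X + dX) - model(theta, X - dX)) / (2 * eps)
    return J

def pisml_loss(theta, X, y, J_phy, lam1, lam2):
    """Data misfit + L1 sparsity + Jacobian mismatch (means over samples)."""
    data = np.mean((model(theta, X) - y) ** 2)
    sparsity = lam1 * np.sum(np.abs(theta))
    jac_err = input_jacobian(theta, X) - J_phy
    jac = lam2 * np.mean(np.sum(jac_err ** 2, axis=1))
    return data + sparsity + jac, (data, sparsity, jac)

rng = np.random.default_rng(1)
X = rng.uniform(-1.0, 1.0, size=(200, 2))
theta_true = np.array([2.0, -0.5, 0.3])
y = model(theta_true, X)

# Reference Jacobian, derived analytically for the toy model:
# dy/dv = a + c*i, dy/di = b + c*v.
J_phy = np.column_stack([
    theta_true[0] + theta_true[2] * X[:, 1],
    theta_true[1] + theta_true[2] * X[:, 0],
])

lam1, lam2 = 1e-3, 0.1
loss_true, parts_true = pisml_loss(theta_true, X, y, J_phy, lam1, lam2)

# Dropping the bilinear term is sparser but violates data and Jacobian.
theta_bad = np.array([2.0, -0.5, 0.0])
loss_bad, parts_bad = pisml_loss(theta_bad, X, y, J_phy, lam1, lam2)

print("true params :", loss_true, parts_true)
print("no v*i term :", loss_bad, parts_bad)
```

The comparison shows the intended trade-off: the sparser parameter vector lowers the L1 term, but the data and Jacobian terms penalize it more heavily, so the total loss favors the physically consistent model.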

After convergence, the residual neural network is subjected to symbolic regression (using genetic programming, SINDy extensions, or similar methods). This step extracts an explicit analytical expression for the residual, reducing the originally dense neural representation to a handful of algebraic or transcendental terms. The resulting composite model—backbone plus symbolic residual—is compact, interpretable, and directly usable for analytical tasks such as Jacobian computation, Lyapunov function construction, and small‑signal stability assessment.
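
The distillation step can be sketched in a simplified form. The example below does not perform a full symbolic search such as genetic programming; it swaps in sequentially thresholded least squares (the core of SINDy) over a fixed, hand-picked library. The function `residual_net` is a stand-in for a trained residual network, and the library terms, threshold, and sampling range are assumptions for illustration.

```python
import numpy as np

def residual_net(x):
    """Stand-in for a trained residual network (hypothetical closed form)."""
    return 0.8 * np.tanh(3.0 * x) - 0.2 * x

def stlsq(Theta, y, threshold=0.05, n_iter=10):
    """Sequentially thresholded least squares: fit, prune small terms, refit."""
    coef = np.linalg.lstsq(Theta, y, rcond=None)[0]
    for _ in range(n_iter):
        small = np.abs(coef) < threshold
        coef[small] = 0.0
        big = ~small
        if not big.any():
            break
        coef[big] = np.linalg.lstsq(Theta[:, big], y, rcond=None)[0]
    return coef

# Query the "network" on a grid of inputs, as one would with a trained model.
x = np.linspace(-2.0, 2.0, 401)
r = residual_net(x)

# Hypothetical candidate library; tanh(3x) mimics a saturation nonlinearity.
names = ["1", "x", "x^2", "x^3", "tanh(3x)"]
Theta = np.column_stack([np.ones_like(x), x, x ** 2, x ** 3, np.tanh(3.0 * x)])

coef = stlsq(Theta, r)
expr = " + ".join(f"{c:.3f}*{n}" for c, n in zip(coef, names) if c != 0.0)
n_terms = int(np.count_nonzero(coef))

print("distilled residual:", expr)
print("number of terms   :", n_terms)
```

A fixed library only recovers the residual when the right term is among the candidates, which is why more general symbolic regression methods search over expression structures as well as coefficients.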

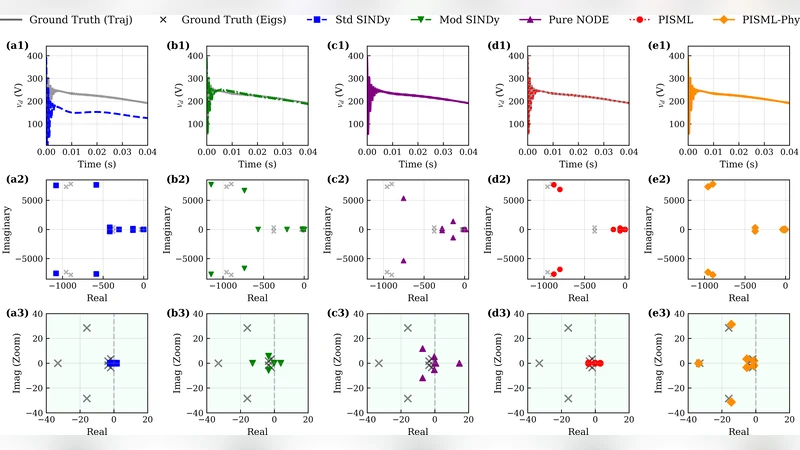

Experimental validation is performed on a high‑fidelity hardware‑in‑the‑loop (HIL) testbed that emulates a commercial inverter’s digital twin, including realistic PWM, PLL, and protection algorithms. The authors benchmark PISML against standard sparse identification (SINDy), Dynamic Mode Decomposition (DMD), and pure deep‑learning predictors (LSTM, feed‑forward nets). Results show that PISML achieves a mean‑square error on the order of 10⁻⁶, which is more than 340 times lower than the best baseline. Moreover, the total number of parameters drops by over 95 % after symbolic compression, leading to a 20‑fold reduction in runtime computation compared with the uncompressed neural network. Crucially, the extracted explicit equations reveal the inverter’s internal control thresholds, nonlinear gain schedules, and protection trigger conditions, enabling rigorous stability and security analyses that were previously impossible with black‑box models.

In summary, PISML delivers a unified pathway to convert structurally inaccessible inverter devices into tractable mathematical models without sacrificing accuracy. By embedding physical constraints directly into a sparsity‑driven learning process and then distilling the learned residual into symbolic form, the framework offers both high‑resolution identification and analytical transparency. The authors suggest future extensions to multi‑inverter interactions, HVDC converters, and large‑scale renewable integration studies, where the ability to model unknown control laws explicitly will be increasingly valuable.
