Investigation for Relative Voice Impression Estimation

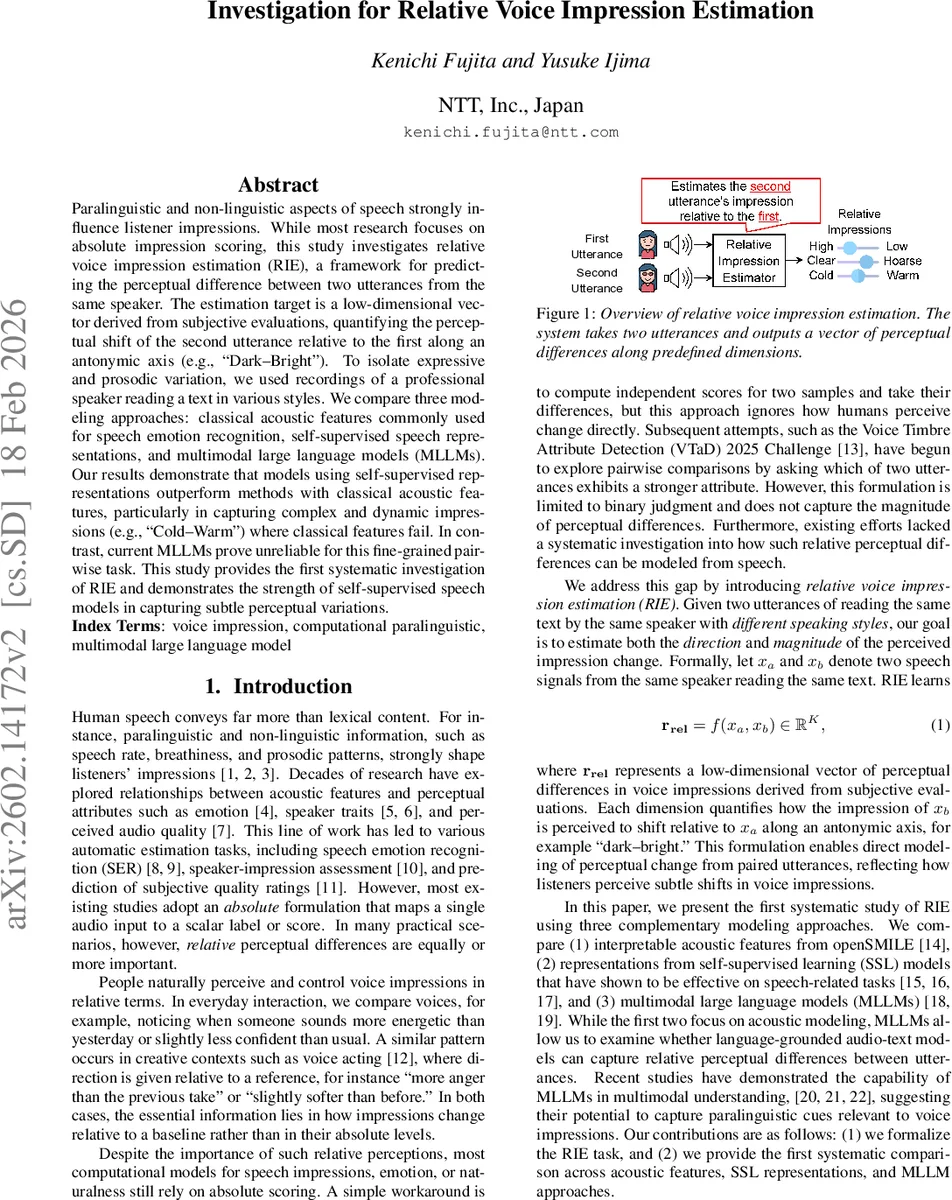

Paralinguistic and non-linguistic aspects of speech strongly influence listener impressions. While most research focuses on absolute impression scoring, this study investigates relative voice impression estimation (RIE), a framework for predicting the perceptual difference between two utterances from the same speaker. The estimation target is a low-dimensional vector derived from subjective evaluations, quantifying the perceptual shift of the second utterance relative to the first along an antonymic axis (e.g., Dark--Bright''). To isolate expressive and prosodic variation, we used recordings of a professional speaker reading a text in various styles. We compare three modeling approaches: classical acoustic features commonly used for speech emotion recognition, self-supervised speech representations, and multimodal large language models (MLLMs). Our results demonstrate that models using self-supervised representations outperform methods with classical acoustic features, particularly in capturing complex and dynamic impressions (e.g., Cold–Warm’’) where classical features fail. In contrast, current MLLMs prove unreliable for this fine-grained pairwise task. This study provides the first systematic investigation of RIE and demonstrates the strength of self-supervised speech models in capturing subtle perceptual variations.

💡 Research Summary

The paper introduces a novel task called Relative Voice Impression Estimation (RIE), which aims to predict the perceptual shift between two utterances spoken by the same speaker. Unlike traditional absolute scoring of voice impressions, RIE directly models the direction and magnitude of change along predefined antonymic axes (e.g., Dark–Bright, Cold–Warm). To create a controlled dataset, the authors recorded a single professional Japanese female voice actor reading the same text in 52 different speaking styles derived from the TTS Speaking Style Classification guidelines. This resulted in 814 utterance pairs (3,372 seconds of audio). Each pair was evaluated by crowdsourced listeners (3,920 participants, at least ten ratings per pair) on nine impression dimensions using a 7‑point Likert scale; both AB and BA orders were presented to mitigate order effects. The resulting low‑dimensional “impression difference vector” serves as the target for regression models.

Three modeling families are compared. (1) Classical acoustic features: openSMILE eGeMAPSv02 extracts 88 low‑level descriptors; the feature difference ϕΔ = ϕ(x_b) – ϕ(x_a) is fed to a suite of regressors (Ridge, PLS2, Random Forest, GBDT, SVR) and a three‑layer feed‑forward neural network. Feature selection is based on Pearson correlation with each impression; top eight features per dimension include high‑percentile F0, the first two MFCCs, alpha‑ratio, Hammarberg index, F1 bandwidth, and spectral flux. (2) Self‑supervised learning (SSL) representations: a pretrained HuBERT model (768‑dim frame embeddings) is aggregated via weighted sum, a bidirectional LSTM, and attention pooling into a 128‑dim utterance embedding. The embeddings of the two utterances are concatenated and passed through a three‑layer MLP to predict the nine‑dimensional difference vector. The SSL model is fine‑tuned with mean‑squared error loss and AdamW optimizer (lr = 0.002). (3) Multimodal large language models (MLLMs): zero‑shot experiments with ChatGPT (GPT‑5 “Thinking”) and Gemini 2.5 Pro. Audio is supplied directly, and a Japanese prompt asks the model to rate each of the nine dimensions and provide a short rationale. The returned textual scores are parsed into a numeric vector. No fine‑tuning is performed.

Performance is evaluated using Pearson correlation and Concordance Correlation Coefficient (CCC). Classical acoustic‑feature regressors achieve moderate results (Pearson ≈ 0.44–0.60, CCC ≈ 0.44–0.82), with the highest CCC of 0.84 on the Dark–Bright axis. The neural network variant slightly improves Pearson but not CCC. SSL‑based models consistently outperform the acoustic baseline, reaching Pearson ≈ 0.60–0.72 and CCC ≈ 0.66–0.82 across all dimensions; they excel especially on dynamic impressions such as Calm–Restless and Powerful–Weak, indicating that the high‑level representations capture subtle prosodic and spectral cues that handcrafted features miss. In contrast, the MLLM zero‑shot approach yields low correlations (Pearson 0.10–0.60, CCC 0.15–0.70) and even negative values on some axes, suggesting that current audio‑aware LLMs are not yet tuned to the fine‑grained perceptual judgments required for RIE.

The analysis of feature importance reveals that pitch‑related descriptors (especially the 80th percentile F0) are strongly linked to several impressions, confirming prior findings in speech emotion recognition. Low‑level spectral features (first two MFCCs) also contribute, while loudness‑related metrics show little relevance in this same‑speaker, same‑text setting. The authors discuss limitations: the dataset is single‑speaker and Japanese only, which restricts generalizability; the MLLM evaluation is exploratory and may improve with domain‑specific fine‑tuning; and crowdsourced ratings, while extensive, could still contain bias.

Overall, the study establishes RIE as a meaningful computational problem and demonstrates that self‑supervised speech models are currently the most effective tool for capturing subtle, relative voice impression changes. Classical acoustic features remain useful for certain axes but lack the expressive power for complex, dynamic shifts. Multimodal LLMs, in their present form, are unreliable for fine‑grained pairwise impression estimation, highlighting an area for future research. Potential extensions include multi‑speaker and multilingual datasets, larger crowdsourced annotation campaigns, and adapting LLMs through targeted fine‑tuning or prompt engineering to better handle perceptual reasoning tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment