Zero-Shot UAV Navigation in Forests via Relightable 3D Gaussian Splatting

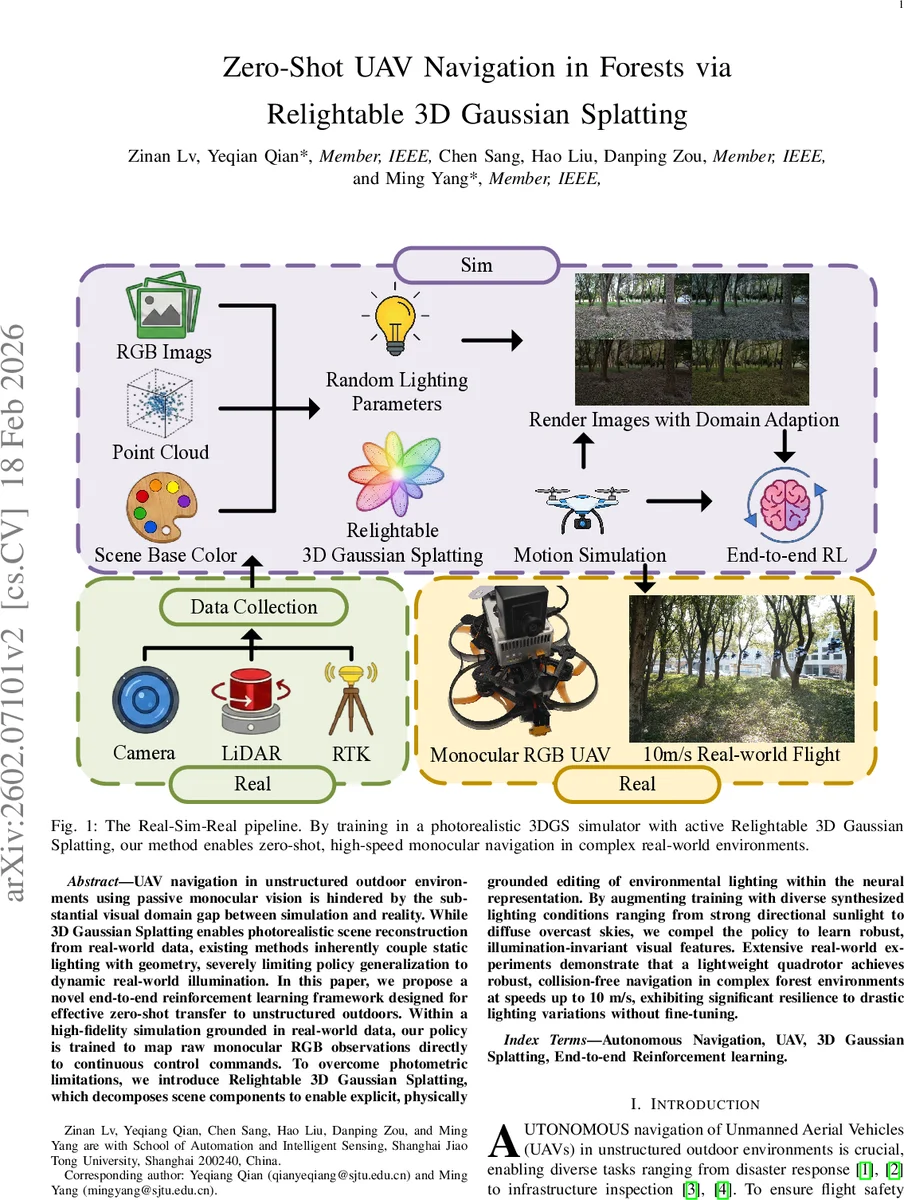

UAV navigation in unstructured outdoor environments using passive monocular vision is hindered by the substantial visual domain gap between simulation and reality. While 3D Gaussian Splatting enables photorealistic scene reconstruction from real-world data, existing methods inherently couple static lighting with geometry, severely limiting policy generalization to dynamic real-world illumination. In this paper, we propose a novel end-to-end reinforcement learning framework designed for effective zero-shot transfer to unstructured outdoor environments. Within a high-fidelity simulation grounded in real-world data, our policy is trained to map raw monocular RGB observations directly to continuous control commands. To overcome photometric limitations, we introduce Relightable 3D Gaussian Splatting, which decomposes scene components to enable explicit, physically grounded editing of environmental lighting within the neural representation. By augmenting training with diverse synthesized lighting conditions ranging from strong directional sunlight to diffuse overcast skies, we compel the policy to learn robust, illumination-invariant visual features. Extensive real-world experiments demonstrate that a lightweight quadrotor achieves robust, collision-free navigation in complex forest environments at speeds up to 10 m/s, exhibiting significant resilience to drastic lighting variations without fine-tuning.

💡 Research Summary

The paper presents a novel end‑to‑end reinforcement‑learning (RL) framework that enables a lightweight quadrotor to navigate dense, unstructured forests at high speed using only a passive monocular RGB camera, without any real‑world fine‑tuning. The core challenge addressed is the severe visual domain gap caused by dynamic outdoor illumination, which traditionally hampers sim‑to‑real transfer for vision‑only UAV control.

To bridge this gap, the authors construct a high-fidelity simulation environment grounded in real-world data. They first capture in-the-wild forest video streams together with LiDAR point clouds, then reconstruct a photorealistic digital twin using 3D Gaussian Splatting (3DGS). Standard 3DGS entangles lighting with geometry, so illumination cannot be varied after reconstruction. The authors therefore introduce Relightable 3D Gaussian Splatting, a physically based decomposition of each Gaussian's radiance into three components: (1) a learnable diffuse albedo ρ, (2) a global environmental lighting vector L_env expressed as spherical-harmonics (SH) coefficients, and (3) occlusion/transfer terms (O and d) that capture directional shadowing and local geometric effects. Because L_env is a scene-wide variable, they can synthesize arbitrary lighting conditions (strong directional sunlight, diffuse overcast skies, varying sun elevation, and color temperature) while keeping geometry and material fixed.
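The decomposition can be illustrated with a minimal sketch of SH-based diffuse shading. This is not the authors' renderer: the function names are hypothetical, the occlusion/transfer terms (O and d) are collapsed into a single assumed scalar transfer vector per Gaussian, and only four SH coefficients are used. The point is that swapping the lighting coefficients changes the color while albedo and transfer stay fixed.

```python
def sh_dot(light_sh, transfer_sh):
    """Per-channel dot product of RGB lighting coefficients with
    scalar transfer coefficients (both indexed by SH basis function)."""
    return tuple(
        sum(light[c] * t for light, t in zip(light_sh, transfer_sh))
        for c in range(3)
    )


def shade_gaussian(albedo, light_sh, transfer_sh):
    """Diffuse color of one Gaussian: albedo times SH-space irradiance.

    albedo      -- (r, g, b) reflectance, fixed after reconstruction
    light_sh    -- list of (r, g, b) lighting coefficients (stand-in for L_env)
    transfer_sh -- list of scalars (assumed stand-in for the O and d terms)
    """
    irradiance = sh_dot(light_sh, transfer_sh)
    # Clamp at zero because a truncated SH expansion can dip negative.
    return tuple(max(0.0, a * e) for a, e in zip(albedo, irradiance))


# Illustrative values only: one Gaussian relit under two lighting vectors.
albedo = (0.5, 0.2, 0.1)
transfer = (1.0, 0.0, 0.3, 0.0)
noon = [(1.0, 1.0, 1.0), (0.0, 0.0, 0.0), (0.5, 0.5, 0.4), (0.0, 0.0, 0.0)]
dusk = [(0.4, 0.3, 0.3), (0.0, 0.0, 0.0), (0.1, 0.05, 0.02), (0.0, 0.0, 0.0)]

color_noon = shade_gaussian(albedo, noon, transfer)
color_dusk = shade_gaussian(albedo, dusk, transfer)
```

Only `light_sh` differs between the two calls, which mirrors the claim that geometry and material remain fixed while illumination is edited.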

An efficient occlusion field is pre‑computed on a uniform voxel grid. Six orthographic depth maps per voxel are rendered, thresholded, and projected onto SH bases to obtain per‑voxel visibility coefficients. This enables fast, differentiable shading during RL roll‑outs without the heavy computation typical of full inverse‑rendering pipelines.
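The projection step can be sketched as follows for a single voxel. As a simplification, this sketch samples directions on the sphere and queries a hypothetical `is_visible` callback, rather than rendering and thresholding six orthographic depth maps as the summary describes. It also stops at SH band 1; the band count used in the paper is not stated here.

```python
import math


def sh_basis_l1(x, y, z):
    """Real spherical-harmonics basis up to band 1 for a unit direction."""
    return (0.282095, 0.488603 * y, 0.488603 * z, 0.488603 * x)


def fibonacci_sphere(n):
    """Return n roughly uniformly spread unit directions on the sphere."""
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    directions = []
    for k in range(n):
        z = 1.0 - (2.0 * k + 1.0) / n
        r = math.sqrt(max(0.0, 1.0 - z * z))
        phi = golden_angle * k
        directions.append((r * math.cos(phi), r * math.sin(phi), z))
    return directions


def project_visibility(is_visible, n_samples=2000):
    """Project a binary visibility function onto the SH basis.

    is_visible -- hypothetical callback: direction (x, y, z) -> bool.
    Returns four coefficients approximating the integral of V * Y_i
    over the sphere by a uniform-weight quadrature.
    """
    coeffs = [0.0, 0.0, 0.0, 0.0]
    weight = 4.0 * math.pi / n_samples  # solid angle per sample
    for d in fibonacci_sphere(n_samples):
        if is_visible(d):
            for i, basis_value in enumerate(sh_basis_l1(*d)):
                coeffs[i] += weight * basis_value
    return coeffs


# A voxel under open sky versus one occluded from below (e.g. by ground).
open_sky = project_visibility(lambda d: True)
upper_only = project_visibility(lambda d: d[2] > 0.0)
```

Storing a handful of coefficients per voxel is what keeps shading cheap at roll-out time: shadowing becomes a small dot product instead of a ray query.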

The RL agent receives raw RGB frames as input. A convolutional encoder extracts visual features, which are fed into an LSTM to capture temporal dynamics (e.g., motion blur, rapid lighting shifts). The policy head outputs continuous control commands (throttle, pitch, roll, yaw). The reward function balances collision avoidance, trajectory adherence to a predefined trail, speed maintenance, and a penalty for large control oscillations.
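A per-step reward with the four terms named above might look like the sketch below. The weights, the functional form of each term, and all names are assumptions made for illustration; the summary does not give the actual reward definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    # Hypothetical weights, not taken from the paper.
    collision: float = 10.0
    trail: float = 1.0
    speed: float = 0.5
    smooth: float = 0.1


def step_reward(collided, trail_offset_m, speed_mps, target_speed_mps,
                action, prev_action, w=RewardWeights()):
    """Combine collision, trail adherence, speed, and smoothness terms.

    action / prev_action -- sequences of control commands,
    e.g. (throttle, pitch, roll, yaw), from consecutive steps.
    """
    reward = 0.0
    if collided:
        reward -= w.collision
    # Penalize lateral distance from the predefined trail.
    reward -= w.trail * abs(trail_offset_m)
    # Penalize relative deviation from the target speed.
    reward -= w.speed * abs(speed_mps - target_speed_mps) / target_speed_mps
    # Penalize large changes between consecutive commands (oscillation).
    reward -= w.smooth * sum((a - b) ** 2 for a, b in zip(action, prev_action))
    return reward


hover = (0.5, 0.0, 0.0, 0.0)
on_track = step_reward(False, 0.0, 10.0, 10.0, hover, hover)
crashed = step_reward(True, 0.0, 10.0, 10.0, hover, hover)
jerky = step_reward(False, 0.0, 10.0, 10.0, (1.0, 0.0, 0.0, 0.0), (0.0, 0.0, 0.0, 0.0))
```

In this form every term is a penalty, so a step that stays on the trail at the target speed with steady commands scores zero and anything else scores below it.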

During training, each episode randomly samples a lighting configuration (L_env, O, d) from a broad distribution, effectively performing domain randomization at the scene level rather than through 2D image augmentations. This forces the policy to rely on illumination-invariant cues such as geometric silhouettes, texture gradients, and depth cues implicitly learned from shading variations.
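Per-episode lighting randomization could be sketched as below. The sketch samples only an environment lighting vector (a sun term plus a uniform sky term, encoded as band-0 and band-1 SH coefficients); the parameter ranges, the sun-plus-ambient model, and the function name are all assumptions, not the distribution used by the authors.

```python
import math
import random


def sample_lighting(rng):
    """Draw one lighting vector as four RGB SH coefficients.

    Hypothetical model: a directional sun whose strength fades with
    cloud cover, plus a uniform ambient sky term and a warm/cool tint.
    """
    elevation = rng.uniform(math.radians(10.0), math.radians(80.0))
    azimuth = rng.uniform(0.0, 2.0 * math.pi)
    overcast = rng.random()                  # 0 = clear sky, 1 = fully overcast
    sun = (1.0 - overcast) * rng.uniform(2.0, 5.0)
    ambient = 0.3 + 0.7 * overcast
    warmth = rng.uniform(-0.2, 0.2)          # crude color-temperature shift
    tint = (1.0 + warmth, 1.0, 1.0 - warmth)

    # Unit vector pointing toward the sun (z is up).
    sx = math.cos(elevation) * math.cos(azimuth)
    sy = math.cos(elevation) * math.sin(azimuth)
    sz = math.sin(elevation)
    basis = (0.282095, 0.488603 * sy, 0.488603 * sz, 0.488603 * sx)

    coeffs = []
    for i, b in enumerate(basis):
        # A uniform sky only contributes to the constant (band-0) term.
        sky = ambient * 2.0 * math.sqrt(math.pi) if i == 0 else 0.0
        coeffs.append(tuple(t * (sun * b + sky) for t in tint))
    return coeffs


# One draw per training episode; a seeded generator keeps runs reproducible.
rng = random.Random(0)
episode_lights = [sample_lighting(rng) for _ in range(3)]
```

Each sampled vector would then be handed to the renderer for the whole episode, so every frame the policy sees in that episode shares one consistent illumination.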

Empirical evaluation consists of two parts. In simulation, the relightable pipeline yields a success rate above 95% across all lighting conditions, outperforming prior 3DGS-based navigation methods (e.g., Splat-Nav) by more than 30% in collision-avoidance metrics. In real-world tests, a 250 g quadrotor equipped only with a 720p RGB camera and an onboard Jetson Nano (≈15 ms inference latency) flies a 200 m forest trail at up to 10 m/s for five minutes without a single collision. The system remains robust under midday sun, overcast dawn, and rapid cloud-induced illumination changes, which supports the zero-shot transfer claim.

Limitations include the need for extensive high-resolution 3DGS reconstructions (data-collection intensive) and the current focus on a single global light source, which may not capture localized illumination such as vehicle headlights or firelight. The pre-computed occlusion field also adds memory overhead. Future work will explore multi-light-source extensions, on-the-fly lighting estimation, and more compact occlusion representations to broaden applicability to night-time and urban scenarios.

In summary, the paper demonstrates that by decoupling lighting from geometry through Relightable 3D Gaussian Splatting and training under massive lighting randomization, a monocular‑vision UAV can achieve high‑speed, collision‑free navigation in complex forest environments with zero‑shot sim‑to‑real transfer, opening a new pathway for lightweight, cost‑effective aerial autonomy.
