PoeTone: A Framework for Constrained Generation of Structured Chinese Songci with LLMs

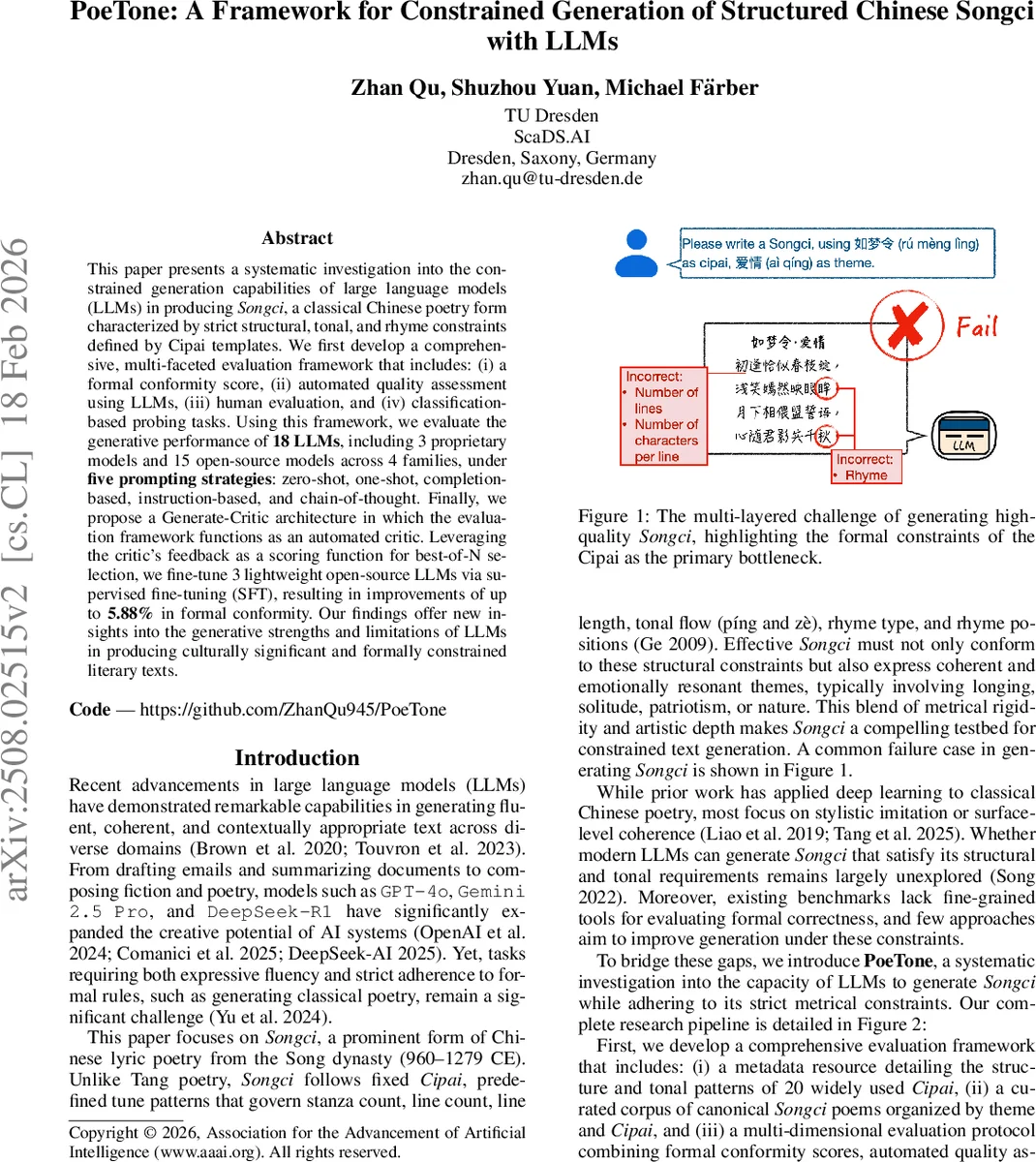

This paper presents a systematic investigation into the constrained generation capabilities of large language models (LLMs) in producing Songci, a classical Chinese poetry form characterized by strict structural, tonal, and rhyme constraints defined by Cipai templates. We first develop a comprehensive, multi-faceted evaluation framework that includes: (i) a formal conformity score, (ii) automated quality assessment using LLMs, (iii) human evaluation, and (iv) classification-based probing tasks. Using this framework, we evaluate the generative performance of 18 LLMs, including 3 proprietary models and 15 open-source models across 4 families, under five prompting strategies: zero-shot, one-shot, completion-based, instruction-based, and chain-of-thought. Finally, we propose a Generate-Critic architecture in which the evaluation framework functions as an automated critic. Leveraging the critic’s feedback as a scoring function for best-of-N selection, we fine-tune 3 lightweight open-source LLMs via supervised fine-tuning (SFT), resulting in improvements of up to 5.88% in formal conformity. Our findings offer new insights into the generative strengths and limitations of LLMs in producing culturally significant and formally constrained literary texts.

💡 Research Summary

The paper “PoeTone: A Framework for Constrained Generation of Structured Chinese Songci with LLMs” investigates how well large language models (LLMs) can generate Songci, a classical Chinese lyric form that is governed by strict structural, tonal, and rhyme constraints encoded in Cipai (tune‑pattern) templates. To evaluate this capability, the authors first construct two essential resources that have not previously been publicly available: (1) a machine‑readable JSON metadata repository covering the 20 most frequently used Cipai, each with detailed specifications of stanza count, line length, required tonal categories (píng = level, zè = oblique), and rhyme‑group positions; and (2) a curated corpus of 120 canonical Songci poems, annotated by six thematic categories (love & longing, patriotism, nature, history, sorrow, philosophical reflection).
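
To make the idea of machine-readable Cipai metadata concrete, here is a minimal sketch of what one record might look like. The key names, the tone symbols (`P`, `Z`, `X`), and the toy pattern are all assumptions made for illustration; they are not the paper's actual schema and do not describe a real Cipai.

```python
import json

# Hypothetical schema: key names are illustrative, not the paper's actual format.
# Tone symbols: "P" = level (ping), "Z" = oblique (ze), "X" = either tone allowed.
# The pattern below is a toy example, not a real Cipai.
toy_cipai = {
    "name": "toy-pattern",
    "stanzas": 1,
    "lines": [
        {"length": 5, "tones": "XZPPZ", "rhyme": False},
        {"length": 5, "tones": "PPZZP", "rhyme": True},
        {"length": 7, "tones": "XPXZZPP", "rhyme": True},
    ],
}


def validate_template(template):
    """Return a list of problems in a template; an empty list means it is consistent."""
    problems = []
    for i, line in enumerate(template["lines"]):
        if len(line["tones"]) != line["length"]:
            problems.append(f"line {i}: tone pattern length != declared length")
        if set(line["tones"]) - set("PZX"):
            problems.append(f"line {i}: unknown tone symbol")
    return problems


def rhyme_positions(template):
    """Indices of the lines whose final character must rhyme."""
    return [i for i, line in enumerate(template["lines"]) if line["rhyme"]]


# Round-trip through JSON to confirm the record is serializable as-is.
record = json.loads(json.dumps(toy_cipai))
```

A record of this shape carries everything a rule-based checker needs: line count, per-line length, per-position tone requirement, and which line endings take part in the rhyme.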

Using these resources, the authors design a multi‑dimensional evaluation framework that combines (i) a Formal Conformity Score, (ii) automated quality assessment by powerful LLM judges, (iii) human evaluation (including a poetic Turing test and Likert‑scale ratings), and (iv) classification‑based probing tasks. The Formal Conformity Score is the core metric; it aggregates three sub‑scores—Structural Integrity, Tonal Adherence, and Rhyme Scheme Compliance—using weights wS = 0.4, wT = 0.3, wR = 0.3. Structural integrity checks line count and exact character count per line; tonal adherence uses a modern Mandarin pronunciation lexicon to verify that characters at prescribed positions match the required level or oblique tone (allowing “zhòng” where both are permitted); rhyme compliance extracts the final characters at designated rhyme positions and measures the proportion that belong to the same rhyme group according to the classical rhyme dictionary (Ge 2009). The final score for a generated poem is the maximum weighted sum across all known variants of the target Cipai.
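
The aggregation described above can be sketched as follows. The weights and the maximum over variants come from the summary; the fractional form of `tonal_adherence` is an assumption, since the summary does not say how each sub-score is computed internally, and all function names are hypothetical.

```python
W_S, W_T, W_R = 0.4, 0.3, 0.3  # weights reported in the summary


def tonal_adherence(observed, pattern):
    """Fraction of positions whose observed tone ("P" or "Z") fits the pattern.

    "X" in the pattern accepts either tone. Fractional scoring is an assumption.
    """
    if not pattern or len(observed) != len(pattern):
        return 0.0
    hits = sum(1 for o, p in zip(observed, pattern) if p == "X" or o == p)
    return hits / len(pattern)


def weighted_score(structure, tone, rhyme):
    """Weighted sum of the three sub-scores, each expected in [0, 1]."""
    return W_S * structure + W_T * tone + W_R * rhyme


def formal_conformity(variant_subscores):
    """Best weighted score over all known variants of the target Cipai.

    variant_subscores: iterable of (structure, tone, rhyme) tuples, one per variant.
    """
    return max(weighted_score(s, t, r) for s, t, r in variant_subscores)


# A poem scored against two hypothetical variants of the same Cipai.
score = formal_conformity([(1.0, 0.5, 0.5), (0.5, 1.0, 1.0)])
```

Taking the maximum over variants means a poem is not penalized for following a legitimate alternative form of the same Cipai.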

To complement rule‑based scoring, the authors employ two proprietary LLMs (GPT‑4o and ERNIE 4.5 Turbo) as “judges”. Each generated poem, together with its Cipai and theme, is presented to the judge models, which rate Fluency, Coherence, and Poetic Quality on a 1‑5 scale. This provides a fast, repeatable proxy for human judgment, although the authors acknowledge that the judges’ literary sensibility is limited.

Human evaluation proceeds in two stages. First, evaluators see a paired presentation of a model‑generated poem and a human‑written reference (both anonymized) and must decide which is human‑authored, also indicating confidence. After the true authorship is revealed, evaluators rate the generated poem on Thematic Faithfulness, Artistic Merit, and Overall Quality (1‑5 Likert). Additionally, the authors conduct probing experiments using lightweight classifiers (SVM on character‑level BERT embeddings and Multinomial Naïve Bayes on TF‑IDF unigrams) to test whether LLM outputs encode (a) Cipai identity, (b) thematic class, and (c) source (human vs. model).
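
As a rough illustration of the probing idea, the sketch below trains a tiny Multinomial Naive Bayes classifier on character unigrams to separate two sources. It is a simplified stand-in under stated assumptions: it uses raw character counts with add-one smoothing rather than TF‑IDF weighting, it is written from scratch rather than with the authors' tooling, and the training strings are placeholder tokens, not poems.

```python
import math
from collections import Counter, defaultdict


class CharNaiveBayes:
    """Multinomial Naive Bayes over character unigram counts (add-one smoothing)."""

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)
        self.char_counts = defaultdict(Counter)
        for text, label in zip(texts, labels):
            self.char_counts[label].update(text)
        self.vocab = {ch for counts in self.char_counts.values() for ch in counts}
        return self

    def predict(self, text):
        total_docs = sum(self.class_counts.values())
        best_label, best_logp = None, -math.inf
        for label, n_docs in self.class_counts.items():
            counts = self.char_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            logp = math.log(n_docs / total_docs)  # class prior
            for ch in text:
                if ch in self.vocab:  # characters unseen in training are ignored
                    logp += math.log((counts[ch] + 1) / denom)
            if logp > best_logp:
                best_label, best_logp = label, logp
        return best_label


# Placeholder data: each string stands in for one poem's characters.
texts = ["aaab", "aaba", "bbba", "babb"]
labels = ["human", "human", "model", "model"]
probe = CharNaiveBayes().fit(texts, labels)
```

If such a shallow classifier separates the classes well, the surface character distribution alone carries the signal, which is what a probing task is meant to reveal.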

The benchmark covers 18 LLMs: three proprietary (GPT‑4o, Gemini 2.5 Pro, ERNIE 4.5 Turbo) and fifteen open‑source models from four families (LLaMA, Mistral/Mixtral, Qwen, DeepSeek). Each model is prompted under five strategies for every Cipai‑theme pair: Zero‑shot, One‑shot (single example), Completion (first stanza supplied), Instruction (explicit formal rules), and Chain‑of‑Thought (model first enumerates rules before generation). In total, over 1,800 poems are generated.
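
The five strategies can be sketched as prompt templates applied over a grid of Cipai and themes. The template wording, the `build_prompt` helper, and the placeholder Cipai names are assumptions for illustration only; the paper's actual prompts are not reproduced here.

```python
from itertools import product

STRATEGIES = ["zero-shot", "one-shot", "completion", "instruction", "chain-of-thought"]


def build_prompt(strategy, cipai, theme, example="", first_stanza="", rules=""):
    """Build a prompt for one strategy. All wording here is hypothetical."""
    task = f"Write a Songci to the Cipai '{cipai}' on the theme '{theme}'."
    if strategy == "zero-shot":
        return task
    if strategy == "one-shot":
        return f"Example poem:\n{example}\n\n{task}"
    if strategy == "completion":
        return f"{task}\nContinue from this first stanza:\n{first_stanza}"
    if strategy == "instruction":
        return f"{task}\nFollow these formal rules:\n{rules}"
    if strategy == "chain-of-thought":
        return f"{task}\nFirst list the formal rules of this Cipai, then write the poem."
    raise ValueError(f"unknown strategy: {strategy}")


# Placeholder names; the real benchmark uses the 20 Cipai and six themes.
cipai_names = ["cipai-A", "cipai-B"]
themes = ["nature", "sorrow"]
jobs = [
    (s, c, t, build_prompt(s, c, t))
    for s, c, t in product(STRATEGIES, cipai_names, themes)
]
```

Each entry in `jobs` is then sent to every model under test, so the same Cipai and theme pair is compared across strategies.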

Results reveal that even state‑of‑the‑art proprietary models struggle with formal constraints. Zero‑shot performance yields average conformity scores below 30%; One‑shot and Completion improve modestly to around 45%; Instruction and Chain‑of‑Thought achieve the highest scores, with CoT marginally outperforming pure Instruction (average overall conformity ≈ 55%). Tonal and rhyme compliance remain the weakest dimensions, often below 60%, indicating that LLMs are better at maintaining line length than at respecting phonological patterns. Among open‑source models, Qwen‑1.8B and DeepSeek‑Chat 7B show relatively better adherence, yet still lag behind the best proprietary scores.

To address these gaps, the authors propose a Generate‑Critic architecture. The rule‑based Formal Conformity Score serves as an automated critic that evaluates N = 5 generated candidates per prompt and selects the highest‑scoring one (Best‑of‑N). The selected poem is then used as the target label for supervised fine‑tuning (SFT) via LoRA adapters. Three lightweight open‑source models (LLaMA‑7B‑Chat, Qwen‑1.8B‑Chat, DeepSeek‑Chat 7B) are fine‑tuned on the best‑of‑N outputs. After SFT, structural, tonal, and rhyme scores improve by an average of 3.2–3.8 percentage points, and the aggregate Formal Conformity Score rises by up to 5.88%. This demonstrates that an automated critic‑feedback loop can meaningfully enhance constrained generation without massive data collection.
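
The selection step can be sketched as below. The `generate` and `critic` callables are stand-ins: here the generator replays a fixed pool of placeholder strings and the critic only checks line lengths, whereas the paper's critic is the full Formal Conformity Score and the generator is an LLM. The SFT record layout is likewise an assumption.

```python
import itertools


def best_of_n(generate, critic, prompt, n=5):
    """Sample n candidates and return the one the critic scores highest, with its score."""
    candidates = [generate(prompt) for _ in range(n)]
    scores = [critic(c) for c in candidates]
    best_index = max(range(n), key=scores.__getitem__)
    return candidates[best_index], scores[best_index]


def toy_critic(poem, target_lengths=(5, 5)):
    """Toy stand-in for the critic: fraction of lines with the required length."""
    lines = poem.split("\n")
    if len(lines) != len(target_lengths):
        return 0.0
    hits = sum(len(line) == t for line, t in zip(lines, target_lengths))
    return hits / len(target_lengths)


# Toy generator: replays placeholder candidates instead of calling a model.
_pool = itertools.cycle(
    ["abc\nabcde", "abcde\nabcde", "abcde", "ab\ncd", "abcde\nabcd"]
)


def toy_generate(prompt):
    return next(_pool)


best, score = best_of_n(toy_generate, toy_critic, "toy prompt", n=5)

# The winning candidate becomes the target of one SFT training example.
sft_example = {"prompt": "toy prompt", "completion": best}
```

Because the critic is rule-based, this loop needs no human labels: the training set is built entirely from the model's own best-scoring outputs.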

Key insights from the study include: (1) Precise, machine‑readable constraint metadata is essential for objective evaluation of formally structured text generation. (2) Prompt engineering matters: prompting models to reason about constraints (CoT) yields the most faithful outputs. (3) Automatic critic‑guided selection combined with lightweight SFT can substantially boost performance even for relatively small models. (4) Current LLMs excel at fluency and thematic coherence but lack intrinsic mastery of phonological rules required by classical poetry, suggesting that rule‑based augmentation remains necessary for high‑fidelity cultural text generation.

In conclusion, the paper makes four major contributions: (i) releasing a comprehensive Cipai metadata repository and a thematically annotated Songci corpus; (ii) introducing a formal conformity scoring system and a layered evaluation protocol that blends rule‑based, LLM‑based, and human assessments; (iii) providing an extensive benchmark of 18 LLMs across five prompting strategies, exposing systematic limitations in formal adherence; and (iv) presenting the Generate‑Critic framework, which leverages automated rule‑based feedback to improve constrained generation via best‑of‑N selection and supervised fine‑tuning. The work advances the state of the art in AI‑driven generation of culturally significant, formally constrained literary texts and highlights the continued need for hybrid approaches that combine large‑scale language modeling with explicit rule enforcement.
