Voice Impression Control in Zero-Shot TTS

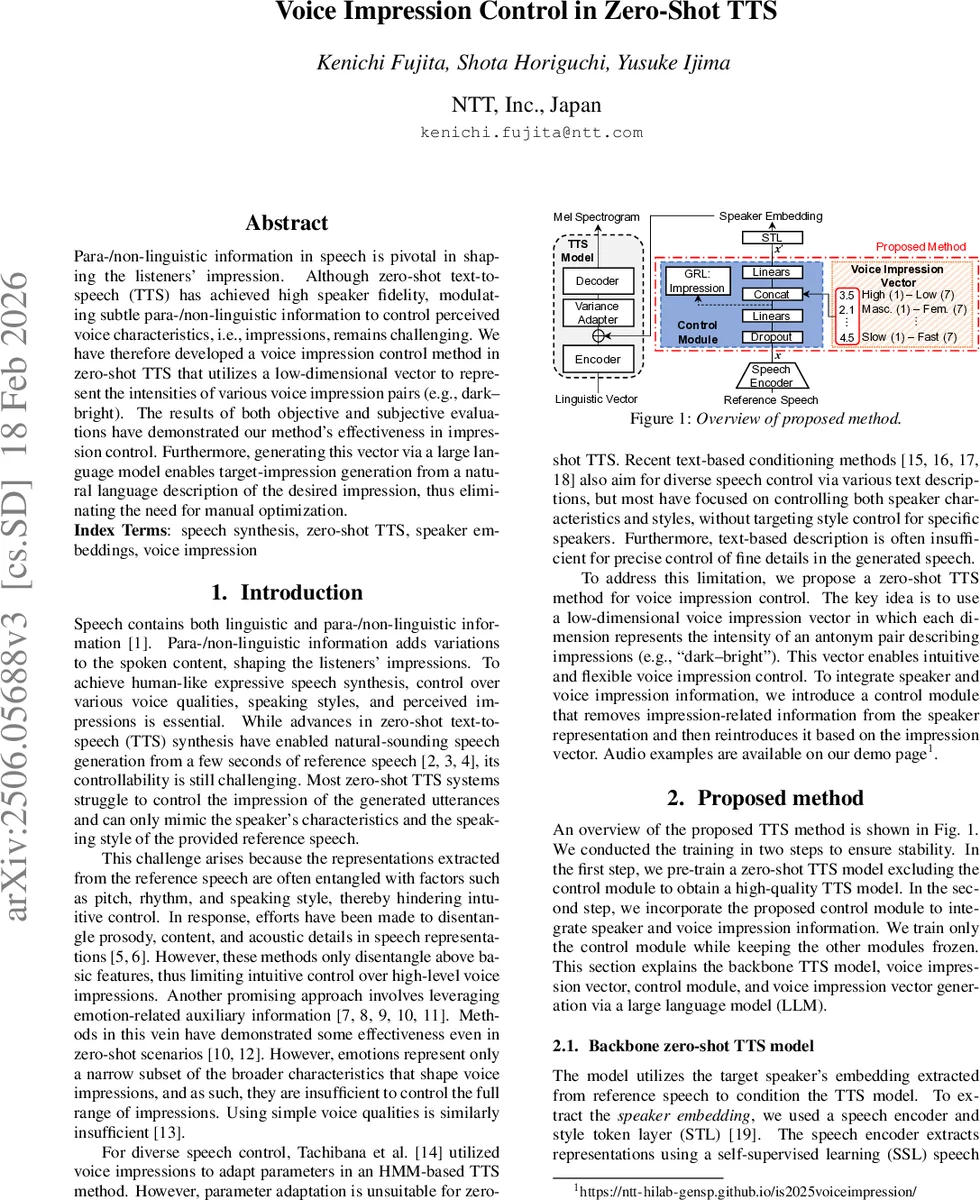

Para-/non-linguistic information in speech is pivotal in shaping the listeners’ impression. Although zero-shot text-to-speech (TTS) has achieved high speaker fidelity, modulating subtle para-/non-linguistic information to control perceived voice characteristics, i.e., impressions, remains challenging. We have therefore developed a voice impression control method in zero-shot TTS that utilizes a low-dimensional vector to represent the intensities of various voice impression pairs (e.g., dark-bright). The results of both objective and subjective evaluations have demonstrated our method’s effectiveness in impression control. Furthermore, generating this vector via a large language model enables target-impression generation from a natural language description of the desired impression, thus eliminating the need for manual optimization. Audio examples are available on our demo page (https://ntt-hilab-gensp.github.io/is2025voiceimpression/).

💡 Research Summary

The paper addresses a notable gap in zero‑shot text‑to‑speech (TTS) technology: the ability to manipulate subtle para‑linguistic cues that shape listeners’ impressions of a voice. While recent zero‑shot TTS systems can faithfully clone a speaker’s timbre from a few seconds of reference audio, they lack fine‑grained control over high‑level voice qualities such as “dark‑bright” or “masculine‑feminine”. To fill this void, the authors propose a Voice Impression Control framework that introduces a low‑dimensional Voice Impression Vector (VIV). The VIV consists of eleven dimensions, each representing the intensity of an antonym pair (e.g., High–Low pitch, Masculine–Feminine, Clear–Hoarse, Calm–Restless, Powerful–Weak, Youthful–Elderly, Thick–Thin, Tense–Relaxed, Dark–Bright, Cold–Warm, Slow–Fast). Each dimension is scored on a 1‑to‑7 Likert scale, providing an intuitive, human‑readable description of the desired impression.

The backbone TTS model follows a conventional zero‑shot pipeline: a self‑supervised learning (SSL) speech encoder (HuBERT‑BASE) extracts frame‑level representations, which are aggregated via a weighted sum, a bidirectional LSTM, and an attention mechanism into a single speaker embedding x. A Style Token Layer (STL) further stabilises this embedding. FastSpeech2 serves as the acoustic model, and HiFi‑GAN synthesises waveforms. However, x inevitably entangles speaker identity with impression‑related information, making direct manipulation difficult.

To disentangle these factors, the authors add a dedicated control module trained in a second stage while freezing the backbone. Two mechanisms are employed: (1) a Gradient Reversal Layer (GRL) combined with a high‑rate dropout (0.8) forces the speaker embedding to shed impression cues, encouraging it to retain only speaker‑specific characteristics; (2) the VIV is projected to the same dimensionality as the processed embedding (both to 32 D) and concatenated before feeding into the decoder. Consequently, the decoder receives speaker identity from x and impression guidance exclusively from the VIV, allowing independent adjustment of each impression dimension.

Manual tuning of the VIV would be labor‑intensive. The paper therefore leverages large language models (LLMs) to generate VIVs from natural‑language prompts. A prompt includes a task description, explicit instructions defining each dimension, and a target specification (e.g., “Talk to children ‘Sleepy’”). The LLM interprets the description and outputs an 11‑dimensional score vector, which can be fine‑tuned by the user if needed. This approach mirrors recent text‑guided image generation pipelines, extending them to high‑level speech attributes.

Data collection involved an in‑house Japanese corpus of 1,800 h (22 kHz) covering 20,270 speakers. For 1,154 speakers, a single utterance was crowd‑sourced and rated on the ten primary dimensions (the eleventh, Slow–Fast, was derived from speech rate). To label the remaining data, the authors trained an impression estimator (HuBERT‑BASE + BiLSTM + attention) on the manually scored subset, achieving a root‑MSE of 0.338. This estimator automatically generated VIVs for the full dataset, enabling large‑scale training.

Training proceeded in three phases: (i) pre‑train the FastSpeech2 backbone for 200 k steps; (ii) further refine the backbone with a GAN loss for another 200 k steps; (iii) insert the control module and train it for 50 k steps while keeping all other parameters frozen. The control module’s projection layers and dropout were empirically set to 32 D and 0.8, respectively.

Objective evaluations used two unseen speakers (one female, one male) in a zero‑shot setting. By modulating a single VIV dimension from –3 to +3 (relative to the reference), the authors observed monotonic changes in the corresponding impression scores estimated by the same impression estimator, confirming controllability. Simultaneous modulation of two correlated dimensions (Powerful–Weak and Tense–Relaxed) also yielded consistent, predictable changes. Speaker similarity was measured with Resemblyzer; even at ±3 modulation, cosine similarity remained higher than that between different speakers of the same gender, indicating that identity preservation is largely maintained.

Subjective listening tests further validated the approach. Participants rated the perceived impression of generated utterances across several dimensions and modulation levels. Results showed statistically significant shifts toward the target impression as the VIV was moved toward the intended extreme, while overall naturalness and speaker similarity remained acceptable.

In summary, the paper demonstrates that a compact, interpretable impression vector combined with adversarial disentanglement and LLM‑driven automatic mapping enables effective, fine‑grained control of high‑level voice impressions in zero‑shot TTS. The method preserves speaker identity, requires minimal manual effort, and opens avenues for multilingual extensions, richer impression taxonomies, and real‑time interactive voice styling.

Comments & Academic Discussion

Loading comments...

Leave a Comment