Robust Reinforcement Learning-Based Locomotion for Resource-Constrained Quadrupeds with Exteroceptive Sensing

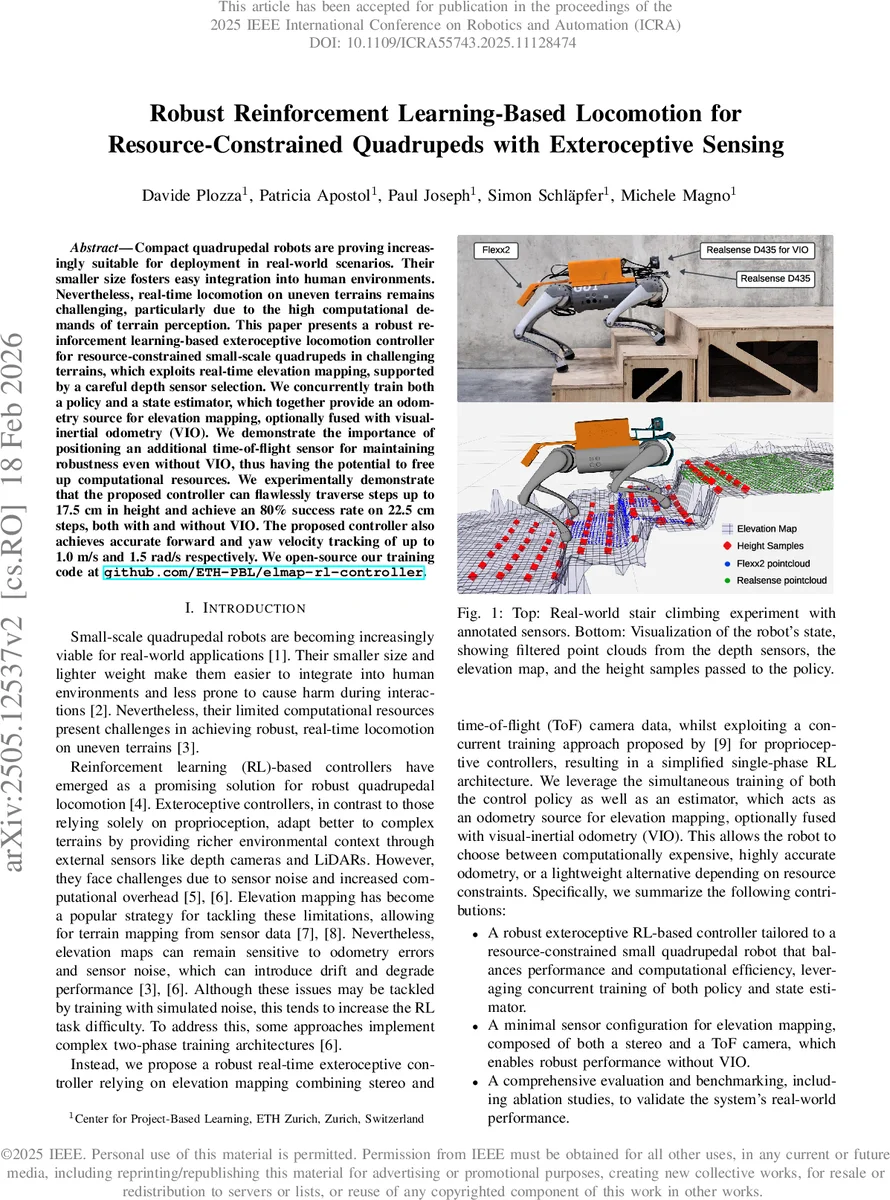

Compact quadrupedal robots are proving increasingly suitable for deployment in real-world scenarios, and their small size makes them easy to integrate into human environments. Nevertheless, real-time locomotion on uneven terrain remains challenging, particularly because of the high computational demands of terrain perception. This paper presents a robust reinforcement learning-based exteroceptive locomotion controller for resource-constrained small-scale quadrupeds in challenging terrain, which relies on real-time elevation mapping supported by careful depth sensor selection. We concurrently train a policy and a state estimator, which together provide an odometry source for elevation mapping, optionally fused with visual-inertial odometry (VIO). We show that positioning an additional time-of-flight sensor is important for maintaining robustness even without VIO, which has the potential to free up computational resources. Experiments show that the proposed controller traverses steps up to 17.5 cm high without failure and achieves an 80% success rate on 22.5 cm steps, both with and without VIO. The controller also tracks forward and yaw velocities accurately up to 1.0 m/s and 1.5 rad/s, respectively. We open-source our training code at github.com/ETH-PBL/elmap-rl-controller.
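The abstract states that the concurrently trained policy and state estimator together provide an odometry source for elevation mapping. As a minimal sketch of how a predicted base velocity could be turned into odometry, the snippet below dead-reckons a planar pose. It assumes the estimator outputs a body-frame linear velocity and that the yaw rate comes from the IMU gyroscope; `Pose2D` and `integrate_odometry` are hypothetical names, and the paper's actual odometry pipeline (including the optional VIO fusion) is not reproduced here.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    x: float = 0.0    # world-frame position [m]
    y: float = 0.0    # world-frame position [m]
    yaw: float = 0.0  # heading [rad]


def integrate_odometry(pose: Pose2D, v_body: tuple[float, float],
                       yaw_rate: float, dt: float) -> Pose2D:
    """Advance a planar pose by one forward-Euler step.

    v_body:   (vx, vy) in the robot base frame, assumed to come from a learned estimator.
    yaw_rate: angular velocity about z [rad/s], assumed to come from the IMU.
    """
    vx, vy = v_body
    c, s = math.cos(pose.yaw), math.sin(pose.yaw)
    # Rotate the body-frame velocity into the world frame, then integrate it.
    x = pose.x + (c * vx - s * vy) * dt
    y = pose.y + (s * vx + c * vy) * dt
    # Integrate the heading and wrap it to (-pi, pi].
    new_yaw = pose.yaw + yaw_rate * dt
    yaw = math.atan2(math.sin(new_yaw), math.cos(new_yaw))
    return Pose2D(x, y, yaw)
```

Calling `integrate_odometry` 50 times with `v_body=(1.0, 0.0)`, `yaw_rate=0.0` and `dt=0.02` moves the pose about 1 m along x. Pure dead reckoning accumulates drift, which is why a map built on such odometry benefits from corrections such as VIO fusion when it is available.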

💡 Research Summary

This paper presents a robust reinforcement learning (RL)-based locomotion controller designed for small-scale quadrupedal robots that must traverse uneven terrain under tight computational budgets. The authors equip a modified Unitree Go1 platform with an Intel NUC (i7) and an NVIDIA Jetson Orin Nano, and integrate three depth sensors: a front-facing RealSense D435 stereo camera, a rear-mounted PMD Flexx2 time-of-flight (ToF) sensor, and an additional D435 used as an infrared stereo pair for visual-inertial odometry (VIO). By combining stereo and ToF data, the system builds a real-time 2.5-D elevation map (0.025 m resolution, 5 × 5 m area) with a GPU-accelerated implementation running at 30 Hz on the Jetson. Point clouds are filtered and down-sampled, and points falling on the robot's own limbs are masked out to avoid self-interference. A Kalman filter updates the height estimate of each grid cell, and a simple global z-shift correction mitigates minor odometry drift.
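The per-cell Kalman update can be illustrated with a small CPU sketch. It assumes a robot-centric square grid with the 5 × 5 m extent and 0.025 m resolution quoted above (200 × 200 cells), points that are already filtered, down-sampled, self-masked and expressed in the map frame, and a single fixed measurement variance. `ElevationMap`, `cell_index` and `update` are hypothetical names; the GPU-accelerated implementation running on the Jetson, and its noise model, may differ.

```python
import numpy as np


class ElevationMap:
    """Robot-centric 2.5-D height grid with a scalar Kalman filter per cell (sketch)."""

    def __init__(self, length_m: float = 5.0, resolution_m: float = 0.025):
        self.res = resolution_m
        self.n = int(round(length_m / resolution_m))     # 5 m / 0.025 m = 200 cells per side
        self.height = np.full((self.n, self.n), np.nan)  # mean height per cell [m]
        self.var = np.full((self.n, self.n), np.inf)     # height variance per cell [m^2]

    def cell_index(self, x: float, y: float):
        """Map a point (origin at the grid centre) to (row, col), or None if outside the grid."""
        half = self.n * self.res / 2.0
        i = int(np.floor((x + half) / self.res))
        j = int(np.floor((y + half) / self.res))
        if 0 <= i < self.n and 0 <= j < self.n:
            return i, j
        return None

    def update(self, points: np.ndarray, meas_var: float) -> None:
        """Fuse an (N, 3) array of map-frame points into the grid, one point at a time."""
        for x, y, z in points:
            idx = self.cell_index(x, y)
            if idx is None:
                continue
            h, p = self.height[idx], self.var[idx]
            if np.isinf(p):
                # First observation of this cell: adopt the measurement directly.
                self.height[idx], self.var[idx] = z, meas_var
            else:
                # Scalar Kalman update: variance-weighted fusion of prior and measurement.
                self.height[idx] = (meas_var * h + p * z) / (p + meas_var)
                self.var[idx] = (p * meas_var) / (p + meas_var)
```

Each update is the scalar Kalman form of variance-weighted averaging: the fused height moves toward whichever of the prior and the measurement has the smaller variance, and the cell variance shrinks with every observation.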

The core learning architecture follows the concurrent training paradigm introduced in prior work
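To make the concurrent-training idea more concrete, the sketch below shows only the supervised half of one common formulation: a state estimator regresses a privileged simulator quantity (here, base linear velocity) from a history of proprioceptive observations, and its prediction is appended to the policy's input. The linear estimator, the names `estimator_step` and `policy_input`, and the choice of velocity as the target are assumptions for illustration; the RL update of the policy (for example with an on-policy method such as PPO) is omitted, and the paper's networks and targets may differ.

```python
import numpy as np


def estimator_step(W: np.ndarray, obs_hist: np.ndarray, v_true: np.ndarray,
                   lr: float = 1e-2):
    """One supervised gradient step for a linear velocity estimator.

    obs_hist: (B, D) batch of proprioceptive observation histories from simulation.
    v_true:   (B, 3) privileged ground-truth base velocities from the simulator.
    Returns the updated (D, 3) weights and the MSE loss measured before the step.
    """
    pred = obs_hist @ W
    err = pred - v_true
    loss = float(np.mean(err ** 2))
    # Gradient of the mean squared error with respect to W.
    grad = 2.0 * obs_hist.T @ err / err.size
    return W - lr * grad, loss


def policy_input(obs: np.ndarray, W_est: np.ndarray, obs_hist: np.ndarray) -> np.ndarray:
    """Append the estimator's velocity prediction to the policy observation."""
    return np.concatenate([obs, obs_hist @ W_est], axis=-1)
```

In a concurrent setup both updates happen in the same training loop on the same simulated rollouts, so the policy learns to act on the estimator's (imperfect) outputs rather than on ground truth it will not have on the real robot.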
