Cocoa: Co-Planning and Co-Execution with AI Agents

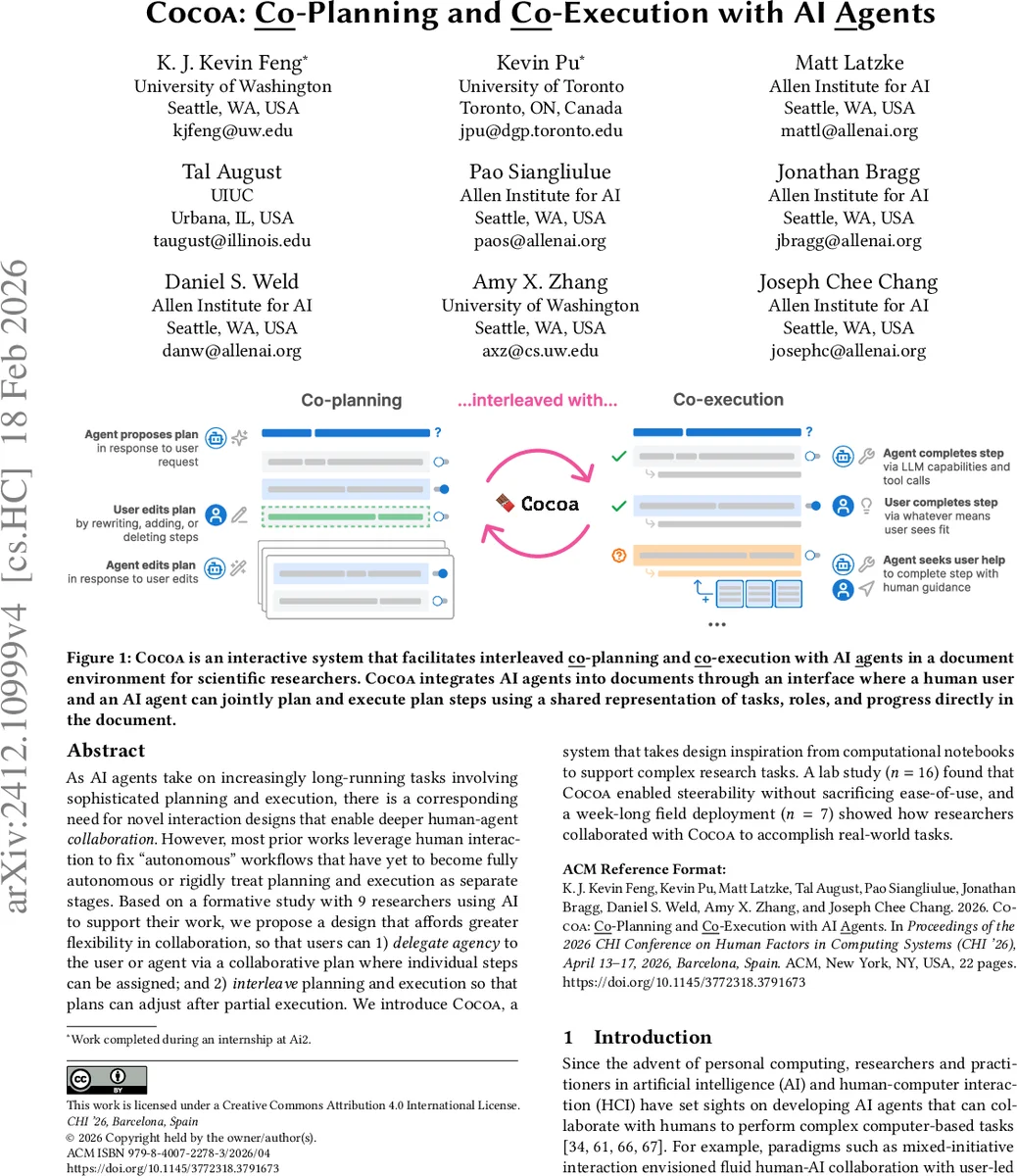

As AI agents take on increasingly long-running tasks involving sophisticated planning and execution, there is a corresponding need for novel interaction designs that enable deeper human-agent collaboration. However, most prior work either uses human interaction to patch “autonomous” workflows that are not yet fully autonomous, or rigidly treats planning and execution as separate stages. Based on a formative study with 9 researchers using AI to support their work, we propose a design that affords greater flexibility in collaboration, so that users can 1) delegate agency to either the user or the agent through a collaborative plan in which individual steps can be assigned; and 2) interleave planning and execution so that plans can adjust after partial execution. We introduce Cocoa, a system that takes design inspiration from computational notebooks to support complex research tasks. A lab study (n=16) found that Cocoa enabled steerability without sacrificing ease-of-use, and a week-long field deployment (n=7) showed how researchers collaborated with Cocoa to accomplish real-world tasks.

💡 Research Summary

The paper introduces Cocoa, an interactive document‑based system that tightly integrates large‑language‑model (LLM) agents into the workflow of scientific researchers. Unlike prior approaches that treat planning and execution as separate, sequential phases, Cocoa enables “co‑planning” and “co‑execution,” allowing users to iteratively edit a shared plan, assign individual steps to either the human or the AI, and immediately re‑run or modify steps based on intermediate results. The design draws inspiration from computational notebooks: each plan step is represented as a notebook‑like cell that can be executed, inspected, and revised in place.
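The idea of a shared plan whose steps can each be assigned to the human or the AI can be pictured as a small data structure. The following Python is a minimal sketch under stated assumptions, not Cocoa's actual implementation: `Step`, `Plan`, `assign`, `run_agent_steps`, and `fake_agent` are hypothetical names, and the agent is a stub standing in for an LLM call. It shows per-step assignment and how execution pauses at a step the researcher has kept for themselves.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical model of a co-plan; these names are illustrative and are
# not taken from Cocoa's implementation.
AGENT = "agent"
USER = "user"


@dataclass
class Step:
    description: str
    assignee: str = AGENT         # who is responsible for this step
    output: Optional[str] = None  # shown inline, like a notebook cell's output

    @property
    def done(self) -> bool:
        return self.output is not None


@dataclass
class Plan:
    steps: List[Step] = field(default_factory=list)

    def assign(self, index: int, assignee: str) -> None:
        """Reassign one step to the agent or to the user."""
        if assignee not in (AGENT, USER):
            raise ValueError(f"unknown assignee: {assignee!r}")
        self.steps[index].assignee = assignee

    def run_agent_steps(self, agent: Callable[[str], str]) -> List[int]:
        """Run pending agent steps in order, pausing at the first pending
        user step. Returns the indices of the steps the agent executed."""
        executed = []
        for i, step in enumerate(self.steps):
            if step.done:
                continue
            if step.assignee == USER:
                break  # hand control back to the researcher
            step.output = agent(step.description)
            executed.append(i)
        return executed


def fake_agent(task: str) -> str:
    # Stand-in for an LLM call (hypothetical).
    return f"[agent draft] {task}"


plan = Plan([
    Step("Search for papers on the topic"),
    Step("Pick the five most relevant papers"),
    Step("Summarize each selected paper"),
])
plan.assign(1, USER)                           # researcher keeps the judgment call
first = plan.run_agent_steps(fake_agent)       # [0]: pauses at the user step
plan.steps[1].output = "papers A, B, C, D, E"  # researcher completes step 1
second = plan.run_agent_steps(fake_agent)      # [2]
```

Pausing at the first pending user step, rather than skipping past it, is an assumption of this sketch: later steps may depend on the researcher's decision, so the agent is not allowed to run ahead.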

The authors first conducted a formative study with nine researchers, uncovering that project documents (e.g., lab notebooks, research wikis) are natural venues for collaborative AI assistance, but existing tools either force users to intervene only after an autonomous run or require them to control the entire process manually. These findings motivated three design goals: (1) a shared, editable plan visible in the document, (2) flexible assignment of agency per step, and (3) seamless transition between planning and execution.

Cocoa’s UI consists of a plan pane where the agent proposes an initial sequence of tasks, buttons to assign steps to the agent or the researcher, and a sidebar that displays the agent’s generated outputs (text, tables, code). Users can edit any output directly, provide feedback, and trigger re‑execution of that step, which automatically updates downstream steps. This interleaving of planning and execution creates a feedback loop that mirrors how researchers naturally work: they draft a plan, run a sub‑task, examine results, and then refine the plan before proceeding.
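The edit-and-re-execute loop described above can be sketched as follows. This hypothetical Python sketch assumes a strictly linear plan in which each step consumes the previous step's output; the summary does not say how Cocoa tracks dependencies, so `Cell`, `run_from`, `edit_output`, `rerun_with_feedback`, and `echo_agent` are illustrative names only. Editing a step's output re-runs every step after it, while feedback re-runs the step itself and then its successors.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical sketch, not Cocoa's code: plan steps form a linear,
# notebook-like sequence in which each step consumes the previous output.
Agent = Callable[[str, str], str]  # (instruction, upstream output) -> output


@dataclass
class Cell:
    instruction: str
    output: Optional[str] = None


def run_from(cells: List[Cell], start: int, agent: Agent) -> None:
    """(Re)execute cells from `start` to the end, in order, so each one
    sees the freshest upstream output."""
    for j in range(start, len(cells)):
        upstream = cells[j - 1].output if j > 0 else ""
        cells[j].output = agent(cells[j].instruction, upstream or "")


def edit_output(cells: List[Cell], i: int, text: str, agent: Agent) -> None:
    """The user edits cell i's output directly; downstream cells are recomputed."""
    cells[i].output = text
    run_from(cells, i + 1, agent)


def rerun_with_feedback(cells: List[Cell], i: int, feedback: str, agent: Agent) -> None:
    """Fold user feedback into cell i's instruction, then re-execute cell i
    and everything after it."""
    cells[i].instruction += f" (feedback: {feedback})"
    run_from(cells, i, agent)


def echo_agent(instruction: str, upstream: str) -> str:
    # Stand-in for an LLM call: records what it was given.
    return f"{instruction} <- {upstream}" if upstream else instruction


cells = [Cell("collect"), Cell("filter"), Cell("summarize")]
run_from(cells, 0, echo_agent)
edit_output(cells, 0, "collected (fixed)", echo_agent)
# cells[2].output is now "summarize <- filter <- collected (fixed)"
rerun_with_feedback(cells, 1, "keep 2020+", echo_agent)
# cells[2].output is now "summarize <- filter (feedback: keep 2020+) <- collected (fixed)"
```

A real system might re-run only the steps that actually depend on the edited one, or hold user-assigned steps for the researcher; the linear re-run here is simply the smallest assumption that makes downstream updates visible.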

The system was evaluated in two studies. In a controlled lab experiment with 16 participants, Cocoa was compared against a strong chat‑based baseline that follows a more linear “plan‑then‑execute” pattern. Quantitatively, participants using Cocoa made an average of 3.2 plan modifications per task, more than double the baseline rate, indicating higher steerability. Subjective measures showed significant improvements in perceived control and transparency, while overall ease‑of‑use remained comparable.

A subsequent 7‑day field deployment with seven researchers applied Cocoa to real research projects such as literature surveys, method synthesis, and data organization. Participants reported that the ability to assign specific steps to the AI freed them from low‑level routine work, while the capacity to edit AI‑generated drafts prevented the propagation of errors. The shared document served both as an execution log and a living research artifact, improving traceability and collaboration among team members.

Overall, the paper contributes (1) a formative study that clarifies researchers' needs for flexible human‑AI collaboration, (2) the Cocoa prototype, which operationalizes co‑planning and co‑execution in a notebook‑style interface, (3) empirical evidence that this design yields higher agent steerability without sacrificing usability, and (4) insights from a real‑world deployment about how researchers dynamically delegate agency. The authors argue that in knowledge‑intensive domains, AI agents are most valuable when they act as collaborative partners rather than fully autonomous actors. As future work, they suggest extending memory management for long‑term plans, supporting conflict resolution among multiple users, and integrating domain‑specific plugins to broaden Cocoa’s applicability.
