MMCAformer: Macro-Micro Cross-Attention Transformer for Traffic Speed Prediction with Microscopic Connected Vehicle Driving Behavior

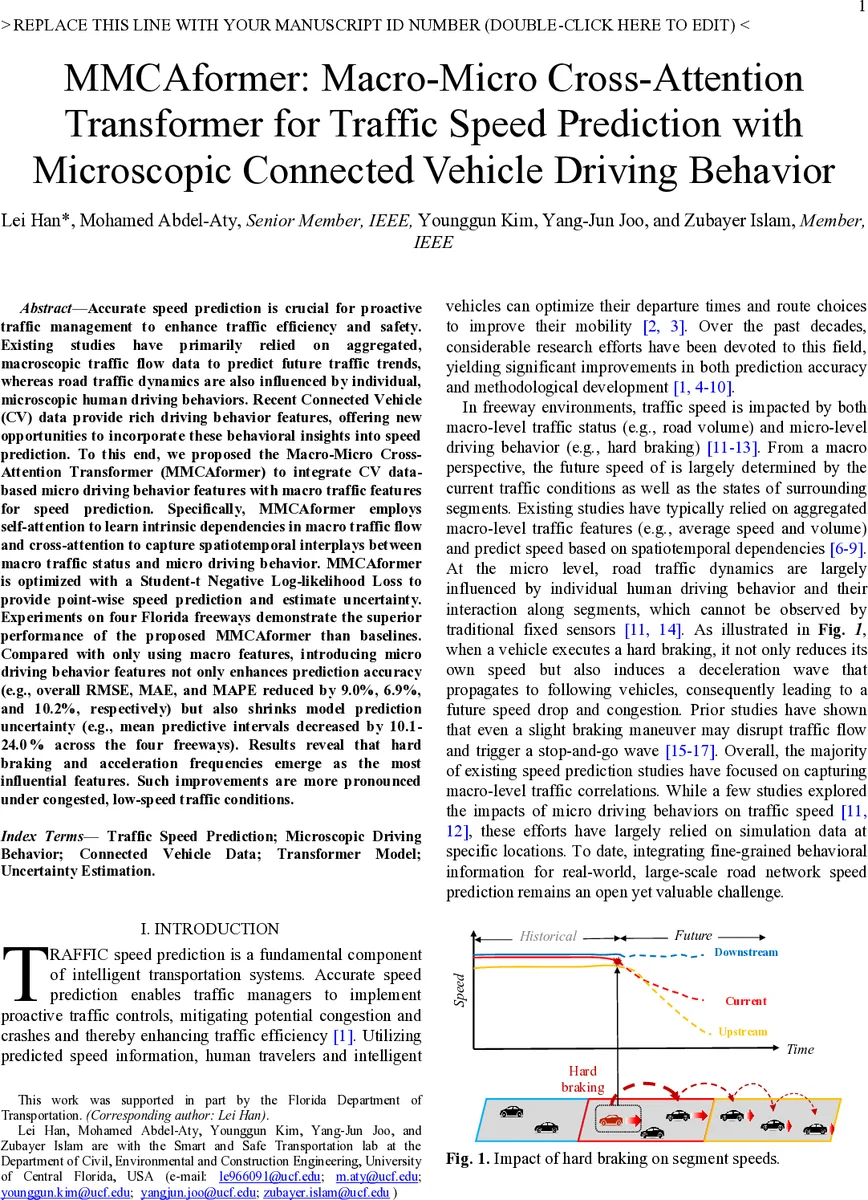

Accurate speed prediction is crucial for proactive traffic management to enhance traffic efficiency and safety. Existing studies have primarily relied on aggregated, macroscopic traffic flow data to predict future traffic trends, whereas road traffic dynamics are also influenced by individual, microscopic human driving behaviors. Recent Connected Vehicle (CV) data provide rich driving behavior features, offering new opportunities to incorporate these behavioral insights into speed prediction. To this end, we propose the Macro-Micro Cross-Attention Transformer (MMCAformer) to integrate CV data-based micro driving behavior features with macro traffic features for speed prediction. Specifically, MMCAformer employs self-attention to learn intrinsic dependencies in macro traffic flow and cross-attention to capture spatiotemporal interplays between macro traffic status and micro driving behavior. MMCAformer is optimized with a Student-t negative log-likelihood loss to provide point-wise speed prediction and estimate uncertainty. Experiments on four Florida freeways demonstrate the superior performance of the proposed MMCAformer compared to baselines. Compared with only using macro features, introducing micro driving behavior features not only enhances prediction accuracy (e.g., overall RMSE, MAE, and MAPE reduced by 9.0%, 6.9%, and 10.2%, respectively) but also shrinks model prediction uncertainty (e.g., mean predictive intervals decreased by 10.1-24.0% across the four freeways). Results reveal that hard braking and acceleration frequencies emerge as the most influential features. Such improvements are more pronounced under congested, low-speed traffic conditions.

💡 Research Summary

**

The paper introduces MMCAformer, a novel Transformer‑based architecture that jointly leverages macroscopic traffic flow data and microscopic driving‑behavior information extracted from connected‑vehicle (CV) trajectories to predict freeway speeds. Traditional speed‑prediction approaches rely almost exclusively on aggregated sensor data (loop detectors, probe‑vehicle averages) and therefore capture only the macro‑level dynamics of traffic. However, traffic flow is also strongly affected by individual driver actions such as hard braking or rapid acceleration, which can propagate as shockwaves and cause abrupt speed changes. Recent advances in CV technology provide high‑frequency (1–3 s) vehicle‑level kinematic data, making it possible to quantify these micro‑behaviors in real traffic.

Data and Feature Engineering

The authors collected CV data from three Florida counties (Hillsborough, Orange, Seminole) covering four major freeways (I‑4, I‑75, I‑275, OS‑I‑4) over 66 days in 2024. The raw dataset contains roughly 8.9 million trajectory points from about 45 000 daily trips, representing a 4–5 % market penetration. After cleaning (removing speed outliers, stationary vehicles, etc.), they retained about 2.5 million unique journeys and 35 million points. For each of the 565 road segments, they derived:

- Macro features – segment‑level average speed and CV volume (unique journey count) aggregated every 5 minutes.

- Micro features – (1) speed volatility (standard deviation of speeds within each vehicle trajectory, then averaged per segment) and (2) frequencies of acceleration and braking events. Acceleration is computed over 3‑second intervals; thresholds based on the 85th and 97.5th percentiles of the empirical distribution define three intensity levels (light, medium, hard) for both acceleration and deceleration. Consequently, seven micro‑level variables (volatility + three acceleration levels + three braking levels) are produced at the same 5‑minute cadence.

Model Architecture

MMCAformer receives two parallel input tensors: a macro tensor (X^{M}\in\mathbb{R}^{T\times N\times d_M}) and a micro tensor (X^{\mu}\in\mathbb{R}^{T\times N\times d_{\mu}}), where (T) is the historical window length, (N) the number of segments, and (d) the feature dimensions. The architecture consists of three main blocks:

- Macro Self‑Attention Encoder – a standard multi‑head self‑attention layer (identical to the original Transformer encoder) processes only the macro tensor, learning spatio‑temporal dependencies among segment speeds and volumes.

- Cross‑Attention Fusion – the macro encoder output serves as the query, while the micro tensor provides keys and values. This cross‑attention mechanism explicitly models how microscopic driving actions influence (and are influenced by) the macroscopic traffic state. The resulting fused representation captures bidirectional macro‑micro interactions.

- Prediction Head – the fused representation is passed through a linear projection that outputs three parameters of a Student‑t distribution: location (\mu) (point prediction of future speed), scale (\sigma) (uncertainty magnitude), and degrees of freedom (\nu) (tail heaviness).

Loss Function and Uncertainty Estimation

Instead of the conventional mean‑squared error, the authors adopt a Student‑t negative log‑likelihood (t‑NLL) loss:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment