Automated Assessment of Kidney Ureteroscopy Exploration for Training

Purpose: Kidney ureteroscopic navigation is challenging with a steep learning curve. However, current clinical training has major deficiencies, as it requires one-on-one feedback from experts and occurs in the operating room (OR). Therefore, there is a need for a phantom training system with automated feedback to greatly expand training opportunities. Methods: We propose a novel, purely ureteroscope video-based scope localization framework that automatically identifies calyces missed by the trainee in a phantom kidney exploration. We use a slow, thorough, prior exploration video of the kidney to generate a reference reconstruction. Then, this reference reconstruction can be used to localize any exploration video of the same phantom. Results: In 15 exploration videos, a total of 69 out of 74 calyces were correctly classified. We achieve < 4 mm camera pose localization error. Given the reference reconstruction, the system takes 10 minutes to generate the results for a typical exploration (1-2 minutes long). Conclusion: We demonstrate a novel camera localization framework that can provide accurate and automatic feedback for kidney phantom explorations. We show its ability as a valid tool that enables out-of-OR training without requiring supervision from an expert.

💡 Research Summary

The paper addresses a critical bottleneck in ureteroscopic training: the steep learning curve combined with the reliance on expert‑present, operating‑room (OR) based instruction. To overcome the need for one‑on‑one supervision and to expand training opportunities, the authors propose a fully video‑based localization framework that automatically evaluates a trainee’s exploration of a kidney phantom. The system consists of two main stages. First, a “reference” reconstruction is generated from a slow, thorough exploration performed by an expert. Using simultaneous localization and mapping (SLAM) techniques adapted for endoscopic imagery, the algorithm extracts robust, rotation‑invariant features (ORB) across multiple scales, matches them across frames, and builds a dense 3‑D point cloud of the phantom together with the camera trajectory. This reference model encodes the spatial positions of all calyces and serves as a ground‑truth map for subsequent assessments.
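The feature-matching step described above can be illustrated with a minimal sketch. ORB descriptors are binary strings, so they are conventionally compared by Hamming distance. The code below is not the authors' implementation: descriptors are stored as plain Python integers, and the function names, the cross-check rule, and the `max_distance` threshold are assumptions chosen for illustration.

```python
from typing import List, Tuple


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors stored as ints."""
    return bin(a ^ b).count("1")


def match_descriptors(
    query: List[int],
    train: List[int],
    max_distance: int = 64,  # ASSUMED threshold, not taken from the paper
) -> List[Tuple[int, int, int]]:
    """Brute-force matching with a mutual-nearest-neighbour (cross-check) test.

    Returns (query_index, train_index, distance) triples.
    """
    if not query or not train:
        return []
    # Nearest train descriptor for every query descriptor, and vice versa.
    q_to_t = [min(range(len(train)), key=lambda j: hamming(q, train[j])) for q in query]
    t_to_q = [min(range(len(query)), key=lambda i: hamming(query[i], t)) for t in train]
    matches = []
    for i, j in enumerate(q_to_t):
        d = hamming(query[i], train[j])
        # Keep a pair only if each is the other's nearest neighbour and the
        # distance is small enough.
        if t_to_q[j] == i and d <= max_distance:
            matches.append((i, j, d))
    return matches


# Toy 8-bit descriptors from two hypothetical frames.
frame_a = [0b11110000, 0b00001111, 0b10101010]
frame_b = [0b00001110, 0b11110001, 0b01010101]
print(match_descriptors(frame_a, frame_b, max_distance=2))
```

Real ORB descriptors are 256 bits long, and a production pipeline would typically use an optimized matcher rather than this quadratic loop. The cross-check is one common way to suppress ambiguous matches before triangulation.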

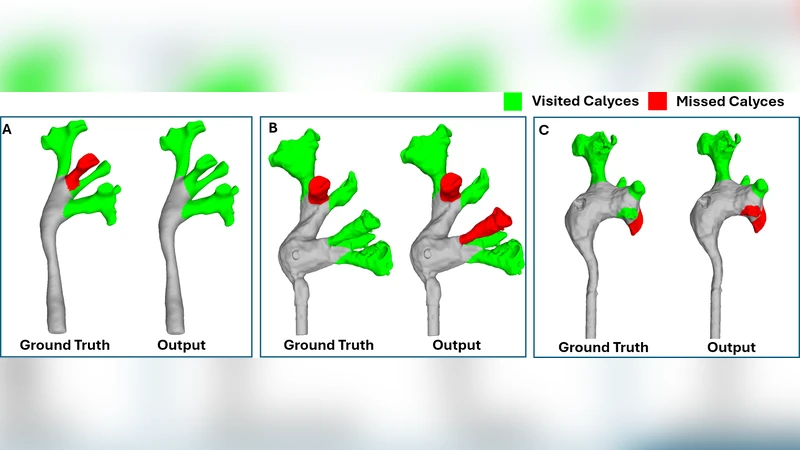

Second, any trainee video of the same phantom is registered to the reference. For each frame, the algorithm solves a Perspective‑n‑Point (PnP) problem with RANSAC outlier rejection to estimate the camera pose relative to the reference point cloud. By comparing the trajectory of the trainee’s camera with the reference, the system determines which calyces have been visited and which have been missed. The output includes a binary visited/missed label for each calyx, the timestamps of first observation, and quantitative metrics such as coverage percentage and average camera displacement.
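The visited/missed decision can be sketched as follows. The summary does not state the criterion the authors use, so this example assumes that a calyx counts as visited once the localized camera comes within a fixed radius of its centre in the reference frame. The function name `assess_exploration`, the data layout, and the 5 mm radius are all hypothetical.

```python
import math
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float, float]


def assess_exploration(
    trajectory: List[Tuple[float, Point]],  # (timestamp in s, camera position in mm)
    calyces: Dict[str, Point],              # calyx name -> centre in mm, reference frame
    visit_radius_mm: float = 5.0,           # ASSUMED threshold, not from the paper
) -> Tuple[Dict[str, Optional[float]], float]:
    """Return the first-visit timestamp per calyx (None if missed) and coverage in %."""
    first_seen: Dict[str, Optional[float]] = {name: None for name in calyces}
    # Walk the poses in time order so the first qualifying pose sets the timestamp.
    for t, cam in sorted(trajectory, key=lambda sample: sample[0]):
        for name, centre in calyces.items():
            if first_seen[name] is None and math.dist(cam, centre) <= visit_radius_mm:
                first_seen[name] = t
    visited = sum(ts is not None for ts in first_seen.values())
    coverage = 100.0 * visited / len(calyces) if calyces else 0.0
    return first_seen, coverage


# Hypothetical phantom with four calyces and a three-pose trainee trajectory.
calyces = {
    "upper": (0.0, 0.0, 0.0),
    "middle": (10.0, 0.0, 0.0),
    "lower": (20.0, 0.0, 0.0),
    "posterior": (30.0, 0.0, 0.0),
}
trajectory = [(0.0, (0.0, 0.0, 3.0)), (1.5, (9.0, 0.0, 0.0)), (3.0, (40.0, 0.0, 0.0))]
first_seen, coverage = assess_exploration(trajectory, calyces)
missed = [name for name, ts in first_seen.items() if ts is None]
print(first_seen, coverage, missed)
```

A distance test is only one possible rule. A visibility-based criterion, for example checking whether the calyx falls inside the camera's field of view, would slot into the same loop in place of the `math.dist` comparison.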

The authors evaluated the method on fifteen exploration videos, each lasting 1–2 minutes, covering a total of 74 calyces. The system correctly classified 69 calyces, yielding a classification accuracy of roughly 93% (69/74). Pose localization error was below 4 mm on average, with rotational error under 2°, which is comparable to clinical navigation tolerances. Processing time, after the reference reconstruction is available, is about ten minutes per exploration, which is fast enough for post‑session feedback even though the pipeline does not run in real time.

Key strengths of the approach include: (1) No reliance on external tracking hardware; the entire pipeline uses only the ureteroscope video, reducing cost and simplifying setup. (2) Once a reference is built for a given phantom, it can be reused indefinitely, enabling high‑throughput training. (3) Automated, objective feedback eliminates subjectivity inherent in expert observation and provides clear performance metrics for both trainees and instructors.

Limitations are also acknowledged. The current validation uses a rigid plastic phantom; real patient anatomy introduces tissue deformation, bleeding, and occlusions that can degrade feature detection and pose estimation. The method is sensitive to lighting variations and camera shake, which may cause registration failures in less controlled environments. Moreover, the system operates offline; while ten‑minute turnaround is acceptable for post‑session review, true real‑time guidance would require algorithmic acceleration and possibly hardware‑level optimizations. Finally, the quality of the reference depends on the expert’s thoroughness; an incomplete reference would propagate errors to all subsequent assessments.

Future work suggested by the authors includes extending the framework to real patient data, incorporating adaptive feature extraction to handle variable illumination, developing a lightweight, possibly GPU‑accelerated version for near‑real‑time feedback, and creating a library of reference models for phantoms of varying sizes and anatomical variations. Longitudinal studies to measure the impact of automated feedback on skill acquisition and retention are also proposed.

In summary, this study introduces a novel, purely video‑driven camera localization and assessment pipeline for kidney ureteroscopy training. It demonstrates high accuracy in detecting missed calyces and sub‑centimeter pose precision, while delivering feedback within a practical time frame. By eliminating the need for expert presence and expensive tracking hardware, the system promises to democratize ureteroscopic skill acquisition, allowing trainees to practice extensively outside the OR and receive objective, quantitative performance evaluations.
