Controlled oscillation modeling using port-Hamiltonian neural networks

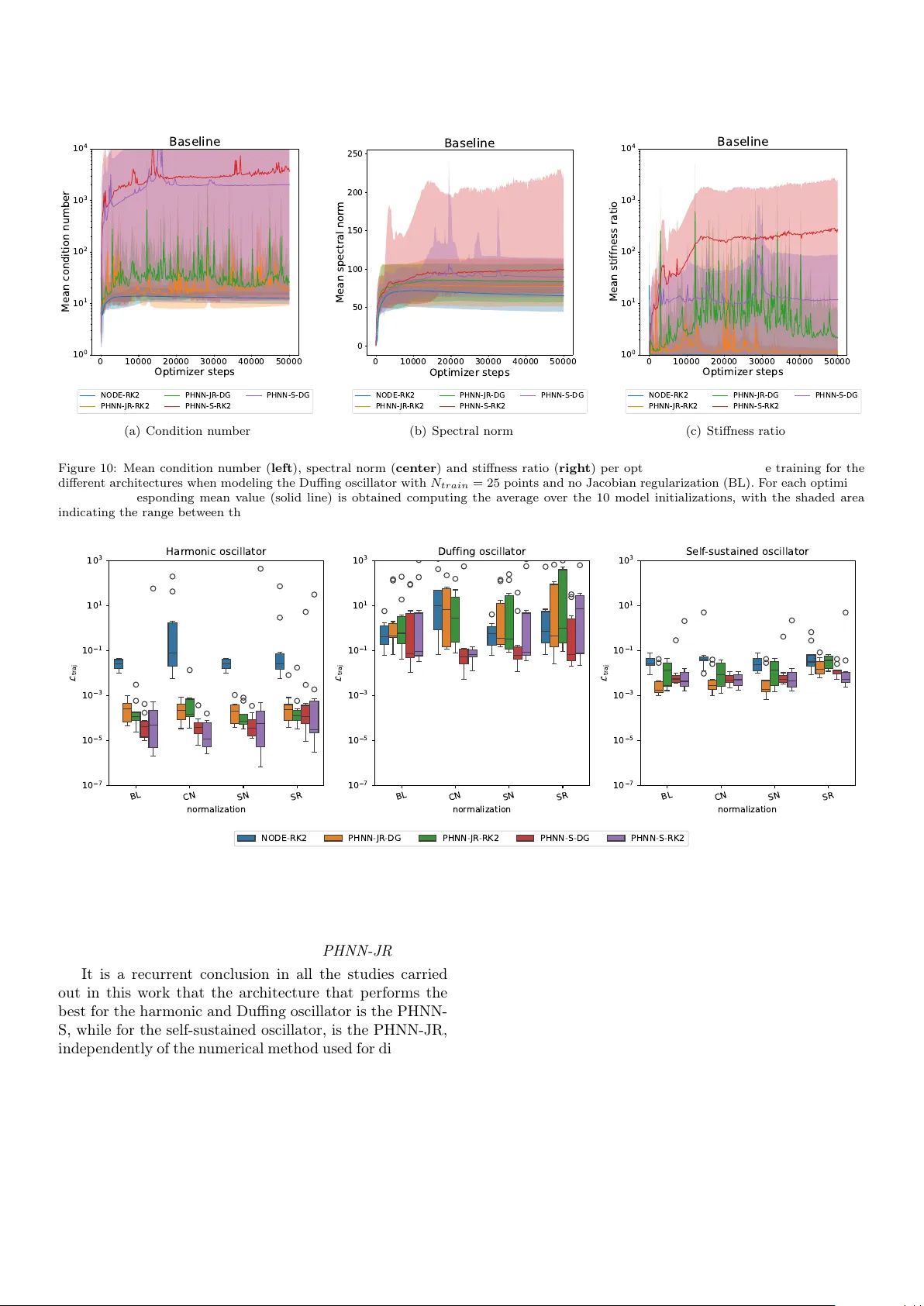

Learning dynamical systems with purely data-driven methods is challenging because such methods do not learn the underlying conservation laws that would allow them to generalize correctly. Existing port-Hamiltonian neural network methods have recently been successfu…
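The abstract refers to port-Hamiltonian models without stating their form. The standard input-state-output port-Hamiltonian system is $\dot{x} = (J - R)\nabla H(x) + G u$, $y = G^\top \nabla H(x)$, where $J$ is skew-symmetric, $R$ is positive semi-definite, and $H$ is the stored energy. The sketch below is a hedged illustration under stated assumptions and is not the authors' method or code. It uses a hand-written mass-spring-damper instead of a neural network: in a port-Hamiltonian neural network, $H$ (and possibly $J$, $R$, $G$) would be learned, but here they are fixed so the structure is visible. The function names (`ph_vector_field`, `simulate`, and so on) and the parameters `m`, `k`, `c` are hypothetical.

```python
"""Hypothetical sketch: a mass-spring-damper written in port-Hamiltonian form.

State x = (q, p) is (displacement, momentum). The dynamics are
    dx/dt = (J - R) grad H(x) + G u,    y = G^T grad H(x)
with J = [[0, 1], [-1, 0]], R = diag(0, c) and G = (0, 1)^T.
This is an illustrative assumption, not the paper's learned model.
"""
from typing import Callable, List, Tuple

State = Tuple[float, float]


def hamiltonian(x: State, m: float = 1.0, k: float = 1.0) -> float:
    """Stored energy H(q, p) = p^2 / (2 m) + k q^2 / 2."""
    q, p = x
    return p * p / (2.0 * m) + 0.5 * k * q * q


def grad_h(x: State, m: float = 1.0, k: float = 1.0) -> State:
    """Gradient (dH/dq, dH/dp) = (k q, p / m)."""
    q, p = x
    return (k * q, p / m)


def ph_vector_field(x: State, u: float, c: float, m: float, k: float) -> State:
    """Evaluate (J - R) grad H(x) + G u for the oscillator."""
    dh_dq, dh_dp = grad_h(x, m, k)
    # J grad H = (dH/dp, -dH/dq); R grad H = (0, c dH/dp); G u = (0, u).
    return (dh_dp, -dh_dq - c * dh_dp + u)


def output(x: State, m: float = 1.0, k: float = 1.0) -> float:
    """Port output y = G^T grad H(x), which here is the velocity p / m."""
    return grad_h(x, m, k)[1]


def rk4_step(x: State, u: float, dt: float, c: float, m: float, k: float) -> State:
    """One classical Runge-Kutta step with u held constant over the step."""

    def f(s: State) -> State:
        return ph_vector_field(s, u, c, m, k)

    def shift(s: State, d: State, h: float) -> State:
        return (s[0] + h * d[0], s[1] + h * d[1])

    k1 = f(x)
    k2 = f(shift(x, k1, 0.5 * dt))
    k3 = f(shift(x, k2, 0.5 * dt))
    k4 = f(shift(x, k3, dt))
    return (
        x[0] + dt / 6.0 * (k1[0] + 2.0 * k2[0] + 2.0 * k3[0] + k4[0]),
        x[1] + dt / 6.0 * (k1[1] + 2.0 * k2[1] + 2.0 * k3[1] + k4[1]),
    )


def simulate(
    x0: State,
    control: Callable[[float], float],
    steps: int,
    dt: float,
    c: float = 0.0,
    m: float = 1.0,
    k: float = 1.0,
) -> List[State]:
    """Integrate from x0, sampling u = control(t) at the start of each step."""
    xs = [x0]
    x = x0
    for i in range(steps):
        x = rk4_step(x, control(i * dt), dt, c, m, k)
        xs.append(x)
    return xs


if __name__ == "__main__":
    x0 = (1.0, 0.0)
    free = simulate(x0, lambda t: 0.0, steps=1000, dt=0.01)           # c = 0: lossless
    damped = simulate(x0, lambda t: 0.0, steps=1000, dt=0.01, c=0.5)  # c > 0: dissipative
    print("H free:  ", hamiltonian(free[0]), "->", hamiltonian(free[-1]))
    print("H damped:", hamiltonian(damped[0]), "->", hamiltonian(damped[-1]))
```

With $u = 0$ and $c = 0$ the energy stays essentially constant; with $c > 0$ it decays, because along trajectories $\dot{H} = -c\,(p/m)^2 + y\,u$. This energy balance is built into the structure rather than learned from data, which is the kind of prior the abstract argues purely data-driven models lack.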

Authors: *Not stated in the provided text. Judging from the code repository `https://github.com/mlinaresv/ControlledOscillationPHNNs`, the authors may be "M. Linares" et al.*