Prescriptive Scaling Reveals the Evolution of Language Model Capabilities

For deploying foundation models, practitioners increasingly need prescriptive scaling laws: given a pre-training compute budget, what downstream accuracy is attainable with contemporary post-training practice, and how stable is that mapping as the field evolves? Using large-scale evaluations of model performance, comprising 5k observational and 2k newly sampled data points, we estimate capability boundaries (high conditional quantiles of benchmark scores as a function of log pre-training FLOPs) via smoothed quantile regression with a monotone, saturating sigmoid parameterization. We validate temporal reliability by fitting on earlier model generations and evaluating on later releases. Across various tasks, the estimated boundaries are mostly stable, with the exception of math reasoning, which exhibits a consistently advancing boundary over time. We then extend our approach to analyze task-dependent saturation and to probe contamination-related shifts on math reasoning tasks. Finally, we introduce an efficient algorithm that recovers near-full-data frontiers using roughly 20% of the evaluation budget. Together, our work releases Proteus 2k, the latest model performance evaluation dataset, and introduces a practical methodology for translating compute budgets into reliable performance expectations and for monitoring when capability boundaries shift over time.

💡 Research Summary

The paper tackles a pressing practical problem for anyone deploying foundation models: given a fixed pre-training compute budget, what downstream performance can be expected when contemporary post-training techniques (fine-tuning, prompting, instruction-tuning, etc.) are applied, and how stable is this relationship over time? To answer this, the authors introduce a "prescriptive scaling" framework that moves beyond traditional empirical scaling laws, which typically relate model size or FLOPs to performance in a descriptive manner. Prescriptive scaling instead treats the compute budget as an input and predicts a performance boundary, specifically a high conditional quantile (e.g., the 90th percentile) of benchmark scores, under the assumption that modern post-training practices are fixed.

Data collection is a two‑stage process. First, the authors aggregate roughly 5 000 model‑task scores from published papers, reports, and leaderboards. Second, they augment this with 2 000 newly sampled evaluations of recent state‑of‑the‑art models (GPT‑4, LLaMA‑2, Claude, etc.) across a diverse set of 12 tasks, ranging from standard natural‑language understanding to code generation and mathematical reasoning. This yields a rich observational dataset linking log‑transformed pre‑training FLOPs to downstream scores.

Modeling is performed with smoothed quantile regression. The key innovation is the use of a monotonic, saturating sigmoid parameterization for the quantile function. This reflects the domain knowledge that performance improves with compute but eventually plateaus, preventing unrealistic extrapolation to infinite FLOPs. Smoothing mitigates over‑fitting in sparsely populated regions of the FLOP‑score space.
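The modeling step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the logistic form of `sigmoid_boundary`, the softplus-based smoothing in `smoothed_pinball`, the `bandwidth` value, the coarse grid search in `fit_boundary`, and the toy data are all hypothetical choices made for this example.

```python
import math


def sigmoid_boundary(log_flops, lower, upper, slope, midpoint):
    """Monotone, saturating boundary in log-FLOPs (assumed logistic form)."""
    z = slope * (log_flops - midpoint)
    # tanh form of the logistic function; avoids overflow for large |z|.
    return lower + (upper - lower) * 0.5 * (1.0 + math.tanh(0.5 * z))


def smoothed_pinball(residual, tau, bandwidth=0.05):
    """Smooth surrogate for the pinball loss max(tau*r, (tau-1)*r)."""
    u = -residual / bandwidth
    # Numerically stable softplus, log(1 + exp(u)).
    softplus = max(u, 0.0) + math.log1p(math.exp(-abs(u)))
    return tau * residual + bandwidth * softplus


def mean_loss(params, xs, ys, tau=0.9):
    """Average smoothed pinball loss of the boundary over all (x, y) pairs."""
    return sum(
        smoothed_pinball(y - sigmoid_boundary(x, *params), tau)
        for x, y in zip(xs, ys)
    ) / len(xs)


def fit_boundary(xs, ys, tau=0.9):
    """Coarse grid search over (upper, slope, midpoint); lower is fixed at 0.

    Only positive slopes are searched, so the fitted curve is monotone.
    """
    x_min, x_max = min(xs), max(xs)
    best_params, best_loss = None, math.inf
    for upper in (0.5 + 0.05 * i for i in range(11)):
        for slope in (0.25 * i for i in range(1, 13)):
            for j in range(21):
                midpoint = x_min + (x_max - x_min) * j / 20
                params = (0.0, upper, slope, midpoint)
                loss = mean_loss(params, xs, ys, tau)
                if loss < best_loss:
                    best_params, best_loss = params, loss
    return best_params


# Toy data (made up): accuracy rises with log10 FLOPs and then flattens.
xs = [20.0, 20.5, 21.0, 21.5, 22.0, 22.5, 23.0, 23.5, 24.0]
ys = [0.05, 0.08, 0.15, 0.30, 0.45, 0.62, 0.70, 0.74, 0.75]
params = fit_boundary(xs, ys, tau=0.9)
```

The grid search keeps the sketch dependency-free; in practice a gradient-based optimizer would likely be a better fit, since the smoothed loss is differentiable in all four parameters.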

Temporal validation is a central contribution. The authors fit the quantile boundary on older generations of models (e.g., GPT‑2/3 era) and then evaluate its predictive accuracy on later releases. For most tasks the predicted boundary remains within a few percentage points of the observed high‑quantile, indicating that the scaling relationship is largely stable over several years of rapid model development. The notable exception is mathematical reasoning (MATH, GSM‑8K, etc.), where each new generation pushes the boundary upward, suggesting that this task class is uniquely sensitive to scaling and data quality.
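One way to picture this temporal check is to split evaluations by release year and measure how often later models exceed a boundary fitted on earlier ones. The sketch below assumes that setup; the `Evaluation` record, `split_by_year`, and `exceedance_rate` are hypothetical names for this example, and the summary does not state which validation metric the paper actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass(frozen=True)
class Evaluation:
    release_year: int
    log_flops: float
    score: float


def split_by_year(
    evals: Iterable[Evaluation], cutoff_year: int
) -> Tuple[List[Evaluation], List[Evaluation]]:
    """Return (fit set, held-out set): releases up to the cutoff vs. after it."""
    evals = list(evals)
    early = [e for e in evals if e.release_year <= cutoff_year]
    late = [e for e in evals if e.release_year > cutoff_year]
    return early, late


def exceedance_rate(
    evals: Iterable[Evaluation], boundary: Callable[[float], float]
) -> float:
    """Fraction of evaluations whose score lies above the boundary."""
    evals = list(evals)
    if not evals:
        raise ValueError("no evaluations to score")
    above = sum(1 for e in evals if e.score > boundary(e.log_flops))
    return above / len(evals)
```

For a boundary fitted at quantile level tau on the early set, a held-out exceedance rate near 1 - tau is consistent with a stable boundary, while a much larger rate suggests the boundary has advanced, as the summary reports for math reasoning.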

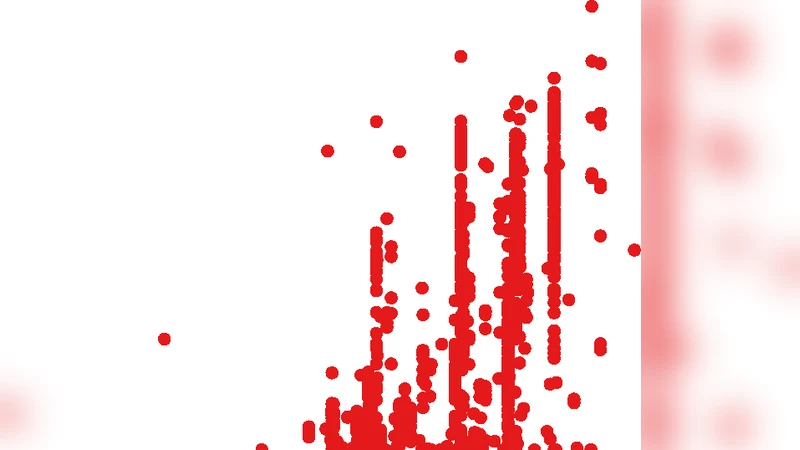

Task‑specific saturation analysis reveals that simple NLU tasks saturate at relatively modest FLOPs (~10¹⁴), whereas more complex tasks such as code generation, multi‑step reasoning, and especially math reasoning continue to benefit from larger compute budgets up to 10¹⁶ FLOPs or beyond. This insight can guide resource allocation: investing additional compute in tasks already near saturation yields diminishing returns, while allocating to non‑saturated tasks can be more cost‑effective.
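If the boundary is modeled as a logistic curve in log-FLOPs (an assumption of this sketch, with hypothetical `slope` and `midpoint` parameters), the compute level at which a task reaches a given fraction of its plateau has a closed form: solving 1 / (1 + exp(-slope * (x - midpoint))) = fraction for x gives x = midpoint + logit(fraction) / slope.

```python
import math


def saturation_log_flops(slope: float, midpoint: float, fraction: float = 0.95) -> float:
    """Log-FLOPs at which a logistic boundary reaches `fraction` of its range.

    The result is in the same log units as `midpoint`.
    """
    if slope <= 0:
        raise ValueError("slope must be positive for a rising boundary")
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must lie strictly between 0 and 1")
    logit = math.log(fraction / (1.0 - fraction))
    return midpoint + logit / slope
```

Under this parameterization, a steep slope or a low midpoint corresponds to a task that saturates early, while a shallow slope pushes the saturation point toward larger compute budgets.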

Contamination investigation addresses the growing concern that benchmark data may leak into pre‑training corpora, artificially inflating scores. By cross‑referencing model version metadata with known benchmark sources, the authors flag potentially contaminated evaluations. They find that contamination can cause abrupt shifts in the estimated boundary, underscoring the need for continuous monitoring and clean benchmark construction.

Efficient frontier recovery is another practical contribution. The authors propose an active‑learning‑inspired sampling scheme that evaluates only about 20 % of the full dataset and uses the learned quantile model to infer the remaining points. Empirically, this approach achieves mean absolute errors below 2 % while cutting evaluation cost by roughly 80 %, making large‑scale frontier estimation feasible for organizations with limited resources.
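The summary does not spell out the sampling scheme, so the sketch below is a stand-in rather than the paper's algorithm: plain stratified sampling over compute bins, which spends a fixed fraction of the evaluation budget while keeping every compute range represented. The function name `stratified_subset` and the parameters `budget_fraction`, `n_bins`, and `seed` are hypothetical.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence


def stratified_subset(
    log_flops: Sequence[float],
    budget_fraction: float = 0.2,
    n_bins: int = 5,
    seed: int = 0,
) -> List[int]:
    """Return indices of models to evaluate, spread across compute bins."""
    if not log_flops:
        return []
    rng = random.Random(seed)
    lo, hi = min(log_flops), max(log_flops)
    # Fall back to a unit width when all models share the same compute.
    width = (hi - lo) / n_bins or 1.0
    bins: Dict[int, List[int]] = defaultdict(list)
    for i, x in enumerate(log_flops):
        # Clamp so the maximum value falls in the last bin.
        bins[min(int((x - lo) / width), n_bins - 1)].append(i)
    chosen: List[int] = []
    for b in sorted(bins):
        members = bins[b]
        # Keep at least one model per non-empty bin.
        k = max(1, round(budget_fraction * len(members)))
        chosen.extend(rng.sample(members, k))
    return sorted(chosen)
```

A boundary fitted on the selected subset can then be compared against one fitted on the full data to judge how much of the frontier the reduced budget recovers.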

All data and code are released publicly. The new “Proteus 2k” dataset contains the 2 000 fresh evaluations, and a Python library implements the smoothed sigmoid quantile regression and the efficient frontier algorithm.

Overall impact: The work delivers a concrete, quantitative tool for translating compute budgets into reliable performance expectations, and it provides a methodology for detecting when capability boundaries shift. This information is crucial for budgeting, model selection, and risk assessment in production environments. Future directions suggested include treating post-training strategies as additional variables, extending the framework to multimodal models, and exploring causal links between data quality, scaling, and task-specific performance. In sum, the paper bridges the gap between theoretical scaling insights and actionable guidance for deploying large language models at scale.
