Differentiating Between Human-Written and AI-Generated Texts Using Automatically Extracted Linguistic Features

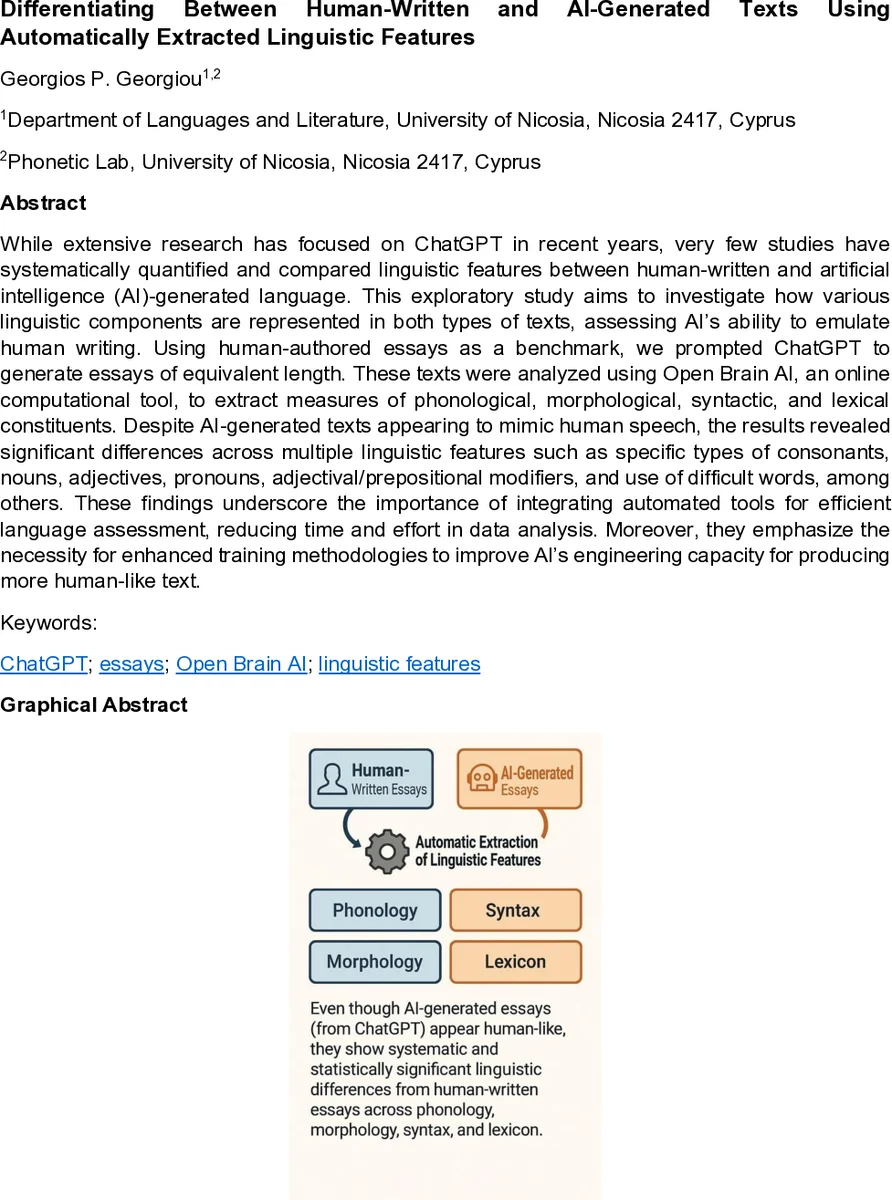

While extensive research has focused on ChatGPT in recent years, very few studies have systematically quantified and compared linguistic features between human-written and artificial intelligence (AI)-generated language. This exploratory study aims to investigate how various linguistic components are represented in both types of texts, assessing the ability of AI to emulate human writing. Using human-authored essays as a benchmark, we prompted ChatGPT to generate essays of equivalent length. These texts were analyzed using Open Brain AI, an online computational tool, to extract measures of phonological, morphological, syntactic, and lexical constituents. Despite AI-generated texts appearing to mimic human speech, the results revealed significant differences across multiple linguistic features such as specific types of consonants, nouns, adjectives, pronouns, adjectival/prepositional modifiers, and use of difficult words, among others. These findings underscore the importance of integrating automated tools for efficient language assessment, reducing time and effort in data analysis. Moreover, they emphasize the necessity for enhanced training methodologies to improve the engineering capacity of AI for producing more human-like text.

💡 Research Summary

This paper presents an exploratory comparison between human‑written IELTS Task‑2 essays and essays generated by ChatGPT‑4 under tightly controlled conditions. Five authentic essays written by professional English teachers (averaging 310 words and 17 sentences) served as the human baseline. Using the same prompts, word‑count targets, and academic‑register instructions, the authors prompted ChatGPT‑4 (March 2024 version) to produce five matching AI essays. All texts underwent minimal preprocessing (Unicode normalization, whitespace standardization, and punctuation cleaning) that preserved the original linguistic structure.
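The summary names three preprocessing steps but not how they were carried out. The sketch below is one plausible reading, not the authors' pipeline: the choice of NFKC normalization, the `PUNCT_MAP` table, and the `preprocess` function name are all assumptions made for illustration.

```python
import re
import unicodedata

# Hypothetical mapping from typographic punctuation to plain ASCII.
# The source does not say which characters were cleaned.
PUNCT_MAP = str.maketrans({
    "\u2018": "'", "\u2019": "'",   # curly single quotes
    "\u201c": '"', "\u201d": '"',   # curly double quotes
    "\u2013": "-", "\u2014": "-",   # en and em dashes
})


def preprocess(text: str) -> str:
    """Lightly normalize an essay without changing its wording."""
    # Unicode normalization (NFKC is an assumed choice).
    text = unicodedata.normalize("NFKC", text)
    # Punctuation cleaning: unify quote and dash variants.
    text = text.translate(PUNCT_MAP)
    # Whitespace standardization: collapse runs, including line breaks.
    text = re.sub(r"\s+", " ", text)
    # Remove stray spaces before closing punctuation marks.
    text = re.sub(r"\s+([,.;:!?])", r"\1", text)
    return text.strip()


cleaned = preprocess("It\u2019s  a \u201ctest\u201d ,\u00a0really .\n")
```

Note that collapsing all whitespace also merges paragraphs into one line, which is acceptable for word-level and sentence-level counts but would need adjusting if paragraph boundaries mattered.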

The core of the analysis relied on Open Brain AI, an open‑source online platform that extracts a comprehensive set of linguistic metrics. In total, 94 features were obtained: 22 phonological (e.g., syllable count, consonant place/manner ratios, stress patterns), 15 morphological (POS counts and ratios), 44 syntactic (modifier counts, clause types, case markers), and 13 lexical (total tokens, hapax legomena, Type‑Token Ratio, difficult‑word indices). Only variables present in at least 80 % of the documents were retained, yielding 9 201 observations across the ten essays.
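To make a few of the lexical measures concrete, here is a minimal sketch of how token counts, hapax legomena, and the Type‑Token Ratio can be computed, together with one reading of the 80 % retention rule. It does not reproduce Open Brain AI's implementation: the regex tokenizer, the function names, and the interpretation of "present" as "has a non-missing value" are assumptions.

```python
import re
from collections import Counter


def lexical_metrics(text):
    """Compute basic lexical measures with a simple regex tokenizer."""
    # Lowercased alphabetic tokens, allowing one internal apostrophe.
    tokens = re.findall(r"[a-z]+(?:'[a-z]+)?", text.lower())
    counts = Counter(tokens)
    n_tokens = len(tokens)
    n_types = len(counts)
    return {
        "tokens": n_tokens,
        "types": n_types,
        # Hapax legomena: word types that occur exactly once.
        "hapax_legomena": sum(1 for c in counts.values() if c == 1),
        # Type-Token Ratio: distinct types divided by total tokens.
        "ttr": n_types / n_tokens if n_tokens else 0.0,
    }


def retain_features(rows, min_share=0.8):
    """Keep features that have a value in at least min_share of documents.

    rows is a list of per-document dicts mapping feature name to value;
    a missing key or a None value counts as "not present" (an assumption).
    """
    names = sorted({name for row in rows for name in row})
    kept = []
    for name in names:
        present = sum(1 for row in rows if row.get(name) is not None)
        if present / len(rows) >= min_share:
            kept.append(name)
    return kept


metrics = lexical_metrics("The cat saw the dog. The dog ran.")
```

Because the Type‑Token Ratio falls as texts get longer, it is only comparable across texts of similar length, which is one reason the matched word counts in this design matter.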

Statistical testing was performed in R. For each feature, a Wilcoxon rank‑sum test compared the AI and human groups, with Holm correction applied to control family‑wise error. Effect sizes were quantified using Hedges’ g with 95 % confidence intervals. To identify the most discriminative cues, separate LASSO regression models were fitted for each linguistic domain, treating the source (AI vs. human) as the binary outcome and the domain‑specific features as predictors. LASSO’s penalization simultaneously performed variable selection and regularization, producing sparse, interpretable coefficient sets.
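The per-feature procedure described above can be sketched in a few functions. The authors worked in R; the Python below is an illustrative translation under stated assumptions, not their code. It uses an exact permutation form of the rank-sum test (feasible with five essays per group), the standard Holm step-down adjustment, and Hedges' g with the common small-sample correction 1 − 3/(4·df − 1). Confidence intervals and the LASSO models are omitted, and all function names are hypothetical.

```python
from itertools import combinations
from math import sqrt
from statistics import mean, variance


def midranks(values):
    """Assign 1-based ranks, averaging ranks over tied values."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def rank_sum_exact_p(x, y):
    """Two-sided exact p-value for the Wilcoxon rank-sum test."""
    ranks = midranks(list(x) + list(y))
    n = len(x)
    expected = n * (len(ranks) + 1) / 2
    observed = abs(sum(ranks[:n]) - expected)
    # Enumerate every way of assigning n of the ranks to the first group.
    sums = [sum(c) for c in combinations(ranks, n)]
    extreme = sum(1 for s in sums if abs(s - expected) >= observed - 1e-9)
    return extreme / len(sums)


def holm_adjust(pvalues):
    """Holm step-down adjusted p-values, returned in input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # Multiply the k-th smallest p-value by (m - k) and keep monotone.
        running_max = max(running_max, (m - rank) * pvalues[idx])
        adjusted[idx] = min(1.0, running_max)
    return adjusted


def hedges_g(x, y):
    """Standardized mean difference with small-sample bias correction."""
    nx, ny = len(x), len(y)
    df = nx + ny - 2
    pooled_sd = sqrt(((nx - 1) * variance(x) + (ny - 1) * variance(y)) / df)
    return (mean(x) - mean(y)) / pooled_sd * (1 - 3 / (4 * df - 1))


# Made-up values for one feature in five AI and five human essays.
ai, human = [2, 4, 6, 8, 10], [1, 2, 3, 4, 5]
p_value = rank_sum_exact_p(ai, human)
effect = hedges_g(ai, human)
```

In practice `holm_adjust` would be applied to the full list of per-feature p-values. R's `wilcox.test` switches to a normal approximation when ties are present, so values from this exact version may differ slightly from those an R analysis would report.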

The results revealed systematic differences across all four linguistic levels. Phonologically, AI‑generated texts showed a higher proportion of certain consonant classes, particularly voiceless plosives, suggesting a bias toward “hard” sounds. Morphologically, AI essays contained significantly more nouns and fewer pronouns and auxiliary verbs, indicating a denser, more nominal style. Syntactically, AI output used markedly fewer modifiers, especially adjectival and prepositional phrases, and its sentence structures tended to follow a template‑like pattern (e.g., uniform introductions and conclusions). Lexically, AI texts exhibited a higher share of difficult or low‑frequency words, higher lexical density, and a lower Type‑Token Ratio, reflecting a tendency toward content‑heavy vocabulary at the expense of function‑word variety.

These findings corroborate earlier qualitative observations that LLM‑generated prose often displays structural uniformity and a proclivity for high‑information content, while human writing relies more heavily on functional morphemes, cohesive devices, and varied lexical choices to maintain discourse coherence. Within this small sample, the study suggests that automatically extracted linguistic features can differentiate AI from human text, offering an interpretable alternative to black‑box probability‑based detectors such as DetectGPT.

Limitations include the modest sample size (only ten essays), the narrow topical range (IELTS prompts), and the inability to manipulate ChatGPT’s decoding parameters (temperature, top‑p, etc.) through the web interface. Consequently, the generalizability of the identified cues to other genres, languages, or LLM versions remains to be validated.

The authors suggest several avenues for future work: expanding the corpus to include diverse topics, registers, and languages; comparing multiple LLMs (e.g., GPT‑3.5, Claude, LLaMA) under controlled settings; integrating the identified statistical features with existing probability‑curvature detectors to build hybrid, more robust detection systems; and exploring real‑time applications in education, publishing, and plagiarism monitoring. By pinpointing where AI‑generated language diverges from human norms, the research provides actionable insights for improving LLM training regimes, enhancing the naturalness of generated text, and developing transparent tools for assessing AI‑produced content.
