Is Mamba Reliable for Medical Imaging?

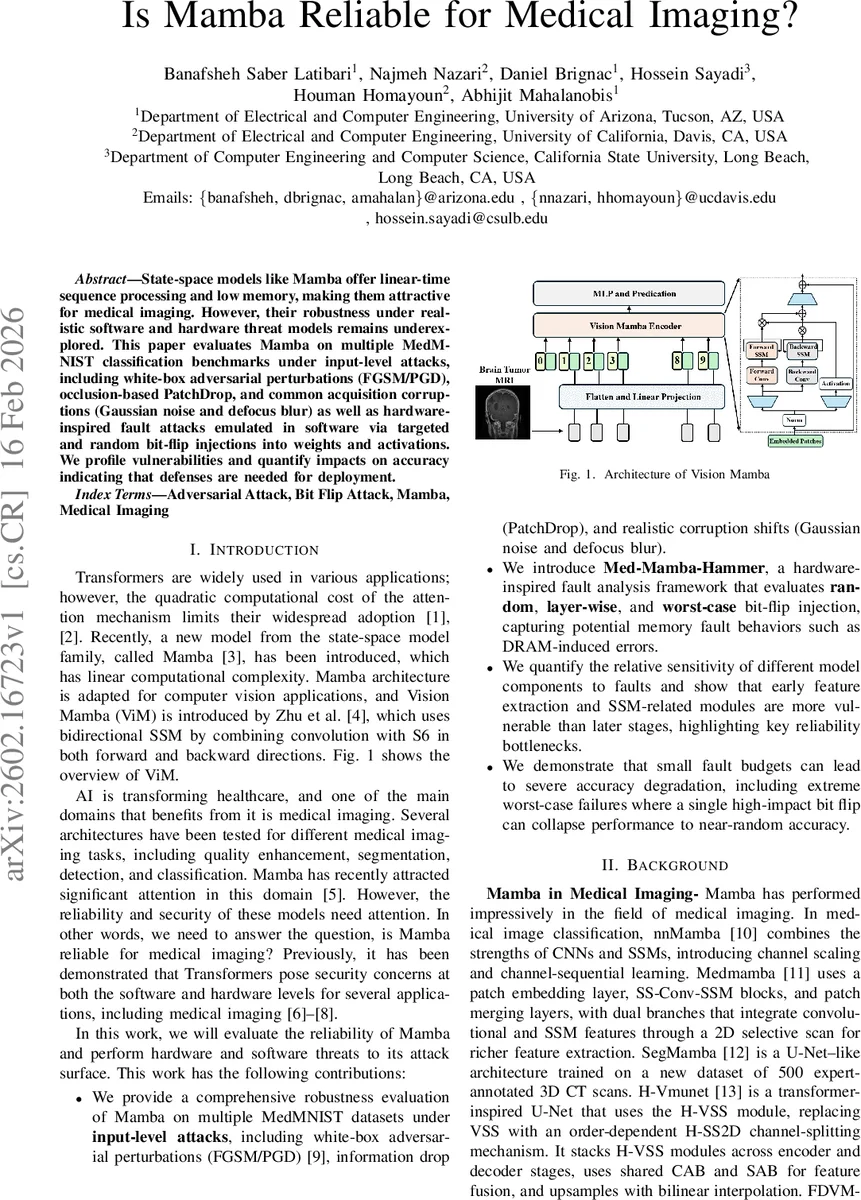

State-space models like Mamba offer linear-time sequence processing and low memory, making them attractive for medical imaging. However, their robustness under realistic software and hardware threat models remains underexplored. This paper evaluates Mamba on multiple MedM-NIST classification benchmarks under input-level attacks, including white-box adversarial perturbations (FGSM/PGD), occlusion-based PatchDrop, and common acquisition corruptions (Gaussian noise and defocus blur) as well as hardware-inspired fault attacks emulated in software via targeted and random bit-flip injections into weights and activations. We profile vulnerabilities and quantify impacts on accuracy indicating that defenses are needed for deployment.

💡 Research Summary

The paper conducts a comprehensive reliability assessment of the state‑space model Mamba when applied to medical image classification. Mamba’s linear‑time complexity and low memory footprint make it an attractive alternative to quadratic‑cost Transformers, especially for resource‑constrained clinical settings. However, the authors argue that robustness against realistic software and hardware threat models has not been systematically explored. To fill this gap, they evaluate a Mamba‑based architecture (MedMamba) on ten MedMNIST benchmark datasets covering a variety of imaging modalities (pathology, dermatology, OCT, chest X‑ray, retinal, breast, blood, and organ classification).

The evaluation is divided into two major threat categories. First, input‑level attacks are examined along three axes: (1) white‑box adversarial perturbations using FGSM and PGD (ℓ∞‑bounded with ε = 1/255, 20 PGD steps), (2) information‑drop attacks implemented as PatchDrop, where a configurable fraction of non‑overlapping patches is replaced by a baseline value, and (3) natural corruption attacks consisting of additive Gaussian noise and defocus blur at five severity levels. Results show dramatic accuracy drops: for example, PathMNIST’s clean accuracy of 89.73 % falls to 26.31 % under FGSM and 10.56 % under PGD; similar trends appear across all datasets, with some (e.g., RetinaMNIST) collapsing to near‑random performance. PatchDrop experiments reveal that removing as little as 18.75 % of patches can reduce BloodMNIST accuracy from 97.63 % to 32.88 %, indicating high sensitivity to missing local information. Corruption experiments demonstrate that increasing noise or blur severity can degrade performance by 30 %–50 % depending on the dataset.

Second, hardware‑level threats are modeled through a novel “Med‑Mamba‑Hammer” framework that injects bit‑flips into model weights and/or activations. Three attack strategies are explored: (a) random global bit‑flips under a fixed budget K, (b) layer‑wise targeted flips to assess sensitivity of specific modules (early feature extractors, SSM blocks, classifier heads), and (c) a worst‑case search that uses random‑search over seeds to identify the most damaging configuration for a given K. The authors provide three algorithms to automate these experiments. Empirical findings indicate that even a single flipped bit (K = 1) can reduce PathMNIST accuracy from 89.72 % to 81.73 %, and with K = 16 the accuracy drops below 35 % for most tasks. Layer‑wise analysis shows that early convolutional layers and the SSM modules are disproportionately vulnerable, suggesting that errors in these stages propagate and amplify throughout the network. The worst‑case search demonstrates that with as few as two to four carefully chosen bit‑flips, the model can be driven to near‑random predictions, highlighting a severe security risk for deployment on edge devices where memory faults (e.g., Rowhammer‑induced DRAM errors) are plausible.

The paper’s contributions are fourfold: (1) a thorough input‑level robustness benchmark for Mamba on standardized medical datasets, (2) the introduction of the Med‑Mamba‑Hammer fault‑analysis framework, (3) a quantitative sensitivity map that identifies the most fragile components of the architecture, and (4) empirical evidence that modest fault budgets can cause catastrophic performance degradation, thereby motivating the need for dedicated defenses.

In the discussion, the authors emphasize that while Mamba offers computational advantages, its current form is insufficiently robust for safety‑critical medical applications. They recommend integrating defensive measures such as adversarial training, input preprocessing (denoising, inpainting), redundancy (duplicate pathways for early layers), and error‑detecting codes for weight storage. Moreover, hardware‑aware design choices—such as using error‑correcting memory, periodic checksum verification, and fault‑tolerant activation quantization—are suggested to mitigate bit‑flip risks.

The conclusion underscores that Mamba’s promise in medical imaging must be balanced against its demonstrated fragility under realistic attack scenarios. Future work should focus on developing hardened Mamba variants that combine efficiency with provable robustness, possibly leveraging formal verification, stochastic weight averaging, or hybrid architectures that fuse convolutional resilience with state‑space expressiveness. Only through such comprehensive hardening can Mamba become a trustworthy component in clinical AI pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment