bayesics: Core Statistical Methods via Bayesian Inference in R

Bayesian statistics is an integral part of contemporary applied science. bayesics provides a single framework, unified in syntax and output, for performing the most commonly used statistical procedures, ranging from one- and two-sample inference to general mediation analysis. bayesics leans hard away from the requirement that users be familiar with sampling algorithms by using closed-form solutions whenever possible, and automatically selecting the number of posterior samples required for accurate inference when such solutions are not possible. bayesics focuses on providing key inferential quantities: point estimates, credible intervals, probability of direction, region of practical equivalence (ROPE), and, when applicable, Bayes factors. While algorithmic assessment is not required in bayesics, model assessment is still critical; towards that, bayesics provides diagnostic plots for parametric inference, including Bayesian p-values. Finally, bayesics provides extensions to models implemented in alternative R packages and, in the case of mediation analysis, correction to existing implementations.

💡 Research Summary

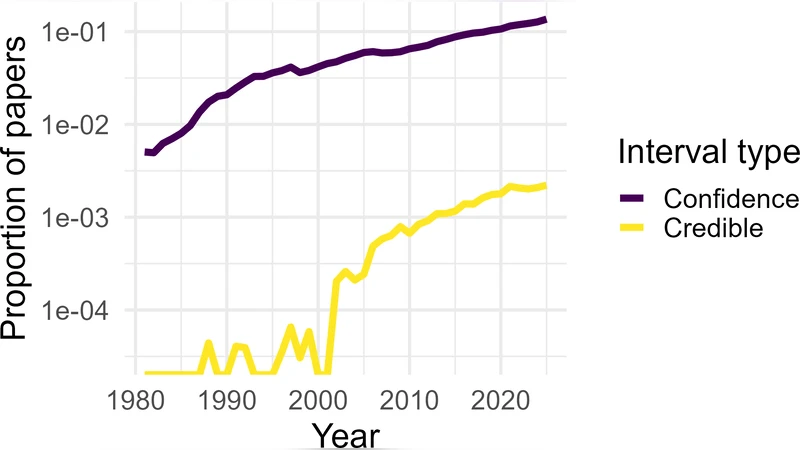

The paper introduces bayesics, an R package that delivers a unified, user‑friendly framework for conducting the most common Bayesian statistical procedures. Recognizing that Bayesian inference has become indispensable across applied sciences, the authors set out to lower the technical barrier that traditionally accompanies Bayesian analysis—namely, the need to understand and manually configure sampling algorithms such as Markov chain Monte Carlo (MCMC).

Core Design Philosophy

bayesics is built around four guiding principles. First, whenever a conjugate prior–likelihood pair yields a closed‑form posterior, the package computes point estimates, credible intervals, and other inferential quantities analytically, eliminating the need for simulation. Second, for models lacking a closed‑form solution, bayesics automatically invokes an MCMC engine (via rstan or cmdstanr) and determines the required number of posterior draws on the fly. The algorithm begins with a pilot run, evaluates effective sample size (ESS) and the Gelman‑Rubin diagnostic (R̂), and iteratively expands the chain until a user‑specified precision target (e.g., ESS ≥ 2000) is met. This adaptive sampling strategy balances computational efficiency with statistical reliability.
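The adaptive idea described above (pilot run, check ESS, extend the chain until a target is met) can be sketched in a few lines. This is a minimal illustration in Python, not the package's R implementation: the random-walk Metropolis sampler, the standard-normal target, the simple truncated-autocorrelation ESS estimate, the doubling rule, and all function names are assumptions made for the example. It runs a single chain, so R̂ is not computed here.

```python
import math
import random
import statistics


def metropolis(n_draws, start, step, rng):
    """Random-walk Metropolis draws from a standard normal (toy stand-in for a posterior)."""
    x, draws = start, []
    for _ in range(n_draws):
        proposal = x + rng.gauss(0.0, step)
        # Log acceptance ratio for a N(0, 1) target.
        log_ratio = 0.5 * (x * x - proposal * proposal)
        if log_ratio >= 0 or rng.random() < math.exp(log_ratio):
            x = proposal
        draws.append(x)
    return draws


def effective_sample_size(draws, max_lag=200):
    """Crude ESS: sum autocorrelations up to the first non-positive one."""
    n = len(draws)
    mean = statistics.fmean(draws)
    centered = [d - mean for d in draws]
    var = sum(c * c for c in centered) / n
    if var == 0.0:
        return 0.0
    rho_sum = 0.0
    for lag in range(1, min(max_lag, n - 1)):
        acov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0.0:
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


def adaptive_sample(target_ess=2000, pilot=1000, max_draws=200_000, step=2.4, seed=1):
    """Start with a pilot run, then double the chain until ESS reaches the target."""
    rng = random.Random(seed)
    draws = metropolis(pilot, start=0.0, step=step, rng=rng)
    while True:
        ess = effective_sample_size(draws)
        if ess >= target_ess or len(draws) >= max_draws:
            return draws, ess
        # Continue the same chain from its last state.
        draws += metropolis(len(draws), start=draws[-1], step=step, rng=rng)


draws, ess = adaptive_sample(target_ess=2000)
print(f"kept {len(draws)} draws, estimated ESS = {ess:.0f}")
```

Doubling keeps the number of ESS re-evaluations logarithmic in the final chain length; a production implementation would also discard warm-up draws and run several chains so that R̂ can be checked.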

Third, the package supplies a comprehensive set of inferential summaries beyond the traditional posterior mean and credible interval. It reports the Probability of Direction (POD)—the posterior probability that an effect is positive (or negative)—which offers an intuitive measure of effect existence. It also calculates the Region of Practical Equivalence (ROPE), a user‑defined interval of effect sizes deemed practically negligible, and provides the posterior probability that the parameter lies within this region. When model comparison is relevant, bayesics computes Bayes factors and posterior model probabilities, employing a prior‑robust formulation to mitigate sensitivity to arbitrary prior choices.
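Both the probability of direction and the ROPE probability reduce to simple proportions once posterior draws are available. The sketch below is a hedged Python illustration, not bayesics code: the function names, the simulated normal "posterior", and the ROPE limits of ±0.1 are all assumptions chosen for the example.

```python
import random


def probability_of_direction(draws):
    """Share of posterior draws on the dominant side of zero."""
    n = len(draws)
    p_pos = sum(d > 0 for d in draws) / n
    p_neg = sum(d < 0 for d in draws) / n
    return max(p_pos, p_neg)


def rope_probability(draws, low, high):
    """Posterior probability that the parameter lies inside [low, high]."""
    return sum(low <= d <= high for d in draws) / len(draws)


# Hypothetical posterior draws for an effect (assumed N(0.3, 0.15^2)).
rng = random.Random(42)
posterior = [rng.gauss(0.3, 0.15) for _ in range(20_000)]

pod = probability_of_direction(posterior)
in_rope = rope_probability(posterior, -0.1, 0.1)
print(f"POD = {pod:.3f}, P(effect in ROPE) = {in_rope:.3f}")
```

A high POD together with a low ROPE probability suggests an effect that is both clearly signed and practically non-negligible; the two summaries answer different questions and are worth reading together.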

Fourth, model assessment is integrated directly into the workflow. For parametric models, bayesics generates posterior predictive checks, Bayesian p‑values, residual plots, and other diagnostic graphics automatically. These visual tools allow practitioners to detect violations of distributional assumptions, assess fit, and decide whether model refinement is necessary.
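To make the idea of a Bayesian p-value concrete, here is a minimal posterior predictive check in Python. It is a sketch under stated assumptions, not the package's diagnostic code: a normal model with known standard deviation, a vague normal prior on the mean, the sample maximum as the test statistic, and hypothetical function and variable names throughout.

```python
import random
import statistics


def posterior_predictive_pvalue(y, sigma=1.0, prior_mean=0.0, prior_sd=10.0,
                                stat=max, n_rep=2000, seed=0):
    """P(T(y_rep) >= T(y) | y) for a normal model with known sigma."""
    rng = random.Random(seed)
    n = len(y)
    # Conjugate normal-normal posterior for the mean.
    prior_prec = 1.0 / prior_sd ** 2
    data_prec = n / sigma ** 2
    post_var = 1.0 / (prior_prec + data_prec)
    post_mean = post_var * (prior_prec * prior_mean + data_prec * statistics.fmean(y))
    post_sd = post_var ** 0.5

    t_obs = stat(y)
    exceed = 0
    for _ in range(n_rep):
        mu = rng.gauss(post_mean, post_sd)                 # draw a parameter value
        y_rep = [rng.gauss(mu, sigma) for _ in range(n)]   # replicate the data set
        if stat(y_rep) >= t_obs:
            exceed += 1
    return exceed / n_rep


data_rng = random.Random(3)
y_ok = [data_rng.gauss(0.0, 1.0) for _ in range(30)]
y_bad = y_ok[:-1] + [8.0]   # same data with one gross outlier

p_ok = posterior_predictive_pvalue(y_ok)
p_bad = posterior_predictive_pvalue(y_bad)
print(f"p-value (clean data) = {p_ok:.3f}, p-value (with outlier) = {p_bad:.3f}")
```

A p-value near 0 or 1 signals that the model rarely reproduces the observed statistic; here the outlier makes the observed maximum essentially unreachable under the fitted normal model.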

Functionality and Syntax

The package offers a small set of intuitively named functions that cover a broad spectrum of analyses: bayes_one_sample(), bayes_two_sample(), bayes_anova(), bayes_lm(), bayes_glmm(), and bayes_mediation(). Each function accepts a data frame, specification of dependent and independent variables, and optional prior definitions. Default priors are provided for common cases (e.g., normal priors for regression coefficients, half‑Cauchy for variance components), but users retain full control to tailor priors to domain knowledge.

Closed‑Form vs. Simulation

When a conjugate relationship exists—such as normal‑normal for means, beta‑binomial for proportions, or gamma‑Poisson for rates—bayesics derives the posterior analytically. Credible intervals are obtained directly from the posterior quantiles. In non‑conjugate settings (e.g., logistic regression, hierarchical models), the package seamlessly switches to MCMC, handling all tuning, warm‑up, and convergence diagnostics behind the scenes.
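As a worked illustration of the closed-form route, the following Python sketch updates a normal-normal model (known data standard deviation) and a beta-binomial model without any simulation. The data values, prior settings, and function names are assumptions for the example and do not reflect bayesics defaults.

```python
from statistics import NormalDist, fmean


def normal_normal_posterior(y, sigma, prior_mean, prior_sd):
    """Posterior for a normal mean with known sigma and a normal prior."""
    prior_prec = 1.0 / prior_sd ** 2
    data_prec = len(y) / sigma ** 2
    post_var = 1.0 / (prior_prec + data_prec)
    post_mean = post_var * (prior_prec * prior_mean + data_prec * fmean(y))
    return NormalDist(post_mean, post_var ** 0.5)


def beta_binomial_posterior(successes, trials, a=1.0, b=1.0):
    """Beta(a, b) prior plus binomial data gives Beta(a + s, b + n - s)."""
    return a + successes, b + trials - successes


# Hypothetical measurements with an assumed known sigma of 0.5.
post = normal_normal_posterior([4.8, 5.1, 5.3, 4.9, 5.4],
                               sigma=0.5, prior_mean=0.0, prior_sd=10.0)
ci95 = (post.inv_cdf(0.025), post.inv_cdf(0.975))  # interval from exact quantiles
print(f"posterior mean = {post.mean:.3f}, 95% CrI = ({ci95[0]:.3f}, {ci95[1]:.3f})")

a_post, b_post = beta_binomial_posterior(successes=7, trials=20)
print(f"Beta posterior: a = {a_post}, b = {b_post}, mean = {a_post / (a_post + b_post):.3f}")
```

Because the posterior is a named distribution, the credible interval comes from its quantile function directly, with no Monte Carlo error to monitor.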

Extended Compatibility

A notable strength of bayesics is its interoperability with existing R packages. It can accept model objects from lme4, nlme, or brms, re‑fit them in a fully Bayesian manner, and return the enriched set of summaries. For mediation analysis, the authors identified shortcomings in the popular mediation package—specifically, the lack of Bayesian ROPE and POD calculations—and implemented corrected procedures that produce both indirect and direct effect estimates together with practical‑equivalence assessments.
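For intuition about how direct and indirect effects can be paired with POD and ROPE summaries, the sketch below uses the product-of-coefficients definition for a linear mediation model. It is only an illustration in Python: the posterior draws are simulated from assumed independent normal distributions (real draws would come jointly from the fitted models), the ROPE limits are arbitrary, and nothing here reproduces the corrected procedure in bayesics.

```python
import random
import statistics

rng = random.Random(7)
n_draws = 10_000

# Hypothetical posterior draws (assumed normal, assumed independent).
a = [rng.gauss(0.50, 0.10) for _ in range(n_draws)]        # treatment -> mediator
b = [rng.gauss(0.40, 0.10) for _ in range(n_draws)]        # mediator -> outcome
direct = [rng.gauss(0.10, 0.08) for _ in range(n_draws)]   # treatment -> outcome

# Indirect effect draw by draw, so uncertainty in a and b propagates.
indirect = [ai * bi for ai, bi in zip(a, b)]
total = [i + d for i, d in zip(indirect, direct)]


def summarize(draws, rope=(-0.05, 0.05)):
    """Posterior mean, 95% interval, probability of direction, and ROPE probability."""
    n = len(draws)
    cuts = statistics.quantiles(draws, n=40)   # cut points at 2.5%, 5%, ..., 97.5%
    return {
        "mean": statistics.fmean(draws),
        "ci95": (cuts[0], cuts[-1]),
        "pod": max(sum(d > 0 for d in draws), sum(d < 0 for d in draws)) / n,
        "rope": sum(rope[0] <= d <= rope[1] for d in draws) / n,
    }


indirect_summary = summarize(indirect)
direct_summary = summarize(direct)
print("indirect:", indirect_summary)
print("direct:  ", direct_summary)
```

Summarizing the product draw by draw avoids the normal approximation that a delta-method standard error would impose on the skewed distribution of the indirect effect.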

Illustrative Applications

Three real‑world examples demonstrate the package’s capabilities.

- A psychology experiment uses bayes_one_sample() to test whether a mean difference deviates from zero. The output includes the posterior mean, 95 % credible interval, POD = 0.98, and a 73 % probability that the effect lies within a ROPE of
