Just KIDDIN: Knowledge Infusion and Distillation for Detection of INdecent Memes

Toxicity identification in online multimodal environments remains a challenging task due to the complexity of contextual connections across modalities (e.g., textual and visual). In this paper, we propose a novel framework that integrates Knowledge Distillation (KD) from Large Visual Language Models (LVLMs) and knowledge infusion to enhance the performance of toxicity detection in hateful memes. Our approach extracts sub-knowledge graphs from ConceptNet, a large-scale commonsense Knowledge Graph (KG) to be infused within a compact VLM framework. The relational context between toxic phrases in captions and memes, as well as visual concepts in memes enhance the model’s reasoning capabilities. Experimental results from our study on two hate speech benchmark datasets demonstrate superior performance over the state-of-the-art baselines across AU-ROC, F1, and Recall with improvements of 1.1%, 7%, and 35%, respectively. Given the contextual complexity of the toxicity detection task, our approach showcases the significance of learning from both explicit (i.e. KG) as well as implicit (i.e. LVLMs) contextual cues incorporated through a hybrid neurosymbolic approach. This is crucial for real-world applications where accurate and scalable recognition of toxic content is critical for creating safer online environments.

💡 Research Summary

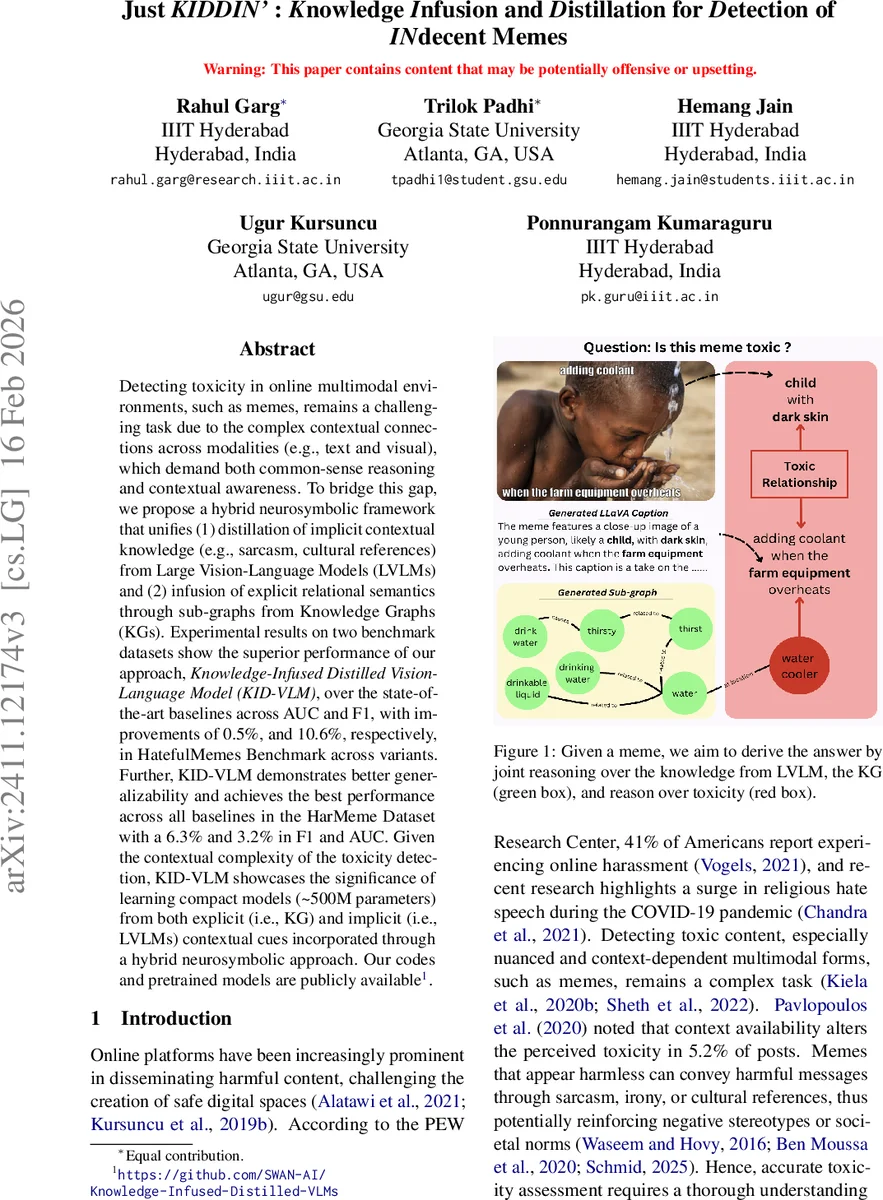

The paper addresses the challenging problem of detecting toxic content in multimodal online memes, where both visual and textual cues must be interpreted in a nuanced, culturally aware manner. Existing approaches either rely solely on large pre‑trained vision‑language models (VLMs) or on textual classifiers, which struggle with sarcasm, irony, and implicit references. To overcome these limitations, the authors propose a hybrid neurosymbolic framework called Knowledge‑Infused Distilled Vision‑Language Model (KID‑VLM) that unifies two complementary knowledge sources: (1) implicit contextual knowledge distilled from a large vision‑language teacher model (LVLM) and (2) explicit commonsense knowledge infused from a knowledge graph (KG).

The teacher model is LLaVA‑NeXT, a state‑of‑the‑art LVLM that generates a caption for each meme, capturing hidden semantics such as sarcasm or cultural references. During training, the student model—a frozen CLIP encoder (≈500 M parameters)—produces multimodal embeddings for the image‑text pair. A consistency loss (L_KD) measures Euclidean distance between the student embedding and the teacher’s caption embedding, encouraging the student to mimic the teacher’s latent reasoning. This distillation occurs only in training, so inference remains lightweight with no caption generation required.

For explicit knowledge, the authors extract a sub‑graph (G_sub) from ConceptNet based on concepts appearing in the meme’s overlaid text and the teacher‑generated caption. Relevance of each candidate node is scored using two methods: MiniLM‑based cosine similarity (primary) and RoBERTa‑based perplexity (secondary). The top‑k (k = 750) most relevant entities are retained, and a “context node” z representing the meme’s overall context is added, linking to all selected entities to form a working graph G_W.

The working graph is processed by a Relational Graph Convolutional Network (R‑GCN), which aggregates relation‑specific information from neighboring nodes. After mean‑pooling, a graph‑level representation h_graph is obtained. This is fused with the distilled multimodal representation h_distilled via a gated fusion mechanism: a learnable gate G (sigmoid of a linear projection of the concatenated vectors) balances the contribution of each source. The final fused vector F_multimodal is fed to a binary classifier trained with a joint loss L_total = λ₁ L_BCE + λ₂ L_KD, where L_BCE is binary cross‑entropy for toxicity prediction.

Experiments are conducted on two benchmark datasets: HatefulMemes (with “Seen” and “Unseen” splits) and HarMeme. KID‑VLM achieves state‑of‑the‑art results, improving AU‑ROC by 0.5 percentage points, F1 by 10.6 pp, and Recall by 35 pp over prior methods on HatefulMemes, and attaining an AU‑ROC of 92.98 % on HarMeme. Notably, these gains are realized with a compact model (~500 M parameters), contrasting with much larger models such as Flamingo‑80B or LENS that demand prohibitive computational resources.

Ablation studies demonstrate that both knowledge distillation and knowledge infusion contribute synergistically: removing KD or KG infusion degrades performance, confirming the complementary nature of implicit and explicit knowledge. Error analysis reveals that the model better handles memes requiring cultural or sarcastic interpretation, which were frequent failure cases for baseline models.

The paper’s contributions are: (1) a novel integration of KD from LVLMs and KI from ConceptNet within a unified neurosymbolic architecture; (2) a practical method for extracting and scoring relevant KG sub‑graphs tailored to each meme; (3) a gated fusion strategy that dynamically balances implicit and explicit knowledge; (4) extensive empirical validation showing superior accuracy and generalization while maintaining computational efficiency.

Limitations include potential noise in KG extraction, dependence on the teacher model’s caption quality, and the static nature of ConceptNet, which may not capture emerging slang or domain‑specific terminology. Future work is suggested to explore dynamic KG updates, multi‑teacher ensembles for more robust caption generation, and richer relational reasoning mechanisms to further improve robustness across diverse cultural contexts.

Comments & Academic Discussion

Loading comments...

Leave a Comment