Dataset Distillation via Committee Voting

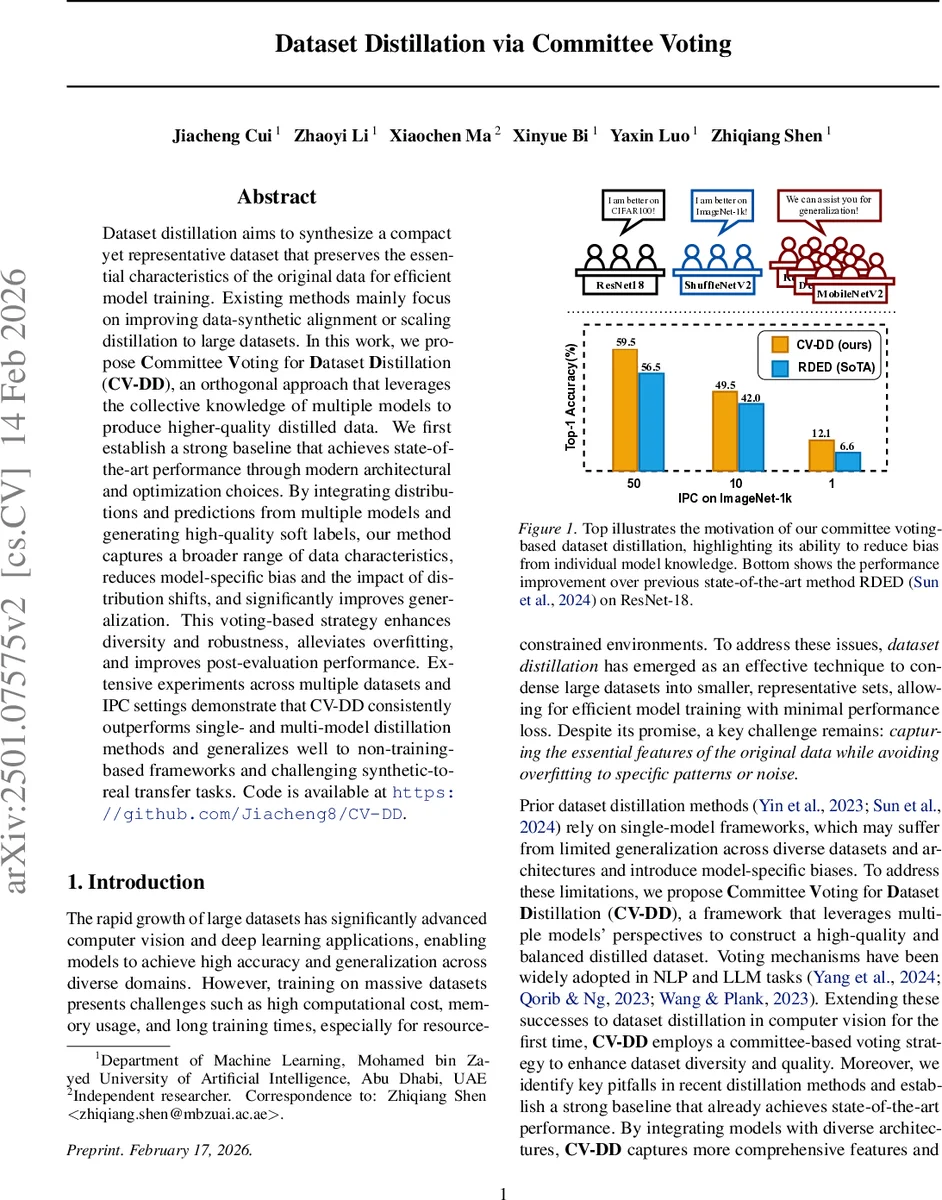

Dataset distillation aims to synthesize a compact yet representative dataset that preserves the essential characteristics of the original data for efficient model training. Existing methods mainly focus on improving data-synthetic alignment or scaling distillation to large datasets. In this work, we propose $\textbf{C}$ommittee $\textbf{V}$oting for $\textbf{D}$ataset $\textbf{D}$istillation ($\textbf{CV-DD}$), an orthogonal approach that leverages the collective knowledge of multiple models to produce higher-quality distilled data. We first establish a strong baseline that achieves state-of-the-art performance through modern architectural and optimization choices. By integrating distributions and predictions from multiple models and generating high-quality soft labels, our method captures a broader range of data characteristics, reduces model-specific bias and the impact of distribution shifts, and significantly improves generalization. This voting-based strategy enhances diversity and robustness, alleviates overfitting, and improves post-evaluation performance. Extensive experiments across multiple datasets and IPC settings demonstrate that CV-DD consistently outperforms single- and multi-model distillation methods and generalizes well to non-training-based frameworks and challenging synthetic-to-real transfer tasks. Code is available at: https://github.com/Jiacheng8/CV-DD.

💡 Research Summary

Dataset distillation seeks to compress a large training set into a tiny synthetic one that still enables high‑performance model training. While prior work has explored meta‑model matching, gradient matching, distribution matching, trajectory matching, and decoupled optimization, most methods rely on a single backbone and thus inherit model‑specific bias and limited diversity. This paper introduces Committee Voting for Dataset Distillation (CV‑DD), an orthogonal approach that leverages the collective knowledge of multiple heterogeneous models to synthesize higher‑quality distilled data.

The authors first build a strong baseline, SRe2L++, which improves upon the original SRe2L by initializing synthetic images with real samples, adding RandomResizedCrop augmentation, using smaller batch sizes, and applying a cosine‑smoothed learning‑rate schedule. On top of this baseline they propose two key innovations:

-

Prior‑Performance Guided Voting – A committee of |S| diverse architectures (ResNet‑18, ResNet‑50, ShuffleNetV2, MobileNetV2, DenseNet121) is assembled. Each model Φ_i is first trained on a split of the original data, then evaluated on a held‑out validation set to obtain a prior performance score α_i. During synthetic data generation, the loss for a candidate synthetic image ŭ is a weighted sum of the individual model losses, where the weights are a softmax over α_i/T (temperature T). This gives stronger models more influence while still allowing weaker models to contribute complementary signals. Theoretical analysis (Theorem 3.1) shows that greater committee diversity K leads to an increase in intra‑class cosine distance over training steps, indicating richer feature separation. Theorem 3.2 proves that the gradient direction obtained by prior‑weighted voting aligns better with the true generalization risk gradient than uniform averaging, thereby promoting better generalization.

-

Batch‑Specific Soft Labeling (BSSL) – In the post‑distillation stage, soft labels are usually generated by a teacher model whose BatchNorm (BN) statistics are frozen from real‑data training. The authors observe a persistent mismatch between BN statistics of synthetic and real data, even when synthetic images are optimized to match BN statistics. BSSL recomputes BN statistics for each synthetic batch while keeping the teacher’s other parameters fixed, thus producing soft labels that reflect the actual distribution of the synthetic batch. This simple modification dramatically improves downstream performance.

The full CV‑DD pipeline consists of: (i) real‑image initialization, (ii) iterative synthetic image updates guided by prior‑performance voting, (iii) generation of batch‑specific soft labels, and (iv) final training of a student model on the distilled set using a smoothed learning‑rate schedule.

Extensive experiments are conducted on CIFAR‑100, Tiny‑ImageNet, and ImageNet‑1K under multiple Images‑Per‑Class (IPC) settings (1, 10, 50). Compared with the previous state‑of‑the‑art non‑training‑based method RDED (Sun et al., 2024), CV‑DD achieves consistent gains across all IPCs and architectures. For example, on ResNet‑18 with IPC = 1 on ImageNet‑1K, CV‑DD reaches 59.5 % top‑1 accuracy versus 49.5 % for RDED, a 10 % absolute improvement. Cross‑architecture generalization tests show that models trained on CV‑DD distilled data outperform those trained on single‑model distilled data by 3–4 % when evaluated with unseen backbones such as MobileNetV2 or DenseNet121. Moreover, CV‑DD integrates seamlessly with non‑training‑based frameworks (e.g., RDED) and remains effective in synthetic‑to‑real transfer scenarios.

The paper also discusses limitations: voting loss computation scales linearly with committee size, and obtaining prior performance scores requires an extra distill‑and‑evaluate step. The authors mitigate the former by sampling a small subset (N = 2) of committee members per iteration, and the latter by a simple 80/20 split and a single evaluation pass.

In summary, CV‑DD advances dataset distillation by (1) exploiting multi‑model ensemble voting with performance‑aware weighting, (2) addressing distribution shift between synthetic and real data through batch‑specific soft labeling, and (3) building on an enhanced baseline to achieve state‑of‑the‑art results. The approach opens avenues for automated committee construction, meta‑learning of voting weights, and extension to domains beyond vision such as NLP or speech.

Comments & Academic Discussion

Loading comments...

Leave a Comment